Last Updated on April 16, 2026 by PostUpgrade

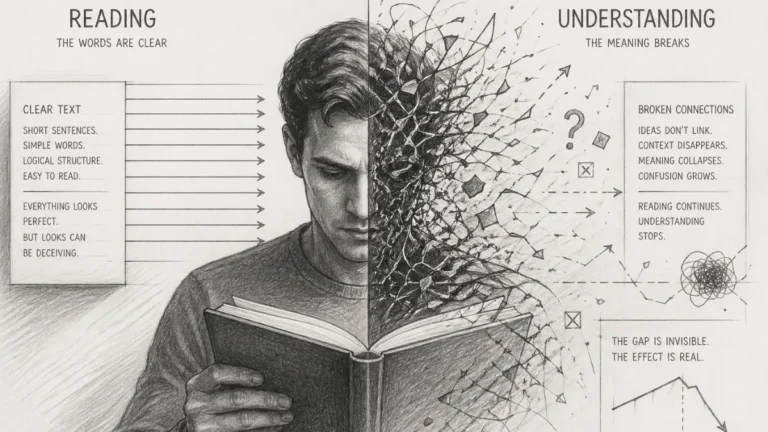

How to Structure Evidence So Content Becomes Interpretable

Your evidence is not missing — it is structurally invisible, so the system cannot connect it to your claims.

TL;DR: Content fails not because it lacks evidence, but because evidence is disconnected, misaligned, or too distant to be interpreted. As a result, AI systems cannot validate, extract, or reuse claims, causing entire sections to be excluded from visibility. The mechanism is structural: broken claim–evidence proximity and missing reasoning chains prevent interpretation. The solution is to anchor every claim, maintain proximity, distribute validation across sections, and convert data into reasoning. When applied, content becomes interpretable, extractable, and reusable across AI-driven systems.

This is where most content silently fails — fix this, or your content never enters the system.

Critical condition: If claim–evidence linkage is not established at the moment of interpretation, the content is not partially evaluated — it is fully excluded from the system and never enters extraction, validation, or reuse pipelines.

If your content feels “well-supported” but still gets ignored, this is the reason. The system does not evaluate what it cannot structurally connect.

Adding evidence is not enough. If evidence is not structurally integrated, the system still ignores your content. The goal is not to “add data” but to make every claim interpretable through verifiable signals.

Definition: Evidence interpretability is the system’s ability to connect claims with verifiable data, forming structured reasoning units that can be validated, extracted, and reused.

Step 1: Anchor Every Claim to Verifiable Data

If a claim cannot be validated at the moment it appears, it does not enter interpretation. It is skipped as non-actionable content.

Without a verifiable anchor, a claim remains a descriptive statement that cannot be validated, extracted, or reused. The first step is to convert every important claim into a traceable unit supported by data, research, or documented observation.

This does not mean adding references at the end of the section. It means each claim must directly connect to a source that confirms or explains it. When this connection exists, the system can detect a stable reasoning unit instead of isolated text.

This is where most content fails: evidence exists, but not at the point where interpretation happens.

In practice, this means identifying every key statement and attaching a measurable or documented reference to it. The result is not more content, but content that becomes visible to analytical systems.
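The audit described above can be sketched in a few lines of Python. This is a minimal authoring-side illustration, not a description of how any AI system processes content: the `Claim` type, its `anchor` field, and the sample entries are hypothetical names and placeholders introduced only for this example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Claim:
    """A key statement plus the source that confirms it (hypothetical model)."""
    text: str
    anchor: Optional[str] = None  # data point, study, or documented observation


def unanchored(claims: list[Claim]) -> list[Claim]:
    """Return the claims that have no verifiable anchor attached."""
    return [c for c in claims if not (c.anchor and c.anchor.strip())]


# Illustrative placeholders, not real data.
claims = [
    Claim("Claim A", anchor="Internal measurement, documented in report X"),
    Claim("Claim B"),
    Claim("Claim C", anchor="   "),  # whitespace only counts as missing
]

for claim in unanchored(claims):
    print(f"Needs an anchor: {claim.text}")
```

Running a pass like this over a draft produces a to-do list of statements to support, rather than a reason to add more text.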

Failure state: Claims without immediate validation are not queued for later interpretation. They are discarded at the point of appearance and never become part of the system’s reasoning layer.

Step 2: Maintain Claim–Evidence Proximity

Distance between claim and evidence breaks interpretation. The system does not reconnect meaning across gaps.

Even when evidence exists, it often fails because it is placed too far from the claim. When a claim appears in one paragraph and the supporting data appears later, the system cannot reliably connect them.

Principle: Evidence becomes interpretable only when it is structurally aligned with claims, maintaining proximity, continuity, and explicit reasoning across the entire content.

Claim–evidence proximity means that the validation signal appears immediately next to the statement it supports. This creates a compact reasoning block that can be interpreted without reconstructing context across multiple sections.

Mechanism Breakdown:

- claim is introduced as a clear statement

- data is attached immediately after the claim

- explanation clarifies how the data supports the claim

- validation emerges through traceable connection

- continuity is preserved into the next idea

This sequence is not optional. If even one step is missing, the reasoning unit collapses.

When this sequence is maintained, interpretation becomes stable. When it is broken, the system treats the content as fragmented and unreliable.

Irreversible break: Once claim–evidence proximity is lost, the system does not attempt reconstruction. The reasoning unit collapses and is permanently excluded from interpretation.

Example: A claim supported by data placed several paragraphs away fails to form a reasoning unit, while immediate claim–evidence pairing enables stable interpretation and extraction.
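One way to check proximity in a draft is to measure how many paragraphs separate each claim from its nearest supporting evidence. The sketch below assumes a hand-tagged draft: the `Block` type, the `kind` and `claim_id` fields, and the `MAX_DISTANCE` threshold are all hypothetical choices made for illustration, not part of any real tool.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Block:
    """One paragraph of a draft (hypothetical model)."""
    kind: str      # "claim", "evidence", or "other"
    claim_id: str  # claim this block states or supports; "" for "other"


def evidence_distance(blocks: list[Block]) -> dict[str, Optional[int]]:
    """Map each claim id to the paragraph distance to its nearest evidence.

    1 means the evidence sits directly next to the claim;
    None means no evidence block references the claim at all.
    """
    distances: dict[str, Optional[int]] = {}
    for i, block in enumerate(blocks):
        if block.kind != "claim":
            continue
        gaps = [
            abs(j - i)
            for j, other in enumerate(blocks)
            if other.kind == "evidence" and other.claim_id == block.claim_id
        ]
        distances[block.claim_id] = min(gaps) if gaps else None
    return distances


MAX_DISTANCE = 1  # assumed threshold: evidence must be adjacent to its claim

draft = [
    Block("claim", "c1"),
    Block("evidence", "c1"),  # adjacent: forms a compact reasoning block
    Block("claim", "c2"),
    Block("other", ""),
    Block("other", ""),
    Block("evidence", "c2"),  # three paragraphs away from its claim
    Block("claim", "c3"),     # no evidence anywhere
]

distances = evidence_distance(draft)
too_far = [cid for cid, d in distances.items() if d is None or d > MAX_DISTANCE]
print(too_far)
```

The threshold is a design choice. A stricter or looser value may suit different formats, but the check makes the gap visible before publication.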

Step 3: Distribute Evidence Across the Entire Structure

Partial validation creates partial visibility. Unsupported sections are not weakened — they are excluded.

A common mistake is concentrating evidence in one part of the article while leaving other sections unsupported. This creates structural imbalance where only part of the content is interpretable.

Evidence must be distributed so that each section maintains its own validation layer. This allows the system to process the article as a sequence of complete reasoning units rather than a mix of supported and unsupported claims.

System-level consequence: The system processes only structurally complete units and ignores everything else. Sections without embedded validation do not appear in the interpretive map at all.

In practical terms, this means every section must stand on its own as a complete reasoning unit.

Failure Pattern:

- evidence appears only at the end of the article

- citations are listed without explanation

- data exists without a clear claim

- sections rely on assumptions instead of verifiable signals

When evidence distribution is uneven, interpretation does not degrade gradually. Entire sections are excluded because they lack structural validation.
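Uneven distribution is easy to miss when looking at an article as a whole. The sketch below compares per-section coverage with the overall average. It assumes each section has already been reduced to a list of flags (one per claim, `True` if the claim has evidence attached). The section names and flags are illustrative placeholders, and `coverage_by_section` is a hypothetical helper.

```python
def coverage_by_section(sections: dict[str, list[bool]]) -> dict[str, float]:
    """Share of supported claims in each section (0.0 for a section with no claims)."""
    return {
        name: (sum(flags) / len(flags) if flags else 0.0)
        for name, flags in sections.items()
    }


# Illustrative placeholders: True = claim has evidence attached.
article = {
    "Introduction": [True, True],
    "Method": [True, False],
    "Conclusion": [False, False],
}

coverage = coverage_by_section(article)

# The overall average hides where the evidence is concentrated.
all_flags = [flag for flags in article.values() for flag in flags]
overall = sum(all_flags) / len(all_flags)

# Sections that do not carry their own complete validation layer.
incomplete = [name for name, share in coverage.items() if share < 1.0]

print(coverage)
print(overall)
print(incomplete)
```

Here half of all claims are supported overall, yet only one of three sections is fully supported. That is the imbalance this step is meant to catch.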

Next: once evidence is present and distributed, it must be transformed into reasoning, not just inserted as raw data.

Step 4: Convert Data Into Reasoning (Not Decoration)

Data without interpretation does not support claims. It remains unused by the system.

Numbers, statistics, or references that appear without explanation remain disconnected signals: nothing ties them to the claim they are meant to support.

The final step is converting data into reasoning by explicitly linking it to the claim. This means explaining what the data shows, why it matters, and how it supports the argument.
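The three questions above (what the data shows, why it matters, how it supports the argument) can be treated as required fields of a reasoning unit. The following is a minimal sketch under that assumption. The `ReasoningUnit` type and its field names are hypothetical, and the bracketed strings are placeholders rather than real content.

```python
from dataclasses import dataclass


@dataclass
class ReasoningUnit:
    """A claim, its data, and the explanation linking them (hypothetical model)."""
    claim: str
    data: str
    shows: str     # what the data shows
    matters: str   # why it matters
    supports: str  # how it supports the claim

    def missing_parts(self) -> list[str]:
        """Names of the fields that are empty or whitespace only."""
        fields = ("claim", "data", "shows", "matters", "supports")
        return [name for name in fields if not getattr(self, name).strip()]

    def render(self) -> str:
        """Join the parts into one paragraph; refuse if any part is missing."""
        missing = self.missing_parts()
        if missing:
            raise ValueError(f"Incomplete reasoning unit, missing: {', '.join(missing)}")
        return " ".join([self.claim, self.data, self.shows, self.matters, self.supports])


complete = ReasoningUnit(
    claim="[Claim sentence.]",
    data="[Data point with source.]",
    shows="[What the data shows.]",
    matters="[Why it matters.]",
    supports="[How it supports the claim.]",
)
print(complete.render())

# Data inserted as decoration: present, but never tied back to the claim.
decorative = ReasoningUnit("[Claim sentence.]", "[Data point with source.]", "", "", "")
print(decorative.missing_parts())
```

A unit that fails `render()` is the "decoration" case from this step's heading: the data is on the page, but the explanation that turns it into reasoning has not been written.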

This is where most evidence fails: it exists, but does not explain anything.

Dead signal condition: Data without explicit reasoning is not interpreted later. It is treated as noise and permanently excluded from meaning extraction.

This leads to a critical transition: content moves from “having evidence” to “being interpretable.” When data is integrated into explanation, it becomes part of the reasoning chain rather than an isolated reference.

This layer does not complete the system. Structuring evidence at the claim level enables only local interpretation; without a full evidence-based writing system, reasoning remains fragmented and breaks at scale. Unless this step is extended into a structured workflow, the content cannot sustain interpretability across sections and is excluded from consistent extraction. To see how evidence becomes a complete system rather than a set of isolated fixes, continue to the next layer: the evidence-based writing system.

This transition determines whether content becomes usable or remains structurally invisible.

When all four steps are applied together, content becomes structurally interpretable. Without this system, evidence does not strengthen the argument; it remains invisible to the systems that determine whether the content exists at all.

Checklist:

- Is every claim directly connected to verifiable evidence?

- Does evidence appear immediately next to the claim?

- Are reasoning chains clearly explained, not implied?

- Is evidence distributed across all sections of the content?

- Does each section maintain its own validation layer?

- Can each claim be interpreted without reconstructing context?

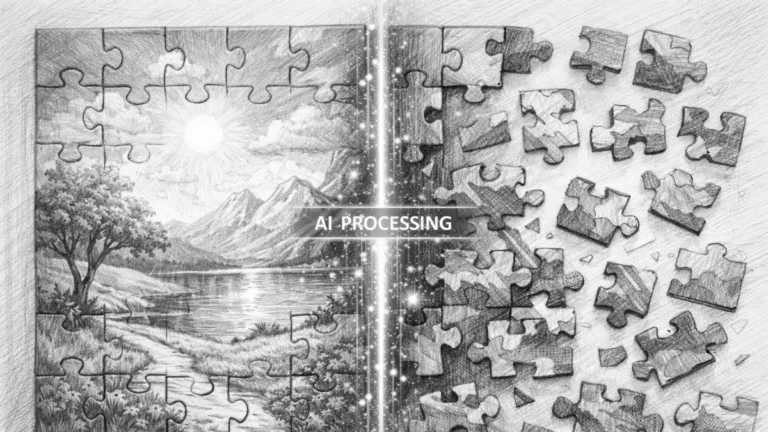

Structural Logic of Evidence Interpretation in AI Systems

- Claim–evidence binding. Interpretability emerges when claims are structurally linked to verifiable signals, forming traceable reasoning units that systems can validate without reconstructing missing context.

- Reasoning chain continuity. Consistent proximity and distribution of evidence across sections enable stable interpretation, preventing fragmentation and exclusion during extraction and reuse.

These structural properties define how evidence transitions from isolated data into interpretable reasoning within AI-driven content evaluation systems.

Evidence Interpretation Flow Model

AI systems reconstruct meaning by transforming claims and evidence into structured reasoning chains. This model shows how validation signals become interpretable units and where structural gaps break interpretation.

[Claim Formation]

↓

[Evidence Anchoring]

↓

[Claim–Evidence Proximity]

↓

[Reasoning Integration]

↓

[Distributed Validation]

↓

─────────────────────────

↓

[Interpretation Layer]

↓

[Meaning Extraction]

↓

[Content Reuse in AI Systems]

Failure Principle: When claims are not structurally linked to evidence, interpretation does not degrade—it stops. The system cannot validate or extract meaning and excludes the content entirely.

Final consequence: Content without structured evidence is not competing, not ranking, and not evaluated. It is never processed by the system at all. Visibility begins only after interpretability is structurally achieved.

FAQ: Evidence Structuring for AI Interpretation

Why does content fail even when evidence is present?

Content fails when evidence is not structurally connected to claims, making it impossible for AI systems to validate or interpret meaning.

What is claim–evidence proximity?

Claim–evidence proximity is the placement of supporting data immediately next to the statement it supports, which creates a stable and interpretable reasoning unit.

Why is distributing evidence across sections important?

Uneven evidence distribution creates gaps where sections lack validation, causing AI systems to exclude unsupported parts of the content.

Why is data alone not enough for AI interpretation?

Data without explanation remains a disconnected signal, preventing systems from linking it to claims and forming usable reasoning chains.

How does structured evidence improve AI visibility?

When claims, evidence, and reasoning are aligned, content becomes interpretable, allowing AI systems to extract, validate, and reuse it.

Glossary: Key Terms in Evidence Structuring

This glossary defines the core concepts required to make evidence interpretable for AI systems and structured reasoning.

Verifiable Anchor

A direct reference or data point attached to a claim, enabling systems to validate and interpret it without ambiguity.

Claim–Evidence Proximity

The structural placement of evidence immediately next to a claim, forming a compact and interpretable reasoning unit.

Reasoning Chain

A sequence linking claims, evidence, and explanation, allowing systems to extract meaning and reuse structured knowledge.

Distributed Validation

The presence of supporting evidence across all sections, ensuring that each part of the content remains interpretable.

Interpretability

The ability of AI systems to reconstruct meaning from structured claims and evidence without requiring external inference.