Last Updated on April 19, 2026 by PostUpgrade

Why AI Ignores Content: The Trust Signal Failure Layer

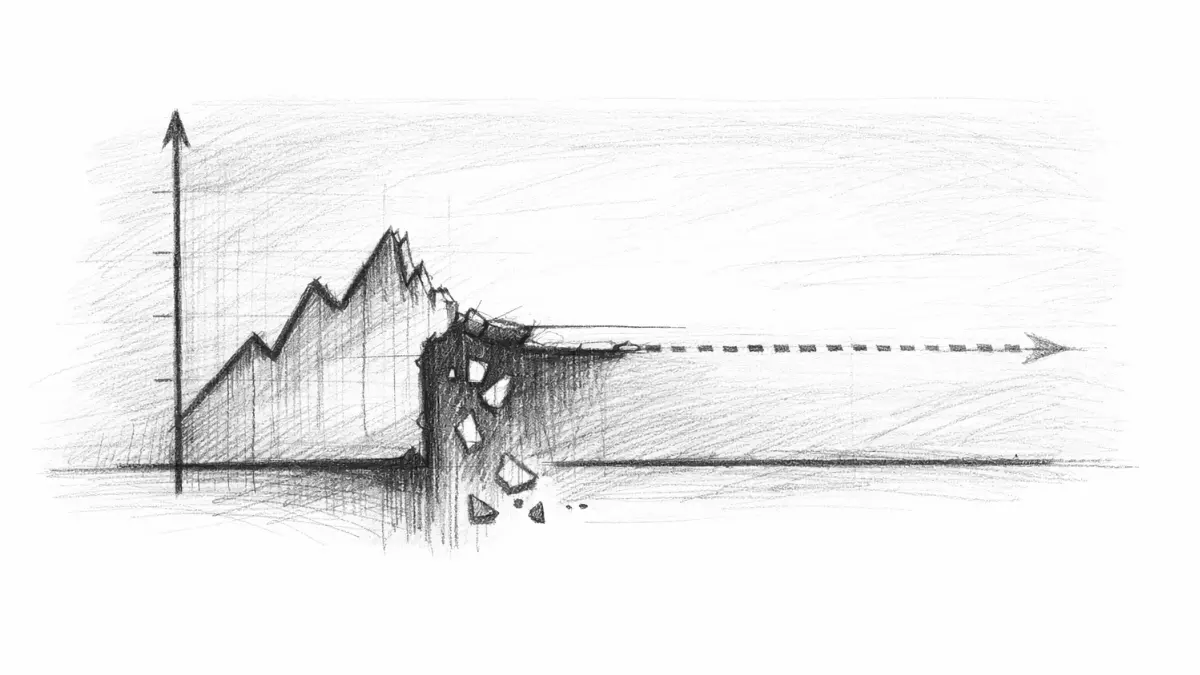

AI is not ignoring your content. It is blocking it before interpretation even starts.

TL;DR: Most content never reaches AI interpretation, so it cannot be extracted, reused, or shown in answers. The consequence is zero visibility, regardless of quality. The mechanism is missing trust signals that prevent content from entering processing pipelines. Fixing structure, evidence, and consistency allows AI to interpret and reuse content, restoring visibility.

This is not a visibility problem. Your content is being removed before AI systems even decide what it means, so you will never see it used anywhere.

Most content is not competing in AI systems. It is removed before it is even seen. The real problem is not ranking failure but structural exclusion from interpretation.

If you miss this layer, nothing you publish will ever appear in AI-driven systems, no matter how much you optimize later.

Definition: AI content eligibility is the condition in which structural and trust signals allow content to enter interpretation pipelines instead of being excluded before processing begins.

Content that lacks trust signals does not get evaluated, compared, or scored. It never exists inside AI systems as a usable input. It is filtered out at the earliest stage and never becomes part of AI-generated answers because it never enters the system at all.

The Moment Content Gets Excluded Before Interpretation

If content fails here, nothing else matters. Interpretation never starts, and visibility becomes impossible.

The critical failure happens before any meaning is extracted. AI systems decide whether content is eligible for interpretation based on structural and verification signals, not on perceived quality or intent.

This means the majority of content never reaches the stage where it could be understood. It is not misunderstood or undervalued; it is simply ignored as non-processable input and leaves the system without ever being analyzed.

At this stage, the system is not asking “is this good content?” It is asking “can this be reliably interpreted?” If the answer is no, the content is excluded immediately.

For more on how these signals determine eligibility and processing, see the deeper breakdown of AI trust signals in modern discovery systems, which explains how models detect and validate structural reliability before interpretation begins.

This leads to a non-obvious conclusion. Content does not fail gradually in AI systems; it fails instantly at the entry point.

This exclusion is not a subjective quality judgment. It is the result of a strict validation system that determines whether content is allowed into AI processing at all. Before any meaning is constructed, models evaluate structural consistency, evidence presence, and signal reliability in a defined sequence. If these conditions are not met, content is rejected at the entry point and never becomes part of the system. A deeper breakdown of how AI trust signals work at the interpretation level explains how this validation process operates.

Missing Trust Signals = Zero Entry Into AI Systems

Without trust signals, content does not compete. It never enters the system at all.

AI systems rely on detectable patterns to determine whether content can be processed. These patterns include structure, evidence, and consistency across entities and terminology.

When these signals are missing, the system cannot reliably segment or validate the content. As a result, it cannot convert text into structured meaning that can be reused.

This is not a matter of partial degradation. The absence of trust signals creates a binary outcome where content either enters the system or is completely excluded.

In practical terms, this means your content does not exist in AI-generated answers because it was never accepted into the system.

Principle: AI systems do not degrade weak content. They filter it out before interpretation if trust signals are incomplete or structurally inconsistent.

This leads to a critical mechanism that determines whether content is processed or rejected.

Mechanism Breakdown

Step 1: Input parsing

The system scans structure, headings, and layout patterns to detect segmentation boundaries and content hierarchy.

Step 2: Signal validation

It evaluates whether the content includes stable terminology, factual grounding, and consistent entity references.

Step 3: Eligibility decision

If signals are incomplete or inconsistent, the system flags the content as unreliable and stops processing.

Step 4: Interpretation gate

Only content that passes validation moves forward into semantic interpretation and reuse pipelines.

This leads to a strict rule. If trust signals are weak, interpretation never starts.

Interpretation Anchor: AI systems do not interpret content first and then evaluate it. They evaluate structural and trust signals first and only interpret content that passes this gate.
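The four steps in the Mechanism Breakdown can be illustrated with a toy eligibility gate. The Python sketch below is not how any real AI system is implemented; it only models the order described above (parse, validate, decide, and only then interpret). The function names, the markdown-style `#` heading convention, the evidence pattern (a URL or a percentage), and the `entity_variants` list are all assumptions made for illustration.

```python
import re
from dataclasses import dataclass, field


@dataclass
class GateResult:
    eligible: bool
    failed_checks: list[str] = field(default_factory=list)


def parse_sections(text: str) -> list[tuple[str, str]]:
    """Step 1 (input parsing): split text into (heading, body) pairs.

    Assumes markdown-style '#' headings. Text before the first heading is ignored.
    """
    sections: list[tuple[str, str]] = []
    heading, body = None, []
    for line in text.splitlines():
        match = re.match(r"^#{1,6}[ \t]+(.+)", line)
        if match:
            if heading is not None:
                sections.append((heading, "\n".join(body).strip()))
            heading, body = match.group(1).strip(), []
        elif heading is not None:
            body.append(line)
    if heading is not None:
        sections.append((heading, "\n".join(body).strip()))
    return sections


def validate_signals(text: str, sections: list[tuple[str, str]],
                     entity_variants: list[str]) -> list[str]:
    """Step 2 (signal validation): return the names of the checks that fail."""
    failed = []
    # Structure: at least two sections, and no heading with an empty body.
    if len(sections) < 2 or any(not body for _, body in sections):
        failed.append("structure")
    # Evidence (hypothetical heuristic): the text contains a URL or a percentage.
    if not re.search(r"https?://\S+|\d+(?:\.\d+)?\s?%", text):
        failed.append("evidence")
    # Entity consistency: only one of the competing names for a concept is used.
    lowered = text.lower()
    used = [v for v in entity_variants if v.lower() in lowered]
    if len(used) > 1:
        failed.append("entity_consistency")
    return failed


def eligibility_gate(text: str, entity_variants: list[str]) -> GateResult:
    """Steps 3 and 4: any failed check stops processing before interpretation."""
    sections = parse_sections(text)
    failed = validate_signals(text, sections, entity_variants)
    return GateResult(eligible=not failed, failed_checks=failed)
```

Calling `eligibility_gate(page_text, ["trust signals", "credibility markers"])` returns the list of failed checks, which makes the binary outcome explicit: a single failed check marks the page as not eligible, and nothing downstream runs.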

Why “Good Content” Still Fails in AI Search

This is where most creators get it wrong. Quality alone does not guarantee interpretation.

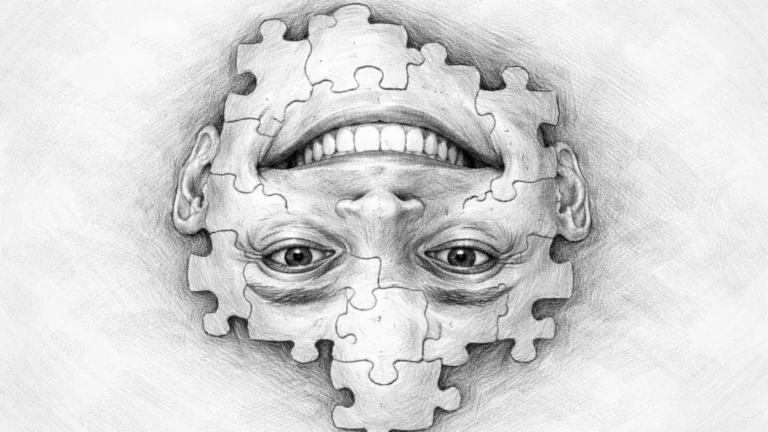

Content can appear high quality to humans and still fail completely in AI systems. The difference lies in how quality is evaluated.

Human evaluation is based on clarity, usefulness, and style. AI evaluation is based on structural predictability and verification signals.

Example: A page with inconsistent structure and no verifiable signals cannot be segmented into stable units, causing AI systems to reject it before any interpretation occurs.

This creates a mismatch where well-written content is still invisible because it does not meet machine-level requirements. The system cannot infer structure or validate claims without explicit signals.

At this stage, visibility is not reduced. It is completely removed.

This leads to a hidden failure pattern. Content that feels complete to a human reader can still be structurally incomplete for AI interpretation.

Failure Pattern

No structural consistency means the system cannot segment meaning into stable units. Without clear boundaries, paragraphs become ambiguous and unusable.

No evidence signals means the system cannot verify claims. Without data or references, statements are treated as low-confidence and are not reused.

No entity consistency means the system cannot connect concepts across content. Without stable naming, it cannot build a reliable internal representation.

Each of these failures compounds the others. The result is not lower visibility, but total exclusion from AI-generated outputs.

Hidden Failure Patterns That Kill AI Visibility

The most damaging failures are not obvious. They are invisible to traditional SEO analysis, yet they determine whether your content exists in AI systems.

One pattern is structural drift, where formatting changes across sections or articles. This breaks pattern recognition and reduces confidence in interpretation.

Another pattern is semantic inconsistency, where the same concept is described differently across pages. This prevents the system from building stable connections.

A third pattern is missing verification, where claims are not supported by data or external references. This creates uncertainty that blocks reuse.

These failures are not isolated. They interact to form a system-level breakdown where content becomes non-interpretable.
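Structural drift is the easiest of these patterns to check mechanically. As a rough sketch, assuming pages are available as markdown-style text with `#` headings, the snippet below reduces each page to its sequence of heading levels and reports pages that deviate from the most common sequence. The helper names and the "most common signature wins" rule are illustrative assumptions, not a description of what any AI system does.

```python
import re
from collections import Counter


def heading_signature(text: str) -> tuple[int, ...]:
    """Return the sequence of heading levels in a page, e.g. (1, 2, 2)."""
    return tuple(
        len(m.group(1))
        for m in re.finditer(r"^(#{1,6})[ \t]+\S", text, re.MULTILINE)
    )


def find_structural_drift(pages: dict[str, str]) -> list[str]:
    """Return the names of pages whose heading signature differs from the
    most common signature across all pages (ties go to the first one seen)."""
    signatures = {name: heading_signature(text) for name, text in pages.items()}
    if not signatures:
        return []
    most_common, _ = Counter(signatures.values()).most_common(1)[0]
    return sorted(name for name, sig in signatures.items() if sig != most_common)
```

A page flagged here is not necessarily wrong. The check only surfaces where formatting diverges so that the divergence can be reviewed and either justified or normalized.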

This leads to a simple but critical question: not how to improve ranking, but whether your content even enters the system.

Checklist:

- Does the content contain clear structural signals for segmentation?

- Are trust signals present to validate interpretation eligibility?

- Is terminology consistent across sections and related pages?

- Does the content include verifiable evidence or factual grounding?

- Can each paragraph function as an independent unit of meaning?

- Would AI systems interpret this content without ambiguity?

If multiple answers are “no”, the content is likely excluded before interpretation. In that state, optimization does not improve performance because the content is not part of the system at all.
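The checklist can also be recorded as data, so the same review is applied to every page. The sketch below is only one possible encoding: the item keys are shorthand invented here for the six questions, and reading "multiple" as "more than one" (`max_no=1`) is an assumption rather than a threshold stated in this article.

```python
# Shorthand keys for the six checklist questions, in the order listed above.
CHECKLIST = (
    "clear_structural_signals",
    "trust_signals_present",
    "consistent_terminology",
    "verifiable_evidence",
    "independent_paragraphs",
    "unambiguous_interpretation",
)


def review_checklist(answers: dict[str, bool], max_no: int = 1) -> dict:
    """Summarize a manual review.

    `answers` maps each checklist key to True ("yes") or False ("no").
    The page is marked as likely excluded when the number of "no"
    answers exceeds `max_no`.
    """
    missing = [item for item in CHECKLIST if item not in answers]
    if missing:
        raise ValueError(f"unanswered checklist items: {missing}")
    failed = [item for item in CHECKLIST if not answers[item]]
    return {"failed": failed, "likely_excluded": len(failed) > max_no}
```

The answers still come from a human reviewer. The function only keeps the decision rule consistent from one page to the next.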

Next: once content passes this entry layer, the next constraint is not visibility but reuse. This is where deeper system mechanics determine whether content becomes part of AI-generated answers or remains unused.

Structural Conditions of AI Content Eligibility

- Pre-interpretation filtering. Structural and verification signals determine whether content is accepted into processing or excluded before interpretation begins.

- Signal-dependent segmentation. Consistent structure and terminology define whether AI systems can segment meaning into usable units or reject the content entirely.

These structural conditions explain how AI systems decide content eligibility, shaping whether information becomes interpretable or remains excluded from generative processing.

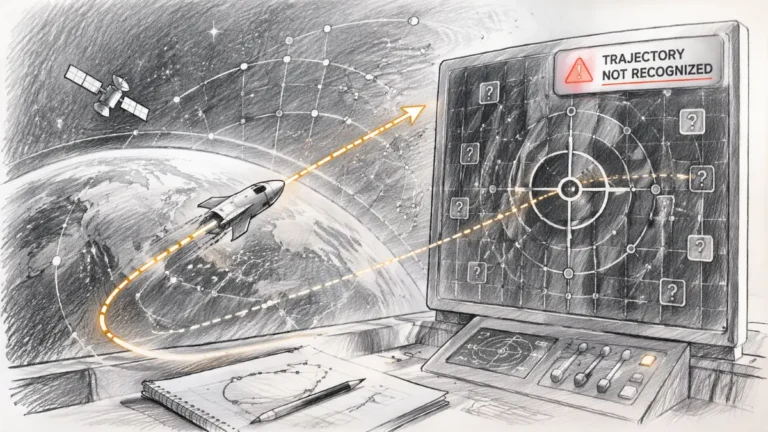

AI Content Eligibility and Interpretation Flow

AI systems do not process all content equally. They first evaluate structural and trust signals to decide whether content enters the interpretation pipeline or is excluded before meaning is reconstructed.

[Structural Signals Check]

↓

[Trust Signal Validation]

↓

[Content Eligibility Decision]

↓

─────────────────────────

↓

[Interpretation Layer]

↓

[Meaning Extraction]

↓

[Content Reuse in AI Systems]

Failure Principle: If trust signals fail at early validation, content never enters interpretation. AI systems do not degrade weak inputs. They exclude them and shift to more reliable sources.

FAQ: Why AI Ignores Content

Why does AI ignore content without trust signals?

AI does not ignore content randomly. If structural and verification signals are missing, the content is excluded before interpretation and never enters processing.

Is ignored content still evaluated by AI systems?

No. Content without sufficient trust signals is filtered out at the entry stage, meaning it is never evaluated, ranked, or reused in AI outputs.

What causes content to be excluded from AI answers?

Exclusion happens when structure is inconsistent, evidence is missing, or entities are not aligned, making the content unreliable for machine interpretation.

Can high-quality content still fail in AI search?

Yes. Human-readable quality does not guarantee AI usability. Without clear structure and verification signals, content cannot be processed or reused.

How do AI systems decide which content to process?

AI systems rely on structural consistency, factual grounding, and trust signals to determine whether content qualifies for interpretation and reuse.

Glossary: Key Terms in AI Content Exclusion

This glossary defines the core concepts that explain why content is filtered out before interpretation in AI-driven systems.

AI Content Exclusion

The process where content is filtered out before interpretation because it lacks structural or verification signals required by AI systems.

Trust Signals

Machine-detectable indicators such as structure, evidence, and consistency that determine whether content can be processed and reused.

Content Eligibility

The condition that defines whether content meets the minimum structural and verification requirements to enter AI processing pipelines.

Pre-Interpretation Filtering

The stage where AI systems evaluate structural reliability and remove content before any meaning extraction or analysis begins.

AI Processing Pipeline

A sequence of stages where content is validated, interpreted, and transformed into reusable knowledge within AI-driven systems.