Last Updated on April 19, 2026 by PostUpgrade

How AI Trust Signals Work: The Interpretation Mechanism

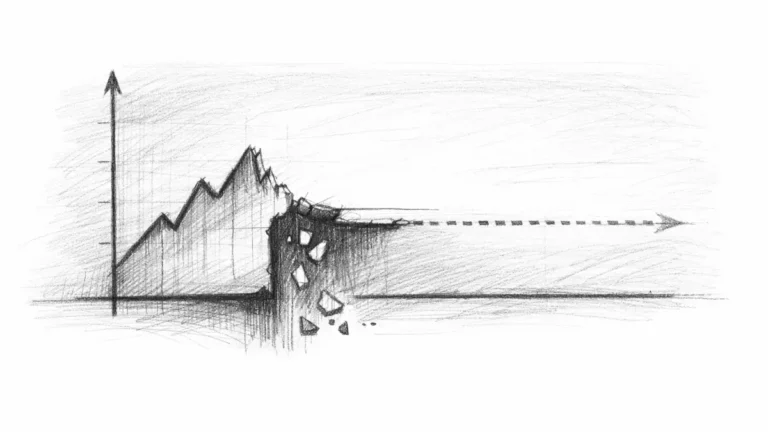

AI does not evaluate your content step by step—it validates signals first, and anything that fails is never interpreted or reused.

TL;DR: Content fails because AI cannot detect or validate trust signals, so interpretation, extraction, and reuse never happen. The mechanism is a pipeline of detection, validation, and alignment where inconsistent signals break confidence. Fixing structural, factual, and entity signals allows AI to process and reuse content reliably.

Signal evaluation is the stage where most content breaks. AI systems do not interpret a page first and check its signals afterwards: they evaluate the signals first, and if those fail, the content does not move forward.

AI does not “trust” content in a human sense. It evaluates whether signals inside the page can be detected, validated, and reused without ambiguity. If those signals are weak or inconsistent, the system cannot reliably process the content.

This creates a clear mechanism behind how AI evaluates content trust. Understanding this mechanism explains how AI detects reliable content, how it interprets structure and evidence, and how it decides content credibility.

How AI Systems Actually Evaluate Content

This is where most assumptions break. AI does not judge quality—it validates whether signals can be processed.

AI systems do not begin with meaning. They begin with signal detection and only move forward when patterns meet minimum reliability conditions. This shifts the entire evaluation process from “quality judgment” to “pattern validation.”

At this point, content either becomes interpretable or starts losing reliability immediately.

Content is first scanned for machine-detectable structures that define boundaries, relationships, and consistency. These signals allow the system to convert raw text into structured units that can be processed and compared.

Definition: AI trust signals are machine-detectable patterns that allow systems to detect, validate, and align content before interpretation and reuse decisions are made.

This leads to a layered evaluation model. Instead of reading the page as a whole, AI systems break it into interpretable segments and assess whether each segment meets structural and factual expectations.

In practical terms, this means your content must produce detectable signals before it can be understood or reused.
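As a rough illustration of what "converting raw text into structured units" could look like, the sketch below splits a page into heading-bounded segments. It is not how any particular AI system works: it assumes Markdown-style `#` headings, and the name `segment_by_headings` is hypothetical.

```python
import re

# Assumption: headings are written Markdown-style, e.g. "## Steps".
HEADING = re.compile(r"^(#{1,6})\s+(.*)$")

def segment_by_headings(text):
    """Split text into (heading, body) units.

    Text that appears before the first heading gets heading None.
    """
    segments = []
    heading, body = None, []

    def flush():
        # Keep a unit only if it has a heading or some non-blank body text.
        if heading is not None or any(line.strip() for line in body):
            segments.append((heading, "\n".join(body).strip()))

    for line in text.splitlines():
        match = HEADING.match(line)
        if match:
            flush()
            heading, body = match.group(2).strip(), []
        else:
            body.append(line)
    flush()
    return segments

sample = "# Intro\nTrust signals matter.\n\n## Steps\nDetect, then validate."
segments = segment_by_headings(sample)
for title, text in segments:
    print(repr(title), "->", repr(text))
```

A page with no headings at all comes back as a single unit with no title, which is one simple way to picture why missing structure leaves a system with nothing to compare section against section.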

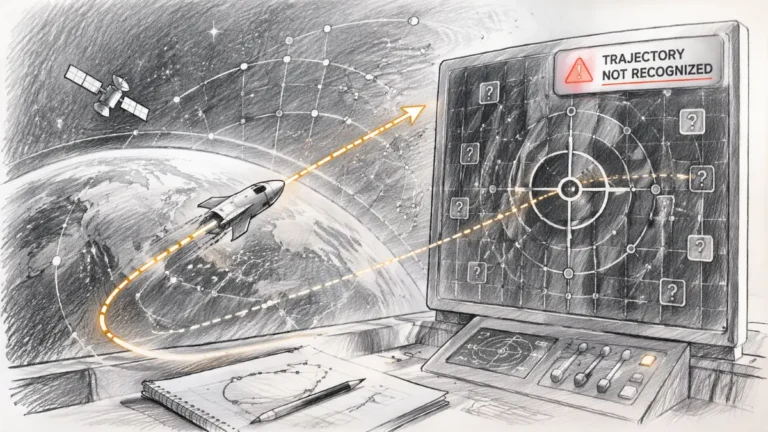

The Trust Signal Detection Pipeline (Step-by-Step)

AI evaluation follows a strict sequence in which each stage determines whether the next one can occur. If signals fail at any stage, the system reduces confidence, interpretation weakens or stops, and reuse is limited.

The interpretation mechanism explains how AI detects and validates trust signals—but detection alone does not make content usable. If signals are not intentionally constructed as a system, they fail during validation and never reach reuse. A practical system for building AI trust signals step by step shows how to turn structural, factual, and entity signals into a stable configuration that AI can consistently detect, validate, and rely on.

Mechanism Breakdown

Step 1: Signal detection

The system scans for structural markers such as headings, segmentation patterns, and consistent formatting. These signals define how content can be divided into meaningful units.

Step 2: Signal validation

Detected signals are evaluated for consistency and clarity. The system checks whether terminology, entities, and relationships remain stable across the content.

This is where inconsistent signals reduce confidence and weaken further interpretation.

Step 3: Context alignment

Signals are compared across sections to confirm that meaning remains coherent. Misalignment reduces confidence and weakens interpretability.

Step 4: Reuse decision

Only content that maintains stable signals across all layers is selected for reuse in AI-generated outputs. Weak signals limit or block this step entirely.

Interpretation Anchor: AI systems do not interpret content continuously—they move through a validation chain where each stage determines whether interpretation can exist at all.

Principle: AI systems do not interpret content continuously—they evaluate signal stability at each stage, increasing or reducing confidence before allowing reuse.
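The four steps above can be pictured as a gated chain. The sketch below is a toy model under stated assumptions, not a description of a real system: the page is a plain dictionary, and the checks `has_structure`, `terms_are_stable`, and `sections_agree` are hypothetical stand-ins for detection, validation, and alignment.

```python
from typing import Callable, Dict, List, Tuple

Page = Dict[str, list]
Stage = Tuple[str, Callable[[Page], bool]]

# Hypothetical stand-ins for each stage; real systems are far more complex.
def has_structure(page: Page) -> bool:
    """Step 1, detection: at least one heading was found."""
    return len(page["headings"]) > 0

def terms_are_stable(page: Page) -> bool:
    """Step 2, validation: the main entity is named exactly one way."""
    return len(set(page["entity_names"])) == 1

def sections_agree(page: Page) -> bool:
    """Step 3, alignment: no contradictions were flagged between sections."""
    return len(page["contradictions"]) == 0

STAGES: List[Stage] = [
    ("detection", has_structure),
    ("validation", terms_are_stable),
    ("alignment", sections_agree),
]

def run_pipeline(page: Page) -> Tuple[bool, List[str]]:
    """Run the stages in order and stop at the first failure.

    Returns (reusable, names of the stages that passed).
    """
    passed: List[str] = []
    for name, check in STAGES:
        if not check(page):
            return False, passed
        passed.append(name)
    return True, passed  # Step 4: reuse only if every stage passed

stable_page = {
    "headings": ["Intro"],
    "entity_names": ["trust signals", "trust signals"],
    "contradictions": [],
}
drifting_page = {
    "headings": ["Intro"],
    "entity_names": ["trust signals", "credibility markers"],
    "contradictions": [],
}

print(run_pipeline(stable_page))
print(run_pipeline(drifting_page))
```

The second page passes detection but stops at validation because the same entity is named two ways, so the alignment check never runs. That early exit is the point of the chain model.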

Structural vs Factual vs Entity Signals Explained

AI systems do not rely on a single signal. They rely on several signal categories that work together to establish trust. Each category contributes a different type of validation, and failure in one category weakens the entire system.

Structural signals define how content is organized. These include headings, paragraph boundaries, and layout patterns that allow segmentation into interpretable units.

Factual signals provide evidence and grounding. These include data points, references, and verifiable statements that support claims and reduce uncertainty.

Entity signals ensure consistency across concepts. These include stable terminology, repeated naming patterns, and clear relationships between ideas.
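Of the three categories, factual signals are the easiest to approximate in a few lines. The sketch below counts surface markers of evidence in a paragraph: URLs, bracketed numeric citations such as `[1]`, and numbers. The patterns and the function name `factual_markers` are assumptions chosen for illustration, and counting markers says nothing about whether the evidence is actually correct.

```python
import re

# Assumed surface patterns; a real evidence check would need far more than this.
URL = re.compile(r"https?://\S+")
CITATION = re.compile(r"\[\d+\]")
NUMBER = re.compile(r"\b\d+(?:\.\d+)?%?")

def factual_markers(paragraph):
    """Count URLs, bracketed citations, and standalone numbers in a paragraph."""
    urls = URL.findall(paragraph)
    rest = URL.sub(" ", paragraph)       # remove URLs so their digits are not recounted
    citations = CITATION.findall(rest)
    rest = CITATION.sub(" ", rest)       # remove citations for the same reason
    numbers = NUMBER.findall(rest)
    return {"urls": len(urls), "citations": len(citations), "numbers": len(numbers)}

supported = "Adoption rose 42% in 2024 [1], see https://example.com/report2024."
unsupported = "This approach is widely considered the best."

print(factual_markers(supported))
print(factual_markers(unsupported))
```

The first sentence carries two numbers, one citation, and one link, while the second carries none, which is the kind of difference the "evidence and grounding" description points to.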

These categories are not independent. They interact to form a unified trust model that determines whether content can be reliably processed.

If these signals fail to align, interpretation does not merely get a little worse: confidence can fall far enough that the content is not interpreted or reused at all.

To see how these categories form a complete system, see the breakdown of the core categories of trust signals AI relies on, which expands on their role within the full interpretation pipeline.

Failure Pattern

When one category becomes inconsistent, the system cannot maintain confidence across the pipeline. Structural instability breaks segmentation, missing evidence weakens validation, and inconsistent entities disrupt meaning alignment.

These failures compound each other. The result is not a single weak signal, but a systemic drop in confidence that limits interpretation and reuse.

For your content, this means all signal types must align, or overall trust will drop.
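One way to picture how these failures compound is to multiply per-category scores instead of averaging them. This is an assumed toy model, not a documented scoring formula: the scores, the 0 to 1 scale, and the function `combined_confidence` are all hypothetical.

```python
import math

def combined_confidence(scores):
    """Multiply per-category scores in [0, 1].

    Because the scores are multiplied, one weak category pulls the
    whole result down instead of being averaged away.
    """
    for name, value in scores.items():
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} score must be between 0 and 1, got {value}")
    return math.prod(scores.values())

aligned = {"structural": 0.9, "factual": 0.9, "entity": 0.9}
one_weak = {"structural": 0.9, "factual": 0.4, "entity": 0.9}

print(round(combined_confidence(aligned), 3))   # 0.729
print(round(combined_confidence(one_weak), 3))  # 0.324
```

Under this assumption, dropping a single category from 0.9 to 0.4 cuts the combined score by more than half, even though the other two categories did not change.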

Why Consistency Matters More Than Quality

Consistency is what keeps signals detectable across the entire page. Without it, even strong individual elements cannot form a stable interpretation, and otherwise strong content becomes unreliable.

AI systems evaluate patterns across sections, not isolated paragraphs. This means that variation in structure, terminology, or evidence reduces confidence, even if each part seems valid on its own.

At this stage, variation does not improve content—it breaks interpretation.

This leads to a critical distinction. High-quality content can still fail if its signals are not consistent, while simpler content can succeed if its signals remain stable.
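Terminology drift is one kind of inconsistency that is easy to check for yourself. The sketch below counts how often each known variant of a single entity's name appears across sections. The variant list is supplied by hand, and the helper names `term_variants_used` and `is_consistent` are hypothetical, so treat it as an editing aid and not as a model of what AI systems do internally.

```python
import re
from collections import Counter

def term_variants_used(sections, variants):
    """Count whole-phrase, case-insensitive occurrences of each variant across sections."""
    counts = Counter()
    for text in sections:
        lowered = text.lower()
        for variant in variants:
            pattern = r"\b" + re.escape(variant.lower()) + r"\b"
            counts[variant] += len(re.findall(pattern, lowered))
    # Report only the variants that actually appear.
    return {variant: n for variant, n in counts.items() if n > 0}

def is_consistent(sections, variants):
    """True when at most one variant of the entity's name is in use."""
    return len(term_variants_used(sections, variants)) <= 1

sections = [
    "Trust signals guide reuse.",
    "Credibility markers guide reuse.",
    "Trust signals stay stable.",
]
variants = ["trust signals", "credibility markers"]

print(term_variants_used(sections, variants))
print(is_consistent(sections, variants))
```

Each sentence here is fine on its own, but the page uses two names for one concept, so the check reports it as inconsistent.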

When signals stay consistent in structure, terminology, and evidence, AI systems can interpret and reuse the content with high confidence. When they do not, the system reduces trust and limits the content's visibility in AI-generated outputs.

Once signals are stable, the question is no longer whether content is interpreted, but how it is selected and reused across different AI contexts.

The practical question is whether your signals are stable enough to be trusted.

Checklist:

- Can AI systems detect structural, factual, and entity signals across the page?

- Are signals consistent between sections without contradictions?

- Do detected signals align to form a stable interpretation?

- Is evidence clear enough to support validation at each stage?

- Does terminology remain stable across all references?

- Would AI systems maintain confidence throughout the entire pipeline?

Signal Processing Conditions in AI Interpretation

- Signal detection layering. Structural, factual, and entity signals are scanned as distinct but interdependent layers that define whether content can be segmented and validated.

- Validation coherence modeling. Consistency across signals determines whether detected patterns can be aligned into a stable interpretation or rejected during processing.

These signal conditions explain how AI systems move from detection to validation, shaping whether content becomes interpretable or remains unusable.

AI Trust Signal Evaluation Pipeline

AI systems do not interpret content directly. They process it through a signal-based pipeline where detection, validation, and alignment determine whether meaning can be reconstructed and reused.

[Signal Detection]

↓

[Signal Classification]

↓

[Signal Validation]

↓

[Context Alignment]

↓

[Confidence Modeling]

↓

─────────────────────────

↓

[Interpretation Layer]

↓

[Meaning Extraction]

↓

[Content Reuse in AI Systems]

Failure Principle: If signals are inconsistent or fail validation, confidence drops and interpretation becomes unstable. AI systems reduce reuse or discard the content in favor of more reliable inputs.
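The failure principle describes three outcomes: full reuse, reduced reuse, or discarding the content. A minimal sketch of that decision is shown below. The thresholds `reuse_at` and `discard_below` are invented for illustration, and nothing in this article establishes real cut-off values.

```python
def reuse_decision(confidence, reuse_at=0.8, discard_below=0.4):
    """Map a confidence score in [0, 1] to one of three outcomes.

    The thresholds are hypothetical defaults, not values taken from any real system.
    """
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if confidence >= reuse_at:
        return "reuse"
    if confidence < discard_below:
        return "discard"
    return "reduced reuse"

for score in (0.9, 0.6, 0.2):
    print(score, "->", reuse_decision(score))
```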

FAQ: How AI Trust Signals Work

How do AI trust signals work in content evaluation?

AI systems detect, validate, and align structural, factual, and entity signals to determine whether content can be interpreted and reused.

How does AI evaluate content trust?

Trust is evaluated through signal consistency across sections, where stable patterns increase confidence and enable interpretation and reuse.

What signals does AI use to detect reliable content?

AI relies on structural organization, verifiable evidence, and consistent entities to detect whether content can be processed reliably.

How does AI decide content credibility?

Credibility is determined by how well signals align across the page, allowing AI systems to maintain confidence during interpretation.

Why does inconsistency break AI interpretation?

When signals conflict or change across sections, AI systems lose confidence, reducing interpretation accuracy and limiting content reuse.

Glossary: Key Terms in AI Trust Signal Evaluation

This glossary defines the core concepts behind how AI systems detect, validate, and interpret trust signals in content.

Trust Signals

Machine-detectable patterns that allow AI systems to evaluate whether content can be interpreted, validated, and reused reliably.

Signal Detection

The process where AI systems scan content to identify structural, factual, and entity signals for further evaluation.

Signal Validation

The stage where detected signals are checked for consistency, clarity, and alignment before interpretation continues.

Context Alignment

The process of ensuring that meaning and signals remain consistent across sections, enabling stable interpretation.

Content Reuse

The outcome where validated and aligned content is selected and integrated into AI-generated answers and summaries.