Last Updated on April 5, 2026 by PostUpgrade

Why AI Ignores Page Structure: Hidden Failure Layer

Your content is not being ignored — it is being structurally rewritten into something AI cannot recognize.

TL;DR: The problem is not content quality but structural misclassification, where AI fails to reconstruct a stable layout and assigns incorrect hierarchy. This leads to broken interpretation, failed extraction, and zero reuse in AI systems. The mechanism is ambiguity in layout signals that forces probabilistic hierarchy decisions. Stabilizing structure restores correct interpretation and enables visibility across AI-driven environments.

If this structural layer fails, every optimization you apply after it becomes irrelevant.

AI Does Not Read Structure — It Reconstructs It

If structural reconstruction fails, the meaning of the page becomes unstable and cannot be reliably interpreted.

Definition: AI understanding depends on the model’s ability to reconstruct structural hierarchy from layout signals and map it into a stable meaning representation.

Most content creators assume that AI reads pages the way humans do. This assumption is incorrect. AI systems do not read linearly. They reconstruct structure before interpreting meaning.

This leads to a critical constraint. If the structure cannot be reconstructed reliably, the meaning cannot be interpreted correctly.

This does not reduce accuracy — it collapses interpretation entirely, making the content unusable for AI systems.

AI models first detect layout signals such as headings, spacing, grouping, and visual hierarchy. These signals are not decorative. They are the primary inputs used to build a structural map of the page.

Because of this, structure is not a formatting layer. It is the foundation of interpretation.

Principle: When structural signals remain stable, AI assigns hierarchy deterministically; when signals conflict, interpretation becomes probabilistic and unstable.

Structural misclassification: the incorrect assignment of hierarchy roles by AI.

This occurs when a model assigns the wrong structural role to elements such as headings, sections, or grouped content blocks.

Where Layout Interpretation Actually Breaks

The critical failure happens before meaning is processed, making all downstream interpretation dependent on a potentially incorrect structure.

The failure does not happen at the level of text. It happens earlier, during structure reconstruction.

AI systems follow a consistent internal process:

- detect visual and structural signals

- segment content into blocks

- infer hierarchy between blocks

- assign semantic roles

- reconstruct meaning

Structure reconstruction: the process of converting layout signals into a hierarchical model used for interpretation.
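The first two steps of this process, signal detection and block segmentation, can be sketched in a few lines. This is a toy illustration, not a description of how any particular AI system is implemented. It assumes the page has already been reduced to a flat stream of `(tag, text)` pairs, and the names `Section` and `segment` are invented for this example.

```python
from dataclasses import dataclass, field


@dataclass
class Section:
    level: int                 # heading depth, 0 for text before any heading
    title: str
    body: list = field(default_factory=list)


def segment(elements):
    """Group a flat stream of (tag, text) pairs into sections.

    A tag of 'h1'..'h6' opens a new section. Any other tag is treated as
    body content and attached to the most recently opened section.
    """
    sections = [Section(0, "")]  # holds content that precedes the first heading
    for tag, text in elements:
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            sections.append(Section(int(tag[1]), text))
        else:
            sections[-1].body.append(text)
    # Drop the preamble section if nothing landed in it.
    if not sections[0].body:
        sections.pop(0)
    return sections


# Hypothetical input: the second section title is marked up correctly.
clean = [("h1", "Guide"), ("p", "Intro"), ("h2", "Setup"), ("p", "Step 1")]
# Same content, but "Setup" is an ordinary paragraph styled to look like a heading.
blurred = [("h1", "Guide"), ("p", "Intro"), ("p", "Setup"), ("p", "Step 1")]

print(len(segment(clean)))    # 2
print(len(segment(blurred)))  # 1
```

In the second input the segmenter has no signal that a new block starts at "Setup", so the title and everything after it are absorbed into the previous section.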

The break occurs when signals are inconsistent or ambiguous. At that moment, the model cannot confidently determine structure.

The model then falls back on forced hierarchy estimation: it assigns structure based on probability rather than certainty. That probabilistic decision defines the entire interpretation path.

Once this path is set incorrectly, the model cannot recover the intended structure later in the process.

Mechanism Breakdown

- AI detects layout signals

- Signals conflict → ambiguity

- Model assigns wrong hierarchy

- Meaning map breaks

- Extraction fails

Example: When a heading visually resembles body text or spacing patterns conflict, AI may assign incorrect hierarchy, causing the meaning map to collapse and preventing reliable extraction.
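One way to picture this ambiguity is a classifier that only trusts strong visual contrast. The sketch below is a deliberately simple heuristic. The `Block` type, the `classify` function, and every threshold in it are assumptions made for illustration, not values taken from any real system.

```python
from dataclasses import dataclass


@dataclass
class Block:
    text: str
    font_size: float   # rendered size in px (assumed to be known)
    bold: bool


def classify(block, body_size=16.0, heading_ratio=1.25, margin=0.1):
    """Label a block 'heading', 'body', or 'ambiguous'.

    The score is the size ratio to body text plus a small bonus for bold.
    All numbers here are illustrative assumptions, not measured values.
    """
    score = block.font_size / body_size + (0.15 if block.bold else 0.0)
    if score >= heading_ratio + margin:
        return "heading"
    if score <= heading_ratio - margin:
        return "body"
    return "ambiguous"   # too close to the threshold to decide confidently


print(classify(Block("Installation", 24.0, True)))    # heading
print(classify(Block("Run the installer.", 16.0, False)))  # body
print(classify(Block("Before you start", 18.0, True)))     # ambiguous
```

The third block is only slightly larger than body text, so it falls inside the margin. Any hierarchy built on top of it depends on a guess.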

This cascade is not gradual. Once the hierarchy is wrong, every downstream layer inherits the error.

This is the exact point where structurally “correct” content becomes invisible.

Structural Inconsistency Creates False Hierarchy

When multiple structural signals compete, the model is forced to choose a hierarchy that may not reflect the actual content logic.

Structural inconsistency: conflicting layout signals that prevent AI from assigning a single stable hierarchy.

Structural inconsistency is the primary cause of misclassification. It introduces multiple competing signals that cannot be resolved into a stable hierarchy.

For example, a section may visually appear as a heading but lack consistent spacing or positioning. Another block may look like supporting content but be formatted with equal visual weight.

Because of this, the model cannot distinguish primary from secondary elements.

As a result, the model may invert importance, treating supporting content as primary and collapsing the intended structure.

This leads to false hierarchy construction.

Instead of recognizing a clear structure, the model builds an incorrect structural tree. In this tree:

- headings may be treated as body text

- supporting content may be treated as primary

- boundaries between sections may disappear

This is not a partial degradation. It is a complete structural reinterpretation.

After this point, the system no longer processes your content as intended.

To understand how this failure emerges in real systems, see why structural ambiguity leads to incorrect hierarchy inference.

Why Small Layout Errors Cause Total Failure

Even minor inconsistencies can trigger a full breakdown of structural interpretation rather than a partial degradation.

A common misconception is that small layout issues have small effects. This assumption is false.

Layout interpretation is not tolerant of inconsistency. It is dependency-based: each layer depends on the previous one.

Because of this, small errors propagate.

For example:

- a slightly inconsistent spacing pattern can shift block grouping

- a misplaced heading level can invert hierarchy

- a mixed container can blur section boundaries
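The heading-level case is easy to reproduce. The sketch below derives each heading's parent from its level alone, then compares two markups of the same hypothetical page. The function names and the sample outline are invented for this example, and heading texts are assumed to be unique.

```python
def parents(headings):
    """Map each heading text to its parent's text (None at the top level).

    `headings` is a list of (level, text) pairs in document order.
    """
    stack = []    # (level, text) path from the top level to the latest heading
    result = {}
    for level, text in headings:
        # Climb back up until the top of the stack is a shallower heading.
        while stack and stack[-1][0] >= level:
            stack.pop()
        result[text] = stack[-1][1] if stack else None
        stack.append((level, text))
    return result


def changed_parents(intended, actual):
    """Return the headings whose parent differs between two markups."""
    before, after = parents(intended), parents(actual)
    return sorted(t for t in before if before[t] != after.get(t))


intended = [(1, "Guide"), (2, "Install"), (3, "Linux"), (3, "macOS"), (2, "Usage")]
# Same page, but "Linux" was marked up as level 2 instead of level 3.
actual = [(1, "Guide"), (2, "Install"), (2, "Linux"), (3, "macOS"), (2, "Usage")]

print(changed_parents(intended, actual))  # ['Linux', 'macOS']
```

One wrong level changes two relationships. "Linux" becomes a sibling of "Install", and "macOS", whose own markup is untouched, becomes a child of "Linux".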

Each of these changes affects how the model constructs the structural map, so the inconsistency does not stay local. It redefines the entire structural model used for interpretation.

Once the map is incorrect, semantic interpretation becomes disconnected from the intended structure and is no longer reliable.

Failure Patterns

- inconsistent spacing

- broken heading levels

- mixed containers

- contradictory visual signals

These are not cosmetic issues. They are structural failure triggers.

Checklist:

- Are layout signals consistent across sections?

- Do heading levels reflect true hierarchy without ambiguity?

- Are content blocks clearly separated by spacing and structure?

- Do visual patterns repeat predictably across the page?

- Is there any conflict between visual weight and semantic role?

- Can hierarchy be reconstructed without probabilistic assumptions?
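Two of these checks can be partly automated for HTML pages. The sketch below uses Python's standard `html.parser` module to flag skipped heading levels and paragraphs that consist entirely of bold text, a common way of faking a heading. The `HeadingAudit` class, the `audit` function, and the choice of rules are assumptions made for this example. It is a rough heuristic, not a complete validator.

```python
from html.parser import HTMLParser


class HeadingAudit(HTMLParser):
    """Collect heading levels and flag two kinds of structural conflict."""

    def __init__(self):
        super().__init__()
        self.levels = []        # heading levels in document order
        self.issues = []
        self._open_p = False    # currently inside a <p>
        self._p_text = ""       # all text inside the current <p>
        self._bold_text = ""    # text inside <b>/<strong> within that <p>
        self._bold_depth = 0

    def handle_starttag(self, tag, attrs):
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            level = int(tag[1])
            # A jump such as h1 -> h3 leaves a gap in the hierarchy.
            if self.levels and level > self.levels[-1] + 1:
                self.issues.append(f"skipped level: h{self.levels[-1]} -> h{level}")
            self.levels.append(level)
        elif tag == "p":
            self._open_p, self._p_text, self._bold_text = True, "", ""
        elif tag in ("b", "strong") and self._open_p:
            self._bold_depth += 1

    def handle_endtag(self, tag):
        if tag in ("b", "strong") and self._bold_depth:
            self._bold_depth -= 1
        elif tag == "p" and self._open_p:
            text = self._p_text.strip()
            # Visual weight of a heading, semantic role of a paragraph.
            if text and text == self._bold_text.strip():
                self.issues.append(f"bold paragraph used as heading: {text!r}")
            self._open_p = False
            self._bold_depth = 0

    def handle_data(self, data):
        if self._open_p:
            self._p_text += data
            if self._bold_depth:
                self._bold_text += data


def audit(html):
    """Return a list of issue descriptions for an HTML string."""
    parser = HeadingAudit()
    parser.feed(html)
    parser.close()
    return parser.issues


page = "<h1>Guide</h1><h3>Install</h3><p><strong>Usage</strong></p><p>Run the <b>tool</b>.</p>"
for issue in audit(page):
    print(issue)
```

An empty result does not prove that a page is structurally sound. It only means these two specific conflicts were not found.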

Conclusion

AI does not ignore your content because it lacks quality. It ignores it because it cannot construct a stable structural interpretation.

When layout signals are inconsistent, the model does not partially understand your page. It reconstructs it incorrectly.

This leads to a complete breakdown of meaning extraction, summarization, and reuse.

At this point, the system no longer treats the page as a reliable source, removing it from downstream AI-driven processes.

Structural misclassification is therefore not a minor issue. It is the primary failure layer in AI visibility.

If the structure is unstable, no optimization layer can compensate.

This means that improving content quality alone will not restore visibility if structural interpretation is already broken.

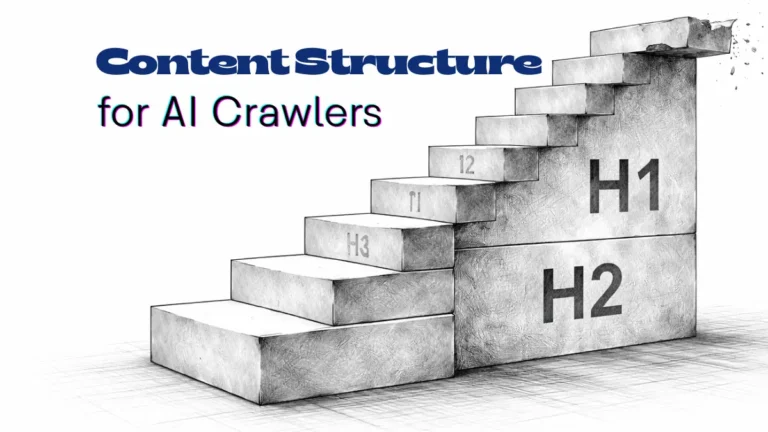

Structural Logic of AI-First Page Architecture

- Structural hierarchy mapping. Layered heading depth defines how AI segments content into discrete semantic units and resolves contextual relationships between sections.

- Layout pattern standardization. Repeated structural patterns create predictable segmentation signals, enabling consistent interpretation across generative systems.

These structural properties determine how reliably AI systems reconstruct page logic, influencing semantic coherence and interpretation stability.

Structural Reconstruction Failure Model

AI systems reconstruct page meaning by resolving structural signals into a hierarchical representation. This model reflects how ambiguity in layout signals leads to misclassification and disrupts the entire interpretation chain.

[Layout Signals Detection]

↓

[Block Segmentation]

↓

[Hierarchy Assignment]

↓

[Semantic Role Mapping]

↓

[Structure Reconstruction]

↓

─────────────────────────

↓

[Interpretation Layer]

↓

[Meaning Map Formation]

↓

[Content Extraction / Reuse]

Failure Principle: When layout signals conflict, hierarchy assignment becomes probabilistic, producing an incorrect structural model. This misclassification propagates through all subsequent layers, leading to failed interpretation and content exclusion from AI-driven systems.

FAQ: Structural Interpretation in AI Systems

Why does AI ignore structurally correct pages?

AI ignores pages when it cannot reconstruct a stable hierarchy. Even visually correct layouts may produce ambiguous signals that lead to structural misclassification.

What is structural misclassification?

Structural misclassification occurs when AI assigns incorrect hierarchy roles to elements, breaking the internal representation of the page.

How do layout signals affect interpretation?

Layout signals define segmentation and hierarchy. When these signals conflict, AI cannot determine structure reliably and shifts to probabilistic interpretation.

Why do small layout errors cause large failures?

Interpretation is dependency-based. Minor inconsistencies propagate through structural layers, distorting the entire meaning reconstruction process.

What happens after structure reconstruction fails?

Once the structural model is incorrect, meaning extraction collapses. The content becomes unreliable for summarization and is excluded from AI reuse.

Glossary: Structural Interpretation Terms

This glossary defines the core concepts required to understand how AI systems reconstruct, misclassify, and interpret page structure.

Structural Misclassification

Incorrect assignment of hierarchy roles by AI systems, leading to a broken internal representation of page structure.

Layout Signals

Visual and structural cues such as headings, spacing, and grouping that AI uses to reconstruct hierarchy and segment content.

Structural Reconstruction

The process by which AI systems convert layout signals into a hierarchical model used for interpretation and meaning extraction.

False Hierarchy

An incorrect structural model where content importance and relationships are misassigned due to ambiguous layout signals.

Meaning Map

The internal representation AI builds to connect sections and derive meaning, which collapses when structural errors occur.