Last Updated on April 19, 2026 by PostUpgrade

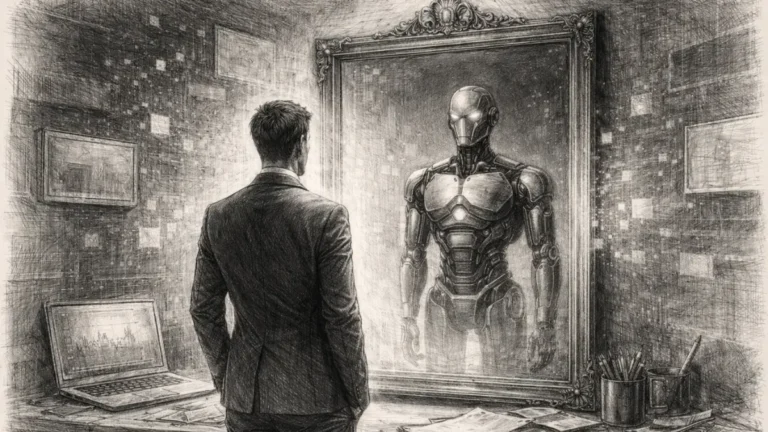

How to Build AI Trust Signals: A Practical Implementation System

Adding isolated improvements is not enough: AI systems rely on content only when all trust signals work together as a system.

TL;DR: Content fails because signals are added partially, so AI cannot validate or interpret it reliably. The mechanism is a system of structure, evidence, and entity consistency that must align across the page. Building all signals together allows AI to detect, interpret, and reuse content with confidence.

This is where most content breaks. If it is not built as a complete signal system, it may look finished, but AI systems cannot verify it and therefore do not use it.

Building AI trust signals in content is not about adding isolated improvements. It is about creating a system where structure, evidence, and consistency work together so AI can detect, validate, and reuse your content.

Most content fails not because it is low quality, but because it does not produce stable signals. This article shows how to build those signals into a repeatable system that makes content usable for AI.

The Minimum Structure Required for AI Interpretation

This is where implementation begins. Without structure, no signal can be detected or validated.

AI systems cannot interpret content without a stable structure. Before meaning is processed, the system must detect clear segmentation and boundaries that define how content is organized.

Definition: Trust-ready content is content built as a unified system of structural, factual, and entity signals that AI systems can detect, validate, and reuse without ambiguity.

Structure is not formatting. It is the foundation that allows signals to be detected and converted into interpretable units.

At this stage, weak structure does not reduce quality—it prevents interpretation entirely.

In practical terms, this means your content must be structured before any other optimization can work.

Mechanism Breakdown

Step 1: Define clear section boundaries

Use a consistent H2–H4 hierarchy to separate ideas into predictable segments. This allows AI systems to identify where each concept begins and ends.

Step 2: Stabilize paragraph structure

Ensure each paragraph expresses one reasoning unit. This reduces ambiguity and allows signals to remain isolated and detectable.

Step 3: Align structural patterns across sections

Repeat the same structural logic throughout the page. Variation in structure breaks detection and reduces confidence.
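The boundary check from Step 1 can be approximated with a small script. This is a minimal sketch, assuming the page is authored in Markdown with `#`-style headings; the function names `heading_levels` and `hierarchy_problems` are hypothetical, and the check says nothing about how any particular AI system parses a page. It only flags headings outside the H2–H4 range and skipped levels.

```python
import re

# Assumption: headings are written as Markdown ATX headings ("## Title").
# Lines starting with "#" inside fenced code blocks would be miscounted
# by this sketch.
HEADING = re.compile(r"^(#{1,6})\s+(.*\S)\s*$")


def heading_levels(markdown: str) -> list[tuple[int, str]]:
    """Return (level, title) for each heading, in document order."""
    found = []
    for line in markdown.splitlines():
        match = HEADING.match(line)
        if match:
            found.append((len(match.group(1)), match.group(2)))
    return found


def hierarchy_problems(markdown: str, top: int = 2, bottom: int = 4) -> list[str]:
    """Flag headings outside H{top}-H{bottom} and skipped levels."""
    problems = []
    previous = None
    for level, title in heading_levels(markdown):
        if not top <= level <= bottom:
            problems.append(f"'{title}': H{level} is outside H{top}-H{bottom}")
        elif previous is None:
            if level != top:
                problems.append(
                    f"'{title}': first heading should be H{top}, found H{level}"
                )
        elif level > previous + 1:
            problems.append(f"'{title}': jumps from H{previous} to H{level}")
        previous = level
    return problems


sample = "## Evidence\nSome text.\n#### Details\nMore text.\n"
for problem in hierarchy_problems(sample):
    print(problem)
```

Running the same check on every page is one way to keep the structural pattern from Step 3 aligned across a site.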

This is the first practical layer of implementation. A separate breakdown of practical techniques for strengthening trust signals in content covers how structural signals connect with validation and reuse.

How to Add Evidence Signals That AI Can Verify

Structure alone does not create trust. AI systems also require evidence signals that confirm whether claims can be validated and reused.

Evidence is not about authority signals in the traditional sense. It is about whether information can be verified within the content itself.

This leads to a critical layer where signals must support claims, not just structure them.

Mechanism Breakdown

Step 1: Attach evidence to key claims

Support statements with data points, references, or explicit explanations. This allows AI to validate meaning without external inference.

Step 2: Keep evidence local to the concept

Place supporting information close to the statement it validates. This maintains alignment and reduces ambiguity.

When evidence is missing or sits far from the claim it supports, validation weakens and confidence drops across the system.

Step 3: Maintain consistency in evidence patterns

Use similar formats for presenting evidence across sections. This helps AI detect patterns and apply validation consistently.
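An editorial pass over these three steps can be partly automated. The sketch below is a rough heuristic under stated assumptions: it treats a paragraph as carrying local evidence if it contains a number, a link, or a bracketed citation such as `[1]`. The pattern names and the `unsupported` helper are hypothetical, the sample text is invented for illustration, and the heuristic does not describe how any AI system actually validates claims.

```python
import re

# Assumption: these three markers stand in for "evidence" in this sketch.
EVIDENCE_PATTERNS = {
    "citation": re.compile(r"\[\d+\]"),
    "link": re.compile(r"https?://|\]\("),
    "number": re.compile(r"\d"),
}


def paragraphs(text: str) -> list[str]:
    """Split text into paragraphs on blank lines."""
    return [p.strip() for p in re.split(r"\n\s*\n", text) if p.strip()]


def evidence_kinds(paragraph: str) -> set[str]:
    """Return the evidence marker types found in one paragraph."""
    return {
        name for name, pattern in EVIDENCE_PATTERNS.items()
        if pattern.search(paragraph)
    }


def unsupported(text: str) -> list[str]:
    """Return paragraphs that contain no evidence marker at all."""
    return [p for p in paragraphs(text) if not evidence_kinds(p)]


# Invented sample text, used only to exercise the functions.
sample = (
    "Load time fell 32% after the change [1].\n\n"
    "Our approach is simply better.\n\n"
    "See https://example.com/report for the raw data."
)
for paragraph in unsupported(sample):
    print("No local evidence:", paragraph)
```

Because `evidence_kinds` returns the marker types per paragraph, the same output can also show whether evidence is presented in a similar format from section to section.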

This leads to a more stable interpretation layer. When evidence is structured correctly, AI systems can confirm meaning instead of estimating it.

Interpretation Anchor: AI systems do not interpret content continuously. They confirm signal stability at each layer before allowing meaning to propagate further.

Principle: AI systems do not accept partial improvements—only fully integrated signal systems can support stable interpretation and content reuse.

Failure Pattern

Partial evidence creates instability. When some sections include validation and others do not, AI systems reduce confidence and limit reuse.

This is why adding evidence selectively does not solve the problem. The system requires full coverage to remain stable.

How to Stabilize Entity and Terminology Signals

Even with structure and evidence, inconsistent terminology breaks meaning across the page.

AI systems rely on consistent entities to maintain meaning across content. If terminology shifts, the system cannot reliably connect concepts.

This is where meaning fragmentation begins if terminology is not controlled.

Entity signals define how ideas are linked. They ensure that references remain stable and relationships are preserved.

For your content, this means consistency is required for AI to connect and track meaning across sections.

Mechanism Breakdown

Step 1: Define core terms early

Introduce key concepts clearly and use the same terminology throughout the content. This prevents fragmentation.

Step 2: Maintain naming consistency

Avoid switching between synonyms when referring to the same concept. Consistency increases detection reliability.

Step 3: Reinforce relationships between entities

Clarify how concepts relate to each other using repeated patterns. This strengthens alignment across sections.
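Naming consistency from Step 2 is straightforward to check mechanically. The following is a minimal sketch, assuming the author maintains a small glossary that maps each canonical term to the variants that should not appear; the `synonym_hits` function and the sample glossary are hypothetical.

```python
import re


def synonym_hits(text: str, glossary: dict[str, list[str]]) -> dict[str, dict[str, int]]:
    """Count discouraged variants per canonical term.

    glossary maps a canonical term to variants that should be replaced by it.
    Returns {canonical: {variant: occurrences}} for variants found in text.
    """
    hits: dict[str, dict[str, int]] = {}
    for canonical, variants in glossary.items():
        for variant in variants:
            # Whole-word, case-insensitive match of the variant phrase.
            pattern = re.compile(r"\b" + re.escape(variant) + r"\b", re.IGNORECASE)
            count = len(pattern.findall(text))
            if count:
                hits.setdefault(canonical, {})[variant] = count
    return hits


# Hypothetical glossary and text for illustration.
glossary = {"trust signals": ["credibility markers", "trust cues"]}
text = (
    "Trust signals matter. These credibility markers help. "
    "Credibility markers again."
)
print(synonym_hits(text, glossary))
```

An empty result means every concept in the glossary was referred to by its canonical name only.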

This leads to a stable semantic layer. When entities remain consistent, AI systems can track meaning across the entire page.

Example: A page where structure is stable, evidence supports every key claim, and terminology remains consistent allows AI systems to validate signals across sections and reuse content reliably.

Failure Pattern

Inconsistent terminology breaks alignment. When the same concept is described differently, AI systems lose confidence and interpretation weakens.

This effect compounds across sections. The result is reduced trust and limited reuse.

How to Turn Content Into a Trust System (Not a Page)

This is the final shift. Content must function as a system, not a collection of improvements.

Trust signals do not work in isolation. They must operate together as a system that continuously supports detection, validation, and interpretation.

At this point, partial implementation does not help—it keeps content unusable for AI systems.

Content becomes reliable when structure, evidence, and entity consistency reinforce each other across all sections.

Mechanism Breakdown

Step 1: Integrate structure, evidence, and entities

Ensure all three signal types are present and aligned in every section. This creates a unified system.

Step 2: Maintain consistency across the entire page

Do not allow signal patterns to vary between sections. Stability is what enables interpretation.

Step 3: Validate the system as a whole

Check whether signals remain detectable and aligned from start to finish. This determines whether content can be reused.
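The whole-page check in Step 3 can be modeled as an all-or-nothing test over per-section results. This is a minimal sketch, assuming each section has already been reviewed (by hand or by scripts) for the three signal types; `SectionReport`, `page_gaps`, and `is_trust_ready` are hypothetical names chosen for this example.

```python
from dataclasses import dataclass

SIGNALS = ("structure", "evidence", "entities")


@dataclass
class SectionReport:
    """Review result for one section: is each signal type present?"""
    title: str
    structure: bool
    evidence: bool
    entities: bool

    def missing(self) -> list[str]:
        """Names of the signal types this section lacks."""
        return [name for name in SIGNALS if not getattr(self, name)]


def page_gaps(reports: list[SectionReport]) -> dict[str, list[str]]:
    """Map each incomplete section title to its missing signal types."""
    return {r.title: r.missing() for r in reports if r.missing()}


def is_trust_ready(reports: list[SectionReport]) -> bool:
    """True only if there is at least one section and none has a gap."""
    return bool(reports) and not page_gaps(reports)


reports = [
    SectionReport("Introduction", structure=True, evidence=True, entities=True),
    SectionReport("Method", structure=True, evidence=False, entities=True),
]
print(page_gaps(reports))
print(is_trust_ready(reports))
```

The design mirrors the article's claim that partial coverage does not count: a single section with a gap makes the whole page fail the check.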

This leads to the final outcome. Content is no longer evaluated as isolated parts but as a complete system of signals.

In practical terms, this determines whether your content can move from detection to reuse without interruption.

This leads to the key question: does your content operate as a complete signal system?

Checklist:

- Are structural, evidence, and entity signals present in every section?

- Do all signals align without contradictions across the page?

- Can AI systems validate each key claim with clear supporting data?

- Is terminology stable when referring to the same concepts?

- Does the content function as a unified system rather than isolated improvements?

- Would AI systems maintain confidence from detection to reuse?

If these conditions are met, content becomes trust-ready and can be interpreted and reused reliably by AI systems. If not, even well-written content remains unstable and limited in visibility.

If the system is incomplete, AI does not downgrade your content—it simply never relies on it.

Signal Integration Logic in Trust-Ready Content

- Signal system integration. Structure, evidence, and entity signals must operate as a unified system where each layer supports detection, validation, and interpretation.

- Cross-layer consistency enforcement. Stability across all signal types determines whether content can maintain confidence and remain usable within AI-driven processing systems.

These conditions define how content transitions from isolated elements into a stable trust system that AI can interpret and reuse.

AI Trust Signal Assembly Model

AI systems do not interpret isolated elements. They assemble content through a coordinated signal system where structure, evidence, and entity consistency must align to enable interpretation and reuse.

[Structural Framework]

↓

[Evidence Integration]

↓

[Entity Stabilization]

↓

[Signal Alignment]

↓

[System Consistency]

↓

─────────────────────────

↓

[Interpretation Readiness]

↓

[Meaning Extraction]

↓

[Content Reuse in AI Systems]

Failure Principle: If signals are implemented partially or fail to align, the system does not become weaker—it remains unusable. AI systems require full signal integration to allow interpretation and reuse.
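The assembly model can be read as an ordered series of gates, where the first failed stage stops everything after it. The sketch below illustrates that reading only; the stage names come from the diagram, while `first_failed_stage`, `reaches_reuse`, and the boolean check results are assumptions made for this example, not a description of any real AI pipeline.

```python
from typing import Optional

# Stage names taken from the diagram above the divider line.
STAGES = [
    "Structural Framework",
    "Evidence Integration",
    "Entity Stabilization",
    "Signal Alignment",
    "System Consistency",
]


def first_failed_stage(checks: dict[str, bool]) -> Optional[str]:
    """Return the first stage that did not pass, or None if all passed.

    A stage that is absent from `checks` is treated as failed.
    """
    for stage in STAGES:
        if not checks.get(stage, False):
            return stage
    return None


def reaches_reuse(checks: dict[str, bool]) -> bool:
    """True only if every stage passed, in order."""
    return first_failed_stage(checks) is None


# Hypothetical review results for one page.
checks = {stage: True for stage in STAGES}
checks["Entity Stabilization"] = False
print(first_failed_stage(checks))
print(reaches_reuse(checks))
```

Reporting the first failed stage, rather than a score, matches the failure principle: later stages are not evaluated once an earlier one fails.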

FAQ: How to Build AI Trust Signals

How do you build AI trust signals in content?

Trust signals are built by aligning structure, evidence, and entity consistency so AI systems can detect, validate, and interpret content reliably.

What makes content usable for AI systems?

Content becomes usable when signals are complete and consistent, allowing AI to process meaning without gaps or contradictions.

How do you optimize content for AI trust?

Optimization requires building signals as a system, not individually, ensuring structure, evidence, and terminology work together.

Why does partial implementation fail?

When signals are incomplete or inconsistent, AI systems cannot validate content, reducing confidence and preventing interpretation.

How do you improve AI content visibility?

Visibility improves when content forms a stable trust system that AI can interpret, validate, and reuse across generated outputs.

Glossary: Key Terms in AI Trust Signal Implementation

This glossary defines the core concepts required to build content that AI systems can detect, validate, and reuse reliably.

Trust-Ready Content

Content structured with aligned signals that allow AI systems to interpret, validate, and reuse meaning without ambiguity.

Structural Signals

Layout and hierarchy elements that allow AI to segment content into clear, interpretable units.

Evidence Signals

Verifiable data and supporting statements that enable AI systems to validate claims during interpretation.

Entity Consistency

Stable use of terminology that allows AI systems to maintain meaning and relationships across the entire page.

Signal Integration

The process of combining structure, evidence, and entity signals into a unified system for reliable interpretation.