Last Updated on April 12, 2026 by PostUpgrade

How Entity-Based SEO Actually Works

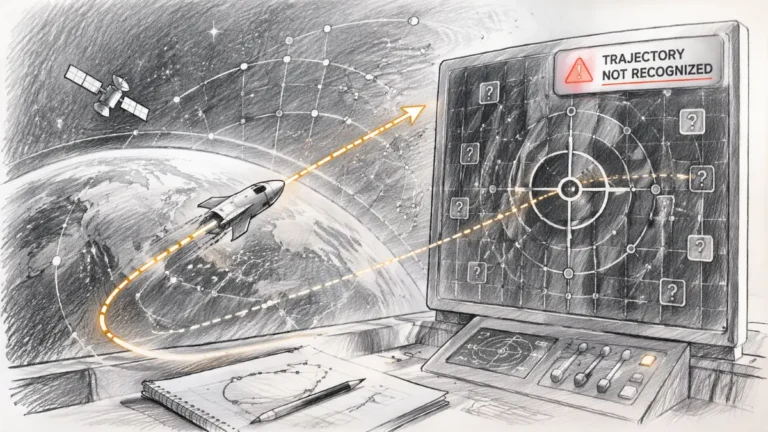

If you are trying to rank content and nothing happens, this is where the process actually breaks. Not at optimization, not at links — but at interpretation.

Your content is not being ranked — it is being structurally rejected before it ever becomes part of the system.

TL;DR: Most content fails not because of poor optimization, but because AI cannot reliably interpret it as entities and relationships. When detection, disambiguation, or graph construction breaks, the system does not downgrade the page — it excludes it entirely from interpretation. The mechanism is entity processing into structured graphs that enable meaning propagation. The solution is to stabilize entity detection, enforce clear context, and build explicit relationships so AI can extract and reuse your content. The outcome is inclusion in the graph, which is the only path to visibility in AI-driven systems.

If your entities do not stabilize at the detection layer, everything that follows in this article never happens.

AI does not read pages.

It converts content into entities, relationships, and graphs.

Every piece of content is decomposed into identifiable units, mapped into a structured network, and evaluated based on how those units connect and propagate meaning.

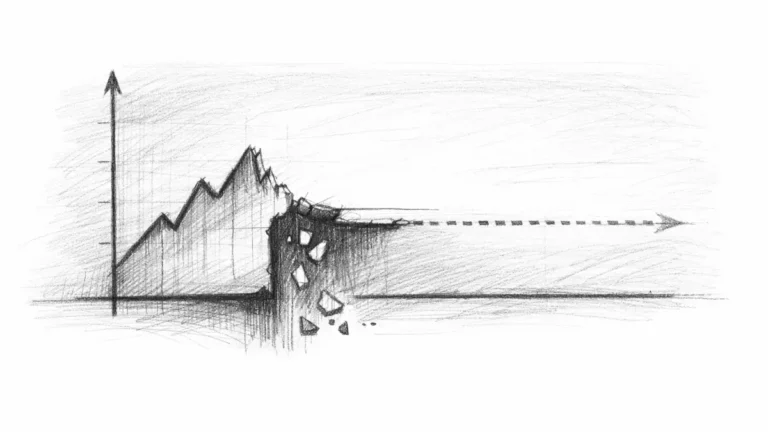

If this pipeline breaks at any stage, content is not misranked — it is excluded from interpretation.

From Text to Entities: Detection Layer

If entities are not detected at this stage, your content does not degrade in visibility — it never enters the system at all.

This is the first gate in the pipeline.

Definition: Entity processing is the transformation of raw text into identifiable units that can be classified, connected, and used as nodes in an AI-readable graph structure.

AI systems process raw text using named entity recognition (NER). At this stage, the system extracts concepts, objects, organizations, attributes, and actions, and evaluates each detected unit as a potential entity candidate.

The detection process operates through token classification, contextual signals, and statistical pattern recognition. Text is decomposed into tokens, tokens become candidates, and candidates are classified into entities.
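The three steps (tokens, candidates, entities) can be illustrated with a toy example. This is a minimal sketch, not how production NER works: it assumes a small hand-written dictionary (`GAZETTEER`, hypothetical) in place of a trained model, and treats every run of up to three tokens as a candidate span.

```python
import re

# Hypothetical lookup table standing in for a trained NER model.
GAZETTEER = {
    "google": "ORGANIZATION",
    "knowledge graph": "CONCEPT",
    "paris": "LOCATION",
}


def tokenize(text: str) -> list[str]:
    """Step 1: decompose text into word tokens."""
    return re.findall(r"[A-Za-z]+", text)


def candidates(tokens: list[str], max_len: int = 3) -> list[str]:
    """Step 2: every run of 1 to max_len consecutive tokens becomes a candidate."""
    spans = []
    for start in range(len(tokens)):
        for length in range(1, max_len + 1):
            if start + length <= len(tokens):
                spans.append(" ".join(tokens[start:start + length]))
    return spans


def classify(spans: list[str]) -> dict[str, str]:
    """Step 3: keep only candidates that map to a known entity type."""
    entities = {}
    for span in spans:
        label = GAZETTEER.get(span.lower())
        if label is not None:
            entities[span] = label
    return entities


text = "Google builds a knowledge graph about Paris."
print(classify(candidates(tokenize(text))))
```

A vague sentence such as "It does some things." still produces tokens and candidates, but none of them classify, so the result is an empty dictionary.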

Detection is where most content fails silently: the system does not notify you, it simply stops processing.

If detection confidence is low, the system does not attempt correction. It discards the unit entirely.
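The discard rule amounts to a hard threshold. In this sketch the confidence scores and the 0.7 cut-off are made-up illustrative values, not figures from any real system; the point is only that a unit below the threshold is dropped rather than repaired.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    span: str
    label: str
    confidence: float  # hypothetical detector score in [0, 1]


def accept(candidates: list[Candidate], threshold: float = 0.7) -> list[Candidate]:
    """Keep candidates at or above the threshold; everything else is discarded.

    There is no correction step: a low-confidence candidate is not
    re-examined, it simply never reaches later stages.
    """
    return [c for c in candidates if c.confidence >= threshold]


detected = [
    Candidate("Entity SEO", "CONCEPT", 0.91),
    Candidate("the solution", "CONCEPT", 0.32),  # generic phrase, weak signal
    Candidate("Google", "ORGANIZATION", 0.78),
]
print([c.span for c in accept(detected)])
```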

When wording is vague, when context is mixed, or when phrases are generic, entity identity does not stabilize. In these cases, the system does not degrade evaluation — it terminates it.

No entities means no system entry.

In practical terms, this means that no amount of optimization can compensate for missing or unstable entity detection.

Entity Disambiguation and Context Resolution

Even when entities are detected, most content breaks at this stage due to unstable meaning resolution.

Detection alone does not define meaning. The system must determine which exact entity is being referenced.

Ambiguity is resolved through contextual signals, co-occurrence patterns, semantic proximity, and attribute matching. The system evaluates surrounding content to select one entity from multiple candidates.
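A simple way to see context-based selection is a Lesk-style overlap score: each candidate sense carries a set of associated context words, and the sense that shares the most words with the surrounding text wins. The sense inventory below (`SENSES`) is hypothetical, and real systems typically rely on learned representations rather than raw word overlap.

```python
import re

# Hypothetical sense inventory: each candidate entity lists words that
# typically co-occur with it.
SENSES = {
    "Jaguar (animal)": {"cat", "rainforest", "predator", "species", "prey"},
    "Jaguar (car maker)": {"car", "engine", "vehicle", "model", "dealer"},
}


def context_words(text: str) -> set[str]:
    return set(re.findall(r"[a-z]+", text.lower()))


def overlap_scores(text: str) -> dict[str, int]:
    """Count the words shared between the surrounding text and each sense."""
    words = context_words(text)
    return {sense: len(words & cues) for sense, cues in SENSES.items()}


def disambiguate(text: str) -> str:
    """Select the single sense with the highest overlap."""
    scores = overlap_scores(text)
    return max(scores, key=scores.get)


print(disambiguate("The jaguar is a large cat and an apex predator of the rainforest."))
print(disambiguate("The new Jaguar model has a quieter engine."))
```

When the text contains none of the cue words, every score ties and `max` returns whichever sense comes first, so the choice is effectively arbitrary rather than resolved.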

A stable outcome requires a single interpretation. References must remain consistent, and competing meanings must be eliminated.

When context is unclear, the system distributes probability across multiple entities. This reduces confidence and weakens downstream processing.
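The effect of unclear context can be made concrete by normalizing candidate scores into a probability distribution. This sketch applies a softmax to hypothetical scores; the maximum probability serves as a rough confidence value, and Shannon entropy measures how widely probability is spread across competing entities.

```python
import math


def softmax(scores: dict[str, float]) -> dict[str, float]:
    """Turn raw candidate scores into probabilities that sum to 1."""
    peak = max(scores.values())  # subtract the max for numerical stability
    exps = {k: math.exp(v - peak) for k, v in scores.items()}
    total = sum(exps.values())
    return {k: v / total for k, v in exps.items()}


def confidence(probs: dict[str, float]) -> float:
    return max(probs.values())


def entropy(probs: dict[str, float]) -> float:
    """Entropy in bits; higher means probability is more spread out."""
    return -sum(p * math.log2(p) for p in probs.values() if p > 0)


clear = softmax({"Jaguar (animal)": 3.0, "Jaguar (car maker)": 0.0})
mixed = softmax({"Jaguar (animal)": 1.0, "Jaguar (car maker)": 1.0})
print(round(confidence(clear), 3), round(entropy(clear), 3))
print(round(confidence(mixed), 3), round(entropy(mixed), 3))
```

With equal evidence for both senses, confidence falls to 0.5 and entropy rises to a full bit, which is the "distributed probability" situation described above.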

- If disambiguation fails, the system constructs the wrong entity.

- If the wrong entity is selected, the graph is built incorrectly.

- If the graph is incorrect, relevance cannot propagate.

This is not a ranking issue. It is a structural misinterpretation.

This is where structural failure becomes irreversible and directly impacts the graph that follows.

At this point, fixing visibility no longer depends on optimization but on rebuilding the underlying entity structure itself. If entities are not explicitly defined, context is not controlled, and relationships are not constructed, no later stage can recover the interpretation. This is why understanding how to fix entity-based SEO for AI visibility becomes critical: visibility is restored not by improving content, but by reconstructing entities as a stable, connected system that AI can interpret and reuse.

Principle: AI systems only maintain stable interpretation when entities are clearly defined and context resolves ambiguity into a single, consistent meaning.

How Entity Graphs Are Built

At this point, interpretation either becomes structured and reusable — or collapses completely.

Once entities are resolved, systems connect them into a structured graph.

Entities function as nodes. Relationships function as edges. The result is a network that represents meaning.
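In data-structure terms, this is a labeled directed graph. A minimal sketch, assuming relationships arrive as (subject, relation, object) triples; the class name `EntityGraph`, the relation labels, and the entities are illustrative only.

```python
from collections import defaultdict


class EntityGraph:
    """Entities are nodes; each typed relationship is a directed edge."""

    def __init__(self) -> None:
        self.nodes: set[str] = set()
        self.edges: dict[str, list[tuple[str, str]]] = defaultdict(list)

    def add(self, subject: str, relation: str, obj: str) -> None:
        """Register both entities as nodes and store a labeled edge."""
        self.nodes.update((subject, obj))
        self.edges[subject].append((relation, obj))

    def neighbors(self, node: str) -> list[tuple[str, str]]:
        """Outgoing (relation, target) pairs; empty for nodes with no edges."""
        return list(self.edges.get(node, []))


g = EntityGraph()
g.add("Entity-based SEO", "depends_on", "Entity detection")
g.add("Entity detection", "feeds", "Disambiguation")
g.add("Disambiguation", "feeds", "Entity graph")
print(sorted(g.nodes))
print(g.neighbors("Entity detection"))
```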

Example: A page that explicitly connects entities through clear relationships allows AI systems to build a graph where meaning can propagate and be reused in generated outputs.

This structure is created through entity linking, relationship extraction, hierarchy mapping, and contextual association. Each connection defines how entities influence each other.
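Relationship extraction can be approximated with explicit patterns. This sketch assumes a tiny, hypothetical set of relation phrases (`PATTERNS`); real extractors are far more flexible, but the sketch shows the basic contract: a sentence that states a relationship yields a typed triple, while a sentence that only places two entities side by side yields nothing.

```python
import re
from typing import Optional

# Hypothetical relation phrases; each maps surface wording to an edge label.
PATTERNS = [
    (re.compile(r"^(?P<s>.+?) is part of (?P<o>.+?)\.?$"), "part_of"),
    (re.compile(r"^(?P<s>.+?) depends on (?P<o>.+?)\.?$"), "depends_on"),
    (re.compile(r"^(?P<s>.+?) is a type of (?P<o>.+?)\.?$"), "is_a"),
]


def extract(sentence: str) -> Optional[tuple[str, str, str]]:
    """Return (subject, relation, object) if the sentence states a known relation."""
    for pattern, relation in PATTERNS:
        match = pattern.match(sentence.strip())
        if match:
            return (match["s"], relation, match["o"])
    return None


sentences = [
    "Disambiguation is part of entity processing.",
    "Graph construction depends on entity linking.",
    "Entity linking and graph construction matter.",  # relationship only implied
]
for s in sentences:
    print(extract(s))
```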

Content is no longer processed as a document. It is transformed into a network of connected nodes.

This is where traditional SEO logic breaks, because documents do not exist at this stage — only structures do.

At this stage, how entity relationships propagate relevance becomes the deciding factor for visibility. Without structured connections, entities remain isolated and cannot transfer meaning across the system.

If entities are present but disconnected, the graph cannot form. If relationships are implicit instead of explicit, connections are not recognized. If hierarchy is missing, structure collapses.
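Whether a set of entities actually forms one graph can be checked mechanically. A minimal sketch, assuming edges have already been extracted as pairs and treating them as undirected: a breadth-first search groups nodes into connected components, and any single-node component is an isolated entity. The entity names are illustrative.

```python
from collections import defaultdict, deque


def components(nodes: set[str], edges: list[tuple[str, str]]) -> list[set[str]]:
    """Group nodes into connected components (edges treated as undirected)."""
    adjacency: dict[str, set[str]] = defaultdict(set)
    for a, b in edges:
        adjacency[a].add(b)
        adjacency[b].add(a)
    seen: set[str] = set()
    groups = []
    for start in sorted(nodes):
        if start in seen:
            continue
        group, queue = set(), deque([start])
        seen.add(start)
        while queue:  # breadth-first walk from the start node
            node = queue.popleft()
            group.add(node)
            for nxt in adjacency[node]:
                if nxt not in seen:
                    seen.add(nxt)
                    queue.append(nxt)
        groups.append(group)
    return groups


nodes = {"Entity detection", "Disambiguation", "Entity graph", "Keyword density"}
edges = [("Entity detection", "Disambiguation"), ("Disambiguation", "Entity graph")]
groups = components(nodes, edges)
isolated = [next(iter(g)) for g in groups if len(g) == 1]
print(len(groups), isolated)
```

Here "Keyword density" is mentioned but never related to anything, so it ends up in its own component.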

Without a graph, there is no mechanism for relevance to exist.

For your content, this means that relationships must be explicitly constructed, not assumed.

How Relevance Propagates Through Relationships

This is where visibility is actually decided — not by keywords, but by how meaning moves through connections.

Graphs are evaluated dynamically. Relevance is not assigned to pages — it flows through connections.

Each entity influences others through its position and relationships within the graph. Strong nodes transfer trust. Dense connections amplify relevance. Consistent context stabilizes interpretation.
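The article does not name a specific propagation algorithm. PageRank-style power iteration is a well-known stand-in that captures the same intuition: score flows along edges, and nodes that receive many connections accumulate more of it. This is a minimal sketch under that substitution, with a hypothetical graph; it is not a claim about how any particular AI system scores entities.

```python
def propagate(
    edges: dict[str, list[str]],
    damping: float = 0.85,
    iterations: int = 50,
) -> dict[str, float]:
    """PageRank-style power iteration over a directed entity graph.

    Each node keeps (1 - damping) / N as a baseline and passes the rest of its
    score in equal shares along its outgoing edges. Nodes with no outgoing
    edges spread their score evenly across all nodes, so the total stays 1.
    """
    nodes = set(edges) | {t for targets in edges.values() for t in targets}
    n = len(nodes)
    score = {node: 1.0 / n for node in nodes}
    for _ in range(iterations):
        dangling = sum(score[v] for v in nodes if not edges.get(v))
        nxt = {v: (1 - damping) / n + damping * dangling / n for v in nodes}
        for source, targets in edges.items():
            for target in targets:
                nxt[target] += damping * score[source] / len(targets)
        score = nxt
    return score


graph = {
    "Entity SEO": ["Entity graph"],
    "Disambiguation": ["Entity graph"],
    "Entity detection": ["Entity graph", "Disambiguation"],
    "Entity graph": ["Entity SEO"],
    "Orphan topic": [],
}
for name, value in sorted(propagate(graph).items(), key=lambda kv: -kv[1]):
    print(f"{name}: {value:.3f}")
```

Nodes that receive no relationships, such as `Orphan topic`, end up with only the baseline share every node gets, while the densely connected `Entity graph` collects the most.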

Propagation depends on structure.

If structure fails here, even correct entities cannot produce visibility.

If relationships are missing, relevance cannot move. If links are weak, influence degrades. If hierarchy is broken, direction is lost.

An entity without relationships does not partially function. It does not participate in the system.

Relevance is not calculated locally. It emerges from the network.

From Graph to AI Output (Reuse Layer)

This is the final filter where most content disappears — not because it is bad, but because it cannot be reused.

At the final stage, AI systems do not return pages. They select and reuse entities.

The system evaluates the graph, filters nodes by confidence and authority, and selects those that can be reliably used in generated outputs. Answer synthesis is built from these selected entities.

Only entities with stable definitions, strong relationships, and sufficient authority are reused.

Weak entities are ignored. Isolated nodes are excluded. Ambiguous meanings are filtered out.
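The three exclusion rules map naturally onto three filters. In this sketch the `Node` fields, the threshold values, and the sample entities are all hypothetical; the code only shows how ambiguous, isolated, and weak nodes drop out before any answer is assembled.

```python
from dataclasses import dataclass


@dataclass
class Node:
    name: str
    confidence: float  # hypothetical disambiguation confidence, 0..1
    authority: float   # hypothetical propagated relevance score, 0..1
    degree: int        # number of relationships attached to the node


def select_for_reuse(
    nodes: list[Node], min_confidence: float = 0.8, min_authority: float = 0.2
) -> list[str]:
    """Drop ambiguous, isolated, and weak nodes; return the names that remain."""
    selected = []
    for node in nodes:
        if node.confidence < min_confidence:
            continue  # ambiguous meaning
        if node.degree == 0:
            continue  # isolated node
        if node.authority < min_authority:
            continue  # weak entity
        selected.append(node.name)
    return selected


candidates = [
    Node("Entity graph", confidence=0.95, authority=0.40, degree=4),
    Node("Jaguar", confidence=0.55, authority=0.50, degree=3),
    Node("Orphan topic", confidence=0.90, authority=0.30, degree=0),
    Node("Disambiguation", confidence=0.88, authority=0.10, degree=2),
]
print(select_for_reuse(candidates))
```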

The transformation is direct:

Graph → selected nodes → generated answer

Content is never retrieved as a whole. It is decomposed, filtered, and reconstructed through entity-level selection.
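Entity-level reconstruction can be sketched as keeping only the facts whose entities survived selection. This sketch deliberately uses fixed sentence templates in place of a language model; `TEMPLATES`, the triples, and the selected set are hypothetical.

```python
# Hypothetical templates standing in for a generative model.
TEMPLATES = {
    "part_of": "{s} is part of {o}.",
    "depends_on": "{s} depends on {o}.",
}


def synthesize(triples: list[tuple[str, str, str]], selected: set[str]) -> str:
    """Keep facts whose subject and object were both selected, then render them."""
    kept = [(s, r, o) for s, r, o in triples if s in selected and o in selected]
    return " ".join(TEMPLATES[r].format(s=s, o=o) for s, r, o in kept)


triples = [
    ("Disambiguation", "part_of", "Entity processing"),
    ("Entity graph", "depends_on", "Disambiguation"),
    ("Keyword density", "depends_on", "Entity graph"),
]
selected = {"Disambiguation", "Entity processing", "Entity graph"}
print(synthesize(triples, selected))
```

The fact about "Keyword density" never reaches the output, because that entity was not selected, even though it appeared in the source triples.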

The full pipeline that determines whether your content survives or gets excluded breaks down as follows.

Mechanism

- Named entity detection

- Contextual disambiguation

- Entity linking

- Graph construction

- Relevance scoring

- Answer synthesis

Failure

- weak authority → no reuse

- broken context → wrong entity

- no relationships → no propagation

Checklist:

- Are entities clearly defined and consistently referenced throughout the content?

- Does context eliminate ambiguity and support one stable interpretation?

- Are relationships between entities explicitly expressed, not implied?

- Can the content be reconstructed as a coherent entity graph?

- Does each section contribute to entity clarity and connection strength?

- Is the content structured for extraction rather than just reading?

Entity Interpretation Logic in AI Systems

- Entity detection thresholding. Text is segmented into candidate units where only sufficiently confident patterns are elevated into recognized entities within the system.

- Context resolution stability. Surrounding signals determine which entity interpretation persists, while ambiguity distributes probability and weakens structural certainty.

These mechanisms define how content is either accepted into entity-based systems or excluded from interpretation due to unstable meaning resolution.

For content creators, this defines the exact point where interpretation either stabilizes or collapses.

Entity Interpretation Flow Model

AI systems do not process pages as documents. They reconstruct meaning through entity transformation, where text is converted into structured nodes, relationships, and reusable outputs. This diagram represents how each layer contributes to entity interpretation and where failures break the chain.

[Entity Detection] → [Context Disambiguation] → [Entity Linking] → [Graph Construction] → [Relationship Mapping]

↓

[Relevance Propagation] → [Entity Selection] → [Answer Generation]

Failure Principle: If entity detection, disambiguation, or graph construction becomes unstable, interpretation does not degrade—it stops. AI systems discard unresolved entities and exclude the content from reuse.

Every step in this chain must remain stable — one break invalidates everything that follows.
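The rule that one break invalidates everything downstream behaves like a short-circuiting pipeline. A minimal sketch: the stage names follow the flow model, but the stage functions, `KNOWN`, and `AMBIGUOUS` are hypothetical placeholders, and graph construction is reduced to the crude rule that at least two entities must survive for any edge to exist.

```python
from typing import Callable, Optional

Stage = Callable[[list[str]], list[str]]

KNOWN = {"entity graph", "disambiguation", "jaguar"}  # hypothetical detectable entities
AMBIGUOUS = {"jaguar"}  # hypothetical surface forms with competing meanings


def run_pipeline(
    units: list[str], stages: list[tuple[str, Stage]]
) -> tuple[Optional[str], list[str]]:
    """Run stages in order and stop at the first one that leaves nothing.

    Returns the name of the stage that broke (or None) and the surviving units.
    """
    for name, stage in stages:
        units = stage(units)
        if not units:
            return name, []
    return None, units


stages: list[tuple[str, Stage]] = [
    ("detection", lambda us: [u for u in us if u in KNOWN]),
    ("disambiguation", lambda us: [u for u in us if u not in AMBIGUOUS]),
    ("graph construction", lambda us: us if len(us) >= 2 else []),
]

print(run_pipeline(["entity graph", "disambiguation", "things"], stages))
print(run_pipeline(["jaguar", "stuff"], stages))
```

In the second call, detection succeeds but disambiguation removes the only entity, so the pipeline stops there and no later stage runs.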

FAQ: Entity-Based SEO

What is entity-based SEO?

Entity-based SEO focuses on how AI systems interpret content as entities, relationships, and graphs rather than isolated pages.

How does AI detect entities in content?

AI uses named entity recognition, token classification, and contextual signals to extract identifiable units from text.

Why is entity disambiguation critical?

Disambiguation ensures that AI selects the correct meaning of an entity, preventing structural misinterpretation and incorrect graph construction.

How do entity graphs influence visibility?

Entity graphs connect concepts into networks where relevance propagates, allowing AI systems to evaluate and reuse structured meaning.

Why is content excluded from AI systems?

Content is excluded when entities are unclear, relationships are missing, or graph structure fails to stabilize for interpretation.

Glossary: Key Terms in Entity-Based SEO

This glossary defines core concepts used in entity-based systems to support consistent interpretation and AI-level understanding.

Entity Detection

The process of identifying recognizable concepts, objects, or terms in text that can be transformed into structured units for AI interpretation.

Disambiguation

The mechanism by which AI selects the correct meaning of an entity based on context, eliminating competing interpretations.

Entity Graph

A structured network where entities act as nodes and relationships define how meaning is connected and propagated.

Relevance Propagation

The process through which importance and meaning flow across connected entities within a graph structure.

Entity Reuse

The selection and integration of stable entities by AI systems when generating answers instead of retrieving full documents.