Last Updated on March 22, 2026 by PostUpgrade

How to Use Lists, Tables, and Cards in Generative SEO

AI does not interpret your article as a whole—it extracts lists, tables, and cards as isolated modules and ignores everything that cannot be segmented into bounded semantic units.

TL;DR: Unstructured narrative reduces extraction because AI systems cannot reliably segment its meaning. Visibility drops when models fail to reuse content across panels and summaries. Structuring content into lists, tables, and cards enables deterministic interpretation, extraction, and reuse, which increases snippet presence, panel visibility, and long-term AI-driven discoverability.

If your content is not modular, AI will skip it even if it ranks.

Structured content components define how modern generative systems interpret, extract, and reuse information. Lists, tables, and cards operate as machine-readable structural units that reduce ambiguity and increase deterministic parsing. As a result, generative SEO no longer focuses solely on ranking signals but on structural clarity that enables extraction across AI interfaces. Therefore, structured content components become a primary architectural requirement rather than a formatting preference.

Generative visibility shifts from link-based authority toward reusable semantic modules. Large language models prioritize bounded content elements that isolate meaning and preserve internal logic. Consequently, lists segment atomic statements, tables encode relationships, and cards encapsulate concepts. Each format functions as a predictable extraction surface for systems that generate answers instead of ranking pages.

Enterprise publishers must design pages for AI comprehension, not only for human scanning behavior. In addition, scalable environments require consistent component-driven layouts to prevent semantic drift across large content clusters. Organizations operating at scale depend on modularization to maintain clarity across hundreds of articles. Therefore, lists, tables, and cards become structural primitives within component-based content architecture.

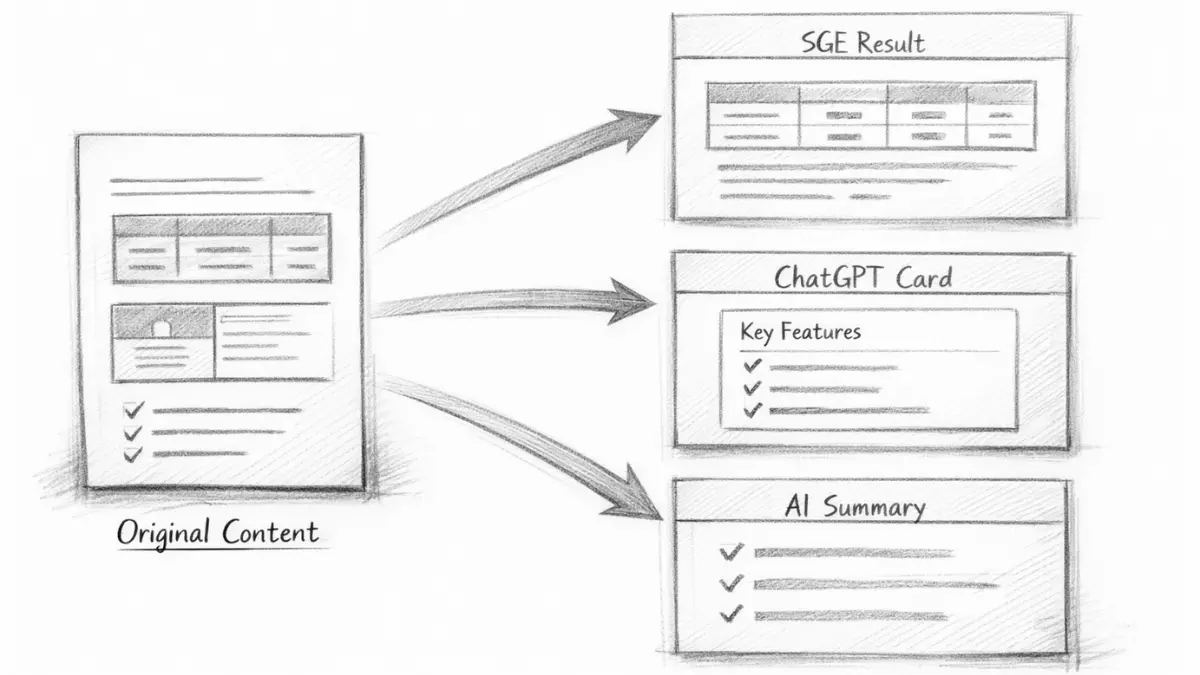

This article defines how to implement these formats within generative SEO frameworks. It explains how structured elements influence snippet extraction, panel visibility, and knowledge reuse across systems such as Google's Search Generative Experience (SGE), ChatGPT Search Cards, Perplexity, and Gemini. Furthermore, it provides enterprise-level structuring logic supported by research institutions and measurable structural signals. The objective is to establish a repeatable model for content modularization that improves long-term AI-driven accessibility while reinforcing the strategic role of structured content components.

Structured Content Components as the Foundation of Generative Visibility

Content components for generative visibility determine how AI systems prioritize, segment, and reuse information. Generative systems prioritize structural clarity because extraction depends on bounded semantic units rather than narrative continuity. Therefore, visibility increasingly depends on the precision and predictability of structural units within a page. This logic aligns with research from Stanford Natural Language Institute, which demonstrates that large language models rely on structured patterns for stable interpretation.

Structured content components — discrete, machine-recognizable content units that isolate meaning and reduce interpretive variance. These units function as semantic containers that define scope, context, and logical boundaries. Consequently, they reduce entropy during model inference and increase reuse stability across generative environments.

Definition: AI interpretation in generative SEO refers to a model’s ability to segment structured content components such as lists, tables, and cards into bounded semantic units that preserve meaning, hierarchy, and relational signals during extraction.

Claim: Generative visibility depends on explicit structural units.

Rationale: AI models extract reusable modules rather than interpret entire narratives holistically.

Mechanism: Segmented components reduce ambiguity by isolating claims, definitions, and relationships.

Counterargument: Narrative prose may still rank in traditional search environments where full-document evaluation dominates.

Conclusion: Structural modularity increases reuse probability in generative interfaces that prioritize extractable segments.

Semantic Role of Content Components in Machine Interpretation

The semantic role of content components defines how meaning becomes stable under machine processing. Models interpret structured elements in SEO content as bounded inference zones, which allows them to assign confidence scores to individual units. Consequently, a component hierarchy model enables deterministic reasoning because each structural level defines scope and relevance.

Content blocks vs components represent a structural distinction rather than a stylistic preference. A block refers to visual grouping, while a component functions as a semantic module with a defined interpretive boundary. Therefore, structured content components must be designed with explicit conceptual intent rather than layout convenience.

In practice, this means that each component must carry a single stable meaning. When meaning overlaps across adjacent sections, extraction reliability decreases. Clear semantic isolation improves generative reuse and strengthens component-level visibility.

Component Hierarchy Model and Structural Stability

A component hierarchy model organizes structural units into layered semantic containers. At the top level, H2 headings define conceptual domains, while H3 and H4 subdivisions refine mechanism and application. As a result, hierarchy creates predictable navigation for both crawlers and generative systems.

This hierarchy supports content components for generative visibility because it defines interpretive depth. Higher-level components establish scope, while lower-level units refine meaning and implementation logic. Consequently, structured elements for AI indexing function as coordinated signals rather than isolated fragments.

When hierarchy remains consistent across pages, AI systems recognize repeated structural patterns. Predictable layering stabilizes interpretation and increases reuse across generative answers.
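The layering rule above can be checked mechanically. The following is a minimal sketch, assuming pages are stored as Markdown with ATX-style headings (`##`, `###`, `####`). The function names and the sample page are hypothetical, and the check only flags headings that skip a level, which is one narrow signal of inconsistent hierarchy.

```python
import re

# Assumption: headings use Markdown ATX syntax, e.g. "## Title".
HEADING_RE = re.compile(r"^(#{1,6})\s+(.*\S)\s*$")


def extract_headings(markdown_text):
    """Return (level, title) pairs for every ATX heading in the text."""
    headings = []
    for line in markdown_text.splitlines():
        match = HEADING_RE.match(line)
        if match:
            headings.append((len(match.group(1)), match.group(2)))
    return headings


def find_skipped_levels(headings):
    """Return (previous_level, level, title) for headings that jump
    more than one level deeper than the heading before them."""
    problems = []
    previous_level = None
    for level, title in headings:
        if previous_level is not None and level > previous_level + 1:
            problems.append((previous_level, level, title))
        previous_level = level
    return problems


# Hypothetical page: the last heading jumps from H2 straight to H4.
sample_page = """## Tables as Knowledge Compression
### Relational Logic
#### Column Labels
## Cards as Semantic Containers
#### Card Template
"""

print(find_skipped_levels(extract_headings(sample_page)))
# prints [(2, 4, 'Card Template')]
```

Running a check like this across a cluster is one way to confirm that every page follows the same H2, H3, H4 layering before publication.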

Component-Based Content Architecture as a Visibility Mechanism

Component-based content architecture formalizes how structural units interact within a page. Instead of linear exposition, information architecture through components organizes meaning into reusable modules. Therefore, each unit becomes independently extractable while maintaining logical cohesion within the whole.

Machine-readable content components function as indexing anchors. They reduce ambiguity because each component contains a single concept, mechanism, or implication. As a result, generative systems can surface content modules without reconstructing surrounding narrative context.

A structured architecture transforms pages into modular knowledge systems. This approach improves snippet eligibility and enhances panel extraction across AI interfaces.

Information Architecture Through Components

Information architecture through components defines how content modules relate structurally. Each component must declare scope, contain stable terminology, and align with a single intent. Consequently, AI-friendly content modules reduce interpretive variance during answer synthesis.

Additionally, component clarity for machine reading depends on explicit headings and controlled terminology. When authors reuse consistent structural templates, semantic drift decreases. Stable vocabulary strengthens extraction reliability across large content clusters.

Clear architecture ensures that each module communicates one bounded idea. Generative systems can therefore retrieve content without reinterpretation.

Microcase: Structural Transformation in Enterprise Documentation

An enterprise documentation platform redesigned its FAQ pages around modular, card-based content presentation. The redesign replaced paragraph-based explanations with discrete concept cards and structured data tables for AI retrieval. As a result, extraction into SGE panels increased within three reporting cycles.

The change reduced narrative density and increased machine-readable content components. Analytics logs showed improved snippet presence and higher visibility in generative answer summaries. Consequently, component consistency across pages became a documented editorial requirement.

This example demonstrates that structural clarity influences generative reuse directly. When components align with search intent and isolate meaning, visibility becomes measurable at the module level.

Implications for Enterprise Content Systems

Component consistency across pages stabilizes interpretation at scale. Enterprises managing large clusters must enforce controlled templates to prevent structural drift. Therefore, component alignment with search intent becomes a governance principle rather than an optimization tactic.

Content components for generative visibility operate as strategic assets in long-term AI-driven accessibility. Structural discipline reduces interpretive noise and increases extraction reliability across evolving generative systems. Over time, modular architecture produces cumulative visibility advantages because reusable components persist within model memory patterns.

Lists as AI Parsing Units in Structured SEO

Bullet lists for AI parsing shape how large language models segment, compress, and reuse information. LLMs segment enumerations deterministically because list formatting creates predictable boundaries for token grouping. Therefore, list structures influence extraction and summarization outcomes directly. Research from MIT CSAIL highlights how structural cues improve machine-level parsing efficiency in transformer-based architectures.

Bullet list — a linear enumeration structure that isolates atomic statements for independent parsing. Each bullet functions as a bounded semantic unit with minimal contextual dependency. Consequently, models can process each item without reconstructing surrounding narrative flow.

Claim: Lists increase deterministic parsing.

Rationale: List markers and line breaks give models explicit delimiters, so enumerations are processed as discrete units rather than continuous prose.

Mechanism: Each bullet becomes a semantic boundary that isolates a single claim or fact.

Counterargument: Overuse reduces narrative cohesion and may fragment conceptual continuity.

Conclusion: Lists should isolate stable facts only and avoid replacing analytical reasoning.

List Formatting for Generative Engines

List formatting for generative engines transforms narrative statements into extraction-ready components. Structured elements in SEO content such as bullets and numbered sequences create predictable structural patterns. As a result, models recognize repetition in formatting and assign higher extraction confidence to list-based segments.

Additionally, consistent bullet syntax reduces interpretive ambiguity. When each item expresses one declarative fact, AI systems compress content elements for AI summaries with minimal reconstruction. Therefore, lists function as compression units within generative environments.

In practice, this means each bullet must communicate one stable assertion. Multiple claims within a single item reduce parsing clarity and weaken extraction reliability.
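The one-assertion-per-bullet rule can be approximated with a simple editorial lint. The sketch below is a rough heuristic, not a semantic analysis: it assumes that a bullet containing more than one sentence, or a semicolon, probably carries more than one claim. The function name and sample bullets are hypothetical.

```python
import re


def flag_overloaded_bullets(bullets, max_sentences=1):
    """Return bullets that likely contain more than one claim.

    Heuristic assumption: extra sentences or a semicolon signal
    a second assertion packed into the same list item.
    """
    flagged = []
    for bullet in bullets:
        text = bullet.strip()
        # Split on whitespace that follows sentence-ending punctuation.
        sentences = [s for s in re.split(r"(?<=[.!?])\s+", text) if s]
        if len(sentences) > max_sentences or ";" in text:
            flagged.append(text)
    return flagged


bullets = [
    "Tables encode relationships.",
    "Lists isolate facts. Cards encapsulate concepts.",
    "Headers label columns; cells inherit meaning.",
]

for item in flag_overloaded_bullets(bullets):
    print("Review:", item)
```

Flagged items are candidates for splitting into separate bullets. An editor still decides whether the split preserves meaning.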

Structured Elements in SEO Content and Extraction Stability

Structured elements in SEO content operate as interpretive anchors within generative pipelines. Lists define explicit segmentation logic that models treat as structured boundaries. Consequently, list formatting for generative engines increases snippet eligibility across answer-driven interfaces.

Content presentation for answer engines depends on predictable formatting patterns. When enumerations remain concise and logically ordered, AI systems reuse items independently in summary outputs. This structural predictability enhances component clarity for machine reading.

Clear segmentation allows generative systems to extract list items without reconstructing narrative context. Each bullet therefore becomes a reusable micro-component within broader content architecture.

Content Elements for AI Summaries and Ordered Reasoning

Content elements for AI summaries must remain atomic and logically stable. Lists enable ordered reasoning when sequence matters, especially in instructional or procedural contexts. Numbered lists support ordered reasoning reuse because sequence encoding carries semantic weight.

Conversely, unordered bullet lists isolate independent facts without implying hierarchy. This distinction allows component-level content optimization by aligning list type with intent structure. Predictable ordering strengthens extraction accuracy.

A list should reflect its semantic function. When order matters, use numbering. When isolation matters, use bullets.
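In a templating layer, this choice can be made an explicit parameter rather than a styling decision. The following sketch assumes Markdown output, and the `render_list` helper is hypothetical.

```python
def render_list(items, ordered):
    """Render items as a Markdown list.

    ordered=True  -> numbered list, for steps where sequence matters.
    ordered=False -> bullet list, for independent facts.
    """
    if ordered:
        return "\n".join(f"{i}. {item}" for i, item in enumerate(items, start=1))
    return "\n".join(f"- {item}" for item in items)


# Procedural content: sequence carries meaning, so use numbering.
print(render_list(["Define scope", "Label headers", "Publish"], ordered=True))

# Independent facts: no implied order, so use bullets.
print(render_list(["Lists isolate facts", "Tables align attributes"], ordered=False))
```

Forcing authors to pass `ordered` makes the semantic function of each list a deliberate decision.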

Extraction Behavior Across List Types

Different list types produce distinct AI extraction behavior because formatting signals influence interpretive modeling. Bullet lists support direct snippet extraction. Numbered lists enable ordered reasoning reuse. Checklists support instructional compression by isolating actionable steps.

| List Type | AI Extraction Behavior |
|---|---|
| Bullet list | Direct snippet extraction |
| Numbered list | Ordered reasoning reuse |
| Checklist | Instructional compression |

Lists define extraction-ready boundaries.

Implications for Structural Optimization

Component clarity for machine reading increases when lists isolate stable meaning. Overloaded bullets reduce interpretability and decrease reuse probability. Therefore, component-level content optimization requires editorial discipline in enumeration design.

Lists must align with structural intent rather than visual preference. When authors integrate bullet lists strategically within structured content components, generative systems identify reusable knowledge modules more reliably. Over time, disciplined list formatting strengthens generative visibility across answer-driven environments.

Tables as Knowledge Compression Mechanisms

Tabular data for AI retrieval plays a central role in structured comparison and inference acceleration. Generative systems process relational data more efficiently when content appears in matrix form rather than linear prose. Therefore, tables function as compression devices that encode multi-variable meaning within bounded structural fields. Research from Berkeley Artificial Intelligence Research (BAIR) emphasizes that structured representations improve model-level reasoning stability across structured inputs.

Structured data tables for AI — matrix-based content representations that encode relational signals. Each row and column establishes semantic roles that reduce ambiguity in comparison tasks. Consequently, tables transform descriptive content into relational logic that models can evaluate deterministically.

Claim: Tables reduce inferential ambiguity.

Rationale: Models detect column relationships and map variables across structured fields.

Mechanism: Structured cells define semantic roles and isolate comparative attributes.

Counterargument: Poor labeling weakens signal clarity and reduces interpretability.

Conclusion: Tables must use explicit headers to preserve semantic precision.

Tabular Structures for Knowledge Extraction

Tabular structures for knowledge extraction isolate relational meaning within bounded cells. Instead of relying on narrative comparison, tables encode attributes into aligned columns. As a result, models process structured comparison with reduced contextual reconstruction.

Optimizing content presentation formats requires aligning structure with interpretive intent. Tables excel in environments where contrast, differentiation, or classification defines the analytical goal. Therefore, generative systems treat well-structured tables as high-confidence relational modules.

When rows represent entities and columns represent attributes, semantic mapping becomes explicit. This clarity improves extraction reliability and supports structured inference across generative interfaces.
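The entity-per-row, attribute-per-column convention can be enforced when tables are generated from data. The sketch below assumes Markdown pipe tables as the output format. The `render_table` helper is hypothetical, and the sample data reuses the list, table, and card comparison from this article.

```python
def render_table(entity_label, attributes, rows):
    """Render a Markdown table with one entity per row.

    entity_label: header for the first column (the entity name).
    attributes:   ordered list of attribute headers.
    rows:         dict mapping entity name -> {attribute: value}.
    """
    headers = [entity_label, *attributes]
    if any(not header.strip() for header in headers):
        raise ValueError("every column needs an explicit, non-empty header")

    lines = [
        "| " + " | ".join(headers) + " |",
        "|" + "|".join("---" for _ in headers) + "|",
    ]
    for entity, values in rows.items():
        missing = [a for a in attributes if a not in values]
        if missing:
            raise ValueError(f"{entity} is missing attributes: {missing}")
        cells = [entity, *(str(values[a]) for a in attributes)]
        lines.append("| " + " | ".join(cells) + " |")
    return "\n".join(lines)


table = render_table(
    "Component",
    ["Use Case", "AI Benefit"],
    {
        "List": {"Use Case": "Feature isolation", "AI Benefit": "Snippet reuse"},
        "Table": {"Use Case": "Comparative modeling", "AI Benefit": "Structured inference"},
        "Card": {"Use Case": "Concept isolation", "AI Benefit": "Panel extraction"},
    },
)
print(table)
```

Rejecting empty headers and incomplete rows at generation time keeps every cell tied to a named attribute.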

Structured Data Tables for AI and Relational Logic

Structured data tables for AI enable relational inference through positional consistency. Each column header functions as a semantic anchor, while each cell inherits contextual meaning from that header. Consequently, AI-readable layout components transform descriptive content into relational signals.

AI systems interpret tables as structured argument frameworks rather than narrative paragraphs. This structural encoding reduces interpretive variance because relationships become visually and semantically explicit. As a result, structured data tables for AI support compression without semantic loss.

Clear column labeling remains essential. Ambiguous headers introduce noise and weaken extraction precision.

Comparative Modeling Across Component Types

Tables outperform lists when relational comparison defines the analytical objective. Lists isolate features, while tables align features across entities. Cards encapsulate concepts independently, whereas tables expose relational interplay.

This distinction allows component optimization for SGE panels because generative systems surface structured comparisons more reliably than descriptive explanations. When comparative modeling aligns with search intent, table-based presentation increases structured inference visibility.

Choosing the correct component depends on semantic function. Comparison requires tables. Isolation requires lists. Concept encapsulation requires cards.

| Component | Use Case | AI Benefit |
|---|---|---|
| List | Feature isolation | Snippet reuse |
| Table | Comparative modeling | Structured inference |
| Card | Concept isolation | Panel extraction |

Tables define relational clarity through structural alignment.

Implications for Generative Extraction and Panel Optimization

Component optimization for SGE panels improves when relational information appears in tabular form. Generative interfaces prioritize concise comparative modules because they reduce answer synthesis complexity. Therefore, content components and snippet extraction benefit from matrix-based compression in comparative contexts.

Tables must remain semantically bounded and consistently labeled across clusters. Structural consistency strengthens model confidence and reduces inferential drift over time. Consequently, enterprise publishers should treat tabular design as a knowledge engineering discipline rather than a formatting choice.

Cards as Semantic Containers for Generative Interfaces

Information cards for discovery define how generative interfaces surface modular knowledge units. Modern AI systems increasingly present answers in card-based layouts rather than continuous documents. Therefore, visibility depends on how effectively content translates into bounded semantic modules. Research from Allen Institute for Artificial Intelligence (AI2) demonstrates that modular knowledge representations improve answer synthesis stability in generative systems.

Information card — a bounded content module that encapsulates one concept with definition, mechanism, and implication. Each card isolates a single semantic unit and prevents cross-concept contamination. Consequently, information cards operate as reusable containers within structured content components.

Claim: Cards map directly to generative answer panels.

Rationale: Interfaces display modular content units rather than full-page narratives.

Mechanism: Encapsulation preserves meaning by isolating definition, mechanism, and implication within one bounded container.

Counterargument: Cards may fragment long reasoning if conceptual continuity spans multiple modules.

Conclusion: Cards must represent complete conceptual units to maintain extraction integrity.

Card-Based Content Presentation SEO

Card-based content presentation converts longform explanations into discrete semantic blocks. Each card functions as a micro-component within longform content and preserves interpretive stability. As a result, generative systems extract individual cards without reconstructing adjacent narrative context.

Micro-components in longform content reduce semantic drift across sections. Instead of embedding multiple ideas within a paragraph, card-based structuring isolates one concept per container. Consequently, extraction reliability increases because the semantic boundary remains explicit.

Each card should include a definition, a mechanism explanation, and a short implication statement. This internal structure transforms narrative text into machine-readable content components that align with generative interfaces.
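The three-part card structure can be modeled as a small data type so that incomplete cards are rejected before publication. This is a minimal sketch: the `ConceptCard` class, its field names, and the plain-text rendering are assumptions made for illustration, not a prescribed schema.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConceptCard:
    """One concept with a definition, a mechanism, and an implication."""

    title: str
    definition: str
    mechanism: str
    implication: str

    def __post_init__(self):
        # A card is only complete when every part is filled in.
        for field_name in ("title", "definition", "mechanism", "implication"):
            if not getattr(self, field_name).strip():
                raise ValueError(f"card field '{field_name}' must not be empty")

    def render(self):
        """Render the card as labeled lines, definition first."""
        return "\n".join([
            self.title,
            f"Definition: {self.definition}",
            f"Mechanism: {self.mechanism}",
            f"Implication: {self.implication}",
        ])


card = ConceptCard(
    title="Information card",
    definition="A bounded content module that encapsulates one concept.",
    mechanism="Encapsulation keeps definition, mechanism, and implication in one container.",
    implication="The card can be extracted without its surrounding narrative.",
)
print(card.render())
```

Because the type enforces one title and one of each part, a card cannot silently grow into a multi-concept block.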

Content Modules for Generative Systems

Content modules for generative systems rely on predictable structural templates. When authors apply consistent card formatting, AI models detect stable patterns across pages. Therefore, machine-readable content components become scalable knowledge units rather than isolated formatting choices.

Encapsulation strengthens interpretive clarity because each card maintains conceptual independence. Structured content components embedded as cards reduce ambiguity during answer generation. As a result, panel-based visibility improves when cards mirror interface logic.

A well-designed card isolates one idea, defines it clearly, and explains its operational mechanism. This structural discipline aligns directly with extraction requirements.

Microcase: Concept Cards in a SaaS Knowledge Base

A SaaS knowledge base implemented a componentized publishing strategy using concept cards across its documentation cluster. Each concept card contained a concise definition, operational explanation, and implementation implication. Consequently, answer panel appearances increased across generative search environments.

Internal analytics showed improved knowledge graph integration because relational nodes aligned with card-level segmentation. The redesign reduced narrative density and increased structured presentation for AI answers. Over time, component-based editorial planning standardized the card template across all product documentation.

This example demonstrates that modular card design influences generative visibility measurably. When semantic containers remain consistent, reuse probability increases.

Implications for Editorial Governance and AI Answer Surfaces

Component-based editorial planning must treat cards as architectural units rather than decorative blocks. Structured presentation for AI answers depends on predictable modular boundaries. Therefore, governance policies should define card templates, terminology rules, and scope constraints.

Information cards for discovery function as extraction-ready semantic containers. When enterprises enforce consistent card-level formatting, generative systems identify reusable conceptual modules more reliably. Over time, disciplined card implementation strengthens structured content components across AI-driven interfaces.

Component-Driven Page Structure for Generative Engines

Component-driven page structure determines how generative engines interpret, prioritize, and reuse longform content. The broader architectural framework for organizing these components within AI-friendly pages is explained in this guide to AI page structure optimization, which outlines how hierarchical layout and semantic sequencing help generative systems interpret content accurately. Pages must be architected for segmentation because models process hierarchical signals before semantic depth. Therefore, structural sequencing directly influences extraction stability and visibility outcomes. Research from Carnegie Mellon LTI confirms that hierarchical cues support structured language modeling and improve interpretive precision.

Component-driven page structure — page architecture composed of discrete reusable units arranged hierarchically. Each unit operates as a semantic container within a layered framework. Consequently, hierarchy becomes an interpretive map rather than a visual outline.

Claim: Segmented pages outperform linear pages in AI reuse.

Rationale: Models scan structural cues before reconstructing contextual meaning.

Mechanism: Hierarchy signals importance and defines scope boundaries across content units.

Counterargument: Excess segmentation reduces cohesion when structural units fragment conceptual continuity.

Conclusion: Hierarchy must follow semantic logic to preserve interpretability and reuse stability.

Structural Sequencing as Interpretive Signal

Structural sequencing transforms a page into a predictable interpretive framework. When H2 sections define concept containers, models assign domain-level scope to each segment. As a result, content structure through components strengthens semantic isolation across sections.

Additionally, predictable heading depth reduces ambiguity during extraction. Generative engines rely on structural gradients to evaluate conceptual importance. Therefore, AI-friendly content modules must align with hierarchical signaling to ensure stable reuse.

Clear sequencing ensures that meaning flows logically from general concepts to applied details. This progression supports deterministic parsing across longform layouts.

Hierarchical Mapping and Reuse Logic

Hierarchical mapping encodes semantic depth through structural layers. H2 headings introduce conceptual scope, H3 headings refine mechanisms, and H4 headings provide applied detail. Consequently, hierarchy communicates interpretive granularity to generative systems.

Models interpret deeper levels as subordinate clarifications rather than independent claims. This structural encoding prevents semantic overlap between components. As a result, reuse probability increases because each level maintains a defined interpretive role.

When hierarchy remains consistent across pages, generative engines recognize repeated structural patterns. This consistency stabilizes AI-friendly content modules at scale.

Example: A comparison table with explicit headers, aligned relational columns, and consistent terminology enables generative systems to extract structured contrasts directly into SGE panels without reconstructing surrounding narrative explanation.

Functional Roles Across Structural Levels

Each hierarchical layer performs a distinct semantic function. Clear role differentiation reduces interpretive ambiguity and improves extraction reliability. Therefore, component-driven page structure depends on disciplined level assignment rather than stylistic preference.

| Hierarchy Level | Function |
|---|---|
| H2 | Concept container |
| H3 | Mechanism subdivision |
| H4 | Applied detail |

Hierarchy defines interpretability depth.

Implications for Longform Layout Design

Content structure through components ensures that longform pages remain extractable at multiple granularity levels. AI-friendly content modules emerge when structural boundaries align with conceptual logic. Therefore, authors must design layout as semantic architecture rather than narrative flow.

Component-driven page structure increases generative stability across answer interfaces. Over time, disciplined hierarchical design strengthens structured content components and improves reuse probability in evolving generative environments.

Lists, Tables, and Cards in Snippet and Panel Extraction

Content components and snippet extraction determine how generative systems surface information across answer-driven interfaces. Answer engines prioritize extractable blocks because they must compress knowledge into bounded response formats. Therefore, lists, tables, and cards act as structural signals that map directly to visibility surfaces. Research from the Oxford Internet Institute shows that platform-level design increasingly shapes how information becomes visible in AI-mediated environments.

Snippet extraction — model-level reuse of bounded semantic segments. A snippet represents a structurally isolated unit that preserves meaning outside its original page context. Consequently, formatting stability directly influences extraction probability.

Claim: Structured components increase snippet probability.

Rationale: AI favors compact retrievable blocks that minimize reconstruction effort.

Mechanism: Predictable formatting reduces entropy and clarifies semantic boundaries.

Counterargument: Over-optimization may reduce readability and fragment conceptual flow.

Conclusion: Extraction clarity must align with semantic clarity to preserve both usability and reuse.

Surface-Specific Component Alignment

Generative interfaces differ in how they display extracted information. SGE panels often present relational data, whereas ChatGPT surfaces encapsulated concept cards. Meanwhile, Perplexity frequently highlights atomic bullet summaries. Therefore, content modules for generative systems must align with interface-specific formatting logic.

Structured elements for AI indexing influence how each surface interprets bounded units. Tables encode relational contrast, cards encapsulate single conceptual definitions, and lists isolate discrete claims. Consequently, component optimization for SGE panels depends on mapping structural type to interface behavior.

Alignment improves visibility stability because formatting reinforces platform expectations. Mismatched structure weakens extraction probability.

Formatting Signals and Extraction Behavior

Each generative surface prioritizes specific formatting signals during extraction. Explicit headers support table-based comparison in SGE panels. Definition-first structures increase ChatGPT card reuse. Atomic bullet formatting supports concise summarization in Perplexity.

Predictable formatting reduces entropy during model inference. As a result, structured content components become reusable across multiple generative systems. Consistent formatting enhances interpretive confidence at the model level.

Formatting must reflect semantic purpose rather than aesthetic preference. When structure aligns with functional intent, extraction reliability increases.

Component Mapping Across Interfaces

The relationship between structural component and extraction surface follows predictable patterns. Different interfaces reward different component types. Therefore, strategic formatting improves generative exposure.

| Surface | Preferred Component | Formatting Signal |
|---|---|---|
| SGE Panel | Table | Explicit headers |
| ChatGPT Card | Concept card | Definition-first |
| Perplexity Summary | List | Atomic bullets |

Formatting must match interface logic.
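For editorial planning, the mapping can be kept as shared configuration that briefs and templates read from. The sketch below encodes this article's surface-to-component mapping as a lookup. The `SURFACE_MAP` name and `plan_components` helper are hypothetical, and the mapping reflects the article's guidance rather than any documented platform behavior.

```python
# Mapping taken from this article's surface/component table.
SURFACE_MAP = {
    "SGE Panel": {"component": "Table", "signal": "Explicit headers"},
    "ChatGPT Card": {"component": "Concept card", "signal": "Definition-first"},
    "Perplexity Summary": {"component": "List", "signal": "Atomic bullets"},
}


def plan_components(target_surfaces):
    """Return (surface, component, signal) tuples for the given surfaces."""
    plan = []
    for surface in target_surfaces:
        entry = SURFACE_MAP.get(surface)
        if entry is None:
            # Fail loudly instead of guessing a format for an unmapped surface.
            raise KeyError(f"no mapping defined for surface: {surface}")
        plan.append((surface, entry["component"], entry["signal"]))
    return plan


for surface, component, signal in plan_components(["SGE Panel", "Perplexity Summary"]):
    print(f"{surface}: use a {component.lower()} with {signal.lower()}")
```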

Implications for Generative Visibility Strategy

Component optimization for SGE panels requires deliberate structural alignment. Authors must evaluate which interface dominates target visibility and design accordingly. Consequently, structured elements for AI indexing become tactical levers rather than secondary formatting decisions.

Lists, tables, and cards operate as extraction primitives within structured content components. When enterprises standardize component mapping to interface behavior, snippet extraction becomes predictable. Over time, this alignment strengthens long-term generative visibility across evolving AI-driven platforms.

Designing Reusable Content Components for Enterprise Scale

Designing reusable content components determines whether large editorial clusters remain structurally stable over time. Large clusters require structural consistency because generative systems learn recurring patterns across pages. Therefore, governance becomes a prerequisite for scalable generative visibility. The importance of standardized structural control aligns with documentation principles outlined by NIST, which emphasize repeatability and consistency in system design.

Reusable component — a standardized structural module deployable across multiple pages. Each module follows a defined template and controlled terminology. Consequently, reuse strengthens interpretive stability and reduces semantic drift across clusters.

Claim: Standardization increases generative stability.

Rationale: Models reward structural consistency and recognize recurring formatting patterns.

Mechanism: Terminology stability reduces semantic drift and reinforces predictable inference.

Counterargument: Over-standardization limits nuance and may restrict conceptual flexibility.

Conclusion: Governance must preserve clarity without rigidity to maintain adaptive scalability.

Governance Framework for Component Templates

Reusable content requires predefined structural templates. Templates define heading depth, internal definition placement, and formatting logic. As a result, component modularization for visibility becomes predictable across hundreds of pages.

Editorial systems must enforce consistent structural logic. Authors should apply identical definition patterns, DRC placement, and segmentation rules. Consequently, component consistency across pages reduces interpretive variance during extraction.

Templates prevent structural entropy in expanding clusters. Without template governance, component logic fragments over time.
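One way to make template governance enforceable is to express the template as data and check each section against it before publication. The sketch below assumes a simplified model in which a section is described by its heading level, whether it opens with a definition, and the component types it contains. `ComponentTemplate`, `Section`, and `validate_section` are hypothetical names for illustration, not part of any existing CMS.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ComponentTemplate:
    max_heading_depth: int              # deepest heading level allowed, e.g. 4 for H4
    definition_first: bool              # section must open with its definition
    allowed_components: frozenset[str]  # e.g. {"list", "table", "card"}


@dataclass
class Section:
    heading_level: int
    starts_with_definition: bool
    components: list[str]


def validate_section(section: Section, template: ComponentTemplate) -> list[str]:
    """Return human-readable violations; an empty list means the section complies."""
    problems = []
    if section.heading_level > template.max_heading_depth:
        problems.append(
            f"heading H{section.heading_level} exceeds H{template.max_heading_depth}"
        )
    if template.definition_first and not section.starts_with_definition:
        problems.append("definition is not placed first")
    for name in section.components:
        if name not in template.allowed_components:
            problems.append(f"component {name!r} is not in the template")
    return problems
```

Returning a list of violations instead of a single pass/fail flag lets an editorial workflow show authors every deviation at once.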

Terminology Stability and Structural Alignment

Terminology stability preserves semantic coherence across reusable modules. When authors maintain identical vocabulary for recurring concepts, generative systems map nodes consistently. Therefore, structured content components become part of a stable semantic network.

Header alignment further reinforces consistency. H2 sections must define conceptual domains uniformly across pages. H3 and H4 layers must reflect the same mechanism–application hierarchy in every instance.

Stable terminology and aligned headers strengthen AI-friendly content modules. Predictable patterns increase reuse reliability across generative surfaces.
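Terminology stability can be checked mechanically if the editorial team maintains a controlled vocabulary. The sketch below assumes such a vocabulary exists as a mapping from each canonical term to the variants that should be flagged. The entries in `CANONICAL_TERMS` are invented examples, and `find_term_drift` is a hypothetical helper.

```python
import re

# Hypothetical controlled vocabulary: canonical term -> variants to flag.
CANONICAL_TERMS = {
    "information card": ["info card", "knowledge card"],
    "structured content components": ["structured blocks", "content widgets"],
}


def find_term_drift(text: str, vocabulary: dict[str, list[str]] = CANONICAL_TERMS) -> dict[str, list[str]]:
    """Map each canonical term to the non-canonical variants found in the text."""
    found = {}
    for canonical, variants in vocabulary.items():
        hits = [
            variant
            for variant in variants
            # Whole-phrase, case-insensitive match so "info card" does not
            # fire inside "information card".
            if re.search(rf"\b{re.escape(variant)}\b", text, flags=re.IGNORECASE)
        ]
        if hits:
            found[canonical] = hits
    return found
```

A page that returns an empty dictionary uses only canonical vocabulary, at least for the terms the list covers.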

Operational Controls for Enterprise Clusters

Enterprise editorial systems require procedural enforcement of component governance. Without auditing mechanisms, structural drift accumulates gradually. Therefore, operational controls must integrate into publishing workflows.

- Define component templates

- Standardize terminology

- Align headers across pages

- Audit structural drift quarterly

Standardization enables scalability.
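The quarterly drift audit in the list above could be approximated by comparing each page's heading outline against a reference outline. The sketch below uses `difflib.SequenceMatcher` from the Python standard library. How outlines are extracted from pages is left out, and the function names and the 0.8 threshold are illustrative assumptions rather than recommended values.

```python
from difflib import SequenceMatcher


def outline_similarity(reference: list[str], page: list[str]) -> float:
    """Similarity between 0.0 and 1.0 for two heading outlines, e.g. ["H2", "H3", "H3"]."""
    return SequenceMatcher(None, reference, page).ratio()


def audit_drift(
    reference: list[str],
    pages: dict[str, list[str]],
    threshold: float = 0.8,  # assumed cut-off; tune per cluster
) -> list[str]:
    """Return identifiers of pages whose outline similarity falls below the threshold."""
    return sorted(
        page_id
        for page_id, outline in pages.items()
        if outline_similarity(reference, outline) < threshold
    )
```

Pages returned by `audit_drift` are candidates for manual review, not automatic failures, since a justified structural exception also lowers the score.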

Implications for Long-Term Generative Stability

Component modularization for visibility transforms editorial practice into structural engineering. Consistent templates enable predictable extraction and strengthen generative stability across evolving AI systems. Consequently, enterprise publishers reduce semantic noise while preserving conceptual clarity.

Component consistency across pages accumulates structural authority over time. When reusable modules follow the same templates, generative systems can detect stable architectural patterns. Over extended publishing cycles, governance-driven reuse increases extraction reliability and improves long-term generative accessibility.

Measuring Component Effectiveness in Generative SEO

Component-level content optimization determines whether structured visibility translates into measurable outcomes. Structured visibility requires measurement because generative reuse occurs at the module level rather than at the page level. Therefore, enterprises must evaluate structural performance independently from traditional ranking metrics. Data modeling standards discussed within OECD Data Explorer reinforce the importance of disaggregated indicators when assessing system-level performance.

Component effectiveness — measurable extraction, reuse, and citation frequency of a structural module. Each component functions as a quantifiable unit within structured content components. Consequently, performance analysis must track module-level signals rather than aggregate page impressions.

Claim: Component impact must be measured independently.

Rationale: Page-level metrics hide structural performance differences between modules.

Mechanism: Extraction frequency reveals reuse value across generative interfaces.

Counterargument: Attribution models remain imperfect because AI systems do not expose full visibility logs.

Conclusion: Component metrics complement page analytics and provide structural insight.

Extraction-Based Performance Indicators

Extraction-based indicators measure how often structured elements appear in generative panels. When SGE surfaces a relational table, the appearance signals that the table's compression and formatting matched what the panel required. As a result, content components for generative visibility can be evaluated by panel appearance frequency.

Tracking extraction reveals structural precision rather than keyword ranking. Generative systems reuse modules that demonstrate bounded clarity and formatting consistency. Therefore, structured content components must be measured through extraction-oriented metrics.

Independent module tracking prevents false attribution. Page impressions alone cannot isolate structural impact.

Citation and Reuse Signals Across Interfaces

Citation frequency represents a second performance dimension. When Perplexity or similar systems reuse atomic lists, that reuse becomes observable through answer logs and user-facing summaries. Consequently, citation frequency reflects compression success.

Snippet presence in Search Console indicates successful summarization alignment. Although generative systems operate differently from traditional search engines, compression signals remain measurable. Therefore, cross-platform observation improves component-level content optimization.

Reuse signals must be interpreted cautiously. Not every appearance equates to structural quality, yet repeated reuse indicates stable module design.

Component-Level Metric Framework

Enterprises require a structured measurement framework that separates module performance from page visibility. Clear indicators strengthen strategic decisions regarding component redesign. The following model organizes core signals:

| Metric | Data Source | Signal Type |
|---|---|---|
| Panel appearances | SGE tracking | Extraction |
| Answer reuse | Perplexity logs | Citation |
| Snippet presence | Search Console | Compression |

Measurement validates structural investment.
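The framework above can be turned into a per-module tally once observations are recorded somewhere. The sketch below assumes each observation is a `(module_id, metric)` pair collected manually or by whatever tracking an organization already has. No platform API is assumed, and the metric keys and the function name `summarize_modules` are hypothetical.

```python
from collections import Counter, defaultdict

# Signal types taken from the table above; the metric keys are illustrative.
SIGNAL_BY_METRIC = {
    "panel_appearance": "Extraction",
    "answer_reuse": "Citation",
    "snippet_presence": "Compression",
}


def summarize_modules(observations: list[tuple[str, str]]) -> dict[str, dict[str, int]]:
    """Count observed signals per module from (module_id, metric) pairs."""
    summary = defaultdict(Counter)
    for module_id, metric in observations:
        if metric not in SIGNAL_BY_METRIC:
            raise ValueError(f"Unknown metric: {metric!r}")
        summary[module_id][SIGNAL_BY_METRIC[metric]] += 1
    return {module_id: dict(counts) for module_id, counts in summary.items()}
```

Keying the summary by module rather than by page keeps the tally aligned with the article's point that reuse happens at the module level.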

Implications for Generative Strategy and ROI

Content components for generative visibility function as measurable assets rather than formatting choices. When organizations track module-level extraction, structural optimization becomes data-driven. Consequently, structured content components evolve based on reuse patterns rather than speculative formatting changes.

Component-level content optimization aligns ROI analysis with generative behavior. Over time, module tracking reveals which lists, tables, and cards sustain visibility across evolving interfaces. Enterprises that measure structural effectiveness gain long-term stability in generative ecosystems.

Checklist:

- Are lists isolating atomic, single-claim statements?

- Do tables use explicit column headers to encode relational signals?

- Does each card encapsulate one complete conceptual unit?

- Is hierarchical depth (H2–H4) aligned with semantic scope?

- Are structured content components consistent across pages?

- Does formatting reduce ambiguity during snippet extraction?
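Some checklist items lend themselves to a rough automated pass. As an example, the first item (atomic, single-claim list entries) could be approximated with a heuristic that flags bullets containing more than one sentence. The sketch assumes dash-prefixed bullets as used in this article. `non_atomic_bullets` is a hypothetical helper, and the heuristic will misfire on abbreviations such as "e.g." followed by more text.

```python
import re


def non_atomic_bullets(text: str) -> list[str]:
    """Return dash-prefixed bullets that appear to hold more than one sentence."""
    flagged = []
    for line in text.splitlines():
        stripped = line.strip()
        if not stripped.startswith("- "):
            continue
        body = stripped[2:].strip()
        # Heuristic: sentence-ending punctuation followed by whitespace and more text.
        if re.search(r"[.!?]\s+\S", body):
            flagged.append(body)
    return flagged
```

Flagged bullets are prompts for an editor to split or tighten an entry, not proof that it is wrong.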

Interpretive Signals in Component-Based Content Architecture

- Component boundary encoding. Discrete structural units such as lists, tables, and cards establish bounded semantic regions that AI systems treat as independently retrievable modules.

- Relational density signaling. Tabular alignments and columnar logic expose attribute relationships explicitly, reducing inferential reconstruction during generative processing.

- Enumerative compression cues. List-based segmentation creates atomic statement groupings that models interpret as low-entropy extraction zones.

- Hierarchical depth differentiation. Layered heading structures communicate conceptual scope and subordinate refinement, enabling multi-level interpretation within longform content.

- Encapsulated module persistence. Card-style semantic containers preserve definition–mechanism–implication integrity, supporting reuse across generative answer surfaces.

These architectural properties clarify how component-based page structures are parsed, segmented, and reassembled by generative systems, independent of surface-level formatting choices.

FAQ: Structured Content Components in Generative SEO

What are structured content components?

Structured content components are bounded units such as lists, tables, and cards that isolate meaning, reduce ambiguity, and improve extraction reliability in generative systems.

Why do lists improve generative visibility?

Lists segment atomic statements into predictable boundaries, allowing AI models to extract and reuse individual facts without reconstructing surrounding narrative context.

How do tables support AI retrieval?

Tables encode relational signals through aligned columns and explicit headers, enabling structured comparison and reducing inferential ambiguity during answer generation.

What makes information cards effective in generative interfaces?

Information cards encapsulate a single concept with definition and mechanism, preserving semantic integrity across panel-based answer surfaces.

How does hierarchy influence AI interpretation?

Layered H2–H3–H4 structures define conceptual scope and refinement depth, guiding models through segmented reasoning paths in longform layouts.

Why is component consistency important at enterprise scale?

Consistent templates and terminology stabilize semantic patterns across large clusters, increasing reuse probability and reducing structural drift.

How can component effectiveness be measured?

Effectiveness is evaluated through panel appearances, answer reuse frequency, and snippet presence, which reflect module-level extraction performance.

What is snippet extraction in generative systems?

Snippet extraction is the reuse of bounded semantic segments in AI-generated responses, based on clarity, structure, and formatting predictability.

How do structured elements differ from visual formatting?

Structured elements define semantic boundaries and relational roles, while visual formatting only affects presentation without influencing interpretive logic.

What role do structured content components play in long-term AI accessibility?

They create stable, reusable knowledge modules that remain interpretable across evolving generative interfaces and indexing systems.

Glossary: Key Terms in Structured Content Architecture

This glossary defines the structural terminology used in this article to ensure consistent interpretation of lists, tables, cards, and hierarchical components by both editorial systems and AI models.

Structured Content Components

Discrete structural units such as lists, tables, and cards that isolate meaning and enable deterministic extraction by generative systems.

Information Card

A bounded semantic container encapsulating a single concept with definition, mechanism, and implication for reuse in answer interfaces.

Tabular Compression

A matrix-based representation that encodes relational signals through aligned rows and columns to reduce inferential ambiguity.

Enumerative Segmentation

The isolation of atomic statements within bullet or numbered lists to create predictable extraction-ready boundaries.

Component Hierarchy

A layered arrangement of H2, H3, and H4 structures that defines conceptual scope, mechanism refinement, and applied detail depth.

Snippet Extraction

The reuse of bounded semantic segments in AI-generated responses based on clarity, formatting stability, and structural predictability.

Component Consistency

The practice of maintaining identical structural templates and terminology across pages to prevent semantic drift in large clusters.

Structural Sequencing

The ordered progression of conceptual layers that signals interpretive depth and supports hierarchical reasoning in generative systems.

Extraction Surface

An interface context such as SGE, chat-based cards, or summary panels where structured modules are displayed and reused.

Component Effectiveness

The measurable frequency of module-level extraction, reuse, and citation across generative environments.