Last Updated on March 1, 2026 by PostUpgrade

Voice-Driven Discovery and the Era of Ambient Search

Voice driven discovery defines a structural transformation in how information becomes accessible within digital systems. This article establishes a machine-readable framework for understanding ambient search, conversational retrieval, and predictive surfacing in AI-mediated environments. The analysis integrates semantic architecture, visibility mechanics, and evidence-based research to support long-term AI-driven accessibility.

Voice Driven Discovery as a Structural Shift in Information Access

Voice driven discovery defines a transition from typed queries to spoken intent resolution within ambient computing environments. According to research on speech interface systems conducted at MIT CSAIL, machine interpretation accuracy increases when structured input signals reduce ambiguity in intent mapping. Therefore, voice driven discovery restructures digital visibility models by replacing link-based retrieval with entity-based synthesis. The scope includes structural, behavioral, and infrastructural implications that directly affect generative inclusion.

Voice driven discovery is a retrieval paradigm where spoken input triggers contextual information surfacing without requiring traditional page navigation. It operates through AI-mediated interpretation layers that prioritize semantic clarity over interface navigation depth.

Definition: AI understanding in voice driven discovery environments is the model’s ability to interpret spoken intent, structural hierarchy, and defined entities in a way that enables accurate response synthesis and stable conversational reuse.

Claim: Voice driven discovery restructures how information becomes visible in digital systems.

Rationale: Spoken input compresses intent expression and removes interface friction.

Mechanism: AI models interpret speech signals, map them to semantic entities, and generate synthesized responses instead of ranked links.

Counterargument: Typed search remains dominant in transactional scenarios.

Conclusion: Voice driven discovery operates as a parallel visibility layer that requires structural adaptation.

Speech Driven Information Retrieval Infrastructure

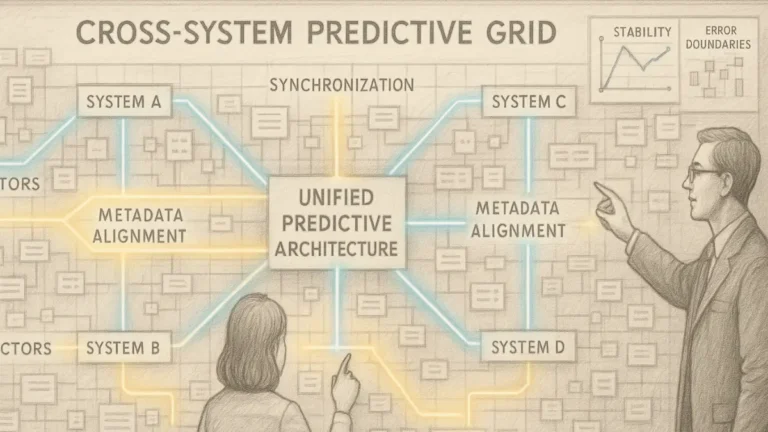

Speech driven information retrieval requires coordinated processing layers that transform audio signals into structured semantic output. Consequently, the reliability of this infrastructure determines whether generative systems can consistently surface authoritative information. Furthermore, infrastructure design directly influences response latency, interpretive precision, and visibility probability.

Speech driven information retrieval integrates automatic speech recognition, intent classification, and response synthesis within a unified semantic architecture. These systems depend on stable entity definitions and consistent terminology to prevent semantic drift across interactions. In addition, interoperability standards align parsing rules with knowledge graph models to ensure extractable reasoning units.

In practical terms, speech driven information retrieval converts spoken language into structured meaning that AI systems can evaluate and reuse. If the structural layer fails, visibility decreases because models cannot reliably extract or rank content segments.

Real Time Voice Retrieval Pipelines

Real time voice retrieval pipelines operate as sequential processing chains that minimize latency while preserving semantic fidelity. First, the system captures audio input and converts it into text. Next, natural language processing modules interpret intent and map expressions to predefined entities. Finally, response engines synthesize contextual outputs based on structured knowledge graphs.
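The three stages described above can be sketched as a small sequential chain. This is a minimal illustration, not a production design: the recogniser is a stand-in that treats the audio bytes as UTF-8 text, and the names `ENTITY_PHRASES`, `KNOWLEDGE`, `transcribe`, `map_intent`, `synthesize`, and `run_pipeline` are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical entity catalogue: entity id -> trigger phrases (illustrative only).
ENTITY_PHRASES = {
    "store_hours": ["opening hours", "what time", "open"],
    "return_policy": ["return", "refund"],
}

# Hypothetical structured knowledge keyed by entity id.
KNOWLEDGE = {
    "store_hours": "The store is open from 9 am to 6 pm.",
    "return_policy": "Items can be returned within 30 days.",
}

FALLBACK = "Sorry, I do not have an answer for that."


@dataclass
class VoiceResult:
    transcript: str
    entity: Optional[str]
    answer: str


def transcribe(audio: bytes) -> str:
    """Stage 1 stand-in: a real system would call a speech recogniser here."""
    return audio.decode("utf-8").lower().strip()


def map_intent(transcript: str) -> Optional[str]:
    """Stage 2: return the first entity whose trigger phrase occurs in the text."""
    for entity, phrases in ENTITY_PHRASES.items():
        if any(phrase in transcript for phrase in phrases):
            return entity
    return None


def synthesize(entity: Optional[str]) -> str:
    """Stage 3: look up a direct answer for the resolved entity."""
    return KNOWLEDGE.get(entity, FALLBACK)


def run_pipeline(audio: bytes) -> VoiceResult:
    transcript = transcribe(audio)
    entity = map_intent(transcript)
    return VoiceResult(transcript, entity, synthesize(entity))


if __name__ == "__main__":
    print(run_pipeline(b"What time do you open?").answer)
```

The sketch returns a single answer string rather than a list of results, which mirrors the direct-answer behavior the section describes.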

Low latency processing is critical because spoken interaction tolerates minimal delay. Therefore, real time voice retrieval must optimize computational efficiency without compromising entity resolution accuracy. Research in speech modeling demonstrates that processing delays above 300 milliseconds reduce perceived system responsiveness in conversational environments.
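One way to reason about this constraint is to time each stage and compare the total against a budget. The sketch below assumes the 300 millisecond figure quoted above as the budget; the helper names `timed_stages` and `within_budget` are hypothetical, and the stage functions passed in are placeholders.

```python
import time

LATENCY_BUDGET_MS = 300.0  # figure quoted in the text; treat as an assumption


def timed_stages(stages, payload):
    """Run (name, function) stages in order and record each duration in ms."""
    timings = {}
    for name, fn in stages:
        start = time.perf_counter()
        payload = fn(payload)
        timings[name] = (time.perf_counter() - start) * 1000.0
    return payload, timings


def within_budget(timings, budget_ms=LATENCY_BUDGET_MS):
    """True when the summed stage latency stays inside the budget."""
    return sum(timings.values()) <= budget_ms


if __name__ == "__main__":
    # Placeholder stages standing in for recognition and parsing.
    stages = [("asr", str.lower), ("intent", str.split)]
    result, timings = timed_stages(stages, "Turn On Lights")
    print(result, within_budget(timings))
```

Recording per-stage timings, rather than only the total, makes it easier to see which layer consumes the budget.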

When systems process speech instantly and interpret intent correctly, users receive direct answers rather than search result lists. As a result, structured content that supports immediate synthesis becomes more visible in generative environments.

Spoken Query Optimization Mechanisms

Spoken query optimization mechanisms adapt content architecture to the compressed structure of voice inputs. Spoken queries typically contain fewer tokens than typed queries, yet they encode contextual signals such as tone and intent. Therefore, systems must disambiguate meaning through probabilistic mapping rather than keyword matching.

Spoken query optimization aligns entity definitions, explicit terminology, and semantic containers with conversational phrasing patterns. It reduces ambiguity by embedding micro-definitions and stable vocabulary within content blocks. Consequently, generative models can extract precise response segments instead of paraphrasing loosely structured paragraphs.

Clear definitions and stable terminology allow AI systems to recognize entities even when users phrase questions differently. Thus, spoken query optimization increases inclusion probability within synthesized answers.
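A simple way to illustrate entity recognition across different phrasings is to score a query against known aliases instead of requiring an exact keyword. The sketch below uses Jaccard token overlap as a stand-in for the probabilistic mapping mentioned above; the alias table, the `0.3` threshold, and the function names are assumptions for illustration.

```python
import re

# Hypothetical alias table: entity id -> conversational phrasings.
ALIASES = {
    "return_policy": ["return policy", "send an item back", "get a refund"],
    "store_hours": ["opening hours", "when are you open", "closing time"],
}


def tokens(text):
    """Lowercase word set for a phrase."""
    return set(re.findall(r"[a-z']+", text.lower()))


def resolve_entity(query, threshold=0.3):
    """Return (entity, score) for the best alias match, or (None, score)."""
    q = tokens(query)
    best_entity, best_score = None, 0.0
    for entity, phrases in ALIASES.items():
        for phrase in phrases:
            p = tokens(phrase)
            score = len(q & p) / len(q | p)  # Jaccard similarity
            if score > best_score:
                best_entity, best_score = entity, score
    if best_score < threshold:
        return None, best_score
    return best_entity, best_score


if __name__ == "__main__":
    print(resolve_entity("How do I get a refund"))
    print(resolve_entity("Can I send this item back"))
```

Both example queries resolve to the same entity even though they share no wording with each other, which is the effect stable aliases are meant to produce.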

| Layer | Function | Structural Requirement |
|---|---|---|
| Audio Capture | Converts speech to text | Low latency processing |
| Intent Parsing | Maps query to semantic entities | Stable entity definitions |
| Response Generation | Synthesizes contextual output | Structured knowledge graph |

These layers operate sequentially and collectively determine interpretive reliability in voice-driven environments.

Ambient Search Experience and Continuous Listening Interfaces

Ambient search experience expands retrieval beyond explicit commands and integrates information surfacing into persistent environments. Consequently, discovery shifts from episodic interaction to continuous contextual mediation. This transformation redefines how users encounter information and how systems prioritize responses.

Ambient search experience relies on contextual modeling and behavioral inference rather than isolated queries. It integrates environmental signals, prior interactions, and probabilistic intent modeling. Therefore, content visibility increasingly depends on structural clarity and factual density.

In practical operation, ambient search experience means that information appears when context suggests relevance, not only when users type or speak a direct request.

Continuous Listening Interfaces

Continuous listening interfaces maintain passive audio monitoring to detect activation signals and contextual cues. These systems operate within defined privacy parameters and activate processing pipelines only after detecting intent markers. Consequently, continuous listening interfaces extend the discovery window beyond discrete interaction events.
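The activation step can be pictured as a gate in front of the processing pipeline. The following sketch assumes utterances arrive as already-transcribed strings and that a fixed wake phrase acts as the intent marker; `WAKE_PHRASE` and `gate_utterances` are hypothetical names, and real devices detect wake words on audio rather than text.

```python
WAKE_PHRASE = "hey assistant"  # hypothetical activation marker


def gate_utterances(stream, wake_phrase=WAKE_PHRASE):
    """Yield only the command text that follows the wake phrase.

    Utterances without the wake phrase are dropped and never reach
    downstream processing.
    """
    for utterance in stream:
        text = utterance.lower().strip()
        if text.startswith(wake_phrase):
            command = text[len(wake_phrase):].strip(" ,.")
            if command:
                yield command


if __name__ == "__main__":
    heard = [
        "the weather is nice",
        "Hey assistant, what's the weather?",
        "hey assistant",
        "so anyway",
    ]
    print(list(gate_utterances(heard)))
```

Only the second utterance passes the gate; background speech and a bare wake phrase with no command are discarded.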

Continuous listening interfaces depend on efficient signal detection algorithms and contextual modeling frameworks. They interpret background signals and convert them into structured interaction triggers. As a result, AI systems anticipate potential informational needs and prepare response candidates before full queries are expressed.

When devices remain context-aware, discovery becomes proactive rather than reactive. Therefore, structured content that anticipates likely questions gains higher inclusion probability.

Always On Search Environment

An always on search environment refers to AI-mediated retrieval that remains persistently available across devices and platforms. It integrates smart speakers, mobile assistants, and embedded systems into a unified ambient layer. Consequently, search becomes a continuous background function rather than a separate action.

An always on search environment increases the frequency of passive search interaction because users engage through short spoken prompts. Furthermore, generative systems evaluate contextual signals in real time and select concise answer segments. Therefore, structured semantic containers improve extractability in these environments.

When search functions operate continuously, visibility depends on structural clarity and entity alignment rather than backlink authority alone. This infrastructural layer establishes the baseline for passive search interaction and ambient information exposure.

Ambient Search Paradigm and Ecosystem Transformation

The ambient search paradigm describes search that operates in the background of user environments. According to digital transformation analyses published by the OECD, persistent AI integration reshapes information access across sectors and platforms. Consequently, the ambient search paradigm introduces structural consequences for discovery systems and redefines visibility metrics beyond session-based interaction.

The ambient search paradigm is a context-aware retrieval model that surfaces information proactively through AI mediation. It integrates behavioral signals, environmental context, and device continuity to trigger contextual information surfacing without requiring explicit navigation.

Claim: Ambient search shifts discovery from user-initiated queries to context-driven surfacing.

Rationale: Devices operate persistently and interpret behavioral signals.

Mechanism: AI assistants evaluate environmental context, prior interactions, and probabilistic intent signals.

Counterargument: Privacy constraints may limit persistent context tracking.

Conclusion: Ambient search requires trust-aligned structural architecture.

AI Assistants and Background Search Systems

Ambient AI assistants function as mediation layers between user intent and distributed information sources. They do not wait for discrete queries but continuously evaluate contextual signals to decide whether relevance thresholds are met. This structural persistence transforms discovery from episodic retrieval into continuous interpretive evaluation.

Ambient AI assistants integrate background search systems that operate without visible user commands. These systems monitor device state, location signals, interaction history, and temporal context. As a result, information surfacing becomes predictive rather than reactive, and visibility depends on structural clarity and entity alignment.

In operational terms, ambient AI assistants evaluate context even when users remain silent. Consequently, content optimized for contextual inference gains priority within generative surfacing layers.

Background Search Systems

Background search systems operate as passive computational layers that analyze contextual data streams in real time. They process interaction logs, device metadata, and behavioral signals to estimate probable informational needs. Therefore, background search systems rely on structured semantic containers to match inferred intent with authoritative response segments.

Background search systems also depend on probabilistic modeling frameworks that score relevance without explicit query strings. Because of this, entity stability and definitional clarity increase inclusion probability within predictive output generation. Furthermore, structural consistency reduces misinterpretation risk across repeated interactions.
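Scoring relevance without a query string can be illustrated with a weighted match between context signals and candidate segments. The sketch below is an assumption-laden toy: the signal names, the integer weights in `WEIGHTS`, and the threshold of 60 are hypothetical and stand in for whatever probabilistic model a real system would use.

```python
from dataclasses import dataclass

# Hypothetical weights (points out of 100) for each context signal.
WEIGHTS = {"time": 30, "location": 30, "history": 40}


@dataclass(frozen=True)
class Context:
    hour: int
    location: str
    recent_topics: tuple


@dataclass(frozen=True)
class Candidate:
    entity: str
    active_hours: range
    locations: frozenset
    topic: str


def relevance(candidate, ctx):
    """Sum the weights of every context signal the candidate matches."""
    score = 0
    if ctx.hour in candidate.active_hours:
        score += WEIGHTS["time"]
    if ctx.location in candidate.locations:
        score += WEIGHTS["location"]
    if candidate.topic in ctx.recent_topics:
        score += WEIGHTS["history"]
    return score


def surface(candidates, ctx, threshold=60):
    """Return entities at or above the threshold, highest score first."""
    scored = [(relevance(c, ctx), c.entity) for c in candidates]
    return [entity for score, entity in sorted(scored, reverse=True) if score >= threshold]


if __name__ == "__main__":
    ctx = Context(hour=8, location="kitchen", recent_topics=("weather",))
    candidates = [
        Candidate("commute_update", range(6, 10), frozenset({"kitchen", "hallway"}), "traffic"),
        Candidate("weather_forecast", range(0, 24), frozenset({"kitchen"}), "weather"),
        Candidate("dinner_recipe", range(17, 21), frozenset({"kitchen"}), "cooking"),
    ]
    print(surface(candidates, ctx))
```

No query appears anywhere in the example; the ranking is driven entirely by time, location, and interaction history.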

When search logic runs continuously in the background, discovery shifts from active request to contextual anticipation. As a result, traditional navigation loses primacy within AI-mediated ecosystems.

Passive Search Interaction

Passive search interaction refers to retrieval events triggered without direct user input. Instead, systems interpret environmental context and initiate informational prompts or summaries. Therefore, passive search interaction extends discovery into ambient environments where attention spans remain limited.

Passive search interaction reduces reliance on manual navigation and increases dependency on generative summarization. Consequently, structured knowledge graphs and explicit entity mapping become decisive visibility signals. In addition, proactive content inclusion depends on factual density and definitional precision.

When users receive information before typing or speaking a query, interaction logic changes fundamentally. Thus, passive search interaction restructures exposure metrics and shifts authority from link rank to response inclusion.

Smart Speaker Discovery Behavior and Zero Click Voice Discovery

Smart speaker discovery behavior reflects interaction patterns where users rely on spoken prompts within domestic or mobile environments. Consequently, smart speaker discovery behavior emphasizes concise answer synthesis over exploratory browsing. Data from global device adoption studies show steady growth in smart assistant usage across OECD economies since 2019.

Smart speaker discovery behavior reinforces zero click voice discovery because devices return direct synthesized answers. Therefore, traffic shifts from page visits to spoken attribution and contextual summaries. Visibility becomes dependent on structural extractability rather than click-through optimization.

Users increasingly expect direct answers from smart speakers instead of navigating search result pages. As a result, structured semantic architecture determines whether content becomes part of synthesized output.

Zero Click Voice Discovery

Zero click voice discovery describes retrieval outcomes where AI systems provide complete responses without requiring further navigation. Therefore, zero click voice discovery displaces traditional search result interaction and reduces measurable click-based metrics. Instead, exposure shifts toward answer-level inclusion.

Zero click voice discovery depends on entity recognition, factual verification, and structural clarity. Generative systems evaluate authoritative alignment before synthesizing output. Consequently, brands with stable terminology and validated data gain higher probability of verbal citation within ambient systems.

When AI assistants provide complete answers, users no longer visit source pages to retrieve information. Thus, zero click voice discovery redefines ecosystem incentives and prioritizes structured extractability.

- proactive voice recommendations

- predictive voice search models

- voice discovery user behavior

- voice assistant discovery flow

Together, these elements show that ecosystem transformation favors contextual inference, structured response generation, and AI-mediated surfacing, and that these patterns displace traditional navigation logic at the ecosystem level.

Conversational Voice Discovery and Intent Modeling

Conversational voice discovery integrates dialogue-based interaction with entity mapping and dynamic clarification loops. According to adoption data published by the Pew Research Center, voice-enabled assistant usage has increased steadily across US households, reinforcing conversational retrieval patterns. Consequently, conversational voice discovery restructures discovery logic by prioritizing iterative refinement over single-query resolution. This architecture directly influences content design, extractability, and generative inclusion.

Conversational voice discovery is a multi-turn interaction process where AI systems refine intent across successive exchanges. It relies on contextual memory, entity continuity, and structured response segmentation to maintain interpretive stability across dialogue turns.

Claim: Conversational voice discovery increases interpretive depth but reduces direct traffic.

Rationale: Multi-turn clarification enhances answer precision.

Mechanism: Voice query intent modeling interprets ambiguity through contextual memory.

Counterargument: Ambiguity persists in domain-specific terminology.

Conclusion: Content must embed explicit semantic boundaries.

Voice Query Intent Modeling Framework

Voice query intent modeling functions as the interpretive core of conversational systems. It translates ambiguous spoken phrases into structured semantic representations. Therefore, voice query intent modeling determines whether AI systems can accurately refine user intent across multiple turns.

Voice query intent modeling integrates probabilistic classification, entity disambiguation, and conversational state tracking. It maintains contextual continuity across exchanges while updating intent hypotheses dynamically. Consequently, structured content with stable terminology improves interpretive reliability because it reduces semantic drift during clarification cycles.
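Conversational state tracking can be shown with a very small example in which a follow-up question inherits the entity from an earlier turn. This is a sketch under simplifying assumptions: entities are detected by substring match against a hypothetical `KNOWN_ENTITIES` set, and `DialogueState` and `resolve_turn` are illustrative names.

```python
from dataclasses import dataclass, field
from typing import Optional

KNOWN_ENTITIES = {"smart speaker", "thermostat"}  # hypothetical vocabulary


@dataclass
class DialogueState:
    active_entity: Optional[str] = None
    history: list = field(default_factory=list)


def resolve_turn(state, utterance):
    """Update the state for one turn and return the entity it refers to.

    A turn that names a known entity switches the active entity; a turn
    that names none (for example "How much does it cost?") keeps the
    entity carried over from earlier turns.
    """
    text = utterance.lower()
    mentioned = next((e for e in sorted(KNOWN_ENTITIES) if e in text), None)
    if mentioned is not None:
        state.active_entity = mentioned
    state.history.append((utterance, state.active_entity))
    return state.active_entity


if __name__ == "__main__":
    state = DialogueState()
    for turn in ["Tell me about the smart speaker",
                 "How much does it cost?",
                 "And the thermostat?"]:
        print(turn, "->", resolve_turn(state, turn))
```

The second turn contains no entity name, yet it resolves correctly because the state retains context across exchanges.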

In practical terms, voice query intent modeling allows AI systems to understand follow-up questions that lack explicit context. Thus, content optimized for entity stability becomes more likely to remain referenced throughout extended dialogue.

Conversational Discovery Architecture

Conversational discovery architecture defines the structural framework that supports multi-turn retrieval. It includes memory layers, entity graphs, and response synthesis modules that operate in coordinated cycles. Therefore, conversational discovery architecture prioritizes context retention over isolated query processing.

Conversational discovery architecture depends on structured semantic containers that separate definitions, mechanisms, and implications. Because of this separation, AI systems can extract modular reasoning units and recombine them within dialogue. Furthermore, stable entity mapping ensures that follow-up responses remain consistent with prior answers.

When architecture preserves conversational state, discovery becomes iterative rather than transactional. As a result, structured clarity directly increases inclusion probability within sustained interaction sequences.

Conversational Answer Surfacing

Conversational answer surfacing describes the process through which AI systems generate synthesized responses during dialogue. It selects concise segments that directly address refined intent parameters. Therefore, conversational answer surfacing favors content with high definitional precision and factual density.

Conversational answer surfacing evaluates structural signals such as entity clarity, evidence references, and logical progression. Consequently, content structured into concept blocks and mechanism blocks gains higher extraction stability. In addition, consistent terminology reduces the risk of interpretive inconsistency across dialogue turns.

When AI systems surface answers conversationally, they prioritize clarity and coherence over page depth. Thus, modular reasoning units become essential for generative reuse.

Speech Based Knowledge Access and Contextual Voice Navigation

Speech based knowledge access enables users to retrieve structured information through spoken dialogue rather than visual navigation. Consequently, speech based knowledge access reduces reliance on interface-based browsing and increases dependency on contextual interpretation. This shift reinforces the importance of explicit semantic architecture.

Speech based knowledge access integrates contextual voice navigation to maintain continuity between dialogue turns. Contextual voice navigation refers to the process by which AI systems adjust response pathways based on evolving intent. Therefore, contextual voice navigation depends on persistent entity alignment and stable vocabulary structures.

In everyday interaction, speech based knowledge access allows users to ask follow-up questions without restating full context. As a result, content that preserves semantic boundaries remains visible across multi-turn exchanges.

Contextual Voice Navigation

Contextual voice navigation guides conversational flow through structured interpretation of prior dialogue. It evaluates conversation history, intent progression, and entity continuity before generating the next response. Therefore, contextual voice navigation reduces ambiguity through cumulative semantic mapping.

Contextual voice navigation also influences inclusion probability because generative systems prefer content that supports iterative reasoning. Structured definitions, explicit relationships, and modular explanation blocks enhance navigation stability. Consequently, conversational continuity becomes a structural visibility factor.

When navigation adapts to prior context rather than isolated prompts, users experience coherent interaction. Thus, structured clarity ensures that AI systems maintain consistent interpretive alignment.

In 2023, Pew Research Center data showed rising adoption of smart speakers among US households. As users increasingly requested summaries instead of websites, AI systems returned synthesized responses, and this pattern reduced exposure to link-based navigation.

Voice First Discovery Design and Visibility Architecture

Voice first discovery design prioritizes answer synthesis over link ranking and restructures visibility logic accordingly. Research in computational language understanding conducted by Stanford NLP demonstrates that structured entity alignment improves extraction accuracy in generative systems. Therefore, voice first discovery design requires architectural adjustments that support response-level inclusion rather than page-level ranking. This structural transformation directly affects how content becomes reusable in AI-mediated environments.

Voice first discovery design is a structural approach that optimizes information for spoken output and assistant interpretation. It emphasizes concise semantic units, explicit definitions, and extractable reasoning modules that support conversational synthesis.

Principle: In voice driven discovery systems, structural stability and explicit semantic boundaries increase the probability that generative engines will include content in synthesized responses rather than exclude it from conversational output.

Claim: Voice first discovery design alters ranking logic into response inclusion logic.

Rationale: Generative engines select extractable segments rather than pages.

Mechanism: Structured definitions, entity clarity, and fact density determine inclusion.

Counterargument: Entertainment content resists structural compression.

Conclusion: Precision architecture becomes mandatory.

Voice Interaction Visibility and Voice Led Content Visibility

Voice interaction visibility describes the probability that structured content becomes part of spoken AI responses. Consequently, voice interaction visibility depends on semantic clarity rather than hyperlink authority. It reflects the ability of generative engines to extract precise answer segments during conversational retrieval.

Voice interaction visibility aligns directly with voice led content visibility, which measures response-level exposure instead of click-based metrics. Therefore, structured entity definitions and stable terminology increase inclusion probability. Moreover, explicit logical progression enhances interpretive reliability across multi-turn dialogue.

When AI systems generate spoken responses, they prioritize clarity, coherence, and extractability. As a result, voice interaction visibility becomes a structural outcome of architectural precision.

Voice Led Content Visibility

Voice led content visibility refers to inclusion of structured content within synthesized spoken responses. It replaces page ranking with segment selection as the primary exposure mechanism. Consequently, content architecture must anticipate extraction boundaries.

Voice led content visibility depends on definitional density and fact alignment. Generative engines evaluate whether segments can stand independently without losing semantic coherence. Therefore, modular reasoning units increase reusability across varied conversational contexts.
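Whether a segment can stand on its own can be approximated with a rough editorial check. The heuristic below is an assumption for illustration, not a description of how generative engines evaluate content: it flags segments that open with a pronoun or connective that depends on earlier text, or that never name the entity they describe. `DANGLING_OPENERS` and `is_self_contained` are hypothetical names.

```python
import re

# Hypothetical list of openers that usually depend on a previous sentence.
DANGLING_OPENERS = {
    "it", "this", "that", "these", "those", "they",
    "therefore", "consequently", "thus", "however",
}


def is_self_contained(segment, entity):
    """Heuristic: the segment opens cleanly and names its entity explicitly."""
    words = re.findall(r"[a-z]+", segment.lower())
    if not words:
        return False
    opens_cleanly = words[0] not in DANGLING_OPENERS
    names_entity = entity.lower() in segment.lower()
    return opens_cleanly and names_entity


if __name__ == "__main__":
    entity = "zero click voice discovery"
    good = "Zero click voice discovery describes retrieval outcomes where AI systems provide complete responses."
    weak = "Therefore, it displaces traditional search result interaction."
    print(is_self_contained(good, entity), is_self_contained(weak, entity))
```

A check like this is only a drafting aid; a segment can pass it and still lose meaning when extracted.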

When content segments remain self-contained and semantically stable, AI systems can safely reuse them in response generation. Thus, visibility shifts from navigation pathways to response inclusion logic.

Voice Response Ranking Factors

Voice response ranking factors determine which structured segments generative systems prioritize during synthesis. These factors include entity clarity, factual verification, contextual alignment, and structural stability. Therefore, voice response ranking factors extend beyond keyword matching and focus on semantic integrity.

Voice response ranking factors also evaluate citation presence and definitional precision. Because generative engines integrate probabilistic reasoning, they prefer segments with consistent terminology and verifiable claims. Consequently, structured architecture directly influences inclusion probability.

When response generation prioritizes extractable reasoning blocks, ranking becomes a matter of semantic reliability. Thus, architectural stability functions as a decisive signal in voice-driven environments.

Voice Discovery Content Framework

The voice discovery content framework defines the structural blueprint that aligns content architecture with generative inclusion logic. It integrates entity definitions, mechanism explanations, and implication blocks into reusable modules. Therefore, the framework supports predictable extraction across conversational interfaces.

The framework relies on four primary signal categories that determine response inclusion probability. These signals operate through structured parsing and probabilistic scoring models. Consequently, alignment between semantic clarity and contextual intent increases generative visibility.

In operational terms, the voice discovery content framework organizes content so that AI systems can interpret, extract, and recombine reasoning units without ambiguity.

| Signal Type | Input Source | Evaluation Model | Visibility Outcome |
|---|---|---|---|
| Entity Clarity | Structured text | NLP parsing | Inclusion probability |
| Fact Density | Data references | Citation analysis | Trust weighting |
| Context Match | Intent signals | Semantic scoring | Response ranking |
| Structural Stability | Layout hierarchy | Extraction models | Reusability |

These structural signals collectively determine whether content becomes part of synthesized voice responses rather than remaining confined to link-based ranking systems.
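The four signal types can be combined into a single illustrative score. The source does not specify a formula, so the weighted average below, the equal weights in `WEIGHTS`, and the assumption that each signal is already normalized to the range 0 to 1 are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SegmentSignals:
    # Each value is assumed to be pre-normalized to the range 0.0 to 1.0.
    entity_clarity: float
    fact_density: float
    context_match: float
    structural_stability: float


# Hypothetical equal weighting; a real system would learn or tune these.
WEIGHTS = {
    "entity_clarity": 0.25,
    "fact_density": 0.25,
    "context_match": 0.25,
    "structural_stability": 0.25,
}


def inclusion_score(signals, weights=WEIGHTS):
    """Weighted average of the four signals."""
    total = sum(weights.values())
    return sum(getattr(signals, name) * w for name, w in weights.items()) / total


def rank_segments(segments):
    """Order (label, signals) pairs by descending inclusion score."""
    return sorted(segments, key=lambda item: inclusion_score(item[1]), reverse=True)


if __name__ == "__main__":
    segments = [
        ("loose paragraph", SegmentSignals(0.3, 0.2, 0.6, 0.4)),
        ("definition block", SegmentSignals(0.8, 0.4, 0.6, 1.0)),
    ]
    for label, signals in rank_segments(segments):
        print(label, round(inclusion_score(signals), 2))
```

Changing the weights changes the ranking, which is a reminder that any single score of this kind encodes a judgment about which signal matters most.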

Ambient Intelligence Search Systems and Predictive Models

Ambient intelligence search systems extend voice driven discovery into predictive environments where contextual signals determine informational timing. Research conducted at Berkeley Artificial Intelligence Research (BAIR) demonstrates that contextual learning models improve anticipatory response accuracy when structured signals remain stable. Consequently, voice driven discovery evolves from reactive response generation to proactive contextual mediation. This transition strengthens the structural integration between predictive modeling and generative inclusion logic.

Ambient intelligence search systems are AI frameworks that anticipate information needs using contextual data streams. Within voice driven discovery environments, these systems process device history, environmental signals, temporal patterns, and probabilistic intent models to initiate contextual information surfacing without explicit prompts.

Claim: Predictive voice search models redefine content timing within voice driven discovery systems.

Rationale: Systems surface information before explicit demand in ambient environments.

Mechanism: Contextual signals, device history, and probabilistic inference trigger proactive voice recommendations.

Counterargument: Prediction errors may reduce trust in system outputs.

Conclusion: Reliability signals must stabilize predictive layers to sustain that trust.

Ambient Information Exposure and Proactive Voice Recommendations

Ambient information exposure increases the strategic relevance of voice driven discovery because it enables AI systems to surface content without direct prompts. Consequently, ambient information exposure operates as a continuous layer within voice driven discovery infrastructure. This layer reshapes how structured knowledge becomes visible across devices.

Ambient information exposure relies on proactive voice recommendations that integrate contextual inference and entity stability. Therefore, proactive voice recommendations evaluate prior interaction patterns, device context, and semantic alignment before surfacing information inside voice driven discovery interfaces. Furthermore, consistent terminology and definitional clarity increase prediction accuracy and reduce interpretive ambiguity.

When AI systems anticipate needs and surface information automatically, voice driven discovery becomes a timing-based visibility model. As a result, structured architecture directly influences predictive inclusion probability.

Proactive Voice Recommendations

Proactive voice recommendations function as system-initiated outputs within voice driven discovery environments. These recommendations rely on predictive scoring models that evaluate contextual signals in real time. Consequently, proactive voice recommendations depend on structured content blocks that remain extractable without additional clarification.

Proactive voice recommendations also require fact density and entity consistency to maintain trust in voice driven discovery outputs. Generative systems assess reliability through citation signals and definitional precision. Therefore, structured knowledge graphs and stable vocabulary enhance inclusion likelihood within predictive layers of voice driven discovery.

When recommendations appear before explicit demand, users experience a continuous form of voice driven discovery. Thus, content that anticipates informational needs gains structural priority.

Predictive Voice Search Models

Predictive voice search models operate through probabilistic inference mechanisms that estimate user intent trajectories inside voice driven discovery systems. These models analyze device usage history, conversational context, and environmental patterns. Therefore, predictive voice search models shift voice driven discovery from query resolution to intent forecasting.
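Intent forecasting can be illustrated with a frequency model that predicts the most likely intent for a given context from past observations. The sketch below is a deliberately simple stand-in for probabilistic inference: the class name `IntentForecaster`, the context labels, and the `min_probability` cutoff are assumptions, and the cutoff reflects the concern that low-confidence predictions may erode trust.

```python
from collections import Counter, defaultdict


class IntentForecaster:
    """Predict the most frequent past intent for a context label."""

    def __init__(self):
        self._counts = defaultdict(Counter)

    def observe(self, context, intent):
        """Record that `intent` occurred in `context`."""
        self._counts[context][intent] += 1

    def predict(self, context, min_probability=0.5):
        """Return (intent, probability), or None when confidence is too low."""
        counts = self._counts.get(context)
        if not counts:
            return None
        intent, n = counts.most_common(1)[0]
        probability = n / sum(counts.values())
        if probability < min_probability:
            return None
        return intent, probability


if __name__ == "__main__":
    model = IntentForecaster()
    for intent in ["traffic", "traffic", "weather", "traffic"]:
        model.observe("weekday_morning", intent)
    print(model.predict("weekday_morning"))
    print(model.predict("weekend_evening"))
```

Returning `None` for unseen or ambiguous contexts means the system stays silent rather than surfacing a weak guess.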

Predictive voice search models evaluate semantic match scores across structured repositories optimized for voice driven discovery. Consequently, modular reasoning blocks and explicit entity mapping increase extraction stability. Moreover, contextual coherence across segments enhances model confidence during synthesis.

When prediction models operate effectively, voice driven discovery becomes anticipatory rather than reactive. As a result, content architecture must align with probabilistic selection criteria rather than static ranking metrics.

Conversational AI Discovery Systems

Conversational AI discovery systems combine predictive inference with dialogue management frameworks within voice driven discovery ecosystems. They integrate contextual memory, entity continuity, and proactive surfacing into unified interaction loops. Consequently, these systems extend voice driven discovery into sustained conversational engagement.

Conversational AI discovery systems evaluate structural clarity before including content in generated responses. Therefore, definitional precision, factual verification, and stable terminology directly influence inclusion probability. In addition, predictive alignment with likely follow-up questions increases multi-turn continuity.

When conversational AI discovery systems integrate predictive layers, voice driven discovery becomes anticipatory and iterative. Thus, structured semantic architecture stabilizes inclusion across both proactive and reactive retrieval pathways.

Hands Free Content Access and Structural Adaptation

Hands free content access expands voice driven discovery into physical environments where users interact without screens. Research in speech interaction systems conducted by Carnegie Mellon LTI indicates that spoken interfaces require compact semantic structures to maintain comprehension accuracy. Therefore, hands free content access changes architectural priorities within voice driven discovery systems and demands structural adaptation for audio-first output. This transformation directly affects information density, segmentation logic, and extractability.

Hands free content access refers to interaction where users receive information without screen engagement. Within voice driven discovery ecosystems, this interaction model prioritizes concise, structured, and modular content units that can be delivered through speech synthesis.

Claim: Hands free content access compresses informational depth into concise spoken units.

Rationale: Audio channels impose temporal constraints.

Mechanism: Speech driven discovery optimization reduces redundancy and increases definition density within voice driven discovery environments.

Counterargument: Complex research topics exceed short-form response capacity.

Conclusion: Layered modular design mitigates compression loss in voice driven discovery systems.
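The temporal constraint and the layered design named above can be sketched in a few lines. This is a minimal illustration, not a description of how any assistant budgets speech: the speaking rate of 150 words per minute, the `Layer` type, and the layer names are all assumptions. Layers are ordered from most essential to most detailed, and the function keeps adding layers until the next one would exceed the spoken time budget.

```python
from dataclasses import dataclass

WORDS_PER_MINUTE = 150  # assumed speaking rate, not a figure from the article


@dataclass
class Layer:
    name: str
    text: str


def spoken_seconds(text: str, wpm: int = WORDS_PER_MINUTE) -> float:
    """Estimate how long a text takes to speak at the given rate."""
    return len(text.split()) / wpm * 60


def fit_layers(layers: list[Layer], budget_seconds: float) -> list[Layer]:
    """Keep layers in order until the next one would exceed the time budget."""
    chosen: list[Layer] = []
    used = 0.0
    for layer in layers:
        cost = spoken_seconds(layer.text)
        if used + cost > budget_seconds:
            break
        chosen.append(layer)
        used += cost
    return chosen


# Placeholder texts of 10, 20, and 50 words stand in for real content.
layers = [
    Layer("definition", " ".join(["word"] * 10)),
    Layer("mechanism", " ".join(["word"] * 20)),
    Layer("detail", " ".join(["word"] * 50)),
]
spoken = fit_layers(layers, budget_seconds=15)
```

With a 15 second budget the sketch keeps the definition and mechanism layers and drops the detail layer, which would remain available for a follow-up question.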

Voice Activated Search Flows

Voice activated search flows define the interaction sequence that begins with spoken input and ends with synthesized output. They restructure navigation logic because users no longer scan result pages. Instead, users rely on concise spoken responses generated within voice driven discovery environments.

Voice activated search flows depend on stable entity definitions and clear structural segmentation. Therefore, voice activated search flows prioritize content blocks that can stand independently without visual context. In addition, predictable formatting enhances model confidence during extraction and synthesis.

When search flows operate through speech alone, clarity becomes non-negotiable. Thus, voice activated search flows reward structured reasoning units over narrative depth.

Speech Driven Discovery Optimization

Speech driven discovery optimization refers to architectural adjustments that enhance extractability within voice driven discovery systems. It reduces repetition and increases definition density so that spoken output remains concise and coherent. Consequently, speech driven discovery optimization transforms long-form content into modular semantic containers.

Speech driven discovery optimization also aligns terminology stability with probabilistic inference models. Because audio output lacks visual reinforcement, ambiguity must be minimized at the sentence level. Therefore, structured micro-definitions and explicit logical progression increase inclusion probability within spoken synthesis.

When optimization removes redundancy and clarifies entities, generative systems deliver responses efficiently. As a result, structured compression improves reliability within hands free environments.

Example: A structurally segmented explanation with defined entities and concise reasoning blocks allows conversational AI systems to extract high-confidence segments for spoken delivery without requiring visual reinforcement.

Conversational AI Discovery Systems in Hands Free Contexts

Conversational AI discovery systems operate as dialogue management layers within voice driven discovery ecosystems. In hands free contexts, these systems must maintain context continuity without visual cues. Consequently, conversational AI discovery systems depend on modular reasoning units that can adapt across multi-turn exchanges.

Conversational AI discovery systems evaluate response length, semantic clarity, and contextual relevance before generating spoken output. Therefore, structured semantic boundaries become decisive for inclusion stability. In addition, entity continuity across turns enhances coherence within audio-only interactions.

When users interact through speech alone, conversational AI discovery systems must deliver precise answers without visual fallback. Thus, layered modular design preserves informational integrity even when output remains brief.

- hands free content access

- contextual voice navigation

- voice first discovery design

- spoken query optimization

These structural elements collectively support voice driven discovery in environments where screen-based navigation becomes secondary to spoken interaction.

Zero Click Voice Discovery and Traffic Redistribution

Zero click voice discovery restructures voice driven discovery by removing traditional page visits from the interaction cycle. Peer-reviewed studies by OpenAI indicate that generative summarization alters click-through dynamics in AI-mediated environments. Consequently, zero click voice discovery redistributes digital visibility metrics from traffic volume to response inclusion frequency. This shift changes how organizations evaluate performance within voice driven discovery systems.

Zero click voice discovery is a retrieval outcome where AI provides a complete answer without requiring a site visit. Within voice driven discovery ecosystems, this model replaces link navigation with synthesized responses that integrate entity-based attribution.

Claim: Zero click voice discovery reduces measurable traffic but increases brand citation exposure.

Rationale: AI systems summarize authoritative sources instead of directing users to full pages.

Mechanism: Entity recognition links brand mentions to synthesized answers within voice driven discovery interfaces.

Counterargument: Citation omission limits recognition and weakens attribution stability.

Conclusion: Entity-based authority increases probability of verbal attribution in voice driven discovery systems.

Voice Discovery User Behavior and Voice Assistant Discovery Flow

Voice discovery user behavior reflects interaction patterns shaped by conversational AI and predictive surfacing. As a result, users increasingly favor direct answers over exploratory browsing: they prioritize efficiency and expect concise responses within voice driven discovery environments.

Voice discovery user behavior also aligns with structured response delivery because generative systems reduce cognitive load. Consequently, voice assistant discovery flow replaces multi-page navigation with iterative clarification cycles. In addition, response-level inclusion becomes more influential than traditional ranking metrics.

When users interact primarily through speech, behavior shifts toward answer consumption rather than page exploration. Thus, voice discovery user behavior redefines visibility from visit-based to inclusion-based metrics.
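The shift from visit-based to inclusion-based metrics can be made concrete with a small calculation. The sketch below assumes a hypothetical log in which each synthesized answer records the sources it cited and the source, if any, that the user went on to visit; the `ResponseLog` type and the domain names are illustrative only. The inclusion rate counts answers that cite a source, while the click rate counts answers that led to a visit.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ResponseLog:
    """One synthesized answer: the sources it cited and the one visited, if any."""
    cited_sources: list[str]
    clicked_source: Optional[str] = None


def visibility_metrics(logs: list[ResponseLog], source: str) -> dict[str, float]:
    """Compare inclusion-based and visit-based visibility for one source."""
    if not logs:
        return {"inclusion_rate": 0.0, "click_rate": 0.0}
    included = sum(source in log.cited_sources for log in logs)
    clicked = sum(log.clicked_source == source for log in logs)
    return {
        "inclusion_rate": included / len(logs),
        "click_rate": clicked / len(logs),
    }


# Hypothetical log of four answers.
logs = [
    ResponseLog(["example.org", "other.net"]),
    ResponseLog(["example.org"], clicked_source="example.org"),
    ResponseLog(["other.net"]),
    ResponseLog(["example.org"]),
]
metrics = visibility_metrics(logs, "example.org")
```

In this made-up log the source is cited in three of four answers but visited only once, so a traffic report alone would understate its exposure.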

Voice Assistant Discovery Flow

Voice assistant discovery flow defines the sequential logic that begins with spoken input and ends with synthesized output. It integrates speech recognition, intent parsing, and response generation within unified pipelines. Therefore, voice assistant discovery flow operates inside voice driven discovery systems without exposing traditional result pages.

Voice assistant discovery flow also depends on entity continuity and semantic stability. Generative systems evaluate structured segments and select extractable reasoning units for synthesis. Consequently, inclusion probability depends on definitional precision and contextual alignment.

When discovery flows remain confined within conversational interfaces, traffic redistribution becomes structural rather than incidental. Thus, voice assistant discovery flow formalizes zero click interaction as a core retrieval model.
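The three stages named above (speech recognition, intent parsing, response generation) can be sketched as a single pipeline. Every function here is a hypothetical stand-in: the recognizer simply decodes bytes as text, and the intent parser handles only questions of the form "what is X". The point is the shape of the flow, in which spoken input goes in and a synthesized answer comes out without any result page in between.

```python
from dataclasses import dataclass


@dataclass
class Intent:
    action: str
    entity: str


def recognize_speech(audio: bytes) -> str:
    # Placeholder: a real system would run a speech recognition model here.
    return audio.decode("utf-8")


def parse_intent(transcript: str) -> Intent:
    # Toy rule: "what is X" becomes a "define" intent for entity X.
    text = transcript.lower().strip(" ?")
    prefix = "what is "
    if text.startswith(prefix):
        return Intent("define", text[len(prefix):])
    return Intent("unknown", text)


def generate_response(intent: Intent, definitions: dict[str, str]) -> str:
    # Answer only from explicitly defined entities; otherwise decline.
    if intent.action == "define" and intent.entity in definitions:
        return definitions[intent.entity]
    return "I do not have an answer for that yet."


def discovery_flow(audio: bytes, definitions: dict[str, str]) -> str:
    """Spoken input in, synthesized answer out, with no result page in between."""
    return generate_response(parse_intent(recognize_speech(audio)), definitions)


definitions = {
    "zero click voice discovery":
        "A retrieval outcome where AI provides a complete answer without a site visit.",
}
answer = discovery_flow(b"What is zero click voice discovery?", definitions)
```

Because the response stage can only answer from explicitly defined entities, the sketch also shows why definitional precision affects inclusion: an entity without a stored definition falls through to the fallback reply.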

Background Search Systems

Background search systems operate continuously and influence zero click voice discovery by preparing response candidates before explicit queries. These systems analyze context, device history, and prior dialogue within voice driven discovery ecosystems. Therefore, background search systems reduce latency and increase proactive inclusion.

Background search systems also evaluate entity authority and factual alignment before generating synthesized answers. Consequently, content with stable terminology and structured semantic boundaries gains higher probability of verbal citation. In addition, consistent entity representation reduces attribution omission.

When background systems anticipate informational needs, synthesized answers become the primary exposure mechanism. As a result, traffic redistribution reflects structural inclusion rather than user navigation.
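Preparing response candidates before an explicit query resembles a prefetching cache. The following sketch is one possible reading of that idea, not a documented design: the `BackgroundCandidateCache` class, its method names, and the normalization rule are assumptions. Likely queries are answered ahead of time, so a later matching query is served from the cache rather than computed on demand.

```python
from typing import Callable


class BackgroundCandidateCache:
    """Prepare answers for likely queries before the user asks them."""

    def __init__(self, answer_fn: Callable[[str], str]) -> None:
        self._answer_fn = answer_fn
        self._cache: dict[str, str] = {}
        self.computed = 0  # how many answers had to be generated

    @staticmethod
    def _normalize(query: str) -> str:
        return query.lower().strip()

    def prefetch(self, likely_queries: list[str]) -> None:
        # Background step: compute candidates for queries predicted from context.
        for query in likely_queries:
            key = self._normalize(query)
            if key not in self._cache:
                self._cache[key] = self._answer_fn(key)
                self.computed += 1

    def answer(self, query: str) -> tuple[str, bool]:
        """Return the answer and whether it was already prepared."""
        key = self._normalize(query)
        if key in self._cache:
            return self._cache[key], True
        self._cache[key] = self._answer_fn(key)
        self.computed += 1
        return self._cache[key], False


cache = BackgroundCandidateCache(lambda q: f"answer to {q}")
cache.prefetch(["What is ambient search", "what is ambient search "])
prepared_answer, was_prepared = cache.answer("What is Ambient Search")
```

The latency benefit in this sketch comes from moving the call to `answer_fn` out of the request path; the quality of the prediction that feeds `prefetch` is outside its scope.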

In 2022, OpenAI research demonstrated that summarization models reduced click-through rates in controlled test environments. However, brand recall increased when entity references were preserved. Exposure therefore shifted from page visits to answer inclusion, and inclusion rather than traffic became the relevant performance metric within voice driven discovery ecosystems.

Structural Implications for Ambient Search Visibility Strategy

Ambient search visibility strategy consolidates voice driven discovery, predictive modeling, and conversational retrieval into a unified enterprise architecture. The governance principles outlined in the NIST AI Risk Management Framework emphasize structural consistency, reliability, and traceability as prerequisites for trustworthy AI systems. Consequently, ambient search visibility strategy must formalize operational requirements that stabilize inclusion probability across voice driven discovery environments. This synthesis transforms isolated optimization tactics into systemic governance.

Ambient search visibility strategy is a systemic optimization model for voice-led, predictive, and conversational environments. It integrates entity clarity, semantic containers, and hierarchical architecture into a coherent framework that supports long-term AI-driven accessibility.

Claim: Ambient search visibility strategy requires structural consistency across semantic layers.

Rationale: Generative systems extract modular reasoning blocks rather than entire documents.

Mechanism: Stable terminology, defined entities, and hierarchical architecture increase inclusion likelihood within voice driven discovery systems.

Counterargument: Rapid platform changes disrupt optimization stability and introduce interpretive variance.

Conclusion: Structural governance ensures long-term AI-driven accessibility and sustained visibility within ambient ecosystems.

Voice Discovery Content Framework Integration

Voice discovery content framework integration aligns modular reasoning units with enterprise governance standards. Therefore, a voice discovery content framework must maintain consistent terminology and explicit micro-definitions across all content layers. This alignment reduces interpretive variance during extraction.

Voice discovery content framework integration also synchronizes structural segmentation with generative inclusion logic. Consequently, concept blocks, mechanism blocks, and implication blocks must remain clearly separated and semantically bounded. In addition, cross-referenced entity definitions stabilize inclusion across multi-turn dialogue.

When enterprises integrate structured frameworks into editorial workflows, visibility becomes predictable rather than incidental. Thus, voice discovery content framework integration transforms optimization into architectural policy.
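The separation of concept, mechanism, and implication blocks can be expressed as a small data structure. The sketch below is illustrative: the `SemanticContainer` type, the `BlockKind` values, and the self-containment check are assumptions about how an editorial workflow might encode these blocks. Each container carries exactly one kind and one entity, and a simple check flags blocks that do not name their own entity.

```python
from dataclasses import dataclass
from enum import Enum


class BlockKind(Enum):
    CONCEPT = "concept"
    MECHANISM = "mechanism"
    IMPLICATION = "implication"


@dataclass(frozen=True)
class SemanticContainer:
    """One modular block: a single kind, a single entity, and its text."""
    kind: BlockKind
    entity: str
    text: str

    def is_self_contained(self) -> bool:
        # A block stands alone only if it names its entity explicitly
        # instead of relying on "it" or "this" from a previous block.
        return self.entity.lower() in self.text.lower()


def group_by_kind(blocks: list[SemanticContainer]) -> dict[BlockKind, list[SemanticContainer]]:
    """Keep concept, mechanism, and implication blocks clearly separated."""
    grouped: dict[BlockKind, list[SemanticContainer]] = {kind: [] for kind in BlockKind}
    for block in blocks:
        grouped[block.kind].append(block)
    return grouped


blocks = [
    SemanticContainer(BlockKind.CONCEPT, "ambient search",
                      "Ambient search surfaces information proactively."),
    SemanticContainer(BlockKind.MECHANISM, "ambient search",
                      "It analyzes context and prior dialogue."),
]
needs_rewrite = [b for b in blocks if not b.is_self_contained()]
```

In this example the mechanism block is flagged because it opens with "It", which would lose its referent if a generative system extracted the block on its own.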

Ambient Search Visibility Strategy

Ambient search visibility strategy formalizes how content architecture supports inclusion across predictive and conversational systems. It requires stable hierarchical headings, explicit definitions, and modular segmentation. Therefore, ambient search visibility strategy extends beyond technical SEO and becomes an organizational governance model.

Ambient search visibility strategy also depends on reliability signals such as citation consistency and factual verification. Generative systems prioritize extractable reasoning units that demonstrate semantic stability. Consequently, structural discipline directly influences long-term inclusion probability.

When governance aligns with extraction logic, ambient search visibility strategy produces sustained inclusion across evolving AI interfaces. As a result, enterprises reduce volatility caused by platform changes.

Conversational Answer Surfacing

Conversational answer surfacing operates as the final interpretive layer within voice driven discovery ecosystems. It selects concise reasoning modules that align with contextual intent and predictive inference. Therefore, conversational answer surfacing requires structural clarity at every semantic boundary.

Conversational answer surfacing also depends on consistency across prior dialogue turns. Stable entity representation and defined terminology prevent interpretive drift. Consequently, structured modular design enhances multi-turn continuity and reuse.

Checklist:

- Are core entities explicitly defined within voice driven discovery architecture?

- Is hierarchical structure consistently applied across H2–H4 layers?

- Do reasoning blocks remain modular and extractable for conversational synthesis?

- Are predictive signals aligned with contextual intent modeling?

- Does terminology remain stable across ambient search environments?

- Is structural governance preserved across updates and platform changes?
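Some checklist items lend themselves to automation. As one example, the question about the H2–H4 hierarchy can be checked mechanically. The sketch below assumes that heading levels have already been extracted from a page as a list of integers; the function name and the message wording are hypothetical. It reports headings that fall outside the allowed range and headings that skip a level on the way down.

```python
def heading_level_issues(levels: list[int], top: int = 2, bottom: int = 4) -> list[str]:
    """Report headings outside the allowed range or that skip a level going deeper."""
    issues: list[str] = []
    previous = None
    for index, level in enumerate(levels):
        if not top <= level <= bottom:
            issues.append(f"heading {index}: level H{level} is outside H{top}-H{bottom}")
        elif previous is not None and level > previous + 1:
            issues.append(f"heading {index}: jumps from H{previous} to H{level}")
        previous = level
    return issues


# A consistent outline, and one that jumps from H2 straight to H4.
clean = heading_level_issues([2, 3, 4, 3, 2, 3])
skipped = heading_level_issues([2, 4])
```

Moving back up the hierarchy, for instance from H4 to H2, is treated as valid in this sketch because it closes a subsection rather than skipping one.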

When conversational answer surfacing integrates predictive context with modular reasoning units, inclusion remains stable across voice and ambient environments. Thus, structural coherence sustains enterprise-level visibility.

- speech driven information retrieval

- ambient search paradigm

- voice interaction visibility

- proactive voice recommendations

- contextual voice navigation

These structural components collectively define an enterprise-ready ambient search visibility strategy that aligns voice driven discovery with long-term AI-driven accessibility.

Interpretive Architecture of Voice-Driven and Ambient Systems

- Conversational depth segmentation. Multi-turn interaction structures enable AI systems to isolate dialogue-dependent semantic units and preserve contextual continuity across generative responses.

- Predictive context layering. Structured separation between contextual signals, entity definitions, and response modules allows anticipatory models to evaluate inclusion without structural collapse.

- Entity stabilization boundaries. Explicit entity framing reduces interpretive drift within voice-driven discovery environments and supports consistent semantic reuse in synthesized outputs.

- Response-level modularization. Segmented reasoning blocks function as independent extraction nodes, increasing compatibility with ambient search architectures and spoken answer systems.

- Temporal relevance signaling. Structural alignment between predictive inference layers and semantic containers enables timing-based surfacing without compromising interpretive coherence.

These architectural signals explain how AI and generative systems interpret page structure within voice-driven discovery environments, preserving semantic continuity and extraction stability across conversational and ambient contexts.

FAQ: Voice Driven Discovery and Ambient Search

What is voice driven discovery?

Voice driven discovery is a retrieval paradigm where spoken input triggers contextual information surfacing and synthesized answers instead of traditional ranked link navigation.

How does voice driven discovery differ from traditional search?

Traditional search presents ranked pages for manual selection, while voice driven discovery delivers structured responses generated from extractable semantic blocks.

What is the ambient search paradigm?

The ambient search paradigm refers to context-aware retrieval systems that surface information proactively through AI mediation without requiring explicit queries.

What is zero click voice discovery?

Zero click voice discovery is a retrieval outcome where AI provides a complete synthesized answer without requiring a page visit, shifting visibility from clicks to inclusion.

How do predictive voice search models affect visibility?

Predictive voice search models anticipate intent using contextual data and probabilistic inference, increasing inclusion probability for structurally stable content.

What role does structure play in voice led content visibility?

Structured definitions, hierarchical segmentation, and semantic clarity enable generative systems to extract modular reasoning units for spoken responses.

How does conversational voice discovery refine intent?

Conversational voice discovery uses multi-turn dialogue and contextual memory to refine ambiguous intent and stabilize entity interpretation across exchanges.

Why does traffic redistribution occur in voice environments?

Traffic redistribution occurs because AI systems summarize authoritative sources directly in responses, reducing page visits while increasing response-level exposure.

What defines an ambient search visibility strategy?

An ambient search visibility strategy integrates entity stability, hierarchical architecture, and predictive alignment to sustain inclusion across voice and conversational systems.

How does hands free content access influence architecture?

Hands free content access compresses informational depth into concise spoken units, requiring modular design and high definition density for reliable synthesis.

Glossary: Key Terms in Voice Driven Discovery

This glossary defines the essential terminology used throughout this article to maintain semantic stability and support consistent interpretation by AI and generative systems.

Voice Driven Discovery

A retrieval paradigm where spoken input activates contextual information surfacing and synthesized responses instead of ranked page lists.

Ambient Search Paradigm

A context-aware retrieval model that operates persistently in the background and surfaces information proactively through AI mediation.

Conversational Voice Discovery

A multi-turn interaction process in which AI systems refine intent across successive spoken exchanges using contextual memory.

Zero Click Voice Discovery

A retrieval outcome where AI delivers a complete spoken answer without requiring a user to visit the original source page.

Predictive Voice Search Models

Probabilistic inference systems that anticipate informational needs using contextual signals, device history, and behavioral patterns.

Voice Interaction Visibility

The probability that structured content becomes part of a synthesized spoken response within conversational AI systems.

Semantic Container

A modular content block that encapsulates a single concept, mechanism, or implication to support reliable extraction by generative models.

Entity Stability

The consistent use of defined entities and terminology across sections to prevent semantic drift in AI interpretation.

Response Inclusion Logic

A visibility model where generative systems select extractable reasoning segments instead of ranking entire pages.

Structural Governance

An enterprise-level discipline that maintains consistent hierarchy, terminology, and semantic boundaries to support long-term AI-driven accessibility.