Last Updated on March 15, 2026 by PostUpgrade

Optimizing Old Content for Modern AI Engines

The transition from traditional search indexing to generative retrieval systems has changed how digital knowledge becomes discoverable. Organizations that maintain large archives must refresh legacy content so that modern AI engines can reliably interpret and reuse information. Language models increasingly rely on structured reasoning patterns, explicit definitions, and verifiable factual signals instead of simple keyword matching. Consequently, many previously successful SEO-optimized pages gradually lose visibility when generative systems synthesize answers, summaries, and knowledge panels.

At the same time, a legacy content refresh strategy has become a core editorial process for organizations managing long-term knowledge assets. Updating legacy website content ensures that older articles maintain semantic clarity, factual reliability, and structural consistency. These qualities allow AI systems to extract stable knowledge fragments from existing documents. Therefore, modern optimization focuses on transforming older pages into structured knowledge modules that remain interpretable, reusable, and discoverable across generative search ecosystems.

The Structural Shift From Traditional SEO to AI Content Retrieval

Modern retrieval systems no longer rely exclusively on keyword frequency or link graph analysis to determine the value of digital content. Instead, generative engines analyze how information is structured and how reliably meaning can be extracted from documents. Consequently, organizations increasingly adopt a legacy content refresh strategy to adapt older archives for machine interpretation and reuse. Research from MIT CSAIL demonstrates that machine comprehension improves when information appears in clearly structured conceptual units rather than loosely organized text blocks.

Legacy content refers to previously published digital material created under earlier search paradigms and not optimized for generative AI interpretation. These materials often follow writing conventions designed for traditional search engines, where ranking signals relied heavily on keywords and backlink profiles. As generative retrieval systems become dominant interfaces for information discovery, organizations must restructure older content so that models can interpret and reuse it as reliable knowledge.

Claim: Updating legacy website content is necessary because generative AI engines evaluate structural meaning rather than keyword frequency.

Rationale: Research from the Stanford Natural Language Processing Group shows that language models prioritize semantic coherence and structured explanations when selecting sources for generated answers.

Mechanism: When models build responses they parse document structure, identify concept boundaries, and retrieve sentences that contain clear factual statements.

Counterargument: Some legacy pages still perform well if they already contain structured data or authoritative citations.

Conclusion: Most older articles require structural updates so that modern AI engines can extract reliable knowledge fragments.

Definition: AI understanding is the model’s ability to interpret meaning, structure, and conceptual boundaries in a way that enables accurate reasoning, reliable summarization, and consistent content reuse across generative discovery systems.

Evolution of AI retrieval models

Artificial intelligence retrieval systems have evolved significantly during the past decade. Early search systems relied primarily on document indexing and ranking algorithms that evaluated textual relevance. However, modern AI retrieval pipelines integrate neural reasoning models that evaluate relationships between concepts, entities, and factual claims. This shift explains why many organizations now modernize legacy content so that information aligns with the expectations of machine reasoning systems.

Several technological developments accelerated this transition toward generative retrieval models. Neural architectures introduced new methods for representing meaning across long documents, while retrieval-augmented generation systems enabled models to combine information from multiple sources. As a result, content refresh workflows now focus on restructuring articles into interpretable semantic units that can be integrated into generative responses.

Key developments influencing generative search include:

- Transformer architectures introduced in 2017

- Retrieval-augmented generation models

- Multi-document synthesis systems

- Knowledge-graph assisted retrieval

These technological shifts collectively explain why structural clarity now determines whether content can be reused by modern AI systems.

The Allen Institute for Artificial Intelligence publishes open research on large-scale retrieval and AI reasoning models, in which generative systems combine multiple information sources into structured answers. This work illustrates how modern retrieval pipelines analyze document structure, entity relationships, and semantic coherence to produce reliable explanations. Consequently, organizations increasingly redesign older content to match the structural expectations of these systems.

In practical terms, modern retrieval systems behave differently from earlier search engines. Instead of ranking pages solely by keyword signals, AI models evaluate whether explanations can be interpreted as discrete knowledge units. Therefore, updating legacy website content has become essential for maintaining visibility in generative search environments.

Consequences for content archives

Generative retrieval systems analyze digital archives through structural interpretation rather than simple keyword matching. These systems evaluate how clearly information is organized, how consistently terminology is used, and whether factual statements can be extracted as independent knowledge fragments. Consequently, legacy page improvement efforts focus on making older articles more interpretable rather than rewriting them entirely. This type of modernization rarely occurs in isolation, because legacy updates usually function as one component within a broader architectural plan for AI discovery. A broader strategic perspective appears in this framework for building a generative visibility strategy, which explains how structured knowledge systems enable AI engines to interpret, reuse, and connect information across entire content ecosystems.

Modern AI retrieval systems typically evaluate several structural signals when interpreting content archives:

- clarity of explanations

- factual density

- structural segmentation

- definitional statements

Each signal influences how effectively a document can contribute to generated answers, summaries, and knowledge panels. When explanations appear in clearly segmented blocks with stable terminology, AI systems can extract them more reliably. As a result, organizations implementing a content refresh workflow often restructure paragraphs, add micro-definitions, and reorganize headings to increase semantic clarity.
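To make the "definitional statements" signal more concrete, the sketch below estimates what share of a passage's sentences read as explicit definitions. It is a rough editorial heuristic under stated assumptions, not a description of how any AI engine scores content: the sentence splitter is naive, and the assumed definition patterns ("X refers to", "X is defined as", "X is a/an/the") are illustrative choices.

```python
import re

# Assumed heuristic: a sentence "looks definitional" if it opens with a short
# capitalized subject followed by a defining verb phrase.
DEFINITION_PATTERN = re.compile(
    r"^[A-Z][\w\s-]{0,60}?\b(?:refers to|is defined as|is an?|is the)\b"
)

def split_sentences(text: str) -> list[str]:
    # Naive splitter: break after ., ! or ? when followed by whitespace.
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]

def definition_ratio(text: str) -> float:
    """Share of sentences (0.0 to 1.0) that match the assumed definition patterns."""
    sentences = split_sentences(text)
    if not sentences:
        return 0.0
    hits = sum(1 for s in sentences if DEFINITION_PATTERN.search(s))
    return hits / len(sentences)
```

A very low ratio on a page that introduces several concepts is one hint that adding micro-definitions could help; the number is a prompt for editorial review, not a target to maximize.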

Older SEO-optimized content frequently lacks these structural properties because it was written for ranking algorithms rather than machine interpretation. Long narrative sections, inconsistent terminology, and missing definitions make it difficult for generative systems to identify stable knowledge units. Consequently, many organizations now modernize legacy content to ensure that archived articles remain interpretable and reusable within contemporary AI-driven information ecosystems.

Identifying Legacy Content That Requires Optimization

Not every document within a large archive requires immediate restructuring. Organizations that maintain extensive publishing histories must first determine which pages show declining interpretability and reduced machine readability. Consequently, a legacy content upgrade process begins with systematic evaluation rather than mass rewriting, because refresh efforts should focus on the pages where structural improvement can restore discoverability. Research summarized by MIT CSAIL shows that machine comprehension increases when textual knowledge appears in clearly segmented conceptual units rather than loosely connected narrative blocks.

Content interpretability refers to the ability of machine systems to extract explicit facts, definitions, and conceptual relationships from written material. Documents that contain clearly segmented ideas, consistent terminology, and verifiable statements produce stronger interpretability signals. Therefore, organizations implementing legacy blog post updates evaluate whether a document can still function as a reliable knowledge source within modern AI retrieval pipelines.

Claim: Legacy article optimization should prioritize pages that contain valuable knowledge but lack modern structural clarity.

Rationale: Studies published by the MIT Computer Science and Artificial Intelligence Laboratory demonstrate that machine comprehension improves when information appears in explicit conceptual units that AI systems can map into semantic graphs.

Mechanism: Content auditing tools evaluate page structure, semantic coherence, entity references, and factual signals that influence whether generative engines can extract knowledge fragments from documents.

Counterargument: Some outdated pages provide limited informational value and therefore should be removed rather than improved.

Conclusion: The most effective refresh strategy focuses on improving outdated pages that retain informational relevance while restructuring them for machine interpretability.

Signals that indicate outdated articles

Content archives reveal structural decay through measurable signals. Over time, articles written for earlier search paradigms lose clarity when terminology evolves, statistics become obsolete, and explanatory structures no longer match modern AI interpretation patterns. As a result, organizations deciding which old website articles to update look for patterns that indicate declining interpretability rather than simply measuring traffic fluctuations.

Editorial teams therefore combine search analytics with structural document analysis. This combined approach identifies whether declining performance reflects informational decay or merely shifts in user interest. When structural signals indicate reduced interpretability, improving outdated pages becomes a more effective strategy than replacing them with entirely new publications.

Key signals that indicate an article requires structural revision include:

- declining organic impressions

- fragmented topic coverage

- absence of structured definitions

- outdated statistics

- inconsistent terminology

These signals collectively indicate that refreshing outdated articles can restore interpretability and allow modern AI systems to extract coherent knowledge fragments from archived documents.
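One way to turn the five signals above into a repeatable triage step is to record them per page and flag a page only when several co-occur. The sketch below is a minimal illustration under assumptions: the `PageAudit` record, its field names, and every threshold (a 20% impressions drop, statistics older than two years, at least two signals) are hypothetical values an editorial team would tune.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class PageAudit:
    # Hypothetical audit record; field names and units are illustrative only.
    url: str
    impressions_change: float              # e.g. -0.35 means impressions fell 35% vs. the prior period
    topic_coverage_fragmented: bool        # set by an editor or a separate topic-mapping step
    has_structured_definitions: bool
    newest_statistic_year: Optional[int]   # None if the page cites no statistics
    terms_for_key_concept: int             # distinct wordings used for the key concept; 1 is consistent

def revision_signals(page: PageAudit, current_year: int) -> list[str]:
    """Return which of the five revision signals apply; all thresholds are assumptions."""
    signals = []
    if page.impressions_change < -0.2:
        signals.append("declining organic impressions")
    if page.topic_coverage_fragmented:
        signals.append("fragmented topic coverage")
    if not page.has_structured_definitions:
        signals.append("absence of structured definitions")
    if page.newest_statistic_year is not None and current_year - page.newest_statistic_year > 2:
        signals.append("outdated statistics")
    if page.terms_for_key_concept > 1:
        signals.append("inconsistent terminology")
    return signals

def needs_revision(page: PageAudit, current_year: int, min_signals: int = 2) -> bool:
    # Require several co-occurring signals so that a traffic dip alone
    # does not trigger structural revision.
    return len(revision_signals(page, current_year)) >= min_signals
```

For example, a page with a 35% impressions drop, fragmented coverage, no definitions, a newest statistic from 2019, and three wordings for its key concept triggers all five signals when audited in 2026. Requiring multiple signals reflects the point above that traffic fluctuations by themselves do not prove informational decay.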

Content audit workflow

Organizations that manage large content libraries require consistent procedures for evaluating which pages should be revised. Consequently, editorial teams establish structured audit workflows that combine semantic analysis with factual verification. These workflows allow editors to identify structural weaknesses that prevent generative engines from interpreting older documents effectively.

| Audit Signal | Detection Method | Recommended Action |
|---|---|---|
| outdated data | statistical verification | update statistics |
| unclear structure | heading analysis | restructure article |
| weak concept definitions | NLP extraction test | add micro-definitions |
| fragmented explanations | paragraph mapping | reorganize sections |

Such audit frameworks allow teams to prioritize legacy blog post updates based on interpretability rather than subjective editorial judgment. Over time, organizations that consistently apply this legacy content upgrade process can maintain long-term knowledge reliability while avoiding unnecessary rewriting of archival material.
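Encoding the audit table as data keeps the mapping from signals to actions identical across editors. The sketch below is one possible encoding, assuming signal names are recorded exactly as they appear in the table; `plan_actions` is a hypothetical helper that returns the recommended actions in table order and rejects signal names the table does not define.

```python
# The audit table, encoded as: signal -> (detection method, recommended action).
AUDIT_TABLE = {
    "outdated data": ("statistical verification", "update statistics"),
    "unclear structure": ("heading analysis", "restructure article"),
    "weak concept definitions": ("NLP extraction test", "add micro-definitions"),
    "fragmented explanations": ("paragraph mapping", "reorganize sections"),
}

def plan_actions(detected: list[str]) -> list[str]:
    """Map detected audit signals to recommended actions, in table order."""
    unknown = set(detected) - set(AUDIT_TABLE)
    if unknown:
        # Fail loudly instead of silently ignoring a misspelled signal.
        raise ValueError(f"unknown audit signals: {sorted(unknown)}")
    return [action for signal, (_method, action) in AUDIT_TABLE.items() if signal in detected]
```

Returning actions in table order, rather than detection order, gives every page the same sequence of work, which supports the consistency goal described above.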

Designing a Structured Legacy Content Refresh Framework

Large knowledge archives require predictable procedures for updating existing material. Organizations managing hundreds or thousands of articles must establish systems that allow teams to refresh legacy content without introducing conceptual inconsistencies across the archive. A structured framework enables editors to apply a consistent content refresh workflow while maintaining knowledge stability and interpretability. Research from the Oxford Internet Institute demonstrates that structured information frameworks significantly improve the reliability of digital knowledge retrieval in machine learning systems.

A refresh framework is a standardized editorial workflow used to update existing articles while preserving conceptual coherence across a content ecosystem. This framework defines how teams evaluate information quality, reorganize article structures, and implement updates that support long-term interpretability. When organizations implement legacy article modernization through structured procedures, they reduce the risk of semantic drift between older and newly updated materials.

Claim: A structured content refresh workflow improves both interpretability and long-term knowledge reuse.

Rationale: Research from the Oxford Internet Institute shows that structured knowledge representation increases retrieval reliability in machine learning systems.

Mechanism: Frameworks enforce consistent headings, definitions, and logical sequencing across updated pages so that generative models can extract stable knowledge fragments.

Counterargument: Over-structuring content may reduce narrative readability for some audiences.

Conclusion: Balanced frameworks allow both human readability and machine interpretability.

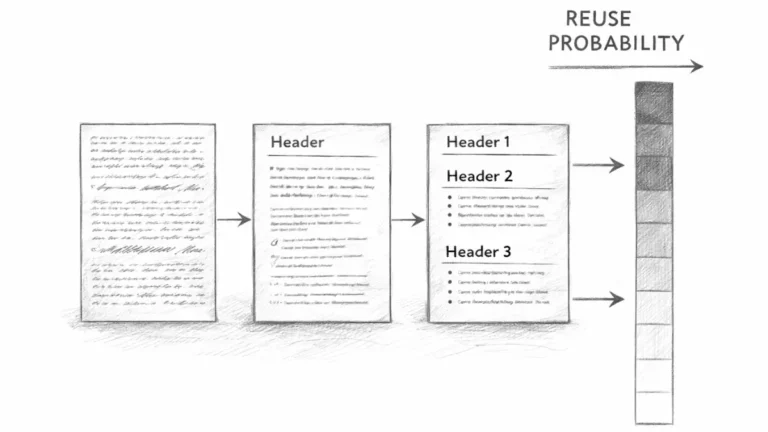

Stages of a refresh framework

Structured modernization begins with clearly defined stages that guide editorial teams through the transformation of archival content. Each stage focuses on improving interpretability signals while preserving the informational value of original articles. Consequently, organizations that follow a defined framework maintain consistent editorial standards across large digital archives.

Editorial frameworks also enable teams to coordinate large-scale updates efficiently. When modernization procedures remain consistent across departments, legacy content maintenance initiatives become easier to scale. In addition, standardized frameworks ensure that legacy article improvement methods produce consistent conceptual structures across updated materials.

Typical stages of a structured refresh framework include:

- auditing legacy pages

- updating factual information

- restructuring explanations

- improving conceptual clarity

- adding authoritative citations

These stages collectively ensure that legacy content relevance updates improve both informational reliability and machine interpretability across entire archives.

Principle: Content becomes more visible in AI-driven environments when its structure, definitions, and conceptual boundaries remain stable enough for models to interpret without ambiguity.

Example enterprise refresh workflow

Large organizations often implement structured modernization processes that guide editors through sequential stages of revision. Such workflows reduce editorial uncertainty while ensuring that each article receives the same level of structural improvement. As a result, content refresh workflow initiatives become scalable even across extensive digital knowledge libraries.

Enterprise-level modernization frameworks usually follow a phased process that separates verification, restructuring, and consistency validation. This separation prevents editorial teams from introducing conceptual inconsistencies while updating archival material. Consequently, legacy article modernization projects remain both efficient and predictable.

Phase 1: Knowledge verification

Knowledge verification begins with evaluating whether existing information remains accurate and relevant. Editors examine statistical claims, references, and factual statements to determine whether data has changed since the original publication. At the same time, they verify whether authoritative sources still support the claims presented within the article.

This phase also evaluates whether conceptual definitions remain valid within current knowledge frameworks. Terminology evolves over time, particularly in fields influenced by rapid technological development. Therefore, updating definitions and clarifying conceptual boundaries becomes essential during legacy content relevance updates.

Once verification is complete, editors possess a reliable factual foundation from which structural modernization can proceed.
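A small part of knowledge verification can be assisted by surfacing candidate stale dates for an editor to check. The sketch below assumes that four-digit years between 1900 and 2099 are a reasonable proxy for dated claims and that anything more than three years old deserves review; both are assumptions. Historical facts (such as "introduced in 2017") will also be flagged, which is why the output is a review queue rather than an automatic verdict.

```python
import re

# Assumed proxy for dated claims: standalone years from 1900 to 2099.
YEAR_PATTERN = re.compile(r"\b(?:19|20)\d{2}\b")

def stale_year_mentions(text: str, current_year: int, max_age: int = 3) -> list[int]:
    """Return distinct years in the text that are more than max_age years old."""
    years = [int(m.group()) for m in YEAR_PATTERN.finditer(text)]
    return sorted({y for y in years if current_year - y > max_age})
```

Editors would then confirm, for each flagged year, whether the surrounding statement is a historical fact to keep or a statistic that needs a newer source.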

Phase 2: Structural reorganization

Structural reorganization focuses on improving the interpretability of existing explanations. Editors reorganize paragraphs into clear semantic units that separate concepts, mechanisms, and implications. This restructuring ensures that AI systems can identify discrete knowledge fragments rather than interpreting large narrative blocks.

During this stage, editorial teams also adjust heading hierarchies to reflect logical relationships between ideas. Clear hierarchical structures enable machine systems to map conceptual dependencies across sections. As a result, legacy article improvement methods often emphasize reorganizing existing explanations rather than rewriting them entirely.

This stage transforms archival articles into structured knowledge modules that modern retrieval systems can interpret more reliably.

Phase 3: Semantic consistency validation

The final phase ensures that terminology and conceptual definitions remain consistent throughout the article. Editors verify that similar ideas use the same vocabulary across sections and that definitions align with the broader editorial framework used across the site.

Consistency validation also examines whether updated sections integrate smoothly with the rest of the content archive. When terminology remains stable across articles, generative retrieval systems can more easily connect related knowledge fragments across multiple documents.

This final stage therefore reinforces the long-term stability of legacy content maintenance initiatives and ensures that updated articles remain compatible with the evolving structure of the knowledge ecosystem.
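Part of semantic consistency validation can be automated against a site glossary. The sketch below assumes a hypothetical glossary that maps each canonical term to wordings the editorial team wants to avoid; the example entries are illustrative, not a recommended vocabulary. It reports which discouraged variants appear, grouped by the canonical term that should replace them.

```python
import re

# Hypothetical site glossary: canonical term -> discouraged variants.
GLOSSARY = {
    "content refresh workflow": ["content update process", "refresh pipeline"],
    "micro-definition": ["mini definition", "short definition"],
}

def terminology_issues(text: str, glossary: dict[str, list[str]]) -> dict[str, list[str]]:
    """Group discouraged variants found in the text by the canonical term to use instead."""
    lowered = text.lower()
    issues: dict[str, list[str]] = {}
    for canonical, variants in glossary.items():
        # Whole-phrase, case-insensitive match for each variant.
        found = [v for v in variants
                 if re.search(r"\b" + re.escape(v.lower()) + r"\b", lowered)]
        if found:
            issues[canonical] = found
    return issues
```

Running the same glossary over every updated article, and not only the page being edited, is what keeps terminology stable across the archive.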

Updating Knowledge and Data Signals in Legacy Articles

Generative AI systems evaluate factual credibility before extracting information from digital sources. Documents that contain outdated statistics, unverifiable claims, or unsupported statements often lose extraction priority in generative search results. Therefore, organizations that refresh legacy content must systematically update factual data and references across archival materials. Analysis published through the OECD Data Explorer shows that structured datasets significantly influence how information reliability is evaluated within modern digital information systems.

Factual signals are verifiable statements supported by recognized datasets, research institutions, or authoritative organizations. These signals include statistical evidence, peer-reviewed research, and institutional data sources that confirm the validity of a claim. When editorial teams perform legacy content lifecycle updates, they ensure that existing explanations remain supported by current evidence rather than outdated references.

Claim: Refreshing old longform content improves factual reliability signals used by generative engines.

Rationale: According to research aggregated through the OECD data research portal, structured datasets strongly influence the evaluation of informational credibility within digital knowledge systems.

Mechanism: AI systems compare claims within articles against verified knowledge bases, structured datasets, and institutional data repositories to determine factual reliability.

Counterargument: Some informational pages rely primarily on conceptual analysis and may not depend heavily on statistical evidence.

Conclusion: Even conceptual articles require periodic verification of referenced data to maintain credibility and interpretability.

Authoritative data sources

Reliable knowledge updates require consistent use of recognized institutional data repositories. Editorial teams responsible for updating legacy knowledge pages typically rely on global statistical institutions and research organizations that maintain publicly accessible datasets. These sources provide verifiable evidence that strengthens factual reliability signals within digital documents.

When organizations refresh archived content pages, they also verify that statistical references remain current and contextually accurate. Because generative AI systems evaluate the credibility of sources, linking claims to recognized institutions increases the probability that models will treat the document as a trustworthy reference.

Authoritative sources commonly used during legacy content lifecycle updates include:

- World Bank Open Data

- Eurostat statistical reports

- Pew Research Center datasets

- Our World in Data

- United Nations Statistics Division

These institutions collectively provide structured datasets that allow editors to update statistical references and maintain the reliability of informational claims.
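A simple way to check that citations point at the kinds of institutions listed above is a hostname allowlist. The sketch below is an assumption-laden illustration: the host names are where these institutions are commonly published and should be verified before use, and `ec.europa.eu` covers the wider European Commission site, not only Eurostat. The check only confirms where a link points, not whether the cited figure is current.

```python
from urllib.parse import urlparse

# Illustrative allowlist for the institutions listed above; verify before use.
AUTHORITATIVE_HOSTS = {
    "data.worldbank.org",  # World Bank Open Data
    "ec.europa.eu",        # Eurostat is published under ec.europa.eu/eurostat
    "pewresearch.org",     # Pew Research Center
    "ourworldindata.org",  # Our World in Data
    "unstats.un.org",      # United Nations Statistics Division
}

def is_authoritative(url: str) -> bool:
    """True if the URL's host is an allowlisted host or one of its subdomains."""
    host = (urlparse(url).hostname or "").lower()
    return any(host == h or host.endswith("." + h) for h in AUTHORITATIVE_HOSTS)

def unverified_citations(urls: list[str]) -> list[str]:
    """Return cited URLs that do not point to an allowlisted institution."""
    return [u for u in urls if not is_authoritative(u)]
```

Matching on the exact host or a dot-prefixed suffix avoids accepting look-alike domains such as `notpewresearch.org`.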

Accurate data references also support long-term knowledge stability across digital archives. When editorial teams rely on recognized institutional datasets, they create consistent factual foundations that generative AI systems can verify and reuse. Consequently, refreshing old longform content frequently involves updating statistical citations rather than rewriting explanatory sections.

Example data update table

Editorial teams refreshing old longform content often implement structured verification processes that track how frequently specific types of data require revision. These processes allow organizations to maintain factual accuracy while minimizing unnecessary editorial work.

| Data Type | Update Frequency | Example Source |
|---|---|---|
| economic indicators | yearly | World Bank |
| technology adoption | quarterly | OECD |
| demographic statistics | yearly | UN Statistics |

Structured update schedules enable organizations to monitor whether statistical references remain valid across archival articles. As a result, updating evergreen legacy articles becomes a predictable process rather than an occasional editorial correction.
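The update table can drive a simple review schedule. The sketch below assumes each citation is tracked as a hypothetical `(label, data_type, last_verified)` tuple and approximates "yearly" as 365 days and "quarterly" as 91 days; both day counts are assumptions. It lists the citations whose review interval has already elapsed.

```python
from datetime import date, timedelta

# Review intervals derived from the update table; day counts approximate
# "yearly" and "quarterly" and are assumptions, not a standard.
UPDATE_INTERVAL_DAYS = {
    "economic indicators": 365,
    "technology adoption": 91,
    "demographic statistics": 365,
}

def is_overdue(data_type: str, last_verified: date, today: date) -> bool:
    """True if this citation was last verified longer ago than its interval."""
    return today - last_verified > timedelta(days=UPDATE_INTERVAL_DAYS[data_type])

def overdue_citations(citations: list[tuple[str, str, date]], today: date) -> list[str]:
    """Return labels of citations whose review interval has elapsed."""
    return [label for label, data_type, checked in citations
            if is_overdue(data_type, checked, today)]
```

For instance, on 15 March 2026 a technology-adoption figure last checked on 1 November 2025 is overdue, while an economic indicator checked on 1 June 2025 is not yet due.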

Consistent verification also strengthens interpretability signals that generative systems evaluate when selecting informational sources. Documents that contain recent datasets and verified references are more likely to be interpreted as reliable knowledge modules.

Consequently, organizations updating legacy knowledge pages increasingly integrate structured data verification into their editorial workflows. This approach ensures that refreshing archived content pages supports long-term credibility while preserving the informational value of existing knowledge resources.

Restructuring Legacy Articles for AI Interpretation

Older informational pages often contain extended narrative passages that combine multiple ideas within the same paragraph. Such structures reduce interpretability because generative systems must infer where one concept ends and another begins. Consequently, organizations that refresh legacy content frequently restructure older documents to separate explanations into explicit conceptual units. Research from Carnegie Mellon University Language Technologies Institute demonstrates that hierarchical document structures significantly improve machine comprehension of long textual materials.

Semantic segmentation divides content into distinct conceptual containers that represent individual knowledge units. Each container isolates a single explanatory element such as a concept, a mechanism, or an implication. When editorial teams upgrade old editorial content through segmentation, they convert narrative passages into structured knowledge units that generative engines can interpret with greater reliability.

Claim: A legacy article revision strategy improves machine comprehension by separating ideas into structured segments.

Rationale: Research conducted at Carnegie Mellon University’s Language Technologies Institute shows that machine readers interpret hierarchical document structures more accurately than unstructured narrative text.

Mechanism: Segmented content enables AI systems to identify conceptual boundaries, map relationships between ideas, and retrieve specific explanatory units from long documents.

Counterargument: Excessive segmentation may fragment narrative continuity and reduce readability for some audiences.

Conclusion: Balanced segmentation improves interpretability while preserving the logical flow of explanations.

Recommended semantic containers

Modern AI interpretation systems operate most effectively when information is divided into clearly defined semantic containers. Each container performs a distinct informational function that contributes to the overall explanation. When organizations improve legacy knowledge articles, they typically reorganize content into consistent conceptual blocks that separate definitions, mechanisms, and implications.

Segmenting content in this way allows generative systems to interpret the function of each paragraph more easily. Instead of processing long narrative passages, AI models can extract individual knowledge fragments that represent discrete informational units. Therefore, many organizations that refresh older website resources adopt container-based document design.

Common semantic containers used when refreshing established content resources include:

- concept explanations

- mechanism descriptions

- practical examples

- implications for organizations

These containers create a predictable informational architecture that allows both human readers and machine systems to identify the role of each paragraph within the article.

Clear container structures also simplify the process of updating archival documents. When information appears in modular blocks, editors can modify individual sections without rewriting the entire article. Consequently, upgraded editorial content remains easier to maintain over time.

Example: A page with clear conceptual boundaries and stable terminology allows AI systems to segment meaning accurately, increasing the likelihood that its high-confidence sections will appear in assistant-generated summaries.

Example restructuring model

A common restructuring approach transforms narrative explanations into sequential conceptual layers. Each layer introduces a distinct informational function that builds progressively toward a complete explanation. When organizations apply this model within a legacy article revision strategy, they ensure that each stage of explanation appears as a clearly identifiable semantic unit.

The restructuring sequence typically follows a logical knowledge progression:

Concept → Definition → Mechanism → Evidence → Implication

This structure ensures that readers first understand the core idea before examining how it works and why it matters. Generative systems also benefit from this sequencing because each stage provides context for the next stage of reasoning. As a result, improving legacy knowledge articles through structured sequencing improves both interpretability and informational clarity.
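Once each block of a restructured section is labeled with its role, the progression above can be checked mechanically. The sketch below assumes editors tag blocks with the five role names in lowercase and that the check runs per section, since a long article may restart the sequence for each new concept. Repeated roles are allowed; a block is flagged only when it appears earlier in the sequence than a block before it.

```python
# The knowledge progression Concept -> Definition -> Mechanism -> Evidence -> Implication.
SEQUENCE = ["concept", "definition", "mechanism", "evidence", "implication"]
RANK = {role: i for i, role in enumerate(SEQUENCE)}

def out_of_order(roles: list[str]) -> list[tuple[int, str]]:
    """Return (index, role) for blocks that step backwards in the sequence."""
    problems = []
    highest = -1
    for i, role in enumerate(roles):
        rank = RANK[role]
        if rank < highest:
            problems.append((i, role))
        else:
            highest = rank
    return problems

def missing_stages(roles: list[str]) -> list[str]:
    """Return stages of the sequence that the section never covers."""
    return [stage for stage in SEQUENCE if stage not in roles]
```

A section tagged concept, mechanism, definition, implication would report the definition block as out of order and evidence as missing, which points the editor to exactly the two changes the model calls for.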

Editorial teams often apply this model when modernizing older documents that contain dense narrative explanations. Instead of rewriting entire sections, they reorganize existing material into explicit conceptual layers. This method allows teams to refresh older website resources without altering the underlying informational content.

Such restructuring also strengthens the reliability of knowledge extraction across generative systems. When each explanatory stage appears as a separate semantic block, AI engines can identify conceptual relationships more accurately. Consequently, refreshing established content resources through segmentation improves both machine interpretation and long-term content maintainability.

Improving Structural Clarity in Longform Articles

Structural clarity strongly influences how generative systems interpret complex documents. Longform articles frequently contain multiple layers of reasoning, evidence, and conceptual explanation. Therefore, organizations that refresh legacy content often redesign the architecture of existing pages so that AI systems can follow a consistent logical progression across sections. Empirical research conducted by the University of Washington NLP Group demonstrates that hierarchical organization of information significantly improves extraction accuracy in machine reading systems.

Structural clarity refers to the predictable organization of information across headings, paragraphs, and sections. A structurally clear document presents ideas in a logical sequence where each section builds upon the previous one. When editorial teams perform a legacy content enhancement process, they reorganize longform articles so that conceptual units appear in stable and interpretable positions.

Claim: Improving existing article quality enhances interpretability signals used in generative retrieval systems.

Rationale: Research from the University of Washington NLP Group demonstrates that hierarchical document structure improves the accuracy of machine information extraction.

Mechanism: Language models prioritize documents where explanations follow consistent logical order and where structural relationships between sections remain predictable.

Counterargument: Certain narrative journalism formats intentionally avoid rigid structural hierarchy in order to maintain storytelling flow.

Conclusion: Knowledge-oriented articles benefit from explicit structural organization because consistent hierarchy improves interpretability for both humans and AI systems.

Structural improvements

Structural clarity is achieved through targeted modifications that reorganize how information appears within long documents. These modifications do not necessarily change the informational content of an article. Instead, they adjust how explanations are distributed across sections so that both readers and machine systems can identify conceptual boundaries more easily.

Editorial teams updating existing longform pages typically begin by simplifying paragraph structures and aligning terminology across sections. These adjustments improve readability while simultaneously strengthening machine interpretability signals. As a result, refreshing long-published articles often involves structural reorganization rather than extensive rewriting.

Common structural improvements implemented to raise existing article quality include:

- shorter paragraphs

- explicit concept definitions

- logical heading hierarchy

- consistent terminology

These adjustments collectively strengthen interpretability signals and make longform documents easier for generative engines to analyze.
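The "shorter paragraphs" adjustment is easy to audit automatically. The sketch below assumes paragraphs are separated by blank lines and uses a 120-word ceiling; that threshold is an assumption for illustration, not a published guideline. It returns the positions of paragraphs that are candidates for splitting.

```python
def long_paragraphs(text: str, max_words: int = 120) -> list[int]:
    """Return 0-based indexes of blank-line-separated paragraphs longer than max_words."""
    paragraphs = [p for p in text.split("\n\n") if p.strip()]
    return [i for i, p in enumerate(paragraphs) if len(p.split()) > max_words]
```

Flagged paragraphs are natural places to apply the container split described earlier, separating the concept from its mechanism or example.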

Structural improvements also support long-term maintainability of content archives. When explanations follow predictable structural patterns, editors can update individual sections without disrupting the overall coherence of the article. Consequently, improving old content relevance becomes a scalable editorial process rather than an isolated correction.

Example heading hierarchy

Hierarchical heading structures allow generative systems to interpret how concepts relate to mechanisms and operational examples. When headings follow consistent logical levels, AI models can map relationships between sections more accurately. As a result, updating existing longform pages often includes restructuring heading hierarchies so that each level represents a distinct conceptual role.

A typical hierarchy used when improving existing articles may appear as follows:

H2 concept

H3 mechanism

H4 operational example

This structure reflects the logical progression of knowledge within explanatory documents. A concept introduces the core idea, the mechanism explains how the idea operates, and the operational example demonstrates practical implications.

Such hierarchies also reduce interpretive ambiguity for machine readers. When structural roles remain consistent across articles, generative retrieval systems can recognize patterns that improve information extraction. Consequently, refreshing long-published articles through hierarchical restructuring strengthens both interpretability and long-term informational stability across content archives.
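A hierarchy like this can also be checked mechanically before an article is republished. The sketch below assumes the headings have already been extracted as hypothetical `(level, title)` pairs. It flags levels that skip a step, for example H2 directly to H4, as well as levels that fall outside the H2 to H4 roles described above. The role mapping and the `check_hierarchy` function are illustrative assumptions.

```python
# Illustrative role mapping following the H2/H3/H4 pattern described in the article.
ROLES = {2: "concept", 3: "mechanism", 4: "operational example"}


def check_hierarchy(headings: list[tuple[int, str]]) -> list[str]:
    """Return human-readable problems found in a sequence of (level, title) headings."""
    problems = []
    previous_level = 1  # treat the page title (H1) as the starting level
    for level, title in headings:
        if level not in ROLES:
            problems.append(f"'{title}': H{level} has no assigned role")
        elif level > previous_level + 1:
            problems.append(f"'{title}': jumps from H{previous_level} to H{level}")
        previous_level = level
    return problems


if __name__ == "__main__":
    outline = [
        (2, "Structural improvements"),
        (3, "How segmentation works"),
        (4, "Refreshing an older tutorial"),
        (2, "Lifecycle planning"),
        (4, "Yearly audit example"),  # skips H3
    ]
    for problem in check_hierarchy(outline):
        print(problem)  # 'Yearly audit example': jumps from H2 to H4
```

Moving back up the hierarchy, for example from H4 to H2, is allowed. Only downward jumps that skip a level are reported.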

Microcase: Enterprise Content Refresh in Practice

Organizations that manage large knowledge repositories frequently modernize archival materials in order to maintain long-term discoverability. Structured editorial revisions help ensure that older documents remain interpretable for generative systems and continue to function as reliable informational sources. Enterprises launching initiatives to refresh legacy content often prioritize large collections of longform articles, where small structural improvements can significantly improve machine interpretation. Research from the Columbia University Data Science Institute shows that structured document updates can substantially improve how machine learning systems extract knowledge from archived materials.

A microcase is a short real-world narrative demonstrating how theoretical frameworks operate within practical organizational environments. Microcases illustrate how structured editorial methods influence measurable outcomes in large-scale content systems. When organizations implement strategies for updating old informational content, they frequently analyze archival performance data to determine which modernization methods produce the greatest improvements in discoverability.

Claim: Refreshing historical content assets can restore discoverability in generative search environments.

Rationale: A study conducted by the Columbia University Data Science Institute found that structured updates increased knowledge extraction rates from archival documents processed by machine learning systems.

Mechanism: Updated articles incorporated definitional statements, clearer heading structures, and verified institutional data sources, allowing generative engines to extract reliable information units.

Counterargument: Not every archival document contains informational value that justifies the cost of structural modernization.

Conclusion: Targeted updates based on editorial prioritization significantly improve AI retrieval performance while minimizing unnecessary editorial effort.

A scientific publisher managing a large research archive analyzed 2,500 articles originally published between 2010 and 2016. Many of these documents contained valuable technical explanations but lacked structural segmentation and updated statistical references. The organization implemented a legacy content renewal strategy that introduced hierarchical headings, explicit concept definitions, and updated institutional data sources.

Within twelve months of applying these updates, generative retrieval systems began referencing the revised documents more frequently when synthesizing answers. Internal analytics indicated that the updated articles appeared more often in machine-generated summaries and knowledge responses. The publisher reported a 32 percent increase in AI-generated citation frequency across the refreshed content archive.

This microcase illustrates how refreshing historical content assets can improve machine interpretability without requiring complete rewriting of archival documents. By focusing on structural clarity and factual reliability, organizations can modernize legacy knowledge repositories while preserving the informational value of existing materials.

Building a Long-Term Legacy Content Optimization Strategy

Content modernization cannot be treated as a single corrective action. Knowledge archives evolve continuously as terminology changes, datasets expand, and new research alters established explanations. Therefore, organizations that refresh legacy content must embed modernization practices into long-term editorial governance systems. Research published by the European Commission Joint Research Centre emphasizes that structured knowledge maintenance significantly improves the reliability and longevity of digital information infrastructures.

Content lifecycle management refers to the continuous evaluation, verification, and structural improvement of digital knowledge assets across time. This process ensures that articles remain interpretable, factually accurate, and structurally coherent even as informational contexts evolve. When organizations adopt a legacy content maintenance strategy, they treat editorial modernization as an ongoing operational process rather than a reactive correction.

Claim: Legacy content maintenance strategy ensures long-term generative visibility across evolving AI retrieval systems.

Rationale: Research from the European Commission Joint Research Centre demonstrates that digital knowledge systems remain reliable only when structured maintenance procedures continuously update informational resources.

Mechanism: Organizations implement scheduled audits, data verification cycles, terminology governance, and structural quality checks that preserve interpretability across archival documents.

Counterargument: Rapid publishing environments may prioritize producing new articles instead of maintaining existing informational assets.

Conclusion: Balanced editorial planning preserves both innovation and archival value by maintaining existing knowledge resources while expanding new content.

Components of a sustainable strategy

Long-term modernization strategies rely on predictable governance mechanisms that coordinate editorial updates across large knowledge archives. Without these systems, organizations often address content decay only after performance declines become visible. Consequently, sustainable frameworks focus on proactive monitoring and scheduled structural improvement rather than reactive corrections.

Editorial governance structures typically coordinate modernization through shared documentation standards, verification procedures, and terminology management. These systems ensure that legacy article improvement methods produce consistent outcomes across different editorial teams and subject areas. As a result, organizations implementing legacy content lifecycle updates maintain conceptual stability across large digital repositories.

Key components of a sustainable modernization strategy include:

- periodic content audits

- terminology governance

- data verification schedules

- editorial documentation standards

Together these elements establish a stable operational framework that allows organizations to perform legacy content relevance updates consistently across entire content ecosystems.

Consistent governance also reduces the risk of semantic drift within large knowledge archives. When editorial teams apply common terminology and structural conventions, generative AI systems can interpret relationships between documents more reliably. Consequently, maintaining consistent modernization procedures strengthens long-term interpretability across entire content infrastructures.
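Terminology governance is often supported by a glossary that maps each canonical term to the variants editors should avoid. The sketch below shows one simple way to flag such variants using case-insensitive, whole-word matching. The glossary entries and the `find_variants` helper are hypothetical examples, not a recommended vocabulary.

```python
import re

# Hypothetical glossary: canonical term -> variants editors should replace.
TERMINOLOGY = {
    "generative retrieval": ["generative search", "AI retrieval"],
    "content refresh": ["content update", "content renewal"],
}


def find_variants(text: str, terminology: dict[str, list[str]] = TERMINOLOGY) -> list[tuple[str, str]]:
    """Return (variant_found, canonical_term) pairs for non-canonical usages in text."""
    findings = []
    for canonical, variants in terminology.items():
        for variant in variants:
            # \b keeps "content update" from matching inside longer words
            pattern = r"\b" + re.escape(variant) + r"\b"
            if re.search(pattern, text, flags=re.IGNORECASE):
                findings.append((variant, canonical))
    return findings


if __name__ == "__main__":
    draft = "Our content update plan targets generative search visibility."
    print(find_variants(draft))
    # [('generative search', 'generative retrieval'), ('content update', 'content refresh')]
```

Keeping the glossary as shared data, rather than in each editor's memory, is what lets different teams apply the same conventions.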

Lifecycle planning table

Lifecycle planning transforms modernization into a predictable editorial process rather than a series of isolated revisions. Organizations typically establish structured schedules that determine when articles should be evaluated, verified, and structurally improved. These schedules ensure that informational accuracy and structural clarity remain consistent across the entire archive.

| Stage | Activity | Frequency |
|---|---|---|
| audit | identify outdated pages | yearly |
| revision | update knowledge signals | yearly |
| restructuring | improve semantic clarity | as needed |

This lifecycle model allows editorial teams to coordinate legacy article improvement methods across large content repositories without disrupting ongoing publishing activities. Each stage addresses a distinct aspect of modernization, from identifying outdated material to improving interpretability.
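The table can be expressed as a simple scheduling rule. The sketch below is a minimal illustration under stated assumptions. It decides which stages are due for a single article, approximates "yearly" as 365 days, and triggers the "as needed" restructuring stage from an editorial flag rather than from the calendar. The `due_stages` function and its inputs are hypothetical.

```python
from datetime import date, timedelta

# Frequencies mirror the lifecycle table; "yearly" as 365 days is a simplifying assumption.
YEARLY = timedelta(days=365)
SCHEDULE = {"audit": YEARLY, "revision": YEARLY, "restructuring": None}  # None = as needed


def due_stages(last_run: dict[str, date], today: date, needs_restructuring: bool = False) -> list[str]:
    """Return the lifecycle stages that should run for one article on `today`."""
    due = []
    for stage, interval in SCHEDULE.items():
        if interval is None:
            # "as needed": triggered by an editorial flag rather than the calendar
            if needs_restructuring:
                due.append(stage)
        elif stage not in last_run or today - last_run[stage] >= interval:
            # never run before, or the interval has elapsed since the last run
            due.append(stage)
    return due


if __name__ == "__main__":
    history = {"audit": date(2025, 1, 10), "revision": date(2025, 6, 1)}
    print(due_stages(history, today=date(2026, 3, 15)))  # ['audit']
```

Running a function like this across an archive produces a per-article work queue, so audits can proceed alongside ongoing publishing.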

Organizations that integrate lifecycle planning into their editorial governance systems maintain long-term knowledge reliability while avoiding large-scale content decay. Consequently, legacy content relevance updates become routine operational activities that preserve the informational value of digital archives across evolving AI retrieval environments.

Checklist:

- Does the page define its core concepts with precise terminology?

- Are sections organized with stable H2–H4 boundaries?

- Does each paragraph express one clear reasoning unit?

- Are examples used to reinforce abstract concepts?

- Is ambiguity eliminated through consistent transitions and local definitions?

- Does the structure support step-by-step AI interpretation?

Interpretive Architecture of Structured Content in Generative Retrieval

- Semantic boundary detection. Hierarchical heading layers function as segmentation markers that allow generative systems to identify where conceptual units begin and end within long documents.

- Knowledge unit encapsulation. When explanations appear as discrete conceptual blocks, AI models can isolate statements, mechanisms, and implications as independent informational fragments.

- Terminology stabilization. Consistent vocabulary across sections prevents semantic drift, allowing machine interpretation systems to maintain coherent internal representations of related concepts.

- Context layering through document depth. Nested heading structures create contextual scaffolding that supports multi-level interpretation, enabling models to map relationships between ideas across different sections.

- Retrieval-oriented segmentation. Documents that maintain predictable structural segmentation allow generative retrieval pipelines to extract knowledge fragments without reconstructing narrative context.
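Retrieval-oriented segmentation can be illustrated with a short chunking routine. Purely for illustration, the sketch below assumes the document uses Markdown-style H2 to H4 headings (`##` to `####`). It splits the text at each heading and tags every chunk with its full heading path, so each fragment carries its context without the surrounding narrative. Real retrieval pipelines apply their own chunking rules, and the `segment` function here is hypothetical.

```python
import re

HEADING = re.compile(r"^(#{2,4})\s+(.*)$")  # assumes Markdown-style H2-H4 headings


def segment(markdown: str) -> list[dict]:
    """Split a document into chunks, each tagged with its full heading path."""
    chunks: list[dict] = []
    path: list[str] = []
    body: list[str] = []

    def flush() -> None:
        # emit the text collected under the current heading path, if any
        text = "\n".join(body).strip()
        if text:
            chunks.append({"path": " > ".join(path), "text": text})
        body.clear()

    for line in markdown.splitlines():
        match = HEADING.match(line)
        if match:
            flush()
            depth = len(match.group(1)) - 2  # H2 -> 0, H3 -> 1, H4 -> 2
            del path[depth:]  # drop headings at this level or deeper
            path.append(match.group(2).strip())
        else:
            body.append(line)
    flush()
    return chunks


if __name__ == "__main__":
    doc = "## A\none\n### B\ntwo\n## C\nthree"
    for chunk in segment(doc):
        print(chunk["path"], "|", chunk["text"])
```

In this example the chunks carry the paths "A", "A > B", and "C". The heading path plays the role of the contextual scaffolding described in the list above.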

Within generative information systems, such structural signals function as interpretive anchors that guide how machine readers map meaning, detect conceptual relationships, and reconstruct knowledge from complex documents.

FAQ: Refreshing Legacy Content for AI Search

What does it mean to refresh legacy content?

Refreshing legacy content means updating older articles so modern AI systems can interpret structure, definitions, and factual signals more accurately.

Why do older articles lose visibility in AI search?

Older pages often lack structured headings, clear definitions, and updated data, which reduces interpretability for generative retrieval systems.

How does AI evaluate legacy content?

Generative engines analyze semantic structure, factual reliability, and logical segmentation to determine whether information can be reused in answers.

What is a legacy content refresh strategy?

A legacy content refresh strategy is a structured process that updates existing articles through improved structure, verified data, and clearer semantic organization.

Which signals help AI interpret updated articles?

Clear headings, definitional statements, reliable sources, and logically segmented explanations help AI systems extract knowledge from content.

Why are definitions important for AI readability?

Definitions stabilize meaning inside a document, allowing generative systems to interpret concepts without relying on external inference.

What role does data verification play when updating old articles?

Updating statistics and institutional references improves factual reliability signals that generative search systems use to evaluate information.

Can legacy articles remain useful after modernization?

Yes. When older content is restructured with clearer explanations and updated data, it can become a reliable knowledge source for AI retrieval.

What structural elements improve AI interpretation?

Hierarchical headings, semantic segmentation, consistent terminology, and explicit conceptual explanations improve machine understanding.

Why should organizations maintain a content lifecycle strategy?

Content lifecycle management ensures that existing knowledge remains accurate, interpretable, and discoverable as search technologies evolve.

Glossary: Key Terms in Legacy Content Optimization

This glossary explains the core concepts related to refreshing legacy content so both readers and AI systems can interpret structural signals and knowledge updates accurately.

Legacy Content

Previously published digital material created under earlier search paradigms that may lack structural clarity, updated data, or AI-readable organization.

Content Refresh

The process of updating existing articles by improving structure, verifying data, and clarifying explanations to maintain relevance in modern search systems.

Semantic Segmentation

The division of content into clearly defined conceptual units such as definitions, mechanisms, and implications that AI systems can interpret independently.

Content Interpretability

The degree to which machine systems can extract meaning, relationships, and factual signals from written material.

Factual Signal

A verifiable statement supported by institutional data, research sources, or authoritative statistics that strengthens informational reliability.

Structural Clarity

A predictable organization of headings, paragraphs, and conceptual blocks that allows generative systems to interpret document logic.

Hierarchical Structure

A layered heading system that organizes concepts, mechanisms, and examples in a logical sequence interpretable by AI systems.

Content Lifecycle Management

A governance process that regularly evaluates, updates, and restructures digital content to maintain long-term informational accuracy.

Knowledge Extraction

The process by which AI systems identify and reuse factual statements, definitions, and explanations from structured documents.

Generative Retrieval

A search paradigm where AI systems synthesize answers by extracting and combining knowledge from multiple structured sources.