Last Updated on February 22, 2026 by PostUpgrade

Context Preservation in Long-Form Page Design

Long-form context preservation defines a structural discipline in enterprise publishing that governs how meaning persists across extended documents. The concept moves beyond stylistic coherence and establishes a machine-usable continuity model that supports AI comprehension and generative visibility. This article treats long-form context preservation as an architectural system that ensures semantic stability, predictable reasoning, and extraction-ready structure across multi-thousand-word pages.

Enterprise publishing environments increasingly operate within AI-mediated discovery systems. Therefore, structural continuity directly affects interpretability by language models and ranking systems that rely on hierarchical parsing. As a result, long documents must encode context persistence architecture rather than rely on narrative flow alone.

Long-form context preservation = systematic structural design that maintains semantic continuity across extended content units. It functions as a control layer that aligns conceptual blocks, mechanism blocks, and implication blocks into a stable semantic framework. Consequently, it enables machine-readable coherence across long-read structural environments.

Enterprise AI comprehension systems process content as structured graphs rather than sequential essays. Research from institutions such as MIT CSAIL and the Stanford Natural Language Processing Group suggests that hierarchical encoding improves reasoning reliability in large language models. Therefore, machine-readable architecture becomes a prerequisite for deep content continuity, especially in long-form environments where semantic drift accumulates across scroll depth.

Moreover, generative systems extract knowledge modules rather than full narratives. Accordingly, context persistence architecture must reinforce cross-block context alignment to maintain semantic traceability across sections. When structural meaning persistence is engineered intentionally, AI systems retain stable interpretations even under context window constraints.

This article develops a formal framework for preserving context integrity in long reads. It integrates architectural modeling, semantic flow stabilization, hierarchical reinforcement, and validation systems into a coherent enterprise methodology. Each section builds upon stable terminology and consistent reasoning patterns to prevent semantic fragmentation and to enable long-term interpretability across distributed AI systems.

Architectural Foundations of Long-Form Context Preservation

Long-form context preservation operates as a structural architecture discipline that governs how meaning persists across extended documents. It relies on context persistence architecture and structural meaning persistence as foundational mechanisms that sustain deep content continuity. Consequently, architectural coherence becomes a measurable property rather than an editorial preference.

Context persistence architecture = a structured system that maintains semantic stability across all sections of a long-form document. It defines how conceptual units connect, how semantic dependencies propagate, and how cross-section alignment prevents interpretive drift. Therefore, architectural modeling transforms extended pages into machine-readable systems rather than narrative sequences. The formalization of semantic layers builds upon principles articulated in the W3C Semantic Web standards, which define interoperable meaning structures across distributed systems.

Claim: Long-form context preservation requires architectural modeling at the document level.

Rationale: AI systems parse documents as structured graphs rather than narrative flows, and therefore prioritize hierarchical relationships over stylistic progression.

Mechanism: Context persistence architecture aligns section-level semantics through hierarchical control and macro-level context design that stabilizes meaning across scroll depth.

Counterargument: Surface formatting without structural reinforcement may create visual order, but it fails under long-scroll conditions where semantic dependencies are not encoded.

Conclusion: Architectural control is the prerequisite for semantic continuity in long reads and directly influences interpretability in machine parsing systems.

Definition: AI understanding in long-form systems is the model’s capacity to reconstruct hierarchical semantic relationships across extended documents, preserving context integrity and structural meaning persistence under deep-scroll conditions.

Structural Meaning Persistence as a Conceptual Foundation

Structural meaning persistence defines the ability of a document to retain stable interpretive signals across distributed sections. It functions as a deep content continuity model that binds concepts through structural reinforcement rather than repetition. Consequently, long-read structural coherence emerges from predictable semantic anchors embedded within hierarchical containers.

Architectural systems encode meaning at multiple levels. They stabilize definitions, maintain consistent terminology, and align semantic units through explicit structural signals. As a result, AI extraction engines detect stable relationships instead of isolated informational fragments.

When structural meaning persistence is engineered intentionally, extended documents behave as unified semantic systems rather than collections of independent paragraphs. This coherence allows both human and machine readers to follow reasoning without interpretive breaks.

Mechanisms of Cross-Block Context Alignment

Cross-block context alignment synchronizes conceptual units across distant sections of a document. It relies on hierarchical context reinforcement to ensure that each section reflects the macro-level context design. Therefore, semantic transitions become predictable and traceable across structural layers.

Hierarchical context reinforcement operates by anchoring definitions and preserving controlled vocabulary. Additionally, it aligns thematic signals at both local and global levels. Consequently, macro-level context design ensures that meaning does not fragment as scroll depth increases.

When cross-block alignment functions correctly, each section supports the same conceptual backbone. This approach prevents topic drift and stabilizes interpretive continuity across extended exposition.
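As a rough illustration of how a controlled vocabulary could be checked across sections, the sketch below scans each section for discouraged variants of a canonical term. The function name `find_variant_terms`, the input shapes, and the sample variant list are assumptions made for this example, not part of any established tool.

```python
def find_variant_terms(sections, canonical_variants):
    """Report sections that use a discouraged variant instead of the canonical term.

    sections: list of (title, text) pairs (hypothetical input shape).
    canonical_variants: dict mapping a canonical term to a list of variants to avoid.
    Returns a list of (section title, variant found, canonical term) tuples.
    """
    findings = []
    for title, text in sections:
        lowered = text.lower()
        for canonical, variants in canonical_variants.items():
            for variant in variants:
                # Plain substring match; a real check might need word boundaries.
                if variant.lower() in lowered:
                    findings.append((title, variant, canonical))
    return findings
```

A check like this only flags wording. Deciding whether a variant actually changes the meaning remains an editorial judgment.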

Architectural Modeling Example

Architectural modeling translates conceptual continuity into structural layers that machines can parse. The table below illustrates how architectural layers support context persistence architecture and influence AI parsing outcomes.

| Architectural Layer | Context Function | AI Parsing Outcome |
|---|---|---|
| Definition Layer | Stabilizes terminology and anchors core concepts | Consistent entity recognition |
| Hierarchical Layer | Organizes semantic depth and dependencies | Improved reasoning graph construction |
| Reinforcement Layer | Maintains cross-block context alignment | Reduced semantic drift in extraction |
| Validation Layer | Ensures structural meaning persistence | Higher interpretive reliability |

Each architectural layer contributes to deep content continuity. Together they create a coherent semantic system that supports long-read structural coherence.

Persistent Thematic Architecture

Persistent thematic architecture defines the structural reinforcement of a document’s central theme across all sections. It ensures that thematic signals propagate consistently through context persistence architecture. Consequently, semantic dependencies remain stable even when documents exceed typical context window limits.

Thematic persistence relies on controlled terminology and explicit structural anchors. Moreover, it reinforces long-read structural coherence by preventing local reinterpretation of previously defined concepts. Therefore, semantic alignment remains intact across hierarchical depth.

When thematic architecture persists across the entire document, meaning does not fragment. The central concept remains traceable from introduction to conclusion.

Context-Consistent Page Framework

A context-consistent page framework organizes content according to predictable semantic rules. It integrates structural meaning persistence with cross-block context alignment to maintain macro-level stability. As a result, AI systems parse the document as a coherent reasoning structure rather than isolated informational blocks.

This framework distributes conceptual load evenly across sections. Additionally, it aligns headings, definitions, and mechanisms within hierarchical boundaries. Therefore, the page becomes a context-aware architecture that sustains interpretive continuity.

When the framework enforces consistency across structural layers, extended pages maintain semantic integrity. Both human readers and AI systems can reconstruct the reasoning path without ambiguity or loss of context.

Semantic Continuity Systems in Extended Page Design

Long-form context preservation depends on narrative continuity engineering that converts extended narrative stability into measurable structure. It operationalizes semantic flow stabilization and cross-section meaning alignment as enforceable architectural layers rather than stylistic conventions. Consequently, semantic continuity systems become structural instruments that support reasoning integrity across long-form environments.

Semantic flow stabilization = controlled reinforcement of meaning across sequential content blocks. It ensures that each section extends previously established definitions instead of redefining them implicitly. Therefore, sequential meaning preservation becomes an architectural requirement that aligns with research on hierarchical reasoning models developed by the Stanford Natural Language Processing Group, which demonstrates that predictable semantic transitions improve inference reliability.

Claim: Semantic continuity systems increase long-form reasoning stability.

Rationale: AI reasoning depends on predictable semantic transitions that connect hierarchical meaning units across extended documents.

Mechanism: Sequential meaning preservation is enforced through cross-section alignment logic that maintains semantic dependencies between distributed sections.

Counterargument: Fragmented sections disrupt context traceability across sections and weaken reasoning chains in long-scroll environments.

Conclusion: Continuity-driven page structure improves machine-level comprehension and strengthens interpretive stability.

Semantic Flow Stabilization as a Structural Control Layer

Semantic flow stabilization governs how meaning moves across sections without semantic drift. It relies on sequential meaning preservation to maintain conceptual progression that follows structural dependencies. Consequently, each paragraph connects to prior semantic anchors instead of introducing disconnected informational fragments.

This model treats content as an ordered reasoning system rather than a collection of topical segments. Moreover, it extends hierarchical context reinforcement across the whole document, which supports long-read structural coherence. Therefore, semantic continuity becomes measurable through alignment consistency rather than stylistic impression.

When semantic flow stabilization operates correctly, extended documents maintain logical progression from introduction to final inference. Meaning accumulates in controlled increments instead of fragmenting across independent blocks.

Mechanisms of Section-to-Section Alignment

Section-to-section semantic alignment ensures that each new structural layer references established definitions and previously stabilized terminology. It reinforces context traceability across sections by linking conceptual nodes through explicit semantic signals. As a result, AI systems reconstruct reasoning graphs with higher reliability.

Context traceability across sections functions through consistent heading logic, controlled vocabulary, and hierarchical reinforcement patterns. Additionally, macro-level context design ensures that transitions preserve semantic continuity. Therefore, alignment becomes an enforceable architectural principle rather than a stylistic guideline.

When section-to-section alignment is structurally encoded, semantic drift declines significantly. Each section extends the same conceptual backbone and sustains long-form reasoning stability across scroll depth.
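Consistent heading logic is one of the few alignment signals that can be checked mechanically. The following is a minimal sketch, assuming headings have already been extracted as `(level, title)` pairs; the function name `heading_level_skips` and that input format are hypothetical.

```python
def heading_level_skips(headings):
    """Find headings that jump more than one level deeper than the heading before them.

    headings: list of (level, title) pairs in document order, with level 1 as the top.
    Returns a list of (previous level, current level, title) for each skipped step.
    """
    skips = []
    previous_level = 0  # Treat the start of the document as level 0.
    for level, title in headings:
        if level > previous_level + 1:
            skips.append((previous_level, level, title))
        previous_level = level
    return skips
```

Moving back up the hierarchy (for example from level 3 to level 2) is not flagged, since closing a subsection is a normal transition.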

Operational Microcase: Enterprise Continuity Mapping

An enterprise knowledge platform redesigned its long reads using continuity mapping in page architecture. The team implemented cross-block context alignment and reinforced structural meaning persistence across all documentation layers. After implementation, internal AI extraction consistency increased and summary outputs preserved thematic alignment across scroll depth. Internal review confirmed improved long-form semantic resilience and reduced interpretive variance across automated systems.

The redesign demonstrated that narrative continuity engineering directly influences machine-readable coherence. Moreover, controlled reinforcement prevented topic drift and maintained structural narrative preservation across distributed sections. Therefore, the platform transformed extended narrative stability into measurable interpretive reliability.

Principle: Long-form context preservation strengthens generative interpretability when semantic flow stabilization, hierarchical reinforcement, and cross-section alignment operate as coordinated structural signals rather than isolated formatting decisions.

Long-Form Semantic Resilience

Long-form semantic resilience describes the ability of an extended document to maintain interpretive stability under repeated parsing conditions. It relies on cross-section meaning alignment and structural narrative preservation to sustain semantic continuity across distributed reasoning chains. Consequently, resilience becomes a function of architectural control rather than rhetorical style.

Resilience emerges when semantic dependencies remain intact across hierarchical layers. Furthermore, predictable transitions reduce ambiguity and improve long-read structural coherence. Therefore, documents with stabilized semantic flow withstand repeated extraction without interpretive degradation.

When resilience mechanisms function consistently, extended pages preserve meaning across model updates and context window variations. The reasoning structure remains stable even when partial sections are processed independently.
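One way to picture independent processing of partial sections is to split a page into chunks that each carry their heading path. The sketch below assumes the page is available as `(level, text)` blocks, where level 0 marks a body paragraph and levels 1 to 6 mark headings. The function name and this block format are illustrative assumptions.

```python
def chunks_with_heading_path(blocks):
    """Attach the current heading path to every body paragraph.

    blocks: list of (level, text); level 0 is body text, 1..6 are heading levels.
    Returns a list of dicts with the keys "path" (list of headings) and "text".
    """
    path = []
    chunks = []
    for level, text in blocks:
        if level > 0:
            # Drop headings at this depth or deeper, then record the new heading.
            del path[level - 1:]
            path.append(text)
        else:
            chunks.append({"path": list(path), "text": text})
    return chunks
```

With the path attached, a paragraph processed on its own still carries the section context it was written under.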

Structural Narrative Preservation

Structural narrative preservation ensures that narrative continuity engineering supports architectural reinforcement rather than stylistic continuity alone. It integrates sequential meaning preservation with hierarchical context reinforcement to stabilize reasoning progression. As a result, narrative logic becomes structurally encoded instead of implicitly inferred.

This preservation model anchors conceptual definitions and aligns semantic transitions across macro-level context design. Additionally, it prevents fragmentation that weakens deep content continuity models. Therefore, structural narrative preservation directly supports context traceability across sections and strengthens machine-level interpretability.

When structural preservation is systematically implemented, extended documents function as unified reasoning systems. Meaning flows predictably across sections and supports both human understanding and AI-driven extraction.

Hierarchical Modeling for Deep-Scroll Coherence

Long-form context preservation depends on a deep-scroll coherence strategy that maintains semantic stability across extended vertical progression. It requires long-form logic scaffolding that organizes meaning into layered structures rather than linear sequences. Consequently, deep-structure continuity planning becomes a structural hierarchy discipline that controls semantic expansion across scroll depth.

Deep-scroll coherence strategy = alignment of semantic units across scroll depth without semantic drift. It ensures that conceptual blocks remain connected through hierarchical dependencies and that macro-level context design governs local transitions. Therefore, hierarchical modeling transforms extended exposition into a depth-oriented reasoning system aligned with findings from the Carnegie Mellon University Language Technologies Institute, which emphasizes structured modeling for improved language system coherence.

Claim: Hierarchical context reinforcement stabilizes extended exposition continuity.

Rationale: AI models prioritize structured depth and layered dependencies over narrative length or stylistic progression.

Mechanism: Long-form reasoning stability emerges from layered alignment across blocks that encode semantic hierarchy and controlled transitions.

Counterargument: Linear writing without hierarchical modeling collapses under context expansion and produces fragmented reasoning paths.

Conclusion: Depth-oriented modeling preserves macro-level interpretability and sustains coherent semantic architecture across long-scroll environments.

Long-Form Reasoning Stability as Hierarchical Outcome

Long-form reasoning stability results from explicit structural layering rather than cumulative narrative accumulation. It relies on context reinforcement patterns that anchor definitions at multiple hierarchical levels. Consequently, reasoning does not depend on proximity between paragraphs but on structured semantic dependencies.

Hierarchical modeling distributes conceptual weight across ordered tiers. Moreover, deep-structure continuity planning ensures that each layer references stable terminology and previously defined constructs. Therefore, macro-level interpretability remains intact even when content is processed in segmented form.

When reasoning stability is engineered hierarchically, extended exposition maintains internal coherence. The document functions as a structured reasoning network instead of a sequential argument chain.

Deep-Structure Continuity Planning and Reinforcement Patterns

Deep-structure continuity planning formalizes how meaning propagates across hierarchical tiers. It integrates context reinforcement patterns that stabilize relationships between definitions, mechanisms, and implications. As a result, semantic dependencies remain traceable across extended sections.

Context reinforcement patterns operate through controlled heading logic and explicit conceptual anchors. Additionally, they align long-read structural coherence with predictable semantic transitions. Therefore, structural hierarchy directly reduces continuity risk as scroll depth increases.

When planning aligns reinforcement across levels, semantic drift declines significantly. Each layer extends the same conceptual framework and sustains interpretive continuity across deep-scroll environments.

Structural Modeling Across Scroll Depth

Hierarchical modeling distributes structural control according to scroll progression. The following matrix demonstrates how scroll depth interacts with structural control intensity and continuity risk.

| Scroll Depth | Structural Control | Continuity Risk |
|---|---|---|
| Introduction Level | High definition density and anchored terminology | Low risk of semantic drift |
| Mid-Scroll Layer | Reinforced cross-block dependencies | Moderate risk if alignment weakens |
| Deep-Scroll Layer | Explicit hierarchical references and recap nodes | Increased risk without reinforcement |
| Terminal Layer | Consolidated macro-level integration | Reduced risk when hierarchy remains intact |

Scroll depth amplifies continuity risk when structural reinforcement declines. However, hierarchical context reinforcement counteracts this risk through explicit layering and semantic anchoring.

Content-Wide Contextual Integrity

Content-wide contextual integrity describes the stability of semantic relationships across the entire document. It emerges when hierarchical layers align with long-form logic scaffolding and cross-block alignment mechanisms. Consequently, the document maintains interpretive consistency regardless of entry point.

Integrity depends on structural reinforcement at both macro and micro levels. Furthermore, it requires that each section references the same conceptual backbone through controlled terminology. Therefore, context persistence architecture remains stable across distributed semantic units.

When contextual integrity spans the entire page, the deep-scroll coherence strategy prevents fragmentation. The document retains structural unity even under segmented processing conditions.

Extended Exposition Coherence

Extended exposition coherence ensures that reasoning progression remains stable across large content volumes. It integrates deep-structure continuity planning with layered alignment across blocks. As a result, the exposition evolves without weakening macro-level context design.

This coherence model minimizes continuity risk by reinforcing semantic anchors at predictable intervals. Additionally, it supports long-form reasoning stability through structural repetition of controlled terminology. Therefore, extended exposition coherence becomes a measurable architectural property.

When hierarchical modeling governs exposition, the document sustains coherent reasoning from beginning to end. Meaning remains aligned across scroll depth and supports consistent machine-level interpretation.
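Reinforcement at predictable intervals can be approximated by measuring how long a page goes without mentioning an anchor term. This is a minimal sketch under the assumption that the page is available as a list of paragraph strings; `longest_gap_without_term` is a hypothetical helper name, and any acceptable gap size would be an editorial choice.

```python
def longest_gap_without_term(paragraphs, term):
    """Return the longest run of consecutive paragraphs that never mention the term."""
    needle = term.lower()
    longest = 0
    current = 0
    for paragraph in paragraphs:
        if needle in paragraph.lower():
            current = 0  # The anchor term was reinforced; restart the count.
        else:
            current += 1
            longest = max(longest, current)
    return longest
```

A long gap deep in the page would correspond to the deep-scroll layer in the matrix above, where continuity risk rises without reinforcement.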

Context Memory and Persistence Across Sections

Long-form context preservation requires explicit context memory in page design to maintain persistent thematic architecture across extended documents. Context memory ensures that previously introduced concepts remain active within structural coherence rather than fading across scroll depth. Therefore, semantic continuity depends not only on hierarchy but also on controlled reinforcement of established meaning units.

Context memory = structural reinforcement of previously introduced concepts throughout the document. It operates through anchored references, stable terminology, and explicit conceptual callbacks that prevent semantic drift. Research in large language model processing, including peer-reviewed work published by OpenAI Research, demonstrates that bounded context windows limit retained information, which makes structural anchoring essential for long-form reasoning stability.

Claim: Context anchoring mechanisms reduce semantic loss in extended content.

Rationale: AI models operate within bounded context windows and therefore cannot retain all prior semantic signals without reinforcement.

Mechanism: Anchored references maintain cross-section meaning alignment by reconnecting new sections to previously stabilized definitions and structural anchors.

Counterargument: Redundant repetition without structural control introduces noise and weakens interpretive clarity.

Conclusion: Controlled anchoring sustains context integrity in long reads and stabilizes machine-level comprehension.

Context Anchoring Mechanisms and Structural Recall

Context anchoring mechanisms define how earlier concepts are structurally recalled in later sections. They rely on context-linked section modeling that reconnects distributed blocks to their original definitions. Consequently, the document maintains semantic continuity even when processed in segmented form.

Anchoring does not rely on repetition alone. Instead, it integrates structural signals such as controlled terminology and consistent conceptual framing. Moreover, persistent thematic architecture ensures that each anchor reinforces the same macro-level context design. Therefore, semantic recall becomes an architectural property rather than a rhetorical device.

When anchoring is implemented deliberately, extended documents preserve reasoning chains across sections. Meaning remains stable because structural references maintain alignment between earlier and later semantic units.
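Anchoring presupposes that a term is defined before later sections recall it. The sketch below checks that ordering, assuming definitions follow the `Term = definition` pattern used in this article. The function name and the list-of-paragraphs input are assumptions made for illustration.

```python
def terms_used_before_definition(paragraphs, terms):
    """Return the terms whose first mention comes before their 'Term = ...' definition.

    A term that is mentioned but never defined is also reported.
    A term that never appears at all is ignored.
    """
    problems = []
    for term in terms:
        needle = term.lower()
        marker = needle + " ="
        first_use = None
        first_definition = None
        for index, paragraph in enumerate(paragraphs):
            lowered = paragraph.lower()
            if first_use is None and needle in lowered:
                first_use = index
            if first_definition is None and marker in lowered:
                first_definition = index
        if first_use is not None and (first_definition is None or first_use < first_definition):
            problems.append(term)
    return problems
```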

Context-Linked Section Modeling and Thematic Persistence

Context-linked section modeling connects distributed content blocks through explicit semantic relationships. It ensures that each new structural unit extends the same thematic backbone. As a result, persistent thematic architecture stabilizes macro-level coherence across the entire document.

This modeling approach integrates structural meaning persistence with hierarchical context reinforcement. Additionally, it prevents interpretive fragmentation that emerges when sections operate independently. Therefore, cross-section meaning alignment remains intact even in deep-scroll environments.

When section modeling follows consistent reinforcement logic, context memory becomes cumulative rather than fragile. The document retains structural unity despite extended exposition.

Extended Narrative Stability

Extended narrative stability emerges when context memory supports continuous reasoning progression. It relies on context anchoring mechanisms that reconnect each new section to established semantic foundations. Consequently, the narrative evolves without severing conceptual dependencies.

Stability depends on structural recall rather than stylistic transition. Furthermore, it aligns long-form logic scaffolding with explicit semantic anchors across scroll depth. Therefore, extended narrative stability becomes measurable through reduced semantic drift.

When stability mechanisms function correctly, the document sustains coherent progression across all structural layers. Meaning does not reset between sections but extends from prior conceptual anchors.

Structural Meaning Persistence

Structural meaning persistence ensures that reinforced concepts retain identical semantic interpretation across distributed sections. It integrates context-linked section modeling with persistent thematic architecture to stabilize macro-level context design. As a result, long-form reasoning stability increases as scroll depth expands.

This persistence model reduces interpretive variance during machine parsing. Additionally, it strengthens cross-section meaning alignment by preventing inconsistent terminology or conceptual redefinition. Therefore, structural meaning persistence safeguards context integrity across extended exposition.

When structural persistence is embedded systematically, long-form documents maintain semantic stability from introduction to conclusion. Context memory supports coherent reasoning and enables reliable extraction across AI systems.

Macro-Level Context Modeling and Layout Design

Long-form context preservation depends on context-aware layout modeling that encodes semantic continuity directly into page architecture. Macro-level context design governs how structural elements distribute meaning across extended documents. Therefore, continuity mapping in page architecture transforms layout from visual arrangement into a semantic control system.

Context-aware layout modeling = alignment of structural design with semantic continuity rules. It integrates hierarchical headings, section segmentation, and semantic containers into a unified architecture. Research from MIT CSAIL demonstrates that structured representation significantly improves reasoning reliability in AI systems, which confirms that layout must reinforce semantic hierarchy rather than decorate it.

Claim: Layout architecture directly influences semantic reinforcement patterns.

Rationale: AI parsing prioritizes structural signals such as hierarchy and segmentation over stylistic cues or formatting aesthetics.

Mechanism: Deep-page logical continuity emerges from hierarchical modeling that encodes dependencies through consistent layout structure.

Counterargument: Decorative layout without semantic alignment may appear organized, but it fails machine extraction and weakens interpretive coherence.

Conclusion: Structural continuity must be encoded at layout level to preserve macro-level interpretability in extended documents.

Context-Aware Layout Modeling as Structural Infrastructure

Context-aware layout modeling organizes semantic units according to hierarchical depth rather than visual symmetry. It ensures that each structural layer supports cross-block context alignment and reinforces macro-level context design. Consequently, layout becomes a semantic infrastructure that stabilizes meaning distribution across scroll depth.

Deep-page logical continuity depends on predictable heading progression and structured segmentation. Moreover, continuity mapping in page architecture connects conceptual blocks to structural containers that preserve semantic dependencies. Therefore, layout operates as a reinforcement mechanism that supports long-form reasoning stability.

When layout aligns with semantic continuity rules, extended documents maintain coherent interpretive signals. The structural hierarchy governs meaning flow and reduces ambiguity in both human and machine parsing.

Deep-Page Logical Continuity Through Structural Alignment

Deep-page logical continuity emerges when hierarchical modeling governs the distribution of semantic load across sections. It integrates continuity mapping in page architecture with consistent heading depth and defined semantic boundaries. As a result, each structural element reinforces previously established context rather than introducing isolated fragments.

Structural alignment reduces interpretive risk as scroll depth increases. Additionally, it ensures that semantic transitions remain predictable and anchored to macro-level context design. Therefore, layout modeling directly influences the stability of reasoning chains across extended exposition.

When layout encodes structural dependencies explicitly, machine systems reconstruct reasoning graphs more reliably. The document behaves as a structured reasoning environment instead of a visual composition.

Structural Encoding Across Layout Elements

Layout elements perform specific semantic functions that influence machine signal strength. The following matrix illustrates how layout components contribute to context-aware modeling and continuity reinforcement.

| Layout Element | Context Function | Machine Signal Strength |
|---|---|---|
| Hierarchical Headings | Encode semantic depth and dependencies | High |
| Section Segmentation | Isolate and align conceptual blocks | High |
| Definition Anchors | Stabilize terminology across sections | Medium to High |
| Recap Nodes | Reinforce macro-level context design | Medium |
| Visual Styling Without Hierarchy | Provide aesthetic cues only | Low |

Hierarchical elements generate strong machine-recognizable signals. In contrast, purely decorative features produce weak interpretive reinforcement.

Example: A long-form page that encodes macro-level context design through hierarchical headings, definition anchors, and reinforced transitions enables AI systems to reconstruct deep-page logical continuity, reducing semantic drift during extraction and summarization.
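To make the contrast between hierarchical headings and visual styling concrete, the sketch below uses Python's standard `html.parser` module to pull a heading outline out of an HTML page. Text styled to look like a title but not marked up as `h1` to `h6` does not appear in the outline. The class and function names are illustrative, and real pages may need a more tolerant parser.

```python
from html.parser import HTMLParser


class HeadingOutline(HTMLParser):
    """Collect (level, text) pairs for h1..h6 elements in document order."""

    def __init__(self):
        super().__init__()
        self.outline = []
        self._level = None   # Level of the heading currently being read, if any.
        self._buffer = []    # Text fragments collected inside that heading.

    def handle_starttag(self, tag, attrs):
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self._level = int(tag[1])
            self._buffer = []

    def handle_data(self, data):
        if self._level is not None:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if self._level is not None and tag == f"h{self._level}":
            self.outline.append((self._level, "".join(self._buffer).strip()))
            self._level = None


def extract_outline(html):
    """Return the heading outline of an HTML string as a list of (level, text)."""
    parser = HeadingOutline()
    parser.feed(html)
    parser.close()
    return parser.outline
```

The resulting outline is roughly what a structure-aware parser has to work with, which is why decorative markup contributes so little signal.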

Macro-Level Context Design

Macro-level context design governs how layout distributes conceptual emphasis across the entire document. It aligns hierarchical modeling with semantic containers to preserve structural meaning persistence. Consequently, macro-level organization prevents local sections from diverging conceptually.

This design principle coordinates section ordering, heading depth, and reinforcement intervals. Furthermore, it integrates context anchoring mechanisms to maintain cross-section meaning alignment. Therefore, macro-level context design functions as the architectural backbone of extended documents.

When macro-level design governs layout decisions, the document sustains deep content continuity. Structural coherence persists across scroll depth and supports reliable interpretation.

Context-Consistent Page Framework

A context-consistent page framework ensures that layout structure remains predictable across all sections. It integrates context-aware layout modeling with hierarchical context reinforcement to maintain stable reasoning patterns. As a result, semantic dependencies remain intact throughout the page.

This framework reduces semantic noise and improves extraction reliability. Additionally, it supports structural meaning persistence by aligning visual organization with conceptual architecture. Therefore, the page becomes a coherent semantic system rather than a sequence of isolated visual segments.

When layout and semantic architecture operate as a unified system, long-form documents maintain interpretive stability. Machine parsing recognizes consistent structural cues and reconstructs reasoning without fragmentation.

Cross-Section Alignment and Semantic Stability

Long-form context preservation depends on cross-section meaning alignment to sustain structural narrative preservation across independent document units. Alignment ensures that distributed sections operate within the same macro-level context design. Therefore, long-form semantic resilience becomes a measurable outcome of consistent structural coordination.

Cross-section meaning alignment = systematic consistency of semantic units across independent sections. It requires that definitions, mechanisms, and implications remain stable regardless of section boundaries. Research on structured reasoning models at the Allen Institute for Artificial Intelligence (AI2) emphasizes distributed representation alignment, which supports the need for consistent semantic nodes across extended documents.

Claim: Cross-section alignment increases extraction reliability.

Rationale: AI models compare distributed meaning nodes and detect inconsistencies across sections when semantic units diverge.

Mechanism: Structural narrative preservation enforces semantic continuity by anchoring each section to a shared conceptual backbone.

Counterargument: Alignment alone does not eliminate topic drift, which reduces long-read structural coherence and weakens cross-block context alignment when it goes unchecked.

Conclusion: Alignment across sections stabilizes interpretation and strengthens machine-level reasoning integrity.

Cross-Section Meaning Alignment as Structural Discipline

Cross-section meaning alignment functions as a structural discipline rather than a stylistic guideline. It ensures that each independent section reinforces identical semantic definitions and controlled terminology. Consequently, long-read structural coherence emerges from predictable cross-block dependencies.

Alignment requires explicit coordination between hierarchical levels. Moreover, it integrates context reinforcement patterns that prevent semantic reinterpretation in later sections. Therefore, alignment strengthens semantic flow stabilization across extended exposition.

When alignment mechanisms operate consistently, the document maintains structural unity. Meaning remains synchronized across distributed sections and supports long-form semantic resilience.
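One way to make controlled terminology checkable is to scan each section for known off-vocabulary variants of a canonical term. The sketch below assumes an editor-maintained mapping from canonical terms to discouraged variants; the names `find_term_variants` and `controlled_terms`, and the sample variants, are hypothetical.

```python
import re


def find_term_variants(sections, controlled_terms):
    """Report (section title, variant found, canonical term) for every
    discouraged variant that appears in a section."""
    findings = []
    for title, text in sections.items():
        for canonical, variants in controlled_terms.items():
            for variant in variants:
                # Whole-phrase, case-insensitive match
                pattern = r"\b" + re.escape(variant) + r"\b"
                if re.search(pattern, text, flags=re.IGNORECASE):
                    findings.append((title, variant, canonical))
    return findings


# Hypothetical vocabulary and section texts
controlled_terms = {
    "context integrity": ["contextual soundness", "context quality"],
}
sections = {
    "Validation Frameworks": "Context integrity is measured through audits.",
    "Workflow": "Teams review context quality at each stage.",
}
print(find_term_variants(sections, controlled_terms))
```

A phrase match of this kind only catches variants that someone has already listed; it does not detect implicit redefinitions.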

Long-Read Structural Coherence and Semantic Resilience

Long-read structural coherence arises when alignment persists across all hierarchical tiers. It integrates structural meaning persistence with continuity mapping in page architecture to sustain reasoning stability. As a result, semantic dependencies remain intact despite increased scroll depth.

Long-form semantic resilience depends on this coherence. Additionally, it ensures that macro-level context design governs section-level reasoning. Therefore, interpretive variance declines when structural alignment enforces consistent semantic relationships.

When structural coherence spans the entire document, distributed sections operate as coordinated semantic modules. Each module reinforces the same conceptual system and prevents fragmentation.

Structural Narrative Preservation

Structural narrative preservation stabilizes reasoning progression across independent content units. It integrates cross-section meaning alignment with hierarchical context reinforcement to prevent topic drift. Consequently, narrative continuity becomes structurally encoded rather than inferred.

Preservation depends on explicit anchoring of core definitions and consistent reinforcement intervals. Furthermore, it aligns sequential meaning preservation with macro-level context design. Therefore, structural narrative preservation directly strengthens long-read structural coherence.

When preservation mechanisms are embedded at the architectural level, extended documents maintain interpretive stability. Each section extends a unified reasoning structure instead of redefining it.

Context Reinforcement Patterns

Context reinforcement patterns coordinate distributed semantic units through repeated structural alignment. They ensure that each new section reconnects to established conceptual anchors. As a result, long-form semantic resilience increases as the document expands.

These patterns operate through controlled terminology and consistent hierarchical referencing. Additionally, they reduce semantic drift by stabilizing context persistence architecture. Therefore, reinforcement patterns act as structural safeguards for extended exposition.

When reinforcement is applied systematically, semantic stability persists across the entire page. Machine parsing systems detect consistent meaning relationships and reconstruct coherent reasoning graphs without fragmentation.
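A reinforcement interval can be approximated by counting how many consecutive sections pass without mentioning an anchor term after it is first introduced. The following is a minimal sketch under that assumption; `longest_gap` and the sample sections are illustrative only, and a plain substring match stands in for whatever matching a real audit would use.

```python
def longest_gap(section_texts, anchor):
    """Return the longest run of consecutive sections that omit the anchor
    term, counted only after the anchor's first appearance."""
    anchor = anchor.lower()
    seen = False
    longest = 0
    current = 0
    for text in section_texts:
        if anchor in text.lower():
            seen = True
            current = 0
        elif seen:
            current += 1
            longest = max(longest, current)
    return longest


# Hypothetical section texts, in document order
section_texts = [
    "Context integrity is defined here.",
    "Layout details.",
    "More layout details.",
    "Context integrity is revisited.",
    "Workflow notes.",
]
print(longest_gap(section_texts, "context integrity"))  # prints 2
```

A team could compare this value against an agreed maximum interval and add a recap where the gap is exceeded.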

Validation Frameworks and Integrity Controls

Long-form context preservation requires formal validation systems that measure whether semantic continuity remains stable across document depth. Structural meaning persistence must therefore transition from an assumed property to a verified variable. Consequently, validation frameworks transform long-form context preservation into an auditable architectural discipline.

Context integrity = measurable stability of semantic units across document depth. It reflects whether definitions, mechanisms, and thematic anchors maintain consistent interpretation throughout extended exposition. Standards developed by the National Institute of Standards and Technology (NIST) emphasize structured data integrity controls, which provide a methodological foundation for verifying semantic consistency in complex information systems.

Claim: Validation frameworks are required for scalable context persistence architecture.

Rationale: Enterprise systems require measurable consistency across distributed semantic units rather than informal editorial assurance.

Mechanism: Context traceability across sections enables integrity audits that compare hierarchical alignment, terminology stability, and reinforcement intervals.

Counterargument: Informal editorial review may appear sufficient for short documents, but it cannot detect deep semantic drift that emerges across long-scroll environments.

Conclusion: Structured validation supports content-wide contextual integrity and reinforces long-form context preservation at scale.

Context Traceability Across Sections as Audit Mechanism

Context traceability across sections provides a measurable pathway for verifying semantic continuity. It tracks how definitions propagate through hierarchical layers and how structural meaning persistence remains consistent across independent sections. Therefore, traceability functions as the operational backbone of validation systems.

Traceability integrates controlled terminology, anchored definitions, and cross-block context alignment. Moreover, it aligns with the deep content continuity model by measuring whether semantic dependencies remain intact. Consequently, validation becomes systematic rather than interpretive.

When traceability is embedded into long-form context preservation workflows, semantic stability becomes observable. Structural deviations are detected early, which prevents cumulative interpretive errors across extended documents.
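Because this article states definitions in a `Term = definition.` pattern, one traceability check is to collect every such definition and report terms that are defined differently in more than one section. The sketch below assumes that pattern and plain-text sections; the regular expression and the name `find_conflicting_definitions` are illustrative, not an established audit tool.

```python
import re
from collections import defaultdict

# Matches lines such as "Context integrity = measurable stability of ... depth."
DEFINITION = re.compile(r"^(?P<term>[A-Za-z][\w\- ]*?) = (?P<body>[^.]+)\.")


def find_conflicting_definitions(sections):
    """Map each term to its distinct definitions when more than one exists.
    Each definition is paired with the first section that states it."""
    seen = defaultdict(dict)  # term -> {definition text: first section title}
    for title, text in sections.items():
        for line in text.splitlines():
            match = DEFINITION.match(line.strip())
            if match:
                term = match.group("term").lower()
                body = match.group("body").strip().lower()
                seen[term].setdefault(body, title)
    return {term: bodies for term, bodies in seen.items() if len(bodies) > 1}


# Hypothetical sections; the second one silently redefines the term
doc_sections = {
    "Validation": "Context integrity = measurable stability of semantic units across document depth. More text.",
    "Maintenance": "Context integrity = editorial confidence in the text. Other text.",
}
conflicts = find_conflicting_definitions(doc_sections)
print(conflicts)
```

Exact text comparison is deliberately strict: a reworded but equivalent definition is also reported, which leaves the final judgment to a reviewer.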

Content-Wide Contextual Integrity and Continuity Metrics

Content-wide contextual integrity quantifies whether macro-level context design remains stable across scroll depth. It evaluates hierarchical context reinforcement and measures alignment consistency between distributed semantic units. As a result, integrity shifts from an abstract concept to an operational metric.

Validation systems assess structural coherence through continuity mapping indicators. Additionally, they analyze whether reinforcement intervals sustain semantic flow stabilization. Therefore, integrity metrics directly support long-form context preservation by confirming that architectural controls function as intended.

When contextual integrity is continuously monitored, semantic drift declines significantly. The document sustains deep content continuity even as structural complexity increases.
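The article does not give formulas for its integrity metrics, so the sketch below assumes one simple reading: a terminology consistency rate equal to the share of term mentions that use the canonical wording rather than a listed variant. The function name, the variant list, and the formula itself are assumptions for illustration.

```python
def terminology_consistency_rate(text, canonical, variants):
    """Share of mentions that use the canonical term (1.0 when the term
    never appears). Assumes canonical and variant phrases do not overlap
    as substrings, since plain substring counting is used."""
    lowered = text.lower()
    canonical_hits = lowered.count(canonical.lower())
    variant_hits = sum(lowered.count(v.lower()) for v in variants)
    total = canonical_hits + variant_hits
    return canonical_hits / total if total else 1.0


# Hypothetical page text with two canonical mentions and one variant
page_text = (
    "Context integrity is defined once. "
    "Context integrity is audited later. "
    "Contextual soundness is mentioned once."
)
rate = terminology_consistency_rate(
    page_text, "context integrity", ["contextual soundness"]
)
print(round(rate, 2))  # prints 0.67
```

Tracking this value per page over time would show whether terminology is converging or drifting, under the stated assumption about how the rate is defined.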

Operational Microcase: Large-Scale Documentation Audit

A global documentation platform applied hierarchical context reinforcement across 1,500 pages to stabilize structural meaning persistence. Automated semantic audits recorded a 12% reduction in inconsistencies across distributed sections. Extraction reliability improved in downstream AI systems that relied on consistent semantic nodes. Internal knowledge reuse increased because validated continuity strengthened long-form context preservation across the entire repository.

The audit demonstrated that validation frameworks directly influence semantic resilience. Moreover, measurable context integrity allowed teams to detect structural weaknesses before they affected interpretability. Therefore, scalable validation reinforces macro-level context design and sustains architectural coherence.

Context Integrity in Long Reads

Context integrity in long reads reflects the stability of semantic anchors across extended exposition. It depends on continuous reinforcement and measurable traceability. Consequently, integrity becomes a dynamic variable that evolves as documents expand.

Integrity systems compare distributed semantic nodes to ensure consistent interpretation. Furthermore, they detect deviations in terminology or conceptual framing. Therefore, long-form context preservation remains reliable when integrity checks operate at each structural layer.

When integrity monitoring is systematic, long reads maintain coherent reasoning. Semantic alignment persists across scroll depth and supports predictable machine parsing outcomes.

Macro-Level Context Design Under Validation

Macro-level context design governs how validation integrates with architectural controls. It aligns hierarchical modeling with traceability metrics to ensure that structural continuity remains intact. As a result, macro-level design becomes both generative and evaluative.

Validation mechanisms assess whether layout modeling, reinforcement patterns, and thematic anchors operate cohesively. Additionally, they measure alignment consistency across distributed sections. Therefore, macro-level context design under validation sustains long-form context preservation through measurable architectural discipline.

When macro-level design and validation operate together, semantic stability becomes durable. Extended documents preserve interpretive coherence and support reliable extraction across AI systems.

Enterprise Workflow for Long-Form Context Preservation

Long-form context preservation becomes sustainable only when it operates as an enterprise workflow rather than an isolated editorial practice. It integrates context persistence architecture, deep-scroll coherence strategy, and semantic flow stabilization into a unified operational system. Therefore, long-form context preservation must be embedded into structured publishing cycles to ensure continuity at scale.

Long-form context engineering = systematic design, reinforcement, and validation of semantic continuity across extended documents. It combines architectural modeling, hierarchical reinforcement, and measurable context integrity into repeatable processes. Research from the Harvard Data Science Initiative highlights the importance of reproducible data and modeling workflows, which parallels the need for structured continuity governance in long-form semantic systems.

Claim: Enterprise workflow integration ensures scalable narrative continuity engineering.

Rationale: Manual continuity control does not scale across distributed teams and large document ecosystems.

Mechanism: Workflow embeds structural checks at each publishing stage, including definition anchoring, cross-section alignment validation, and reinforcement pattern auditing.

Counterargument: Over-structuring can reduce editorial flexibility and slow content iteration.

Conclusion: Controlled workflow enables persistent thematic architecture at scale while preserving structural meaning persistence.

Deep-Scroll Coherence Strategy Within Workflow Systems

Deep-scroll coherence strategy must be formalized as a stage within the editorial pipeline. It ensures that semantic units remain aligned as documents expand in length and complexity. Consequently, hierarchical context reinforcement becomes a planned activity rather than an emergent property.

Workflow systems define checkpoints where long-form logic scaffolding is verified. Moreover, sequential meaning preservation is assessed through structured traceability metrics. Therefore, continuity risk decreases when coherence controls are embedded into standardized publishing stages.

When workflow integration governs deep-scroll modeling, extended documents maintain stable reasoning progression. Structural alignment becomes predictable across teams and production cycles.

Long-Form Logic Scaffolding and Sequential Reinforcement

Long-form logic scaffolding defines how reasoning layers are constructed and validated during drafting. It integrates sequential meaning preservation with cross-block context alignment to stabilize semantic progression. As a result, reasoning chains remain intact throughout document expansion.

Sequential reinforcement operates through controlled terminology checks and macro-level context verification. Additionally, workflow rules ensure that new sections reconnect to established semantic anchors. Therefore, logic scaffolding supports long-form context preservation across distributed editorial processes.

When scaffolding mechanisms are standardized, semantic drift declines significantly. Each document evolves within the same structural discipline and preserves interpretive coherence.

Workflow-Based Context Governance

Enterprise workflow transforms context preservation into measurable governance. The matrix below illustrates how different workflow stages encode structural controls and integrity metrics.

| Workflow Stage | Context Control Mechanism | Integrity Metric |
|---|---|---|
| Planning | Definition anchoring and macro-level context mapping | Terminology consistency rate |
| Drafting | Hierarchical context reinforcement and sequential alignment | Cross-section alignment index |
| Review | Context traceability audit and structural coherence validation | Semantic drift percentage |
| Publication | Reinforcement interval verification and layout integrity check | Extraction reliability score |
| Maintenance | Periodic integrity reassessment and terminology normalization | Context stability variance |

Each stage embeds context-aware controls that reinforce architectural discipline. Consequently, long-form context preservation remains stable throughout the document lifecycle.
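The stage-to-metric mapping in the matrix can be expressed as a set of gates that a publishing pipeline evaluates before a document advances. The sketch below takes the stage and metric names from the matrix; the thresholds, the `StageGate` class, and the direction assigned to each metric are hypothetical values chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StageGate:
    stage: str
    metric: str
    threshold: float        # hypothetical value, to be set per organization
    higher_is_better: bool  # assumed direction for the metric

    def passes(self, value):
        if self.higher_is_better:
            return value >= self.threshold
        return value <= self.threshold


GATES = [
    StageGate("Planning", "Terminology consistency rate", 0.95, True),
    StageGate("Drafting", "Cross-section alignment index", 0.90, True),
    StageGate("Review", "Semantic drift percentage", 5.0, False),
    StageGate("Publication", "Extraction reliability score", 0.85, True),
    StageGate("Maintenance", "Context stability variance", 0.05, False),
]


def failed_stages(measurements):
    """Return the stages whose measured metric misses its threshold.
    Metrics absent from `measurements` are skipped, not failed."""
    return [
        gate.stage
        for gate in GATES
        if gate.metric in measurements and not gate.passes(measurements[gate.metric])
    ]


# Hypothetical measurements for one document
measurements = {
    "Terminology consistency rate": 0.97,
    "Semantic drift percentage": 8.0,
}
print(failed_stages(measurements))  # prints ['Review']
```

Keeping thresholds in data rather than in code lets governance teams adjust them without changing the pipeline logic.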

Context-Aware Page Architecture in Enterprise Systems

Context-aware page architecture integrates layout modeling with workflow governance. It ensures that structural hierarchy, heading logic, and reinforcement anchors remain consistent across large-scale content systems. Therefore, page architecture becomes a managed semantic environment rather than a static template.

Enterprise systems apply automated validation checks to preserve structural meaning persistence. Furthermore, they align macro-level context design with integrity metrics defined during planning. Consequently, context-aware architecture sustains predictable interpretability across extended document repositories.

When architecture and workflow operate in coordination, semantic continuity becomes reproducible. Extended documents maintain alignment regardless of author variation or production scale.

Continuity-Driven Page Structure at Scale

Continuity-driven page structure encodes reinforcement logic directly into structural templates. It integrates deep-scroll coherence strategy with standardized alignment protocols. As a result, narrative continuity engineering becomes embedded within enterprise publishing infrastructure.

This approach balances structural discipline with editorial adaptability. Additionally, it ensures that sequential meaning preservation aligns with measurable context integrity. Therefore, continuity-driven structures enable scalable long-form context preservation without compromising macro-level coherence.

When workflow governance enforces structural consistency, semantic stability becomes durable. Long-form context preservation evolves into a systemic capability that supports reliable AI interpretation across large content ecosystems.

Checklist:

- Are core definitions reinforced consistently across deep-scroll layers?

- Does hierarchical modeling preserve macro-level context design?

- Are semantic transitions structurally encoded rather than stylistic?

- Is cross-section meaning alignment traceable through reinforcement patterns?

- Do validation controls measure context integrity across sections?

- Does the workflow embed structural continuity at each publishing stage?

Interpretive Architecture of Long-Form Context Systems

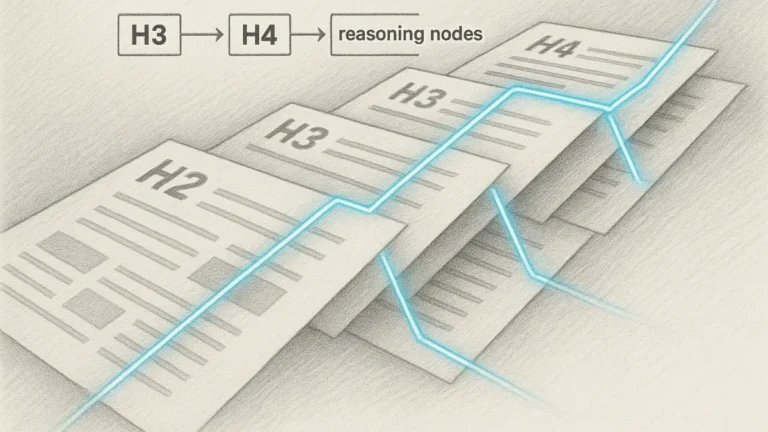

- Hierarchical depth encoding. Layered heading structures signal semantic nesting, allowing generative systems to reconstruct macro-level context design from distributed section boundaries.

- Cross-section dependency signaling. Recurrent conceptual anchors establish traceable relationships between distant blocks, enabling stable interpretation across extended scroll depth.

- Definition-based semantic stabilization. Immediate local definitions reduce interpretive variance by constraining how key constructs are instantiated within internal model graphs.

- Continuity reinforcement markers. Structured transitions and consistent terminology patterns indicate preserved reasoning chains rather than isolated informational fragments.

- Macro-structural coherence alignment. Alignment between layout modeling and conceptual hierarchy supports consistent parsing under segmented indexing and generative retrieval.

These architectural signals clarify how extended documents are interpreted as coherent semantic systems rather than linear narratives, enabling stable reconstruction of reasoning structures within generative environments.

FAQ: Long-Form Context Preservation

What is long-form context preservation?

Long-form context preservation is a structural methodology that maintains semantic continuity across extended documents so that meaning remains stable throughout deep-scroll environments.

Why is context preservation important in long documents?

Extended pages increase the risk of semantic drift. Structured reinforcement, hierarchical modeling, and consistent terminology reduce interpretive fragmentation across sections.

How do AI systems interpret long-form pages?

AI systems reconstruct documents as structured semantic graphs. They rely on stable definitions, hierarchical headings, and cross-section alignment to preserve reasoning integrity.

What causes semantic drift in long-form content?

Semantic drift occurs when sections introduce inconsistent terminology, redefine concepts implicitly, or fail to reinforce prior structural anchors.

How does hierarchical modeling support continuity?

Hierarchical modeling encodes depth relationships between sections, ensuring that new content extends established conceptual frameworks instead of fragmenting them.

What is context memory in page design?

Context memory is the structural reinforcement of previously introduced concepts through anchored references, controlled terminology, and macro-level alignment.

How is context integrity measured?

Context integrity is assessed through traceability across sections, terminology consistency checks, and alignment metrics that detect structural deviations.

What role does layout play in semantic continuity?

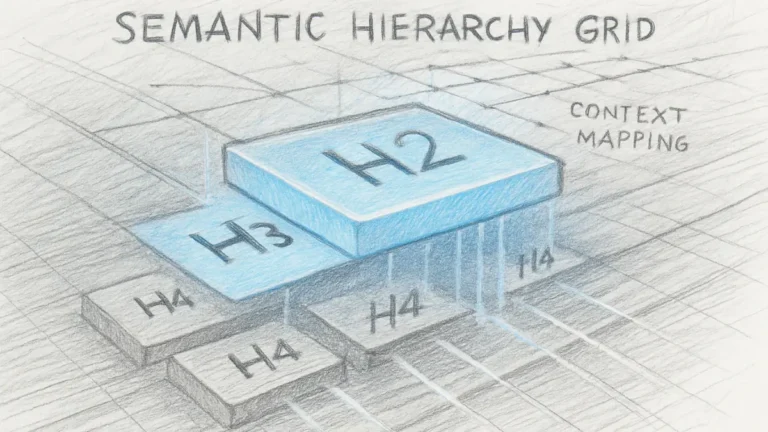

Layout encodes structural hierarchy. Clear H2→H3→H4 depth layers and segmented semantic blocks strengthen machine-readable interpretation.

Can long-form context preservation scale across large content systems?

Yes. Enterprise workflows embed structural validation and reinforcement controls into planning, drafting, and maintenance cycles to sustain continuity at scale.

How does context preservation affect generative search environments?

Generative systems extract modular reasoning units. Stable semantic alignment increases interpretive reliability and reduces variance in generated summaries.

Glossary: Key Terms in Long-Form Context Preservation

This glossary defines the structural terminology used throughout this article to ensure consistent interpretation by both human readers and AI systems operating on extended documents.

Long-Form Context Preservation

A structural discipline that maintains semantic continuity, hierarchical stability, and cross-section alignment across extended page architectures.

Context Persistence Architecture

A document-level structural system that reinforces semantic dependencies and prevents interpretive drift across distributed sections.

Semantic Flow Stabilization

Controlled reinforcement of meaning across sequential content blocks to preserve reasoning continuity throughout scroll depth.

Deep-Scroll Coherence Strategy

A hierarchical modeling approach that aligns semantic units across extended vertical progression without semantic fragmentation.

Context Memory

Structural reinforcement of previously introduced concepts through anchored references and consistent terminology usage.

Cross-Section Meaning Alignment

Systematic consistency of semantic units across independent sections to ensure stable interpretive reconstruction.

Context Integrity

Measurable stability of semantic units across document depth, verified through traceability and structural audits.

Long-Form Context Engineering

Enterprise-level workflow integration of design, reinforcement, and validation mechanisms that sustain semantic continuity at scale.

Structural Narrative Preservation

Architectural enforcement of reasoning continuity across sections to prevent topic drift and interpretive variance.

Macro-Level Context Design

High-level structural coordination of hierarchical layers that governs thematic stability across the entire document.