Last Updated on January 29, 2026 by PostUpgrade

How to Build an Internal Optimization Framework

Enterprise content systems increasingly depend on automated interpretation, retrieval, and reuse. As content volume grows, meaning stability becomes a system-level requirement rather than a property of individual pages. Without structural coordination, interpretation quality degrades even when local content remains accurate.

An internal optimization framework provides the structural logic that keeps meaning consistent across expanding content environments. It aligns editorial decisions, structural rules, and system behavior so that automated agents can interpret content reliably over time. This approach shifts optimization from isolated actions to controlled internal design.

This article explains how governance models, architecture, workflows, measurement logic, and controlled evolution work together as a single system. Each element supports long-term machine comprehension while preserving operational clarity as content ecosystems scale.

Defining an Internal Optimization Framework

Enterprise content systems require explicit structural definitions to remain interpretable as scale increases. An internal optimization framework establishes shared meaning controls that prevent divergence across teams, tools, and automated processes, a requirement aligned with system structuring principles described by NIST. Clear definition precedes implementation because systems cannot enforce consistency without a common internal reference model.

Definition: AI understanding is the model’s ability to interpret meaning, structure, and conceptual boundaries in a way that enables accurate reasoning, reliable summarization, and consistent content reuse across generative discovery systems.

Internal optimization framework: a structured system of rules, architectures, and governance mechanisms that controls how content is created, maintained, and interpreted across internal systems.

Claim: A clearly defined internal optimization framework is required for consistent machine interpretation at scale.

Rationale: Undefined internal systems lead to semantic drift and unstable content reuse.

Mechanism: Formal definitions constrain interpretation paths used by automated systems.

Counterargument: Small sites may rely on informal processes.

Conclusion: At enterprise scale, formal frameworks are mandatory.

Scope boundaries and system ownership

Clear scope boundaries determine where optimization rules apply and where they do not. When boundaries remain explicit, systems avoid conflicting interpretations caused by overlapping responsibilities. Ownership assignments reinforce these boundaries by linking decisions to accountable roles.

System ownership clarifies who maintains definitions, who approves changes, and who resolves conflicts. This structure prevents parallel rule sets from emerging across departments. As a result, optimization logic remains consistent even as teams evolve.

In practical terms, scope boundaries define what each system controls, while ownership defines who keeps those controls stable. Together, they reduce ambiguity and prevent uncontrolled expansion of optimization rules.
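
The sketch below makes the link between scope and ownership concrete. It models scopes as content path prefixes and rejects any registration that overlaps a scope already held by a different owner. The `ScopeRegistry` class, the path-prefix model of scope, and the team names are hypothetical illustrations, not a prescribed tool.

```python
from __future__ import annotations

from dataclasses import dataclass, field


def _as_prefix(path: str) -> str:
    # Normalize "/docs/api" and "/docs/api/" to the same prefix form.
    return path.rstrip("/") + "/"


@dataclass
class ScopeRegistry:
    """Hypothetical registry mapping content scopes (path prefixes) to one accountable owner."""

    owners: dict[str, str] = field(default_factory=dict)

    def register(self, scope: str, owner: str) -> None:
        new = _as_prefix(scope)
        for existing, existing_owner in self.owners.items():
            old = _as_prefix(existing)
            overlaps = new.startswith(old) or old.startswith(new)
            # A different owner claiming an overlapping scope would create a parallel rule set.
            if overlaps and existing_owner != owner:
                raise ValueError(f"{scope!r} overlaps {existing!r}, owned by {existing_owner}")
        self.owners[scope] = owner

    def owner_of(self, path: str) -> str | None:
        # The most specific (longest) registered scope containing the path wins.
        target = _as_prefix(path)
        matches = [s for s in self.owners if target.startswith(_as_prefix(s))]
        return self.owners[max(matches, key=len)] if matches else None


registry = ScopeRegistry()
registry.register("/docs/api", "platform-team")
registry.register("/docs/guides", "editorial-team")
print(registry.owner_of("/docs/api/auth"))  # platform-team
```

In this sketch, the same owner can register nested scopes, but a second owner cannot claim `/docs` while `/docs/api` is already assigned. Conflicts therefore surface at registration time instead of during interpretation.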

Framework versus isolated optimization tactics

A framework differs from isolated tactics because it governs relationships rather than individual actions. Tactics address local issues, while frameworks define how those actions align across systems. Without a framework, optimizations compete instead of reinforcing each other.

Isolated tactics often produce short-term gains but weaken long-term consistency. They introduce exceptions that automated systems cannot reconcile at scale. Over time, these exceptions accumulate and erode interpretability.

A framework replaces disconnected actions with coordinated structure. It ensures that every optimization decision follows the same internal logic and contributes to system-wide stability.

Relationship between internal optimization and operational stability

Operational stability depends on predictable interpretation outcomes. Internal optimization provides this predictability by aligning content creation with structural rules that systems can process consistently. When optimization logic remains stable, operational variance decreases.

Instability emerges when optimization changes lack structural coordination. Systems then interpret similar content differently, leading to unreliable reuse. Stable optimization reduces these discrepancies by enforcing uniform decision paths.

In simpler terms, internal optimization keeps systems behaving the same way over time. This consistency allows operations to scale without introducing interpretive errors.

Internal Optimization Architecture and System Design

Internal optimization architecture defines how structural rules operate across interconnected systems and determines whether optimization logic remains interpretable as complexity grows. An internal optimization architecture provides clarity by specifying how components interact, a principle consistent with system architecture guidance from the W3C. Architectural precision matters more than tooling choice because structure governs interpretation outcomes regardless of implementation details.

Internal optimization architecture: the logical arrangement of components that define how optimization rules are applied across content systems.

Claim: Internal optimization architecture determines system interpretability.

Rationale: Architecture defines interaction boundaries between components.

Mechanism: Clear architectural layers reduce interpretation conflicts.

Counterargument: Ad-hoc architectures may work temporarily.

Conclusion: Long-term scalability requires formal architecture.

Principle: Content becomes more visible in AI-driven environments when its structure, definitions, and conceptual boundaries remain stable enough for models to interpret without ambiguity.

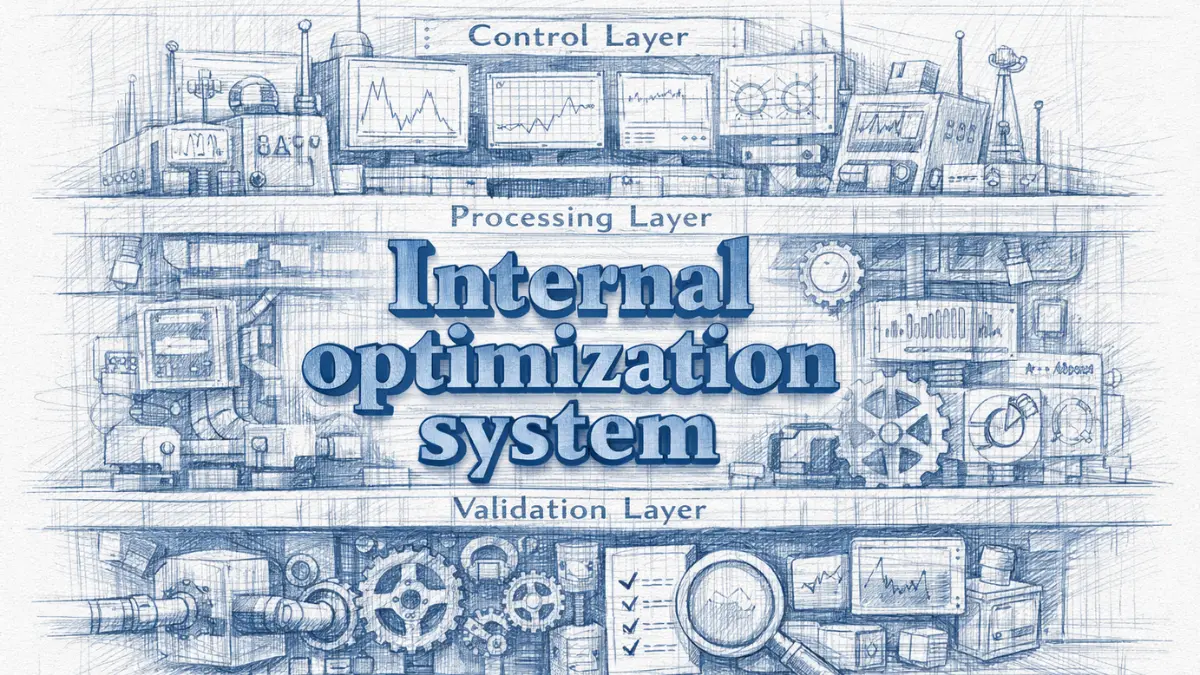

Core layers of an internal optimization system

An internal optimization system relies on clearly defined layers that separate responsibilities and limit cross-dependencies. Each layer performs a distinct function, which prevents optimization logic from leaking across unrelated processes. This separation allows systems to apply rules consistently, even as content volume increases.

Layered design also supports extensibility because changes in one layer do not automatically disrupt others. When layers remain stable, systems can evolve incrementally without destabilizing interpretation logic. This approach preserves clarity across both human and machine interactions.

At a practical level, layered architecture means that governance, execution, and evaluation each operate within their own boundaries. This structure reduces ambiguity and supports predictable optimization behavior.

Separation of control, execution, and evaluation layers

Control layers define what is allowed, execution layers apply those decisions, and evaluation layers measure outcomes. When these layers remain distinct, optimization decisions follow a clear lifecycle rather than forming feedback loops that systems cannot interpret reliably. Separation ensures that rules guide actions instead of reacting to them.

Execution layers should never redefine control logic, and evaluation layers should not enforce rules directly. Violating these boundaries creates circular dependencies that confuse automated interpretation. Clear separation preserves the integrity of optimization signals.

In simpler terms, each layer has a single job and does not interfere with the others. This clarity allows systems to apply optimization logic consistently over time.
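
One way to stop the three layers from redefining each other is to give each one a single, narrow interface. In this minimal sketch, the control layer is an immutable (frozen) dataclass. The execution layer reads it but cannot change it, and the evaluation layer only reports. The field names and the title-length rule are assumptions for illustration.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class ControlLayer:
    """Defines what is allowed. Frozen, so other layers cannot redefine the rules."""

    required_fields: tuple[str, ...]
    max_title_length: int


def execute(doc: dict[str, str], control: ControlLayer) -> dict[str, str]:
    """Execution layer: applies control decisions to a copy of the content."""
    result = dict(doc)
    if "title" in result:
        result["title"] = result["title"][: control.max_title_length]
    return result


def evaluate(doc: dict[str, str], control: ControlLayer) -> dict[str, bool]:
    """Evaluation layer: reports which required fields are present; it edits nothing."""
    return {name: bool(doc.get(name)) for name in control.required_fields}


control = ControlLayer(required_fields=("title", "summary"), max_title_length=10)
published = execute({"title": "A very long title here"}, control)
print(evaluate(published, control))  # {'title': True, 'summary': False}
```

Assigning to `control.max_title_length` raises `dataclasses.FrozenInstanceError`. That is how this sketch keeps the execution layer from rewriting control logic. The evaluation report is returned to the caller rather than acted on, so rules still flow in one direction only.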

Architectural signals and interpretability constraints

Architectural signals emerge from how layers communicate and enforce boundaries. These signals guide automated systems in resolving conflicts and prioritizing rules. When signals remain consistent, interpretation becomes predictable.

Interpretability constraints limit how far optimization logic can propagate across systems. Constraints prevent overreach and ensure that local decisions do not distort global structure. This balance maintains coherence across complex environments.

Put simply, architectural signals tell systems what matters, and constraints tell them where to stop. Together, they keep interpretation stable as systems scale.
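
Read literally, signals and constraints correspond to two properties of a rule. A priority decides which rule wins a conflict, and a scope limits where the rule may apply at all. The sketch below assumes that model. The `Rule` fields, the `"*"` wildcard scope, and the content types are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    key: str       # the setting the rule controls
    value: str     # the value it prescribes
    scope: str     # content type it may touch ("*" for all): the constraint
    priority: int  # higher wins in a conflict: the signal


def resolve(rules: list[Rule], content_type: str) -> dict[str, str]:
    """Return the winning value per key for one content type."""
    resolved: dict[str, str] = {}
    best: dict[str, int] = {}
    for rule in rules:
        # Constraint: rules outside their scope never propagate to this content type.
        if rule.scope not in ("*", content_type):
            continue
        # Signal: a strictly higher priority replaces the current winner; ties keep the earlier rule.
        if rule.key not in best or rule.priority > best[rule.key]:
            resolved[rule.key] = rule.value
            best[rule.key] = rule.priority
    return resolved


rules = [
    Rule("tone", "neutral", "*", 1),
    Rule("tone", "formal", "reference", 5),
    Rule("length", "short", "news", 3),
]
print(resolve(rules, "reference"))  # {'tone': 'formal'}
print(resolve(rules, "news"))       # {'tone': 'neutral', 'length': 'short'}
```

When two applicable rules share a priority, this sketch keeps the one listed first. Rule order therefore becomes part of what governance must control.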

| Architectural Layer | Function | Optimization Role |
|---|---|---|
| Control Layer | Governance rules | Constraint enforcement |
| Execution Layer | Content operations | Rule application |
| Evaluation Layer | Measurement | Feedback stabilization |

Governance, Rules, and Optimization Standards

As internal systems expand, governance becomes the mechanism that preserves alignment between decisions and structural intent. Internal optimization governance ensures that optimization logic remains coherent across teams and tools, which directly reflects governance principles articulated by the OECD. Therefore, authority, rules, and enforcement must operate as a unified system rather than as disconnected controls.

Internal optimization governance: the system of rules and accountability that ensures optimization decisions remain consistent.

Claim: Governance is the stabilizing force of internal optimization.

Rationale: Without governance, optimization decisions fragment across teams and systems.

Mechanism: Rules create predictable decision boundaries that guide behavior consistently.

Counterargument: Informal governance may seem flexible during early growth phases.

Conclusion: At scale, predictability provides more value than flexibility.

Rule definition and enforcement

Rule definition establishes the conditions under which systems and teams may act. Clear rules reduce interpretation variance and limit discretionary behavior, and explicit rules allow automated systems to apply optimization logic without ambiguity.

Enforcement converts defined rules into operational constraints. Validation checks, audits, and approval workflows maintain rule authority over time. Without enforcement, rules degrade into optional suggestions rather than binding controls.

In practice, rule definition and enforcement operate as a single mechanism. Together, they preserve alignment as organizational complexity increases.
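
The following minimal sketch shows definition and enforcement acting as one mechanism. Each rule pairs a human-readable description with a machine-checkable predicate, and a publication gate refuses content that violates any rule. The `Rule` structure, the rule IDs, and the example checks are assumptions, not a specific product's API.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Rule:
    rule_id: str
    description: str               # human-readable definition of the rule
    check: Callable[[dict], bool]  # machine-checkable form: True means compliant


def enforce(content: dict, rules: list[Rule]) -> list[str]:
    """Return the IDs of every rule the content violates."""
    return [rule.rule_id for rule in rules if not rule.check(content)]


def publish(content: dict, rules: list[Rule]) -> bool:
    """Approval gate: content is published only when no rule is violated."""
    violations = enforce(content, rules)
    if violations:
        print("Blocked by:", ", ".join(violations))
        return False
    return True


rules = [
    Rule("R1", "The page must define its primary term", lambda c: bool(c.get("definition"))),
    Rule("R2", "The title must not exceed 60 characters", lambda c: len(c.get("title", "")) <= 60),
]
page = {"title": "Internal optimization", "definition": ""}
publish(page, rules)  # prints "Blocked by: R1" and returns False
```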

Ownership models for optimization decisions

Ownership models determine who creates, approves, and maintains optimization rules. Explicit ownership prevents parallel decision paths that generate conflicting standards, and it ties accountability to ownership rather than to outcomes alone.

Centralized ownership improves consistency, while federated ownership supports scale. However, hybrid models often balance both by combining shared standards with localized execution authority. The effectiveness of any model depends on structural clarity.

Simply stated, ownership defines who decides and who ensures continuity. Clear ownership accelerates resolution when conflicts arise.

Standards versus guidelines in optimization systems

Standards define mandatory requirements that systems must follow. Accordingly, they establish non-negotiable boundaries that protect interpretability and consistency. Because standards apply uniformly, they minimize subjective interpretation.

Guidelines, by contrast, provide recommended practices that support standards. They introduce flexibility where rigid enforcement would hinder adaptation. However, guidelines cannot replace standards when consistency is critical.

Ultimately, standards define what must happen, while guidelines shape how it happens. Effective governance combines both while prioritizing standards for stability.
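
This distinction can be encoded directly in a review step. A failed standard blocks the content, while a failed guideline only produces a warning. The `Level` enum, the `review` function, and the two sample requirements in this sketch are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass
from enum import Enum
from typing import Callable


class Level(Enum):
    STANDARD = "standard"    # mandatory: a failure blocks the content
    GUIDELINE = "guideline"  # recommended: a failure only warns


@dataclass(frozen=True)
class Requirement:
    name: str
    level: Level
    check: Callable[[str], bool]


def review(text: str, requirements: list[Requirement]) -> tuple[list[str], list[str]]:
    """Split failed requirements into blocking errors and non-blocking warnings."""
    errors: list[str] = []
    warnings: list[str] = []
    for req in requirements:
        if req.check(text):
            continue
        if req.level is Level.STANDARD:
            errors.append(req.name)
        else:
            warnings.append(req.name)
    return errors, warnings


requirements = [
    Requirement("non-empty-body", Level.STANDARD, lambda t: bool(t.strip())),
    Requirement("short-paragraph", Level.GUIDELINE, lambda t: len(t) <= 200),
]
errors, warnings = review("A short, compliant paragraph.", requirements)
```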

- Core governance principles

- Enforcement mechanisms

- Exception handling

Taken together, these elements create a governance structure that sustains consistent optimization decisions while still allowing controlled adaptation over time.

Optimization Workflows and Lifecycle Management

Optimization remains effective only when workflows operate as a controlled sequence over time. An internal optimization workflow provides this control by defining stages, checkpoints, and feedback paths, consistent with process lifecycle principles described by the IEEE. Consequently, lifecycle management replaces ad-hoc actions with predictable progression from intent to validation.

Internal optimization workflow: a repeatable sequence of actions governing optimization execution and revision.

Claim: Structured workflows prevent optimization decay.

Rationale: Unmanaged workflows drift over time and accumulate inconsistencies.

Mechanism: Lifecycle checkpoints enforce periodic validation at defined stages.

Counterargument: Manual reviews may catch issues in limited contexts.

Conclusion: Systemic workflows scale more reliably than manual oversight.

Lifecycle stages of internal optimization

Lifecycle stages define how optimization moves from planning to execution and then to review. First, planning establishes intent and constraints, which aligns decisions with governance rules. Next, execution applies those rules consistently across systems.

Review completes the cycle by validating outcomes against predefined signals. Because each stage has a clear objective, systems avoid skipping critical checks. As a result, optimization logic remains stable as volume increases.

In simpler terms, lifecycle stages ensure that optimization follows an ordered path. This order prevents random changes and preserves consistency over time.
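
The ordered path can be sketched as a small state machine. Each stage advances only to the next one, and only after its control signal has been recorded. The signal names (`governance_approval`, `system_log`, `measurement_passed`) are assumptions. They loosely mirror the governance approval, system logs, and measurement triggers that this section pairs with each stage.

```python
from __future__ import annotations

from enum import Enum


class Stage(Enum):
    PLANNING = "planning"
    EXECUTION = "execution"
    REVIEW = "review"
    DONE = "done"


# Each stage may only advance to the next one, so no stage can be skipped.
NEXT_STAGE = {
    Stage.PLANNING: Stage.EXECUTION,
    Stage.EXECUTION: Stage.REVIEW,
    Stage.REVIEW: Stage.DONE,
}

# Hypothetical control signal that must be recorded before leaving each stage.
EXIT_SIGNAL = {
    Stage.PLANNING: "governance_approval",
    Stage.EXECUTION: "system_log",
    Stage.REVIEW: "measurement_passed",
}


class OptimizationTask:
    def __init__(self) -> None:
        self.stage = Stage.PLANNING
        self.signals: set[str] = set()

    def record(self, signal: str) -> None:
        self.signals.add(signal)

    def advance(self) -> Stage:
        if self.stage is Stage.DONE:
            raise RuntimeError("Lifecycle already complete")
        required = EXIT_SIGNAL[self.stage]
        if required not in self.signals:
            raise RuntimeError(f"Cannot leave {self.stage.value}: missing {required}")
        self.stage = NEXT_STAGE[self.stage]
        return self.stage
```

Calling `advance()` on a new task raises an error until `governance_approval` is recorded. This is the "no skipped checks" property expressed as code.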

Change control and revision logic

Change control governs how optimization rules evolve without destabilizing existing systems. By defining approval paths and revision thresholds, organizations limit uncontrolled modifications. Therefore, revisions occur intentionally rather than reactively.

Revision logic also determines when changes propagate and when they remain localized. This separation prevents partial updates from introducing conflicts. Over time, controlled revisions maintain alignment between current needs and established structure.

Put simply, change control decides when to adjust and when to preserve. Clear revision logic keeps optimization coherent as conditions change.
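
Approval paths and propagation scope can be expressed together. In this sketch, a change to a rule that other systems depend on needs more sign-off than a local change, and only approved shared changes reach the global rule set. The `ChangeRequest` fields and the one-versus-two approval thresholds are assumptions chosen for illustration.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class ChangeRequest:
    rule_id: str
    new_value: str
    shared: bool  # True when other systems depend on this rule
    approvals: set[str] = field(default_factory=set)


# Hypothetical revision thresholds: shared rules need more sign-off than local ones.
REQUIRED_APPROVALS = {False: 1, True: 2}


def apply_change(cr: ChangeRequest, local_rules: dict[str, str], global_rules: dict[str, str]) -> str:
    """Apply an approved change to the right rule set; otherwise leave everything untouched."""
    if len(cr.approvals) < REQUIRED_APPROVALS[cr.shared]:
        return "pending"
    # Shared changes propagate to every consumer; local changes stay local.
    target = global_rules if cr.shared else local_rules
    target[cr.rule_id] = cr.new_value
    return "applied-global" if cr.shared else "applied-local"
```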

| Stage | Objective | Control Signal |
|---|---|---|
| Planning | Define intent | Governance approval |
| Execution | Apply rules | System logs |
| Review | Validate outcomes | Measurement triggers |

Together, structured stages and controlled revisions form a lifecycle that sustains optimization integrity. This approach supports long-term scalability while preserving predictable system behavior.

Content-Level Optimization and Editorial Systems

Editorial operations translate structural intent into executable content decisions. An internal content optimization system aligns authoring practices with optimization logic so that meaning remains consistent from creation through reuse, a requirement supported by editorial systems research in the ACM Digital Library. Consequently, editorial workflows must operate as controlled extensions of internal structure rather than as independent creative processes.

Internal content optimization system: the editorial application of internal optimization rules across content production.

Claim: Editorial systems must align with internal optimization logic.

Rationale: Content inconsistency originates at creation time.

Mechanism: Embedded rules guide authorship decisions.

Counterargument: Skilled editors may compensate manually.

Conclusion: System-level alignment reduces dependency on individuals.

Editorial workflow alignment

Editorial workflow alignment ensures that authorship actions follow predefined optimization rules from the outset. When workflows integrate structural requirements, content enters the system already aligned with interpretation constraints. As a result, downstream corrections become the exception rather than the norm.

Alignment also reduces variance between contributors. Because workflows encode decision paths, individual preferences exert less influence on structural outcomes. Therefore, content remains consistent even as teams expand or rotate.

In practical terms, aligned workflows guide writers toward correct decisions without constant oversight. This guidance preserves consistency while allowing efficient content production.

Terminology control and consistency

Terminology control stabilizes meaning across editorial output. By enforcing shared vocabularies and definitions, systems prevent subtle shifts that confuse automated interpretation. Consequently, content units maintain predictable relationships over time.

Consistency mechanisms often include controlled term lists, validation checks, and editorial references. These tools reduce synonym drift and discourage informal substitutions. As a result, interpretation remains stable across content updates.

Simply stated, terminology control keeps everyone using the same words for the same concepts. This uniformity allows systems to interpret content reliably at scale.
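
A controlled term list can be checked mechanically. This sketch flags non-preferred variants with a case-insensitive, whole-word regular expression and reports the preferred term for each. The vocabulary is a hypothetical example. A production check would also need to handle inflections and phrases split across line breaks.

```python
from __future__ import annotations

import re

# Hypothetical controlled vocabulary: non-preferred variant -> preferred term.
CONTROLLED_TERMS = {
    "optimisation system": "internal optimization framework",
    "optimization setup": "internal optimization framework",
    "content drift": "semantic drift",
}


def find_term_violations(text: str) -> list[tuple[str, str]]:
    """Return (variant as written, preferred term) pairs in order of appearance."""
    hits = []
    for variant, preferred in CONTROLLED_TERMS.items():
        pattern = rf"\b{re.escape(variant)}\b"
        for match in re.finditer(pattern, text, flags=re.IGNORECASE):
            hits.append((match.start(), match.group(0), preferred))
    hits.sort()
    return [(found, preferred) for _, found, preferred in hits]


print(find_term_violations("Our Optimization Setup reduces content drift."))
# [('Optimization Setup', 'internal optimization framework'), ('content drift', 'semantic drift')]
```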

Content review as optimization enforcement

Content review functions as an enforcement layer rather than a stylistic checkpoint. Reviews verify compliance with optimization rules before publication. Therefore, review processes protect structural integrity at the final stage.

Effective review focuses on rule adherence instead of subjective quality judgments. By validating structure, terminology, and alignment, reviews prevent exceptions from entering the system. This focus reduces long-term correction costs.

In essence, content review ensures that optimization rules actually apply. It confirms that editorial intent matches system requirements before content becomes active.

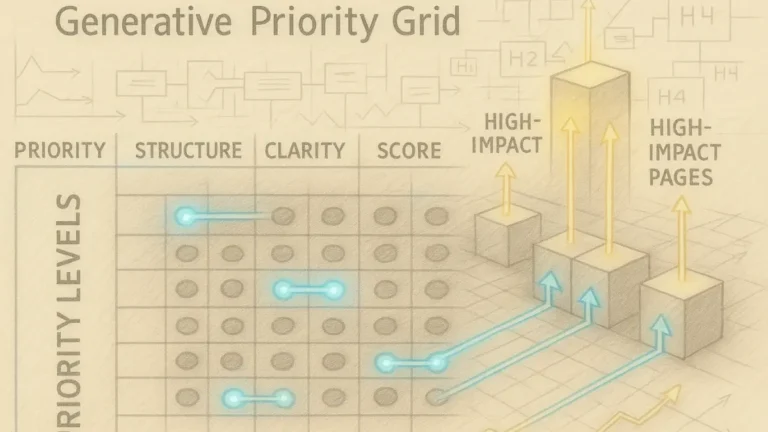

Measurement, Evaluation, and Optimization Signals

Reliable systems require evaluation methods that reflect internal structure rather than external reaction. Internal optimization measurement defines how consistency and reliability are assessed over time, which aligns with system evaluation research from MIT CSAIL. Therefore, effective measurement avoids marketing-oriented indicators and instead focuses on signals that reveal whether structural logic remains intact.

Internal optimization measurement: the evaluation of system consistency and reliability over time.

Claim: Measurement must reflect structural performance, not surface metrics.

Rationale: Superficial metrics fail to capture system stability across time and scale.

Mechanism: Signal-based evaluation tracks internal consistency through repeatable checkpoints.

Counterargument: Traffic metrics are easier to obtain and interpret quickly.

Conclusion: Structural signals provide more durable insight into system health.

Evaluation signals and thresholds

Evaluation signals represent observable indicators of whether optimization rules operate as designed. These signals originate from structural behavior, such as rule adherence, terminology stability, and workflow compliance. As a result, they reveal internal alignment rather than external response.

Thresholds translate signals into actionable boundaries. When values exceed or fall below defined limits, systems trigger review or correction. Consequently, thresholds convert abstract observations into operational controls.

In practical terms, signals describe how the system behaves, while thresholds determine when intervention becomes necessary. Together, they make evaluation systematic and repeatable.
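
Signals and thresholds separate cleanly in code. Signals are measured values, and thresholds are declared bounds that decide when review starts. The signal names and limits below are hypothetical. This sketch also treats a missing signal as a trigger, on the reasoning that an unmeasured signal cannot confirm alignment.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Threshold:
    signal: str
    minimum: float | None = None
    maximum: float | None = None


def check_thresholds(signals: dict[str, float], thresholds: list[Threshold]) -> list[str]:
    """Return one message per threshold breach; each message would open a review."""
    triggered: list[str] = []
    for t in thresholds:
        value = signals.get(t.signal)
        if value is None:
            # Design choice: an unmeasured signal cannot confirm alignment, so it triggers review.
            triggered.append(f"{t.signal}: missing")
        elif t.minimum is not None and value < t.minimum:
            triggered.append(f"{t.signal}: {value} below {t.minimum}")
        elif t.maximum is not None and value > t.maximum:
            triggered.append(f"{t.signal}: {value} above {t.maximum}")
    return triggered


thresholds = [
    Threshold("rule_adherence", minimum=0.95),
    Threshold("terminology_variants_per_page", maximum=2),
    Threshold("workflow_compliance", minimum=0.9),
]
signals = {"rule_adherence": 0.97, "terminology_variants_per_page": 4}
print(check_thresholds(signals, thresholds))
# ['terminology_variants_per_page: 4 above 2', 'workflow_compliance: missing']
```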

Detecting optimization drift

Optimization drift describes gradual deviation from defined structure without explicit change events. This drift often results from incremental edits, localized exceptions, or inconsistent enforcement. Because drift accumulates slowly, detection requires longitudinal comparison instead of isolated checks.

Detection mechanisms compare current signals against historical baselines. When variance grows beyond tolerance, systems flag instability before interpretation quality degrades. Therefore, early detection prevents minor deviations from becoming systemic failures.

Simply stated, drift detection monitors slow changes that weaken consistency. Early intervention preserves reliability as systems evolve.
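
Because drift accumulates slowly, a single-point check can miss it. This sketch compares the mean of a recent window against the mean of an early baseline window, and it flags drift when the gap exceeds an absolute tolerance. The weekly consistency scores, window sizes, and tolerance are assumptions for illustration.

```python
from __future__ import annotations

from statistics import mean


def detect_drift(series: list[float], baseline_size: int, window: int, tolerance: float) -> bool:
    """Return True when the recent window has moved away from the baseline by more than `tolerance`."""
    if baseline_size < 1 or window < 1 or baseline_size + window > len(series):
        raise ValueError("series too short for the requested baseline and window")
    baseline = mean(series[:baseline_size])  # early, trusted observations
    recent = mean(series[-window:])          # latest observations
    return abs(recent - baseline) > tolerance


# Hypothetical weekly terminology-consistency scores: each week dips only slightly.
scores = [0.98, 0.97, 0.98, 0.97, 0.96, 0.95, 0.94, 0.93]
print(detect_drift(scores, baseline_size=4, window=3, tolerance=0.02))  # True
```

No single weekly drop in this series exceeds the tolerance. The windowed comparison still flags drift because the baseline mean (0.975) and the recent mean (0.94) differ by 0.035.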

- Stability indicators

- Consistency metrics

- Alignment signals

Taken together, these elements form a measurement model that supports long-term reliability. By prioritizing structural signals, systems maintain interpretability even as content volume and complexity increase.

Example: A page with clear conceptual boundaries and stable terminology allows AI systems to segment meaning accurately, increasing the likelihood that its high-confidence sections will appear in assistant-generated summaries.

Scaling Internal Optimization for Large Systems

As content ecosystems expand, an internal optimization framework encounters increasing pressure from volume, organizational growth, and coordination demands. Internal optimization for scale describes how the framework adapts to these conditions while preserving consistency, a challenge examined in large-system language research by the Stanford Natural Language Institute. Consequently, scalability becomes a structural concern rather than an operational afterthought.

Internal optimization for scale: the adaptation of optimization systems to increasing content volume and organizational size within an internal optimization framework.

Claim: Scale amplifies optimization weaknesses.

Rationale: Complexity increases faster than oversight capacity as systems grow.

Mechanism: Layered control within an internal optimization framework reduces systemic risk by limiting cross-dependencies.

Counterargument: Small teams may delay formal scaling without immediate consequences.

Conclusion: Early scalability planning prevents later structural failure.

Coordination across teams

Coordination across teams determines whether optimization rules remain coherent as responsibility distributes. When teams operate under a shared internal optimization framework, decisions align even when execution occurs independently. As a result, systems avoid conflicting interpretations caused by parallel processes.

Effective coordination relies on shared definitions, synchronized workflows, and clear escalation paths. These mechanisms reduce friction while preserving consistency. Therefore, coordination transforms scale from a risk factor into a manageable condition.

In practical terms, coordination ensures that teams apply the same structural logic. This alignment protects optimization behavior as organizational boundaries expand.

Managing optimization complexity

Optimization complexity grows nonlinearly as systems add layers, contributors, and content types. Without explicit management, complexity obscures decision paths and weakens interpretability. Managing complexity requires deliberate constraints that limit how rules interact.

Layered controls, modular ownership, and standardized interfaces reduce cognitive and systemic load. These measures keep optimization logic comprehensible despite growth. Consequently, systems maintain clarity even under increasing demands.

Simply stated, complexity management prevents growth from overwhelming structure. Clear limits allow systems to scale without losing control.

A large publishing organization expanded rapidly by adding multiple editorial teams without unified optimization controls. Over time, terminology diverged and workflows conflicted, which led to inconsistent interpretation across platforms. Automated systems began producing variable outputs for similar content until a unified internal optimization framework introduced layered governance and shared definitions.

By addressing coordination and complexity early, internal optimization systems remain resilient. This approach enables large-scale growth without sacrificing interpretability or reliability.

Maintenance, Evolution, and Long-Term Reliability

Long-lived systems depend on sustained alignment between structure and environment. Internal optimization maintenance defines how frameworks remain effective as data sources, organizational processes, and interpretive systems evolve, a concern addressed in reliability and data lifecycle research from the Harvard Data Science Initiative. Consequently, maintenance and evolution must operate as planned system functions rather than reactive interventions.

Internal optimization maintenance: ongoing activities that preserve system reliability by sustaining alignment between defined structure and operational behavior.

Claim: Internal optimization requires continuous maintenance.

Rationale: Static systems degrade as environments, inputs, and interpretive contexts change.

Mechanism: Scheduled reviews and updates preserve alignment across structure, terminology, and workflows.

Counterargument: Stable domains may change slowly and appear to require minimal intervention.

Conclusion: Proactive maintenance prevents sudden failures and preserves long-term reliability.

Controlled evolution models

Controlled evolution models define how systems change without breaking existing structure. These models specify update cadence, approval thresholds, and rollback conditions so that evolution remains intentional. As a result, systems adapt while preserving interpretability.

Evolution control also limits unintended propagation of changes. By isolating updates and sequencing their release, organizations avoid cascading effects across dependent components. Therefore, controlled evolution maintains stability during growth.

In simpler terms, controlled evolution allows systems to change safely. It ensures that updates improve alignment instead of introducing new inconsistencies.
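
Update cadence is an organizational decision, but approval gating and rollback can be sketched in a few lines. In this hypothetical `VersionedRuleSet`, every approved change creates a new version instead of editing the current one. The previous version therefore stays available for rollback.

```python
from __future__ import annotations


class VersionedRuleSet:
    """Hypothetical rule store: each approved change appends a version instead of editing in place."""

    def __init__(self, rules: dict[str, str]) -> None:
        self._versions: list[dict[str, str]] = [dict(rules)]

    @property
    def version(self) -> int:
        return len(self._versions) - 1

    @property
    def current(self) -> dict[str, str]:
        return dict(self._versions[-1])  # a copy, so callers cannot mutate history

    def update(self, changes: dict[str, str], approved: bool) -> int:
        if not approved:
            raise PermissionError("update rejected: approval threshold not met")
        self._versions.append({**self._versions[-1], **changes})
        return self.version

    def rollback(self) -> int:
        if len(self._versions) == 1:
            raise RuntimeError("no earlier version to roll back to")
        self._versions.pop()
        return self.version
```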

Preventing semantic drift

Semantic drift occurs when meaning shifts gradually without explicit intent. Drift often emerges through incremental edits, localized terminology changes, or inconsistent enforcement. Preventing drift requires continuous comparison between current usage and defined structure.

Prevention mechanisms include periodic audits, definition validation, and cross-system consistency checks. These actions detect divergence early and enable correction before reuse quality declines. Over time, prevention preserves stable interpretation.

Put simply, preventing semantic drift keeps meaning anchored. Regular checks ensure that systems continue to speak the same language as they evolve.
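
A cross-system consistency check can start simply, by comparing each system's local definition of a term with the canonical glossary entry. This sketch normalizes case and whitespace before comparing. The system names and definitions are hypothetical. Exact-text comparison is a deliberately strict assumption that a real audit might relax.

```python
from __future__ import annotations


def normalize(text: str) -> str:
    # Ignore case and whitespace differences; anything else counts as divergence.
    return " ".join(text.lower().split())


def cross_system_audit(canonical: dict[str, str], systems: dict[str, dict[str, str]]) -> dict[str, list[str]]:
    """Map each canonical term to the systems whose local definition diverges from it."""
    divergent: dict[str, list[str]] = {}
    for term, definition in canonical.items():
        for system, glossary in sorted(systems.items()):
            local = glossary.get(term)
            # Systems that do not define the term are skipped rather than flagged.
            if local is not None and normalize(local) != normalize(definition):
                divergent.setdefault(term, []).append(system)
    return divergent


canonical = {"optimization drift": "Gradual deviation from defined optimization structure."}
systems = {
    "cms": {"optimization drift": "Gradual deviation from defined  optimization structure."},
    "search": {"optimization drift": "Any change in ranking over time."},
    "wiki": {},
}
print(cross_system_audit(canonical, systems))  # {'optimization drift': ['search']}
```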

By combining controlled evolution with drift prevention, internal optimization systems remain reliable over extended periods. This approach sustains interpretability and operational confidence as conditions change.

Checklist:

- Does the page define its core concepts with precise terminology?

- Are sections organized with stable H2–H4 boundaries?

- Does each paragraph express one clear reasoning unit?

- Are examples used to reinforce abstract concepts?

- Is ambiguity eliminated through consistent transitions and local definitions?

- Does the structure support step-by-step AI interpretation?

The framework described throughout this article establishes a unified logic that connects governance, architecture, workflows, measurement, and maintenance into a single internal system. Each component reinforces the others by constraining decisions, stabilizing terminology, and preserving predictable interpretation paths. Together, these elements transform optimization from a collection of tactics into a coherent operating model.

Internal consistency emerges when structural rules guide creation, enforcement, evaluation, and evolution without contradiction. Consistency does not rely on individual expertise or manual correction but on repeatable processes that systems can apply reliably. As a result, content remains aligned even as volume, teams, and technical environments change.

Long-term interpretability depends on sustained structure rather than short-term performance signals. By prioritizing clarity, controlled change, and continuous validation, organizations preserve meaning across time and scale. This approach ensures that systems remain understandable, reusable, and reliable as automated interpretation becomes a permanent layer of content consumption.

Interpretive Structure of Internal Optimization Pages

- Hierarchical semantic containment. A stable H2→H3→H4 hierarchy enables AI systems to segment meaning into bounded units, reducing cross-section interference during interpretation.

- Decision-chain localization. Embedded reasoning chains act as fixed interpretive anchors, allowing generative models to reconstruct intent without inferring implicit logic.

- Terminology stabilization signals. Repeated use of explicitly defined terms across sections supports consistent concept resolution during long-context processing.

- Structural role separation. Clear differentiation between conceptual, operational, and evaluative sections prevents semantic collapse under generative summarization.

- Predictable section topology. Uniform structural patterns across the page create reliable extraction paths for AI-driven indexing and reuse.

This structural configuration explains how internal optimization content remains interpretable as a coherent system, independent of surface-level phrasing or contextual compression.

FAQ: Internal Optimization Frameworks

What is an internal optimization framework?

An internal optimization framework is a structured system that governs how content is created, maintained, and interpreted across internal environments.

How does an internal optimization framework differ from isolated optimization tactics?

A framework defines shared structure and decision logic, while isolated tactics address local issues without enforcing system-wide consistency.

Why is internal optimization important for large content systems?

As content volume and organizational complexity increase, internal optimization preserves consistency and prevents interpretive drift across systems.

How do internal systems interpret optimized content?

Systems use structural hierarchy, stable terminology, and predictable workflows to resolve meaning without depending on contextual inference.

What role does structure play in internal optimization?

Structure defines semantic boundaries and decision paths, enabling reliable interpretation and reuse across automated environments.

Why is consistency more important than individual optimization effort?

Consistency ensures that systems behave predictably over time, whereas individual efforts cannot prevent long-term divergence at scale.

How are internal optimization systems evaluated?

Evaluation focuses on structural signals such as alignment, stability, and controlled change rather than surface-level performance metrics.

How do internal optimization frameworks evolve over time?

Frameworks evolve through controlled updates, scheduled reviews, and mechanisms designed to prevent semantic drift.

What skills support effective internal optimization?

Effective optimization relies on structural reasoning, terminology discipline, and the ability to design systems rather than individual pages.

Glossary: Key Terms in Internal Optimization

This glossary defines the core terminology used throughout the article to support consistent interpretation by both internal systems and AI-driven models.

Internal Optimization Framework

A structured system of governance, architecture, workflows, and evaluation mechanisms that controls how content is created, maintained, and interpreted across internal environments.

Internal Optimization Architecture

The logical arrangement of control, execution, and evaluation layers that defines how optimization rules are applied across systems.

Optimization Governance

The system of rules, standards, and accountability models that ensures optimization decisions remain consistent over time.

Optimization Workflow

A repeatable sequence of planning, execution, and review actions governing how optimization logic is applied and revised.

Structural Signal

An internal indicator derived from system behavior that reflects alignment, consistency, or deviation from defined structure.

Optimization Drift

Gradual deviation from defined optimization structure caused by incremental changes or inconsistent enforcement.

Controlled Evolution

A managed approach to system change that preserves structural integrity while allowing intentional updates.

Interpretability Boundary

A structural limit that defines where meaning resolution occurs within a system to prevent ambiguity.

Consistency Validation

The process of verifying that content and systems adhere to defined optimization rules over time.

Structural Predictability

The degree to which internal systems follow stable patterns that enable reliable interpretation and reuse.