Last Updated on January 29, 2026 by PostUpgrade

How Multimodal Search Changes Content Strategy

Search no longer relies on typed queries alone. Users actively combine images, voice input, screenshots, and short text fragments to reach information faster and with less cognitive effort. This shift changes how discovery starts and how systems interpret intent from incomplete or non-linguistic signals. As these behaviors spread, content must support interpretation across mixed inputs rather than optimize for text queries in isolation.

A multimodal search strategy becomes a structural necessity in this environment. Content now competes for visibility in discovery flows where users explore, refine, and validate meaning across modalities. This article explains how multimodal inputs reshape user behavior, how discovery patterns evolve, and how content strategy must adapt at the level of structure and semantics. The analysis focuses on behavior, discovery logic, and long-term content systems, while deliberately excluding tools and platforms to keep attention on durable strategic principles.

The Evolution of Multimodal Search Behavior

Multimodal search behavior reflects a measurable change in how people access information across digital systems. Users increasingly start discovery through images, voice input, or combined signals instead of forming precise text queries. Research on changing search habits published by the Pew Research Center shows that visual and voice-based interactions reduce friction at the entry stage of information seeking, which confirms that multimodal search represents a behavioral shift rather than a purely technological upgrade.

Claim: Multimodal inputs change how users initiate and refine searches.

Rationale: Users rely less on explicit text queries when systems accept visual and spoken signals.

Mechanism: Images and voice compress intent expression by replacing descriptive language with contextual cues.

Counterargument: Text remains dominant for expert research and complex analytical tasks.

Conclusion: Multimodal search expands entry points into discovery without eliminating text-based interaction.

Definition: AI understanding is the system’s ability to interpret meaning, behavioral signals, and structural boundaries across text, visual, and voice inputs in a unified discovery context.

Image-Based Search Behavior

Image-based search behavior emerges when users replace verbal descriptions with visual input to express intent. A photo, screenshot, or scanned object can communicate attributes that would require multiple sentences to describe in text. As a result, users shift from defining problems linguistically to presenting them visually.

Systems process image input by identifying shapes, objects, and spatial relationships, then mapping them to known categories. Visual similarity matching allows users to iterate quickly by adjusting images rather than rewriting queries. Contextual inference from objects and scenes further refines interpretation, especially when combined with location or interaction history.

In practice, image-based search favors exploration over precision. Users move faster through early discovery stages because they do not need to articulate intent clearly at the start, even though this approach introduces ambiguity that systems must resolve later.

- Low linguistic effort

- High contextual ambiguity

- Rapid refinement loops

These characteristics explain why visual inputs support exploratory behavior while delaying precise intent definition.

Voice and Visual Queries

Voice and visual queries combine spoken language with a visual reference point, such as an image on a screen or a real-world object. Users speak naturally while pointing the system toward a visual context, which reduces the need for exact phrasing and structured syntax.

This interaction pattern shifts clarification into a conversational flow. Instead of refining keywords, users refine meaning through follow-up questions and confirmations. Voice input often carries intent direction, while visuals anchor interpretation in a shared context.

As a result, systems must treat voice and visual signals as complementary rather than independent. Meaning emerges from their combination, not from either input alone.

Discovery Without Typed Queries

Discovery without typed queries occurs when users begin searching without forming a written request. Images, voice prompts, or simple interactions allow them to explore before they understand what they are looking for.

This behavior lowers the barrier to entry for information access. Users no longer need to translate uncertainty into language, which makes early discovery more intuitive and less constrained by vocabulary or expertise.

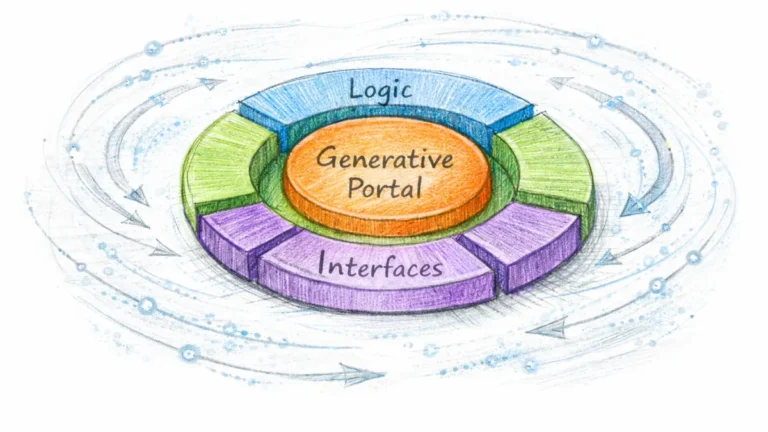

Multimodal Search Experience as a Unified System

A multimodal search experience emerges when users move through discovery without resetting context between inputs. People increasingly expect images, voice, and text to work together as part of a single interaction flow, which directly affects how a multimodal search strategy must be designed. Studies on digital interaction and user cognition from the OECD show that users associate search quality with continuity, not with the performance of individual input types.

Definition: Multimodal search experience is the perceived continuity of discovery across text, image, and voice interactions.

Claim: Users judge search quality by cross-modal consistency.

Rationale: Fragmented interactions increase cognitive effort and slow down decision-making.

Mechanism: Unified interpretation layers preserve meaning when users switch between modalities.

Counterargument: Some systems optimize each modality separately and still succeed in narrow use cases.

Conclusion: Integrated experiences improve comprehension and support a scalable multimodal search strategy.

Principle: In multimodal search environments, content remains interpretable for AI systems only when structural hierarchy, definitions, and semantic boundaries stay consistent across modalities.

Search Experience Across Modalities

Search experience across modalities depends on whether intent and context survive transitions between inputs. Text interactions usually express intent with high precision, while images and voice lower the effort required to start discovery. A multimodal search strategy must account for these differences without forcing users to restate goals at each step.

Continuity also determines how efficiently users refine results. When systems retain context across modalities, users advance through discovery with fewer corrective actions. When context breaks, users repeat steps and lose confidence in the interaction.

In practical usage, users often explore visually, clarify verbally, and confirm through text. This pattern works only when the system maintains a consistent interpretation thread, which turns multimodal interaction into a coherent experience rather than a sequence of disconnected actions.

| Dimension | Text | Image | Voice |
|---|---|---|---|
| Intent clarity | High | Medium | Low |
| Context retention | Medium | High | Medium |
| Refinement speed | Medium | High | High |

This comparison shows why a multimodal search strategy must bridge modalities instead of treating them as independent channels.

Multimodal Interface Influence

Multimodal interface influence shapes how users choose inputs during discovery. Interfaces that present cameras, microphones, and text fields together encourage users to switch inputs based on efficiency rather than habit. This behavior reinforces the need for a multimodal search strategy that aligns interface cues with interpretation logic.

When interfaces support seamless transitions, users treat the system as a continuous conversational partner. Visual prompts invite image input, voice features support clarification, and text responses provide confirmation and precision. Each modality contributes to a single meaning flow.

In everyday scenarios, a user may capture an image, ask a spoken question, and then read a short text explanation. This interaction succeeds because the interface preserves context and reinforces a unified experience, which directly strengthens the effectiveness of a multimodal search strategy.

Multimodal Discovery Patterns and User Intent

Multimodal discovery patterns describe how people explore information through repeated interaction rather than through a single decisive query. Users often begin with partial signals and refine intent as systems respond across inputs. Research from the Stanford Natural Language Institute shows that meaning formation in interactive systems progresses through incremental constraint building, which aligns with observed multimodal behavior in real-world search environments.

Definition: Multimodal discovery patterns describe how users shift between inputs while refining intent.

Claim: Intent clarity increases through multimodal interaction.

Rationale: Early signals rarely capture the full scope of user intent.

Mechanism: Each modality adds constraints that narrow interpretation without requiring full articulation.

Counterargument: Some searches begin with clearly defined goals and do not require iterative refinement.

Conclusion: Discovery processes favor layered intent resolution when users rely on multimodal inputs.

Multimodal User Intent

Multimodal user intent forms through interaction rather than declaration. Users often express uncertainty at the beginning of discovery, which leads them to rely on images, brief voice prompts, or simple gestures to initiate exploration. These early actions communicate direction rather than precision.

As systems respond, users adjust inputs to clarify meaning. Each interaction reduces ambiguity by adding context, narrowing scope, or confirming relevance. Over time, intent becomes explicit even though it did not exist in a fully articulated form at the start.

In practice, intent develops step by step. Users move from a vague signal toward a specific objective as feedback accumulates, which explains why early assumptions about intent frequently fail.

- Initial vague signal

- Context enrichment

- Clarified objective

This progression shows that intent emerges through interaction rather than appearing fully formed.

Multimodal Query Formulation

Multimodal query formulation differs from traditional query writing because it distributes intent across multiple signals. Text inputs usually express intent with high precision, while images and voice inputs favor speed and accessibility. Users choose modalities based on effort rather than accuracy.

Systems must therefore interpret queries as composite structures. A visual input may define the subject, a voice input may add constraints, and a text input may finalize intent. Precision increases as modalities accumulate rather than as a single query improves.
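As a rough illustration of the composite structure described above, the sketch below accumulates signals from several modalities into one interpretation. The `Signal` and `CompositeQuery` names, the two roles, and the sample values are all hypothetical, chosen only to make the idea concrete. This is not how any particular search system represents queries.

```python
from dataclasses import dataclass, field


@dataclass
class Signal:
    """One user input. All field names here are illustrative assumptions."""
    modality: str  # "image", "voice", or "text"
    role: str      # "subject" names the thing; "constraint" narrows it
    value: str


@dataclass
class CompositeQuery:
    """A query assembled from several signals instead of one text string."""
    signals: list[Signal] = field(default_factory=list)

    def add(self, signal: Signal) -> "CompositeQuery":
        # Each new signal is appended; earlier context is never discarded.
        self.signals.append(signal)
        return self

    def interpretation(self) -> dict:
        # The latest "subject" signal wins; constraints accumulate in order.
        subject = None
        constraints = []
        for s in self.signals:
            if s.role == "subject":
                subject = s.value
            else:
                constraints.append(s.value)
        return {"subject": subject, "constraints": constraints}


# Hypothetical interaction: a photo, then a spoken hint, then typed detail.
query = CompositeQuery()
query.add(Signal("image", "subject", "hiking boot"))
query.add(Signal("voice", "constraint", "waterproof"))
query.add(Signal("text", "constraint", "size 42"))
print(query.interpretation())
```

In this sketch, the interpretation becomes more specific with every added signal, and the user never has to rewrite an earlier input. That is the sense in which precision increases as modalities accumulate.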

In everyday use, users combine inputs to reach clarity without rewriting queries. This behavior reflects a shift from query optimization to interaction-driven refinement.

| Modality | Precision | Ambiguity |
|---|---|---|
| Text | High | Low |
| Image | Medium | High |
| Voice | Low | Medium |

This comparison illustrates why multimodal discovery patterns rely on gradual intent refinement instead of immediate precision.

Multimodal Content Consumption Models

Multimodal content consumption describes how users absorb information once discovery has begun and as engagement continues across formats. Unlike discovery, consumption unfolds over time and often involves switching between reading, listening, and viewing. Research from MIT CSAIL on human–computer interaction and attention shows that users naturally alternate sensory channels to reduce cognitive load, which directly shapes how a multimodal search strategy must support content use beyond the first interaction.

Definition: Multimodal content consumption is the absorption of information across mixed sensory formats.

Claim: Single-format content underperforms in multimodal use.

Rationale: Users regularly switch consumption modes as attention and context change.

Mechanism: Format cues such as layout, audio pacing, and visual structure guide attention and comprehension.

Counterargument: Long text supports deep reading and sustained analysis in focused environments.

Conclusion: Content must tolerate modality switching to remain usable across real consumption patterns.

Multimodal Content Discovery

Multimodal content discovery connects initial exploration with sustained consumption. Users often encounter content through one modality and continue engaging through another, which changes how information must remain coherent across formats. Discovery may start visually or verbally, but consumption frequently settles into a different mode that better fits the user’s environment or goal.

This transition places pressure on content consistency. Meaning must remain stable even as the form changes, otherwise users lose confidence in the information. Effective multimodal content discovery therefore depends on structure that supports continuity rather than on any single format performing well in isolation.

In simple terms, people rarely consume content the same way they discover it. They might notice something visually, then learn through audio, and finally confirm details by reading.

Content Design for Visual Search

Content design for visual search prioritizes elements that communicate meaning without relying on text. Users scanning images expect immediate recognition of relevance, which places emphasis on composition, focus, and contextual clarity. Visual content must therefore convey subject matter quickly and unambiguously.

Design choices also influence how users progress from visual input to deeper engagement. Images that embed context reduce the need for explanation, while cluttered visuals increase ambiguity and slow comprehension. Visual design thus acts as an entry filter that shapes the entire consumption path.

In practice, effective visual content answers the question of relevance before the user asks it, which makes further interaction more likely.

- Clear focal elements

- Context-rich visuals

- Minimal visual noise

These characteristics support interpretation when text is absent or delayed.

Example: A content block defined once with stable terminology can be reused by AI as a visual card, a voice explanation, or a text summary without altering its core meaning.
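One way to picture this reuse is a single content block that stores its definition once and is rendered into three presentations. The sketch below is a minimal illustration under stated assumptions: the `ContentBlock` class, its fields, the three rendering functions, and the `image-1.png` asset name are hypothetical and do not describe any specific system.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ContentBlock:
    """A meaning unit defined once. Field names are illustrative assumptions."""
    term: str
    definition: str
    image_ref: str  # hypothetical asset name for the visual card


def as_text_summary(block: ContentBlock) -> str:
    # Text rendering: term and definition on a single line.
    return f"{block.term}: {block.definition}"


def as_voice_explanation(block: ContentBlock) -> str:
    # Voice rendering: two short sentences that can be spoken in sequence.
    return f"{block.term}. {block.definition}"


def as_visual_card(block: ContentBlock) -> dict:
    # Visual rendering: structured fields a card layout could consume.
    return {"title": block.term, "caption": block.definition, "image": block.image_ref}


block = ContentBlock(
    term="Meaning stability",
    definition="Content keeps its core interpretation across input modalities.",
    image_ref="image-1.png",
)

print(as_text_summary(block))
print(as_voice_explanation(block))
print(as_visual_card(block))
```

Because every rendering reads from the same frozen block, the definition cannot drift between formats. Only the presentation changes.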

Content Design for Voice Search

Content design for voice search emphasizes clarity, pacing, and logical sequencing. Spoken responses unfold linearly, which means users cannot skim or jump ahead as they do with text. Each sentence must therefore deliver a complete and precise idea.

Voice consumption also depends on context awareness. Users often listen while multitasking, which limits attention span and increases the importance of concise explanations. Content that performs well in voice format avoids dense phrasing and maintains a predictable structure.

In everyday use, voice-friendly content feels direct and controlled. It delivers information in manageable units that users can absorb without visual support.

Example: A user starts with a photo of an unfamiliar object, listens to a short voice explanation, then reads a text summary. Each step refines understanding without restarting discovery.

Search Beyond Text Queries

Search beyond text queries reflects the growing limits of keyword-centric models in environments where users interact through images, voice, and contextual signals. As input surfaces expand, discovery increasingly begins without written language, which changes how a multimodal search strategy must support access and interpretation. Standards work on multimodal interaction published by the W3C confirms that non-text inputs now function as first-class signals rather than secondary features layered on top of text search.

Definition: Search beyond text queries refers to discovery initiated through non-linguistic signals such as images, voice, gestures, or contextual interaction cues.

Claim: Non-text inputs redefine what a query is.

Rationale: Users frequently express needs without translating them into language.

Mechanism: Systems infer intent by combining visual, auditory, and contextual signals into a single interpretation path.

Counterargument: Precision can decrease when language constraints are absent.

Conclusion: Accessibility increases even when ambiguity remains higher at early stages.

Visual and Voice Search

Visual and voice search enable users to communicate intent through perception and speech rather than through structured language. An image can anchor subject matter instantly, while voice adds directional or explanatory cues that guide interpretation. Together, these inputs reduce the effort required to begin discovery.

However, this convenience shifts complexity to interpretation. Systems must reconcile incomplete or ambiguous signals while maintaining relevance. As a result, visual and voice search favors early exploration and rapid iteration over immediate precision.

In everyday usage, people rely on visual and voice inputs when they cannot easily describe what they see or when typing feels inefficient. This behavior explains why non-text inputs dominate the earliest phases of discovery.

Search Using Photos and Speech

Search using photos and speech distributes intent across complementary channels. A photo typically defines the object or situation, while speech adds constraints such as purpose, comparison, or desired outcome. Meaning emerges from the combination rather than from either input alone.

This interaction model requires systems to preserve context across signals. If the system treats photos and speech independently, users must restate intent, which breaks the discovery flow. Effective interpretation depends on aligning these inputs into a single semantic frame.

In simple terms, users show the system what they mean and then explain what they want. This approach lowers the barrier to entry while still allowing intent to become precise over time.

Multimodal Search Impact on Content Strategy

The impact of multimodal search on content carries strategic consequences that extend beyond optimization tactics and into planning discipline. As discovery paths diversify across images, voice, and interaction signals, content systems must prioritize meaning stability over keyword control to remain interpretable at scale. Research from the Harvard Data Science Initiative highlights that systems performing reliably across heterogeneous inputs depend on consistent semantic structure rather than modality-specific tuning.

Definition: Multimodal search impact on content describes the structural changes required for cross-input discovery while preserving meaning consistency.

Claim: Content strategy must adapt to multimodal interpretation.

Rationale: Discovery paths diversify as users initiate and refine searches through mixed inputs.

Mechanism: Content becomes input-agnostic by anchoring meaning in stable definitions and structure rather than in a single modality.

Counterargument: Legacy SEO practices still contribute baseline visibility in text-centric environments.

Conclusion: Strategy evolves incrementally by integrating multimodal requirements without discarding existing foundations.

Adapting Content for Multimodal Discovery

Adapting content for multimodal discovery requires shifting from surface optimization to structural readiness. When users move between images, voice, and text, content must maintain interpretability regardless of entry point. This requirement elevates definitions, hierarchy, and semantic boundaries as primary design concerns.

Operationally, adaptation focuses on reducing dependency on any single input format. Content that relies on precise phrasing or visual placement alone fails when consumed through another modality. By contrast, content built around stable meaning units supports reuse and recomposition across discovery flows.

In simple terms, content should communicate the same idea whether users hear it, see it, or read it. This consistency enables systems to reuse explanations without reinterpretation.

- Stable definitions

- Input-neutral structure

- Clear semantic boundaries

These adaptations support reuse across modalities by keeping meaning independent of format.

Multimodal Search Content Strategy

Multimodal search content strategy formalizes how organizations plan, create, and maintain content under mixed-input conditions. Strategy shifts from managing keywords to governing meaning units that remain valid across modalities. This approach aligns content production with how discovery actually occurs.

Planning under this strategy emphasizes durability. Content teams define concepts once and deploy them across formats without rewriting core explanations. Over time, this reduces semantic drift and improves consistency across channels.

In practice, a multimodal search content strategy treats content as a system of reusable knowledge blocks rather than as isolated pages optimized for specific queries.

Example: A help article designed for reading is later reused as a voice response and a visual card, maintaining meaning without rewriting the core explanation.

Multimodal Search Trends and Future Direction

Multimodal search trends reveal how user input preferences change as new interaction patterns become familiar and socially normalized. Adoption does not occur instantly, even when technical capability exists, because behavior adjusts gradually through repeated exposure and reinforcement. Longitudinal usage data summarized in analytical reports from Statista shows steady growth in image- and voice-assisted interactions, with text remaining present but no longer dominant at the entry stage.

Definition: Multimodal search trends describe shifts in user input preferences over time as people adopt and normalize mixed interaction modes.

Claim: Multimodal adoption follows phased integration.

Rationale: User behavior typically lags behind available system capabilities.

Mechanism: Repeated exposure to multimodal interfaces normalizes non-text inputs through routine use.

Counterargument: Adoption varies by region, demographic group, and access conditions.

Conclusion: Current trends indicate steady integration rather than abrupt replacement of text-based search.

Multimodal Search Evolution

Multimodal search evolution reflects a gradual transition from experimental features to default interaction patterns. Early use cases often appear as optional enhancements, such as camera-based lookup or voice prompts layered onto text interfaces. Over time, these inputs become integrated into everyday workflows, which shifts user expectations.

As evolution continues, users stop perceiving modalities as separate options. Instead, they treat input choice as situational, selecting images, voice, or text based on convenience rather than preference. This normalization process explains why adoption curves tend to be incremental rather than disruptive.

In everyday behavior, people do not decide to use multimodal search explicitly. They adopt it naturally as interfaces make mixed inputs available at the moment of need.

Future of Multimodal Search

The future of multimodal search points toward deeper integration rather than radical transformation. Text, image, and voice inputs are likely to coexist as complementary signals within a single discovery environment. The emphasis shifts from adding new modalities to improving how systems interpret combined signals reliably.

Structural consistency becomes more important as systems scale. As multimodal interaction becomes routine, users expect stable interpretation regardless of how they initiate discovery. This expectation places pressure on content systems to support durable meaning across inputs.

Looking ahead, multimodal search becomes less visible as a concept and more embedded as a norm. Users interact naturally, while systems handle complexity in the background to maintain continuity and comprehension.

Checklist:

- Are multimodal concepts defined with stable, reusable terminology?

- Do H2–H4 sections preserve clear semantic boundaries?

- Does each paragraph represent one interpretable meaning unit?

- Are modality shifts supported without redefining core concepts?

- Is ambiguity reduced through local definitions and transitions?

- Can AI systems recombine sections without semantic drift?

Strategic Implications for Long-Term Content Systems

A multimodal search strategy consolidates the behavioral, structural, and experiential changes discussed earlier into a single long-term system view. As discovery increasingly spans images, voice, and text, content systems must prioritize durability and interpretability over short-term optimization. Research on knowledge reuse and interpretation stability from the Allen Institute for Artificial Intelligence demonstrates that systems designed for flexible input environments maintain relevance longer than those tied to a single interaction mode.

Definition: A multimodal search strategy is a long-term approach designed for discovery across mixed input environments while preserving consistent meaning.

Claim: Long-term visibility depends on multimodal readiness.

Rationale: Interpretation systems increasingly favor content that remains usable across varied input signals.

Mechanism: Stable meaning containers allow the same content units to be reused across modalities without reinterpretation.

Counterargument: Some niches remain text-centric and experience slower multimodal adoption.

Conclusion: Strategic integration of multimodal principles ensures durability across evolving discovery environments.

Interaction-Based Search Models

Interaction-based search models treat discovery as a sequence of actions rather than as a single query-response event. Users interact through images, voice prompts, confirmations, and refinements, which shifts emphasis from query construction to interaction flow. Content systems must therefore align with how meaning accumulates over multiple steps.

These models reward content that supports partial understanding at each interaction point. When content units are designed to stand independently, systems can surface them at different stages of interaction without loss of coherence. This property becomes critical as discovery paths diversify and shorten.

In simple terms, users do not search once and stop. They interact repeatedly, and content must support that interaction without forcing restarts.

Search Experience Across Modalities

Search experience across modalities synthesizes the entire discovery journey into a coherent perception of reliability. Users expect the same idea to remain consistent whether it appears as a spoken explanation, a visual card, or a text passage. This expectation shifts responsibility from interface design alone to content structure itself.

Long-term content systems must therefore treat experience as an outcome of semantic consistency. When meaning remains stable across modalities, systems can adapt presentation freely without confusing users. This stability becomes a competitive advantage as multimodal interaction becomes standard.

In everyday use, users remember whether a system “made sense,” not which modality delivered the answer. Consistent meaning across modalities defines that experience and determines long-term trust.

Interpretive Structure of Multimodal Content Pages

- Hierarchical signal separation. A strict H2→H3→H4 hierarchy enables AI systems to distinguish between behavioral concepts, experiential layers, and strategic implications without conflating modalities or intent levels.

- Modality-neutral semantic containers. Content blocks framed around stable meaning units allow generative systems to reinterpret the same information across text, voice, and visual contexts without semantic loss.

- Progressive intent resolution structure. Section ordering reflects a gradual refinement of intent, which supports AI models in maintaining contextual continuity across long interaction paths.

- Local definition stabilization. Immediate micro-definitions anchor abstract concepts early, reducing reliance on cross-document inference during multimodal interpretation.

- Cross-modal coherence signaling. Alignment between section scope and conceptual depth provides consistent interpretive cues when content is extracted or recomposed in generative environments.

This structural configuration clarifies how multimodal content pages are segmented, interpreted, and recomposed by AI systems, independent of presentation layer or input modality.
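The hierarchy point in the list above lends itself to a simple automated check. The sketch below assumes a page outline is available as a list of heading levels (2 for H2, 3 for H3, and so on) and reports the positions where a level is skipped, such as an H4 placed directly under an H2. The function name and input format are hypothetical.

```python
def find_skipped_levels(levels: list[int]) -> list[int]:
    """Return the positions where a heading goes more than one level
    deeper than the heading before it (for example, H2 followed by H4).

    Moving back up the hierarchy by any amount is allowed.
    """
    problems = []
    for i in range(1, len(levels)):
        if levels[i] > levels[i - 1] + 1:
            problems.append(i)
    return problems


# Hypothetical page outline: H2, H3, H4, H3, H2, then an H4 directly under the H2.
outline = [2, 3, 4, 3, 2, 4]
print(find_skipped_levels(outline))
```

A check like this covers only the nesting rule. Whether each section also holds one coherent meaning unit still needs editorial review.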

FAQ: Multimodal Search and Content Strategy

What is multimodal search?

Multimodal search is a discovery model where users interact with information systems through a combination of text, images, voice, and contextual signals within a single search flow.

How does multimodal search change content strategy?

Multimodal search shifts content strategy from keyword control toward meaning stability, requiring content to remain interpretable across different input types.

Why is multimodal discovery different from traditional search?

Traditional search assumes clear text queries, while multimodal discovery often begins with partial or non-linguistic signals that evolve through interaction.

How do AI systems interpret multimodal content?

AI systems integrate visual, auditory, and textual signals into a unified semantic interpretation, relying on structure and stable definitions to preserve meaning.

What role does structure play in multimodal content?

Clear structural hierarchy and semantic boundaries help AI systems reuse and recombine content across modalities without losing interpretive consistency.

Does text still matter in multimodal search?

Text remains essential for precision and confirmation, but it no longer serves as the sole entry point for discovery.

How does multimodal search affect long-term content systems?

Multimodal search favors content systems built around reusable meaning units that remain valid across evolving interaction patterns.

Is multimodal search a temporary trend?

Current evidence suggests gradual normalization rather than short-term experimentation, indicating long-term structural impact.

What capabilities are required to manage multimodal content?

Effective multimodal content management requires semantic precision, structural consistency, and alignment with AI interpretation logic.

Glossary: Key Terms in Multimodal Content Strategy

This glossary defines core terminology used throughout the article to ensure consistent interpretation of multimodal search, discovery behavior, and long-term content systems.

Multimodal Search

A discovery model in which users interact with information systems through a combination of text, images, voice, and contextual signals within a single interpretive flow.

Multimodal Search Behavior

The observable pattern of how users initiate, refine, and complete discovery by shifting between different input modalities.

Multimodal Discovery Patterns

Repeating interaction sequences through which intent emerges gradually as users combine visual, voice, and textual inputs.

Multimodal Content Consumption

The process of absorbing information across mixed sensory formats as users switch between reading, listening, and viewing.

Meaning Stability

The ability of content to preserve its core interpretation when presented or reused across different input modalities.

Semantic Boundary

A clear separation between conceptual units that allows AI systems to interpret, extract, and recombine content without ambiguity.

Input-Neutral Structure

A content structure designed to remain interpretable regardless of whether discovery begins with text, image, or voice input.

Interaction-Based Search Model

A discovery framework where meaning forms through a sequence of interactions rather than a single query-response event.

Structural Consistency

The practice of maintaining stable hierarchy and layout patterns to support reliable AI interpretation across sections and formats.

Multimodal Search Strategy

A long-term content approach that aligns structure and meaning with discovery across mixed input environments.