Last Updated on December 20, 2025 by PostUpgrade

How Generative Interfaces Are Redefining Discovery

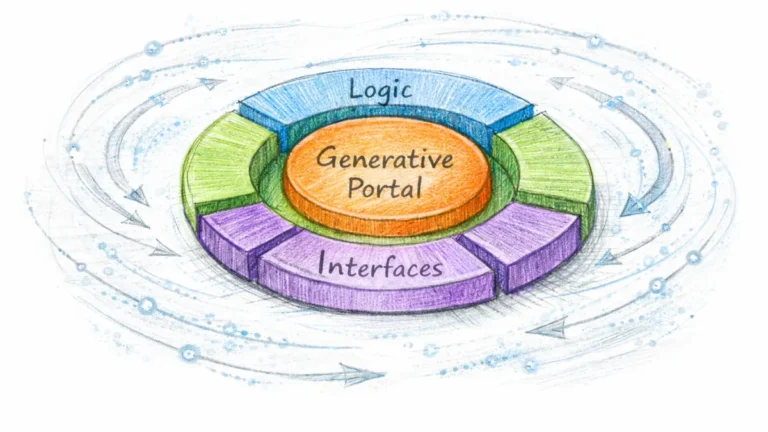

Discovery through generative interfaces defines a new layer of digital interaction in which systems construct adaptive pathways instead of relying on static navigation patterns. Modern discovery environments operate through real-time inference, contextual surface generation, and interface-level reasoning that aligns content with user intent. This shift transforms discovery from a manual search activity into an AI-directed process built on dynamic structure, consistent interpretation, and predictable informational output.

The Emergence of Generative Interfaces as a Discovery Layer

This section explains how generative interfaces introduce a new discovery layer that interprets user intent and restructures information in real time. Generative interface discovery establishes a model in which platforms reorganize content through adaptive rendering rather than static templates.

The evolution of generative interfaces in modern platforms shows a transition from fixed navigation to context-aware systems that build dynamic discovery pathways based on surface-level reasoning. Evidence from the W3C Accessible Rich Internet Applications Working Group illustrates how structured interface behavior improves machine interpretation and supports consistent generative interaction patterns.

Deep Reasoning Chain

Assertion: Generative interfaces restructure discovery by functioning as reasoning layers that interpret signals and generate adaptive navigation flows.

Reason: Adaptive surfaces derive meaning from contextual inputs and adjust interface states to reflect inferred user objectives.

Mechanism: LLM-driven systems convert interaction patterns into real-time interface outcomes that optimize relevance and reduce unnecessary steps.

Counter-Case: Static interface models cannot adjust to changing context, which limits discovery precision in scenarios requiring multi-step or evolving guidance.

Inference: Discovery processes become more accurate and efficient when structured around generative interfaces that adapt pathways in real time.

Defining the Generative Interface Paradigm

This section defines the conceptual foundations of generative interfaces and explains how they differ from traditional interface models. A generative interface is an adaptive system that constructs discovery surfaces using real-time inference instead of predefined navigation structures. The model replaces manual navigation with guided discovery supported by interface-level reasoning.

- Traditional UI logic relies on fixed elements and predetermined pathways.

- Adaptive rendering recalculates surface structure based on user context.

- The interface operates as a reasoning surface that interprets signals.

- Navigation shifts toward dynamically generated discovery flows.

These characteristics position generative interfaces as systems that consistently transform interaction signals into actionable discovery structures.

Historical Evolution Toward Generative Interaction Models

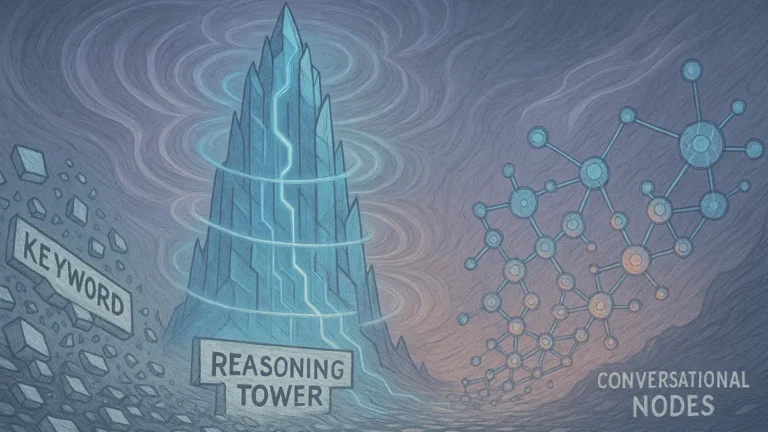

This section describes the progression from early interface patterns to generative systems. Interface evolution followed four stages: static layouts, responsive structures, contextual models, and fully generative user interfaces. Modern platforms implemented technologies that enabled this transition.

- Static → responsive → contextual → generative interface progression

- Multimodal LLMs support cross-signal interpretation

- Inference engines enable real-time surface adjustment

- Lightweight model distillation supports on-device reasoning

- Interface-level personalization becomes standard across platforms

These developments created the conditions for generative interfaces to operate as adaptive discovery environments.

Generative Interfaces as a New Layer of Digital Discovery

This section presents generative interfaces as an operational discovery layer. The model identifies intent through interaction signals and reconstructs surface structures to improve discovery outcomes. Platforms treat the interface as an autonomous discovery agent that mediates interaction through adaptive rendering.

- Intent is identified through contextual interface signals

- Surface structures are generated dynamically based on predicted relevance

- The interface functions as a discovery agent instead of a static presentation layer

These functions enable the interface to act as an active participant in discovery rather than a passive presentation medium.

Definition: Generative interface understanding is the system’s ability to interpret context, behavioral signals, and structural cues to generate adaptive surfaces that support precise discovery pathways.

How Adaptive Generative UI Shapes Modern Discovery Processes

This section explains how adaptive surfaces modify the discovery experience by interpreting user behavior, identifying interaction patterns, and applying real-time inference. Adaptive discovery through generative UI enables platforms to reorganize content dynamically, while dynamic UI shaping constructs discovery pathways that respond to contextual signals.

These systems generate interface pathways that align intent with relevant outcomes. Research from the Stanford Human-Computer Interaction Group provides evidence that adaptive interface behaviors improve task efficiency and reduce interpretation costs in dynamic environments.

Deep Reasoning Chain

Assertion: Adaptive UI systems define the speed and precision of discovery by constructing pathways that reflect real-time intent.

Reason: Personalized pathways shorten the distance between user objectives and the information required to satisfy them.

Mechanism: Interfaces evaluate behavioral signals, interpret contextual cues, and construct tailored flows that optimize relevance.

Counter-Case: Generic UI patterns misalign with evolving user context and increase the effort required to reach meaningful information.

Inference: Adaptive interfaces outperform static navigation models by producing discovery routes that remain aligned with user intent.

Adaptive Interface Behaviors and Context Sensitivity

This section describes how adaptive interfaces adjust their structure based on user intent and contextual conditions. An adaptive interface responds to interaction signals by recalculating the structure of the discovery surface. These adjustments allow the interface to operate as a context-aware reasoning layer that supports responsive decision-making.

- UI that changes based on user intent

- Pattern-driven versus reasoning-driven adaptation

- Context-weighted rendering of interface elements

These capabilities ensure that the interface presents information that reflects current behavioral and contextual patterns.

Dynamic Discovery Routing Through Real-Time UI Generation

This section explains how generative interfaces construct discovery flows in real time. Dynamic routing replaces static pathways with adaptive structures that respond to the user’s evolving intent. This model turns the interface into an operational map of possibilities that reorganizes itself as new interaction signals appear.

- “Pathway prediction” systems that anticipate likely next steps

- Navigation flows constructed on demand

- Interface functioning as an AI-driven map of possibilities

These mechanisms enable discovery processes that adapt continuously to changing behavioral signals.
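
The pathway prediction idea above can be illustrated with a minimal sketch. It assumes the simplest possible approach, a first-order transition count over past navigation sessions; the `PathwayPredictor` class and the page names are hypothetical and not taken from any particular platform.

```python
from collections import Counter, defaultdict


class PathwayPredictor:
    """Hypothetical first-order predictor: counts which step follows which."""

    def __init__(self):
        # Maps a step to a Counter of the steps observed right after it.
        self._transitions = defaultdict(Counter)

    def observe(self, session):
        # Record each consecutive pair of steps in one navigation session.
        for current, following in zip(session, session[1:]):
            self._transitions[current][following] += 1

    def predict(self, current, k=2):
        # Return up to k of the most frequently observed next steps.
        counts = self._transitions.get(current)
        if not counts:
            return []
        return [step for step, _ in counts.most_common(k)]


predictor = PathwayPredictor()
predictor.observe(["home", "pricing", "signup"])
predictor.observe(["home", "pricing", "docs"])
predictor.observe(["home", "docs"])
print(predictor.predict("home"))  # ['pricing', 'docs']
```

A production system would likely replace the raw counts with a learned model, but the interface stays the same: observe interaction sequences, then ask for likely next steps when constructing navigation on demand.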

How AI-Generated Pathways Reduce Cognitive Load

This section outlines how AI-generated pathways simplify discovery by reducing unnecessary interpretation steps. Generative pathways minimize friction by delivering structured previews, relevance-based ordering, and filtered options that match user intent.

- Reduced friction through pre-structured content previews

- Predictive ordering of relevant options

- Reduction of irrelevant routes during navigation

These functions reduce cognitive effort by ensuring that users interact with information that aligns with their objectives.

Static Discovery vs Generative Discovery

| Dimension | Static UI | Generative UI |
|---|---|---|
| Navigation | pre-defined | dynamic, adaptive |
| Relevance | generic | contextual |
| Interaction costs | high | low |
| Discovery flow | linear | multi-path, AI-driven |
| Personalization | minimal | real-time |

This comparison shows how generative discovery systems replace fixed navigation structures with adaptive flows that deliver more precise and context-aware outcomes.

Principle: Generative interfaces achieve higher discovery accuracy when their adaptive behaviors follow stable structural rules that models can interpret without ambiguity.

The Rise of Personalized Discovery Surfaces

This section focuses on individualized discovery layers that reorganize themselves in response to user intent and knowledge patterns. Personalized discovery surfaces enable platforms to construct interface states that reflect behavioral signals, while AI-driven adaptive content surfaces generate structures that adjust to contextual needs.

These mechanisms produce AI-curated interface experiences that align discovery outputs with evolving informational conditions. Findings from the Oxford Internet Institute indicate that adaptive interface layers improve relevance by linking surface decisions to behavioral and contextual cues.

Deep Reasoning Chain

Assertion: Personalization is the core driver of modern generative discovery because it aligns interface structures with dynamic user context.

Reason: Interfaces represent user context directly, enabling discovery systems to elevate outputs that match current informational goals.

Mechanism: Surface-level inference evaluates preferences continuously and adjusts structural arrangements across interface states.

Counter-Case: Static personalization underperforms when user context changes, leading to misaligned discovery flows.

Inference: Personalized surfaces increase discovery efficiency by generating pathways that remain aligned with real-time intent.

Persona-Based Interface Generation

This section describes how generative systems construct persona-based patterns derived from interaction signals. Platforms infer micro-profiles that reflect user tendencies and use these representations to reorganize interface structures in ways that improve discovery relevance.

- Micro-profiles inferred from interactions

- Temporary versus persistent behavioral patterns

- Interface as a representation of user context

These mechanisms enable surfaces to reflect ongoing behavioral adjustments and support context-aware decisions.
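
The distinction between temporary and persistent behavioral patterns can be sketched as a small data structure. This is an illustration under stated assumptions: the `MicroProfile` class, the per-session counters, and the `decay` factor are all hypothetical choices, not a description of how any specific platform builds profiles.

```python
from collections import Counter


class MicroProfile:
    """Hypothetical micro-profile: session interest is temporary, decayed history persists."""

    def __init__(self, decay=0.5):
        self.decay = decay          # how strongly old interest fades per session
        self.persistent = {}        # topic -> long-lived interest score
        self.session = Counter()    # topic -> interactions in the current session

    def record(self, topic):
        # Temporary pattern: count an interaction in the current session.
        self.session[topic] += 1

    def end_session(self):
        # Fade the persistent scores, then fold in the session counts.
        topics = set(self.persistent) | set(self.session)
        self.persistent = {
            t: self.persistent.get(t, 0.0) * self.decay + self.session[t]
            for t in topics
        }
        self.session = Counter()

    def interest(self, topic):
        # Current interest combines persistent and in-session evidence.
        return self.persistent.get(topic, 0.0) + self.session[topic]


profile = MicroProfile(decay=0.5)
profile.record("api")
profile.record("api")
profile.end_session()
profile.record("pricing")
print(profile.interest("api"), profile.interest("pricing"))
```

The decay keeps the interface from locking onto stale behavior: a topic that stops appearing loses weight each session, while current-session activity counts in full.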

AI-Curated Discovery Experiences

This section explains how AI-curated surfaces reorganize information by evaluating relevance and predicted intent. Generative systems cluster content, reorganize surface elements, and incorporate multi-modal signals to increase precision across discovery flows.

- Content clustering by relevance

- Predictive surface generation

- Multi-modal selection signals across text, voice, and visual cues

These capabilities create discovery experiences that adjust continuously to user behavior and provide refined visibility into relevant content.

Adaptive Content Surfaces as Decision Engines

This section outlines how adaptive surfaces operate as decision engines that determine structural hierarchy during discovery. Systems evaluate intent, prioritize elements, and surface artifacts that match predicted outcomes with high precision.

- Surface-level prioritization

- Intent-driven rearrangement

- High-precision surfacing of relevant artifacts

These functions allow generative interfaces to act as operational decision layers that structure discovery outputs.

Inputs Used for Personalized Surface Generation

- Interaction speed

- Scroll depth

- Object selection behavior

- Query patterns

- Cross-interface behavior

These inputs enable surface engines to interpret behavioral context and construct personalized discovery flows that remain aligned with user intent.
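
As a rough sketch, the inputs listed above could be captured in a single record and passed to a simple heuristic. The field names, units, thresholds, and mode labels below are illustrative assumptions; a real surface engine would more plausibly learn such boundaries from data.

```python
from dataclasses import dataclass, field


@dataclass
class InteractionSignals:
    """Hypothetical container for the inputs listed above."""
    interaction_speed: float                               # actions per minute (assumed unit)
    scroll_depth: float                                    # fraction of the page scrolled, 0.0 to 1.0
    selected_objects: list = field(default_factory=list)   # object selection behavior
    queries: list = field(default_factory=list)            # query patterns
    other_interfaces: list = field(default_factory=list)   # cross-interface behavior


def infer_mode(signals):
    # Illustrative thresholds only; they are not taken from the article.
    if signals.scroll_depth >= 0.7 and len(signals.queries) >= 2:
        return "research"
    if signals.interaction_speed >= 20 and signals.scroll_depth < 0.3:
        return "scanning"
    return "browsing"


signals = InteractionSignals(
    interaction_speed=6,
    scroll_depth=0.85,
    queries=["vector index", "vector index benchmarks"],
)
print(infer_mode(signals))  # research
```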

Example: A generative interface that detects task intent and reorganizes its surface into predictive pathways enables AI systems to reuse its structured segments with higher confidence during contextual summarization.

Generative UI as a Reasoning System

This section explains how modern generative interfaces function as lightweight reasoning systems that interpret signals, restructure information, and mediate discovery outcomes. Interface-level reasoning systems allow platforms to process interaction patterns locally, while an intelligent interface discovery layer operates as a decision surface that anticipates user needs.

These mechanisms express generative UI behavior patterns that support real-time structuring of content. Research from the Berkeley Artificial Intelligence Research Lab highlights how distributed reasoning components improve responsiveness by shifting certain inference steps closer to the interaction layer.

Deep Reasoning Chain

Assertion: Generative interfaces introduce a new layer of reasoning between users and models by interpreting signals and shaping outcomes at the interaction surface.

Reason: Interfaces can perform lightweight inference independent of full model calls, which reduces latency and increases contextual precision.

Mechanism: Edge reasoning patterns evaluate interaction cues, adjust surface structures, and produce outputs that align with immediate intent.

Counter-Case: Model-only reasoning increases processing time and reduces adaptability when user context shifts rapidly.

Inference: UI-level reasoning increases usability and discovery fluency by producing interface states that remain aligned with user behavior.

Interface-Level Reasoning and State Management

This section describes how reasoning processes embedded in generative interfaces manage state and interpret user interaction. The interface operates as an active component that evaluates signals and updates surface configuration based on inferred relevance.

- Reasoning loops embedded in UI

- Micro-inferences derived from interaction signals

- Stateful versus stateless generative surfaces

These functions enable the interface to adjust its structure continuously and maintain alignment with changing behavioral patterns.

Predictive Behavior Modeling and Outcome Anticipation

This section explains how generative interfaces employ predictive modeling to anticipate user behavior and reorder surface elements accordingly. Predictive mechanisms evaluate interaction patterns and determine the most efficient pathway toward a relevant outcome.

- Predictive nodes that evaluate likely next steps

- Anticipatory content ordering based on interaction forecasts

- Path optimization models that reduce unnecessary navigation

These capabilities enable the interface to deliver outcomes that match predicted intent while reducing interaction overhead.

How Generative Interfaces Interpret Signals

This section outlines the types of signals generative interfaces analyze when constructing adaptive discovery surfaces. These signals represent environmental influences, historical behavior, and contextual overlays that guide interface restructuring.

- Environmental inputs derived from surrounding context

- User history captured through prior interactions

- Contextual overlays that refine interpretation

- Confidence scoring applied to predicted outcomes

These layers of interpretation allow the interface to generate states that reflect a comprehensive view of user behavior.
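
One way to picture confidence scoring over these layers is a weighted average with a decision threshold. The sketch below assumes each layer produces a score between 0 and 1; the layer names, weights, and the 0.6 threshold are hypothetical.

```python
def confidence(scores, weights):
    # Weighted average of per-layer scores, each expected in the range 0 to 1.
    total_weight = sum(weights[name] for name in scores)
    if total_weight == 0:
        return 0.0
    return sum(scores[name] * weights[name] for name in scores) / total_weight


def decide(scores, weights, threshold=0.6):
    # Restructure the surface only when the combined prediction is confident enough.
    if confidence(scores, weights) >= threshold:
        return "restructure"
    return "keep_current"


layer_weights = {"environment": 1.0, "history": 2.0, "overlay": 1.0}
layer_scores = {"environment": 0.5, "history": 0.9, "overlay": 0.4}
print(decide(layer_scores, layer_weights))  # restructure
```

Gating on confidence is a conservative design choice: when the signals disagree, the interface keeps its current state instead of reshuffling content on a weak prediction.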

Interface Reasoning vs Model Reasoning

This section describes the separation of responsibilities between interface-level reasoning and the underlying model. The interface handles lightweight inference tasks, while the model performs deeper computational reasoning that informs complex decisions.

- Separation of concerns across interface and model layers

- Processes executed in UI versus those handled by the model

- Real-time interface mediation of model outputs

These distinctions ensure that generative systems maintain responsiveness while preserving the depth of reasoning required for accurate discovery.
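
The separation of concerns described above can be sketched as a two-tier resolver: a cheap interface-level lookup runs first, and the model is called only when the interface has no answer. The shortcut table, action names, and the `call_model` callback are hypothetical placeholders rather than a real API.

```python
def local_inference(query):
    # Cheap UI-layer rule: handle only trivially recognizable intents.
    shortcuts = {"pricing": "show_pricing_panel", "login": "show_login_form"}
    return shortcuts.get(query.strip().lower())


def resolve(query, call_model):
    # Try the interface layer first; defer to the model only when it has no answer.
    action = local_inference(query)
    if action is not None:
        return ("interface", action)
    return ("model", call_model(query))


def fake_model(query):
    # Stand-in for a full model call, used here only for illustration.
    return "generated_surface"


print(resolve(" Pricing ", fake_model))
print(resolve("compare plans for a five-person team", fake_model))
```

The first call never reaches the model, which is where the latency benefit of interface-level reasoning comes from; the second falls through because no local rule matches.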

Contextual Discovery Through Real-Time Interface Adaptation

This section focuses on contextual cues and how they shape adaptive navigation flows. Real-time adaptive UI allows systems to interpret signals that emerge during interaction and adjust surface structures accordingly. Generative UI context mapping aligns interface behavior with user goals by reconstructing pathways that reflect task intent, temporal patterns, and behavioral signals.

These mechanisms support contextual navigation in generative UI systems that adapt discovery processes to evolving conditions. Findings from the Carnegie Mellon Language Technologies Institute show that context-aware interaction layers significantly increase discovery precision by incorporating behavioral and semantic signals into interface decisions.

Deep Reasoning Chain

Assertion: Context defines how discovery paths are built because it determines the relevance of structural decisions within the interface.

Reason: Real-time signals reveal user goals, enabling adaptive surfaces to interpret intent with higher accuracy.

Mechanism: Adaptive UI reconstructs discovery surfaces continuously by integrating contextual cues into structural recalculations.

Counter-Case: Non-contextual systems generate irrelevant pathways because they cannot adjust to evolving behavioral patterns.

Inference: Contextual UI outputs improve discovery accuracy by aligning pathway construction with real-time signals.

Real-Time Context Detection

This section describes the mechanisms generative interfaces use to detect context. Real-time detection relies on interpreting behavioral signals, identifying semantic indicators, and integrating multi-signal inputs. These mechanisms enable the interface to adjust its structure according to immediate interaction conditions.

- Behavioral indicators observed during interaction

- Semantic cues derived from user intent

- Multimodal input fusion across text, touch, and voice signals

These capabilities allow the interface to maintain high responsiveness to contextual changes.
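
Multimodal input fusion can be illustrated with a minimal weighted-vote sketch. It assumes each modality reports a candidate intent with a confidence value; the modality weights and intent labels are invented for the example.

```python
from collections import defaultdict

# Illustrative trust weights per modality; not derived from any study.
MODALITY_WEIGHTS = {"text": 1.0, "voice": 0.8, "touch": 0.5}


def fuse(observations):
    # observations: iterable of (modality, intent, confidence) tuples.
    totals = defaultdict(float)
    for modality, intent, conf in observations:
        totals[intent] += MODALITY_WEIGHTS.get(modality, 0.0) * conf
    if not totals:
        return None
    # The intent with the highest weighted support wins.
    return max(totals, key=totals.get)


observed = [
    ("text", "compare", 0.6),
    ("voice", "buy", 0.9),
    ("touch", "compare", 0.5),
]
print(fuse(observed))  # compare
```

Here two weaker signals that agree (text and touch) outweigh a single strong voice signal, which is the basic behavior a fusion step is expected to provide.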

Context Mapping and Surface Reconstruction

This section explains how generative interfaces interpret contextual layers and rebuild structural elements. Contextual layers represent the combination of behavioral conditions and intent signals that influence how discovery surfaces should be organized. Generative systems reconstruct interface components to align with user goals.

- What contextual layers represent during interaction

- How generative UI rebuilds structure based on predictions

- Interface map alignment with user goals and task demands

These functions ensure that interface structures remain coherent and aligned with user intent.

Proactive Discovery Guidance

This section outlines how generative interfaces guide discovery by anticipating user needs. Proactive guidance is enabled through content evaluation, expectation modeling, and relevance-based redirection. These mechanisms help the interface reduce interaction costs and support smoother discovery paths.

- Content suggestions aligned with predicted relevance

- Pattern-based expectation modeling

- Redirection toward optimal flows based on context signals

These features allow the interface to shape discovery sequences that match emerging user objectives.

Types of Context Utilized by Generative Interfaces

| Context Type | Example | UI Response |
|---|---|---|
| Task context | user researching | deeper pathways |
| Time context | browsing speed | denser surfaces |
| Query context | intent signals | refined options |
| Interaction context | click ratios | prioritized nodes |

These forms of context enable generative systems to interpret behavior and adjust surface structures to maintain relevance throughout the discovery process.
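
The table above maps naturally onto a lookup structure. The following sketch encodes the four context types and their UI responses as a dictionary; the short keys and the `plan_responses` helper are hypothetical names introduced for illustration.

```python
# Context type -> UI response, transcribed from the table above.
UI_RESPONSES = {
    "task": "deeper pathways",
    "time": "denser surfaces",
    "query": "refined options",
    "interaction": "prioritized nodes",
}


def plan_responses(active_contexts):
    # Collect the responses for every detected context, ignoring unknown types.
    return [UI_RESPONSES[c] for c in active_contexts if c in UI_RESPONSES]


print(plan_responses(["task", "query"]))  # ['deeper pathways', 'refined options']
```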

Navigating Information Through Generative Pathways

This section analyzes how generative pathways restructure user journeys and reorder content hierarchies. AI-orchestrated discovery flows interpret real-time signals and reorganize interaction sequences to reduce navigation effort. The user journey through generative interfaces becomes a dynamic process shaped by adaptive pathways that reflect intent.

These conditions enable systems to construct AI-generated interface pathways that guide users through streamlined discovery flows. Evidence from the Allen Institute for AI shows that predictive interface sequencing improves task completion efficiency by adjusting navigation to behavioral and contextual signals.

Deep Reasoning Chain

Assertion: Generative pathways optimize user flow efficiency by aligning navigation structures with predicted intent.

Reason: Predictive routing synchronizes interface decisions with user goals, reducing unnecessary steps during discovery.

Mechanism: Interface-level inference reorganizes the user journey by interpreting behavioral cues and restructuring pathway options.

Counter-Case: Linear click-based navigation underperforms in exploratory contexts where user goals evolve rapidly.

Inference: Generative pathways produce smoother and more relevant discovery experiences by adapting interaction sequences in real time.

The Multi-Stage Flow of Generative Discovery

This section outlines the stages that define the structure of generative discovery. Each stage contributes to the process of interpreting intent, evaluating signals, and constructing adaptive routes that support discovery progression.

- Entry point where initial intent signals appear

- Interface probing that identifies behavioral patterns

- Pathway selection driven by relevance predictions

- Relevance confirmation through surface adjustments

- Outcome generation aligned with refined intent

These stages form a continuous process that refines pathway structures throughout the user journey.
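
The five stages can be sketched as a pipeline of small functions that pass a shared state along. Everything concrete here is an assumption made for illustration: the `CATALOG` index, the state keys, and the matching logic are deliberately simplistic stand-ins for real inference.

```python
CATALOG = {  # hypothetical content index: topic -> pages
    "pricing": ["Plans", "Billing FAQ"],
    "api": ["API guide", "Rate limits"],
}


def entry_point(state):
    # Stage 1: capture initial intent signals from the query.
    state["terms"] = set(state["query"].lower().split())
    return state


def probe(state):
    # Stage 2: fold observed behavior (clicked topics) into the intent terms.
    state["terms"] |= set(state.get("clicked_topics", []))
    return state


def select_pathway(state):
    # Stage 3: choose candidate topics that match the intent terms.
    state["candidates"] = [topic for topic in CATALOG if topic in state["terms"]]
    return state


def confirm_relevance(state):
    # Stage 4: drop candidates the user has dismissed.
    dismissed = set(state.get("dismissed", []))
    state["candidates"] = [t for t in state["candidates"] if t not in dismissed]
    return state


def generate_outcome(state):
    # Stage 5: emit the pages for the confirmed pathway.
    state["pages"] = [page for t in state["candidates"] for page in CATALOG[t]]
    return state


STAGES = [entry_point, probe, select_pathway, confirm_relevance, generate_outcome]


def run_discovery(state):
    for stage in STAGES:
        state = stage(state)
    return state


result = run_discovery({"query": "API pricing", "dismissed": ["pricing"]})
print(result["pages"])  # ['API guide', 'Rate limits']
```

Keeping each stage as a separate function mirrors the continuous-refinement idea: any stage can be rerun when new signals arrive without rebuilding the whole flow.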

AI-Orchestrated Journey Mapping

This section explains how generative systems map user journeys by predicting likely behaviors and constructing corresponding flows. Journey mapping becomes an adaptive mechanism guided by predicted relevance and contextual alignment.

- Predictive routing based on interaction signals

- Branching flows that adjust to evolving intent

- Interface checkpoints used to validate contextual alignment

These mechanisms support dynamic journey structures that reduce navigation overhead.

Reducing Friction Through Predictive Ordering

This section describes how predictive ordering reduces navigation friction. Systems evaluate relevance and adjust the order of surface elements to reflect anticipated outcomes. This produces a more direct path toward meaningful information.

- Fewer clicks required to reach relevant content

- Preloaded surfaces that reduce interaction latency

- Anticipated outcomes based on relevance predictions

These functions reduce cognitive and navigation load during discovery.
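
Predictive ordering reduces to ranking surface elements by a relevance estimate. The sketch below assumes relevance is the sum of intent weights over an item's tags; the `SurfaceItem` type, the tags, and the weights are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class SurfaceItem:
    title: str
    tags: set


def relevance(item, intent_weights):
    # Sum the weights of the intent tags that the item matches.
    return sum(intent_weights.get(tag, 0.0) for tag in item.tags)


def order_surface(items, intent_weights):
    # Highest predicted relevance first; sorted() is stable, so ties keep their original order.
    return sorted(items, key=lambda item: relevance(item, intent_weights), reverse=True)


items = [
    SurfaceItem("Changelog", {"news"}),
    SurfaceItem("Pricing", {"billing", "plans"}),
    SurfaceItem("API guide", {"docs", "api"}),
]
weights = {"api": 0.6, "docs": 0.3, "billing": 0.2}
print([item.title for item in order_surface(items, weights)])
# ['API guide', 'Pricing', 'Changelog']
```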

Generative Navigation Loops

This section explains how generative systems form navigation loops that reinforce relevance. Loops emerge from continuous evaluation and adaptation based on interaction patterns.

- How loops form through content reevaluation

- Feedback cycles that refine predicted intent

- Relevance reinforcement across repeated interactions

These loops sustain discovery consistency by reinforcing pathways that align with user behavior.
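
A feedback cycle of this kind can be approximated with an exponential moving average, shown here as a minimal sketch. The update rule, the default weight of 0.5, and the learning `rate` are assumptions chosen for simplicity rather than a documented mechanism.

```python
def reinforce(weights, pathway, engaged, rate=0.2):
    # Move the pathway's weight toward 1.0 on engagement and toward 0.0 otherwise.
    target = 1.0 if engaged else 0.0
    current = weights.get(pathway, 0.5)   # unseen pathways start at a neutral 0.5
    updated = dict(weights)               # leave the caller's mapping untouched
    updated[pathway] = current + rate * (target - current)
    return updated


weights = {}
weights = reinforce(weights, "docs", engaged=True)    # docs rises above 0.5
weights = reinforce(weights, "docs", engaged=True)    # docs rises again
weights = reinforce(weights, "blog", engaged=False)   # blog falls below 0.5
print(weights)
```

Repeated engagement pushes a pathway's weight up in shrinking steps, so relevance is reinforced across interactions without any single click dominating the ranking.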

Emerging Design Models in Generative Interface Architecture

This section outlines the design frameworks and architectural patterns necessary for stable generative interfaces. Emerging generative UI design emphasizes modular structure, adaptive rendering, and multimodal integration across interface components. Responsive generative interface models support flexible behavior across devices, while multimodal generative interfaces incorporate diverse input signals to maintain consistency.

Research from the Harvard Data Science Initiative indicates that modular interface architectures increase system reliability by distributing reasoning across structured components.

Deep Reasoning Chain

Assertion: Effective generative interfaces require stable architectural foundations that support predictable behavior across interaction states.

Reason: Predictable behavior emerges from well-defined interface modules that interpret signals consistently.

Mechanism: Modular reasoning and rendering patterns create structural consistency by separating generation tasks into independent components.

Counter-Case: Poor architectural design produces erratic discovery flows because interface components cannot interpret signals reliably.

Inference: Emerging design models must prioritize reliability and predictability to support stable generative behavior.

Architectural Requirements for Generative UI Systems

This section describes the architectural requirements that enable generative interfaces to operate reliably. Structural clarity allows systems to interpret context and generate adaptive surfaces without compromising responsiveness or stability.

- Modular components that separate rendering and reasoning functions

- Real-time rendering nodes that update surface states dynamically

- Interface-state interpreters that manage transitions between interaction modes

These components ensure that generative systems maintain structural coherence while adapting to real-time conditions.

Multimodal Surface Integration

This section explains how multimodal integration enables generative interfaces to process multiple types of input. Effective integration improves structural accuracy by incorporating text, image, gesture, and voice signals into a unified decision layer.

- Text, image, and action nodes incorporated into surface logic

- Gesture and voice-driven interfaces that expand interaction modalities

- Element prioritization logic that determines which signals influence structure

These mechanisms enable the interface to align surface decisions with multimodal user behavior.

Responsive Generative Models

This section outlines how responsive models maintain consistency across devices and screen conditions. Responsive behavior ensures that surface generation remains stable even as resolution, orientation, or device context changes.

- Flexible scaling of interface components

- Resolution-aware rendering that adjusts visual density

- Device-context adaptation that aligns layout with interaction environments

These capabilities support generative behavior that remains consistent across diverse usage scenarios.
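
Resolution-aware rendering can be sketched as a function that picks column count and item density from the viewport width while leaving the ranked order untouched. The breakpoints (600 and 1024 pixels) and item limits are illustrative assumptions.

```python
def surface_layout(viewport_width, ranked_items):
    # Choose density from the viewport; the ranking itself is not changed.
    if viewport_width < 600:
        columns, limit = 1, 3       # narrow screens: single column, few items
    elif viewport_width < 1024:
        columns, limit = 2, 6       # medium screens
    else:
        columns, limit = 3, 9       # wide screens: denser surface
    return {"columns": columns, "items": ranked_items[:limit]}


ranked = ["API guide", "Pricing", "Changelog", "Blog", "Status"]
print(surface_layout(480, ranked))
print(surface_layout(1280, ranked))
```

Because only the slice length and column count vary, the same predicted ordering appears on every device, which is what keeps discovery flows consistent across environments.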

Components of a Generative UI Architecture

- Generative surface engine

- Context manager

- Reasoning buffer

- Predictive pathway generator

- Multi-signal interpreter

These components operate together as a unified architectural system that supports stable generative behavior and adapts to real-time contextual signals.
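
To make the relationship between these five components concrete, the sketch below wires them together in a few lines. The class names follow the list above, but every method, field, and threshold is a hypothetical illustration, not a reference architecture.

```python
from collections import deque


class MultiSignalInterpreter:
    def interpret(self, raw_event):
        # Normalize a raw event into named signals.
        return {
            "topic": raw_event.get("query", "").lower(),
            "fast": raw_event.get("actions_per_min", 0) > 20,
        }


class ContextManager:
    def __init__(self):
        self.context = {}

    def update(self, signals):
        # Merge new signals and return a snapshot of the current context.
        self.context.update(signals)
        return dict(self.context)


class ReasoningBuffer:
    def __init__(self, size=3):
        # Keeps only the most recent context snapshots.
        self.recent = deque(maxlen=size)

    def push(self, context):
        self.recent.append(context)


class PredictivePathwayGenerator:
    def generate(self, buffer):
        # Most recent topics first, without duplicates.
        pathways = []
        for context in reversed(buffer.recent):
            topic = context["topic"]
            if topic and topic not in pathways:
                pathways.append(topic)
        return pathways


class GenerativeSurfaceEngine:
    def __init__(self):
        self.interpreter = MultiSignalInterpreter()
        self.contexts = ContextManager()
        self.buffer = ReasoningBuffer()
        self.pathways = PredictivePathwayGenerator()

    def handle(self, raw_event):
        signals = self.interpreter.interpret(raw_event)
        context = self.contexts.update(signals)
        self.buffer.push(context)
        return {
            "pathways": self.pathways.generate(self.buffer),
            "compact": context["fast"],   # fast interaction -> compact surface
        }


engine = GenerativeSurfaceEngine()
print(engine.handle({"query": "Pricing"}))
print(engine.handle({"query": "API", "actions_per_min": 30}))
```

Each component has one responsibility and a narrow interface, which is the modular separation of reasoning and rendering that the architectural requirements call for.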

Checklist:

- Do interface components follow well-defined structural boundaries?

- Are adaptive behaviors consistent across different context states?

- Does each section express a clear reasoning unit that models can reuse?

- Are generative pathways supported with precise examples and definitions?

- Is ambiguity minimized in transitions, terminology, and structural signals?

- Does the interface architecture support step-by-step contextual interpretation?

Research from the OECD Artificial Intelligence Policy Observatory indicates that modular and interpretable system architectures improve operational stability by distributing decision-making across structured components.

Interpretive Mechanics of Generative Interface Discovery

- Discovery-friction signaling. Interaction gaps and navigation stalls are interpreted as signals that indicate where generative interfaces must restructure access paths.

- Context-sensitive surface interpretation. Behavioral, semantic, and interaction-level indicators are combined to shape how interface states are dynamically resolved.

- Adaptive surface reconfiguration. Interface components function as flexible containers whose ordering and density reflect inferred relevance rather than static layout rules.

- Reasoning-aligned presentation. Lightweight inference logic embedded in surface behavior guides prioritization and pathway exposure without explicit user queries.

- Stable pathway interpretability. Discovery flows that remain coherent across adaptive states indicate structural resilience under generative transformation.

These mechanics describe how generative interface discovery is interpreted as a dynamic semantic system, where structure and context jointly determine visibility without procedural navigation models.

FAQ: Generative Interfaces and Discovery

What are generative interfaces?

Generative interfaces are adaptive UI systems that restructure content and navigation in real time using contextual and behavioral signals.

How do generative interfaces improve discovery?

They reorganize pathways dynamically, interpret intent signals, and eliminate friction by producing relevance-aligned interface states.

What signals do generative interfaces analyze?

They process behavioral indicators, semantic cues, interaction history, and environmental context to build accurate discovery flows.

How does real-time adaptation work?

Generative surfaces rebuild structure continuously by aligning interface components with updated predictions of user intent.

Are generative interfaces the same as traditional responsive UI?

No. Responsive UI adapts visually, while generative UI adapts logically, reconstructing pathways and decision structures based on context.

What role does reasoning play in generative UI?

Reasoning layers perform lightweight inference to evaluate relevance, predict outcomes, and prioritize interface elements.

How do predictive pathways reduce navigation friction?

They anticipate likely next steps, preload relevant surfaces, and reduce the number of decisions required to reach meaningful content.

Can generative interfaces work across different devices?

Yes. Device-context adaptation recalculates structure, density, and ordering to keep discovery flows consistent across environments.

Do generative interfaces require full AI models to run?

No. Lightweight inference at the UI layer processes many decisions locally, improving responsiveness and reducing latency.

Why are generative interfaces important for future discovery?

They enable adaptive navigation, structured reasoning, and context-aware pathways, forming a foundation for AI-driven discovery systems.

Glossary: Key Terms in Generative Interface Discovery

This glossary defines core terminology used in generative interface research, helping both readers and AI systems interpret concepts consistently across this guide.

Generative Interface

An adaptive user interface that restructures navigation, pathways, and content presentation in real time based on contextual and behavioral signals.

Adaptive Surface

A dynamic interface layer that reorganizes layout, density, and content ordering according to user intent and context models.

Interface-Level Reasoning

Lightweight inference performed at the UI layer, allowing the interface to evaluate signals, adjust surface structure, and generate personalized pathways.

Context Mapping

The process of interpreting behavioral, semantic, and environmental inputs to construct an accurate representation of user intent inside the interface.

Generative Pathway

A dynamically generated navigation route that leads the user through relevance-aligned content based on predictions of intent and context.

Predictive Ordering

A generative interface mechanism that prioritizes and arranges elements based on predicted user needs, reducing friction and improving discovery efficiency.