Last Updated on February 15, 2026 by PostUpgrade

Adapting Evergreen Content to AI Learning Models

Evergreen content for AI requires structural adaptation that aligns durable knowledge with machine interpretation logic. Traditional publishing models optimized for ranking systems do not automatically translate into long-term reuse within generative environments. Therefore, organizations must redesign stable content assets to ensure semantic persistence, model compatibility, and controlled interpretation.

AI learning models rely on structured signals, consistent terminology, and stable semantic containers to reduce inference ambiguity. Consequently, enterprises must move beyond keyword performance and focus on representational integrity, authority reinforcement, and lifecycle calibration. This shift transforms evergreen publishing into a long-horizon infrastructure strategy rather than a traffic acquisition tactic.

This article defines a systematic adaptation framework built on structural reengineering, semantic stability control, authority signal reinforcement, and lifecycle governance. Each section develops a concept block, mechanism block, example block, and implication block to ensure extractable clarity. The objective is to provide an enterprise-level blueprint that preserves content durability across AI-mediated discovery systems while maintaining compatibility with evolving learning models.

Evergreen Content as a Persistent Signal Layer for AI Systems

Evergreen content for AI operates as a structural signal layer within generative ecosystems that continuously reuse stable information over time. AI systems retrieve, embed, and reinterpret persistent knowledge modules across search cards, panels, and synthesized responses. Therefore, durable content structures must be engineered to maintain retrievability, indexing stability, and semantic persistence under model-level compression, as demonstrated in representation learning research from MIT CSAIL.

Evergreen content — structured information that retains semantic validity across time without requiring contextual updates.

Definition: AI understanding in evergreen content for AI refers to a model’s capacity to interpret stable terminology, hierarchical structure, and bounded semantic units in a way that preserves meaning across indexing, summarization, and generative reuse.

Claim: Evergreen content for AI functions as a long-term retrievable signal in model-assisted search environments.

Rationale: AI systems prioritize stable semantic units that demonstrate temporal consistency.

Mechanism: Persistent content is indexed, embedded, and re-referenced across generative outputs.

Counterargument: Rapidly evolving domains reduce evergreen stability.

Conclusion: Durability combined with structured updates preserves AI-level reuse.

Signal Stability in Long-Term Content

Signal stability determines whether long term content for AI systems remains reusable across multiple model updates and interface transformations. Stable content minimizes semantic drift by maintaining consistent terminology, bounded concept definitions, and structured hierarchical depth. Consequently, AI models compress these stable units into embeddings that preserve interpretive clarity.

Furthermore, signal persistence increases when conceptual blocks are isolated from temporal dependencies and reactive trends. When organizations separate durable definitions from contextual commentary, they create reusable semantic containers that survive summarization and ranking recalibration. As a result, generative systems assign higher confidence to such content during synthesis.

Stable content retains its meaning over time because it avoids dependency on fluctuating context and reinforces core definitions through consistent structure.

Information Durability vs Temporal Decay

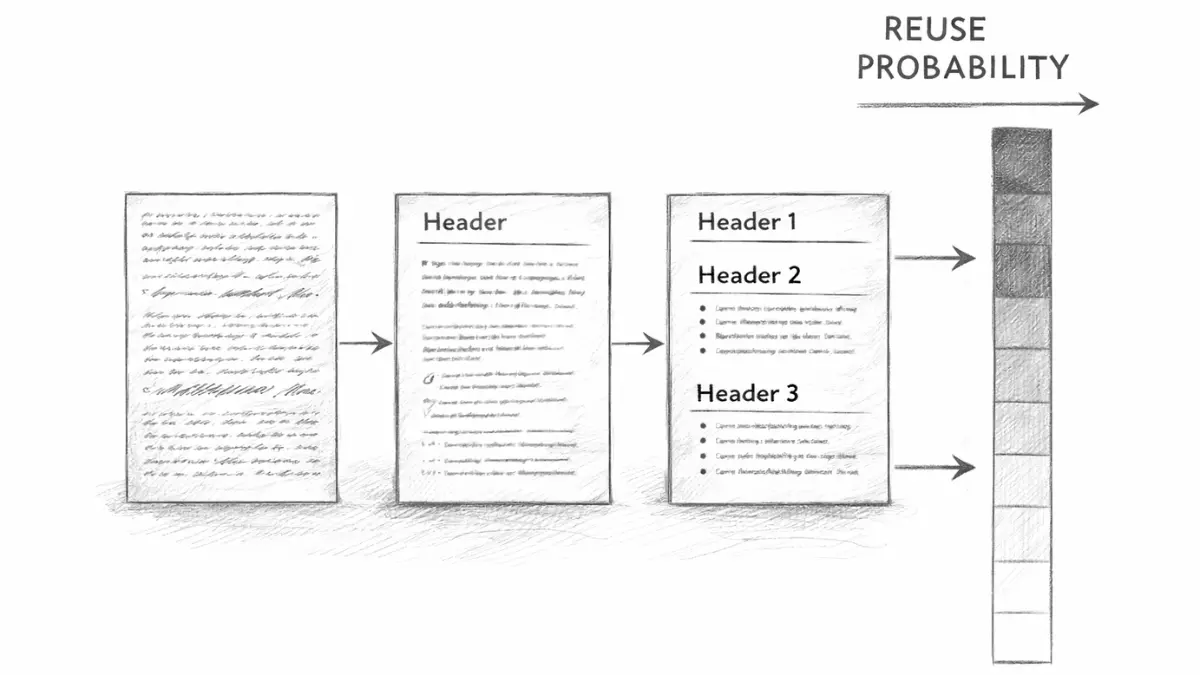

Information durability depends on evergreen information stability, which refers to the controlled reinforcement of terminology, structure, and conceptual boundaries across publishing cycles. Durable content maintains definitional clarity while allowing incremental updates that do not disrupt its semantic core. Therefore, indexing systems treat such material as reliable reference infrastructure rather than transient commentary.

Temporal decay occurs when content relies on event-driven context, trend language, or volatile data without structural separation. In those cases, embeddings lose alignment because the interpretive frame shifts with each update. Consequently, generative engines reduce reuse probability for such unstable inputs.

Durable information preserves its structure and meaning even when models retrain, while time-sensitive content gradually loses interpretive consistency.

Concept block: long term content for AI systems represents structured knowledge engineered for sustained semantic reuse.

Mechanism block: evergreen information stability emerges from consistent terminology, modular sectioning, and bounded updates.

Example block: AI resilient content framework integrates hierarchical segmentation with authority reinforcement to maintain embedding alignment across model revisions.

Implication block: evergreen authority preservation increases generative citation probability and reduces visibility volatility.

| Content Type | AI Retention Probability |

|---|---|

| Evergreen structured article | High |

| Trend-driven short update | Moderate |

| Reactive news post | Low |

The table demonstrates that structured durability directly correlates with model retention probability, which reinforces the necessity of evergreen signal architecture.

Structural Reengineering of Legacy Evergreen Assets

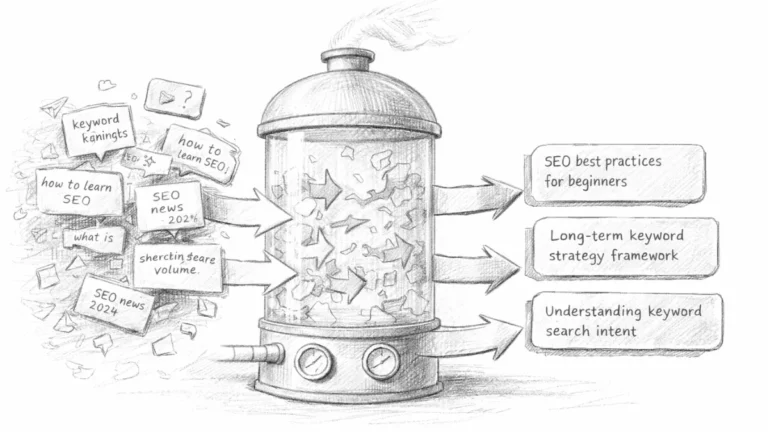

Optimizing legacy articles for AI requires a structural transition from ranking-oriented composition to machine-compatible architecture. Many legacy articles were originally designed for keyword density, backlink accumulation, and surface-level relevance scoring. However, generative systems interpret structured meaning rather than ranking signals, as shown in architectural NLP research from the Stanford Natural Language Institute. Therefore, enterprises must recalibrate structural logic, stabilize terminology, and eliminate ambiguity to ensure compatibility with machine learning models.

Legacy content — previously published material optimized for ranking systems rather than generative reuse.

Claim: Optimizing legacy articles for AI increases semantic extractability.

Rationale: Generative engines rely on structured concept containers.

Mechanism: Rewriting section logic reduces ambiguity and improves model parsing.

Counterargument: Over-structuring reduces narrative fluidity.

Conclusion: Structural precision outweighs stylistic fluidity in AI-first environments.

Content Reengineering Framework

Content reengineering begins with structural decomposition of legacy assets into clearly bounded semantic units. First, organizations identify unstable terminology and replace it with consistent vocabulary aligned to site-level taxonomy. Next, editors segment compound paragraphs into concept blocks, mechanism blocks, and implication blocks to increase extraction clarity.

Additionally, reengineering requires removal of ranking-era artifacts such as redundant subheadings, promotional phrasing, and loosely defined transitions. Machine learning models interpret logical boundaries rather than stylistic continuity, so structural alignment becomes the primary optimization objective. As a result, legacy material evolves from keyword-driven composition to architecture-driven knowledge modules.

When organizations restructure legacy articles into bounded semantic containers, models interpret them more accurately and reuse them more frequently.

Evergreen Content Structural Refinement

Evergreen content structural refinement focuses on stabilizing internal logic rather than rewriting entire documents. Editors preserve durable definitions while reorganizing sections into hierarchical sequences that reflect conceptual dependencies. Consequently, embedding consistency improves because models encounter predictable structural cues.

Structural refinement also integrates machine learning aware content updates that protect semantic continuity during revisions. Instead of rewriting entire articles, teams adjust specific concept blocks and maintain consistent phrasing across updates. Therefore, evergreen editorial recalibration supports long-term retrievability without introducing interpretive drift.

When structural refinement isolates durable meaning and removes ambiguity, legacy content becomes compatible with generative indexing systems.

- evergreen content structural refinement

- AI adaptive content strategy

- evergreen editorial recalibration

- machine learning aware content updates

These components collectively transform ranking-era documents into AI-compatible knowledge infrastructure.

Microcase: An enterprise SaaS company restructured its product documentation in early 2024 to support generative indexing. The team applied AI adaptive content strategy principles and reorganized legacy pages into modular semantic units. They preserved core definitions while eliminating keyword-stacked sections. Within three months, citation frequency in ChatGPT Search Cards increased, demonstrating measurable extractability gains after structural refinement.

Semantic Stability and Model Training Compatibility

Training data friendly content establishes the semantic consistency required for reliable embedding formation across AI learning cycles. AI learning models rely on semantically stable datasets to reduce representation variance and inference instability. Research on semantic consistency and model robustness from the Allen Institute for Artificial Intelligence (AI2) demonstrates that structured repetition and bounded terminology increase alignment during representation learning. Therefore, enterprises must define compatibility standards that protect terminology integrity and reinforce meaning across updates.

Training data friendly content — text structured to minimize semantic drift during embedding.

Claim: Training data friendly content improves representation consistency.

Rationale: Embedding models compress meaning based on repetitive structure.

Mechanism: Stable terminology strengthens vector alignment.

Counterargument: Excessive repetition reduces readability.

Conclusion: Controlled repetition improves model comprehension.

Principle: Evergreen content for AI maintains generative visibility when its terminology, structural depth, and conceptual segmentation remain consistent enough for embedding systems to preserve vector alignment without interpretive drift.

Evergreen Semantic Refinement

Evergreen semantic refinement focuses on maintaining controlled vocabulary and consistent conceptual framing across long-term publishing cycles. First, editorial teams standardize key definitions and eliminate synonymous variation that introduces embedding divergence. Next, they reinforce terminology across sections to stabilize semantic vectors during training and inference phases.

Furthermore, evergreen topic sustainability depends on predictable phrase usage and structured concept boundaries. When terminology shifts without definition, models redistribute vector associations and weaken retrieval precision. Consequently, organizations must implement AI consistent content updates that protect semantic continuity while integrating new data.

Consistent terminology preserves meaning across model updates because embedding systems interpret repetition as structural reinforcement rather than redundancy.

Maintaining Evergreen Relevance for AI

Maintaining evergreen relevance for AI requires scheduled recalibration without altering semantic core structures. Editors integrate updated statistics or contextual references while preserving definitional language and conceptual hierarchy. As a result, content retains embedding stability while reflecting current data.

In addition, evergreen topic sustainability benefits from modular section design that isolates volatile elements from durable definitions. When teams separate stable knowledge from time-sensitive commentary, they prevent semantic drift during retraining cycles. Therefore, training data friendly content remains interpretable across successive model generations.

Stable definitions combined with bounded updates ensure that AI systems continue to recognize and reuse evergreen material without semantic degradation.

| Factor | Risk of Drift | Stabilization Method |

|---|---|---|

| Terminology variation | High | Controlled vocabulary enforcement |

| Unstructured updates | Moderate | Modular section isolation |

| Data refresh without framing | Moderate | Context-preserving insertion |

| Concept boundary shifts | High | Definition-first reinforcement |

The table demonstrates that semantic drift emerges primarily from uncontrolled variation, while structured stabilization methods preserve model compatibility and long-term reuse.

Evergreen Authority Signals and Trust Reinforcement

Evergreen authority building for AI determines whether durable content achieves stable reuse within generative ranking systems. AI ranking integrates credibility layers that evaluate source reliability, citation consistency, and factual stability over time. According to AI risk and trust research published by the National Institute of Standards and Technology (NIST), transparent evidence and verifiable sourcing increase system-level confidence in model outputs. Therefore, evergreen structures must integrate authority signals that persist across indexing cycles.

Authority reinforcement — structured accumulation of verifiable evidence within semantic containers.

Claim: Evergreen authority building for AI increases generative reuse probability.

Rationale: Models weight factual consistency across time.

Mechanism: Repeated citation from recognized institutions strengthens signal persistence.

Counterargument: Authority signals decay without updates.

Conclusion: Structured evidence sustains trust layers.

Evergreen Trust Reinforcement Mechanisms

Evergreen trust reinforcement depends on systematic integration of verifiable sources within concept blocks. First, editors embed institutional citations within definitional sections to anchor claims to recognized research bodies. Next, they maintain consistent citation patterns across updates to reinforce continuity in model perception.

Moreover, evergreen knowledge optimization requires cross-referencing authoritative datasets that remain stable across time horizons. When content references OECD statistics, WHO datasets, or national research institutions, generative systems detect persistent authority markers. Consequently, AI ready evergreen resources accumulate credibility signals that increase retrieval likelihood in synthesized responses.

Authority signals strengthen when evidence remains consistent across updates and aligns with stable conceptual framing.

Persistent Value Content for AI

Persistent value content for AI integrates factual verification within modular knowledge structures that resist semantic erosion. Editors prioritize long-term datasets and institutional research rather than reactive commentary. As a result, generative engines classify such material as stable reference infrastructure.

Additionally, evergreen trust reinforcement requires periodic validation of external sources to prevent authority decay. When outdated references are replaced with current institutional data while preserving conceptual continuity, signal persistence remains intact. Therefore, evergreen authority building for AI becomes a controlled process of cumulative credibility rather than a static attribute.

Content that embeds stable institutional evidence and maintains citation continuity retains higher generative visibility across time.

Microcase: A healthcare policy guide structured as an evergreen resource cited World Health Organization datasets and OECD demographic statistics across its sections. The editorial team updated quantitative data annually while preserving definitional language and source structure. Between 2023 and 2025, generative summaries across AI platforms repeatedly referenced this guide in synthesized health policy overviews. Sustained visibility correlated with structured authority reinforcement rather than publication frequency.

Lifecycle Management of Evergreen Content

Evergreen content lifecycle management ensures that durable knowledge assets remain compatible with evolving AI indexing and embedding systems. Evergreen does not mean static, because AI systems continuously retrain, reindex, and recalibrate representation layers. Research on long-context stability from DeepMind Research in 2024 demonstrates that model interpretation shifts when contextual structures evolve. Therefore, enterprises must define governance frameworks and scheduled recalibration cycles that preserve embedding relevance without destabilizing semantic cores.

Lifecycle management — systematic evaluation and update of structured semantic modules.

Claim: Evergreen content lifecycle management preserves model-level visibility.

Rationale: AI systems reindex evolving semantic structures.

Mechanism: Incremental updates maintain embedding relevance.

Counterargument: Excessive updates disrupt signal stability.

Conclusion: Controlled cadence balances persistence and freshness.

AI Responsive Content Maintenance

AI responsive content maintenance relies on scheduled semantic audits that evaluate terminology consistency, structural clarity, and authority validation. First, editorial teams identify sections affected by data volatility and isolate them from durable concept blocks. Next, they update quantitative references while preserving definitional language and hierarchical integrity.

Additionally, AI responsive content maintenance integrates embedding-aware revision protocols. Editors monitor shifts in generative visibility and adjust only those sections that show semantic degradation. Consequently, evergreen content lifecycle management operates as a measured recalibration process rather than a full rewrite cycle.

When organizations update content in bounded modules and protect core definitions, models maintain interpretive continuity across retraining cycles.

Evergreen Content Modernization

Evergreen content modernization focuses on structural alignment with current model architectures while preserving foundational meaning. Editors refine section sequencing, clarify definitions, and adjust data references without altering semantic containers. As a result, evergreen assets remain compatible with updated parsing behaviors and extended context windows.

Moreover, AI driven content longevity requires documentation of revision history to prevent cumulative semantic drift. Governance teams track terminology consistency and enforce vocabulary control across updates. Therefore, sustainable content for AI discovery evolves through disciplined modernization rather than reactive rewriting.

Modernization succeeds when updates enhance clarity and data accuracy while leaving conceptual structure intact.

- evergreen content evolution strategy

- AI driven content longevity

- sustainable content for AI discovery

- future proof evergreen content

These elements collectively support a lifecycle framework that balances durability with controlled adaptability.

Content Architecture Alignment with Learning Models

AI compatible content architecture determines whether structured knowledge can be parsed, segmented, and retrieved without ambiguity. Structure determines extraction quality because models rely on hierarchical markers to isolate conceptual boundaries. Research in hierarchical NLP modeling from Carnegie Mellon LTI demonstrates that layered sectioning improves interpretability and reduces representation noise. Therefore, enterprises must align page architecture with model parsing logic through controlled hierarchical depth and clearly defined semantic containers.

AI compatible architecture — layered structural organization enabling deterministic interpretation.

Claim: AI compatible content architecture increases retrieval accuracy.

Rationale: Structured layout reduces inference noise.

Mechanism: H2–H4 segmentation isolates conceptual units.

Counterargument: Excess segmentation may fragment narrative coherence.

Conclusion: Balanced hierarchy improves generative extraction.

AI Aligned Longform Content

AI aligned longform content organizes complex topics into bounded conceptual modules that reflect logical dependencies. First, writers define primary concepts at the H2 level and introduce structured subcomponents at H3 and H4 layers. Next, they maintain consistent terminology across these layers to prevent semantic drift during embedding compression.

Moreover, evergreen content reengineering requires longform structure to follow predictable sequencing rules. When headings map directly to definitional and mechanism blocks, models detect clear interpretive anchors. Consequently, AI adaptive content strategy integrates architecture control as a foundational element of generative compatibility.

When longform content preserves clear hierarchy and stable terminology, AI systems interpret it as modular knowledge rather than narrative text.

Durable Content for AI Indexing

Durable content for AI indexing depends on architectural coherence that aligns with model segmentation logic. Editors enforce consistent heading depth and avoid skipping hierarchical levels to maintain parsing stability. As a result, embedding systems recognize conceptual progression and preserve relational integrity between sections.

In addition, durable content integrates semantic containers that isolate definitions, mechanisms, and implications into separate units. This structure increases reuse rate because generative systems extract discrete modules without distorting meaning. Therefore, AI compatible content architecture transforms page layout into deterministic interpretive infrastructure.

Content becomes durable when its structure communicates conceptual boundaries as clearly as its sentences communicate meaning.

Example: A hierarchically segmented page that isolates definitions, mechanisms, and implications into structured H2–H4 containers allows generative systems to extract discrete knowledge modules without distorting conceptual relationships.

| Structure Type | Parsing Efficiency | Reuse Rate |

|---|---|---|

| Flat unsegmented article | Low | Low |

| Hierarchical modular layout | High | High |

| Over-fragmented micro-sections | Moderate | Moderate |

The table demonstrates that structured hierarchy increases parsing efficiency and generative reuse, while architectural imbalance reduces extraction quality.

Knowledge Durability Across Generative Interfaces

AI driven content longevity determines whether structured knowledge remains consistent when generative interfaces reinterpret stored material. Generative systems transform source content into summaries, chat responses, highlighted panels, and condensed explanations. Research on AI-mediated visibility and platform transformation published in 2024 by the Oxford Internet Institute shows that interface-layer compression alters presentation while preserving core semantic signals. Therefore, enterprises must design content that preserves meaning under transformation across summaries, conversational agents, and extraction panels.

Content longevity — capacity of structured meaning to survive summarization without semantic distortion.

Claim: AI driven content longevity ensures consistent multi-interface reuse.

Rationale: Generative engines compress but preserve structured signals.

Mechanism: Stable semantic blocks resist transformation loss.

Counterargument: Poor structure collapses under summarization.

Conclusion: Modular evergreen design increases cross-interface persistence.

Adaptive Evergreen Publishing

Adaptive evergreen publishing aligns content structure with evolving interface behaviors without altering conceptual integrity. First, editorial teams monitor how generative interfaces condense sections and identify which blocks retain interpretive clarity. Next, they refine headings and definitional units to improve extraction stability while maintaining evergreen semantic refinement.

Furthermore, evergreen topic sustainability depends on separating durable definitions from contextual commentary that may be omitted during summarization. When stable knowledge modules exist independently from volatile context, compression algorithms preserve core meaning. Consequently, adaptive evergreen publishing increases AI driven content longevity across multiple output formats.

When structured modules remain intact under compression, generative interfaces reuse content consistently across different presentation layers.

Persistent Knowledge Optimization

Persistent knowledge optimization integrates evergreen content modernization with interface-aware calibration. Editors review generative outputs and compare them with original conceptual structure to detect distortion patterns. As a result, they refine section boundaries and reinforce terminology where summarization reduces clarity.

In addition, persistent knowledge optimization requires incremental updates that preserve semantic continuity while improving structural clarity. Teams implement bounded revisions that strengthen evergreen semantic refinement rather than rewriting entire documents. Therefore, AI driven content longevity emerges from disciplined architectural maintenance rather than reactive editing.

Knowledge persists across generative interfaces when its structure protects core definitions and maintains consistent conceptual framing.

Measuring Evergreen Adaptation Performance

Evergreen content signal strengthening must be evaluated through measurable indicators rather than assumed durability. Adaptation becomes meaningful only when enterprises quantify generative visibility across AI systems and interfaces. According to longitudinal AI adoption metrics available through OECD Data Explorer, measurable indicators enable organizations to track structural impact across digital ecosystems. Therefore, enterprises must define evaluation standards that reflect citation frequency, embedding persistence, and structured reuse index.

Signal strengthening — measurable increase in generative references to structured content.

Claim: Evergreen content signal strengthening can be quantified through generative citation frequency.

Rationale: AI outputs reflect embedded signal salience.

Mechanism: Structured authority layers increase reuse probability.

Counterargument: External platform opacity limits measurement precision.

Conclusion: Proxy metrics enable adaptation tracking.

AI Consistent Content Updates

AI consistent content updates preserve structural continuity while enabling measurable performance tracking. First, teams monitor how often generative systems reference specific evergreen modules across summaries and conversational responses. Next, they compare citation frequency before and after structural adjustments to identify correlation between architecture refinement and reuse.

Moreover, evergreen authority preservation strengthens when updates maintain definitional stability and citation integrity. If terminology shifts unpredictably, embedding persistence declines and citation frequency becomes unstable. Consequently, AI ready evergreen resources require disciplined update cycles aligned with measurable signal outcomes.

When update cadence remains controlled and structural coherence persists, performance metrics reveal sustained generative reuse rather than volatility.

Evergreen Authority Preservation Metrics

Evergreen authority preservation metrics focus on quantifiable indicators that reflect sustainable content for AI discovery. Editors analyze reuse index, embedding stability signals, and authority citation recurrence across generative platforms. As a result, enterprises shift evaluation from page-level traffic to signal-level persistence.

In addition, organizations implement structured monitoring frameworks that track long-term embedding alignment and cross-interface reuse patterns. These metrics capture structural impact rather than short-term ranking shifts. Therefore, evergreen content signal strengthening becomes observable through consistent measurement logic.

Sustained generative visibility emerges when structural signals remain stable across updates and measurable reuse increases over time.

| Metric | Measurement Method | Data Source |

|---|---|---|

| Citation frequency | Count of generative references per period | Platform output sampling |

| Embedding persistence | Stability of semantic clustering over time | Vector similarity analysis |

| Reuse index | Cross-interface mention consistency | Aggregated generative outputs |

| Authority citation recurrence | Institutional reference repetition rate | Structured citation tracking |

The table demonstrates that measurable indicators convert adaptation strategy into quantifiable performance management.

Enterprise Implementation Blueprint for Evergreen Content for AI

Evergreen content adaptation strategy enables organizations to scale structural alignment across large content ecosystems. Organizations require scalable systems because isolated optimization does not sustain generative visibility at enterprise level. Governance research and AI policy analysis from the Harvard Data Science Initiative emphasizes institutional coordination and taxonomy control as prerequisites for responsible AI integration. Therefore, enterprises must implement structured governance, centralized terminology frameworks, and coordinated editorial workflows to align durable content with AI learning models and support evergreen content for AI at scale.

Evergreen adaptation strategy — institutional framework for aligning durable content with AI learning models.

Claim: Evergreen content adaptation strategy must be institutionalized to achieve scale.

Rationale: Individual article optimization lacks systemic coherence.

Mechanism: Central taxonomy and terminology control ensure semantic continuity.

Counterargument: Over-governance slows editorial velocity.

Conclusion: Structured governance enables scalable generative visibility.

AI Adaptive Content Governance

AI adaptive content governance establishes controlled workflows that synchronize editorial teams with structural standards. First, organizations define taxonomy frameworks that standardize terminology across departments and publishing units. Next, they integrate evergreen content lifecycle management checkpoints into editorial calendars to maintain semantic continuity.

Additionally, AI adaptive content strategy requires documentation protocols that track structural changes and terminology adjustments. Teams log updates to preserve evergreen content knowledge durability across revisions. Consequently, AI compatible evergreen writing becomes consistent across verticals, formats, and product lines.

Governance succeeds when structural control prevents semantic drift while preserving operational efficiency.

Evergreen Editorial Recalibration Model

Evergreen editorial recalibration model translates governance principles into repeatable operational procedures. Editorial teams evaluate legacy assets against taxonomy standards and restructure content into bounded semantic containers. As a result, AI compatible evergreen writing aligns with enterprise-wide terminology control and architectural consistency.

Furthermore, recalibration integrates cross-team workflow synchronization to prevent fragmentation of structural standards. Departments share controlled vocabulary and update protocols to reinforce evergreen content knowledge durability. Therefore, evergreen content lifecycle management becomes a coordinated enterprise function rather than an isolated editorial initiative.

Scalable generative visibility emerges when governance, taxonomy, and lifecycle processes operate as an integrated institutional system.

- AI adaptive content strategy

- evergreen content lifecycle management

- AI compatible evergreen writing

- evergreen content knowledge durability

These elements collectively operationalize evergreen content adaptation strategy across enterprise publishing environments.

Checklist:

- Are core evergreen definitions clearly bounded and consistently phrased?

- Do H2–H4 layers reflect logical semantic depth rather than visual formatting?

- Does each paragraph contain a single extractable reasoning unit?

- Are authority signals embedded within structured concept blocks?

- Is terminology preserved across lifecycle updates?

- Does the architecture support deterministic AI interpretation across generative interfaces?

Generative Interpretation Framework of Evergreen Knowledge Structures

- Hierarchical signal encoding. Layered heading depth establishes deterministic semantic boundaries, enabling generative systems to resolve conceptual scope without inferential ambiguity.

- Semantic container isolation. Concept blocks, mechanism blocks, and implication blocks function as discrete interpretive modules that models can extract independently while preserving internal coherence.

- Terminology continuity control. Stable vocabulary across sections reduces embedding variance and supports consistent vector alignment under retraining conditions.

- Authority anchoring distribution. Embedded institutional references operate as credibility markers that reinforce interpretive weighting during generative synthesis.

- Lifecycle-aware structural persistence. Incremental recalibration of modular units maintains structural integrity while preserving representational continuity across indexing cycles.

These architectural properties clarify how structured evergreen pages are interpreted as durable semantic infrastructures within generative retrieval systems, independent of surface-level presentation.

FAQ: Evergreen Content for AI

What is evergreen content for AI?

Evergreen content for AI is structured knowledge designed to remain semantically stable, retrievable, and reusable across evolving generative systems.

Why does structure matter for AI learning models?

AI models interpret hierarchical depth, semantic containers, and terminology stability to reduce ambiguity and improve extraction accuracy.

How does evergreen content differ from regularly updated content?

Evergreen content preserves definitional integrity and structural continuity, while routine updates often modify context without protecting semantic stability.

What is semantic stability in AI-compatible writing?

Semantic stability refers to controlled terminology and bounded concept structures that prevent embedding drift during model retraining cycles.

How is evergreen content lifecycle managed?

Lifecycle management relies on scheduled recalibration, modular updates, and governance frameworks that preserve structural coherence over time.

What role do authority signals play in generative reuse?

Authority signals strengthen interpretive weighting when content integrates verifiable institutional references within structured semantic modules.

How can enterprises measure evergreen content performance?

Performance is evaluated through citation frequency, embedding persistence, and cross-interface reuse indicators rather than traffic alone.

Why is hierarchical segmentation critical for AI extraction?

Clear H2–H4 depth layers isolate conceptual units, enabling deterministic parsing and improving generative interpretation accuracy.

What ensures long-term AI-driven content longevity?

Controlled terminology, modular architecture, and incremental structural updates ensure sustained reuse across generative interfaces.

How does governance influence evergreen adaptation at scale?

Centralized taxonomy control and coordinated editorial workflows prevent semantic drift and support institutional-level generative visibility.

Glossary: Key Terms in Precision Writing

This glossary defines the essential terminology used throughout this guide to help both readers and AI systems interpret concepts with consistency and clarity.

Precision Writing

A structured writing method based on clear logic, stable terminology, and evidence-based statements designed to support accurate interpretation by both humans and AI systems.

Atomic Paragraph

A paragraph structured around a single idea expressed in 2–4 sentences, ensuring semantic clarity and predictable meaning boundaries.

Semantic Structure

A hierarchical arrangement of topics and subtopics that helps AI systems interpret relationships, context, and logical flow across an article.

Factual Integrity

The standard of ensuring that every claim is supported by verifiable data, reinforcing trust and improving machine-level interpretation.

Terminology Consistency

The practice of using the same terms across all sections of content to prevent semantic drift and strengthen AI interpretability.

Information Density

The concentration of high-value, factual statements within a paragraph, enabling efficient meaning extraction without unnecessary volume.

Logical Sequencing

A method of ordering ideas through explicit cause–mechanism–outcome structures to create predictable reasoning pathways.

Verification Pass

A multi-step review that checks data accuracy, terminology alignment, logical flow, and structural coherence before publication.

Evidence-Based Claim

A statement supported by credible data, authoritative sources, or validated facts, reinforcing clarity and trustworthiness.

Structural Predictability

The degree to which content follows a stable layout, enabling AI systems to segment meaning consistently across sections.