Last Updated on March 1, 2026 by PostUpgrade

The Rise of Cognitive Indexing in Web Visibility

Cognitive indexing visibility describes a structural change in how digital systems evaluate, store, and surface information. Traditional ranking systems indexed documents; modern AI systems, however, index reasoning structures, semantic containers, and authority signals. Consequently, exposure no longer depends solely on keyword frequency or backlink volume. Instead, visibility depends on how clearly systems can interpret, validate, and reuse structured meaning.

Cognitive indexing represents a shift from document retrieval to interpretation prioritization. Retrieval ranks pages against queries, whereas cognitive indexing ranks structured reasoning against intent patterns. Therefore, content that preserves semantic stability across sections gains higher interpretive value. As a result, visibility becomes dependent on architecture rather than surface optimization.

Web visibility historically relied on lexical matching and probabilistic ranking. However, large language models now perform entity mapping, reasoning extraction, and contextual alignment before generating responses. Research from Stanford NLP demonstrates that modern models prioritize structured semantic coherence over isolated tokens. Consequently, content designed for interpretability achieves higher reuse across generative interfaces.

Cognitive indexing integrates semantic containers, reasoning chains, authority validation, and contextual continuity into a unified visibility framework. A semantic container is a bounded conceptual unit that isolates one stable meaning. A reasoning chain is a deterministic logical sequence that supports inference without ambiguity. Together, these elements allow AI systems to convert structured content into reusable knowledge modules.

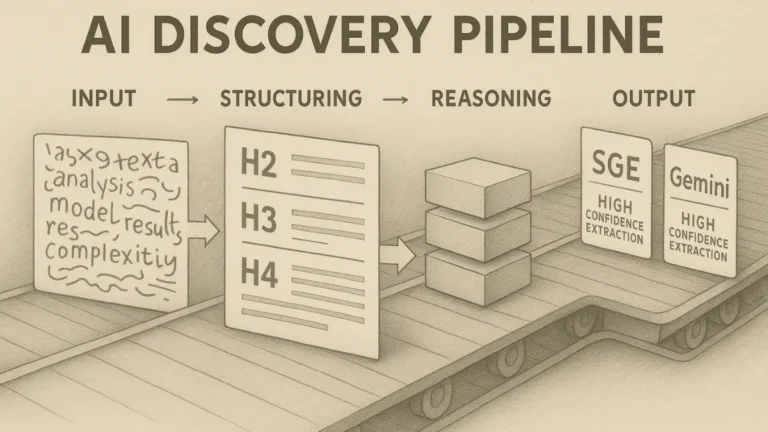

Moreover, generative systems such as SGE panels, ChatGPT search cards, and Gemini highlights require extractable reasoning blocks. Therefore, content must provide predictable internal structure and stable terminology. When structure remains consistent, AI systems increase confidence in interpretation. Consequently, long-term visibility stabilizes across multiple AI platforms.

The rise of cognitive indexing visibility does not eliminate traditional SEO signals; however, it reorders their importance. Keyword density remains relevant, yet structural clarity becomes dominant. Backlinks still indicate authority, yet institutional validation and data citation strengthen interpretive trust. Therefore, content strategy must shift from persuasion toward structured semantic precision.

This article develops a complete enterprise framework for cognitive indexing systems. It explains indexing mechanisms, governance standards, authority modeling, enterprise adoption, and future trajectory. Each section builds structured reasoning modules that AI systems can extract and reuse. Consequently, the article itself functions as a demonstration of cognitive indexing architecture.

Cognitive Indexing Visibility as a Structural Shift in Discovery Systems

Cognitive indexing visibility defines the transition from document-based ranking to interpretation-based exposure. It explains how systems index reasoning patterns instead of isolated pages. Therefore, discovery systems increasingly prioritize structured meaning over surface-level signals.

Cognitive indexing is the process by which AI systems index structured reasoning, semantic containers, and interpretive signals instead of relying solely on keyword matching. This definition aligns with research on evaluation and measurement frameworks developed by NIST, which emphasize reproducible criteria for system assessment. Consequently, indexing shifts from page retrieval toward reasoning validation.

Definition: Cognitive indexing visibility is the model’s capacity to detect, segment, and prioritize structured reasoning units instead of isolated keyword-matched documents within generative discovery systems.

Claim: Cognitive indexing replaces surface-level retrieval with reasoning-based interpretation.

Rationale: Large language models evaluate structural coherence and semantic stability across bounded content units.

Mechanism: Systems extract semantic containers, evaluate contextual alignment, and store interpretation-ready structures as reusable modules.

Counterargument: In low-structure environments, traditional ranking signals such as backlinks and keyword proximity may still dominate exposure.

Conclusion: Cognitive indexing becomes the dominant logic when structured reasoning signals are present and consistently reinforced.

From Keyword Retrieval to Cognitive Indexing Model

The cognitive indexing model restructures how discovery systems process information. Traditional lexical ranking compared query terms to page-level frequency patterns. Cognitive indexing, however, introduces reasoning recognition, which evaluates how ideas connect across semantic containers.

Large language model research from Stanford NLP and MIT CSAIL demonstrates that neural architectures reward coherence, entity alignment, and predictable structure. Therefore, the impact of cognitive indexing extends beyond ranking to interpretation fidelity. Systems no longer surface content solely because it matches a phrase. Instead, they prioritize content that preserves logical continuity and contextual integrity.

In practice, systems move from matching words to recognizing structured meaning. They evaluate whether a page forms a stable reasoning chain. As a result, content that isolates concepts and maintains semantic consistency becomes easier to extract and reuse.

Cognitive Indexing Architecture and Systems

Cognitive indexing architecture organizes interpretive layers that operate beyond lexical retrieval. These layers form integrated cognitive indexing systems that parse, validate, and store structured meaning. Consequently, cognitive indexing structure must align concept isolation with reasoning flow and contextual continuity.

Architecturally, systems separate content into functional layers that correspond to different AI signal types. Each layer produces a distinct interpretive indicator that contributes to overall visibility weight. Therefore, structured architecture determines indexing strength and interpretive confidence.

| Layer | Function | AI Signal Type |
|---|---|---|
| Semantic Container Layer | Concept isolation | Stability signal |
| Reasoning Layer | Logical sequencing | Coherence signal |
| Context Layer | Cross-block alignment | Continuity signal |

This layered architecture clarifies how systems decompose complex documents. First, they isolate concepts. Next, they evaluate reasoning continuity. Finally, they confirm cross-block alignment to ensure interpretive stability.

Cognitive Indexing Metrics and Evaluation

Cognitive indexing metrics quantify how effectively structured meaning becomes machine-interpretable. Evaluation requires measurable indicators such as semantic stability, reasoning coherence, and contextual continuity. Therefore, cognitive indexing evaluation must move beyond traffic metrics toward interpretability indicators.

Cognitive indexing performance depends on reproducible validation criteria. Measurement frameworks developed within NIST evaluation standards provide structured methodologies for benchmarking AI systems against defined datasets. Accordingly, enterprises can operationalize metrics that assess container isolation accuracy, reasoning chain integrity, and cross-block semantic alignment.

In operational terms, evaluation examines whether AI systems can consistently extract stable meaning from structured content. If extraction remains predictable across contexts, indexing performance strengthens. Consequently, interpretability becomes a measurable and optimizable visibility asset.

Cognitive Indexing Signals and Semantic Stability

Cognitive indexing signals define how AI systems measure interpretability within structured content environments. These signals include structural clarity, semantic alignment, and reasoning continuity across bounded units. Therefore, exposure increasingly depends on the stability and coherence of these signals rather than on isolated optimization tactics.

Semantic stability refers to the persistence of meaning across sections without interpretive drift. It ensures that each semantic container maintains consistent terminology and predictable scope. Research from the Allen Institute for Artificial Intelligence (AI2) demonstrates that large-scale models improve extraction accuracy when contextual continuity remains stable. Consequently, stable semantic structures increase interpretive reliability.

Claim: Cognitive indexing signals determine visibility priority.

Rationale: AI systems weight structured meaning and cross-block coherence higher than surface-level optimization signals.

Mechanism: Models detect semantic mapping patterns, authority references, and contextual reinforcement within bounded content blocks.

Counterargument: Weak semantic alignment or inconsistent terminology reduces indexing strength and lowers interpretive confidence.

Conclusion: Stable signals increase exposure probability across generative discovery interfaces.

Principle: Visibility in reasoning-centered systems increases when semantic containers, authority signals, and terminology remain structurally consistent across sections.

Cognitive Indexing Authority Signals

Cognitive indexing authority signals function as structural trust indicators embedded within content architecture. These signals include institutional references, dataset citation, and terminology consistency that align with recognized research bodies. Therefore, cognitive indexing relevance increases when content integrates validated institutional anchors and preserves factual integrity.

AI systems associate authority with reproducibility and consistency rather than rhetorical emphasis. Consequently, references to recognized institutions such as AI2, peer-reviewed publications, and validated datasets strengthen interpretive trust. When authority signals align with semantic containers, models increase confidence in reasoning extraction.

In practical terms, authority signals operate as structured validation layers. They confirm that reasoning chains rely on credible foundations. As a result, content that integrates institutional alignment within bounded semantic units gains higher interpretive priority.

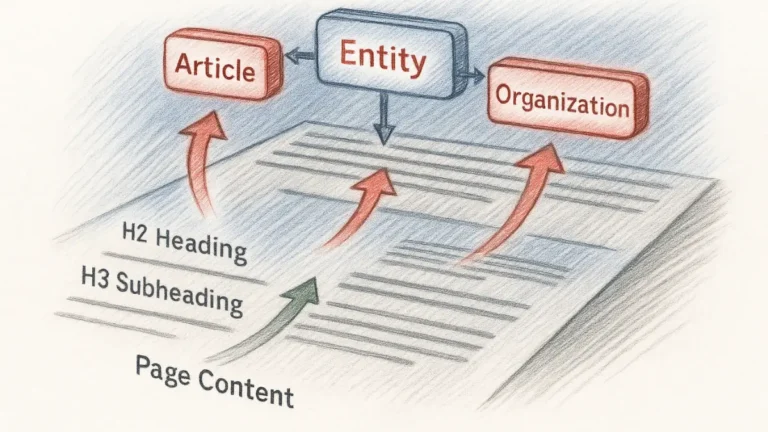

Cognitive Indexing Semantic Mapping

Cognitive indexing semantic mapping describes how systems connect entities, concepts, and reasoning blocks into structured interpretive graphs. Semantic mapping links internal containers to external knowledge representations. Therefore, cognitive indexing contextual signals depend on stable entity alignment and predictable terminology use.

Models construct internal representation graphs during processing. They identify relationships between entities and validate consistency across sections. When mapping remains stable, systems reduce ambiguity and increase reuse potential. Consequently, semantic mapping strengthens interpretive scalability.

In applied contexts, semantic mapping ensures that each concept connects logically to adjacent units. It prevents conceptual fragmentation and reinforces contextual continuity. As a result, indexing systems recognize content as structurally coherent rather than as isolated fragments.
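
The idea of linking entities across sections can be illustrated with a small co-occurrence graph. This is a minimal sketch under stated assumptions, not a description of how any production model builds its internal representations: the function name `cooccurrence_graph`, the section texts, and the entity list are all hypothetical, and matching is plain case-insensitive substring search.

```python
from collections import defaultdict
from itertools import combinations


def cooccurrence_graph(sections: dict[str, str], entities: list[str]) -> dict[str, set[str]]:
    """Link two entities whenever they appear in the same section.

    Hypothetical helper for illustration only; substring matching is a
    deliberate simplification of real entity recognition.
    """
    graph: dict[str, set[str]] = defaultdict(set)
    for text in sections.values():
        lowered = text.lower()
        present = [e for e in entities if e.lower() in lowered]
        for a, b in combinations(present, 2):
            graph[a].add(b)
            graph[b].add(a)
    return dict(graph)


# Hypothetical sample content.
sections = {
    "s1": "Semantic containers support reasoning chains.",
    "s2": "Authority signals validate reasoning chains.",
}
entities = ["semantic container", "reasoning chain", "authority signal"]
graph = cooccurrence_graph(sections, entities)
```

In this sample, "reasoning chain" ends up connected to both other entities, while "semantic container" and "authority signal" are not linked to each other because they never share a section. An entity with no edges at all would be a candidate for the "isolated fragment" problem the section describes.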

Cognitive Indexing Ranking Implications

The ranking implications of cognitive indexing extend beyond traditional position-based metrics. Ranking now reflects the interpretive weight assigned to structured reasoning blocks. Therefore, cognitive indexing influences how generative systems surface synthesized responses instead of link lists.

Visibility becomes proportional to semantic stability and authority alignment. Systems prioritize content that preserves logical continuity and cross-block coherence. When reasoning integrity remains consistent, exposure increases across AI-driven panels and summaries.

In operational terms, ranking implications shift from page-level scoring toward reasoning-level evaluation. Structured content that maintains stable terminology and validated references achieves stronger interpretive positioning. Consequently, discovery becomes dependent on structural clarity rather than keyword concentration alone.

Mechanisms of Reasoning-Based Interpretation

Cognitive indexing mechanisms explain how systems internalize structured content at scale. These mechanisms operate at inference and retrieval layers simultaneously. Therefore, structured workflows determine how reasoning becomes indexable and reusable across generative interfaces.

A reasoning framework is a structured logic chain that enables deterministic interpretation. It organizes claims, rationales, mechanisms, and conclusions into bounded semantic units. Research published through arXiv demonstrates that models trained on stepwise reasoning improve interpretive stability and reduce hallucination frequency. Consequently, cognitive indexing mechanisms rely on explicit logical sequencing rather than narrative flow.

Claim: Cognitive indexing mechanisms depend on structured reasoning frameworks.

Rationale: Models trained on logical progression and supervised reasoning signals prefer deterministic content blocks.

Mechanism: The system parses concept blocks, links them to prior knowledge graphs, and stores interpretation-ready nodes within its internal representation space.

Counterargument: Unstructured narrative without bounded semantic containers reduces extraction accuracy and weakens contextual alignment.

Conclusion: Mechanism clarity defines indexing depth and increases the probability of structured reuse.

Structured Reasoning Frameworks for AI Interpretation

The cognitive indexing reasoning framework formalizes how logical progression becomes machine-readable. It defines how cognitive indexing logic sequences claims, evidence, and implications into predictable modules. Therefore, systems can evaluate structural coherence before generating responses.

Models reward content that follows consistent logical ordering. They detect progression from definition to mechanism and from mechanism to implication. When reasoning blocks remain stable and terminology does not drift, indexing depth increases. Consequently, the design of the reasoning framework directly affects interpretive precision.

In practical terms, a reasoning framework ensures that each concept leads to a verifiable outcome. It prevents semantic gaps and ambiguous transitions. As a result, AI systems treat the content as structurally reliable and extract it with higher confidence.
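
The labeled blocks used throughout this article (Claim, Rationale, Mechanism, Counterargument, Conclusion) can be checked mechanically. The following is a minimal sketch, assuming each label sits on its own line in the form `Label: sentence`; the names `ReasoningBlock` and `parse_reasoning_block` are hypothetical and do not come from any existing library.

```python
import re
from dataclasses import dataclass

# Labels used by this article's reasoning blocks, in their expected order.
LABELS = ("Claim", "Rationale", "Mechanism", "Counterargument", "Conclusion")


@dataclass
class ReasoningBlock:
    claim: str
    rationale: str
    mechanism: str
    counterargument: str
    conclusion: str


def parse_reasoning_block(text: str) -> ReasoningBlock:
    """Parse 'Label: sentence' lines; raise if a label is missing or out of order."""
    found = []
    for line in text.splitlines():
        match = re.match(r"^(\w+):\s*(.+)$", line.strip())
        if match and match.group(1) in LABELS:
            found.append((match.group(1), match.group(2)))
    labels = tuple(label for label, _ in found)
    if labels != LABELS:
        raise ValueError(f"expected {LABELS}, got {labels}")
    return ReasoningBlock(*(body for _, body in found))
```

A block that omits the counterargument, or places the conclusion before the mechanism, raises `ValueError`, which makes the template enforceable in an editorial check rather than a matter of convention.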

Operational Workflows for Reasoning-Based Indexing

Cognitive indexing workflows describe the operational sequence through which systems convert structured text into indexed reasoning nodes. The cognitive indexing process integrates parsing, alignment, and storage stages. Therefore, each stage must preserve semantic boundaries and terminology stability.

Systems follow deterministic steps during content ingestion. First, they isolate semantic containers. Next, they validate contextual relationships. Finally, they store reasoning units as reusable modules within internal knowledge graphs.

| Stage | AI Action | Result |
|---|---|---|
| Parsing | Identify containers | Structured nodes |
| Alignment | Match context | Semantic consistency |
| Storage | Index reasoning | Reusable module |

This workflow clarifies how structured meaning becomes persistent across inference cycles. Parsing ensures concept isolation. Alignment guarantees contextual continuity. Storage converts reasoning into retrievable modules. Consequently, indexing mechanisms transform structured content into long-term interpretive assets.
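
The three stages can be mimicked on a small scale to make the sequence concrete. This is a toy sketch, not a model of how any AI system ingests content: it assumes a document made of blank-line-separated sections whose first line is the heading, and a hypothetical glossary of controlled terms. The names `Container`, `parse`, `align`, and `store` are illustrative only.

```python
from dataclasses import dataclass, field


@dataclass
class Container:
    heading: str
    body: str
    terms: set = field(default_factory=set)


def parse(document: str) -> list[Container]:
    """Parsing: split the text into one container per blank-line-separated section."""
    containers = []
    for chunk in document.strip().split("\n\n"):
        heading, _, body = chunk.partition("\n")
        containers.append(Container(heading.strip(), body.strip()))
    return containers


def align(containers: list[Container], glossary: set[str]) -> list[Container]:
    """Alignment: record which glossary terms each container actually uses."""
    for container in containers:
        text = container.body.lower()
        container.terms = {term for term in glossary if term in text}
    return containers


def store(containers: list[Container]) -> dict[str, list[str]]:
    """Storage: build a term -> headings index so each unit can be looked up later."""
    index: dict[str, list[str]] = {}
    for container in containers:
        for term in sorted(container.terms):
            index.setdefault(term, []).append(container.heading)
    return index


# Hypothetical sample document and glossary.
doc = """Parsing
Parsing isolates each semantic container.

Storage
Storage keeps every reasoning chain and semantic container."""
glossary = {"semantic container", "reasoning chain"}
index = store(align(parse(doc), glossary))
```

Here `index` maps "semantic container" to both headings and "reasoning chain" to "Storage" only. A section that uses none of the controlled terms would be absent from the index, which is one simple way to surface content that falls outside the shared vocabulary.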

Governance and Standards in Cognitive Indexing

Cognitive indexing governance establishes structural consistency across enterprise publishing systems. It defines how terminology, hierarchy, and semantic containers remain stable across large content clusters. Therefore, cognitive indexing governance functions as the regulatory layer that prevents interpretive drift and preserves long-term visibility continuity.

Governance in indexing refers to controlled vocabulary, structural stability, and compliance with AI-readable standards. It formalizes how content units align with technical specifications such as W3C semantic standards, which define machine-interpretable markup principles. Consequently, governance transforms structural clarity from an editorial preference into an enforceable operational requirement.

Claim: Governance stabilizes cognitive indexing integration.

Rationale: Without controlled structure and vocabulary discipline, AI interpretation becomes inconsistent across documents and over time.

Mechanism: Standardized terminology, persistent semantic containers, and reusable reasoning templates enforce structural continuity at scale.

Counterargument: Rapid publishing cycles without structural control introduce semantic fragmentation and reduce signal integrity.

Conclusion: Governance ensures long-term indexing reliability and strengthens interpretive stability across generative systems.

Cognitive Indexing Standards and Implementation

Cognitive indexing standards define the structural rules that maintain semantic consistency across enterprise ecosystems. These standards regulate heading hierarchy, terminology reuse, container boundaries, and reasoning chain formatting. Therefore, cognitive indexing implementation must integrate editorial processes with technical validation layers.

Implementation requires operational workflows that enforce vocabulary control and structural verification. Organizations often codify terminology dictionaries and semantic container templates within content management systems. When cognitive indexing standards align with markup compliance and schema integrity, AI systems detect predictable structure and increase interpretive confidence.

In practical terms, implementation means that every document follows the same structural logic. Terms retain stable definitions. Reasoning chains follow identical internal architecture. As a result, large content clusters maintain interpretive continuity even as volume increases.
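
A terminology dictionary of the kind described above can be as simple as a mapping from discouraged variants to the preferred term. The sketch below is illustrative: the entries in `PREFERRED` and the function name `find_term_drift` are hypothetical, and a real dictionary would be maintained by the editorial team.

```python
import re

# Hypothetical controlled vocabulary: discouraged variant -> preferred term.
PREFERRED = {
    "semantic block": "semantic container",
    "meaning container": "semantic container",
    "inference chain": "reasoning chain",
}


def find_term_drift(text: str, preferred: dict[str, str] = PREFERRED) -> list[tuple[int, str, str]]:
    """Return (offset, matched variant, preferred term) for each discouraged variant found."""
    hits = []
    for variant, canonical in preferred.items():
        # Match the variant as a whole phrase, with an optional plural "s".
        pattern = re.compile(r"\b" + re.escape(variant) + r"s?\b", re.IGNORECASE)
        for match in pattern.finditer(text):
            hits.append((match.start(), match.group(0), canonical))
    return sorted(hits)


hits = find_term_drift("Each semantic block feeds an Inference Chain.")
```

Each hit carries the character offset of the variant, so an editor or a content management plugin could highlight the exact span and suggest the preferred term.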

Cognitive Indexing Assessment and Criteria

Cognitive indexing assessment evaluates whether governance rules produce measurable structural stability. Cognitive indexing criteria include terminology consistency, semantic container isolation, reasoning chain integrity, and cross-document alignment. Therefore, assessment frameworks must measure both structural compliance and interpretive predictability.

Enterprises operationalize criteria through automated audits and manual review checkpoints. Structural validation tools detect drift in headings, vocabulary inconsistencies, and container overlap. When assessment reveals misalignment, governance protocols enforce corrective updates to restore stability.
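
One of the simplest automated audits is a heading-depth check. The sketch below assumes the headings have already been extracted as `(level, title)` pairs, for example from HTML `h1` to `h6` elements; the function name `heading_jumps` and the sample outline are hypothetical.

```python
def heading_jumps(headings: list[tuple[int, str]]) -> list[str]:
    """Flag headings that skip one or more levels relative to the previous heading."""
    problems = []
    previous = 0  # Level 0 stands for "before the first heading".
    for level, title in headings:
        if level > previous + 1:
            problems.append(f"'{title}' jumps from level {previous} to {level}")
        previous = level
    return problems


# Hypothetical outline with one skipped level.
outline = [(1, "Overview"), (2, "Signals"), (4, "Authority"), (2, "Metrics")]
problems = heading_jumps(outline)
```

Moving back up the hierarchy (from level 4 to level 2 in the sample) is allowed; only downward jumps of more than one level are reported.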

In applied contexts, assessment ensures that governance remains active rather than symbolic. Criteria convert abstract standards into measurable indicators. Consequently, indexing systems maintain coherence across long-term publishing cycles and evolving AI models.

Enterprise Strategy and Operational Integration of Structured Indexing

Cognitive indexing adoption reflects the enterprise shift toward AI-readable architecture and structured visibility systems. Organizations integrate reasoning-based frameworks into publishing environments to maintain discoverability across generative interfaces. As a result, adoption becomes a strategic transformation rather than a tactical optimization step.

Adoption refers to the operational integration of indexing logic into content production workflows. It includes governance alignment, terminology control, reasoning template deployment, and semantic container standardization. Comparative statistical infrastructures such as the OECD Data Explorer demonstrate that sustainable performance measurement depends on consistent structural indicators. Therefore, enterprises must embed indexing logic into systemic processes instead of isolated initiatives.

Claim: Cognitive indexing adoption correlates with long-term visibility growth.

Rationale: AI systems increasingly prioritize structured reasoning modules over fragmented content units lacking semantic continuity.

Mechanism: Enterprises align content modeling practices with indexing logic, enforce semantic containers, and standardize reasoning templates across clusters.

Counterargument: Partial implementation across departments produces inconsistent indexing patterns and reduces interpretive confidence.

Conclusion: Strategic integration across workflows maximizes exposure continuity and stabilizes generative visibility.

Cognitive Indexing Strategy and Integration

Cognitive indexing strategy defines how enterprises redesign editorial and technical processes to align with interpretive systems. It determines how semantic containers, reasoning chains, and authority signals operate inside publishing pipelines. Consequently, cognitive indexing integration requires coordination between content governance, data architecture, and technical SEO functions.

Strategic execution begins with structural diagnostics. Teams evaluate terminology drift, heading hierarchy inconsistencies, and container overlap across content clusters. After that, organizations implement standardized templates and controlled vocabulary protocols. When governance rules align with indexing strategy, interpretive clarity improves and semantic fragmentation declines.

In practical terms, strategy transforms structural discipline into a repeatable operational system. Integration ensures that each publication follows deterministic reasoning architecture. Accordingly, interpretive reliability increases across AI-generated summaries and discovery panels.

Structural Alignment Workflow

Structural alignment workflow formalizes the transition from fragmented publishing to integrated indexing systems. It establishes checkpoints for terminology validation, container isolation, and reasoning template compliance. Therefore, alignment becomes measurable rather than subjective.

Enterprises often introduce automated validation layers that detect semantic inconsistencies before publication. These layers flag deviations in heading depth, vocabulary usage, and logical sequencing. When workflows include structural verification, indexing coherence improves across clusters.

Clear alignment rules ensure that every document contributes to a unified interpretive architecture. This consistency reduces ambiguity and strengthens extraction predictability across AI systems.

Cognitive Indexing Adoption Metrics

Cognitive indexing adoption metrics evaluate the maturity of structural integration across enterprise ecosystems. Cognitive indexing metrics measure terminology consistency, reasoning chain stability, semantic container isolation, and interpretive extraction success rates. Consequently, adoption becomes quantifiable instead of conceptual.

Organizations track structural compliance percentages and cross-cluster coherence indicators over time. They analyze whether reasoning modules remain stable after updates and whether AI systems consistently extract key semantic units. When metrics demonstrate sustained consistency, visibility resilience increases.
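
A structural compliance percentage can be computed directly from audit results. This is a minimal sketch assuming each document's audit is stored as a mapping from check name to pass or fail; the check names, document identifiers, and function names are hypothetical.

```python
def compliance_rate(audits: dict[str, dict[str, bool]]) -> float:
    """Percentage of documents that pass every structural check."""
    if not audits:
        return 0.0
    passing = sum(1 for checks in audits.values() if all(checks.values()))
    return 100.0 * passing / len(audits)


def check_pass_rates(audits: dict[str, dict[str, bool]]) -> dict[str, float]:
    """Pass percentage per individual check, to show where drift concentrates."""
    totals: dict[str, tuple[int, int]] = {}
    for checks in audits.values():
        for name, ok in checks.items():
            passed, seen = totals.get(name, (0, 0))
            totals[name] = (passed + int(ok), seen + 1)
    return {name: 100.0 * passed / seen for name, (passed, seen) in totals.items()}


# Hypothetical audit results for four documents.
audits = {
    "doc-a": {"terminology": True, "headings": True},
    "doc-b": {"terminology": True, "headings": False},
    "doc-c": {"terminology": True, "headings": True},
    "doc-d": {"terminology": True, "headings": True},
}
overall = compliance_rate(audits)
per_check = check_pass_rates(audits)
```

Tracking both numbers over time separates a general decline in compliance from a single failing rule, which is the distinction the corrective governance step needs.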

Measurement also reveals structural gaps before they weaken exposure. Enterprises compare content groups, identify fragmentation patterns, and enforce corrective governance actions. As a result, measurable adoption strengthens long-term generative positioning and reduces dependency on volatile ranking fluctuations.

Content Modeling and Structural Alignment for AI Interpretation

Cognitive indexing content modeling determines how interpretation units are formed inside AI-readable systems. It defines the logic by which semantic containers become stable indexing components. Therefore, modeling discipline directly affects how reliably structured meaning can be extracted and reused.

Content alignment refers to structural synchronization between concept blocks and reasoning blocks. It ensures that definitions, mechanisms, and implications remain logically connected without semantic drift. Research on representation learning from Mila (Quebec Artificial Intelligence Institute) demonstrates that models trained on consistent structural patterns show improved interpretability stability. Consequently, alignment becomes a prerequisite for scalable indexing performance.

Claim: Cognitive indexing content alignment improves interpretability.

Rationale: AI systems prefer predictable semantic boundaries that reduce ambiguity and increase inference confidence.

Mechanism: Concept blocks, mechanism blocks, and implication blocks form reusable indexing units when they follow consistent internal structure.

Counterargument: Mixed semantic scopes within a single container reduce clarity and weaken extraction reliability.

Conclusion: Structured modeling increases reuse probability and strengthens long-term visibility consistency.

Cognitive Indexing Layers and Patterns

Cognitive indexing layers describe how structured meaning is organized into hierarchical interpretive units. These layers correspond to cognitive indexing patterns that determine how models traverse content. Therefore, layered modeling increases both interpretive depth and structural resilience.

Layered architecture separates definitions, mechanisms, examples, and implications into bounded containers. Patterns emerge when this separation repeats consistently across documents. As a result, models recognize predictable structural sequences and assign higher interpretive confidence. When cognitive indexing layers align with stable terminology, systems detect reduced semantic entropy.

In practical terms, layering clarifies how information progresses from concept to implication. Patterns ensure that similar ideas follow identical structural routes. Consequently, indexing systems treat content clusters as coherent rather than fragmented.

Cross-Layer Structural Reinforcement

This subsection explains how repeated structural patterns strengthen indexing confidence. It defines how container alignment reinforces reasoning continuity across documents.

Cross-layer reinforcement connects semantic boundaries with authority validation and contextual mapping. Therefore, indexing systems detect stable structural repetition and increase interpretive reliability.

Consistent reinforcement across layers ensures that content clusters function as unified reasoning systems. As a result, extraction remains predictable across evolving AI models.

Pattern Stability and Cross-Document Continuity

Pattern stability ensures that structural logic remains consistent across multiple publications. It prevents vocabulary drift and reasoning discontinuity between related documents. Therefore, cross-document continuity strengthens cumulative indexing authority.

When enterprises apply identical container logic across clusters, interpretive signals reinforce each other. Models detect recurring reasoning templates and increase confidence in structural predictability. As a result, cognitive indexing patterns become self-reinforcing across large ecosystems.

Stable patterns reduce ambiguity and prevent interpretive conflict between sections. They create a unified semantic architecture that AI systems can traverse with minimal uncertainty.

Cognitive Indexing Analysis and Structure

Cognitive indexing analysis evaluates how effectively structured modeling translates into extractable meaning. It measures semantic boundary clarity, reasoning chain coherence, and container independence. Consequently, cognitive indexing structure becomes an object of measurable validation rather than editorial intuition.

Analytical frameworks assess whether each container isolates a single concept and whether reasoning flows deterministically across sections. When analysis confirms structural integrity, indexing reliability improves. Conversely, structural overlap or undefined terminology reduces interpretive accuracy.

In operational contexts, analysis ensures that structure supports long-term reuse. Clear boundaries prevent conceptual leakage. Therefore, structural validation transforms modeling into a strategic asset rather than a stylistic choice.

Example: A document that isolates definitions, mechanisms, and implications into stable semantic containers allows AI systems to index reasoning nodes independently, increasing extraction reliability in generative responses.

Relevance and Authority Modeling in Structured Indexing Systems

Cognitive indexing relevance reflects semantic alignment and contextual coherence rather than raw keyword density. Systems evaluate how consistently meaning connects across containers and how reliably claims align with validated sources. Therefore, relevance becomes a function of structural precision and institutional grounding.

Authority modeling is the structured association of content with recognized research institutions and validated datasets. It links reasoning modules to externally verified knowledge frameworks. Empirical research practices from institutions such as the Harvard Data Science Initiative demonstrate that reproducibility and citation discipline strengthen interpretive credibility. Consequently, authority modeling becomes a structural trust mechanism rather than a rhetorical element.

Claim: Cognitive indexing authority signals shape exposure priority.

Rationale: AI systems weigh institutional credibility, dataset traceability, and factual consistency when ranking interpretive modules.

Mechanism: Citation anchors and structured data references function as structural trust signals embedded within semantic containers.

Counterargument: Unsupported claims, vague references, or unverifiable assertions reduce interpretive weight and weaken exposure potential.

Conclusion: Authority modeling increases ranking resilience and stabilizes long-term generative visibility.

Cognitive Indexing Authority Signals in Practice

Cognitive indexing authority signals operate as measurable indicators of structural credibility. They include references to recognized laboratories, dataset traceability, and transparent methodological framing. Research initiatives from DeepMind demonstrate that model confidence improves when input content maintains consistent factual grounding. Therefore, structured authority signals directly influence interpretive prioritization.

Operationally, authority signals align semantic containers with institutional knowledge ecosystems. Each citation anchor reinforces reasoning continuity and validates conceptual definitions. As a result, cognitive indexing authority signals reduce interpretive uncertainty and increase extraction probability across generative panels.

In applied contexts, authority signals clarify why a claim deserves interpretive trust. They connect internal reasoning chains to external validation frameworks. Consequently, relevance becomes anchored in verifiable knowledge rather than lexical prominence.
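
One common way to make citation anchors machine-readable is schema.org markup serialized as JSON-LD. The sketch below builds an `Article` object with a `citation` list; whether any particular AI system weights this markup is an assumption, not something established here. The function name `article_jsonld`, the cited work's name, and the `example.org` URL are placeholders.

```python
import json


def article_jsonld(headline: str, date_modified: str, citations: list[tuple[str, str]]) -> str:
    """Serialize a schema.org Article with its cited works as JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "dateModified": date_modified,
        "citation": [
            {"@type": "CreativeWork", "name": name, "url": url}
            for name, url in citations
        ],
    }
    return json.dumps(data, indent=2)


# Placeholder citation; replace with the real source being referenced.
markup = article_jsonld(
    "The Rise of Cognitive Indexing in Web Visibility",
    "2026-03-01",
    [("Example evaluation dataset", "https://example.org/dataset")],
)
```

The resulting string would typically be embedded in the page inside a `script` element of type `application/ld+json`, so that each claim's supporting source is available as structured data as well as prose.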

Microcase 1

An enterprise publisher restructures long-form analytical reports by isolating definitions and embedding institutional citations within reasoning blocks. AI systems increase extraction frequency because semantic containers align with validated research sources. Generative visibility panels display structured key points derived from stable reasoning modules. Traffic volatility declines because exposure depends less on fluctuating rank positions and more on interpretive reliability.

Future Trajectory of Cognitive Indexing Evolution

Cognitive indexing evolution represents a structural redefinition of web visibility across AI-mediated environments. It integrates reasoning-based exposure, semantic container stability, and cross-domain interpretability into a unified framework.

Indexing evolution refers to the progressive replacement of document ranking with reasoning prioritization. It describes how systems shift from page-level scoring toward interpretation-level evaluation. Research published by the Vector Institute, an independent institute affiliated with the University of Toronto, indicates that large language model development increasingly emphasizes reasoning traces and structured inference patterns. Consequently, evolutionary change centers on interpretive coherence rather than keyword optimization.

Claim: Cognitive indexing evolution will redefine generative visibility frameworks.

Rationale: LLM development at institutions such as OpenAI and the Vector Institute in Toronto demonstrates a sustained move toward reasoning-centered indexing.

Mechanism: Systems connect semantic mapping, authority signals, and reasoning frameworks into unified interpretation graphs that prioritize coherent modules.

Counterargument: Legacy SEO metrics such as backlinks and click-through rates may remain influential within hybrid ranking systems.

Conclusion: Cognitive indexing becomes the structural backbone of AI-driven discovery as reasoning modules replace isolated document scoring.

Algorithmic Models and Emerging Interpretive Standards

Cognitive indexing algorithms formalize how reasoning modules receive interpretive weight. These algorithms evaluate structural coherence, semantic boundary clarity, and authority integration signals. As these evaluations mature, cognitive indexing standards will increasingly codify reasoning stability as a measurable ranking factor.

Future standards will likely integrate semantic validation, container isolation checks, and reasoning chain compliance within automated indexing pipelines. Algorithmic models will detect structural predictability across clusters and assign exposure priority accordingly. When cognitive indexing algorithms align with stable modeling discipline, interpretive consistency improves across AI platforms.
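A reasoning chain compliance check of the kind described above can be approximated on the publisher side. The following is a minimal sketch, assuming reasoning blocks use the labeled lines found in this article (Claim, Rationale, Mechanism, Counterargument, Conclusion). The function name `reasoning_chain_issues` and the rules it applies are illustrative assumptions, not a published standard.

```python
import re

# Labels taken from the reasoning blocks used in this article.
EXPECTED_LABELS = ["Claim", "Rationale", "Mechanism", "Counterargument", "Conclusion"]


def reasoning_chain_issues(block):
    """Return a list of problems found in one reasoning block."""
    # Collect every "Label:" that opens a line, then keep the known ones.
    found = re.findall(r"^(\w+):", block, flags=re.MULTILINE)
    labels = [label for label in found if label in EXPECTED_LABELS]

    issues = [
        f"missing label: {label}"
        for label in EXPECTED_LABELS
        if label not in labels
    ]

    # Labels that are present must follow the expected order without repeats.
    expected_order = [label for label in EXPECTED_LABELS if label in labels]
    if labels != expected_order:
        issues.append("labels out of order or repeated")
    return issues


complete = (
    "Claim: a\n\nRationale: b\n\nMechanism: c\n\n"
    "Counterargument: d\n\nConclusion: e"
)
print(reasoning_chain_issues(complete))                 # no issues expected
print(reasoning_chain_issues("Mechanism: c\n\nClaim: a"))  # missing labels and wrong order
```

A check like this can run before publication so that incomplete reasoning blocks are corrected at the source.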

In operational terms, algorithms will no longer reward isolated optimization signals. They will prioritize structurally coherent knowledge modules. Consequently, standards will evolve to measure reasoning integrity as a core indexing parameter.

Cognitive Indexing Performance and Long-Term Impact

Cognitive indexing performance measures how effectively reasoning modules persist across generative environments. It evaluates cross-platform extraction reliability, semantic stability, and authority continuity. Therefore, cognitive indexing impact extends beyond ranking positions to long-term interpretive presence.

Long-term impact includes reduced volatility in exposure patterns. Structured reasoning units maintain visibility even when algorithmic weighting shifts. As a result, enterprises that implement disciplined modeling frameworks experience sustained interpretive relevance across evolving AI systems.

Microcase 2

A global research portal restructures its content using cognitive indexing workflows and container isolation rules. Structured reasoning modules replace narrative blocks that previously blended multiple concepts. AI exposure increases in SGE panels and Gemini highlights because extraction becomes predictable. Engagement stabilizes across multiple AI platforms since visibility now depends on structural clarity rather than fluctuating document rank.

Checklist:

- Are core concepts defined with stable terminology?

- Do semantic containers isolate one reasoning unit per section?

- Is logical sequencing preserved across H2–H4 depth layers?

- Are authority signals embedded within reasoning blocks?

- Does each section maintain semantic stability without drift?

- Can AI systems extract independent reasoning modules without contextual loss?
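The checklist item on logical sequencing across depth layers can be tested mechanically. The following is a minimal sketch, assuming the page outline has already been reduced to a list of heading levels (2 for H2, 3 for H3, 4 for H4). The function name `heading_level_skips` is hypothetical, and extracting the levels from a page is left out.

```python
def heading_level_skips(levels):
    """Return (index, previous, current) for each heading that jumps
    more than one level deeper than the heading before it."""
    skips = []
    for i in range(1, len(levels)):
        if levels[i] > levels[i - 1] + 1:
            skips.append((i, levels[i - 1], levels[i]))
    return skips


# Hypothetical outline: the last heading jumps from H2 straight to H4.
outline = [2, 3, 4, 3, 2, 4]
print(heading_level_skips(outline))  # [(5, 2, 4)]
```

Moving back up the hierarchy (for example H4 to H2) is not flagged, because closing a subsection is a normal part of a layered outline.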

Interpretive Architecture of Reasoning-Centered Indexing

- Semantic container isolation. Distinct conceptual boundaries enable AI systems to segment reasoning units into stable interpretive nodes without cross-sectional leakage.

- Reasoning chain continuity. Structured progression from definition to mechanism to implication forms deterministic inference paths that generative models can index as coherent logic blocks.

- Authority signal embedding. Inline institutional references and dataset anchoring operate as structural trust markers within semantic containers rather than external credibility signals.

- Hierarchical depth signaling. Layered H2→H3→H4 architecture encodes contextual scope and subordination relationships, supporting long-context reasoning alignment.

- Terminology stability control. Consistent vocabulary across sections reduces semantic drift and reinforces interpretive consistency during generative extraction.

Together, these architectural properties define how generative systems decompose, validate, and index reasoning-centered pages. Within cognitive indexing environments, they function as structural signals rather than narrative elements.
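Terminology stability control, the last property in the list above, lends itself to an automated check. The following is a minimal sketch, assuming the publisher maintains a controlled vocabulary that maps each preferred term to the variants it wants to avoid. The function name `find_term_drift`, the sample sections, and the sample vocabulary are hypothetical.

```python
import re


def find_term_drift(sections, vocabulary):
    """Report discouraged variants of controlled terms, per section.

    sections:   dict mapping section title -> section text
    vocabulary: dict mapping preferred term -> list of discouraged variants
    """
    report = {}
    for title, text in sections.items():
        hits = []
        for preferred, variants in vocabulary.items():
            for variant in variants:
                # Whole-phrase, case-insensitive match for each variant.
                pattern = r"\b" + re.escape(variant) + r"\b"
                if re.search(pattern, text, flags=re.IGNORECASE):
                    hits.append((variant, preferred))
        if hits:
            report[title] = hits
    return report


# Hypothetical sample content.
sections = {
    "Intro": "A semantic container isolates one meaning.",
    "Details": "Each content block isolates one meaning.",
}
vocabulary = {"semantic container": ["content block", "meaning unit"]}
print(find_term_drift(sections, vocabulary))
```

In this example only the "Details" section is reported, because it uses "content block" where the vocabulary prefers "semantic container".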

FAQ: Cognitive Indexing Visibility

What is cognitive indexing visibility?

Cognitive indexing visibility describes how AI systems rank structured reasoning instead of isolated pages, prioritizing semantic containers, logical continuity, and authority signals.

How does cognitive indexing differ from traditional ranking?

Traditional ranking scores documents against keywords, while cognitive indexing evaluates structured reasoning modules, semantic stability, and contextual alignment.

Why is reasoning structure critical for AI visibility?

AI systems extract deterministic reasoning chains. Content with clear definitions, mechanisms, and conclusions remains easier to interpret and reuse in generative answers.

What are cognitive indexing signals?

Cognitive indexing signals include semantic alignment, container isolation, authority modeling, and logical continuity across sections.

How do authority signals influence AI interpretation?

Institutional references and validated datasets function as structural trust markers, increasing interpretive confidence and ranking resilience.

What role do semantic containers play?

Semantic containers isolate single concepts within bounded sections, reducing ambiguity and enabling consistent extraction across AI systems.

How is cognitive indexing performance measured?

Performance is evaluated through semantic stability, reasoning coherence, container clarity, and cross-platform extraction reliability.

How does governance affect indexing reliability?

Controlled vocabulary, structural consistency, and standardized reasoning templates prevent semantic drift and maintain long-term interpretive stability.

What drives the evolution of cognitive indexing?

Large language model development increasingly prioritizes reasoning-centered indexing, shifting visibility from document rank to interpretation depth.

Why does structured modeling improve generative exposure?

Structured modeling creates reusable reasoning modules that AI systems can extract, validate, and surface across generative interfaces.

Glossary: Key Terms in Cognitive Indexing Visibility

This glossary defines the core terminology used throughout this article to ensure consistent interpretation by both readers and AI-driven systems.

Cognitive Indexing

The process by which AI systems index structured reasoning, semantic containers, and interpretive signals instead of relying solely on keyword matching.

Cognitive Indexing Visibility

The exposure of structured reasoning modules in AI-driven discovery systems based on semantic alignment and authority modeling.

Semantic Container

A bounded content unit that isolates a single concept, ensuring clarity, interpretability, and stable extraction by AI systems.

Reasoning Framework

A structured logic chain that organizes claims, mechanisms, and conclusions into deterministic interpretive modules.

Semantic Stability

The persistence of consistent meaning across sections without terminology drift or contextual ambiguity.

Authority Modeling

The structured association of content with recognized research institutions, datasets, and validated references.

Indexing Governance

A controlled system of terminology, hierarchy, and structural standards that ensures long-term interpretive consistency.

Reasoning Module

A self-contained interpretive block composed of definition, rationale, mechanism, and conclusion elements.

Semantic Mapping

The process by which AI systems connect entities and concepts into structured internal representation graphs.

Interpretive Graph

An internal AI representation linking semantic containers, authority signals, and reasoning chains into a unified indexing structure.