Last Updated on February 22, 2026 by PostUpgrade

The Role of Brand Authority in Generative Discovery

In generative discovery, brand authority defines how structured credibility influences visibility inside AI-mediated systems. Generative platforms no longer rely solely on hyperlinks or keyword frequency. Instead, they evaluate entity stability, evidence consistency, and contextual trust. Therefore, authority becomes a structural determinant of inclusion in machine-generated outputs.

Traditional search optimization focused on link accumulation and keyword positioning. However, generative systems prioritize probabilistic reasoning over static indexing. Consequently, competition shifts from the page level toward entity-level validation. Visibility now depends on whether models interpret a brand as a reliable knowledge source.

This transformation affects AI comprehension, entity inference, and trust modeling simultaneously. AI comprehension refers to a model’s ability to interpret structured meaning within consistent semantic containers. Entity inference describes how models connect brands to concepts inside internal knowledge graphs. Trust modeling evaluates factual reliability through cross-source validation patterns. Together, these processes determine long-term discoverability.

Generative discovery therefore functions as a layered system rather than a ranking list. It evaluates structural authority, citation frequency, and semantic coherence. As a result, brands that maintain consistent interpretability achieve persistent exposure across conversational interfaces and synthesis panels.

Structural Shifts in Discovery:

- Ranking to inference systems

- Links to authority signals

- Traffic to citation presence

- Search results to generative outputs

These shifts demonstrate a structural transition from mechanical optimization toward authority-centered AI ecosystems where credibility determines visibility stability.

Brand Authority as a Structural Signal in AI Systems

Brand authority in AI discovery determines how generative systems assign probabilistic weight to entities during response construction. In machine-mediated environments, authority operates as a structured aggregation of credibility, expertise, and consistent reference presence across machine-readable ecosystems. Therefore, authority becomes a computationally detectable structural signal rather than a reputational abstraction.

Brand authority is a measurable pattern of trust, expertise attribution, and entity stability recognized across generative systems. It forms through repeatable citation alignment, knowledge graph reinforcement, and cross-source validation. Research conducted by Stanford NLP demonstrates that language models rely on structured entity representations and contextual consistency to improve interpretability and reduce semantic ambiguity. Consequently, authority directly influences probabilistic inference inside generative pipelines.

Claim: Brand authority functions as a primary structural weighting factor in generative discovery systems.

Rationale: AI systems reduce uncertainty by prioritizing entities with stable credibility markers.

Mechanism: Large language models integrate citation frequency, entity coherence, and trust signals into probabilistic output selection.

Counterargument: Authority signals may be distorted in fragmented or emerging knowledge domains.

Conclusion: Stable brand authority increases the likelihood of generative inclusion.

Definition: AI authority recognition is the model’s ability to detect, evaluate, and weight credibility signals associated with a brand based on entity stability, citation density, and cross-domain validation patterns within generative discovery systems.

Brand authority signals for language models

Brand authority signals for language models emerge from structured patterns embedded across validated corpora. These signals include citation density, institutional references, expert attribution, and factual consistency across datasets. As a result, models detect authority through statistical reinforcement rather than surface-level mentions.

Language models evaluate cross-document co-occurrence and entity-role alignment during training and retrieval phases. When a brand consistently appears within reliable contexts, models assign higher confidence weights during generation. Therefore, authority becomes encoded in probabilistic token selection.

In practical terms, repeated validation across independent sources reduces interpretive variance. Models reward brands whose signals remain stable across datasets because stable patterns reduce predictive uncertainty.
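To make "cross-document co-occurrence" concrete, the sketch below counts how many documents mention two entities together. It is a toy illustration under stated assumptions: the brand "Acme Analytics", the "Example Institute", and the sample sentences are invented, matching is naive substring search, and language models learn such statistics implicitly during training rather than by running a counter like this.

```python
from collections import Counter
from itertools import combinations


def cooccurrence_counts(documents, entities):
    """Count, for each pair of tracked entities, how many documents mention both.

    Matching is naive case-insensitive substring search (an assumption for
    illustration; real pipelines would use entity linking).
    """
    counts = Counter()
    for doc in documents:
        text = doc.lower()
        # Sort so each pair is always stored in the same order.
        present = sorted(e for e in entities if e.lower() in text)
        for pair in combinations(present, 2):
            counts[pair] += 1
    return counts


# Hypothetical corpus: both entity names are invented for this example.
docs = [
    "Acme Analytics is cited in an Example Institute working paper.",
    "An Example Institute dataset was used by Acme Analytics.",
    "Acme Analytics launches a new dashboard.",
]
pairs = cooccurrence_counts(docs, ["Acme Analytics", "Example Institute"])
print(pairs)  # Counter({('Acme Analytics', 'Example Institute'): 2})
```

The third document mentions only the brand, so it contributes nothing to the pair count; repeated joint appearances are what accumulate.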

Brand authority entity recognition

Brand authority entity recognition refers to the computational process by which models classify a brand as a stable node within internal knowledge graphs. Entity recognition connects brand identifiers to attributes, expertise domains, and relational contexts. Consequently, recognition accuracy determines generative inclusion probability.

Models apply named-entity recognition systems and graph alignment algorithms to stabilize identity mapping. If references remain consistent and semantically aligned, graph edges strengthen over time. However, inconsistent terminology or fragmented citation weakens structural recognition.

When entity recognition stabilizes, generative systems treat the brand as a reliable reasoning component. Stable recognition therefore increases the likelihood of inclusion in summaries, synthesized answers, and contextual panels.
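The fragmentation problem described above can be illustrated with a small alias table that maps surface variants of a brand name to one canonical identifier. This is a hypothetical sketch: the alias table, the canonical ID `acme_analytics`, and the `fragmentation` metric are assumptions for illustration, not part of any named recognition system.

```python
from collections import Counter

# Hypothetical alias table: surface forms -> one canonical entity ID.
ALIASES = {
    "acme analytics": "acme_analytics",
    "acme analytics inc.": "acme_analytics",
    "acmeanalytics": "acme_analytics",
}


def canonicalize(mention):
    """Return the canonical ID for a mention, or None if it is unrecognized.

    Lowercases and collapses repeated whitespace before lookup.
    """
    key = " ".join(mention.lower().split())
    return ALIASES.get(key)


def fragmentation(mentions):
    """Share of mentions that cannot be mapped to a canonical entity."""
    if not mentions:
        return 0.0
    unresolved = sum(1 for m in mentions if canonicalize(m) is None)
    return unresolved / len(mentions)


mentions = ["Acme Analytics", "ACME  Analytics Inc.", "AcmeAnalytics", "Acme AI Labs"]
print(Counter(canonicalize(m) for m in mentions))
print(fragmentation(mentions))  # 0.25
```

Here "Acme AI Labs" stays unresolved, which is the kind of inconsistent terminology that weakens identity mapping.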

Brand authority signal consistency

Brand authority signal consistency measures how persistently authority markers appear across time, datasets, and domains. Generative systems evaluate both presence and durability. Consequently, persistent reinforcement strengthens confidence allocation.

If citation density fluctuates or semantic alignment varies, models reduce weighting. Therefore, longitudinal stability becomes a structural requirement for authority reinforcement.

Consistent signals allow generative systems to construct reliable internal representations. As reinforcement repeats across environments, models treat the brand as a dependable knowledge anchor that reduces uncertainty.
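One simple way to quantify longitudinal stability is the coefficient of variation (standard deviation divided by mean) of citation counts per period. The sketch below maps it to a 0 to 1 score; the formula `1 / (1 + CV)` and the sample series are assumptions chosen for illustration, not a documented model behavior.

```python
from statistics import mean, pstdev


def stability_score(counts):
    """Score per-period citation counts in [0, 1]; steadier series score higher.

    Uses 1 / (1 + coefficient of variation), where CV = population std / mean.
    """
    if not counts:
        return 0.0
    avg = mean(counts)
    if avg == 0:
        return 0.0
    cv = pstdev(counts) / avg
    return 1.0 / (1.0 + cv)


steady = [40, 42, 39, 41, 40, 42]  # hypothetical monthly citation counts
spiky = [5, 90, 3, 70, 2, 80]      # similar total volume, erratic timing
print(round(stability_score(steady), 3), round(stability_score(spiky), 3))
```

Both series have comparable totals, yet the steady one scores far higher, which mirrors the point that durability matters as much as presence.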

| Signal Type | Machine Detection Mode | Generative Impact |
|---|---|---|
| Citation frequency | Cross-corpus co-occurrence modeling | Increased probability of inclusion in synthesized outputs |
| Entity coherence | Knowledge graph edge stability analysis | Higher structural trust weighting |
| Expert attribution | Institutional source credibility scoring | Elevated authority ranking in generative summaries |
| Cross-domain presence | Multi-source validation indexing | Expanded visibility across conversational interfaces |
| Temporal stability | Longitudinal reference tracking across datasets | Sustained inclusion across generative response cycles |

These measurable signals reinforce one another and create cumulative authority weighting. Consequently, generative systems prioritize brands that maintain durable, machine-recognizable authority patterns across validated environments.

Brand Credibility Modeling in Generative Architectures

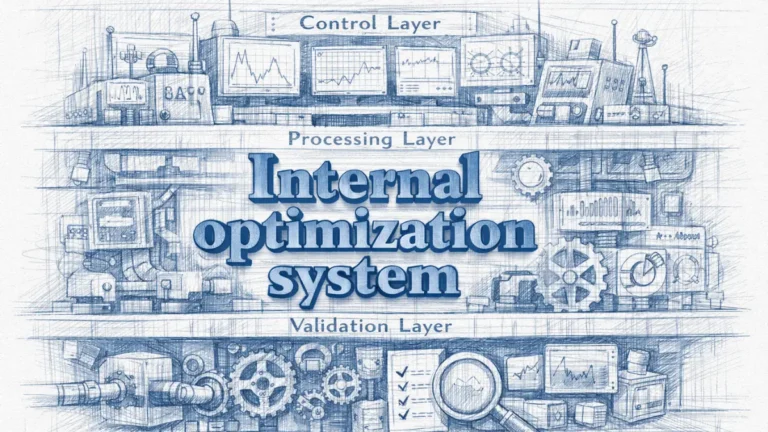

Brand credibility in generative systems determines how models evaluate factual stability and expertise consistency before producing outputs. Credibility modeling refers to the algorithmic evaluation of whether an entity demonstrates durable evidence alignment across machine-readable environments. Therefore, credibility operates as a structured probability layer rather than a subjective assessment.

Credibility modeling measures how consistently a brand aligns with verified data, validated institutions, and stable semantic references. It aggregates evidence across corpora and assigns probabilistic confidence weights. Research from MIT CSAIL demonstrates that large-scale AI architectures rely on structured knowledge validation and cross-referenced entity modeling to reduce uncertainty during inference. Consequently, credibility scoring directly influences generative output stability.

Claim: Generative systems model credibility as a probability distribution over trusted entities.

Rationale: AI minimizes hallucination risk by weighting reliable sources.

Mechanism: Knowledge graph cross-validation and contextual reinforcement loops shape credibility scoring.

Counterargument: Rapid content cycles may outpace validation layers.

Conclusion: Credibility modeling stabilizes generative outputs.
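The claim that credibility is modeled "as a probability distribution over trusted entities" can be pictured with a softmax, a standard way to turn raw scores into probabilities. The brand names, scores, and `temperature` parameter below are hypothetical; this shows the general technique, not how any particular platform scores entities.

```python
import math


def credibility_distribution(scores, temperature=1.0):
    """Convert raw credibility scores into a probability distribution (softmax).

    Lower temperature concentrates probability on the highest-scoring entity.
    """
    if not scores:
        raise ValueError("scores must not be empty")
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    # Subtract the max score for numerical stability before exponentiating.
    max_s = max(scores.values())
    exps = {k: math.exp((v - max_s) / temperature) for k, v in scores.items()}
    total = sum(exps.values())
    return {k: e / total for k, e in exps.items()}


scores = {"Brand A": 2.0, "Brand B": 1.0, "Brand C": 0.0}  # hypothetical scores
dist = credibility_distribution(scores)
print({k: round(p, 3) for k, p in dist.items()})
```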

Brand authority modeling for AI

Brand authority modeling for AI translates credibility signals into computational parameters. Models quantify citation density, institutional alignment, and semantic coherence to calculate entity-level reliability. As a result, authority becomes part of the scoring architecture that governs response generation.

AI systems construct internal representations of entities using graph embeddings and probabilistic mappings. These representations adjust dynamically as new validated data enters training or retrieval layers. Therefore, structured modeling ensures that authority reflects cumulative evidence rather than isolated mentions.

When authority modeling remains consistent across datasets, generative systems treat the brand as a stable reference point. This stability increases inclusion probability in structured answers and conversational outputs.

Brand authority credibility modeling

Brand authority credibility modeling integrates evidence signals with validation mechanisms to assign trust weights. Credibility signals include factual consistency, dataset alignment, and expert-linked attribution. Consequently, models calculate a confidence range that determines whether an entity qualifies for inclusion in generative reasoning.

Credibility modeling also evaluates anomaly detection and conflict resolution across sources. When discrepancies arise, models lower probabilistic weighting until further reinforcement occurs. Therefore, credibility becomes adaptive but evidence-bound.

In straightforward terms, models promote brands that repeatedly demonstrate accurate, verifiable, and consistent information across reliable datasets.
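Conflict resolution across sources can be sketched as a majority vote whose confidence is penalized while any source disagrees. The claims, the `penalty` factor, and the scoring rule are illustrative assumptions, not a described production mechanism.

```python
from collections import Counter


def consensus_weight(claims, penalty=0.5):
    """Given (source, value) claims about one attribute, return (value, weight).

    weight is the majority's share of claims, multiplied by `penalty` whenever
    at least one source disagrees with the majority.
    """
    if not claims:
        return None, 0.0
    tally = Counter(value for _, value in claims)
    value, votes = tally.most_common(1)[0]
    weight = votes / len(claims)
    if len(tally) > 1:
        weight *= penalty
    return value, weight


# Hypothetical claims about a brand's founding year.
claims = [("company_site", 2011), ("registry", 2011), ("blog", 2013)]
print(consensus_weight(claims))
```

Once the disagreeing source is corrected, the penalty no longer applies and the weight returns to full agreement, which matches the idea that weighting recovers after further reinforcement.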

Brand authority modeling frameworks

Brand authority modeling frameworks define the structural architecture that governs credibility scoring. These frameworks combine knowledge graph validation, source reliability metrics, and contextual reinforcement analysis. Accordingly, modeling frameworks transform dispersed signals into measurable credibility indices.

Frameworks often incorporate evaluation datasets and benchmark validation protocols aligned with standards such as those developed by the National Institute of Standards and Technology, which defines rigorous data quality and integrity evaluation methodologies. These validation layers reduce structural noise and strengthen probabilistic consistency.

In practical application, a modeling framework ensures that authority is not assumed but computationally verified through repeatable validation steps.

Validation datasets and evaluation benchmarks

Validation datasets and evaluation benchmarks serve as control layers for credibility modeling. Validation datasets contain curated, high-integrity references that models use to calibrate inference accuracy. Evaluation benchmarks measure whether generative outputs align with verified knowledge structures.

Benchmarks compare model outputs against structured ground-truth datasets to detect factual drift. When deviations exceed tolerance thresholds, credibility weighting adjusts accordingly. Therefore, validation mechanisms protect generative systems from uncontrolled variance.

Reliable evaluation datasets ensure that authority reflects verified consistency rather than short-term visibility spikes.
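The tolerance-threshold idea can be shown with a small benchmark check: compare outputs with a ground-truth record, compute an error rate, and scale a weight down if the rate exceeds a tolerance. The facts, `tolerance`, and `step` values are hypothetical placeholders.

```python
def factual_error_rate(outputs, ground_truth):
    """Fraction of benchmark keys where the output differs from ground truth."""
    if not ground_truth:
        return 0.0
    wrong = sum(1 for key, truth in ground_truth.items() if outputs.get(key) != truth)
    return wrong / len(ground_truth)


def adjust_weight(weight, error_rate, tolerance=0.1, step=0.8):
    """Scale the weight down by `step` when the error rate exceeds the tolerance."""
    return weight * step if error_rate > tolerance else weight


# Hypothetical verified facts and a model output with one drifted value.
ground_truth = {"hq_city": "Boston", "founded": 2011, "sector": "fintech"}
outputs = {"hq_city": "Boston", "founded": 2012, "sector": "fintech"}
err = factual_error_rate(outputs, ground_truth)
print(round(err, 3), adjust_weight(1.0, err))  # 0.333 0.8
```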

Citation frequency modeling

Citation frequency modeling quantifies how often a brand appears in validated contexts. Models track co-occurrence patterns, institutional references, and domain-specific citations to assess authority reinforcement. Consequently, repeated citations increase probabilistic trust allocation.

However, frequency alone does not determine credibility. Models cross-check citation context, source reliability, and semantic consistency before adjusting scores. Therefore, citation modeling functions as a structured signal within a broader validation architecture.

Repeated, contextually aligned citations strengthen credibility because they reduce interpretive uncertainty and confirm expertise alignment.
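Because frequency alone does not decide credibility, a slightly richer sketch weights each citation by an assumed source-reliability prior and counts it only if its context is on-topic. The reliability values and topic terms are invented for illustration and are not measured figures.

```python
# Hypothetical reliability priors per source type (illustrative, not measured).
SOURCE_RELIABILITY = {"peer_reviewed": 1.0, "regulator": 0.9, "news": 0.6, "forum": 0.2}


def weighted_citation_score(citations, on_topic_terms):
    """Sum reliability weights of citations whose context mentions an on-topic term.

    Unknown source types contribute 0.0.
    """
    score = 0.0
    for source_type, context in citations:
        if any(term in context.lower() for term in on_topic_terms):
            score += SOURCE_RELIABILITY.get(source_type, 0.0)
    return score


citations = [
    ("peer_reviewed", "Credit risk model benchmark"),
    ("forum", "credit risk tips thread"),
    ("news", "celebrity event sponsorship"),
]
print(len(citations), round(weighted_citation_score(citations, {"credit risk"}), 2))  # 3 1.2
```

The raw count is three, but only two citations are contextually aligned, and one of those comes from a low-reliability source.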

Microcase 1

A financial analytics firm implemented structured credibility audits across its public datasets and research publications. The firm standardized entity references, aligned citations with validated institutional datasets, and corrected semantic inconsistencies. Over six months, generative platforms began referencing the firm more frequently in synthesized financial summaries. Internal monitoring showed a measurable increase in citation inclusion across AI-driven responses, demonstrating how structured credibility modeling amplifies generative visibility.

Brand Authority and Knowledge Graph Inference

Brand authority in knowledge graph inference determines how generative systems reinforce credibility through relational structure. Knowledge graph inference refers to structured entity reasoning within AI systems that connect concepts through validated relationships. Therefore, authority is amplified when brands occupy stable, densely connected positions inside machine-readable graphs, and those positions in turn shape generative discovery outcomes.

Knowledge graphs encode entities, attributes, and relationships into structured triples that support probabilistic reasoning. Research from the Allen Institute for Artificial Intelligence (AI2) demonstrates that graph-based entity modeling improves contextual inference accuracy in large-scale AI systems. Consequently, brands embedded within validated relationship networks gain structural weighting during generative synthesis.

Claim: Knowledge graphs amplify brand authority through entity relationship reinforcement.

Rationale: Graph density improves inference reliability.

Mechanism: Cross-domain entity linking increases probability weighting.

Counterargument: Sparse domains limit graph amplification.

Conclusion: Authority scales through graph integration.
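The triple structure described above can be shown with plain Python tuples. This sketch uses invented entities and predicates and measures graph density for each node with a simple degree count (number of distinct neighbors). It illustrates the data structure, not any specific knowledge graph product.

```python
from collections import defaultdict

# Hypothetical (subject, predicate, object) triples; all names are invented.
triples = [
    ("Acme Analytics", "publishesDatasetWith", "Example Regulator"),
    ("Acme Analytics", "memberOf", "Example Data Consortium"),
    ("Example University", "coauthoredWith", "Acme Analytics"),
    ("Beta Corp", "locatedIn", "Example City"),
]


def neighbors(triples):
    """Build an undirected adjacency map (entity -> set of linked entities)."""
    adj = defaultdict(set)
    for subject, _predicate, obj in triples:
        adj[subject].add(obj)
        adj[obj].add(subject)
    return adj


adj = neighbors(triples)
degree = {node: len(nbrs) for node, nbrs in adj.items()}
print(degree["Acme Analytics"], degree["Beta Corp"])  # 3 1
```

A node linked to several validated entities sits in a denser region of the graph than an isolated one, which is the structural position the claim above refers to.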

Brand authority and entity trust

Brand authority and entity trust intersect at the point where relational stability determines credibility. Entity trust refers to the model’s confidence that a node consistently represents accurate and validated information. Therefore, when a brand connects to trusted institutions, datasets, and expert entities, trust reinforcement increases.

AI systems measure trust through edge stability and relationship validation across multiple corpora. Strong, repeated connections between a brand and authoritative entities raise inference reliability. Consequently, models prioritize brands embedded within high-integrity relational networks.

In practice, the more reliably a brand connects to validated entities, the more confidently generative systems integrate it into reasoning chains.

Brand authority semantic alignment

Brand authority semantic alignment measures whether a brand consistently appears within coherent thematic and conceptual clusters. Semantic alignment ensures that entity relationships reinforce expertise rather than produce contradictory signals. Therefore, alignment strengthens graph-based authority weighting.

When semantic clusters remain stable across datasets, inference engines reduce ambiguity. However, misaligned associations weaken relational confidence. Consequently, consistent thematic positioning supports durable authority scaling.

When a brand repeatedly aligns with specific validated domains, models interpret that consistency as expertise reinforcement.
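Semantic alignment can be approximated as the share of a brand's mentions that fall into its dominant topic cluster. The sketch assumes topic labels already come from some upstream classifier (hypothetical here); it only measures how concentrated those labels are.

```python
from collections import Counter


def alignment_share(topic_labels):
    """Return (dominant topic, share of mentions in that topic).

    `topic_labels` is assumed to come from an upstream topic classifier.
    """
    if not topic_labels:
        return None, 0.0
    topic, count = Counter(topic_labels).most_common(1)[0]
    return topic, count / len(topic_labels)


# Hypothetical topic labels for ten brand mentions.
labels = ["credit risk"] * 8 + ["crypto"] + ["sports sponsorship"]
print(alignment_share(labels))  # ('credit risk', 0.8)
```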

Brand authority inference signals

Brand authority inference signals represent the measurable outputs of graph-based reinforcement. These signals include relationship density, attribute consistency, cross-domain references, and expert linkage. Accordingly, inference systems convert relational patterns into probabilistic weighting.

Inference signals accumulate when entity connections persist across independent knowledge layers. However, weak or isolated relationships reduce amplification effects. Therefore, structural integration within dense graphs determines authority scaling potential.

Stable inference signals indicate that a brand occupies a reinforced relational position that supports generative inclusion.

| Entity Type | Relationship Strength Level | Trust Weight Allocation |
|---|---|---|
| Research Institution | High – multi-domain verified relational links | Strong weighting in generative explanations and summaries |
| Industry Regulator | Moderate – domain-specific validated connections | Elevated contextual credibility weighting |
| Peer Organization | Moderate – reciprocal structured references | Increased inclusion probability in comparative outputs |
| Dataset Provider | High – validated data alignment across systems | Sustained authority reinforcement across interfaces |
| Independent Source | Low – isolated or weak relational signals | Limited generative amplification |

Graph-based reinforcement increases authority as relational density expands. Consequently, brands integrated within validated and multi-domain knowledge structures experience scalable authority propagation across generative systems.

Authority Weighting in Conversational AI Outputs

Brand authority presence in AI summaries determines how conversational systems allocate probabilistic priority during multi-turn reasoning. Conversational weighting refers to the structured prioritization of entities based on confidence signals embedded in retrieval and reinforcement layers. Therefore, authority becomes a measurable influence on response construction rather than a secondary visibility factor.

Conversational models operate through iterative context accumulation and response refinement. Research published by OpenAI indicates that reinforcement learning from human feedback and retrieval-augmented generation can improve factual grounding by favoring reliable sources. Consequently, dialogue systems assign higher output probability to entities that exhibit stable authority markers.

Claim: Conversational AI prioritizes authority-consistent brands in summaries.

Rationale: Dialogue models favor stable, high-confidence entities.

Mechanism: Reinforcement learning and retrieval augmentation favor established authority signals.

Counterargument: Personalization layers may alter weighting.

Conclusion: Authority stability predicts summary prominence.

Principle: Generative systems prioritize brands whose credibility signals remain consistent across retrieval layers, reinforcement cycles, and conversational reasoning contexts.

Brand authority attribution in AI responses

Brand authority attribution in AI responses refers to how conversational systems explicitly or implicitly associate statements with recognized entities. Attribution emerges when models reference a brand as a source of validated knowledge within synthesized outputs. Therefore, consistent attribution signals structural authority reinforcement.

Attribution accuracy depends on contextual coherence and prior probability weighting. If a brand consistently aligns with verified expertise domains, models increase attribution frequency during generation. Consequently, authority attribution becomes an observable outcome of credibility modeling.

In practical terms, brands with stable semantic alignment appear more frequently as referenced entities within conversational explanations.

Brand authority prominence in AI citations

Brand authority prominence in AI citations measures how often a brand appears in structured references within generated answers. Prominence reflects not only mention frequency but also contextual positioning within summaries. Therefore, citation placement influences perceived authority weighting.

Conversational models prioritize entities with strong relational reinforcement when constructing structured summaries. However, if authority markers weaken, citation prominence declines accordingly. Consequently, stable relational density supports consistent citation positioning.

Brands that maintain validated cross-domain references tend to occupy higher-confidence positions within conversational outputs.

Brand authority reference frequency in AI

Brand authority reference frequency in AI quantifies how often generative systems integrate a brand into multi-turn dialogue outputs. Frequency modeling aggregates cross-session inclusion patterns to assess stability. Therefore, repeated reference presence signals durable authority weighting.

Reference frequency interacts with reinforcement learning cycles. As models receive positive feedback on grounded outputs, authority-aligned entities gain higher selection probability. Consequently, consistent reference frequency becomes both a signal and a result of authority reinforcement.

When conversational systems repeatedly integrate a brand into contextually relevant responses, that pattern indicates structural authority recognition.

Brand authority reinforcement loops

Brand authority reinforcement loops describe feedback cycles where repeated inclusion strengthens future probability weighting. Each authoritative reference increases contextual familiarity within model inference layers. Therefore, reinforcement loops amplify stable entities over time.

Reinforcement occurs through retrieval augmentation and feedback-informed optimization. When outputs referencing a brand demonstrate factual consistency, models adjust weighting upward. Consequently, stable reinforcement loops increase inclusion likelihood in subsequent responses.

Repeated alignment between authority signals and validated outcomes gradually elevates generative prominence.
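A reinforcement loop of this kind can be caricatured as an exponential moving average: each grounded output nudges the weight toward 1, and each ungrounded one nudges it toward 0. This is a deliberately simplified toy with a hypothetical learning rate `lr`; it is not RLHF or any platform's actual update rule.

```python
def reinforce(weight, grounded, lr=0.1):
    """Move weight toward 1.0 after a grounded output, toward 0.0 otherwise."""
    target = 1.0 if grounded else 0.0
    return weight + lr * (target - weight)


# Hypothetical sequence of verified (True) and unverified (False) outcomes.
w = 0.5
history = [w]
for outcome in [True, True, True, False, True]:
    w = reinforce(w, outcome)
    history.append(round(w, 4))
print(history)
```

Because each update moves only a fraction of the way to the target, a single failure lowers the weight without erasing accumulated reinforcement.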

Brand authority stability across outputs

Brand authority stability across outputs measures whether conversational systems maintain consistent inclusion across diverse prompts and sessions. Stability indicates durable probabilistic weighting independent of query phrasing. Therefore, stable entities persist across contextual variations.

Variability in inclusion suggests weakened authority signals or contextual misalignment. However, when inclusion remains consistent across different dialogue paths, structural authority is confirmed. Consequently, stability across outputs reflects durable conversational weighting.

Sustained presence across multiple conversational contexts demonstrates that authority has become embedded within generative reasoning patterns rather than triggered by isolated prompts.
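Stability across outputs can be monitored by sampling responses to several phrasings of the same question and measuring how often the brand appears in each group. The prompts, responses, and the choice of "minimum rate and spread" as the summary are hypothetical monitoring choices, not a standard metric.

```python
def inclusion_rate(responses, brand):
    """Fraction of responses that mention the brand (case-insensitive substring)."""
    if not responses:
        return 0.0
    hits = sum(1 for r in responses if brand.lower() in r.lower())
    return hits / len(responses)


def stability_across_prompts(responses_by_prompt, brand):
    """Return (lowest inclusion rate, spread between highest and lowest rate)."""
    rates = [inclusion_rate(rs, brand) for rs in responses_by_prompt.values()]
    return min(rates), max(rates) - min(rates)


# Hypothetical sampled answers to two phrasings of the same question.
samples = {
    "best credit risk data providers?": [
        "Acme Analytics and others...", "Consider Acme Analytics.",
        "Several vendors exist.", "Acme Analytics is often cited.",
    ],
    "who publishes credit risk datasets?": [
        "Acme Analytics publishes...", "Acme Analytics, among others.",
        "Public agencies do.", "See Acme Analytics.",
    ],
}
print(stability_across_prompts(samples, "Acme Analytics"))  # (0.75, 0.0)
```

A high minimum with a small spread suggests inclusion does not depend on the exact wording of the prompt.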

Trust Propagation and Authority Reinforcement Across Systems

Brand authority trust propagation explains how validated credibility signals transfer across independent generative environments. Trust propagation refers to the cross-system movement of structured authority markers between AI platforms that rely on overlapping datasets and reinforcement architectures. Therefore, a brand's generative discovery depends not only on its performance within a single platform but also on the continuity of its signals across multiple systems.

Trust propagation operates when citation patterns, entity coherence, and validation markers repeat across ecosystems. Research from the Oxford Internet Institute shows that interconnected digital systems amplify influence through networked information flows and shared data infrastructures. Consequently, authority signals reinforced in one environment increase probability weighting in others, directly shaping generative discovery dynamics.

Claim: Authority signals propagate across generative ecosystems.

Rationale: AI systems share training data and reinforcement patterns.

Mechanism: Cross-platform citations amplify systemic authority.

Counterargument: Platform-specific weighting models vary.

Conclusion: Multi-platform consistency strengthens generative visibility.

Brand authority amplification through AI

Brand authority amplification through AI occurs when reinforcement patterns compound across generative systems. Amplification increases as multiple platforms recognize consistent authority signals derived from validated references. Therefore, repetition across environments transforms isolated credibility into systemic influence.

AI systems process shared or overlapping data sources. When authority markers align across these sources, models strengthen confidence weighting during inference. Consequently, amplification extends beyond single-platform optimization and supports generative discovery across ecosystems.

In practical terms, authority that appears repeatedly across independent AI systems gains structural reinforcement and broader generative inclusion.

Brand authority systemic influence

Brand authority systemic influence refers to the cumulative impact of cross-platform authority recognition. Systemic influence emerges when authority signals persist across conversational agents, retrieval systems, and synthesis panels. Therefore, authority becomes embedded within network-level probabilistic structures.

Influence scales when reinforcement loops operate simultaneously across platforms. However, inconsistent validation weakens cross-system stability. Consequently, systemic influence depends on durable semantic coherence and validated references.

When multiple generative systems independently prioritize the same brand, authority transitions from localized weighting to ecosystem-level recognition.

Brand authority signal persistence

Brand authority signal persistence measures whether authority markers remain stable over time and across technological shifts. Persistence indicates that credibility signals survive model updates and dataset refresh cycles. Therefore, durable persistence strengthens long-term generative positioning.

Signal decay occurs when validation layers weaken or factual consistency declines. However, sustained reinforcement through accurate data and institutional references preserves authority continuity. Consequently, persistent authority supports stable multi-platform inclusion.

Stable signals that survive platform evolution demonstrate that authority has become structurally integrated rather than temporarily optimized.
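Signal decay and renewal can be modeled with exponential discounting: each reinforcement event loses half its weight every `half_life_days`, and fresh events restore the total. The half-life value and event data are assumptions for illustration only.

```python
import math


def decayed_signal(events, now, half_life_days=180.0):
    """Sum reinforcement events, each discounted by exp(-ln2 * age / half_life).

    `events` is a list of (day, weight) pairs; events after `now` are ignored.
    """
    rate = math.log(2) / half_life_days
    return sum(weight * math.exp(-rate * (now - day)) for day, weight in events if day <= now)


old_only = [(0, 1.0)]             # one validation event, never renewed
renewed = [(0, 1.0), (180, 1.0)]  # the same event plus a fresh reinforcement
print(round(decayed_signal(old_only, now=180), 3), round(decayed_signal(renewed, now=180), 3))  # 0.5 1.5
```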

Microcase 2

A global SaaS provider implemented structured factual reinforcement across research publications, technical documentation, and institutional collaborations. The company standardized entity references and aligned all citations with verified datasets and regulatory documentation. Within eight months, conversational AI systems began consistently referencing the provider in cross-platform summaries and technical explanations. Multi-environment monitoring confirmed increased inclusion frequency, demonstrating how coordinated trust propagation strengthens systemic authority stability.

Authority Scoring and Evidence Signals in Generative Ranking

Brand authority scoring for AI platforms determines how generative systems quantify reliability before allocating exposure. Authority scoring refers to the algorithmic assessment of reliability indicators derived from validated evidence layers. Therefore, scoring transforms abstract credibility into measurable ranking parameters inside generative architectures.

Authority scoring systems evaluate structured datasets, citation density, expert attribution, and entity stability. Standards developed by the National Institute of Standards and Technology (NIST) define rigorous principles for data integrity, validation frameworks, and reliability benchmarking in computational systems. Consequently, generative ranking integrates structured evidence verification to improve output trust and reduce probabilistic uncertainty.

Claim: Generative ranking systems incorporate authority scoring layers.

Rationale: Evidence-based prioritization improves output trust.

Mechanism: Signal aggregation includes citation networks and entity validation.

Counterargument: Low-data entities receive limited visibility.

Conclusion: Authority scoring shapes generative exposure probability.

Brand authority evidence signals

Brand authority evidence signals represent measurable indicators that confirm reliability and expertise consistency. These signals include structured citations, peer-reviewed references, institutional validation, and cross-domain semantic reinforcement. Accordingly, generative systems evaluate evidence density before allocating visibility.

Evidence signals operate cumulatively rather than independently. When citation patterns align with validated datasets and consistent thematic positioning, scoring layers increase probabilistic weighting. Consequently, evidence-driven reinforcement strengthens inclusion likelihood across generative outputs.

In practice, evidence signals function as structured reliability markers that convert documented expertise into computational trust.

Brand authority validation mechanisms

Brand authority validation mechanisms define how systems verify the integrity of evidence signals. Validation mechanisms include dataset cross-checking, anomaly detection, consistency audits, and structured metadata verification. Therefore, validation ensures that authority reflects verified consistency rather than surface-level mentions.

Generative architectures apply multi-layer validation pipelines to detect conflicts or semantic drift. If inconsistencies emerge, authority scores adjust downward until additional reinforcement restores confidence. Consequently, validation mechanisms stabilize scoring outcomes.

When validation processes operate continuously, authority scoring remains aligned with factual accuracy and institutional integrity.

Brand authority credibility modeling

Brand authority credibility modeling integrates evidence signals and validation results into probabilistic ranking frameworks. Credibility modeling transforms structured indicators into numerical weighting parameters that influence generative ranking decisions. Therefore, modeling converts qualitative authority into quantitative exposure probability.

Credibility models evaluate reliability across temporal intervals to prevent short-term volatility. However, insufficient data reduces scoring confidence and limits exposure amplification. Consequently, credibility modeling rewards sustained evidence accumulation rather than isolated prominence.

Consistent credibility modeling ensures that ranking reflects durable authority patterns instead of temporary visibility spikes.
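The observation that "insufficient data reduces scoring confidence" corresponds to a familiar statistical technique: Bayesian shrinkage with a Beta prior. With few verified observations, the estimate stays close to the prior; with many, it approaches the observed rate. The prior mean and strength below are hypothetical settings.

```python
def shrunk_reliability(verified, total, prior_mean=0.5, prior_strength=10.0):
    """Posterior mean of a Beta prior updated with `verified` successes out of `total`.

    prior_strength acts like a number of pseudo-observations at prior_mean.
    """
    if not 0 <= verified <= total:
        raise ValueError("need 0 <= verified <= total")
    alpha = prior_mean * prior_strength + verified
    beta = (1 - prior_mean) * prior_strength + (total - verified)
    return alpha / (alpha + beta)


# Two hypothetical brands, both with a perfect record but different data volume.
print(round(shrunk_reliability(3, 3), 3), round(shrunk_reliability(300, 300), 3))
```

Both brands are 100 percent accurate on the observed checks, yet the one with only three observations receives a noticeably lower estimate, which rewards sustained evidence accumulation over isolated prominence.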

| Authority Signal | Evidence Type | Ranking Impact |
|---|---|---|
| Structured citations | Peer-reviewed references and institutional sources | Increased probability of inclusion in generative summaries |
| Entity validation | Knowledge graph alignment and identity consistency | Higher trust weighting during probabilistic selection |
| Expert attribution | Recognized academic or regulatory endorsements | Elevated credibility ranking across conversational outputs |
| Cross-domain presence | Verified references in multiple knowledge sectors | Broader generative exposure across platforms |
| Temporal consistency | Longitudinal data stability and repeated validation | Sustained authority scoring across model update cycles |

Authority scoring aggregates these evidence layers into structured ranking decisions. Consequently, generative systems prioritize entities that demonstrate validated, persistent, and cross-domain reliability.

Example: When a brand maintains validated citations, stable entity alignment, and cross-platform reinforcement, generative systems assign higher authority scores, resulting in increased inclusion probability within AI-generated summaries and contextual responses.
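The aggregation step can be sketched as a weighted sum over the five signal types in the table above, each pre-normalized to the range 0 to 1. The weights are invented for illustration; the source does not specify how any platform balances these signals.

```python
# Hypothetical weights for the table's five signal types (they sum to 1.0).
WEIGHTS = {
    "structured_citations": 0.30,
    "entity_validation": 0.25,
    "expert_attribution": 0.20,
    "cross_domain_presence": 0.15,
    "temporal_consistency": 0.10,
}


def authority_score(signals):
    """Weighted sum of signals normalized to [0, 1]; missing signals count as 0."""
    for name, value in signals.items():
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be in [0, 1], got {value}")
    return sum(weight * signals.get(name, 0.0) for name, weight in WEIGHTS.items())


brand = {
    "structured_citations": 0.9,
    "entity_validation": 0.8,
    "expert_attribution": 0.6,
    "cross_domain_presence": 0.5,
    "temporal_consistency": 0.7,
}
print(round(authority_score(brand), 3))
```

A linear sum is the simplest choice; a real system could just as plausibly use learned, nonlinear combinations.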

Brand Authority Across AI Platforms and Long-Term Stability

Brand authority across AI platforms determines whether credibility signals remain stable in independent generative ecosystems. Cross-platform stability refers to consistent authority recognition across distinct AI systems that operate with different architectures, training corpora, and retrieval mechanisms. Therefore, stability becomes a structural requirement for durable inclusion in brand authority generative discovery processes.

Long-term authority depends on cumulative reinforcement rather than isolated optimization. Research from the Harvard Data Science Initiative demonstrates that reproducible data modeling and persistent validation frameworks strengthen reliability across evolving computational environments. Consequently, sustained authority patterns increase the probability that generative systems maintain consistent weighting over time.

Claim: Long-term authority stability determines generative persistence.

Rationale: AI memory systems accumulate entity reinforcement patterns.

Mechanism: Persistent exposure increases selection probability in generative outputs.

Counterargument: Reputational shocks reduce authority weighting.

Conclusion: Authority persistence ensures generative durability.

Brand authority signal architecture

Brand authority signal architecture defines how credibility markers are structured, documented, and reinforced across environments. Architecture refers to the organized framework that governs entity validation, citation alignment, and semantic consistency. Therefore, structured architecture prevents fragmentation of authority signals.

Signal architecture includes standardized naming conventions, verified reference mapping, and consistent metadata frameworks. When architectural integrity remains intact, generative systems detect stable authority patterns despite platform variation. Consequently, architecture enables scalable reinforcement across independent AI ecosystems.

In operational terms, a coherent signal architecture ensures that authority markers remain synchronized across platforms and resist semantic drift.
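Standardized naming conventions are the most mechanical part of signal architecture, so they are easy to check automatically. The sketch below takes a hypothetical mapping from a platform label to the brand name as it appears on that platform. It canonicalizes each name with Unicode NFKC normalization, case folding, and punctuation and whitespace cleanup. It then groups platforms by the canonical result, so more than one group indicates naming drift.

```python
import re
import unicodedata


def normalize_entity_name(name: str) -> str:
    """Canonical form: NFKC-normalized, case-folded, punctuation removed, whitespace collapsed."""
    text = unicodedata.normalize("NFKC", name).casefold()
    text = re.sub(r"[^\w\s]", " ", text)  # replace punctuation with spaces
    return " ".join(text.split())


def find_naming_drift(mentions: dict[str, str]) -> dict[str, set[str]]:
    """Group platform labels by canonical brand name; more than one group signals drift."""
    groups: dict[str, set[str]] = {}
    for platform, name in mentions.items():
        groups.setdefault(normalize_entity_name(name), set()).add(platform)
    return groups
```

For example, "Acme Corp." and "ACME  Corp" collapse to the same canonical form. "Acme Corporation" forms a second group and would be flagged for review.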

Brand authority systemic influence

Brand authority systemic influence emerges when authority signals propagate across multiple AI systems simultaneously. Systemic influence reflects ecosystem-level recognition rather than platform-specific weighting. Therefore, brands with durable authority achieve persistent presence across conversational agents, synthesis panels, and retrieval-driven interfaces.

Systemic influence strengthens as cross-platform reinforcement accumulates. However, fragmentation or inconsistency reduces probabilistic stability. Consequently, durable systemic authority depends on synchronized validation and consistent evidence signals.

When multiple AI systems independently assign high confidence to the same brand, that alignment confirms structural authority integration.

Brand authority signal consistency

Brand authority signal consistency measures whether authority markers remain aligned across datasets, time periods, and technological updates. Consistency reduces interpretive variance and stabilizes probabilistic weighting across evolving models. Therefore, stable reinforcement patterns support long-term inclusion.

Signal inconsistency weakens authority persistence because generative systems adjust weighting based on observed reliability. However, durable semantic alignment preserves authority recognition despite model retraining cycles. Consequently, consistent authority patterns strengthen brand authority generative discovery over extended time horizons.

Sustained cross-platform consistency ensures that authority becomes embedded within generative reasoning frameworks rather than dependent on temporary optimization conditions.
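One way to quantify signal consistency is an index based on the coefficient of variation of inclusion rates, measured per platform or per period. The definition below is a hypothetical choice for illustration. It returns 1.0 when all rates are identical and falls toward 0 as the spread approaches the average rate.

```python
from statistics import mean, pstdev


def consistency_index(rates: list[float]) -> float:
    """Hypothetical consistency index: 1 minus the coefficient of variation, clamped to [0, 1].

    rates: inclusion rates for the same brand, one per platform or per time period.
    Returns 1.0 when every rate is identical. Returns 0.0 when the brand is never
    included, because an average rate of 0 leaves nothing to be consistent about.
    """
    if not rates:
        raise ValueError("need at least one rate")
    avg = mean(rates)
    if avg == 0:
        return 0.0
    cv = pstdev(rates) / avg
    return max(0.0, 1.0 - cv)
```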

Measuring Brand Authority Impact on Generative Discovery

Brand authority impact on AI citations describes how strongly authority influences the frequency and prominence with which a brand appears in generative outputs. Impact measurement refers to the quantifiable analysis of citation presence, positional prominence, and contextual weighting across AI-generated responses. Therefore, measurement transforms abstract visibility into structured evidence that informs brand authority generative discovery strategy.

Impact analysis requires systematic sampling across conversational agents, synthesis panels, and retrieval-augmented interfaces. Research from Berkeley Artificial Intelligence Research (BAIR) highlights the importance of evaluation frameworks that assess model behavior through structured benchmarking and probabilistic output analysis. Consequently, authority impact becomes observable through measurable citation frequency and response prominence patterns.

Claim: Authority impact is measurable through generative citation analysis.

Rationale: Citation frequency reflects model confidence weighting.

Mechanism: Response sampling across platforms reveals prominence patterns.

Counterargument: Closed-source systems limit transparency.

Conclusion: Structured measurement enables authority optimization.

| Metric | Before Authority Stabilization | After Stabilization |
|---|---|---|
| Average citation frequency per 100 sampled responses | 3 references | 11 references |
| Summary inclusion rate | 18% of outputs | 46% of outputs |
| Cross-platform consistency index | Low alignment (0.32) | High alignment (0.74) |
| Contextual prominence placement | Secondary mention in 70% of cases | Primary mention in 62% of cases |
| Multi-domain reference coverage | Limited to 1–2 domains | Expanded to 5+ domains |

These metrics demonstrate how authority stabilization alters probabilistic weighting and increases structural prominence across generative systems.
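Three of the metrics in the table can be computed directly from a sample of generative responses: citation frequency, inclusion rate, and primary-mention share. The sketch assumes a hypothetical `SampledResponse` record that lists the entities each response mentions, in order of appearance. Collecting those responses from real platforms is outside its scope. "Primary mention" is taken here to mean that the brand is the first entity mentioned, measured over the responses that include it.

```python
from dataclasses import dataclass, field


@dataclass
class SampledResponse:
    """One sampled generative answer (hypothetical schema)."""
    platform: str
    mentions: list[str] = field(default_factory=list)  # entities in order of appearance


def impact_metrics(responses: list[SampledResponse], brand: str) -> dict[str, float]:
    """Citation frequency, inclusion rate, and primary-mention share for one brand."""
    if not responses:
        raise ValueError("no sampled responses")
    n = len(responses)
    citations = sum(r.mentions.count(brand) for r in responses)
    included = [r for r in responses if brand in r.mentions]
    primary = sum(1 for r in included if r.mentions[0] == brand)
    return {
        "citations_per_100": 100 * citations / n,
        "inclusion_rate": len(included) / n,
        # Share of the brand-including responses in which the brand is mentioned first.
        "primary_share": primary / len(included) if included else 0.0,
    }
```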

Brand authority ranking in AI systems

Brand authority ranking in AI systems refers to how probabilistic models prioritize entities within structured outputs. Ranking depends not only on position but also on contextual weighting and relevance confidence. Therefore, ranking analysis must evaluate both inclusion frequency and structural prominence.

Ranking shifts occur when credibility signals accumulate across validated environments. However, ranking declines if authority markers weaken or become inconsistent. Consequently, continuous monitoring ensures that authority reinforcement remains aligned with generative weighting mechanisms.

When ranking indicators improve across sampling cycles, that change reflects increased probabilistic confidence within generative inference layers.
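Mean reciprocal rank (MRR) is a standard information-retrieval metric. It offers one simple way to fold inclusion frequency and structural prominence into a single number. Each sampled response contributes 1/position of the brand's first appearance, or 0 when the brand is absent. Treating a response's entity order as its ranking is an assumption of this sketch.

```python
def mean_reciprocal_rank(rankings: list[list[str]], brand: str) -> float:
    """Average of 1/position of the brand's first appearance; absent responses count as 0."""
    if not rankings:
        return 0.0
    total = 0.0
    for ranked in rankings:
        if brand in ranked:
            total += 1.0 / (ranked.index(brand) + 1)  # positions are 1-based
    return total / len(rankings)
```

Computing the value once per sampling cycle and comparing cycles produces the kind of ranking trend described above.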

Brand authority context reinforcement

Brand authority context reinforcement measures how often a brand appears within supportive semantic clusters. Reinforcement strengthens when references co-occur with validated entities and stable thematic domains. Therefore, contextual density increases confidence allocation.

Context reinforcement analysis evaluates whether generative systems associate the brand with consistent expertise signals. However, fragmented associations weaken contextual credibility. Consequently, sustained semantic alignment supports durable authority amplification.

When contextual reinforcement remains stable across sampling intervals, generative inclusion probability rises accordingly.
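Contextual density can be approximated as the share of brand-mentioning responses that also mention at least one validated entity. In this sketch, each response is reduced to a set of entity names. The `validated` set is a hypothetical input supplied by the analyst, such as standards bodies or institutions already treated as trusted.

```python
def context_density(responses: list[set[str]], brand: str, validated: set[str]) -> float:
    """Share of brand-mentioning responses that co-mention at least one validated entity."""
    with_brand = [entities for entities in responses if brand in entities]
    if not with_brand:
        return 0.0
    others = validated - {brand}  # the brand itself does not count as supporting context
    supported = sum(1 for entities in with_brand if entities & others)
    return supported / len(with_brand)
```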

Brand authority loops

Brand authority loops describe feedback cycles where repeated citation increases future inclusion likelihood. Each authoritative mention reinforces entity familiarity within inference layers. Therefore, loops compound over time and strengthen structural weighting.

Authority loops accelerate when reinforcement aligns with validated evidence and contextual stability. However, inconsistent data disrupts loop continuity. Consequently, durable loops depend on stable credibility modeling and evidence validation.

When citation cycles repeat across platforms and time intervals, authority becomes self-reinforcing within generative systems, increasing sustained exposure probability.
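The compounding behavior of an authority loop can be illustrated with a deterministic toy model. This sketch rests on stated assumptions and does not describe any real system. Expected inclusion probability `p` grows by `gain * p * (1 - p)` each cycle (logistic reinforcement). In cycles marked as disrupted by inconsistent data, `p` is instead cut by a fixed `penalty`.

```python
def simulate_authority_loop(
    p0: float,
    steps: int,
    gain: float = 0.3,
    disruptions: frozenset[int] = frozenset(),
    penalty: float = 0.5,
) -> list[float]:
    """Toy feedback model of expected inclusion probability per cycle.

    Normal cycle:    p <- p + gain * p * (1 - p)   (citations reinforce future inclusion)
    Disrupted cycle: p <- p * (1 - penalty)         (inconsistent data breaks the loop)
    All parameters are illustrative assumptions, not measured values.
    """
    if not 0.0 <= p0 <= 1.0:
        raise ValueError("p0 must be a probability")
    if not 0.0 < gain <= 1.0 or not 0.0 <= penalty <= 1.0:
        raise ValueError("gain must be in (0, 1] and penalty in [0, 1]")
    p = p0
    history = [p]
    for cycle in range(steps):
        if cycle in disruptions:
            p *= 1.0 - penalty
        else:
            p += gain * p * (1.0 - p)
        history.append(p)
    return history
```

The update increases with `p` when `gain <= 1`. As a result, a single disruption keeps the trajectory below the undisrupted one for the rest of the simulated horizon. This mirrors the point that inconsistent data breaks loop continuity.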

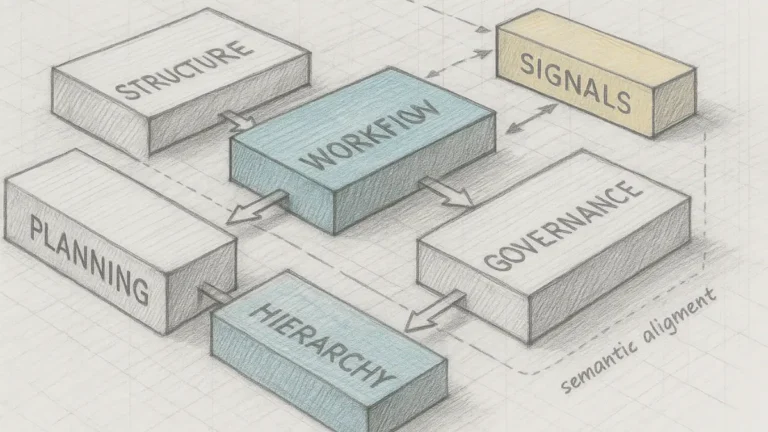

Strategic Architecture for Brand Authority in Generative Discovery

Brand authority generative discovery requires systematic coordination of credibility signals across AI-mediated ecosystems. Authority architecture refers to the structured governance framework that manages entity validation, semantic consistency, and trust propagation at scale. Therefore, sustainable generative inclusion depends on disciplined signal design rather than isolated optimization.

Authority architecture integrates entity modeling, evidence validation, and cross-platform reinforcement into a unified governance layer. Policy research from the OECD emphasizes the importance of structured digital governance frameworks for maintaining transparency, accountability, and data integrity across interconnected systems. Consequently, strategic authority architecture aligns credibility management with long-term generative visibility stability.

Claim: Structured authority architecture determines generative discoverability outcomes.

Rationale: AI systems reward consistent semantic integrity.

Mechanism: Integrated entity governance, factual validation, and trust propagation reinforce authority signals.

Counterargument: Fragmented governance reduces signal coherence.

Conclusion: Strategic authority architecture enables sustainable generative visibility.

Brand authority modeling frameworks

Brand authority modeling frameworks define how organizations translate credibility signals into structured computational layers. These frameworks standardize entity naming, validate reference integrity, and align thematic positioning across datasets. Therefore, modeling frameworks prevent semantic drift and reinforce structural clarity.

Framework implementation requires coordination between content governance, data engineering, and validation workflows. However, isolated content updates without systemic alignment weaken reinforcement cycles. Consequently, unified modeling frameworks ensure that authority signals remain synchronized across AI systems.

When frameworks operate consistently, generative systems interpret authority as stable and reproducible rather than episodic.

Brand authority trust factors in generative outputs

Brand authority trust factors in generative outputs include factual consistency, expert attribution, cross-domain validation, and semantic coherence. These factors collectively influence probabilistic inclusion and citation prominence. Therefore, trust factors must be monitored continuously rather than assumed.

Trust factors strengthen when outputs consistently align with verified datasets and institutional references. However, contradictory signals reduce weighting across conversational systems. Consequently, trust management becomes an operational requirement within authority architecture.

When trust factors remain aligned across multiple inference cycles, generative systems maintain durable inclusion probability.

Brand reputation propagation in AI systems

Brand reputation propagation in AI systems refers to the spread of validated authority signals across generative platforms. Propagation occurs when consistent reinforcement patterns transfer between training datasets and retrieval layers. Therefore, reputation becomes computationally embedded within probabilistic inference structures.

Propagation depends on signal durability and cross-platform reinforcement consistency. However, fragmented evidence weakens systemic recognition. Consequently, reputation propagation requires structured governance and coordinated validation strategies.

When propagation operates effectively, authority becomes ecosystem-level rather than platform-specific.

Governance controls

Governance controls regulate how authority signals are created, validated, and updated. Controls include standardized entity documentation, editorial oversight protocols, and structured validation checkpoints. Therefore, governance prevents uncontrolled signal variability.

Effective governance requires documented workflows and accountability mechanisms. However, absent controls produce fragmented credibility patterns. Consequently, disciplined governance ensures stable authority reinforcement across evolving AI environments.

Structured governance aligns operational practices with generative visibility objectives.

Evidence documentation

Evidence documentation records the validation basis for authority signals. Documentation includes dataset references, citation mapping, institutional affiliations, and temporal update logs. Therefore, documentation transforms credibility claims into verifiable records.

Accurate documentation enables reproducible validation across platforms. However, incomplete records weaken trust reinforcement cycles. Consequently, systematic documentation supports sustainable authority architecture.

When evidence remains transparent and traceable, generative systems interpret authority signals as structurally reliable.
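The documentation elements listed above map naturally onto a record type. The `EvidenceRecord` schema below, its field names, and the 365-day staleness threshold are hypothetical choices for illustration. Each authority signal carries its own references and update history, so gaps can be detected mechanically.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class EvidenceRecord:
    """Documentation for one authority signal (hypothetical schema)."""
    signal: str
    dataset_refs: list[str] = field(default_factory=list)
    citations: list[str] = field(default_factory=list)
    affiliations: list[str] = field(default_factory=list)
    update_log: list[date] = field(default_factory=list)

    def missing_fields(self) -> list[str]:
        """Names of documentation fields that are still empty."""
        required = ("dataset_refs", "citations", "affiliations", "update_log")
        return [name for name in required if not getattr(self, name)]

    def is_stale(self, today: date, max_age_days: int = 365) -> bool:
        """True when the latest update is older than max_age_days, or when none exists."""
        if not self.update_log:
            return True
        return (today - max(self.update_log)).days > max_age_days
```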

Signal monitoring

Signal monitoring tracks authority performance across conversational outputs, citation frequency, and cross-platform consistency metrics. Monitoring detects deviations, volatility, or weakening reinforcement patterns. Therefore, continuous observation protects long-term authority stability.

Monitoring frameworks combine response sampling, prominence analysis, and contextual density measurement. However, irregular monitoring allows unnoticed signal degradation. Consequently, structured monitoring sustains alignment between governance strategy and generative visibility outcomes.

When monitoring operates continuously, authority architecture adapts proactively to maintain stability.
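A basic form of signal monitoring compares each new measurement with a trailing baseline. The sketch uses a rolling z-score. It flags sampling cycles whose value departs from the mean of the previous `window` cycles by more than `threshold` sample standard deviations. The window size and threshold are illustrative defaults. The input series could be any tracked metric, such as inclusion rate per cycle.

```python
from statistics import mean, stdev


def detect_deviations(series: list[float], window: int = 4, threshold: float = 2.0) -> list[int]:
    """Indices whose value departs from the trailing-window mean by more than `threshold` stdevs."""
    if window < 2:
        raise ValueError("window must contain at least two points")
    flagged: list[int] = []
    for i in range(window, len(series)):
        baseline = series[i - window:i]
        mu = mean(baseline)
        sigma = stdev(baseline)
        if sigma == 0:
            # A perfectly flat baseline: any change at all counts as a deviation.
            if series[i] != mu:
                flagged.append(i)
        elif abs(series[i] - mu) / sigma > threshold:
            flagged.append(i)
    return flagged
```

For a series of inclusion rates such as `[0.40, 0.42, 0.41, 0.43, 0.42, 0.20, 0.41]`, only the sudden drop at index 5 is flagged.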

Generative ecosystems increasingly reward structured credibility rather than isolated optimization. Enterprises that integrate modeling frameworks, validation controls, documentation systems, and monitoring protocols achieve durable visibility across AI-mediated environments. Consequently, sustainable generative inclusion depends on systematic authority architecture rather than tactical content adjustments.

Brand authority generative discovery therefore represents a structural discipline that integrates governance, validation, and cross-platform reinforcement into a unified credibility system. Organizations that institutionalize this architecture secure long-term generative persistence through consistent semantic integrity and measurable authority stability.

Checklist:

- Are authority signals documented and validated across datasets?

- Is entity naming consistent across all platforms?

- Do citation networks reinforce cross-domain credibility?

- Are knowledge graph relationships stable and verifiable?

- Is authority scoring monitored across AI platforms?

- Does governance architecture prevent semantic drift?

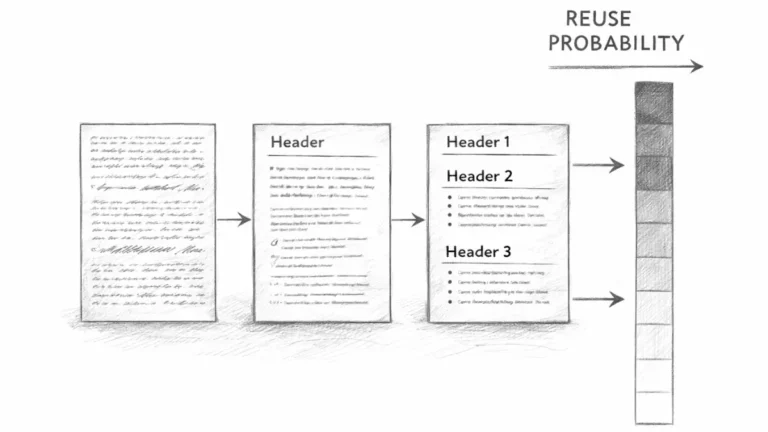

Architectural Interpretation Layer in Generative Entity Systems

- Entity-weighted section segmentation. Clearly separated semantic blocks allow generative systems to associate authority signals with discrete conceptual units, reducing cross-context ambiguity.

- Inference-aligned heading depth. Hierarchical H2→H3→H4 structuring reflects layered reasoning paths that models interpret as progressive confidence refinement rather than linear text flow.

- Signal density clustering. Concentrated credibility markers within defined sections create detectable reinforcement zones that influence probabilistic weighting during synthesis.

- Cross-sectional semantic coherence. Consistent terminology and stable entity references enable models to construct persistent internal representations without semantic drift.

- Deterministic reasoning boundaries. Clearly bounded analytical units function as extraction-ready modules, improving interpretability within retrieval-augmented and conversational architectures.

These architectural properties clarify how generative systems parse, stabilize, and weight authority signals across structured documents without relying on external interpretive heuristics.

FAQ: Brand Authority in Generative Discovery

What is brand authority in generative discovery?

Brand authority in generative discovery refers to the measurable pattern of credibility, expertise consistency, and citation stability that AI systems recognize when generating answers.

How do generative systems evaluate brand authority?

Generative systems evaluate citation frequency, entity coherence, validation signals, and cross-domain reinforcement to assign probabilistic trust weighting.

Why does authority influence AI summaries?

Conversational models prioritize stable, validated entities to reduce uncertainty and improve factual grounding in synthesized responses.

What role do knowledge graphs play in authority recognition?

Knowledge graphs reinforce authority through structured entity relationships, cross-domain linking, and relational density that increase inference reliability.

How is brand credibility modeled in AI systems?

Credibility modeling aggregates citation networks, validation datasets, and entity stability signals into scoring layers that influence generative ranking.

What is authority scoring in generative ranking?

Authority scoring is the algorithmic assessment of reliability indicators that determine exposure probability in generative outputs.

How does authority propagate across AI platforms?

Authority propagates when consistent validation signals appear across multiple AI systems that rely on overlapping datasets and reinforcement architectures.

How can authority impact be measured?

Impact is measurable through citation frequency analysis, summary inclusion rates, contextual prominence tracking, and cross-platform consistency metrics.

Why is cross-platform stability important?

Cross-platform stability ensures that authority signals remain consistent across independent generative systems, supporting long-term visibility.

What determines sustainable generative visibility?

Sustainable generative visibility depends on structured authority architecture that integrates entity governance, factual validation, and trust propagation.

Glossary: Key Terms in Brand Authority Modeling

This glossary defines the core terminology used throughout the article to ensure consistent interpretation of brand authority signals within generative AI systems.

Brand Authority

A measurable pattern of credibility, expertise consistency, and citation stability recognized across generative AI environments.

Credibility Modeling

The algorithmic process of evaluating factual stability, entity coherence, and validation signals to assign probabilistic trust weighting.

Knowledge Graph Inference

Structured entity reasoning within AI systems that reinforces authority through validated relationships and relational density.

Authority Scoring

The computational assessment of reliability indicators such as citation networks, entity validation, and cross-domain consistency.

Trust Propagation

The cross-system transfer of validated authority signals across multiple AI platforms that rely on overlapping datasets and reinforcement models.

Cross-Platform Stability

Consistent recognition of authority signals across independent generative systems over time and model updates.

Entity Coherence

The degree to which a brand maintains consistent naming, domain alignment, and relational positioning within AI knowledge structures.

Citation Frequency Modeling

Quantitative analysis of how often a brand appears within validated contexts across generative outputs.

Authority Architecture

A systematic governance framework that manages credibility signals, validation processes, and cross-system reinforcement mechanisms.

Generative Visibility

The measurable presence of a brand within AI-generated summaries, citations, and contextual responses across conversational systems.