Last Updated on February 23, 2026 by PostUpgrade

How to Future-Proof Your Website for AI Search

An AI search readiness strategy is no longer optional for organizations that depend on digital visibility. An effective strategy establishes structural conditions that allow systems to interpret, extract, and reuse content without ambiguity. Consequently, companies that aim to future-proof their websites for AI environments must move beyond link-based optimization and toward structural coherence. AI-driven discovery preparation therefore becomes a foundational discipline rather than a tactical adjustment, and next-generation search readiness depends on architectural control.

AI search refers to AI-mediated information retrieval systems that synthesize responses instead of presenting ranked lists of hyperlinks. These systems generate answers by combining probabilistic modeling, entity resolution, and contextual weighting. As a result, visibility depends on semantic consistency and structural clarity rather than isolated ranking factors. Generative retrieval environments prioritize stable definitions, verifiable claims, and predictable information hierarchies.

Future-proofing means systematic structural preparation for long-term machine interpretation stability. It requires controlled terminology, explicit semantic containers, and governance mechanisms that prevent drift over time. Accordingly, this article addresses architecture design, governance systems, semantic engineering principles, performance metrics, and enterprise workflows. Each component contributes to durable interpretability in AI-mediated ecosystems where structural precision directly influences inclusion.

AI Search Ecosystem Shift and Structural Implications

The AI search readiness framework requires structural adaptation to multi-agent generative environments. According to research from the Stanford Natural Language Processing Group, modern retrieval systems combine entity modeling, probabilistic reasoning, and contextual ranking within unified generative pipelines. The AI search ecosystem consists of multi-agent generative retrieval systems that prioritize synthesis over indexing. Consequently, structural implications extend beyond ranking factors and reshape how content must be engineered.

Claim: Websites must adapt to generative retrieval logic.

Rationale: AI systems synthesize structured meaning instead of ranking hyperlinks.

Mechanism: Retrieval-augmented generation integrates structured signals into probabilistic response construction.

Counterargument: Traditional SEO structures may still generate measurable traffic in hybrid environments.

Conclusion: Long-term positioning requires structural adaptation aligned with generative reasoning models.

Definition: AI understanding is the capacity of generative retrieval systems to interpret structured semantic units, entity relationships, and hierarchical boundaries in a way that enables stable synthesis, citation eligibility, and cross-context reuse within AI-mediated search environments.

From Link-Based Retrieval to Synthesis-Based Retrieval

Traditional search systems ranked documents based on backlink authority, anchor signals, and keyword distribution. However, generative systems reconstruct meaning by extracting semantic units and recombining them into synthesized responses. Therefore, an AI search transformation strategy must prioritize structured interpretation rather than isolated ranking signals.

Generative retrieval evaluates entity coherence, contextual stability, and evidence alignment. Consequently, an AI-discovery aligned content model organizes information into predictable semantic containers that machines can reuse. This shift reduces dependence on link metrics and increases reliance on structural clarity and explicit definitions.

In practice, systems no longer reward isolated pages but instead favor content that supports coherent synthesis across multiple contexts.

Structural Signals in Generative Retrieval

Generative systems evaluate structural signals that indicate interpretability, stability, and semantic alignment. Therefore, AI-compatible site architecture must expose hierarchical clarity and deterministic relationships between concepts. Structural design becomes a measurable input to retrieval models rather than a secondary presentation layer.

Moreover, machine-aligned website structure ensures that entities, mechanisms, and implications appear in predictable patterns. Retrieval systems assign higher confidence to pages that reduce ambiguity and maintain stable terminology across sections. As a result, structured meaning blocks replace isolated optimization tactics.

In effect, structure becomes a primary signal of reliability because it enables consistent extraction and recombination of content elements.

| Retrieval Model | Primary Signal | Structural Requirement |
|---|---|---|
| Traditional ranking | Backlinks | Link authority |
| Generative retrieval | Semantic coherence | Structured meaning blocks |
| Hybrid models | Entity alignment | Machine-readable hierarchy |

Therefore, organizations that implement structural coherence increase eligibility for generative inclusion across evolving retrieval systems.

AI-Compatible Information Architecture

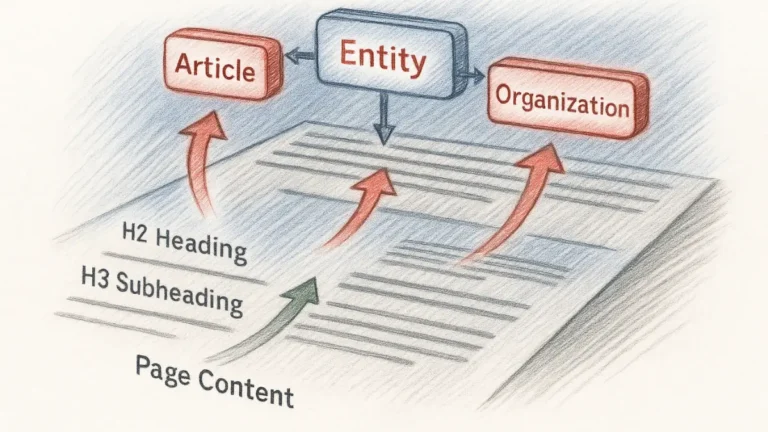

AI-focused information architecture is a core component of an AI search readiness strategy because structure determines interpretability at scale. Information architecture is the structured organization of semantic units enabling predictable interpretation across machine reasoning layers. Research from MIT CSAIL shows that scalable knowledge systems depend on hierarchical entity modeling and structured representation learning. Therefore, organizations that implement an AI search readiness strategy must treat architectural modeling as a primary visibility factor rather than a secondary design layer.

Claim: Information architecture determines generative interpretability and inclusion probability.

Rationale: Generative systems prioritize semantically stable structures over isolated keyword signals.

Mechanism: Hierarchical modeling aligns entities, mechanisms, and implications into machine-readable frameworks that support retrieval-augmented synthesis.

Counterargument: Flat content structures may still perform in limited, short-term ranking contexts.

Conclusion: Durable generative visibility requires an AI-compatible publishing model grounded in structural discipline.

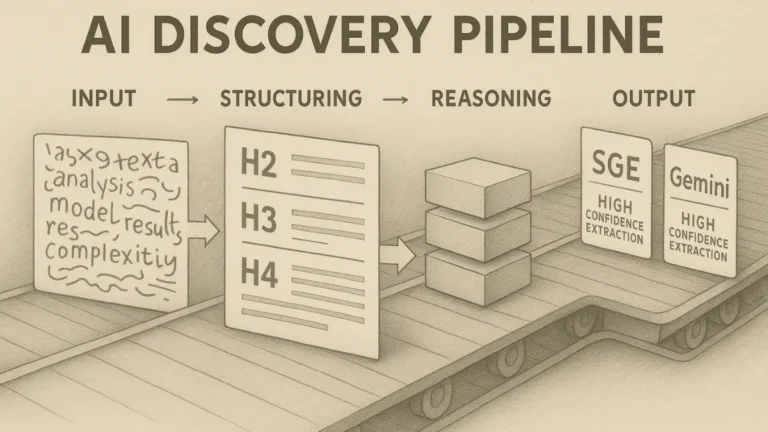

Semantic Hierarchy Modeling

Semantic hierarchy modeling establishes ordered relationships between entities, subtopics, and explanatory layers. An AI-native content structure separates concepts, mechanisms, and implications into distinct containers that reduce interpretive ambiguity. Therefore, AI-aware site structuring ensures that each heading corresponds to a discrete semantic unit with controlled boundaries.

Hierarchical alignment enables machines to detect dependency chains and causal logic across sections. Moreover, generative systems assign higher confidence to pages that preserve consistent terminology and avoid semantic drift. Consequently, structural coherence strengthens an AI search readiness strategy by stabilizing meaning across expandable layers.

Clear hierarchy ensures that meaning flows from broader conceptual anchors to narrower explanatory units without structural fragmentation.

Principle: Generative inclusion probability increases when information architecture enforces hierarchical clarity, stable terminology, and deterministic semantic containers that remain interpretable across evolving AI retrieval models.

Structural Depth and Controlled Expansion

Structural depth defines how many layers of meaning a page contains and how those layers interconnect. A future-oriented web architecture balances expansion with clarity, ensuring that deeper sections do not introduce ambiguity or conceptual overlap. Therefore, depth must remain proportional to semantic complexity rather than word count.

An AI-integrated visibility model depends on predictable depth progression across H2, H3, and H4 levels. Generative systems rely on structural gradients to evaluate contextual relevance and logical continuity. As a result, controlled expansion reinforces an AI search readiness strategy by maintaining consistent interpretability across increasing detail.

Proper depth control ensures that additional detail strengthens reasoning instead of destabilizing interpretation.

- Controlled heading depth

- Stable terminology

- Predictable block logic

- Deterministic navigation

These structural constraints establish an AI-interpretable site framework that supports long-term generative inclusion and architectural resilience within an AI search readiness strategy.
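The heading-depth constraint above can be checked mechanically. The following is a minimal sketch, assuming pages are authored in Markdown with ATX-style (`#`) headings. The function names and the `max_level` parameter are hypothetical, and the check does not account for fenced code blocks, where a `#` comment would be misread as a heading.

```python
import re

# Matches ATX headings such as "## Title" (assumed authoring convention).
HEADING_RE = re.compile(r"^(#{1,6})\s+(.*\S)\s*$")


def heading_levels(markdown_text):
    """Return (level, title) pairs for each ATX heading in the text."""
    headings = []
    for line in markdown_text.splitlines():
        match = HEADING_RE.match(line)
        if match:
            headings.append((len(match.group(1)), match.group(2)))
    return headings


def find_depth_violations(headings, max_level=4):
    """Flag headings that exceed max_level or skip a level on the way down."""
    violations = []
    previous_level = None
    for level, title in headings:
        if level > max_level:
            violations.append((title, f"deeper than H{max_level}"))
        elif previous_level is not None and level > previous_level + 1:
            violations.append((title, f"jumps from H{previous_level} to H{level}"))
        previous_level = level
    return violations


sample = "## Architecture\n#### Orphaned detail\n## Governance\n"
for title, problem in find_depth_violations(heading_levels(sample)):
    print(f"{title}: {problem}")
```

A check like this is only a proxy: it confirms that depth progresses one level at a time, not that each heading corresponds to a discrete semantic unit.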

Machine-Readable Content Engineering

A machine-readable web foundation establishes the operational layer of an AI search readiness strategy because interpretation begins at the sentence and block level. Machine-readable content consists of structured semantic units that probabilistic reasoning systems can interpret without ambiguity. Research from the Carnegie Mellon University Language Technologies Institute demonstrates that structured representations improve downstream reasoning reliability in large-scale language models. Therefore, an AI search readiness strategy must align content engineering with deterministic semantic modeling rather than stylistic variation.

Claim: Machine-readable engineering determines whether generative systems can reliably extract and recombine content.

Rationale: AI systems evaluate clarity, structural stability, and entity alignment before selecting information for synthesis.

Mechanism: Structured semantic units reduce ambiguity and enable retrieval-augmented models to map entities, mechanisms, and implications into coherent responses.

Counterargument: Human-readable narrative content may remain accessible without strict structural discipline.

Conclusion: Sustainable generative inclusion requires AI-adaptive content infrastructure embedded within an AI indexing readiness plan.

Declarative Sentence Modeling

Declarative sentence modeling enforces subject–predicate–meaning alignment to ensure computational clarity. An AI-context optimized website avoids layered clauses and ambiguous references because generative systems prioritize predictable semantic patterns. Consequently, declarative structure increases confidence scoring during response assembly.

Furthermore, AI-discovery performance planning depends on sentence-level determinism because retrieval pipelines decompose text into minimal reasoning units. Systems weigh explicit statements more heavily than implied claims, especially in multi-source synthesis. Therefore, structural sentence design directly influences selection probability within generative ranking layers.

Clear sentences with stable meaning reduce interpretive variance and improve inclusion consistency.

Entity-Level Content Structuring

Entity-level structuring organizes content around defined conceptual anchors. An AI-oriented content ecosystem aligns each entity with mechanisms, examples, and implications to maintain contextual stability. Consequently, entity blocks function as reusable nodes within generative knowledge graphs.

Moreover, an AI-discovery visibility strategy depends on stable entity references because synthesis models evaluate coherence across documents. When entity definitions remain explicit and consistent, retrieval pipelines can connect evidence and reasoning layers without ambiguity. Therefore, structured entity modeling increases probabilistic trust weighting.

Explicit entity anchoring ensures that generative systems can map content relationships without inference gaps.

| Content Layer | Structural Role | AI Interpretation Benefit |
|---|---|---|
| Entity block | Meaning anchor | Stable reference |
| Mechanism block | Causal logic | Reasoning clarity |
| Example block | Evidence grounding | Reusability |
| Implication block | Strategic inference | Decision extraction |

Therefore, machine-readable engineering transforms content into a machine-interpretable architecture that strengthens long-term generative inclusion within an AI search readiness strategy.
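One way to make the four content layers concrete is to model them as typed records and check which layers an entity still lacks. The sketch below is illustrative only: `BlockKind`, `ContentBlock`, and `missing_layers` are hypothetical names, and it assumes each block is tagged with the entity it describes.

```python
from dataclasses import dataclass
from enum import Enum


class BlockKind(Enum):
    ENTITY = "entity"
    MECHANISM = "mechanism"
    EXAMPLE = "example"
    IMPLICATION = "implication"


@dataclass(frozen=True)
class ContentBlock:
    entity: str       # the conceptual anchor this block belongs to
    kind: BlockKind   # which content layer the block fills
    text: str


def missing_layers(blocks):
    """Map each entity to the content layers it does not yet have."""
    seen = {}
    for block in blocks:
        seen.setdefault(block.entity, set()).add(block.kind)
    return {
        entity: [kind.value for kind in BlockKind if kind not in kinds]
        for entity, kinds in seen.items()
        if len(kinds) < len(BlockKind)
    }


page = [
    ContentBlock("semantic drift", BlockKind.ENTITY,
                 "Semantic drift occurs when definitions evolve without traceable documentation."),
    ContentBlock("semantic drift", BlockKind.MECHANISM,
                 "Uncoordinated edits create competing definitions of the same entity."),
]
print(missing_layers(page))
```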

Governance for AI-Resilient Web Strategy

An AI-resilient web strategy is a structural component of an AI search readiness strategy because stability over time determines interpretability. Governance is structured oversight that ensures semantic stability over time and prevents uncontrolled conceptual drift. According to policy research from the OECD, durable digital systems require institutionalized accountability frameworks to maintain long-term reliability. Therefore, organizations that pursue long-term AI search positioning must formalize AI-aligned website governance instead of relying on informal editorial control.

Claim: Governance preserves structural integrity across technological evolution.

Rationale: Generative systems reward consistency, traceability, and definitional stability over time.

Mechanism: Formal oversight enforces terminology control, revision documentation, and validation checkpoints that support website modernization for AI platforms.

Counterargument: Agile publishing without governance may accelerate short-term content deployment.

Conclusion: A sustainable AI-resilient web strategy requires institutionalized semantic control aligned with generative reasoning systems.

Terminology Control Systems

Terminology control systems prevent fragmentation across expanding knowledge domains. An adaptive website for AI discovery must maintain consistent entity definitions to ensure predictable interpretation across sections and updates. Consequently, AI-aligned website governance must treat vocabulary control as infrastructure rather than as editorial preference.

When terminology shifts without coordination, generative systems register multiple competing entity nodes. As a result, coherence scoring decreases and inclusion probability weakens. Therefore, centralized terminology management strengthens long-term AI search positioning by stabilizing semantic anchors.

Consistent definitions reduce ambiguity and preserve interpretive continuity across scalable publication cycles.
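Vocabulary control of this kind can be partly automated. The following is a minimal sketch, assuming a team maintains a mapping from each preferred term to the variants it wants to retire. The vocabulary entries and the function name `find_term_variants` are hypothetical examples, not a recommended term list.

```python
import re

# Hypothetical controlled vocabulary: preferred term -> discouraged variants.
CONTROLLED_VOCABULARY = {
    "generative retrieval": ["generative search retrieval", "gen retrieval"],
    "semantic container": ["meaning box", "semantic bucket"],
}


def find_term_variants(text, vocabulary=CONTROLLED_VOCABULARY):
    """Return (variant, preferred_term, count) for each discouraged variant found."""
    findings = []
    lowered = text.lower()
    for preferred, variants in vocabulary.items():
        for variant in variants:
            # Whole-phrase, case-insensitive match.
            pattern = r"\b" + re.escape(variant.lower()) + r"\b"
            count = len(re.findall(pattern, lowered))
            if count:
                findings.append((variant, preferred, count))
    return findings


draft = "Each semantic bucket holds one idea. A semantic bucket is reusable."
for variant, preferred, count in find_term_variants(draft):
    print(f"replace '{variant}' with '{preferred}' ({count} occurrences)")
```

Running a check like this before publication turns terminology control into a repeatable gate rather than a reviewer's judgment call.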

Versioning and Semantic Drift Prevention

Versioning protocols document structural and definitional change over time. AI-discovery ecosystem alignment requires that updates preserve conceptual continuity while allowing controlled evolution. Consequently, structured revision systems become necessary to prevent semantic drift.

Semantic drift occurs when definitions evolve without traceable documentation. This condition reduces entity coherence and weakens probabilistic trust weighting in generative systems. Therefore, governance must combine validation checkpoints with explicit update logs to maintain structural integrity.

Disciplined oversight relies on the following structural controls:

- Controlled vocabulary

- Entity registry

- Structured revision logs

- Definition enforcement

- Content lifecycle governance

These governance mechanisms reinforce structural durability and secure long-term AI search positioning within evolving generative environments.
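An entity registry and a revision log can be combined into a simple drift check. The sketch below assumes the registry is a plain mapping from entity name to definition text, captured once per published version. `DefinitionChange` and `diff_registries` are hypothetical names, and the comparison ignores case and whitespace only, so it detects edits rather than judging whether meaning changed.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DefinitionChange:
    entity: str
    kind: str    # "added", "removed", or "changed"
    before: str
    after: str


def normalize(text):
    """Collapse whitespace and case so cosmetic edits are not reported."""
    return " ".join(text.split()).lower()


def diff_registries(previous, current):
    """Compare two registry snapshots and list definition changes to log."""
    changes = []
    for entity in sorted(set(previous) | set(current)):
        before = previous.get(entity, "")
        after = current.get(entity, "")
        if entity not in previous:
            changes.append(DefinitionChange(entity, "added", before, after))
        elif entity not in current:
            changes.append(DefinitionChange(entity, "removed", before, after))
        elif normalize(before) != normalize(after):
            changes.append(DefinitionChange(entity, "changed", before, after))
    return changes


v1 = {"governance": "Structured oversight ensuring semantic stability."}
v2 = {"governance": "Editorial review of new pages.",
      "workflow": "A repeatable operational process."}
for change in diff_registries(v1, v2):
    print(change.kind, change.entity)
```

Each reported change is a candidate entry for the structured revision log. A "changed" result on a core entity is the condition that would warrant a validation checkpoint.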

Structured Visibility in Conversational Search

Preparing for conversational search systems requires structural adaptation to interactive, multi-turn AI interfaces. Conversational search refers to multi-turn, AI-mediated retrieval interfaces that generate context-aware responses through iterative dialogue rather than static query matching. Research from the Allen Institute for Artificial Intelligence (AI2) shows that dialogue-based retrieval systems depend on contextual memory and citation grounding to maintain response reliability. Therefore, AI-enabled discovery readiness must extend beyond indexing and address AI-integrated site optimization at the response construction level.

Claim: Structured visibility in conversational systems depends on citation eligibility and contextual stability.

Rationale: Multi-turn AI systems evaluate source consistency and structural coherence across dialogue exchanges.

Mechanism: Generative models integrate entity grounding, citation frequency, and contextual persistence into answer synthesis during interaction loops.

Counterargument: Static page optimization may still generate inclusion in single-turn answer environments.

Conclusion: Durable conversational inclusion requires structural adaptation aligned with AI-compatible site architecture principles.

Response-Level Citation Eligibility

Response-level citation eligibility determines whether a page can be referenced directly within conversational outputs. AI search ecosystem alignment requires that content present explicit claims, verifiable evidence, and stable entity references in discrete semantic containers. Consequently, generative systems can extract and attribute statements without reinterpreting ambiguous phrasing.

Conversational interfaces prioritize sources that reduce interpretive friction during multi-source synthesis. Therefore, citation-ready structuring increases the probability that specific paragraphs appear as attributed segments in generated responses. Pages that expose clear definitions and mechanism blocks receive higher inclusion weighting.

Clear citation boundaries allow conversational systems to reference content confidently and repeatedly across interaction cycles.

Example: A documentation page structured with explicit entity definitions, mechanism blocks, and evidence-aligned paragraphs enables conversational AI systems to extract high-confidence segments, increasing citation recurrence within multi-turn synthesized responses.

Context Windows and Answer Cards

Context windows define how much information a generative model can process within a single interaction sequence. An AI search readiness strategy requires that content remain interpretable even when only partial sections are retrieved into limited conversational memory spans. Consequently, answer-card compatibility depends on block-level independence and semantic closure.

Generative systems compress extracted content into concise answer units for display in conversational cards. Therefore, structured meaning blocks must retain coherence when isolated from surrounding context. This constraint reinforces AI-integrated site optimization at the micro-structural level.

Content that maintains clarity under partial extraction becomes more resilient within evolving conversational interfaces.
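Block-level independence is hard to verify automatically, but a rough heuristic can surface likely problems. The sketch below flags blocks that open with a word pointing outside the block or that exceed a length budget. The opener list, the 120-word default, and the function name are all assumptions chosen for illustration, and the check will produce both false positives and misses.

```python
# Openers that usually depend on preceding text (illustrative, not exhaustive).
DANGLING_OPENERS = (
    "this ", "that ", "these ", "those ", "it ", "they ",
    "as mentioned", "as noted",
)


def self_containment_issues(block, max_words=120):
    """Return heuristic warnings for a block that may not survive isolation."""
    issues = []
    stripped = block.strip()
    if stripped.lower().startswith(DANGLING_OPENERS):
        issues.append("opens with a reference to outside context")
    if len(stripped.split()) > max_words:
        issues.append(f"longer than {max_words} words")
    return issues


blocks = [
    "This constraint reinforces optimization at the micro-structural level.",
    "Semantic drift occurs when definitions evolve without traceable documentation.",
]
for block in blocks:
    print(self_containment_issues(block))
```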

An enterprise SaaS firm restructured its technical documentation by separating entities, mechanisms, and implications into clearly labeled semantic containers. The firm implemented explicit citation-ready definitions and reduced cross-paragraph ambiguity. Within six months, generative platforms began referencing its documentation in synthesized summaries more consistently. Internal monitoring showed measurable increases in attributed conversational responses.

Structured visibility within conversational search systems strengthens long-term generative inclusion by aligning architectural clarity with dialogue-based retrieval dynamics.

Data Integrity and Trust Infrastructure

An AI-discovery aligned content model requires formal validation mechanisms to support reliable generative inclusion. Trust infrastructure is defined as the set of structured validation mechanisms that ensure factual reliability across retrieval and synthesis pipelines. Standards published by the National Institute of Standards and Technology (NIST) emphasize traceability, data integrity, and verification as foundational elements in trustworthy AI systems. Therefore, AI-aware website infrastructure must embed validation controls directly into content architecture rather than treat credibility as an external attribute.

Claim: Data integrity directly influences generative inclusion probability.

Rationale: Generative systems prioritize sources that demonstrate verifiable evidence and structural consistency.

Mechanism: Validation layers integrate citation frequency, dataset verification, and definitional stability into probabilistic output selection.

Counterargument: High-traffic sources without formal validation structures may still appear in short-term generative responses.

Conclusion: Sustainable generative visibility depends on a trust infrastructure embedded within an AI-compatible publishing model.

Source Attribution Systems

Source attribution systems formalize how claims connect to authoritative references. A machine-aligned website structure must expose citations as discrete, extractable units rather than as stylistic footnotes. Consequently, generative systems can evaluate evidence strength during response synthesis.

Explicit attribution reduces ambiguity in multi-source retrieval scenarios. Moreover, consistent anchor usage increases inclusion probability when conversational systems require traceable claims. Therefore, structured attribution strengthens interpretability while reinforcing AI-aware website infrastructure principles.

Clear source mapping allows generative systems to reuse statements without compromising credibility weighting.

Evidence-Based Block Design

Evidence-based block design integrates factual claims with structured validation layers. AI-discovery performance planning requires that each semantic container include explicit evidence boundaries to prevent probabilistic distortion. Consequently, content engineering must align conceptual clarity with verifiable data references.

Generative systems assign higher trust weighting to content that presents data alongside defined entities and mechanisms. Therefore, separating evidence blocks from interpretation blocks improves reuse confidence and reduces hallucination risk. Structured validation enhances probabilistic stability across retrieval cycles.

Evidence alignment ensures that extracted content maintains integrity during synthesis.

| Trust Layer | Implementation | Visibility Effect |
|---|---|---|
| Citation layer | Authoritative anchors | Inclusion probability |
| Data layer | Verified datasets | Reuse confidence |
| Structural layer | Stable definitions | Reduced hallucination risk |

Integrated trust infrastructure strengthens long-term generative reliability by aligning structural validation with AI-discovery aligned content model requirements.

Performance Measurement Beyond Traffic

An AI-discovery visibility strategy shifts evaluation from page visits to inclusion in synthesized responses. Generative visibility is defined as inclusion in synthesized AI outputs that integrate and attribute structured content. Research from the Harvard Data Science Initiative highlights that data-driven measurement frameworks improve model evaluation and reproducibility across complex systems. Therefore, organizations must implement AI-discovery performance planning supported by an AI-integrated visibility model rather than rely exclusively on traffic metrics.

Claim: Traffic metrics do not adequately measure generative inclusion.

Rationale: Generative systems synthesize responses independently of click-based navigation patterns.

Mechanism: Visibility must be measured through inclusion frequency, citation recurrence, and entity stability across AI-mediated outputs.

Counterargument: Traditional analytics still capture measurable audience engagement in parallel channels.

Conclusion: Durable performance assessment requires generative-specific metrics integrated into structured evaluation systems.

Generative Inclusion Metrics

Generative inclusion metrics quantify how often structured content appears in synthesized outputs. An AI indexing readiness plan must incorporate monitoring systems that detect entity references across AI platforms. Consequently, performance evaluation shifts from page-level impressions to response-level inclusion.

Structured measurement requires tracking visibility across multiple conversational and generative environments. Moreover, systems that integrate entity-level logging can detect patterns of reuse across contexts. Therefore, generative inclusion metrics provide a direct signal of AI-discovery visibility strategy effectiveness.

Reliable measurement clarifies whether structural adaptation translates into probabilistic selection.

Citation Frequency Analysis

Citation frequency analysis evaluates how often specific content blocks receive attribution in synthesized responses. AI-enabled discovery readiness depends on stable citation recurrence rather than isolated appearances. Consequently, organizations must monitor entity mention patterns across time.

Tracking recurrence provides insight into structural trust weighting within generative systems. Furthermore, monitoring context persistence reveals whether extracted content maintains semantic coherence across interaction cycles. Therefore, citation analysis becomes a diagnostic instrument within AI-discovery performance planning.

Stable citation frequency indicates durable generative relevance.

Core performance indicators include:

- Inclusion rate

- Citation recurrence

- Entity mention stability

- Context persistence
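The article does not give formulas for these indicators, so the sketch below adopts working definitions that should be read as assumptions: inclusion rate is the share of sampled AI responses that cite the site, and citation recurrence is the share of sampling periods with at least one such citation. The `SampledResponse` record and the way responses are collected are hypothetical.

```python
from dataclasses import dataclass
from urllib.parse import urlparse


@dataclass(frozen=True)
class SampledResponse:
    period: str        # sampling period label, e.g. "2026-01"
    query: str         # prompt submitted to the AI system
    cited_urls: tuple  # URLs the response attributed as sources


def cites_domain(response, domain):
    """True if any cited URL is on the domain or one of its subdomains."""
    for url in response.cited_urls:
        host = urlparse(url).hostname or ""
        if host == domain or host.endswith("." + domain):
            return True
    return False


def inclusion_rate(responses, domain):
    """Share of sampled responses that cite the domain (assumed definition)."""
    if not responses:
        return 0.0
    hits = sum(1 for r in responses if cites_domain(r, domain))
    return hits / len(responses)


def citation_recurrence(responses, domain):
    """Share of periods with at least one citation (assumed definition)."""
    periods = {r.period for r in responses}
    if not periods:
        return 0.0
    cited = {r.period for r in responses if cites_domain(r, domain)}
    return len(cited) / len(periods)


samples = [
    SampledResponse("2026-01", "what is semantic drift", ("https://docs.example.com/drift",)),
    SampledResponse("2026-01", "entity registry basics", ()),
    SampledResponse("2026-02", "what is semantic drift", ("https://other.example.org/a",)),
]
print(inclusion_rate(samples, "example.com"))
print(citation_recurrence(samples, "example.com"))
```

Tracking both figures separates breadth from durability: a high inclusion rate concentrated in a single period would still show low recurrence.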

An e-commerce platform implemented a centralized entity registry and introduced explicit definition blocks across product documentation. The company aligned content containers with structured attribution and revision tracking. Over a twelve-month period, monitoring tools recorded increased generative mention stability across conversational systems. Inclusion frequency remained consistent despite model updates.

Performance measurement beyond traffic strengthens an AI-discovery visibility strategy by aligning evaluation frameworks with generative inclusion dynamics.

Enterprise Workflow for AI Search Readiness

AI-centric site preparation operationalizes structural principles into repeatable systems. A workflow is defined as a repeatable operational process that aligns content production with AI interpretation logic across modeling, validation, and measurement layers. Research from DeepMind demonstrates that structured pipelines improve reasoning consistency and model reliability in large-scale AI systems. Therefore, organizations must embed an AI-first web development strategy into editorial operations to sustain an AI-discovery aligned content model.

Claim: Structured workflows determine whether architectural principles translate into consistent generative inclusion.

Rationale: Generative systems reward repeatable structural patterns and penalize inconsistent semantic execution.

Mechanism: Operational pipelines align entity modeling, evidence validation, and visibility monitoring into a unified AI-centric site preparation process.

Counterargument: Informal publishing processes may produce high-quality content without formal workflow documentation.

Conclusion: Enterprise-level generative readiness requires standardized workflows that integrate structural discipline with measurable outcomes.

Editorial Modeling Pipeline

An editorial modeling pipeline formalizes how entities, mechanisms, and implications are constructed before publication. AI-native content structure requires that each semantic unit follows deterministic modeling standards rather than stylistic variation. Consequently, editorial systems must encode structural templates into content production environments.

Modeling begins with entity definition and extends through mechanism clarification and implication mapping. Furthermore, structural validation checkpoints ensure that semantic containers remain internally coherent. Therefore, the editorial pipeline becomes a structural enforcement mechanism within AI-centric site preparation.

Predictable modeling reduces interpretive variance and strengthens generative inclusion probability.

Structured Publication Lifecycle

A structured publication lifecycle integrates governance controls into the deployment phase. AI-aligned website governance ensures that updates preserve entity continuity and definitional stability across versions. Consequently, publication becomes a controlled structural transition rather than a simple content upload.

Lifecycle systems must include validation checkpoints, revision documentation, and post-publication monitoring. Moreover, integrating performance metrics into the lifecycle closes the feedback loop between structure and visibility. Therefore, enterprise workflow design directly supports long-term AI-discovery aligned content model stability.

Operational discipline converts structural design into durable interpretability.

The enterprise workflow follows a structured sequence:

Stage 1: Entity modeling

Stage 2: Semantic container design

Stage 3: Evidence validation

Stage 4: Structural consistency audit

Stage 5: Visibility measurement

This structured sequence aligns editorial execution with AI-centric site preparation and sustains measurable generative inclusion across evolving model environments.
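The pre-publication stages of this sequence can be sketched as an ordered set of gates that stop at the first failure. Everything in the example is an assumption made for illustration: the draft is modeled as a plain dictionary with `entities` and `blocks` keys, each check is deliberately simplistic, and Stage 5 is omitted because visibility measurement happens after publication.

```python
def has_entities(draft):
    # Stage 1: at least one entity has been modeled.
    return bool(draft.get("entities"))


def has_containers(draft):
    # Stage 2: at least one semantic container (block) exists.
    return bool(draft.get("blocks"))


def has_evidence(draft):
    # Stage 3: every block carries at least one source reference.
    return all(block.get("sources") for block in draft.get("blocks", []))


def is_consistent(draft):
    # Stage 4: every block refers to an entity modeled in stage 1.
    known = set(draft.get("entities", []))
    return all(block.get("entity") in known for block in draft.get("blocks", []))


STAGES = [
    ("Entity modeling", has_entities),
    ("Semantic container design", has_containers),
    ("Evidence validation", has_evidence),
    ("Structural consistency audit", is_consistent),
]


def run_workflow(draft):
    """Run stages in order; return (passed_stage_names, first_failed_stage_or_None)."""
    passed = []
    for name, check in STAGES:
        if not check(draft):
            return passed, name
        passed.append(name)
    return passed, None


draft = {
    "entities": ["semantic drift"],
    "blocks": [{"entity": "semantic drift", "sources": []}],
}
passed, failed = run_workflow(draft)
print("passed:", passed)
print("blocked at:", failed)
```

Stopping at the first failed stage mirrors the ordering of the sequence: later audits are not meaningful until the earlier structural work is complete.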

Checklist:

- Are core entities defined with stable, reusable terminology?

- Does the page maintain consistent H2–H4 hierarchical segmentation?

- Is each paragraph limited to one explicit reasoning unit?

- Are evidence blocks clearly separated from conceptual explanations?

- Is terminology preserved across revisions to prevent semantic drift?

- Does the structure support deterministic extraction in generative retrieval systems?

Long-Term Adaptive Infrastructure for AI Search

A future-ready digital presence requires infrastructure that remains stable across model evolution cycles. Adaptive infrastructure is defined as scalable structural systems that remain interpretable across model generations without semantic degradation. Research from the Oxford Internet Institute demonstrates that long-term digital resilience depends on institutional governance, structural clarity, and adaptive policy alignment. Therefore, organizations that aim to future-proof their websites for AI environments must embed structural durability into an AI search readiness strategy from the outset.

Claim: Long-term generative visibility depends on infrastructure stability across model generations.

Rationale: AI systems evolve in architecture, training data, and retrieval logic, which alters interpretive weighting over time.

Mechanism: Scalable structural systems preserve entity coherence, semantic containers, and validation layers across evolving generative pipelines.

Counterargument: Short-term optimization may produce visibility without infrastructure redesign.

Conclusion: Durable inclusion requires adaptive infrastructure that protects structural interpretability beyond immediate ranking conditions.

Multi-Model Compatibility

Multi-model compatibility ensures that content remains interpretable across different generative systems. An AI-interpretable site framework exposes entities, mechanisms, and implications in predictable configurations that support heterogeneous retrieval architectures. Consequently, structured modeling reduces dependency on a single platform’s weighting logic.

Generative systems differ in context window size, ranking heuristics, and citation weighting. Therefore, infrastructure must prioritize universal structural signals rather than platform-specific optimization tactics. Compatibility across models increases probabilistic stability in generative inclusion scenarios.

Structural neutrality enhances cross-system interpretability and reduces exposure volatility.

Resilience to Model Evolution

Model evolution introduces shifts in reasoning patterns, context limits, and evidence weighting. AI-adaptive content infrastructure anticipates these changes by preserving semantic clarity at the entity and block level. Consequently, content remains extractable even when synthesis mechanisms evolve.

Resilient systems emphasize definitional stability, explicit attribution, and modular semantic containers. Moreover, integrated governance and measurement frameworks detect interpretive drift before visibility declines. Therefore, adaptive infrastructure transforms structural discipline into long-term generative reliability.

Resilience ensures that architectural clarity persists despite algorithmic transformation.
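One way to make drift detection concrete is to compare term definitions between two published revisions. The sketch below is illustrative only: the function name `find_drift`, the 0.8 similarity threshold, and the use of character-level similarity from Python's `difflib` are assumptions, not a method described in this article. The sample definitions are shortened placeholders.

```python
from difflib import SequenceMatcher


def find_drift(previous, current, threshold=0.8):
    """Return terms whose definition changed noticeably between two revisions.

    `previous` and `current` map a term to its definition text. The 0.8
    threshold is an illustrative assumption, not a value from the article.
    """
    drifted = []
    for term, old_definition in previous.items():
        new_definition = current.get(term)
        if new_definition is None:
            # A term that disappeared from the glossary is reported as drift too.
            drifted.append(term)
            continue
        similarity = SequenceMatcher(None, old_definition, new_definition).ratio()
        if similarity < threshold:
            drifted.append(term)
    return drifted


# Hypothetical sample data: two revisions of a small glossary.
previous_revision = {
    "Semantic Container": "A discrete structural block that preserves contextual clarity during AI extraction.",
    "Generative Visibility": "Inclusion of structured content within synthesized AI responses.",
}
current_revision = {
    "Semantic Container": "A discrete structural block that preserves contextual clarity during AI extraction.",
    "Generative Visibility": "How often a brand ranks first.",
}

print(find_drift(previous_revision, current_revision))
```

In this sample only "Generative Visibility" is reported, because its definition was rewritten while the other stayed identical. A production check would likely need a more meaning-aware comparison than character similarity; this version only shows where such a check could sit in a governance workflow.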

Long-term generative visibility depends on coordinated architecture, governance, and measurement systems. Structural stability reinforces entity coherence, validation integrity, and contextual persistence across evolving retrieval pipelines. Organizations that institutionalize architectural discipline protect interpretability against model volatility.

Future-proofing requires consistent terminology control, structured validation layers, and measurable inclusion metrics. Governance frameworks enforce stability, while performance systems verify generative visibility. Together, these controls secure durable inclusion within AI-mediated discovery ecosystems.
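As a rough illustration of a measurable inclusion metric, the sketch below computes the share of sampled AI responses that cite a given domain. Everything here is an assumption made for the example: the `SampledResponse` record, the `inclusion_rate` function, the definition of the metric as "cited at least once", and the `example.com` data. How responses are collected is outside its scope.

```python
from dataclasses import dataclass, field


@dataclass
class SampledResponse:
    """One AI-generated answer collected for a tracked query (hypothetical record)."""
    query: str
    cited_domains: list = field(default_factory=list)


def inclusion_rate(responses, domain):
    """Share of sampled responses that cite `domain` at least once."""
    if not responses:
        return 0.0
    included = sum(1 for response in responses if domain in response.cited_domains)
    return included / len(responses)


# Hypothetical sample: four tracked queries and the domains each answer cited.
samples = [
    SampledResponse("what is a semantic container", ["example.com", "other.org"]),
    SampledResponse("ai search readiness", ["other.org"]),
    SampledResponse("semantic drift definition", ["example.com"]),
    SampledResponse("trust infrastructure", []),
]

print(inclusion_rate(samples, "example.com"))  # 0.5
```

Tracking the same query set over time, and across more than one generative system, would show whether inclusion is stable or tied to a single model's behavior.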

Generative Interpretation Architecture of Structured Pages

- Hierarchical depth encoding. Ordered H2→H3→H4 segmentation establishes explicit semantic layering, enabling generative systems to resolve scope, dependency, and conceptual containment within structured reasoning flows.

- Semantic container isolation. Concept blocks, mechanism blocks, example blocks, and implication blocks function as discrete interpretive units that reduce cross-context leakage during response synthesis.

- Definition-boundary stabilization. Immediate micro-definitions anchor entity meaning locally, limiting probabilistic reinterpretation when models recombine extracted fragments across contexts.

- Deterministic transition signaling. Consistent logical progression between sections provides predictable reasoning gradients that generative systems use to model conceptual continuity.

- Cross-sectional coherence control. Stable terminology and structural repetition patterns form an internal reference grid that supports multi-passage alignment in long-context processing.

Together, these architectural properties explain how generative systems decompose, index, and recompose structured pages without distorting their meaning across retrieval environments.
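The first property above, ordered heading depth, can be checked mechanically. The following is a minimal sketch that assumes pages are available as HTML strings; the names `HeadingCollector` and `skipped_levels` are hypothetical, and the rule it enforces (a heading may go at most one level deeper than the one before it) is one reasonable reading of ordered H2→H3→H4 segmentation rather than a rule stated in this article.

```python
from html.parser import HTMLParser


class HeadingCollector(HTMLParser):
    """Collect heading levels (1-6) in document order."""

    def __init__(self):
        super().__init__()
        self.levels = []

    def handle_starttag(self, tag, attrs):
        # html.parser lowercases tag names, so "H2" arrives as "h2".
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.levels.append(int(tag[1]))


def skipped_levels(html):
    """Return (previous, current) pairs where a heading jumps more than one level deeper."""
    collector = HeadingCollector()
    collector.feed(html)
    jumps = []
    for previous, current in zip(collector.levels, collector.levels[1:]):
        if current > previous + 1:
            jumps.append((previous, current))
    return jumps


# Hypothetical page fragment: the last H4 follows an H2 directly.
sample = (
    "<h2>Architecture</h2><h3>Containers</h3><h4>Definitions</h4>"
    "<h2>Governance</h2><h4>Audit</h4>"
)

print(skipped_levels(sample))  # [(2, 4)]
```

Returning to a shallower level, such as H4 back to H2, is treated as valid because it opens a new section; only jumps that skip a level on the way down are reported.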

FAQ: AI Search Readiness Strategy

What is an AI search readiness strategy?

An AI search readiness strategy is a structured framework that prepares a website for inclusion in generative AI responses by ensuring semantic clarity, architectural consistency, and machine-readable organization.

How does AI search differ from traditional search?

Traditional search ranks hyperlinks, while AI search synthesizes multi-source answers using probabilistic reasoning, entity modeling, and contextual evaluation.

What does it mean to future-proof a website for AI?

Future-proofing means building scalable structural systems that remain interpretable across evolving model generations and retrieval architectures.

Why is machine-readable structure important?

Machine-readable structure enables AI systems to extract entities, mechanisms, and implications without ambiguity, increasing inclusion probability in synthesized responses.

How do conversational systems select content?

Conversational AI systems evaluate semantic coherence, citation grounding, contextual stability, and structural consistency before integrating content into multi-turn responses.

What role does governance play in AI visibility?

Governance preserves definitional stability, revision traceability, and terminology control, which protect interpretability across time and model updates.

How should performance be measured beyond traffic?

Performance should be measured through generative inclusion rate, citation recurrence, entity mention stability, and contextual persistence across AI-mediated outputs.

What defines trust infrastructure in AI search?

Trust infrastructure consists of structured validation mechanisms such as authoritative citation layers, verified datasets, and stable definitions that reduce interpretive risk.

Why is information architecture critical for AI search?

Information architecture determines whether semantic units are hierarchically organized, which directly influences generative interpretability and synthesis reliability.

What ensures long-term generative visibility?

Long-term generative visibility depends on coordinated architecture, governance controls, validation layers, and measurable structural consistency across publication cycles.

Glossary: Key Terms in AI Search Readiness

This glossary defines the core terminology used in this article to support consistent interpretation by both human readers and generative AI systems.

AI Search Readiness Strategy

A structured framework aligning architecture, governance, semantic modeling, and measurement systems to ensure inclusion in generative AI-mediated search environments.

Machine-Readable Content

Structured semantic units that can be interpreted without ambiguity by probabilistic AI systems during retrieval and synthesis.

Generative Visibility

Inclusion of structured content within synthesized AI responses across conversational and retrieval-based generative systems.

Semantic Container

A discrete structural block such as a concept, mechanism, example, or implication unit that preserves contextual clarity during AI extraction.

Trust Infrastructure

Structured validation mechanisms including authoritative citations, verified datasets, and stable definitions that support factual reliability in generative systems.

Entity Modeling

The process of defining and organizing conceptual entities with stable attributes and relationships to enable consistent AI interpretation.

Semantic Drift

The gradual alteration of meaning caused by inconsistent terminology or undocumented updates, reducing interpretability across model generations.

Adaptive Infrastructure

Scalable structural systems designed to remain interpretable and stable across evolving AI retrieval architectures.

Citation Eligibility

The structural capacity of a content block to be directly referenced within synthesized AI responses due to clarity, attribution, and definitional stability.

Structural Consistency

The degree to which content maintains stable hierarchy, terminology, and logical progression across sections and publication cycles.
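A glossary like this one can also be published in machine-readable form. The sketch below assumes the schema.org `DefinedTermSet` and `DefinedTerm` types as the vocabulary and converts term definitions into JSON-LD. The function name `glossary_to_jsonld` and the choice of schema.org are illustrative assumptions; this article does not prescribe a markup format.

```python
import json


def glossary_to_jsonld(name, terms):
    """Build a schema.org DefinedTermSet document from a term -> definition mapping."""
    return {
        "@context": "https://schema.org",
        "@type": "DefinedTermSet",
        "name": name,
        "hasDefinedTerm": [
            {"@type": "DefinedTerm", "name": term, "description": definition}
            for term, definition in terms.items()
        ],
    }


# Two entries copied from the glossary above; the remaining terms follow the same shape.
glossary = {
    "Semantic Drift": (
        "The gradual alteration of meaning caused by inconsistent terminology or "
        "undocumented updates, reducing interpretability across model generations."
    ),
    "Adaptive Infrastructure": (
        "Scalable structural systems designed to remain interpretable and stable "
        "across evolving AI retrieval architectures."
    ),
}

document = glossary_to_jsonld("Key Terms in AI Search Readiness", glossary)
print(json.dumps(document, indent=2))
```

Generating the markup from the same source as the visible glossary keeps both versions aligned, which supports the terminology control described earlier.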