Last Updated on March 2, 2026 by PostUpgrade

Building an Information Hierarchy That AI Understands

An AI information hierarchy is a structured ordering of concepts, mechanisms, and implications that enables models to interpret meaning consistently across sections. This structure defines how semantic units relate to each other and how reasoning flows through a document. In enterprise publishing, AI information hierarchy becomes a design requirement rather than an editorial preference because generative systems depend on stable internal representations. Therefore, structuring information for AI understanding directly influences extraction accuracy, reuse probability, and long-term visibility.

An AI-readable information hierarchy is one that language models can parse without ambiguity. It relies on predictable section boundaries, local definitions, and consistent terminology. Generative systems use attention to map conceptual relationships across tokens, and consequently they reward coherent structure over surface-level styling. Research from the Stanford NLP Group suggests that transformer-based models depend on contextual reinforcement and distributional stability when generating responses across multi-section documents.

Enterprise environments require machine-readable structure that remains stable across updates and scaling cycles. Large documentation ecosystems, knowledge portals, and research libraries must maintain semantic continuity over time. Accordingly, AI information hierarchy supports long-term AI-driven accessibility by preserving meaning even when interfaces, search layers, or generative panels evolve. When organizations treat hierarchy as infrastructure, they reduce interpretive drift and increase generative reuse across platforms.

A multinational publisher reorganized its technical library into clearly layered concept, mechanism, and implication blocks. It introduced explicit local definitions and aligned terminology across articles. Within six months, AI citation frequency in conversational systems increased measurably. Generative panels began extracting structured sections with greater consistency because the underlying semantic hierarchy reduced ambiguity.

Conceptual Foundations of AI Information Hierarchy

The structure of an AI information hierarchy defines a formal ordering of semantic units that governs how models interpret relationships across sections. Designing an information hierarchy for AI systems establishes predictable conceptual boundaries that support consistent semantic interpretation. This section provides the conceptual grounding for hierarchical content structure and explains why a machine-interpretable information hierarchy determines long-term extraction stability. According to research from the Stanford NLP Group, transformer models rely on structural consistency to maintain contextual coherence across extended sequences.

Information hierarchy refers to the ordered semantic layering of concepts, mechanisms, examples, and implications within a document. It defines how meaning propagates through structured segments and how interpretive dependencies are preserved across hierarchical levels.

Definition: AI information hierarchy is a structured ordering of concepts, mechanisms, and implications that enables models to interpret semantic depth, preserve entity stability, and extract reusable knowledge modules across generative systems.

Claim: AI information hierarchy determines how models prioritize semantic units.

Rationale: Language models use structural cues when computing the attention patterns that map token dependencies.

Mechanism: Hierarchical content structure for AI improves token alignment and entity stability across sections and reduces contextual ambiguity.

Counterargument: Flat content may still rank when entity authority signals are strong and external credibility compensates for structural weakness.

Conclusion: Structured hierarchy provides predictable extraction, stable reasoning paths, and reusable semantic modules for generative systems.

Hierarchical Content Structure for AI

Hierarchical content structure for AI organizes knowledge into layered semantic blocks that reflect conceptual dependency rather than visual formatting. A multi-level content hierarchy aligns entities, definitions, and mechanisms into coherent vertical sequences. An AI-compatible content hierarchy therefore mirrors the internal processing logic of transformer architectures and reinforces stable interpretation across extended documents.

AI structural hierarchy principles prioritize explicit definitions, ordered reasoning chains, and consistent terminology. When authors anchor entities at defined conceptual levels, models form stronger relational mappings between sections. Consequently, hierarchical organization supports retrieval accuracy and reduces misalignment in generative outputs.

When content layers follow a logical order, models detect patterns more reliably. Clear boundaries between concept, mechanism, and implication blocks help systems connect ideas without confusion. As a result, hierarchy becomes a structural signal rather than a stylistic choice.

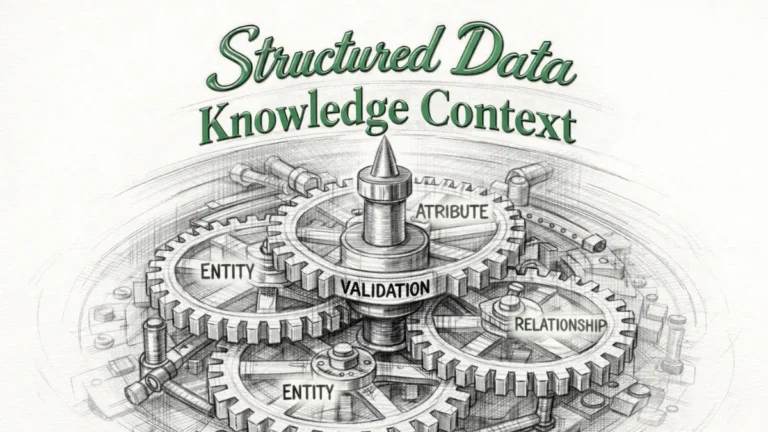

Semantic Layering and Node Stability

Semantic layering establishes node stability within a machine-interpretable information hierarchy. A node represents a conceptual anchor that models reference during reasoning. AI hierarchy clarity signals emerge when each node maintains a stable definition and a fixed position within the structural sequence.

AI comprehension through hierarchy depends on consistent relational depth. If a concept appears at multiple hierarchical levels without redefinition, models may redistribute attention weights inconsistently. Therefore, controlled layering preserves entity alignment and ensures predictable semantic reinforcement across sections.

Stable layers function as structural checkpoints. Each layer defines a clear role and prevents overlap between explanatory units. When these roles remain consistent, interpretation becomes more deterministic.

| Layer | Function | AI Signal Strength |
|---|---|---|
| Concept Layer | Defines entity scope | High |
| Mechanism Layer | Explains process | Medium |
| Implication Layer | Extends reasoning | Contextual |

Structured layers reduce semantic drift.
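One way to make the node stability requirement concrete is to record the layer at which each term is anchored and flag any term that appears at more than one layer. The sketch below is a minimal illustration only; the `NODES` list, the layer labels, and the `find_unstable_nodes` helper are hypothetical names invented for this example.

```python
from collections import defaultdict

# Hypothetical node list: (term, layer) pairs collected from one document.
NODES = [
    ("information hierarchy", "concept"),
    ("semantic layering", "concept"),
    ("semantic layering", "mechanism"),  # same term anchored at a second layer
    ("extraction", "implication"),
]


def find_unstable_nodes(nodes):
    """Return terms that are anchored at more than one layer."""
    layers_by_term = defaultdict(set)
    for term, layer in nodes:
        layers_by_term[term].add(layer)
    return {
        term: sorted(layers)
        for term, layers in layers_by_term.items()
        if len(layers) > 1
    }


if __name__ == "__main__":
    # prints {'semantic layering': ['concept', 'mechanism']}
    print(find_unstable_nodes(NODES))
```

A flagged term is not necessarily an error. It marks a place where the concept should either be redefined explicitly at the second layer or moved back to its original position.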

Information Architecture for AI Comprehension

Information architecture for AI comprehension defines how structural organization enables models to interpret relationships across documents and platforms. AI-oriented hierarchical design transforms abstract structure into an operational blueprint that supports predictable parsing. A scalable information hierarchy for AI platforms requires explicit ordering rules, stable terminology, and an AI-first hierarchical content framework that remains consistent under growth conditions. Research conducted at MIT CSAIL demonstrates that structural regularity directly influences machine reasoning accuracy in large-scale neural systems.

Information architecture refers to the systematic organization of content elements into ordered relational layers that support retrieval and interpretation. It specifies how entities connect, how sections depend on each other, and how structural patterns remain stable across revisions.

Claim: Information architecture for AI comprehension influences retrieval precision.

Rationale: Models infer relational patterns from layout consistency and repeated structural signals.

Mechanism: An AI-first hierarchical content framework encodes predictable structural signals that reinforce entity alignment and reduce interpretive ambiguity.

Counterargument: Dynamic layouts and reactive components can disrupt structural continuity and confuse parsers.

Conclusion: Stable architecture increases model confidence, improves retrieval precision, and strengthens generative reuse.

Designing Scalable Hierarchies

Designing scalable hierarchies requires formal information hierarchy governance for AI that aligns taxonomy, structure, and semantic boundaries. A structured knowledge hierarchy for AI ensures that definitions, mechanisms, and implications remain ordered even as content volume expands. AI-compatible knowledge architecture therefore integrates conceptual modeling with publishing workflows to prevent structural fragmentation.

As organizations scale, hierarchical consistency becomes a governance issue rather than an editorial preference. Information hierarchy governance for AI establishes rules that regulate entity placement and definition reuse. Consequently, scalable systems preserve semantic continuity while supporting distributed authoring environments.

When hierarchy rules are explicit, teams maintain consistency across thousands of pages. Clear structural constraints reduce ambiguity during updates. Over time, this stability reinforces predictable model interpretation.

Governance Models for Enterprise Publishing

Governance models operationalize a hierarchical information modeling strategy within enterprise systems. AI-driven structural content modeling defines how definitions, mechanisms, and implications are distributed across documents and synchronized during revisions. Without governance, structural layers diverge and weaken semantic reinforcement.

A governance framework must align taxonomy, versioning, and definition control. These mechanisms ensure that AI-compatible knowledge architecture remains stable during iterative publishing cycles. Therefore, governance transforms structural design into an enforceable system rather than a theoretical guideline.

- Taxonomy alignment

- Version control

- Definition anchoring

Governance maintains hierarchy stability at scale.

A large documentation platform implemented a structured knowledge hierarchy for AI across its technical portal. It introduced standardized conceptual layers and centralized definition anchoring. As a result, search summaries generated by conversational systems became more consistent. Citation overlap decreased because structural clarity reduced redundant extraction across similar documents.

Semantic Layering and Content Depth Strategy

Layered information hierarchy for AI engines determines how depth and sequence influence interpretive stability across extended documents. Semantic layering for AI systems refers to the deliberate distribution of conceptual units across structured depth levels. An AI-aligned content depth strategy integrates hierarchical reasoning structure for AI to preserve clarity while supporting scalable interpretation. Research from Berkeley Artificial Intelligence Research (BAIR) emphasizes that deep neural architectures rely on ordered representational layers to sustain reasoning consistency across long contexts.

Semantic layering is the controlled arrangement of conceptual, procedural, and inferential elements into vertical structural levels. It defines how meaning accumulates progressively rather than appearing as undifferentiated content blocks.

Claim: Layered hierarchy improves reasoning stability.

Rationale: AI systems resolve nested dependencies in context, so an explicit ordering of depth levels gives attention mechanisms clearer cues about which units depend on which.

Mechanism: Hierarchical reasoning structure for AI mirrors transformer depth logic and reinforces token relationships across layers.

Counterargument: Over-layering increases token cost and may introduce unnecessary structural complexity.

Conclusion: Optimal depth balances clarity, computational efficiency, and semantic reinforcement.

Organizing Complex Information for AI Systems

Organizing complex information for AI systems requires hierarchical data organization for AI that reflects conceptual dependency rather than editorial preference. AI cognition and hierarchy design align structural depth with the way transformer architectures propagate attention across sequential layers. Consequently, layered information hierarchy for AI engines improves interpretive coherence when topics span multiple reasoning stages.

Complex domains often include interdependent entities and cross-referenced mechanisms. Therefore, semantic layering for AI systems must explicitly separate concept blocks, mechanism blocks, and implication blocks to prevent overlap. When hierarchical data organization for AI preserves clear vertical sequencing, generative systems maintain consistent inference paths across extended documents.

Clear structural depth enables models to follow reasoning without redistributing attention unpredictably. When content appears in ordered layers, systems detect progression rather than fragmentation. As a result, hierarchy becomes a stabilizing factor for complex interpretation.

Precision Hierarchy in Long-Form Publishing

Precision hierarchy for AI systems defines the exact placement of conceptual units within long-form content. Content hierarchy modeling for AI ensures that each reasoning layer has a defined scope and does not duplicate adjacent layers. Therefore, depth becomes a measurable structural parameter rather than an abstract editorial quality.

Long-form publishing introduces risks of redundancy and semantic drift. Accordingly, precision hierarchy for AI systems regulates how concepts expand across sections while preserving hierarchical reasoning structure for AI. When depth boundaries remain explicit, models interpret layered sequences as coherent reasoning chains rather than isolated fragments.

Structured depth reduces interpretive noise. Each layer carries a specific cognitive load and signal weight. Predictable layering increases AI extraction probability.

| Hierarchy Level | Cognitive Load | AI Extraction Probability |
|---|---|---|
| Concept Level | Low to Medium | High |
| Mechanism Level | Medium | Medium |
| Implication Level | Medium to High | Contextual |

Depth must remain bounded and predictable.

Machine-Interpretable Structure and Extraction Logic

Machine-interpretable information hierarchy defines how structural patterns enable deterministic parsing across AI-driven systems. AI-understandable structural design transforms editorial organization into a formalized model that machines can evaluate without inference gaps. AI-driven information structuring operationalizes hierarchical organization for machine understanding by encoding consistent boundary markers and semantic depth signals. Research frameworks from NIST emphasize that standardized structural representation improves reliability, reproducibility, and evaluation consistency in computational systems.

Machine-interpretable information hierarchy refers to a structured content model in which each semantic unit has a defined scope and position. It ensures that extraction logic remains stable across interfaces, crawlers, and generative systems.

Principle: Generative systems prioritize content whose structural hierarchy, definition proximity, and semantic containers remain consistent enough to reduce interpretive variance during parsing and extraction.

Claim: Machine-interpretable structure increases deterministic parsing.

Rationale: AI crawlers depend on consistent boundary markers to identify semantic units accurately.

Mechanism: AI-driven information structuring reduces ambiguity by reinforcing explicit hierarchical organization for machine understanding.

Counterargument: Over-standardization may constrain narrative flexibility and reduce adaptive expression.

Conclusion: Structured design supports scalable interpretation while preserving semantic clarity.

Structural Signals for AI Crawlers

Structural signals for AI crawlers determine how models identify hierarchical transitions and contextual dependencies. AI hierarchy clarity signals emerge when section boundaries and semantic containers align with logical progression. AI-based structural interpretation relies on hierarchical content mapping for AI to detect patterns across documents rather than isolated formatting cues.

Hierarchical organization for machine understanding requires that structural signals remain consistent across pages. When heading depth, paragraph boundaries, and definition placement follow repeatable rules, AI-driven information structuring becomes predictable. Consequently, crawlers prioritize structured segments that demonstrate semantic continuity and interpretive stability.

Clear structural signals reduce parsing uncertainty. Models recognize ordered patterns and preserve entity alignment. As a result, extraction logic becomes more deterministic and less dependent on surface heuristics.
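The repeatable heading-depth rule described above can be checked mechanically. The following sketch assumes the content is authored as Markdown with ATX-style `#` headings, which this article does not specify; the function names and the H2 to H4 default range are illustrative choices, not an established tool.

```python
import re

# ATX-style heading: one to six '#' characters, whitespace, then the title.
HEADING = re.compile(r"^(#{1,6})\s+(.*)$")


def heading_levels(markdown_text):
    """Return (level, title) pairs for ATX-style Markdown headings."""
    found = []
    for line in markdown_text.splitlines():
        match = HEADING.match(line)
        if match:
            found.append((len(match.group(1)), match.group(2).strip()))
    return found


def nesting_problems(headings, min_level=2, max_level=4):
    """Flag headings outside the allowed range or that skip a level."""
    problems = []
    previous = None  # the first heading is never flagged as a jump
    for level, title in headings:
        if not min_level <= level <= max_level:
            problems.append(
                f"'{title}': level {level} outside H{min_level}-H{max_level}"
            )
        elif previous is not None and level > previous + 1:
            problems.append(f"'{title}': jumps from H{previous} to H{level}")
        previous = level
    return problems


SAMPLE = """## Concept
### Mechanism
## Next concept
#### Too deep too fast
"""

if __name__ == "__main__":
    for problem in nesting_problems(heading_levels(SAMPLE)):
        print(problem)
```

The check only looks at body headings, so a page-level H1 title would need to be excluded or the `min_level` argument lowered. It also does not skip `#` lines inside fenced code blocks, which a production check would need to handle.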

Predictable Content Containers

Predictable content containers formalize the internal composition of a machine-interpretable information hierarchy. A concept block defines an entity and establishes its semantic scope within the hierarchy. A mechanism block explains the process or logic associated with that entity. An implication block extends reasoning to consequences or contextual applications.

AI-understandable structural design requires that these containers appear in a consistent sequence. When concept blocks precede mechanism blocks and implication blocks, hierarchical content mapping for AI reinforces progressive reasoning. Therefore, extraction systems identify semantic roles without redistributing attention unpredictably.

- Clear H2–H4 nesting

- Definition proximity

- Consistent terminology

Containers form stable semantic anchors.
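The container sequence can be expressed as a small data model. This is a sketch under stated assumptions: the `Block` class, the role labels, and the `is_ordered` helper are hypothetical, and the rule encoded here is only that roles never move backwards within a section.

```python
from dataclasses import dataclass

# Expected sequence of container roles within one section.
EXPECTED_ORDER = ["concept", "mechanism", "implication"]


@dataclass
class Block:
    role: str  # must be one of EXPECTED_ORDER
    text: str


def is_ordered(blocks):
    """True if roles never move backwards in the expected sequence.

    Raises ValueError if a block uses a role outside EXPECTED_ORDER.
    """
    ranks = [EXPECTED_ORDER.index(block.role) for block in blocks]
    return all(a <= b for a, b in zip(ranks, ranks[1:]))


if __name__ == "__main__":
    section = [
        Block("concept", "A node is a conceptual anchor."),
        Block("mechanism", "Nodes keep a fixed position in the sequence."),
        Block("implication", "Stable nodes make extraction more predictable."),
    ]
    print(is_ordered(section))  # prints True
```

Allowing repeated roles (two mechanism blocks in a row, for example) is a deliberate choice here; a stricter policy could require exactly one block per role.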

Governance, Taxonomy, and Semantic Consistency

Information hierarchy governance for AI establishes the rules that preserve structural integrity across evolving content ecosystems. A governance model defines how terminology, hierarchy depth, and structural containers remain synchronized within a scalable information hierarchy for AI platforms. A structured knowledge hierarchy for AI depends on enforceable standards that regulate definition placement and conceptual ordering. According to policy frameworks analyzed by the OECD, digital governance systems require consistent classification logic to maintain interoperability and long-term semantic stability.

Information hierarchy governance refers to the formal system of policies, controls, and validation mechanisms that maintain hierarchical coherence across documents. It ensures that definitions remain stable, taxonomies remain aligned, and structural signals remain predictable over time.

Claim: Governance prevents semantic drift.

Rationale: Long-term AI-driven accessibility requires terminology stability across publishing cycles and platform updates.

Mechanism: Taxonomy control synchronizes hierarchical updates and preserves alignment within a structured knowledge hierarchy for AI.

Counterargument: Excessive rigidity may reduce adaptability and slow structural evolution in dynamic environments.

Conclusion: Balanced governance maintains structural continuity while enabling controlled adaptation.

Taxonomy as Hierarchical Backbone

Taxonomy functions as the backbone that supports a hierarchical information modeling strategy across enterprise systems. An AI-compatible content hierarchy relies on controlled vocabularies that define entity scope and prevent terminological overlap. An AI-compatible knowledge architecture therefore integrates taxonomy alignment into content workflows to ensure that structural consistency persists at scale.

When taxonomy aligns with hierarchical depth, semantic layering remains coherent across documents. Information hierarchy governance for AI ensures that each conceptual unit occupies a defined position within the scalable information hierarchy for AI platforms. Consequently, taxonomy acts as a structural stabilizer that reinforces predictable AI comprehension through hierarchy.

Clear taxonomy boundaries reduce ambiguity during updates. Defined relationships between entities preserve semantic alignment. As a result, hierarchy functions as an operational model rather than a descriptive abstraction.
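A controlled vocabulary can be enforced with a simple lookup that maps known variants to their canonical terms. The sketch below is illustrative only; the `CANONICAL` mapping and its entries are made-up examples, and a real taxonomy would be maintained outside the code.

```python
import re

# Hypothetical controlled vocabulary: variant phrase -> canonical term.
CANONICAL = {
    "info hierarchy": "information hierarchy",
    "content layers": "semantic layers",
}


def find_variants(text, vocabulary=CANONICAL):
    """Return (variant, canonical) pairs for each non-canonical phrase in text."""
    hits = []
    for variant, canonical in vocabulary.items():
        # Word boundaries keep "info hierarchy" from matching inside
        # "information hierarchy".
        pattern = r"\b" + re.escape(variant) + r"\b"
        if re.search(pattern, text, flags=re.IGNORECASE):
            hits.append((variant, canonical))
    return hits


if __name__ == "__main__":
    draft = "The Info Hierarchy groups content layers by depth."
    for variant, canonical in find_variants(draft):
        print(f"replace '{variant}' with '{canonical}'")
```

Running a check like this before publication keeps each entity tied to one label, which is the condition the taxonomy is meant to guarantee.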

Versioning and Definition Stability

Versioning and definition stability maintain AI hierarchy clarity signals across iterative revisions. When a structured knowledge hierarchy for AI integrates controlled updates, models detect continuity instead of fragmentation. AI comprehension through hierarchy depends on consistent entity definitions that do not shift meaning across versions.

A hierarchical information modeling strategy requires version logs that document structural adjustments. Governance systems must track definition changes and enforce backward compatibility within an AI-compatible knowledge architecture. Therefore, controlled updates prevent divergence between conceptual layers and preserve interpretive stability.

Stable definitions protect long-term accessibility. When terminology remains consistent, generative systems maintain coherent reasoning paths. Controlled taxonomy preserves meaning over time.
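Tracking definition changes between versions can start as a plain comparison of two glossaries. The following sketch assumes each version is available as a mapping from term to definition text; the `definition_changes` helper and the sample glossaries are hypothetical.

```python
def definition_changes(old, new):
    """Compare two glossary versions (term -> definition) and classify differences."""
    changes = {}
    for term in sorted(set(old) | set(new)):
        if term not in new:
            changes[term] = "removed"
        elif term not in old:
            changes[term] = "added"
        elif old[term].strip() != new[term].strip():
            changes[term] = "redefined"
    return changes


if __name__ == "__main__":
    # Made-up glossary versions for illustration.
    v1 = {"node": "A conceptual anchor.", "layer": "A depth level."}
    v2 = {
        "node": "A conceptual anchor that models reference.",
        "layer": "A depth level.",
        "container": "A bounded block.",
    }
    # prints {'container': 'added', 'node': 'redefined'}
    print(definition_changes(v1, v2))
```

Entries marked "redefined" are the ones a governance review would inspect, since they are where meaning may have shifted between versions. An exact string comparison is the strictest possible test; a looser policy could tolerate punctuation or formatting edits.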

AI Visibility and Generative Reuse

Information hierarchy planning for AI visibility determines how structured documents enter generative answer environments and remain extractable across platforms. Generative reuse refers to the process by which structured semantic units are reassembled, summarized, or cited by AI systems without loss of contextual integrity. An AI-focused information architecture strategy integrates AI-driven structural content modeling to ensure that hierarchical modules remain independently reusable. Empirical findings discussed in OpenAI research articles show that structured reasoning patterns increase reliability and consistency in large language model outputs.

Generative reuse depends on modular semantic units that preserve meaning outside their original document context. Information hierarchy planning for AI visibility therefore organizes concept blocks, mechanism blocks, and implication blocks into discrete yet interconnected layers.

Claim: AI information hierarchy enhances generative reuse.

Rationale: Structured modules are extracted into answer panels, summaries, and conversational responses when structural signals remain consistent.

Mechanism: Hierarchical content structure for AI enables block-level summarization and preserves entity stability across retrieval environments.

Counterargument: Weak authority or insufficient evidence limits reuse even when structural organization is strong.

Conclusion: Structure and credibility must align to sustain generative visibility.

Extraction in SGE and Conversational Systems

Extraction in SGE and conversational systems depends on AI-optimized content layers that encode stable semantic roles. Semantic hierarchy for AI interpretation allows models to isolate concept definitions and procedural explanations without reprocessing entire documents. AI cognition and hierarchy design influence how generative engines identify reusable segments during response construction.

Conversational interfaces prioritize clarity and compression. Therefore, hierarchical content structure for AI must separate definitional units from extended reasoning chains to support efficient extraction. When AI-driven structural content modeling aligns depth with interpretive clarity, visibility in generative panels becomes more predictable.

Clear structural modules increase extraction precision. Systems detect boundaries and preserve contextual meaning during summarization. Consequently, hierarchical planning directly influences generative exposure.

Example: A layered AI information hierarchy that separates concept blocks from mechanism and implication blocks allows generative systems to isolate high-confidence reasoning units, increasing the probability of structured reuse in answer panels and conversational summaries.

| Extraction Format | Hierarchy Requirement | Visibility Outcome |
|---|---|---|
| Direct Answer Panel | Explicit concept block | High reuse probability |
| Summarized Insight Card | Defined mechanism block | Moderate to high reuse |
| Conversational Response | Integrated concept and implication blocks | Context-dependent reuse |

Extraction systems reward structural clarity.
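Block-level reuse depends on each module carrying enough context to stand alone. One way to illustrate this is to split a document at its headings and attach the full heading path to every block. The sketch assumes Markdown input with ATX-style `#` headings; `split_into_blocks` is a hypothetical helper and does not describe how any particular generative system segments pages.

```python
import re

# ATX-style heading: one to six '#' characters, whitespace, then the title.
HEADING = re.compile(r"^(#{1,6})\s+(.*)$")


def split_into_blocks(markdown_text):
    """Split text at headings; each block keeps its full heading path."""
    blocks = []
    path = []  # stack of (level, title) leading to the current position
    body = []  # lines collected since the last heading

    def flush():
        text = "\n".join(body).strip()
        # Text that appears before the first heading has no path and is dropped.
        if path and text:
            blocks.append({"path": [title for _, title in path], "text": text})
        body.clear()

    for line in markdown_text.splitlines():
        match = HEADING.match(line)
        if match:
            flush()
            level = len(match.group(1))
            # Leave any section at the same or a deeper level.
            while path and path[-1][0] >= level:
                path.pop()
            path.append((level, match.group(2).strip()))
        else:
            body.append(line)
    flush()
    return blocks


DOC = """## Hierarchy
Intro text.
### Definition
A structured ordering.
## Governance
Rules.
"""

if __name__ == "__main__":
    for block in split_into_blocks(DOC):
        print(" > ".join(block["path"]), "|", block["text"])
```

Because the definition block carries the path "Hierarchy > Definition", it remains interpretable when it is lifted out of the page, which is the property the table above associates with explicit concept blocks.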

Comparative Models of Hierarchical Design

Hierarchical content mapping for AI enables comparative evaluation of structural depth and semantic organization across publishing systems. Structural benchmarking refers to the systematic comparison of hierarchy configurations to measure interpretive reliability and extraction performance. AI-compatible knowledge architecture must therefore support multi-level content hierarchy for AI while remaining measurable under standardized evaluation criteria. Research from Carnegie Mellon LTI demonstrates that representation learning and structural cues significantly influence downstream reasoning stability in large language models.

Structural benchmarking evaluates how hierarchical organization supports consistent interpretation across environments. It analyzes depth alignment, definition placement, and boundary clarity as measurable variables rather than stylistic choices.

Claim: Comparative hierarchy models reveal structural weaknesses.

Rationale: Different systems interpret structural depth differently based on learned attention patterns and representational bias.

Mechanism: Hierarchical organization for machine understanding varies by training data distribution and structural exposure during pretraining.

Counterargument: Benchmark conditions may not fully replicate real-world complexity or domain-specific variability.

Conclusion: Comparative evaluation refines enterprise hierarchy models by identifying gaps in depth consistency and semantic reinforcement.

Flat vs Layered Structures

Flat structures present content without enforced depth boundaries, whereas layered systems embed content hierarchy modeling for AI within explicit vertical organization. Hierarchical reasoning structure for AI depends on progressive conceptual ordering that mirrors attention propagation. AI structural hierarchy principles therefore prioritize layered organization over linear accumulation of statements.

Flat content may appear concise; however, it limits semantic reinforcement across levels. Multi-level content hierarchy for AI enables controlled expansion of reasoning paths and reduces ambiguity during extraction. Consequently, layered systems provide stronger alignment with AI-compatible knowledge architecture and machine-understandable structural patterns.

Layered design increases interpretive predictability. Ordered depth clarifies conceptual dependencies. Structured hierarchy therefore improves extraction consistency across generative systems.

Performance Comparison Framework

A performance comparison framework evaluates structural impact on interpretive stability and extraction reliability. Content hierarchy modeling for AI measures how structure type influences response precision across retrieval environments. Hierarchical reasoning structure for AI provides quantifiable indicators such as boundary clarity and definition proximity.

Benchmarking requires standardized metrics that compare extraction accuracy and semantic stability under identical content conditions. When AI structural hierarchy principles guide evaluation, structural differences become measurable variables rather than subjective assessments. Therefore, comparative analysis strengthens enterprise-level structural decisions.

| Structure Type | Extraction Accuracy | Semantic Stability |
|---|---|---|
| Flat Structure | Moderate in simple contexts | Low in complex domains |
| Layered Structure | High in structured contexts | High across domains |

Layered systems outperform flat systems in complex domains.
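Extraction accuracy needs an explicit definition before two structures can be compared. A common, simple choice is precision and recall over the set of semantic units an extraction was expected to return. The sketch below uses that approach as an assumption; the article does not prescribe a metric, and the sample sets are made-up placeholders rather than measurements.

```python
def extraction_scores(expected, extracted):
    """Return precision and recall of extracted units against an expected set."""
    expected, extracted = set(expected), set(extracted)
    correct = len(expected & extracted)
    precision = correct / len(extracted) if extracted else 0.0
    recall = correct / len(expected) if expected else 0.0
    return {"precision": precision, "recall": recall}


if __name__ == "__main__":
    # Placeholder data: the units a reviewer expects, and two hypothetical runs.
    expected = {"definition", "mechanism", "implication"}
    run_a = {"definition", "mechanism", "implication"}
    run_b = {"definition", "navigation text"}

    print(extraction_scores(expected, run_a))
    print(extraction_scores(expected, run_b))
```

Holding the expected set constant while changing only the page structure is what makes the comparison meet the "identical content conditions" requirement described above.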

Long-Term AI-Driven Accessibility

Scalable information hierarchy for AI platforms determines whether structured content remains interpretable across model generations and interface shifts. Long-term accessibility refers to the sustained ability of AI systems to extract, interpret, and reuse structured knowledge over extended temporal cycles. An AI-aligned content depth strategy reinforces continuity by embedding stable semantic boundaries within an AI-first hierarchical content framework. Longitudinal datasets published by World Bank Open Data illustrate how structured classification systems preserve interpretability across multi-decade data revisions.

Long-term accessibility requires durable structural logic rather than temporary formatting adjustments. Scalable information hierarchy for AI platforms must therefore encode persistent entity relationships that remain stable even when indexing layers evolve.

Claim: Long-term accessibility depends on hierarchy durability.

Rationale: Systems that repeatedly crawl, index, or retrain on the same content encounter its structural patterns again over time, which reinforces previously observed hierarchical signals.

Mechanism: Stable semantic hierarchy for AI interpretation preserves entity continuity and reduces interpretive variance across updates.

Counterargument: Rapid model updates or architectural shifts may alter extraction logic despite consistent hierarchy.

Conclusion: Durable hierarchies adapt to system changes while maintaining core structural coherence.

Hierarchy as Persistent Knowledge Infrastructure

Hierarchy functions as persistent knowledge infrastructure when hierarchical data organization for AI aligns with consistent semantic layering. AI-driven information structuring encodes stable conceptual positions that models reference repeatedly during retrieval cycles. AI-compatible content hierarchy therefore becomes a structural backbone that supports both current and future generative systems.

Over time, structured patterns reinforce model expectations. When an AI-first hierarchical content framework maintains depth stability and definition consistency, generative systems exhibit lower interpretive variance. Consequently, a scalable information hierarchy for AI platforms ensures that knowledge remains accessible even as interface layers change.

Persistent hierarchy reduces interpretive erosion. Stable semantic positions anchor entities across revisions. As a result, long-term accessibility becomes measurable rather than assumed.

Monitoring and Updating Hierarchical Systems

Monitoring hierarchical systems ensures that structural durability remains intact during expansion. Structural audits evaluate whether AI-aligned content depth strategy remains consistent across new publications. Definition validation confirms that semantic hierarchy for AI interpretation preserves entity continuity across versions.

Extraction testing measures how AI-driven information structuring performs in live retrieval environments. Regular evaluation identifies drift before it affects generative visibility. Therefore, scalable information hierarchy for AI platforms requires operational oversight rather than passive maintenance.

- Structural audits

- Definition validation

- Extraction testing

Continuous evaluation protects generative visibility.
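A structural audit can include a rough measure of definition proximity: how many sentences separate the first mention of a term from the sentence that defines it. The sketch below is a heuristic under stated assumptions. It treats "X is", "X refers to", and "X means" as defining phrases and splits sentences on terminal punctuation, both of which are simplifications; `definition_distance` is a hypothetical helper.

```python
import re


def definition_distance(text, term):
    """Sentences between the first mention of `term` and its first definition.

    Returns None if the term is never mentioned or never defined.
    """
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]
    defining = re.compile(
        r"\b" + re.escape(term) + r"\s+(is|refers to|means)\b", re.IGNORECASE
    )
    first_mention = next(
        (i for i, s in enumerate(sentences) if term.lower() in s.lower()), None
    )
    first_definition = next(
        (i for i, s in enumerate(sentences) if defining.search(s)), None
    )
    if first_mention is None or first_definition is None:
        return None
    return first_definition - first_mention


if __name__ == "__main__":
    page = (
        "Semantic layering shapes long documents. It matters for scale. "
        "Semantic layering is the controlled arrangement of elements."
    )
    print(definition_distance(page, "semantic layering"))  # prints 2
```

A distance of 0 means the term is defined where it first appears. Larger values, or `None`, point to pages where the definition should be moved closer to the first mention.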

AI information hierarchy functions as a structural prerequisite for AI comprehension, generative visibility, machine-readable structure, and long-term AI-driven accessibility. It integrates conceptual grounding, architectural governance, semantic layering, extraction logic, and durability into a unified enterprise model. When organizations implement AI information hierarchy as infrastructure, they align structural clarity with generative reuse and scalable interpretation. Consequently, AI information hierarchy becomes a foundational enterprise design principle that sustains visibility across evolving AI ecosystems.

Checklist:

- Are core entities defined within immediate structural proximity?

- Does the hierarchy preserve stable H2–H4 depth across sections?

- Are concept, mechanism, and implication blocks clearly separated?

- Is terminology consistent throughout the scalable information hierarchy?

- Does the semantic layering support deterministic AI extraction?

- Is structural governance aligned with long-term AI-driven accessibility?
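The first checklist item, definition proximity, can be approximated with a simple heuristic. The following is a minimal sketch under stated assumptions: the document is already split into a list of paragraphs, a definition is recognized only by the phrases "is", "refers to", or "means" directly after the term, and the helper name `definition_distance` is hypothetical. Real definitions take more forms than this pattern captures.

```python
import re


def definition_distance(paragraphs, term):
    """Count paragraphs between the first mention of `term` and its definition.

    Returns 0 when the term is defined where it first appears, and None
    when the term is never mentioned or never defined.
    """
    term_lower = term.lower()
    # Assumption: a definition looks like "<term> is ...", "<term> refers to ...",
    # or "<term> means ...".
    pattern = re.compile(re.escape(term_lower) + r"\s+(is|refers to|means)\b")
    first_mention = None
    for index, paragraph in enumerate(paragraphs):
        text = paragraph.lower()
        if first_mention is None and term_lower in text:
            first_mention = index
        if first_mention is not None and pattern.search(text):
            return index - first_mention
    return None


paragraphs = [
    "Semantic layering matters for extraction.",
    "An unrelated paragraph about governance.",
    "Semantic layering is the controlled distribution of concepts.",
]
print(definition_distance(paragraphs, "Semantic layering"))
```

A distance of zero corresponds to a term defined within immediate structural proximity. Larger values point to terms that readers and models meet before they are defined.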

Hierarchical Interpretation Framework in Generative Systems

- Depth-encoded semantic segmentation. Multi-level structural layers function as discrete interpretive segments that large language models parse as ordered reasoning units rather than continuous text blocks.

- Definition-boundary stabilization. Immediate proximity between terms and their formal definitions reduces semantic dispersion and strengthens entity anchoring within the model’s internal representation.

- Progressive reasoning alignment. Ordered concept–mechanism–implication sequences mirror transformer attention propagation, reinforcing hierarchical reasoning structure during extraction.

- Cross-layer coherence signaling. Consistent terminology and predictable depth transitions enable generative systems to maintain context continuity across extended sections.

- Structural modularity under generative indexing. Clearly bounded semantic containers allow block-level reuse without fragmentation when content is surfaced in answer panels or conversational summaries.

Together, these architectural properties define how structured pages are interpreted as layered semantic systems, supporting stable extraction, consistent reasoning, and long-context comprehension in generative environments.
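To make the idea of depth-encoded segmentation and bounded semantic containers concrete, the sketch below splits a document into heading-delimited blocks, each carrying its depth and its path of parent headings. It illustrates how a publisher might model its own content for block-level reuse. It does not describe how any particular generative system parses pages. It assumes Markdown-style headings, and the name `split_into_containers` is hypothetical.

```python
import re

# Assumption: headings use Markdown ATX syntax ("## Title").
HEADING_RE = re.compile(r"^(#{1,6})\s+(.*\S)\s*$")


def split_into_containers(markdown_text):
    """Split text into heading-bounded blocks with depth and heading path."""
    containers = []
    path = []  # stack of (level, title) for the currently open headings
    current = None
    for line in markdown_text.splitlines():
        match = HEADING_RE.match(line)
        if match:
            level = len(match.group(1))
            title = match.group(2)
            # Close any open headings at the same or a deeper level.
            while path and path[-1][0] >= level:
                path.pop()
            path.append((level, title))
            current = {"path": [t for _, t in path], "depth": level, "text": ""}
            containers.append(current)
        elif current is not None and line.strip():
            # Body text belongs to the most recently opened heading.
            current["text"] = (current["text"] + " " + line.strip()).strip()
    return containers


document = (
    "## Hierarchy\nIntro text.\n"
    "### Concept\nA definition.\n"
    "### Mechanism\nHow it works.\n"
    "## Governance\nPolicies.\n"
)
for block in split_into_containers(document):
    print(block["depth"], " > ".join(block["path"]), "|", block["text"])
```

Because each block records its full heading path, it can be lifted out of the page without losing the context that its parent sections supply.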

FAQ: AI Information Hierarchy and Generative Visibility

What is AI information hierarchy?

AI information hierarchy is a structured ordering of concepts, mechanisms, and implications that allows generative systems to interpret meaning, preserve entity stability, and reuse semantic blocks reliably.

Why does AI information hierarchy matter for generative visibility?

Generative systems extract structured modules rather than entire pages, so visibility depends on clearly layered semantic units and stable structural boundaries.

How does AI information hierarchy differ from traditional content structure?

Traditional structure focuses on readability and navigation, while AI information hierarchy encodes machine-interpretable layers that support deterministic parsing and block-level summarization.

What role does semantic layering play in AI interpretation?

Semantic layering separates concept blocks, mechanism blocks, and implication blocks, which strengthens hierarchical reasoning structure and reduces ambiguity during extraction.

How do generative systems reuse structured content?

Models identify bounded semantic containers within a machine-interpretable information hierarchy and reassemble them into answer panels, summaries, or conversational responses.

Why is definition proximity important in AI-first architecture?

Immediate definitions anchor entities within the structural hierarchy, stabilizing meaning and reinforcing consistent semantic interpretation across long contexts.

How does hierarchy durability influence long-term AI accessibility?

Durable hierarchical organization preserves entity continuity across model updates and indexing changes, ensuring that structured knowledge remains interpretable over time.

What structural signals improve AI extraction reliability?

Clear heading depth, consistent terminology, stable taxonomy alignment, and explicit semantic containers increase deterministic parsing and generative reuse.

How does governance support scalable information hierarchy for AI platforms?

Governance synchronizes taxonomy, versioning, and structural modeling to maintain semantic continuity across expanding content ecosystems.

What defines an AI-first hierarchical content framework?

An AI-first hierarchical content framework encodes ordered semantic layers that align with model attention logic and support consistent interpretation across retrieval environments.

Glossary: Core Terms in AI Information Hierarchy

This glossary defines the structural terminology used throughout this article to ensure consistent interpretation by both human readers and generative systems.

AI Information Hierarchy

A structured ordering of concepts, mechanisms, and implications that enables AI systems to interpret, extract, and reuse semantic units with consistency.

Semantic Layering

The controlled distribution of conceptual elements across hierarchical levels to preserve reasoning order and entity stability.

Machine-Interpretable Structure

A content architecture designed with explicit boundaries and consistent depth markers to support deterministic parsing by AI systems.

Generative Reuse

The process by which structured semantic blocks are extracted and recomposed by AI systems into summaries, panels, or conversational responses.

Hierarchical Reasoning Structure

An ordered sequence of conceptual and procedural layers that mirrors the attention propagation logic of transformer-based models.

Structural Governance

A system of policies and controls that preserves terminology stability, taxonomy alignment, and hierarchical consistency over time.

Semantic Container

A bounded content unit such as a concept block, mechanism block, or implication block that isolates meaning within a defined structural role.

Definition Proximity

The placement of a term and its formal definition within immediate structural proximity to reinforce entity anchoring.

Structural Predictability

The degree to which a document maintains consistent depth layers and boundary signals across sections.

Long-Term AI Accessibility

The sustained interpretability of structured content across evolving generative systems and indexing models.
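A glossary like this one can also serve as a baseline for definition validation across versions. The sketch below is illustrative only. It assumes glossaries are available as plain term-to-definition dictionaries and compares them with Python's standard `difflib.SequenceMatcher`. The function name `find_definition_drift` and the 0.8 threshold are hypothetical choices. Character-level similarity is only a rough proxy for semantic drift.

```python
from difflib import SequenceMatcher


def find_definition_drift(baseline, current, threshold=0.8):
    """Return terms whose definitions changed noticeably or disappeared.

    Maps each drifted term to its similarity score (0.0 when the term is
    missing from the current version).
    """
    drifted = {}
    for term, old_definition in baseline.items():
        new_definition = current.get(term)
        if new_definition is None:
            drifted[term] = 0.0
            continue
        similarity = SequenceMatcher(
            None, old_definition.lower(), new_definition.lower()
        ).ratio()
        if similarity < threshold:
            drifted[term] = round(similarity, 2)
    return drifted


baseline = {
    "Semantic Container": "A bounded content unit that isolates meaning.",
    "Generative Reuse": "Extraction and recomposition of semantic blocks.",
    "Definition Proximity": "Placing a term next to its formal definition.",
}
current = {
    "Semantic Container": "A bounded content unit that isolates meaning.",
    "Generative Reuse": "Any page on the site that ranks well in search.",
}
print(find_definition_drift(baseline, current))
```

Flagged terms are candidates for editorial review. A low score shows that the wording moved, and a person still has to judge whether the meaning did.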