Last Updated on February 7, 2026 by PostUpgrade

Optimizing the Fold: Above vs. Below for AI

Content interpretation has shifted from visual UX patterns to structural analysis performed by AI systems. Modern search engines and generative models no longer depend on scrolling behavior or screen visibility to assess importance. Instead, they rely on content order, semantic placement, and early structural signals to determine relevance and reuse. In this environment, above the fold optimization operates as a meaning-prioritization mechanism rather than a design technique.

This article explains how AI systems interpret content positioned above and below the fold when building internal relevance and extraction models. The analysis focuses on how above the fold optimization affects semantic weighting, trust alignment, and generative reuse across AI-driven discovery surfaces. Rather than treating the fold as a UX boundary, the discussion frames it as a structural signal that influences machine-readable hierarchy.

By separating the functional roles of above and below the fold content, the article clarifies how page structure shapes AI interpretation, visibility outcomes, and long-term accessibility in generative systems.

The Conceptual Role of the Fold in AI Interpretation

AI systems do not perceive screens, scroll depth, or visual breakpoints. Instead, they infer importance from structural order and early semantic signals embedded in the document flow. In this context, above the fold content operates as a prioritization cue that informs extraction, summarization, and reuse decisions in generative systems, as reflected in standards for document order and interpretation defined by the W3C.

Definition: AI understanding refers to a model’s ability to interpret content priority, structural order, and semantic boundaries in a way that enables stable extraction, accurate summarization, and consistent reuse across generative systems.

Claim: Generative systems infer content priority from early structural placement rather than visual presentation.

Rationale: Language models require deterministic signals to rank meaning without relying on human interaction patterns.

Mechanism: Models assign higher interpretive weight to content that appears earlier in the document tree and semantic hierarchy.

Counterargument: Visual prominence can still influence user engagement metrics that indirectly affect downstream signals.

Conclusion: Structural position relative to the fold operates as a primary indicator of importance for AI interpretation.
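The positional mechanism can be illustrated with a toy model. It does not describe how any production model actually weights content. It assumes a geometric decay in which each later section receives a fixed fraction of the previous section's weight. The function name `positional_weights` and the default decay of 0.8 are hypothetical choices made for illustration.

```python
def positional_weights(num_sections: int, decay: float = 0.8) -> list[float]:
    """Return one normalized weight per section, decreasing with position.

    Toy model only: `decay` is a hypothetical parameter. Each section gets
    `decay` times the raw weight of the section before it, and the weights
    are normalized so they sum to 1.
    """
    if num_sections <= 0:
        return []
    if not 0 < decay <= 1:
        raise ValueError("decay must be in the range (0, 1]")
    raw = [decay ** i for i in range(num_sections)]
    total = sum(raw)
    return [w / total for w in raw]


if __name__ == "__main__":
    sections = ["Definition", "Claim", "Mechanism", "Supporting detail"]
    for name, weight in zip(sections, positional_weights(len(sections))):
        print(f"{name}: {weight:.3f}")
```

Setting `decay` to 1.0 removes the positional effect entirely, which gives a useful baseline when comparing alternative placements.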

Above the Fold as a Priority Signal

Content placed early in the document establishes a baseline for relevance assessment. When above the fold visibility aligns with clear semantic framing, AI systems treat that content as foundational for subsequent interpretation. As a result, early sections disproportionately shape summaries, entity extraction, and answer synthesis.

Moreover, early placement reduces ambiguity by presenting core concepts before modifiers or supporting details. This ordering allows models to stabilize meaning and limit conflicting inferences as the document progresses. Consequently, priority signals emerge from sequence and structure rather than presentation style.

Put simply, content that appears first tells AI systems what matters most and how to interpret everything that follows.

Below the Fold as a Contextual Extension

Information placed later in the document expands, qualifies, or operationalizes previously introduced concepts. Below the fold content often supplies mechanisms, examples, and implications that depend on earlier context for correct interpretation. This dependency limits its standalone priority while increasing its explanatory role.

Because generative systems process documents sequentially, later sections inherit constraints established by earlier definitions and claims. This structure prevents late content from redefining meaning unless it introduces explicit contradictions. Therefore, contextual extensions reinforce rather than compete with the primary narrative.

In simpler terms, content placed later supports and clarifies earlier ideas instead of challenging their priority.
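Here is a minimal sketch of this sequential constraint. It assumes a simplified reader that records the first definition it sees for each term and accepts a later one only when that definition is flagged as an explicit redefinition. `DefinitionRegistry` and its `read` method are hypothetical names used for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class DefinitionRegistry:
    """Toy reader: the first definition of a term fixes its meaning.

    A later definition replaces the earlier one only when it is flagged as
    an explicit redefinition; otherwise it is recorded and ignored.
    """
    meanings: dict[str, str] = field(default_factory=dict)
    ignored: list[tuple[str, str]] = field(default_factory=list)

    def read(self, term: str, meaning: str, explicit_redefinition: bool = False) -> None:
        key = term.lower()
        if key not in self.meanings or explicit_redefinition:
            self.meanings[key] = meaning
        else:
            # Later content cannot silently override an earlier definition.
            self.ignored.append((term, meaning))


if __name__ == "__main__":
    reader = DefinitionRegistry()
    reader.read("fold boundary", "threshold between primary meaning and context")
    reader.read("fold boundary", "bottom edge of the viewport")  # ignored
    print(reader.meanings["fold boundary"])
```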

Fold-Based Visibility Signals in Generative Systems

Generative engines do not evaluate visibility through scrolling, viewport exposure, or interaction depth. Instead, they rank extractable meaning based on early structural signals that indicate priority and semantic prominence. Within this logic, above the fold optimization determines how generative systems weight content for summarization, reuse, and citation, a principle aligned with research on language model salience and representation conducted by the Stanford NLP Group.

Definition: Visibility signals are indicators that influence extractable relevance in AI outputs by communicating priority, clarity, and semantic confidence through structure.

Claim: Generative systems derive visibility from structural prominence rather than user-facing exposure metrics.

Rationale: Language models optimize for reliable extraction and must identify stable meaning signals without behavioral data.

Mechanism: Visibility signals above the fold emerge from early placement, clear framing, and controlled semantic density that reinforce above the fold optimization in generative ranking logic.

Counterargument: Engagement-based signals can still affect upstream ranking layers that influence content availability.

Conclusion: Structural visibility signals act as the primary weighting mechanism for generative interpretation.

Principle: Content achieves generative visibility when its structural placement, semantic hierarchy, and conceptual boundaries remain stable enough for AI systems to assign priority without interpretive conflict.

Content Prominence and Salience

Structural prominence determines how strongly content anchors downstream interpretation. When content prominence above the fold coincides with explicit definitions and declarative framing, models treat that material as a reference point for the entire document. In this context, above the fold optimization increases the likelihood that early concepts become dominant anchors for generative summaries and answer synthesis.

At the same time, content salience above the fold reduces interpretive noise by signaling which concepts deserve attention first. Because visibility signals above the fold are inferred structurally, salience depends on position and semantic isolation rather than visual emphasis. As a result, models rely on prominence patterns to guide relevance scoring.

In practical terms, content that appears early and is clearly framed becomes the semantic center that later sections depend on.

Informational Density and Early Placement

Informational density above the fold affects how much meaning AI systems can extract per token during initial processing. Dense early sections allow models to build compact internal representations that support accurate summarization and reasoning. This efficiency becomes critical in constrained context windows.

However, density must remain controlled to preserve interpretability. Overloaded early sections can reduce clarity and weaken visibility signals above the fold if concepts overlap or compete. Therefore, effective early placement balances completeness with semantic separation.

Simply stated, concentrated and well-structured information early in the document improves how reliably AI systems understand and reuse content.

| Position | Signal Strength | Extractability | Typical AI Use |
|---|---|---|---|
| Top structural section | High | High | Summaries, direct answers |
| Mid-document section | Medium | Medium | Context expansion |
| Late document section | Low | Low | Supporting detail |
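The tiers in the table above can be approximated by relative position. The sketch below assumes equal thirds as cut-offs. The article does not define exact boundaries, so `position_tier` and its thresholds are illustrative only.

```python
def position_tier(index: int, total: int) -> str:
    """Map a section's position (0-based) to one of the table's tiers.

    Hypothetical cut-offs: first third, middle third, final third.
    Integer comparisons avoid floating-point rounding at the boundaries.
    """
    if total <= 0 or not 0 <= index < total:
        raise ValueError("index must be in the range [0, total)")
    if 3 * index < total:
        return "Top structural section"
    if 3 * index < 2 * total:
        return "Mid-document section"
    return "Late document section"


if __name__ == "__main__":
    headings = ["Definition", "Claim", "Mechanism", "Example", "Evidence", "Glossary"]
    for i, heading in enumerate(headings):
        print(f"{heading}: {position_tier(i, len(headings))}")
```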

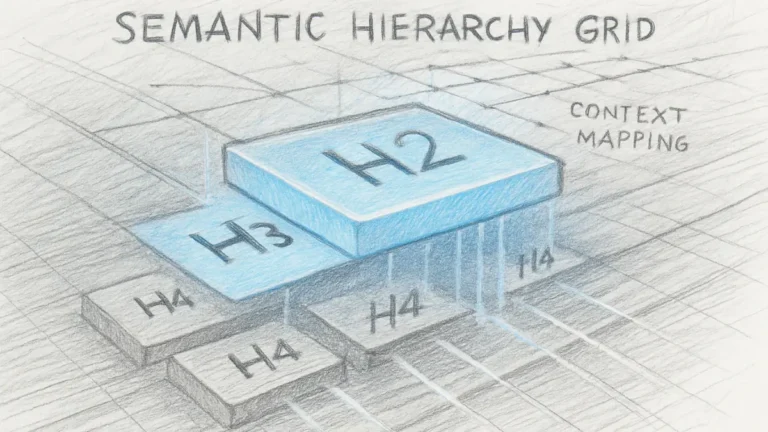

Structural Hierarchy Above vs. Below the Fold

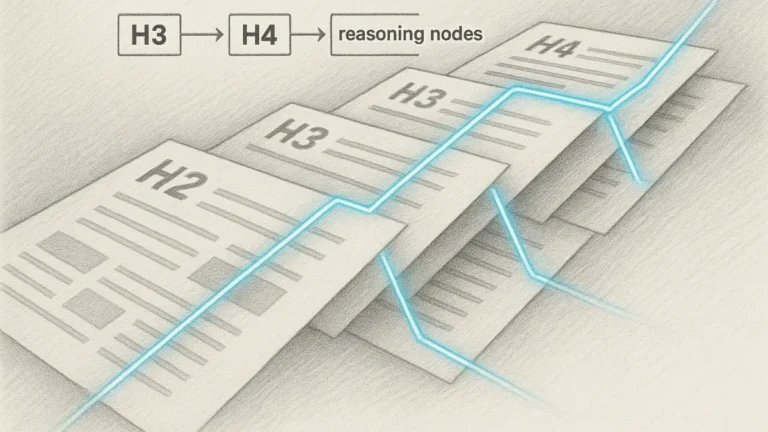

AI systems construct internal relevance graphs from early structural cues rather than visual affordances. Because hierarchy emerges from order and segmentation, fold-based information hierarchy determines how models assign importance before integrating supporting context. In this process, above the fold optimization plays a stabilizing role by fixing which concepts become top-level nodes in the internal graph, a pattern consistent with hierarchical representation learning studied at MIT CSAIL.

Definition: Information hierarchy is the ordered distribution of meaning units by importance, established through structural placement and semantic segmentation.

Claim: Generative systems infer hierarchical importance from early structural placement rather than from later contextual expansion.

Rationale: Models require a stable ordering of concepts to build reliable internal graphs for extraction and reasoning.

Mechanism: Early sections define top-level nodes, while subsequent sections attach dependent nodes with constrained scope.

Counterargument: Highly explicit late definitions can reweight hierarchy if they introduce authoritative corrections.

Conclusion: Hierarchical weighting originates above the fold and governs how later content is interpreted and integrated.
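The graph-building mechanism can be sketched as a heading tree. Each heading attaches to the nearest earlier heading at a shallower level, so early top-level headings become the nodes that later content depends on. This is a hypothetical illustration. `Node` and `build_hierarchy` are invented names, and the heading levels in the usage example are assumed, because this page's headings do not carry explicit levels.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    title: str
    level: int
    children: list["Node"] = field(default_factory=list)


def build_hierarchy(headings: list[tuple[int, str]]) -> Node:
    """Build a tree from (level, title) pairs given in document order.

    Each heading attaches to the nearest preceding heading with a smaller
    level, so earlier, shallower headings become parents of later ones.
    """
    root = Node(title="<document>", level=0)
    stack = [root]
    for level, title in headings:
        if level < 1:
            raise ValueError("heading levels start at 1")
        # Pop until the top of the stack is strictly shallower than this heading.
        while stack[-1].level >= level:
            stack.pop()
        node = Node(title=title, level=level)
        stack[-1].children.append(node)
        stack.append(node)
    return root


if __name__ == "__main__":
    tree = build_hierarchy([
        (1, "Optimizing the Fold: Above vs. Below for AI"),
        (2, "The Conceptual Role of the Fold in AI Interpretation"),
        (3, "Above the Fold as a Priority Signal"),
        (3, "Below the Fold as a Contextual Extension"),
        (2, "Fold-Based Visibility Signals in Generative Systems"),
    ])

    def show(node: Node, depth: int = 0) -> None:
        print("  " * depth + node.title)
        for child in node.children:
            show(child, depth + 1)

    show(tree)
```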

Hierarchy Formation Above the Fold

Hierarchy forms when early sections introduce core concepts with clear boundaries and declarative framing. In this phase, the page’s fold-based hierarchy determines which ideas become primary nodes in the model’s internal graph. These nodes anchor subsequent references and constrain how new information can attach.

Furthermore, early hierarchy reduces ambiguity by limiting the number of competing top-level interpretations. When foundational concepts appear first, models assign them greater stability and reuse potential. This effect persists across summarization and answer generation.

Put simply, what appears first defines the top of the hierarchy and sets the rules for everything that follows.

Supportive Meaning Below the Fold

Later sections expand the hierarchy by supplying detail, evidence, and implications that depend on earlier nodes. Below the fold relevance emerges from alignment with established concepts rather than from independent priority. This alignment ensures that added information strengthens coherence instead of fragmenting meaning.

Because generative systems process content sequentially, later material inherits scope constraints set earlier. As a result, supportive sections rarely override hierarchy unless they explicitly redefine terms. This preserves stability across long documents.

In practical terms, later content explains and supports earlier ideas rather than competing with them for dominance.

Meaning Reinforcement Patterns

Reinforcement occurs when later sections repeat concepts using compatible framing and controlled variation. Fold-based meaning emphasis strengthens internal graph edges by confirming relationships already established above the fold. This repetition improves confidence without introducing redundancy.

At the same time, reinforcement relies on consistency rather than volume. Sparse but aligned references carry more weight than frequent but loosely connected mentions. Models favor confirmation patterns that validate earlier structure.

In essence, reinforcement solidifies hierarchy by confirming earlier meaning instead of reshaping it.

Content Placement and AI Interpretation Logic

Content placement functions as a semantic instruction rather than a layout choice. Generative systems interpret sequence and position as signals that define priority, dominance, and scope, which directly affects extraction and reuse decisions. Within this framework, content placement above the fold interacts with above the fold optimization to determine how models weight meaning during early parsing, consistent with research on representation and selection mechanisms described by the Allen Institute for Artificial Intelligence (AI2).

Definition: Content placement is the positional encoding of informational priority that guides how AI systems rank, interpret, and reuse meaning units.

Claim: Generative systems interpret placement as a directive that determines which content receives dominant semantic weight.

Rationale: Models require unambiguous signals to resolve priority without relying on visual cues or user behavior.

Mechanism: Early placement assigns higher weighting to concepts, while later placement constrains meaning within predefined scope.

Counterargument: Highly authoritative content placed later can still influence interpretation if it introduces explicit redefinition.

Conclusion: Placement operates as a control mechanism that governs dominance and limits reinterpretation in generative processing.

Dominant Content Zones

Dominant zones emerge where placement aligns with early structural position and clear declarative framing. When above the fold content dominance is established through early definitions and claims, AI systems treat these elements as anchors for the entire document. This anchoring effect increases the likelihood of reuse in summaries and synthesized answers.

Additionally, dominant zones reduce interpretive variance by signaling which concepts should guide subsequent reasoning. Because models process content sequentially, early dominance constrains how later information can attach or modify meaning. This constraint stabilizes interpretation across long-form content.

In simple terms, content placed early tells AI systems what should lead the interpretation and what should follow.

Supporting Content Zones

Supporting zones appear later in the document and provide elaboration, justification, or operational detail. Supporting content below the fold derives its relevance from alignment with earlier dominant concepts rather than from independent priority. This relationship preserves coherence and prevents fragmentation.

Moreover, supporting zones inherit semantic boundaries defined earlier. They extend meaning without challenging established dominance unless they introduce explicit corrective statements. This pattern ensures that added detail strengthens rather than disrupts interpretation.

Put plainly, later content explains and reinforces earlier ideas instead of competing with them.

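Three placement factors summarize how this distribution works:

- Content prioritization by fold: early placement determines which concepts lead interpretation.

- Content weighting above the fold: dominant zones receive the strongest semantic weight and anchor reuse.

- Content depth below the fold: supporting zones add elaboration and detail within the scope set earlier.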

Together, these factors illustrate how placement distributes authority across the page while maintaining a stable interpretive hierarchy.

Page Segmentation and Meaning Distribution

Segmentation defines how AI systems establish boundaries between meaning units during document processing. Rather than relying on visual breaks, models infer separation and priority from structural order and semantic transitions. In this process, page segmentation by fold works together with above the fold optimization to control how meaning is distributed and constrained across sections, a pattern aligned with research on digital interpretation and information boundaries analyzed by the Oxford Internet Institute.

Definition: Page segmentation is the division of content into interpretable meaning zones that guide how AI systems separate, weight, and connect ideas.

Claim: Generative systems distribute meaning according to structural segmentation rather than visual grouping.

Rationale: Models require explicit boundaries to prevent semantic leakage between unrelated concepts.

Mechanism: Segmentation establishes zones with defined scope, while above the fold optimization fixes the initial meaning center that constrains later interpretation.

Counterargument: Highly repetitive themes can blur segmentation if boundaries are not structurally reinforced.

Conclusion: Clear segmentation governs how meaning is distributed and preserved across the document.

Example: A page that introduces its primary concepts above the fold with clear definitions and bounded sections allows AI systems to segment meaning reliably, increasing the likelihood that these sections persist across generated summaries and ranking contexts.
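Here is a minimal segmentation sketch. It assumes a Markdown source in which `#` headings mark zone boundaries. Real systems may segment by DOM structure, sentences, or learned boundaries instead. `segment` is a hypothetical helper that only shows how explicit headings yield discrete, bounded zones.

```python
import re

# A Markdown ATX heading: 1-6 '#' characters, whitespace, then the title.
HEADING = re.compile(r"^(#{1,6})\s+(.*\S)\s*$")


def segment(markdown: str) -> list[dict]:
    """Split Markdown text into meaning zones, one zone per heading.

    Non-empty lines are attached to the most recent heading. Text that
    appears before the first heading goes into an untitled leading zone.
    """
    zones = [{"heading": None, "level": 0, "lines": []}]
    for line in markdown.splitlines():
        match = HEADING.match(line)
        if match:
            zones.append({"heading": match.group(2), "level": len(match.group(1)), "lines": []})
        elif line.strip():
            zones[-1]["lines"].append(line.strip())
    # Drop the untitled zone when the document starts with a heading.
    if not zones[0]["lines"]:
        zones.pop(0)
    return zones


if __name__ == "__main__":
    page = "# Fold Boundary\nA fold boundary separates primary meaning from context.\n\n## Evidence\nLater sections add detail.\n"
    for zone in segment(page):
        print(zone["level"], zone["heading"], zone["lines"])
```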

Meaning Distribution Above the Fold

Early segments concentrate primary meaning by introducing core concepts within clearly bounded zones. When page meaning above the fold is defined through concise definitions and declarative statements, AI systems treat these zones as authoritative reference frames. In this configuration, above the fold optimization increases semantic stability by limiting competing interpretations during early parsing.

Furthermore, early distribution limits interpretive drift by anchoring subsequent references to a known semantic center. Because models process content sequentially, early zones influence how later information is scoped and connected. This ordering maintains coherence across long-form documents.

In simple terms, meaning introduced early becomes the reference point for everything that follows.

Contextual Expansion Below the Fold

Later segments expand meaning by adding context, evidence, or implications that depend on earlier zones. Meaning distribution by fold ensures that these expansions remain subordinate to established boundaries rather than redefining them. This dependency preserves interpretive stability while allowing depth.

Additionally, segmented expansion prevents late content from competing for primary importance unless it explicitly revises earlier claims. AI systems recognize this structure and attach later details as extensions rather than new centers of meaning. As a result, segmentation supports both depth and control.

Put plainly, later sections enrich understanding without shifting the original meaning focus.

Fold-Aware Page Structure for AI Systems

AI systems favor predictable structural patterns because consistency reduces interpretive variance during parsing and extraction. Instead of inferring meaning from presentation, models rely on structural regularity to identify priority signals and scope boundaries. Within this context, fold-aware content structure defines how page architecture communicates intent and hierarchy to generative systems, a principle aligned with guidance on information structuring and signal clarity outlined by NIST.

Definition: Fold-aware structure is page architecture aligned with AI prioritization logic through predictable ordering, stable segmentation, and consistent semantic cues.

Claim: Generative systems interpret fold-aware structures as reliable indicators of priority and scope.

Rationale: Predictable structure enables models to assign weight without relying on probabilistic inference from layout noise.

Mechanism: Consistent ordering, early definitions, and bounded sections create repeatable signals that models reuse across documents.

Counterargument: Highly dynamic or creative layouts can still be interpreted when reinforced by explicit textual markers.

Conclusion: Fold-aware structure improves interpretive stability by providing deterministic cues for AI systems.

Structural Cues Above the Fold

Structural cues placed early communicate how the document should be read and prioritized. When structural cues above the fold include clear headings, definitions, and declarative statements, models establish a stable parsing strategy from the outset. This strategy reduces ambiguity in downstream extraction.

Additionally, early cues constrain interpretation by signaling which sections represent core concepts versus supporting detail. Because AI systems process content sequentially, these cues shape how later information is scoped and attached. The result is a more controlled and reusable semantic graph.

In simple terms, early structure tells AI systems how to organize meaning before they read further.

Layout Signals and Interpretation

Layout signals contribute to interpretation when they align with structural expectations rather than visual styling. Fold-related layout signals, such as consistent heading depth, spacing tied to semantic units, and orderly progression, reinforce how models separate and connect ideas. These signals complement textual cues without requiring visual perception.

Moreover, aligned layout signals reduce the likelihood of misclassification between primary and secondary content. When structure and layout agree, models can confidently assign relevance and extraction priority. This agreement supports consistent summarization across contexts.

Put plainly, orderly layout that follows structure helps AI systems confirm what the structure already indicates.
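Consistent heading depth is a layout signal that is easy to check mechanically. The sketch below assumes heading levels have already been extracted in document order (1 for h1, 2 for h2, and so on). It flags any jump of more than one level. `heading_depth_issues` is a hypothetical helper, and it treats a page that opens with h2 as skipping a level.

```python
def heading_depth_issues(levels: list[int]) -> list[str]:
    """Flag headings that jump more than one level deeper than the previous one.

    `levels` lists heading levels in document order (1 = h1, 2 = h2, ...).
    Moving back up (for example h3 to h2) is allowed; skipping down
    (for example h2 to h4) leaves the parent/child relationship ambiguous.
    """
    issues = []
    previous = 0  # Treat the start of the document as level 0.
    for position, level in enumerate(levels):
        if level > previous + 1:
            issues.append(f"heading {position}: level {level} follows level {previous}")
        previous = level
    return issues


if __name__ == "__main__":
    print(heading_depth_issues([1, 2, 3, 2, 4]))
```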

Attention Modeling Without Human Scrolling

AI systems model attention without observing clicks, scroll depth, or viewport exposure. Instead, they infer importance from structural order, early placement, and semantic clarity. In this setting, attention signals above the fold determine which content becomes salient during extraction and summarization, reflecting research on computational attention and language understanding from Carnegie Mellon University’s Language Technologies Institute.

Definition: Attention modeling is the algorithmic estimation of importance without interaction, based on structural cues and semantic signals.

Claim: Generative systems infer attention from structural signals rather than from human behavior.

Rationale: Models must assign importance in environments where interaction data is unavailable or unreliable.

Mechanism: Early placement, explicit definitions, and bounded sections increase salience and guide extraction order.

Counterargument: Interaction signals can still inform upstream ranking layers when such data exists.

Conclusion: Structural attention modeling enables consistent prioritization without dependence on user behavior.

Attention Hierarchy Formation

Attention hierarchy forms when early sections establish dominant concepts with clear scope and ordering. In this process, fold-based attention hierarchy assigns greater weight to concepts introduced before supporting detail appears. These early anchors guide how subsequent information is interpreted and connected.

Furthermore, hierarchical attention reduces competition between concepts by limiting which ideas can claim primary importance. Because models process content sequentially, early hierarchy constrains later attachments and stabilizes relevance scoring. This stabilization improves reuse across summaries and generated answers.

Put simply, the order of presentation defines what AI systems pay attention to first and most consistently.

Extractable Priority Signals

Extractable signals indicate which content should be selected during summarization and answer synthesis. Content discovery above the fold benefits from clear framing, concise definitions, and separation from secondary detail. These signals increase confidence during extraction and reduce the risk of misinterpretation.

At the same time, extractability depends on alignment between structure and semantics. When early sections present unambiguous claims, models can reliably surface them across contexts. This reliability supports consistent representation in generative outputs.

In practical terms, content that is easy to extract early is more likely to appear in AI-generated responses.

An enterprise documentation page was restructured to move definitions and core claims above the fold. Before the change, AI summaries emphasized secondary examples and missed the central concept. After restructuring, summaries consistently reflected the primary definition and its implications. Because the wording did not change, the shift suggests that placement alone altered which content the summaries treated as primary.
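A toy extractor that keeps only the first few explicitly labeled statements can reproduce the effect in this example. The labels follow this article's own `Definition:`, `Claim:`, and `Conclusion:` convention. Treating them as extraction cues is an assumption made for illustration, and so is the `extract_labeled` helper. Because selection stops at `limit`, moving a definition earlier changes what gets extracted, even though its wording stays the same.

```python
import re

# Lines such as "Definition: ..." follow this article's own labeling convention.
LABEL = re.compile(r"^(Definition|Claim|Conclusion):\s*(.+)$")


def extract_labeled(text: str, limit: int = 3) -> list[tuple[str, str]]:
    """Return up to `limit` labeled statements, in document order.

    Selection stops once `limit` statements are found, so whatever is
    labeled earliest wins regardless of its wording.
    """
    if limit <= 0:
        return []
    found = []
    for line in text.splitlines():
        match = LABEL.match(line.strip())
        if match:
            found.append((match.group(1), match.group(2).strip()))
            if len(found) == limit:
                break
    return found


if __name__ == "__main__":
    before = "Claim: Summaries should lead with the core concept.\nDefinition: Generative visibility is the likelihood of reuse."
    after = "Definition: Generative visibility is the likelihood of reuse.\nClaim: Summaries should lead with the core concept."
    print(extract_labeled(before, limit=1))
    print(extract_labeled(after, limit=1))
```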

Implications for Generative Visibility and Ranking

Fold optimization shapes how content persists and reappears across AI-driven discovery surfaces over time. Rather than influencing short-term interaction metrics, structural placement affects whether meaning is extracted, summarized, and reused consistently. In this context, page structure above vs. below the fold determines how generative systems rank and retain information, a relationship examined in research on model generalization and representation stability by DeepMind Research.

Definition: Generative visibility is the likelihood that content is selected, reused, and cited in AI-generated answers across different contexts.

Claim: Generative visibility depends on structural placement that stabilizes meaning during initial interpretation.

Rationale: Models favor content that can be reliably extracted and reused without reinterpretation risk.

Mechanism: Early placement increases semantic confidence, enabling consistent ranking and repeated reuse across outputs.

Counterargument: Highly authoritative sources can still achieve visibility despite suboptimal placement.

Conclusion: Structural positioning governs long-term generative visibility more than transient ranking factors.

Reuse Probability and Early Placement

Reuse probability increases when core concepts appear early and are framed with clear boundaries. In this configuration, the fold’s influence on content interpretation limits ambiguity and supports stable extraction across summarization tasks. Models repeatedly surface such content because its meaning remains consistent under compression.

Additionally, early placement improves alignment between ranking and reuse. When interpretation stabilizes at the beginning of processing, later transformations preserve intent rather than altering emphasis. This stability supports durable visibility across generative answers.

In simpler terms, content placed early is easier for AI systems to reuse accurately and repeatedly.

Strategic Implications for Enterprise Content

Enterprise content strategies benefit from aligning structure with generative reuse logic. Fold-based content hierarchy signals help organizations ensure that core definitions and claims persist across AI summaries and search results. This alignment reduces dependency on ranking volatility.

Moreover, strategic placement enables predictable performance across different models and platforms. When hierarchy and placement remain consistent, generative systems converge on similar interpretations. This convergence strengthens long-term discoverability.

Put plainly, enterprises that structure content for reuse gain more consistent visibility than those that rely on ranking alone.

Checklist:

- Is primary meaning introduced before contextual expansion?

- Are fold boundaries aligned with semantic hierarchy rather than layout?

- Does early structure constrain later interpretation?

- Are definitions placed before dependent explanations?

- Is semantic priority reinforced through consistent section ordering?

- Does the page maintain interpretive stability across its full length?
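Part of the checklist item on definition order can be automated. This sketch assumes the author records which terms each section defines. `Section` and `definition_order_issues` are hypothetical names. Whole-word, case-insensitive matching is only a crude stand-in for real term detection.

```python
import re
from dataclasses import dataclass, field


@dataclass
class Section:
    title: str
    text: str
    defines: set[str] = field(default_factory=set)  # terms this section defines


def mentions(term: str, text: str) -> bool:
    """Whole-word, case-insensitive match (a crude proxy for term usage)."""
    return re.search(rf"\b{re.escape(term)}\b", text, flags=re.IGNORECASE) is not None


def definition_order_issues(sections: list[Section]) -> list[str]:
    """Flag terms that appear in a section earlier than the one defining them."""
    defined_at: dict[str, int] = {}
    for index, section in enumerate(sections):
        for term in section.defines:
            defined_at.setdefault(term.lower(), index)

    issues = []
    for term, def_index in sorted(defined_at.items()):
        for section in sections[:def_index]:
            if mentions(term, section.text):
                issues.append(
                    f"'{term}' used in '{section.title}' "
                    f"before its definition in '{sections[def_index].title}'"
                )
                break  # report only the first early use of each term
    return issues


if __name__ == "__main__":
    page = [
        Section("Intro", "A meaning zone anchors interpretation."),
        Section("Glossary", "A meaning zone is a bounded section.", {"meaning zone"}),
    ]
    print(definition_order_issues(page))
```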

Interpretive Signals in Fold-Oriented Page Architecture

- Positional salience encoding. Early structural zones function as priority anchors, enabling AI systems to assign relative importance before contextual expansion occurs.

- Sequential meaning constraint. Ordered section flow limits reinterpretation by fixing conceptual scope prior to downstream elaboration.

- Boundary-based segmentation. Structural breaks associated with fold-aware layout establish discrete meaning zones that prevent semantic overlap.

- Hierarchy-driven weighting. Depth placement within the page architecture influences how generative systems weight concepts during extraction and reuse.

- Early-context stabilization. Initial structural framing reduces interpretive variance by stabilizing reference points used throughout the document.

This structural layer illustrates how fold-oriented architecture shapes AI interpretation by governing priority, scope, and meaning stability without relying on visual perception or interaction signals.

FAQ: Generative Engine Optimization (GEO)

What is Generative Engine Optimization?

Generative Engine Optimization focuses on structuring content so AI systems can interpret, prioritize, and reuse meaning units in generated answers.

How does GEO differ from traditional SEO?

Traditional SEO emphasizes ranking signals, while GEO addresses how AI systems interpret structure, hierarchy, and semantic priority during extraction.

Why is GEO relevant to fold-based content structure?

Fold-aware structure influences which content AI systems treat as primary meaning versus supporting context during interpretation.

How do generative engines evaluate content importance?

Generative systems infer importance from structural order, early placement, and semantic clarity rather than from visual layout or scrolling behavior.

What role does page structure play in AI interpretation?

Page structure defines semantic boundaries and hierarchy, enabling AI systems to distribute meaning consistently across sections.

Why does early content placement affect AI reuse?

Content introduced early establishes reference points that guide summarization, ranking, and long-term reuse in generative answers.

How does fold-aware structure influence generative visibility?

Fold-aware structure stabilizes interpretation, increasing the likelihood that core concepts persist across AI-generated responses.

Does GEO replace SEO in AI-driven search?

GEO complements SEO by addressing interpretation and reuse logic that ranking-based optimization does not fully cover.

What determines whether content is reused by AI systems?

Reuse depends on structural clarity, stable hierarchy, and consistent semantic framing rather than on popularity signals alone.

How does GEO support long-term AI accessibility?

By aligning structure with AI interpretation patterns, GEO helps content remain understandable and reusable as generative systems evolve.

Glossary: Key Terms in Fold-Based Content Interpretation

This glossary defines core terminology related to fold-aware structure, semantic priority, and AI interpretation used throughout the article.

Above the Fold Optimization

A structural optimization approach that places primary meaning units early in the document to guide AI interpretation, extraction, and reuse.

Fold Boundary

A conceptual structural threshold separating primary meaning zones from contextual expansion within a page.

Semantic Priority

The relative importance assigned to content units by AI systems based on placement, structure, and clarity.

Meaning Zone

A structurally bounded section of content interpreted by AI systems as a coherent semantic unit.

Structural Hierarchy

The ordered relationship between content sections that determines dominance, dependency, and interpretive scope.

Visibility Signal

A structural indicator that influences whether content is extracted, summarized, or reused by generative systems.

Interpretive Stability

The degree to which content maintains consistent meaning across AI extraction, summarization, and reuse.

Sequential Interpretation

The process by which AI systems interpret content in order, allowing early sections to constrain later meaning.

Generative Reuse

The repeated inclusion of content in AI-generated answers based on structural clarity and semantic confidence.

Fold-Aware Structure

A page architecture that aligns content placement with AI prioritization logic to control interpretation and reuse.