Last Updated on March 22, 2026 by PostUpgrade

From SERPs to Summaries: Competing in AI Response Panels

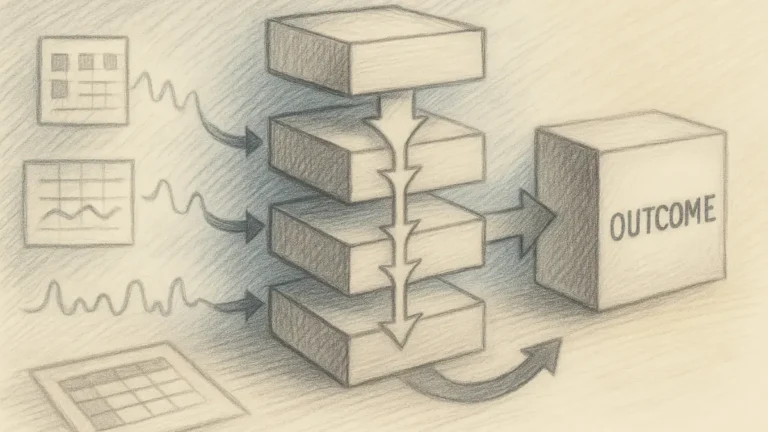

AI does not rank your page. It extracts stable semantic fragments, validates entities across sources, and only then decides whether your content deserves inclusion in a synthesized answer.

TL;DR: Traditional SEO focuses on ranking pages, but AI panels prioritize extracting structured meaning, causing strong pages to lose visibility if their content is not modular and coherent. This leads to reduced clicks and missed inclusion in summaries despite high authority. To solve this, content must be rebuilt as semantic containers with stable entities, clear hierarchy, and reinforced citations so AI can interpret, extract, and reuse it reliably. The result is consistent inclusion in AI-generated panels and expanded visibility beyond clicks.

If your content cannot be cleanly extracted and reused by AI, it will be ignored even if it ranks.

AI response panels optimization defines the structural discipline required to compete in environments where synthesized summaries replace traditional link-based rankings. Generative systems now determine visibility through inclusion in answer surfaces rather than through positional prominence in SERPs. Therefore, enterprises must realign architecture, authority modeling, and measurement systems to secure sustainable placement inside AI-generated panels.

Search ecosystems have shifted from index-first retrieval to synthesis-first delivery. Large language models evaluate structured signals, entity consistency, and contextual clarity before generating panel outputs. As a result, competing in AI response panels requires systemic transformation rather than incremental SEO adjustments.

Structural Shift: From Blue Links to AI Panels

The transition from link listings to synthesized summaries redefines ranking mechanics and changes how sites compete as search moves from blue links to AI panels. Research conducted by the Stanford Natural Language Processing Group demonstrates that transformer-based architectures prioritize contextual coherence over positional ordering. Consequently, panel-based search competition now depends on semantic stability and structured traversal rather than homepage authority alone.

An AI response panel is a synthesized answer layer generated by large language models that aggregates multiple sources into a unified contextual summary. Unlike traditional SERPs, this response surface evaluates structured extraction eligibility before citation placement.

Claim: The migration from SERPs to AI-generated panels changes competitive visibility models.

Rationale: Generative systems reward contextual integrity and structural clarity instead of hyperlink prominence.

Mechanism: Models aggregate content signals, entity authority, and semantic containers to construct ranking inside AI summaries.

Counterargument: Traditional retrieval layers still influence upstream document selection.

Conclusion: Sustainable visibility requires architectural adaptation to AI response surface visibility dynamics.

Retrieval Pipeline Transformation

Generative search pipelines prioritize contextual assembly over sequential ranking. Instead of presenting blue links, the system consolidates high-confidence fragments into a coherent response. Therefore, the SERP-to-AI-panel transition demands structural precision at the content layer.

Moreover, large language models integrate retrieval augmentation, entity resolution, and confidence scoring before synthesis. As a result, AI driven summary inclusion depends on predictable structural traversal and not on isolated keyword signals.

Content must function as modular knowledge units rather than isolated landing pages. Clear segmentation improves eligibility for AI curated response placement.

Ranking Inside AI Summaries

Ranking inside AI summaries operates through probabilistic weighting of semantic consistency. Models assign greater weight to structurally stable sections that reinforce entity definitions across multiple contexts. Consequently, AI summary ranking factors include entity alignment, citation reinforcement, and structured hierarchy.

At the same time, models penalize ambiguous phrasing and inconsistent terminology. Thus, AI panel relevance scoring reflects semantic predictability rather than aesthetic formatting.

In practical terms, panels favor coherent knowledge containers over promotional phrasing. Structured depth increases inclusion probability.

| System Layer | Traditional SERP Model | AI Panel Model |
|---|---|---|
| Retrieval Logic | Keyword ranking and backlink authority | Contextual synthesis and entity validation |
| Visibility Driver | Position in link list | Inclusion in synthesized summary |
| Ranking Signal | Page-level authority metrics | Fragment-level semantic stability |
| Measurement Focus | Click-through rate | Citation presence and surface inclusion |

This structural shift establishes the foundation for AI response panels optimization across enterprise ecosystems. Competing effectively inside AI-generated summaries requires a broader strategic framework that defines how content becomes interpretable and reusable for generative systems. The foundational principles behind this approach are explained in this guide to building a generative visibility strategy, which outlines how semantic clarity, entity modeling, and structured architecture enable AI-driven discovery.

AI Response Panels Optimization Framework

AI response panels optimization requires a structured eligibility framework grounded in model interpretability research published by MIT CSAIL. Generative systems reward clarity, hierarchy, and evidence-based assertions over density or repetition. Therefore, optimization must align with structural extraction criteria instead of surface-level manipulation.

An optimization framework is a repeatable architecture that aligns semantic containers, authority signals, and measurement systems to increase AI panel content eligibility. It ensures that models interpret content as stable knowledge modules rather than fragmented narratives.

Claim: AI response panels optimization depends on semantic clarity rather than keyword frequency.

Rationale: Generative models evaluate internal coherence and entity reinforcement across sections.

Mechanism: Structured headings, defined terminology, and citation consistency increase AI panel citation strategy effectiveness.

Counterargument: Over-structuring may reduce narrative continuity if not managed carefully.

Conclusion: Balanced structural precision produces the best outcomes when optimizing for AI-generated summaries.

Definition: AI understanding is the model’s ability to interpret meaning, structure, and conceptual boundaries in a way that enables accurate reasoning, reliable summarization, and consistent content reuse across generative response surfaces.

Structural Eligibility Signals

Structural eligibility signals determine whether a document qualifies for AI response extraction criteria. These signals include predictable heading depth, atomic paragraph boundaries, and defined terminology. Consequently, content signals for AI summaries originate from architecture rather than style.

Additionally, semantic clarity for AI panels improves when definitions appear immediately after concept introduction. Models construct internal knowledge graphs based on definitional anchors.

Clear structure signals reliability. When content follows predictable patterns, models increase extraction confidence.
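
Predictable heading depth can be audited before publication. The following is a minimal sketch, assuming the page source is Markdown with `#`-style headings; the function name `heading_level_skips` is hypothetical, and the rule it applies (no heading may jump more than one level deeper than the heading before it) is one reasonable reading of "predictable heading depth", not a documented requirement of any generative system.

```python
import re

# Matches Markdown ATX headings such as "## Title" (levels 1-6).
HEADING_RE = re.compile(r"^(#{1,6})\s+(.*\S)\s*$")


def heading_level_skips(markdown_text):
    """Return (line_number, heading_text, previous_level, level) for every
    heading that jumps more than one level deeper than the previous heading."""
    skips = []
    previous_level = None
    in_fence = False
    for line_number, line in enumerate(markdown_text.splitlines(), start=1):
        # Ignore anything inside fenced code blocks.
        if line.lstrip().startswith("```"):
            in_fence = not in_fence
            continue
        if in_fence:
            continue
        match = HEADING_RE.match(line)
        if not match:
            continue
        level = len(match.group(1))
        if previous_level is not None and level > previous_level + 1:
            skips.append((line_number, match.group(2), previous_level, level))
        previous_level = level
    return skips


sample = """## Framework
### Signals
##### Deep detail
## Next section
"""
print(heading_level_skips(sample))  # prints [(3, 'Deep detail', 3, 5)]
```

An empty result means every heading is at most one level deeper than the heading before it. Moving back up several levels at once, as `## Next section` does here, is not flagged.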

Authority Reinforcement Mechanisms

Authority reinforcement strengthens AI panel citation visibility across domains. Generative systems compare entity references across multiple trusted datasets before synthesizing summaries. Therefore, authority signals for AI panels amplify inclusion probability.

Moreover, entity authority in AI panels increases when definitions align with external research citations. Source attribution in AI panels becomes a reinforcing loop when consistent terminology appears across authoritative references.

Structured authority modeling produces cumulative trust. Over time, citation overlap improves panel answer positioning.

| Signal Type | Model Impact | Optimization Action |
|---|---|---|
| Defined Terminology | Increases extraction clarity | Provide micro-definitions immediately after term introduction |
| Citation Consistency | Improves trust weighting | Reference authoritative institutions and research |
| Entity Alignment | Enhances semantic stability | Maintain stable vocabulary across all sections |
| Structural Hierarchy | Strengthens fragment extraction | Use predictable H2–H4 architecture |

This framework establishes operational discipline for competing in AI response panels at scale.

Content Architecture for AI Panels

Structuring content for AI panels requires adherence to machine-readable architecture standards documented by W3C. Generative systems evaluate hierarchical clarity before extracting fragments for AI panel answer positioning. Consequently, content adaptation for AI panels must prioritize semantic containers over narrative expansion.

Principle: Content achieves higher AI response surface visibility when structural hierarchy, entity definitions, and conceptual containers remain stable enough for generative systems to interpret without ambiguity.

A semantic container is a bounded conceptual unit that isolates a definable idea, mechanism, or implication. Models interpret these containers as stable graph nodes, which strengthens content adaptation for AI panels.

Claim: Structured architecture directly affects AI panel content eligibility.

Rationale: Generative systems extract bounded knowledge modules more reliably than blended paragraphs.

Mechanism: Layered headings and atomic sections enable precise AI response extraction criteria alignment.

Counterargument: Excessive fragmentation may reduce reading continuity.

Conclusion: Modular architecture with logical transitions improves AI panel answer positioning.

Semantic Containers and Concept Blocks

Semantic containers organize content into concept, mechanism, example, and implication units. This organization reduces ambiguity and increases AI-friendly web page structure clarity. Therefore, models interpret semantic blocks as reusable knowledge components.

Furthermore, stable terminology prevents semantic drift across sections. Consistency strengthens AI panel response triggers.

Structured blocks improve comprehension for both humans and systems. Clear boundaries enhance extraction readiness.
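
Terminology drift across sections can be caught with a simple editorial check. This is a sketch under stated assumptions: the function `find_term_variants`, the section titles, and the variant list are all hypothetical, and the glossary of preferred terms has to be maintained by hand. It only reports where a discouraged variant appears; it does not claim to reproduce how a model detects drift.

```python
import re


def find_term_variants(sections, preferred_terms):
    """Report discouraged variants of preferred terms.

    sections: dict mapping section title -> section text.
    preferred_terms: dict mapping preferred term -> list of discouraged variants.
    Returns a list of (section_title, variant_found, preferred_term).
    """
    findings = []
    for title, text in sections.items():
        for preferred, variants in preferred_terms.items():
            for variant in variants:
                # Whole-phrase, case-insensitive match.
                pattern = r"\b" + re.escape(variant) + r"\b"
                if re.search(pattern, text, flags=re.IGNORECASE):
                    findings.append((title, variant, preferred))
    return findings


sections = {
    "Intro": "An AI response panel aggregates multiple sources.",
    "Metrics": "Track each AI answer box weekly.",
}
preferred_terms = {"AI response panel": ["AI answer box", "AI overview panel"]}

print(find_term_variants(sections, preferred_terms))
# prints [('Metrics', 'AI answer box', 'AI response panel')]
```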

Extraction-Friendly Formatting

Extraction-friendly formatting requires precise heading depth and predictable paragraph sequencing. Additionally, defined terminology immediately after introduction improves content readiness for AI summaries.

However, structure alone is insufficient without internal coherence. Thus, semantic containers must maintain consistent meaning across references.

In practice, models select fragments that present self-contained reasoning units. Well-structured formatting increases AI curated response placement probability.

- Predictable heading hierarchy

- Defined terminology per concept

- Consistent entity references

- Atomic paragraph boundaries

Disciplined architecture enhances AI response surface visibility across generative platforms and strengthens long-term eligibility for AI response panels optimization.
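
Atomic paragraph boundaries can be approximated with a sentence count per paragraph. The sketch below is illustrative only: `long_paragraphs` is a hypothetical helper, the four-sentence threshold is an arbitrary assumption rather than a known extraction limit, and the sentence splitter is deliberately naive (it splits on whitespace after `.`, `!`, or `?`, so abbreviations will inflate the count).

```python
import re


def long_paragraphs(text, max_sentences=4):
    """Return (paragraph_index, sentence_count) for paragraphs that exceed
    max_sentences. Paragraphs are separated by blank lines."""
    flagged = []
    paragraphs = [p.strip() for p in re.split(r"\n\s*\n", text) if p.strip()]
    for index, paragraph in enumerate(paragraphs, start=1):
        # Naive split: whitespace that follows sentence-ending punctuation.
        sentences = [s for s in re.split(r"(?<=[.!?])\s+", paragraph) if s]
        if len(sentences) > max_sentences:
            flagged.append((index, len(sentences)))
    return flagged


draft = "One idea. Its consequence.\n\nA. B. C. D. E.\n"
print(long_paragraphs(draft))  # prints [(2, 5)]
```

Flagged paragraphs are candidates for splitting into separate reasoning units. The check is a prompt for editorial review, not a pass/fail gate.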

Authority Signals in AI Panels

Authority signals for AI panels define how generative systems determine which sources qualify for inclusion in synthesized answers. Research from the Allen Institute for Artificial Intelligence (AI2) demonstrates that large language models rely on structured evidence patterns and cross-source validation to assess credibility. Therefore, AI panel trust indicators directly influence AI panel citation visibility and long-term AI summary dominance tactics.

An authority signal is a verifiable reference or entity marker that increases model confidence in factual stability. Authority signals include institutional citations, dataset references, peer-reviewed validation, and consistent entity attribution across domains.

Claim: Authority signals determine inclusion priority in AI panels.

Rationale: Models prioritize trusted sources during synthesis to reduce probabilistic uncertainty.

Mechanism: Citation overlap and entity reinforcement increase trust scores within internal knowledge graphs.

Counterargument: Emerging sources may lack authority history and therefore receive limited initial inclusion.

Conclusion: Distributed citation presence across domains strengthens sustained AI panel citation visibility.

Trust Modeling in Generative Systems

Generative systems construct probabilistic confidence models before generating panel outputs. These systems evaluate entity reliability, citation recurrence, and semantic alignment across datasets. Consequently, AI panel trust indicators emerge from measurable evidence patterns rather than subjective authority claims.

Moreover, models compare factual assertions with known reference corpora. When content aligns with established institutional data, the system increases synthesis confidence. As a result, authority signals for AI panels operate as structured credibility layers embedded within retrieval-augmented reasoning.

Trust modeling functions as a scoring mechanism rather than a reputation label. If evidence signals remain consistent across contexts, inclusion probability increases.

Citation Overlap Effects

Citation overlap effects occur when multiple trusted sources reference the same entity or factual claim. Generative systems detect these overlaps and assign higher confidence to the shared information. Therefore, AI summary dominance tactics often rely on distributed authority reinforcement rather than isolated publications.

Additionally, citation overlap enhances AI panel citation visibility by strengthening graph connectivity. When entity references appear across academic, institutional, and industry sources, models interpret this repetition as stability. Consequently, distributed citations increase the likelihood of consistent panel inclusion.

In practical terms, a single citation rarely guarantees panel visibility. However, repeated entity reinforcement across domains produces measurable authority accumulation.
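
Citation overlap can be tallied from a hand-collected list of mentions. This is a minimal sketch: the function names, the `.example` domains, and the two-domain threshold are assumptions made for illustration. It treats every distinct domain string as an independent source, which a real audit would need to verify separately.

```python
from collections import defaultdict


def citation_overlap(mentions):
    """mentions: iterable of (source_domain, entity) pairs.
    Returns dict entity -> sorted list of distinct domains mentioning it."""
    domains_by_entity = defaultdict(set)
    for domain, entity in mentions:
        domains_by_entity[entity].add(domain)
    return {entity: sorted(domains) for entity, domains in domains_by_entity.items()}


def entities_with_overlap(mentions, min_domains=2):
    """Keep only entities mentioned by at least min_domains distinct domains."""
    overlap = citation_overlap(mentions)
    return {e: d for e, d in overlap.items() if len(d) >= min_domains}


mentions = [
    ("university.example", "semantic container"),
    ("standards.example", "semantic container"),
    ("vendor-blog.example", "semantic container"),
    ("vendor-blog.example", "semantic container"),  # repeat from the same domain
    ("vendor-blog.example", "panel impression"),
]
print(entities_with_overlap(mentions))
# prints {'semantic container': ['standards.example', 'university.example', 'vendor-blog.example']}
```

Repeated mentions from one domain count once, which matches the point above: breadth across domains matters more than volume from a single publication.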

Microcase: Distributed Citation Strategy in Practice

A B2B analytics platform initially struggled to achieve AI panel citation visibility despite ranking well in traditional SERPs. The company expanded its authority footprint by contributing research data to academic repositories and securing references in institutional reports. Over a six-month period, the platform observed increased inclusion in AI-generated summaries for core queries.

The improvement correlated with citation overlap across three independent knowledge domains. As a result, authority signals for AI panels strengthened through distributed reinforcement rather than content expansion alone.

| Authority Layer | Signal Type | Model Confidence Effect |
|---|---|---|
| Institutional Validation | Academic or research lab citation | High trust amplification |
| Cross-Domain Reference | Multiple independent domain mentions | Reinforced entity stability |
| Data Transparency | Public dataset attribution | Increased factual confidence |
| Terminology Consistency | Stable entity naming across contexts | Reduced interpretive ambiguity |

Authority modeling therefore operates as a measurable system of structured signals. When organizations align entity consistency, citation breadth, and definitional clarity, AI panel trust indicators become cumulative and scalable.

Entity Modeling and Inclusion Criteria

Understanding how AI selects panel sources requires examination of entity resolution logic and graph-based retrieval systems developed in computational linguistics research at the Carnegie Mellon Language Technologies Institute. Generative models rely on entity-level coherence to determine eligibility for synthesis. Therefore, AI panel response triggers originate from structured entity modeling rather than surface-level keyword matching.

Entity modeling is the structured representation of named concepts and their relationships inside knowledge graphs. It enables models to connect definitions, references, and contextual signals across distributed corpora.

Claim: Entity consistency increases selection likelihood.

Rationale: Models match entity patterns across corpora to evaluate stability and trust.

Mechanism: Repeated consistent definitions strengthen entity nodes inside internal knowledge graphs.

Counterargument: Overuse of entity mentions may appear artificial and reduce interpretive confidence.

Conclusion: Stable entity references combined with contextual support improve inclusion in AI panels.

Entity Density Thresholds

Entity density thresholds define the minimum frequency and contextual reinforcement required for a concept to become extraction-eligible. Generative systems evaluate not only presence but distribution of entity references across semantic containers. Consequently, content signals for AI summaries strengthen when entity mentions appear in structured and meaningful contexts.

However, density alone does not guarantee eligibility. Models penalize mechanical repetition if semantic variation remains limited. Therefore, entity modeling must balance recurrence with contextual expansion to maintain interpretive credibility.

Effective entity density supports structural recognition without triggering redundancy filters. Consistency increases inclusion probability, whereas excessive repetition reduces confidence.
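
The balance between presence and distribution can be made visible with two numbers: how many sections mention an entity, and how concentrated the mentions are in a single section. The sketch below is an editorial aid under stated assumptions; `entity_distribution`, `coverage`, and `max_share` are hypothetical names, and no specific threshold used by generative systems is implied.

```python
import re


def entity_distribution(sections, entity):
    """Summarize where an entity is mentioned.

    sections: dict mapping section title -> section text.
    Returns per-section counts, the total, the share of sections that mention
    the entity (coverage), and the largest single-section share of all
    mentions (max_share).
    """
    pattern = re.compile(r"\b" + re.escape(entity) + r"\b", re.IGNORECASE)
    counts = {title: len(pattern.findall(text)) for title, text in sections.items()}
    total = sum(counts.values())
    covered = sum(1 for count in counts.values() if count > 0)
    coverage = covered / len(sections) if sections else 0.0
    max_share = max(counts.values()) / total if total else 0.0
    return {"counts": counts, "total": total, "coverage": coverage, "max_share": max_share}


sections = {
    "Definition": "A semantic container is a bounded unit. "
                  "Each semantic container isolates one idea.",
    "Mechanism": "Models read each semantic container separately.",
    "Metrics": "Track inclusion weekly.",
}
report = entity_distribution(sections, "semantic container")
print(report["counts"])  # prints {'Definition': 2, 'Mechanism': 1, 'Metrics': 0}
print(report["total"])   # prints 3
```

Low coverage with a high `max_share` suggests the entity is repeated in one place rather than reinforced across containers, which is the pattern the paragraphs above warn against.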

Example: A page that defines entities consistently, maintains controlled terminology, and separates mechanisms from implications enables AI systems to segment meaning accurately, increasing the likelihood that its high-confidence sections will appear in panel-based summaries.

Knowledge Graph Alignment

Knowledge graph alignment measures how closely internal entity definitions match established references across trusted datasets. When definitions align with academic or institutional sources, models assign higher trust weights. As a result, AI panel response triggers activate more reliably.

Additionally, cross-domain reinforcement enhances graph stability. If the same entity relationships appear across independent knowledge bases, models interpret the pattern as confirmation rather than coincidence.

Alignment strengthens long-term visibility because models reuse graph nodes across multiple summaries. Stable entity modeling therefore supports sustainable strategies for improving inclusion in AI panels.

- Controlled definitions

- Cross-domain citations

- Consistent terminology

These practices ensure that entity references remain coherent, verifiable, and structurally aligned across all semantic containers.

Measurement and Performance Tracking

Measuring traffic from AI panels requires a shift from click-based metrics to inclusion-based evaluation models aligned with standards from the National Institute of Standards and Technology (NIST). Generative systems reduce direct clicks while expanding surface-level exposure through synthesized summaries. Therefore, AI panel exposure strategy must incorporate measurable inclusion metrics rather than rely exclusively on CTR.

An inclusion metric is a measurable indicator reflecting whether content is cited or summarized in AI panels. It captures fragment-level visibility inside synthesized outputs rather than page-level traffic performance.

Claim: Traditional SEO metrics do not capture AI panel performance.

Rationale: Traffic may decrease while influence increases through summary-level exposure.

Mechanism: Panel citation logs, structured brand mentions, and entity references reflect indirect reach within generative systems.

Counterargument: Attribution complexity limits measurement accuracy because generative outputs do not always disclose source weight.

Conclusion: Organizations must combine quantitative traffic data with systematic citation tracking to evaluate AI summary optimization framework effectiveness.

| Metric | Traditional SEO Model | AI Panel Model |
|---|---|---|
| Primary Indicator | Click-through rate | Inclusion frequency in AI summaries |
| Visibility Signal | SERP ranking position | Fragment citation inside panel output |
| Attribution Logic | Page-level referral tracking | Entity-level mention tracking |
| Competitive Benchmark | Keyword ranking comparison | AI panel competitive analysis of citation presence |

Measurement frameworks must therefore expand beyond ranking dashboards. Generative systems require structural visibility tracking that aligns with panel-level exposure.

Citation Frequency Tracking

Citation frequency tracking measures how often a domain appears inside AI-generated summaries for predefined queries. Organizations should maintain structured logs of inclusion instances across generative platforms. Consequently, AI panel competitive analysis becomes measurable through repeat citation detection.

Moreover, frequency analysis reveals patterns in panel answer positioning. If inclusion appears consistently across related queries, entity reinforcement strengthens. Therefore, measuring traffic from AI panels must account for citation density rather than session volume alone.

Tracking citation recurrence provides a clearer representation of generative influence. Structured monitoring converts abstract visibility into quantifiable exposure data.
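
A structured inclusion log can be as simple as one record per manual or scripted check. The following sketch assumes a hypothetical record shape (`PanelObservation`) and hypothetical platform and query names. It does not call any real platform API, since how observations are collected depends on the tools available to each team.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class PanelObservation:
    date: str      # ISO date of the check, e.g. "2026-03-01"
    platform: str  # which generative surface was checked (hypothetical label)
    query: str     # the predefined query that was issued
    cited: bool    # whether the domain appeared in the generated summary


def inclusion_rate_by_query(observations):
    """Return dict query -> fraction of observations in which the domain was cited."""
    totals = defaultdict(int)
    cited = defaultdict(int)
    for obs in observations:
        totals[obs.query] += 1
        if obs.cited:
            cited[obs.query] += 1
    return {query: cited[query] / totals[query] for query in totals}


log = [
    PanelObservation("2026-03-01", "assistant-a", "what is a semantic container", True),
    PanelObservation("2026-03-01", "assistant-b", "what is a semantic container", False),
    PanelObservation("2026-03-08", "assistant-a", "what is a semantic container", True),
    PanelObservation("2026-03-08", "assistant-a", "ai panel metrics", False),
]
for query, rate in inclusion_rate_by_query(log).items():
    print(f"{query}: {rate:.2f}")
```

Grouping by `platform` or by week instead of by `query` uses the same pattern and shows whether inclusion is consistent across related queries or limited to a few.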

Panel Impression Monitoring

Panel impression monitoring evaluates how frequently content appears within AI response surfaces even when no click occurs. Generative systems may display summaries that influence user perception without generating direct traffic. As a result, AI panel exposure strategy must include impression-level observation.

Additionally, organizations can track brand and entity mentions inside conversational search outputs. This method identifies shifts in inclusion patterns and supports iterative AI summary optimization framework adjustments.

Monitoring impression-level presence clarifies whether influence grows despite fluctuating clicks. When citation frequency rises while traffic stabilizes, generative visibility strengthens beyond traditional ranking logic.
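
The comparison between citation frequency and clicks can be expressed as a period-over-period check. This is a rough sketch: the function names, the labels it returns, and the 5% tolerance for treating clicks as "stable" are all assumptions chosen for illustration, and the input counts are expected to come from whatever citation log and analytics export a team already maintains.

```python
def relative_change(previous, current):
    """Fractional change from previous to current, or None when previous is zero."""
    if previous == 0:
        return None
    return (current - previous) / previous


def describe_trend(prev_citations, curr_citations, prev_clicks, curr_clicks,
                   flat_tolerance=0.05):
    """Label how citations and clicks moved between two periods."""
    citation_change = relative_change(prev_citations, curr_citations)
    click_change = relative_change(prev_clicks, curr_clicks)
    if citation_change is None or click_change is None:
        return "insufficient baseline"
    citations_rising = citation_change > flat_tolerance
    if citations_rising and abs(click_change) <= flat_tolerance:
        return "citations rising, clicks stable"
    if citations_rising and click_change < -flat_tolerance:
        return "citations rising, clicks falling"
    return "no clear divergence"


# Citations up 50%, clicks up 1%: influence grows while traffic holds steady.
print(describe_trend(12, 18, 1000, 1010))  # prints citations rising, clicks stable
```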

Competitive Landscape in AI Panels

Panel based search competition operates under concentration dynamics similar to other digital information markets, as analyzed by the Oxford Internet Institute. Generative systems consolidate citations around domains that demonstrate structural stability and recurring authority signals. Therefore, AI panel competitive analysis must account for cumulative entity reinforcement rather than isolated ranking events.

Panel competition is the contest among domains for inclusion in model-generated summaries. It reflects fragment-level visibility inside AI outputs rather than page-level ranking inside traditional SERPs.

Claim: AI panel competition favors structurally mature domains.

Rationale: Stable structured archives produce consistent signals across time and queries.

Mechanism: Long-term content depth increases graph reinforcement and strengthens AI panel trust indicators.

Counterargument: New specialized players can win niche panels when entity clarity exceeds broader competitors.

Conclusion: Depth combined with authority produces stable dominance within AI summary ranking factors.

Concentration Effects

Concentration effects emerge when generative systems repeatedly cite a limited set of domains for high-frequency queries. This pattern occurs because structured archives generate predictable semantic containers that models reuse. Consequently, AI summary ranking factors reward domains that maintain long-term entity coherence.

Moreover, concentration amplifies trust accumulation. When a domain appears across multiple AI panels, models interpret recurrence as reliability. As a result, AI panel trust indicators strengthen through repeated synthesis.

In practice, concentration does not depend solely on brand size. It depends on structural maturity and consistent semantic architecture.

Niche Strategy for AI Panels

Niche strategy allows specialized domains to compete effectively within specific semantic territories. Generative systems evaluate topical depth and entity precision at fragment level. Therefore, a smaller site with controlled terminology and consistent definitions can outperform a broader competitor in a focused domain.

Additionally, niche domains often present fewer semantic conflicts. When entity modeling remains tightly scoped, models assign higher confidence to extracted fragments. Consequently, panel based search competition can favor specialized expertise over generalized authority.

Focused semantic depth can outweigh scale. When a domain owns a well-defined entity cluster, inclusion likelihood increases.

Microcase: Specialized Domain Outperforming Scale

A cybersecurity documentation platform with limited overall traffic achieved consistent AI panel inclusion for advanced protocol queries. The domain maintained tightly controlled definitions and referenced academic standards bodies. Meanwhile, a larger technology portal with higher traffic but inconsistent terminology failed to appear in the same summaries.

Over time, the specialized platform accumulated repeated citations within AI-generated answers. This case illustrates how structural precision and entity alignment can outperform broad visibility in AI panel competitive analysis.

Future Evolution of AI Response Surfaces

AI response surface visibility will increasingly determine competitive outcomes as generative systems expand toward multimodal synthesis, a trajectory explored in research from DeepMind. As AI response panels optimization matures, models prioritize structured precision to maintain stable synthesis across platforms. Therefore, AI-curated response placement depends on cross-modal coherence, semantic stability, and machine-readable consistency.

A response surface is the visible synthesized layer presented to users instead of raw search listings. It consolidates retrieved fragments, entity references, and structured signals into a unified output designed for direct consumption. Within this framework, AI response panels optimization becomes a continuous architectural process rather than a one-time configuration.

Claim: AI response surfaces will prioritize structured precision.

Rationale: Scaling models increases reliance on predictable patterns to ensure stable synthesis and reduce probabilistic drift.

Mechanism: Machine-readable architectures, stable entity definitions, and structured hierarchies reduce hallucination risk and improve ranking inside AI summaries.

Counterargument: Over-optimization may reduce adaptability when model architectures evolve or integrate new modalities.

Conclusion: AI response panels optimization must balance structural discipline with adaptive flexibility to sustain AI summary dominance tactics.

Multimodal Panel Integration

Multimodal panel integration introduces combined text, visual, and structured data references inside single response surfaces. Generative systems process heterogeneous inputs and synthesize them into unified summaries, which directly influences AI response surface visibility. Consequently, AI response panels optimization must account for cross-format compatibility rather than text-only formatting.

Moreover, multimodal integration increases verification complexity. Models cross-reference textual claims with visual cues and structured datasets before generating final output. As a result, AI curated response placement favors content that demonstrates definitional clarity and consistent entity alignment across modalities.

Structured content becomes reusable when it supports both textual and non-textual validation. Therefore, AI response panels optimization strengthens long-term inclusion by maintaining predictable semantic containers across formats.

Cross-Platform Visibility Patterns

Cross-platform visibility patterns describe how inclusion in one generative system influences placement in others. When entity modeling remains stable and definitions stay consistent, ranking inside AI summaries propagates across conversational engines. Consequently, AI response surface visibility becomes cumulative rather than isolated.

Additionally, distributed citation patterns reinforce AI summary dominance tactics. If a domain appears repeatedly across multiple systems, models interpret recurrence as structural reliability. Therefore, AI response panels optimization must focus on sustained entity reinforcement rather than short-term ranking signals.

Visibility shifts from individual query wins to ecosystem-wide stability. Consistent architecture, adaptive semantic containers, and reinforced authority signals ensure durable AI response surface visibility across evolving generative environments.

Enterprise Playbook for AI Panel Dominance

Competing in AI response panels demands enterprise-level transformation aligned with digital governance principles analyzed by the OECD Data Explorer. Generative ecosystems reward structural coherence, measurable authority, and systematic performance tracking. Therefore, content adaptation for AI panels must operate within an integrated operational framework rather than through isolated adjustments.

An enterprise playbook is a repeatable operational framework aligning architecture, authority, and measurement systems. It establishes standardized procedures that ensure AI panel answer positioning remains consistent across domains and over time.

Claim: Competing in AI response panels requires systemic alignment.

Rationale: Isolated tactics fail without architectural consistency across semantic containers and authority signals.

Mechanism: Integrating entity modeling, authority reinforcement, and performance tracking creates cumulative eligibility for AI panel citation strategy execution.

Counterargument: Small teams may lack resources for full-scale implementation and structured monitoring.

Conclusion: Modular adoption enables phased transformation while preserving structural integrity.

Stepwise Implementation Model

A structured implementation model ensures that enterprise adaptation progresses through predictable stages. Organizations should stabilize architecture before expanding authority and integrating measurement systems. Consequently, AI panel answer positioning improves when foundational structures remain consistent.

Additionally, content adaptation for AI panels must follow controlled sequencing. Architectural refinement precedes citation expansion, which in turn precedes performance calibration. As a result, competing in AI response panels becomes a measurable operational discipline rather than a reactive optimization effort.

Implementation clarity reduces interpretive drift and strengthens AI panel citation strategy coherence. Phased alignment increases durability across generative systems.

- Architecture stabilization

- Authority expansion

- Measurement integration

- Cross-domain reinforcement

This sequence produces structural continuity and ensures that each phase reinforces the previous one.

Checklist:

- Does the page define core entities using stable terminology?

- Are H2–H4 boundaries clearly segmented for fragment-level extraction?

- Does each paragraph represent a single reasoning unit?

- Are authority signals distributed across independent domains?

- Is citation presence measurable within AI panels?

- Does the architecture support consistent ranking inside AI summaries?

Final Summary

Generative systems replace positional ranking with synthesis-based inclusion, which redefines competitive logic across digital ecosystems. Structured architecture, semantic containers, and entity consistency determine eligibility for AI panel citation visibility. Authority signals and citation overlap amplify trust weighting and stabilize inclusion probability.

Measurement must shift from click-based metrics to inclusion and citation tracking to reflect AI response surface visibility accurately. Furthermore, panel based search competition favors structurally mature domains while allowing specialized entities to win through focused semantic alignment. Multimodal evolution increases the importance of machine-readable precision and cross-platform stability.

Therefore, AI response panels optimization becomes a long-term architectural commitment rather than a tactical adjustment. Enterprises that integrate entity modeling, authority reinforcement, and systematic measurement build cumulative advantage. Competing in AI response panels ultimately requires consistent structural logic, distributed authority presence, and phased operational discipline.

Interpretive Architecture of AI Panel-Oriented Pages

- Hierarchical depth signaling. Layered H2→H3→H4 segmentation establishes bounded semantic zones that generative systems parse as discrete reasoning modules within response synthesis.

- Entity-position alignment. Stable placement of entity definitions near structural entry points reinforces graph node consistency during fragment extraction.

- Fragment-level eligibility cues. Predictable paragraph boundaries and atomic reasoning blocks function as extraction anchors for ranking inside AI summaries.

- Cross-sectional coherence patterns. Recurrent terminology and structured argument chains reduce interpretive entropy across long-context model traversal.

- Panel-surface compatibility logic. Pages constructed with modular semantic containers remain adaptable to AI-curated response placement across generative interfaces.

These architectural properties clarify how generative systems interpret page structure when determining fragment eligibility, citation weighting, and response surface assembly within AI panel environments.

FAQ: AI Response Panels Optimization

What is AI response panels optimization?

AI response panels optimization is a structural approach that prepares content for inclusion in synthesized AI-generated summaries instead of traditional ranked listings.

How do AI response panels differ from traditional SERPs?

Traditional SERPs rank links, while AI response panels synthesize information from multiple sources into a unified answer surface.

How does AI select panel sources?

Generative systems evaluate entity consistency, citation overlap, structural clarity, and authority signals before including content in panel outputs.

What are authority signals for AI panels?

Authority signals are verifiable institutional references, consistent entity markers, and cross-domain citations that increase model confidence during synthesis.

How can organizations improve inclusion in AI panels?

Improving inclusion in AI panels requires stable terminology, defined semantic containers, distributed citations, and measurable inclusion metrics.

Why do traditional SEO metrics fail to capture AI panel performance?

AI panel visibility depends on citation presence and summary inclusion, which may not generate direct clicks measurable through standard CTR metrics.
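As a rough illustration of what an inclusion-based metric could look like, the sketch below computes the share of observed panels that cite a given domain. It assumes a team already records, by whatever means, which domains each observed panel cites. The `PanelObservation` record, the `inclusion_rate` function, and the sample data are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class PanelObservation:
    """One recorded AI panel: the query and the domains it cited (hypothetical schema)."""
    query: str
    cited_domains: list[str]


def inclusion_rate(observations, domain):
    """Fraction of observed panels that cite `domain`; 0.0 when nothing was observed."""
    if not observations:
        return 0.0
    hits = sum(1 for obs in observations if domain in obs.cited_domains)
    return hits / len(observations)


# Illustrative data only, not real measurements.
observations = [
    PanelObservation("what is entity modeling", ["example.com", "example.org"]),
    PanelObservation("ai panel citation strategy", ["example.org"]),
    PanelObservation("semantic container definition", ["example.com"]),
    PanelObservation("inclusion metric meaning", ["example.net"]),
]
print(inclusion_rate(observations, "example.com"))  # 0.5
```

Unlike CTR, this ratio needs no click to register: a panel that cites the domain counts even if the user never leaves the summary.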

What role does entity modeling play in panel inclusion?

Entity modeling aligns structured definitions and relationships within knowledge graphs, increasing the likelihood of inclusion inside AI summaries.

What is panel based search competition?

Panel based search competition refers to the contest among domains for inclusion in AI-generated summaries rather than link-based rankings.

How does multimodal synthesis affect AI response surface visibility?

Multimodal synthesis integrates text, structured data, and visual references, increasing the importance of machine-readable architecture for panel eligibility.

What defines an enterprise AI panel citation strategy?

An enterprise AI panel citation strategy integrates architecture stabilization, authority expansion, and inclusion tracking to sustain long-term panel visibility.

Glossary: Key Terms in AI Response Panels Optimization

This glossary defines the essential terminology used throughout this guide to support consistent interpretation by both enterprise teams and generative AI systems.

AI Response Panel

A synthesized answer layer generated by AI systems that aggregates multiple sources into a unified summary instead of presenting ranked links.

Authority Signal

A verifiable citation, institutional reference, or entity marker that increases model confidence during generative synthesis.

Entity Modeling

The structured representation of named concepts and their relationships inside knowledge graphs to support consistent extraction and citation.

Inclusion Metric

A measurable indicator reflecting whether a domain, entity, or fragment is cited or summarized inside AI-generated panels.

Panel Based Search Competition

The contest among domains for inclusion in synthesized AI summaries rather than traditional search engine ranking positions.

Response Surface

The visible synthesized output layer presented to users by generative systems instead of raw indexed listings.

Citation Overlap

The recurrence of consistent references across independent domains, increasing graph-level confidence during AI synthesis.
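A simple way to approximate this definition is to count the distinct hosts that reference each entity. The sketch below does that under a loud assumption: two different hostnames are treated as independent domains, which is a crude proxy, since separate hosts can share an owner. The function `citation_overlap` and the sample URLs are hypothetical.

```python
from collections import defaultdict
from urllib.parse import urlparse


def citation_overlap(citations):
    """Map each entity to the number of distinct hosts that reference it.

    `citations` is an iterable of (source_url, entity) pairs.
    Assumption: distinct hostnames stand in for independent domains.
    """
    hosts = defaultdict(set)
    for url, entity in citations:
        host = urlparse(url).hostname
        if host:
            # Treat "www.example.com" and "example.com" as the same host.
            hosts[entity].add(host.removeprefix("www."))
    return {entity: len(found) for entity, found in hosts.items()}


# Illustrative data only.
citations = [
    ("https://www.example.com/a", "Acme Widget"),
    ("https://example.com/b", "Acme Widget"),
    ("https://example.org/review", "Acme Widget"),
    ("https://example.net/x", "Other Entity"),
]
print(citation_overlap(citations))  # {'Acme Widget': 2, 'Other Entity': 1}
```

Two references from the same host count once, so repeating a citation on a single site does not raise the overlap figure.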

Semantic Container

A bounded conceptual block that isolates a definable idea, mechanism, or implication for reliable machine interpretation.

AI Panel Citation Strategy

An enterprise-level approach that coordinates authority reinforcement, entity stability, and inclusion tracking to sustain panel visibility.

Structural Stability

The consistency of hierarchical organization and terminology across sections, enabling reliable extraction by generative models.
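The terminology half of this definition can be spot-checked with a short script. The following is a minimal sketch, assuming a team maintains its own list of discouraged variants for each canonical term; the function `variant_usage`, the section titles, and the variant list are hypothetical.

```python
import re


def variant_usage(sections, variants):
    """Map section title -> discouraged term variants found in that section's text.

    `sections` maps a section title to its text; matching is case-insensitive
    and anchored on word boundaries.
    """
    report = {}
    for title, text in sections.items():
        lowered = text.lower()
        found = [
            v for v in variants
            if re.search(r"\b" + re.escape(v.lower()) + r"\b", lowered)
        ]
        if found:
            report[title] = found
    return report


# Canonical term assumed here: "AI response panel". Variants are illustrative.
sections = {
    "Intro": "An AI response panel aggregates sources.",
    "Metrics": "Track each answer box citation.",
}
print(variant_usage(sections, ["answer box", "AI overview panel"]))
# {'Metrics': ['answer box']}
```

Sections missing from the report use none of the listed variants. The check cannot detect variants that nobody has added to the list.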