Last Updated on April 26, 2026 by PostUpgrade

Generative Search and the Death of Keyword Stuffing

Generative search restructures how information is evaluated, synthesized, and surfaced across digital environments. Keyword stuffing in generative search no longer improves visibility because large language models evaluate semantic coherence instead of lexical repetition. The end of keyword stuffing signals a deeper transformation in search architecture: search is evolving beyond keywords, and keyword optimization is becoming an architectural discipline rather than a density tactic.

Search systems historically relied on keyword frequency as a proxy for relevance. However, generative engines operate as reasoning systems that synthesize answers instead of ranking isolated documents. Consequently, density-based manipulation loses structural influence. Enterprise content strategies must therefore shift from repetition control to semantic governance.

Generative engines function as interpretive systems. They map entities, model relationships, and compress contextual meaning before generating structured responses. As a result, visibility depends on factual stability, entity coherence, and reasoning alignment. Enterprises that continue to optimize for lexical inflation risk extractive invisibility: their pages are rarely selected or cited in SGE panels, AI answer cards, and conversational interfaces.

Search Paradigm Shift

| Legacy Search | Generative Search |
|---|---|
| Keyword matching | Context interpretation |
| Ranking pages | Synthesizing answers |
| Density signals | Semantic coherence |

Legacy systems prioritized matching query tokens with indexed pages. In contrast, generative systems prioritize reasoning across semantic structures. The shift from density to reasoning restructures visibility economics.

The Collapse of Keyword Density Logic

Keyword density shaped early ranking systems because retrieval models relied on lexical matching as a primary relevance signal. Keyword stuffing in generative search now fails because large language models operate on contextual embeddings rather than frequency thresholds. This structural shift reflects algorithmic evolution from retrieval-first pipelines to reasoning-first architectures, as documented by research on transformer-based contextual modeling at Stanford Natural Language Institute.

Keyword stuffing — repetitive insertion of query terms to artificially influence ranking signals. This practice emerged from term-weighting mechanisms such as TF-IDF and early inverted indexing models. However, generative engines parse meaning through distributed representations instead of surface-level repetition.
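
To make the legacy mechanism concrete, the following Python sketch computes keyword density and a smoothed TF-IDF weight. The tokenizer, the smoothing formula, and the example sentences are illustrative assumptions rather than the scoring of any specific engine. The point is that the raw term count enters the score linearly, so repeating a term four times quadruples its weight, which is exactly the lever keyword stuffing pulled.

```python
import math
from collections import Counter

PUNCT = ".,;:!?()\"'"


def tokenize(text: str) -> list[str]:
    """Lowercase, split on whitespace, and strip surrounding punctuation."""
    tokens = (t.strip(PUNCT).lower() for t in text.split())
    return [t for t in tokens if t]


def keyword_density(text: str, term: str) -> float:
    """Share of all tokens that equal `term` (the classic density metric)."""
    tokens = tokenize(text)
    return tokens.count(term.lower()) / len(tokens) if tokens else 0.0


def tf_idf(term: str, doc: str, corpus: list[str]) -> float:
    """Raw term count in `doc` times a smoothed inverse document frequency."""
    term = term.lower()
    tf = Counter(tokenize(doc))[term]
    df = sum(1 for d in corpus if term in tokenize(d))
    idf = math.log((1 + len(corpus)) / (1 + df)) + 1.0  # smoothed, always > 0
    return tf * idf


natural = "Running shoes cushion the foot and support long runs."
stuffed = "Running shoes running shoes best running shoes cheap running shoes."
corpus = [natural, stuffed, "Trail maps help hikers plan routes."]

print(round(keyword_density(natural, "shoes"), 3))  # 0.111
print(round(keyword_density(stuffed, "shoes"), 3))  # 0.4
print(tf_idf("shoes", stuffed, corpus) / tf_idf("shoes", natural, corpus))  # 4.0
```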

Claim: Keyword stuffing no longer improves visibility in generative engines.

Rationale: Language models prioritize semantic coherence over frequency.

Mechanism: Transformer architectures evaluate contextual probability distributions rather than term repetition.

Counterargument: Keyword density still affects traditional SERP indexing systems.

Conclusion: Generative systems structurally penalize repetition without meaning.

Definition: AI understanding is the model’s ability to interpret semantic coherence, entity relationships, and structural clarity in a way that prioritizes contextual meaning over repetitive keyword frequency.

Keyword Stuffing Detection by AI

Large language models apply contextual embedding analysis to identify unnatural repetition patterns. AI detection of keyword stuffing relies on statistical deviation from the token distributions expected within a semantic cluster. Consequently, detection becomes probabilistic rather than rule-based.

AI evaluation of keyword abuse integrates multiple layers of contextual modeling. Attention layers weigh each token against its surrounding context, including the inferred intent and the relationships between entities. A term repeated beyond what the context requires adds little new information, so the passage reads as less coherent. As a result, generative ranking systems deprioritize passages that exhibit density inflation.

In practical terms, models recognize repetition that does not add informational value. Therefore, repeating a term without expanding meaning reduces interpretive quality. Coherence replaces frequency as the dominant evaluation signal.
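
The idea that repetition is judged by whether it adds information can be illustrated with a deliberately simple heuristic. This Python sketch is an assumption for illustration, not how language models or search engines actually score text: it flags sentences that mention a term while contributing fewer than two words the reader has not already seen. The function names and the threshold are hypothetical.

```python
import re


def split_sentences(text: str) -> list[str]:
    """Naive sentence split on terminal punctuation."""
    return [s.strip() for s in re.split(r"[.!?]+", text) if s.strip()]


def words(sentence: str) -> list[str]:
    """Lowercase word tokens."""
    return re.findall(r"[a-z0-9']+", sentence.lower())


def redundant_mentions(text: str, term: str, min_new_words: int = 2) -> list[str]:
    """Flag sentences that mention `term` (a single word) but add fewer than
    `min_new_words` words not already seen earlier in the text.

    Hypothetical heuristic for illustration; production models do not run
    an explicit rule like this.
    """
    term = term.lower()
    seen: set[str] = set()
    flagged = []
    for sentence in split_sentences(text):
        toks = words(sentence)
        new_words = {t for t in toks if t not in seen and t != term}
        if term in toks and len(new_words) < min_new_words:
            flagged.append(sentence)
        seen.update(toks)
    return flagged


text = ("Running shoes absorb impact. Running shoes matter. "
        "Good running shoes. Cushioned soles reduce strain on knees.")
print(redundant_mentions(text, "shoes"))
# ['Running shoes matter', 'Good running shoes']
```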

Keyword Stuffing and AI Visibility Loss

Generative engines calculate visibility through extractive reuse probability rather than ranking position alone. For stuffed content, the damage appears as a reduced likelihood of being cited in synthesized answers. Consequently, keyword stuffing and AI visibility loss correlate through coherence degradation: the less coherent a passage becomes, the less often it is reused.

A content farm rewrote 200 legacy pages by increasing target keyword frequency by 40 percent. Initially, indexed rankings improved in classical SERP layers. However, within three months, SGE panels stopped citing those pages. Keyword stuffing penalties in AI emerged as extractive exclusion rather than ranking demotion. Traffic from conversational discovery interfaces declined by 32 percent.

In effect, density-focused rewriting reduced structured reuse, because density inflation lowers the probability that a generative system reuses a passage.

Generative Search Ranking Factors Replace Keyword Density

Search infrastructure has shifted from lexical scoring models to reasoning-driven interpretation layers. Generative search ranking factors now reflect semantic modeling rather than surface-level repetition. This transition replaces density-based heuristics with probabilistic reasoning mechanisms, as research on attention architectures and interpretability at MIT CSAIL demonstrates in studies on transformer-based alignment and semantic evaluation.

Generative ranking — model-based prioritization driven by semantic probability, factual grounding, and structural coherence. This form of ranking evaluates passages according to contextual plausibility and entity stability rather than token repetition. Consequently, ranking logic operates within embedding space rather than inverted index frequency metrics.
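
A toy contrast between the two scoring styles helps here. In the Python sketch below, sparse term-count vectors stand in for learned embeddings (a simplifying assumption), and the example strings are invented. Duplicating a passage doubles an inverted-index count score but leaves cosine similarity unchanged, because cosine compares vector direction and ignores length. This illustrates only one narrow property of vector-space comparison; repeating a single term inside a passage does shift the vector's direction.

```python
import math
from collections import Counter


def bag_of_words(text: str) -> Counter:
    """Term-count vector for a whitespace-tokenized, lowercased text."""
    return Counter(text.lower().split())


def raw_count_score(query: str, doc: str) -> int:
    """Inverted-index style score: summed raw counts of the query terms."""
    counts = bag_of_words(doc)
    return sum(counts[t] for t in query.lower().split())


def cosine(query: str, doc: str) -> float:
    """Cosine similarity between term vectors (direction only, not length)."""
    q, d = bag_of_words(query), bag_of_words(doc)
    dot = sum(q[t] * d[t] for t in q)
    q_norm = math.sqrt(sum(v * v for v in q.values()))
    d_norm = math.sqrt(sum(v * v for v in d.values()))
    return dot / (q_norm * d_norm) if q_norm and d_norm else 0.0


query = "running shoes"
doc = "trail running shoes grip wet rock"
stuffed = doc + " " + doc  # the same passage pasted twice

print(raw_count_score(query, doc), raw_count_score(query, stuffed))  # 2 4
print(math.isclose(cosine(query, doc), cosine(query, stuffed)))  # True
```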

Claim: Generative search ranking factors eliminate density as a dominant signal.

Rationale: LLMs compress information using contextual embedding spaces.

Mechanism: Attention weights evaluate concept alignment, not repetition frequency.

Counterargument: Hybrid systems still integrate classical indexing layers.

Conclusion: Ranking logic now aligns with semantic precision.

Semantic Relevance vs Keyword Density

The contrast between semantic relevance and keyword density defines the structural difference between retrieval-era scoring and generative reasoning. Density-based systems reward frequency because repetition increases statistical matching probability. Generative systems, by contrast, evaluate contextual integrity and cross-sentence coherence.

Myths about keyword density in AI search persist because legacy metrics remain visible in traditional dashboards. Yet AI ranking does not depend on keyword density: token frequency does not determine generative extraction probability. Instead, semantic consistency and entity alignment shape ranking outcomes across conversational interfaces.

When models evaluate content, they measure alignment between concepts rather than repetition of tokens. Therefore, increasing keyword frequency does not improve reasoning-based ranking. Meaning coherence replaces density as the principal signal.

| Density-based Signal | Semantic Signal |
|---|---|
| Term frequency | Entity coherence |
| Exact match | Concept alignment |
| Anchor repetition | Source consistency |

The comparison clarifies structural divergence between lexical scoring and reasoning-based evaluation.
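
The table's contrast between term frequency and concept alignment can be sketched as two measurements of the same text. In this Python example, the concept set, the sample sentences, and the coverage metric are assumptions made for illustration; real systems infer concepts with learned models rather than a fixed word list. The stuffed text wins on density while covering none of the expected concepts.

```python
import re


def tokens(text: str) -> list[str]:
    # Words, keeping hyphenated compounds such as "heel-to-toe" together.
    return re.findall(r"[a-z]+(?:-[a-z]+)*", text.lower())


def density(text: str, term: str) -> float:
    """Share of tokens equal to `term`."""
    toks = tokens(text)
    return toks.count(term) / len(toks) if toks else 0.0


def concept_coverage(text: str, concepts: set[str]) -> float:
    """Share of the expected concepts that appear at least once."""
    return len(concepts & set(tokens(text))) / len(concepts)


# Hypothetical concept set for the topic "running shoes".
CONCEPTS = {"cushioning", "traction", "drop", "fit"}

stuffed = "Best running shoes. Cheap running shoes. Top running shoes."
natural = ("Running shoes differ in cushioning, traction, and heel-to-toe drop; "
           "fit matters most.")

for label, text in (("stuffed", stuffed), ("natural", natural)):
    print(label, round(density(text, "shoes"), 2), concept_coverage(text, CONCEPTS))
# stuffed 0.33 0.0
# natural 0.08 1.0
```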

Contextual Ranking Signals in AI

Contextual ranking signals in AI operate through multi-layer attention mechanisms that assess relational structure. For language models, a repeated keyword strengthens relevance only when the repetition supports conceptual clarity. Otherwise, repetition introduces interpretive noise.

Topical authority, rather than keyword density, becomes decisive because generative systems integrate entity stability across sources. When a passage aligns with established knowledge graphs and factual grounding, ranking probability increases. Conversely, isolated keyword concentration without entity integration reduces extractive trust.

In practice, ranking shifts from token repetition to meaning modeling.

Natural Language Ranking and Model Interpretation

Conversational retrieval systems no longer depend on static document ranking. Natural language ranking in AI reflects probabilistic reasoning embedded within large language models, which explains why keyword stuffing in generative search loses influence inside reasoning-based environments. This ranking logic operates within contextual generation layers, as language modeling research from Carnegie Mellon LTI on neural sequence modeling and contextual inference demonstrates.

Natural language ranking — prioritization based on contextual plausibility and factual structure. This prioritization evaluates how well a passage aligns with inferred intent and established knowledge. Therefore, ranking becomes a function of interpretive stability rather than lexical frequency.

Claim: Natural language ranking in AI favors semantic coherence over density.

Rationale: Generative models operate on token probability conditioned by context.

Mechanism: Cross-attention layers assess relational alignment between concepts.

Counterargument: Keyword signals may still influence retrieval pipelines.

Conclusion: Interpretive alignment dominates ranking decisions.

Keyword Stuffing in Conversational Search

Conversational interfaces restructure how queries interact with content. Keyword stuffing in conversational search reduces response quality because models synthesize answers rather than match strings. In LLM-based search, stuffing inflates redundant tokens and distorts the context on which the model's probability distribution is conditioned.

Generative systems predict the next token based on contextual conditioning. Repetition without semantic expansion weakens that conditioning signal. Consequently, content written without keyword stuffing shows higher extractive stability in dialogue-based interfaces.

In dialogue environments, systems evaluate coherence across turns. Therefore, density inflation introduces instability instead of relevance. Conversational ranking rewards clarity and relational depth.

Keyword Stuffing and AI Summarization

Generative models compress information through summarization layers. Keyword stuffing and AI summarization conflict because repetition reduces informational density per token. Consequently, optimizing without keyword stuffing improves compression efficiency and extractive reliability.

Summarization algorithms weight semantic contribution rather than lexical duplication. Repeated tokens without conceptual variation add length without adding meaning to compress, which decreases inclusion probability. Therefore, passages structured around semantic clarity retain higher summarization stability.

Summarization compresses meaning, not repetition.
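
One rough way to quantify "informational density per token" is the Shannon entropy of a passage's word distribution. The Python sketch below is a proxy chosen for illustration, not a description of how summarization models weigh content, and it ignores word order and meaning entirely. It shows that a passage built from two repeated words carries exactly one bit per token, while a passage of nine distinct words carries about 3.17 bits.

```python
import math
from collections import Counter


def bits_per_token(text: str) -> float:
    """Shannon entropy of the word distribution, in bits per token."""
    tokens = text.lower().split()
    counts = Counter(tokens)
    n = len(tokens)
    return -sum((c / n) * math.log2(c / n) for c in counts.values())


stuffed = "seo tips seo tips seo tips seo tips"
varied = "seo tips cover structure entities sources and clear definitions"

print(bits_per_token(stuffed))           # 1.0
print(round(bits_per_token(varied), 2))  # 3.17
```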

Contextual Relevance in Generative Search

Large language models operate within bounded context windows and evaluate probability distributions across token sequences. Contextual relevance in generative search therefore determines whether a passage aligns with inferred intent under retrieval-augmented generation pipelines, a mechanism structurally described in transformer research published by DeepMind Research. This evaluation logic integrates embedding similarity, probabilistic inference, and factual grounding within a unified reasoning layer.

Contextual relevance — alignment between query intent, conceptual structure, and factual density. This alignment ensures that semantic relationships remain stable across the entire context window. Consequently, models prioritize passages that maintain relational consistency rather than lexical repetition.

Claim: Contextual relevance in generative search overrides frequency-based signals.

Rationale: Models weigh relational coherence across tokens.

Mechanism: Embedding similarity mapping replaces lexical frequency metrics.

Counterargument: Short queries may still bias toward term overlap.

Conclusion: Context alignment ensures extractive stability.

Principle: Content achieves generative visibility when semantic structure, entity consistency, and contextual alignment remain stable across the entire reasoning window of a language model.

Transition from Keywords to Context

The transition from keywords to context marks a structural recalibration of ranking systems. Earlier models matched discrete tokens, whereas contemporary systems evaluate multi-token semantic structures. Therefore, the evolution of search beyond keywords reflects architectural change rather than incremental tuning.

Semantic optimization for AI systems requires stable entity mapping and coherent conceptual layering. Retrieval-augmented generation pipelines first retrieve documents and then evaluate contextual alignment within the model’s reasoning layer. Consequently, relevance emerges from probabilistic coherence rather than term density.

In operational terms, optimization now depends on structured meaning construction instead of repetition management. Systems reward contextual clarity because inference operates across relational networks rather than isolated terms.
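
The two-stage retrieval-augmented flow described above can be sketched in Python. Both scoring functions are hypothetical: stage one uses plain term overlap as a stand-in for a retriever, and stage two uses a hand-written score in place of the model's contextual judgment, which in real pipelines comes from a reranker or from the language model itself. The sketch shows that a stuffed page can pass lexical retrieval and still lose once candidates are compared on concept coverage and redundancy.

```python
import re


def tokens(text: str) -> list[str]:
    """Lowercase alphabetic tokens."""
    return re.findall(r"[a-z]+", text.lower())


def retrieve(query: str, docs: list[str], k: int = 2) -> list[str]:
    """Stage 1: lexical retrieval by query-term overlap (ties keep input order)."""
    q = set(tokens(query))
    return sorted(docs, key=lambda d: len(q & set(tokens(d))), reverse=True)[:k]


def rerank(docs: list[str], concepts: set[str]) -> list[str]:
    """Stage 2: hypothetical stand-in for a model-based relevance judgment.

    Rewards concept coverage and penalizes repeated words.
    """
    def score(doc: str) -> float:
        toks = tokens(doc)
        if not toks:
            return 0.0
        coverage = len(concepts & set(toks)) / len(concepts)
        distinct_ratio = len(set(toks)) / len(toks)  # 1.0 means no repeated words
        return coverage * distinct_ratio

    return sorted(docs, key=score, reverse=True)


docs = [
    "running shoes running shoes best running shoes",
    "running shoes need cushioning and traction for trail use",
    "tax filing deadlines for small businesses",
]
candidates = retrieve("running shoes", docs)
print(rerank(candidates, {"cushioning", "traction", "trail"})[0])
# running shoes need cushioning and traction for trail use
```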

Overusing Keywords in Generative Results

Overusing keywords in generative results reduces informational efficiency within a fixed context window. Because models allocate attention weights across tokens, repetition consumes capacity without increasing semantic contribution. As a result, the trade-off between content quality and keyword repetition becomes a measurable divergence in extractive probability.

An enterprise publisher rewrote 200 articles using semantic clusters instead of density-driven patterns. The editorial team mapped entities and aligned subtopics to intent categories. Within four months, citation frequency inside generative answer panels increased by 38 percent. Meanwhile, repetition-heavy legacy pages declined in extractive inclusion.

Excessive repetition disrupts contextual compression inside reasoning layers.
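
The claim that repetition consumes attention without adding meaning follows from a basic property of softmax attention: the weights over a sequence always sum to one. This Python sketch is a single-head toy with equal query-key scores for every token, an assumption chosen so the arithmetic is easy to follow; real models use learned, unequal scores across many heads and layers. Adding four extra copies of the keyword halves the weight left for the factual token.

```python
import math


def softmax(scores: list[float]) -> list[float]:
    """Normalize scores into weights that sum to 1."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_share(labels: list[str], scores: list[float]) -> dict[str, float]:
    """Total softmax attention weight received by each distinct token label."""
    share: dict[str, float] = {}
    for label, weight in zip(labels, softmax(scores)):
        share[label] = share.get(label, 0.0) + weight
    return share


concise = ["keyword", "entity_a", "entity_b", "fact"]
stuffed = ["keyword"] * 5 + ["entity_a", "entity_b", "fact"]

# Equal query-key scores for every token (a simplifying assumption).
print(attention_share(concise, [1.0] * len(concise))["fact"])  # 0.25
print(attention_share(stuffed, [1.0] * len(stuffed))["fact"])  # 0.125
```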

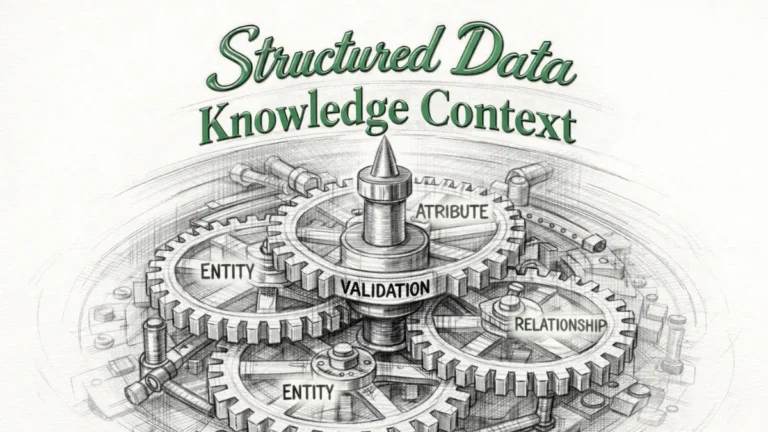

Entity Relevance and Semantic Coherence

Search systems increasingly operate through entity-based reasoning rather than string-based matching. The contrast between keyword stuffing and entity relevance defines the structural divergence between repetition logic and graph-grounded interpretation, particularly within the knowledge-driven architectures documented in research from the Allen Institute for Artificial Intelligence (AI2). This shift reflects the integration of knowledge graphs, entity disambiguation systems, and probabilistic reasoning layers inside generative engines.

Entity relevance — structured association between named concepts validated across authoritative sources. Entity relevance ensures that a concept maintains stable meaning across documents, datasets, and structured knowledge bases. Consequently, visibility depends on entity anchoring rather than lexical inflation.

Claim: Entity modeling is structurally superior to keyword stuffing.

Rationale: Entities anchor meaning within knowledge graphs.

Mechanism: Generative engines integrate graph-based reasoning layers.

Counterargument: Emerging entities may lack graph authority.

Conclusion: Entity modeling stabilizes generative reuse.
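
Entity anchoring can be illustrated with a toy graph. In the Python sketch below, the graph, the entity names, and the scoring rule are hypothetical, and entity mentions are assumed to be already extracted (a real system would rely on entity recognition and disambiguation). A passage that connects related entities is fully anchored, while a passage that repeats one entity three times has nothing to anchor it. Entities missing from the graph are simply ignored, which mirrors the counterargument about emerging entities.

```python
# Hypothetical toy knowledge graph with symmetric (undirected) edges.
GRAPH: dict[str, set[str]] = {
    "transformer": {"attention", "embedding"},
    "attention": {"transformer"},
    "embedding": {"transformer", "vector space"},
    "vector space": {"embedding"},
}


def anchored_share(mentions: list[str], graph: dict[str, set[str]]) -> float:
    """Fraction of distinct known entities in a passage that link to another
    entity mentioned in the same passage. Entities absent from the graph
    (for example, emerging ones) are ignored."""
    entities = {m for m in mentions if m in graph}
    if not entities:
        return 0.0
    anchored = {e for e in entities if graph[e] & (entities - {e})}
    return len(anchored) / len(entities)


print(anchored_share(["transformer", "attention", "embedding"], GRAPH))  # 1.0
print(anchored_share(["transformer"] * 3, GRAPH))                         # 0.0
```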

Semantic Coherence vs Keyword Frequency

Comparing semantic coherence with keyword frequency reveals the operational distinction between graph-integrated reasoning and surface-level repetition. Frequency increases token presence but does not strengthen conceptual relationships. In contrast, coherence strengthens relational stability between entities.

The gap between keyword stuffing and semantic relevance becomes measurable when models evaluate entity consistency across sentences. When terms repeat without relational expansion, coherence decreases. However, when entities connect through definitional clarity and factual grounding, generative extraction probability increases.

In practice, models reward passages that build structured conceptual continuity. Repetition alone does not create entity authority. Meaning alignment across entities determines reuse potential.
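
The difference between repetition and relational expansion can be sketched with a measure loosely inspired by entity-grid models of local coherence. This Python example assumes each sentence has already been reduced to a hand-labeled set of entities, and the metric itself is a hypothetical illustration. It counts a transition as progressing only when a sentence both keeps an entity from the previous sentence and adds a new one, so a passage that repeats one keyword scores zero, just like a passage whose sentences share nothing.

```python
def progression_score(sentence_entities: list[set[str]]) -> float:
    """Share of adjacent sentence pairs where the later sentence shares an
    entity with the earlier one and also introduces at least one new entity."""
    pairs = list(zip(sentence_entities, sentence_entities[1:]))
    if not pairs:
        return 0.0
    progressing = sum(1 for prev, cur in pairs if (prev & cur) and (cur - prev))
    return progressing / len(pairs)


coherent = [{"llm", "embedding"}, {"embedding", "vector space"}, {"vector space", "similarity"}]
stuffed = [{"seo"}, {"seo"}, {"seo"}]
disjoint = [{"llm"}, {"tax law"}, {"gardening"}]

for name, doc in (("coherent", coherent), ("stuffed", stuffed), ("disjoint", disjoint)):
    print(name, progression_score(doc))
# coherent 1.0
# stuffed 0.0
# disjoint 0.0
```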

Example: A document structured around entities and clearly defined conceptual layers enables generative engines to identify high-confidence semantic segments, increasing the likelihood of extractive reuse in synthesized AI responses.

Language Models and Keyword Abuse

Language models evaluate sequences using attention-based weighting mechanisms. Models favor semantic clarity because coherent context reduces uncertainty in next-token prediction. Keyword repetition therefore becomes a risk in AI search when it disrupts contextual balance.

When repetition exceeds informational contribution, token distribution skews away from expected semantic density. Models interpret this skew as low informational efficiency. Consequently, passages that inflate terms without expanding meaning experience reduced extractive stability.

Generative systems prioritize stable entity mapping across context windows. Coherence increases citation probability.

Content Trust and Generative Visibility

AI systems increasingly incorporate trust modeling into ranking and synthesis pipelines. Keyword stuffing and content trust intersect directly because repetition patterns influence credibility evaluation within probabilistic reasoning layers. In reasoning-first environments, keyword stuffing amplifies this credibility erosion, a dynamic reflected in the digital trust metrics and knowledge integrity frameworks analyzed in datasets from OECD Data Explorer. Consequently, repetition abuse affects not only ranking logic but also extractive reliability across generative systems.

Content trust — probability that a generative engine will reuse a passage without distortion. Content trust depends on semantic integrity, citation stability, and entity coherence. Therefore, passages that inflate keywords without expanding informational value weaken trust probability.

Claim: Keyword stuffing and content trust are inversely correlated.

Rationale: Repetition signals low informational density.

Mechanism: Trust signals integrate citation reliability and semantic integrity.

Counterargument: Niche SEO pages may still perform temporarily.

Conclusion: Trust determines generative extraction longevity.

Keyword Stuffing Consequences in 2025

In 2025, the consequences of keyword stuffing extend beyond ranking volatility and affect extractive inclusion rates. Data modeling trends in OECD digital trust datasets show that information reliability metrics increasingly influence digital visibility systems. Therefore, the 2025 decline of keyword stuffing reflects structural recalibration rather than tactical penalty cycles.

As generative interfaces expand across enterprise environments, content reuse probability becomes a measurable asset. Pages with inflated density patterns demonstrate lower inclusion rates in synthesized responses. Meanwhile, semantically coherent pages show higher persistence across answer-generation systems.

Density-based tactics therefore create diminishing returns. Systems reward informational integrity because credibility signals shape long-term visibility stability.

Keyword Stuffing and AI Penalties

AI penalties for keyword stuffing operate differently from traditional algorithmic demotions. Instead of lowering rankings, generative systems exclude low-coherence passages from synthesized outputs. Consequently, visibility decreases without explicit penalty flags.

AI engines evaluate extractive trust before including content in structured answers. When repetition disrupts semantic clarity, inclusion probability declines. Therefore, penalties manifest as invisibility rather than ranking suppression.

Penalties manifest as invisibility, not demotion.

Future of Keyword Optimization in AI Systems

Enterprise SEO has entered a structural transition phase where repetition-based tactics lose influence inside reasoning-driven environments. The future of keyword optimization depends on semantic architecture rather than density calibration, particularly as the large language models described in OpenAI research papers demonstrate scalable reasoning and contextual generalization across diverse domains. Therefore, optimization must align query language with structured meaning rather than inflate lexical frequency.

Keyword optimization — integration of query language into semantic architecture without repetition bias. This definition reframes optimization as architectural alignment between query intent, entity mapping, and factual grounding. Consequently, effective optimization embeds language within coherent knowledge modules.

Claim: The future of keyword optimization centers on semantic alignment.

Rationale: Generative systems reward structured knowledge modules.

Mechanism: Content blocks integrate entities, citations, and stable terminology.

Counterargument: Legacy SERP logic persists in hybrid ecosystems.

Conclusion: Optimization becomes architecture-driven.

Generative Search Ranking Factors Revisited

Generative search ranking factors operate across interpretation layers that evaluate conceptual coherence and source stability. AI evaluation of keyword abuse now integrates contextual probability, entity validation, and citation consistency within a unified reasoning framework. Therefore, ranking reflects structural clarity rather than term repetition.

Enterprises that align content with entity-based architecture demonstrate higher inclusion rates in generative responses. Meanwhile, density-driven structures show diminishing extractive presence. Consequently, ranking calibration must prioritize semantic integrity and factual stability.

In practical terms, ranking factors now measure how clearly meaning structures integrate across a document. Systems reward conceptual alignment because interpretive stability determines reuse probability.

AI Ranking Without Keyword Density

AI ranking that does not rely on keyword density reflects probabilistic reasoning rather than frequency scoring. Natural language ranking in AI prioritizes contextual plausibility and semantic continuity across sentences. Therefore, repetition thresholds no longer function as primary optimization levers.

Large models evaluate coherence within embedding space and compare relational consistency across tokens. When passages maintain stable entity alignment, ranking probability increases even without high term frequency. Consequently, structured meaning replaces lexical inflation as the dominant optimization strategy.

Optimization equals interpretability.

This redefinition of optimization aligns with the broader framework of Generative Engine Optimization (GEO), which formalizes how content must be structured for AI interpretation rather than ranking manipulation. The conceptual foundations of this transition are detailed in "What Is Generative Engine Optimization (GEO)?", where optimization is reframed as semantic architecture rather than density calibration.

Checklist:

- Does the content maintain entity coherence across sections?

- Are conceptual boundaries clearly defined without repetition bias?

- Do headings reflect semantic depth rather than keyword variation?

- Is contextual relevance preserved within each reasoning block?

- Are authoritative references integrated to stabilize factual grounding?

- Does the structure support probabilistic interpretation in generative systems?

Enterprise Structural Implications

Enterprise content systems increasingly operate within AI-mediated discovery environments. Optimizing without keyword stuffing requires structural governance models that align content production with interpretive logic, a need underscored by research on data-centric AI governance at the Harvard Data Science Initiative, which emphasizes structured reasoning and model-aligned knowledge representation. The operational implications therefore extend beyond editorial tactics and require institutional realignment, especially as keyword stuffing in generative search continues to reduce extractive stability in reasoning-first systems.

Enterprise semantic governance — standardized content structuring for AI interpretability. This governance model integrates stable terminology, entity mapping, citation discipline, and semantic container design. Consequently, enterprises must formalize interpretive consistency as a measurable operational objective, since keyword stuffing in generative search undermines semantic credibility within probabilistic inference layers.

Claim: Optimizing without keyword stuffing increases generative visibility stability.

Rationale: Structured content enhances machine extraction.

Mechanism: Stable terminology, semantic containers, and evidence anchors improve reuse.

Counterargument: Over-structuring may reduce readability.

Conclusion: Structural discipline determines generative competitiveness.
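
Terminology stability, one of the governance components named above, is straightforward to check automatically. This Python sketch is a hypothetical lint step: the variant-to-canonical mapping is an invented example of a style guide, and whole-word regular-expression matching is a deliberately simple choice that will miss inflected forms such as plurals.

```python
import re

# Hypothetical style-guide entries: non-canonical variant -> canonical term.
CANONICAL = {
    "gen ai search": "generative search",
    "ai-powered search": "generative search",
    "llm": "large language model",
}


def terminology_issues(text: str, canonical: dict[str, str]) -> list[tuple[str, str]]:
    """Return (variant, canonical) pairs for each variant found as a whole word."""
    lowered = text.lower()
    issues = []
    for variant, preferred in canonical.items():
        if re.search(r"\b" + re.escape(variant) + r"\b", lowered):
            issues.append((variant, preferred))
    return issues


page = "Gen AI search changes ranking. Our LLM team audits generative search pages."
for variant, preferred in terminology_issues(page, CANONICAL):
    print(f"replace '{variant}' with '{preferred}'")
# replace 'gen ai search' with 'generative search'
# replace 'llm' with 'large language model'
```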

Modern Search Without Keyword Stuffing

Modern search without keyword stuffing reflects a structural break from density-era optimization models. As search evolves beyond keywords, governance shifts from repetition auditing to semantic auditing. Therefore, enterprises must redesign editorial workflows around interpretive stability rather than frequency metrics.

Content systems that still rely on density thresholds misalign with generative extraction logic. Meanwhile, systems that implement semantic alignment frameworks demonstrate higher reuse consistency across conversational interfaces. Consequently, governance evolves from token control to entity orchestration.

Enterprises must therefore treat meaning modeling as infrastructure. Frequency management no longer defines competitive positioning in reasoning-first ecosystems.

Generative Visibility and Authority

Generative visibility and authority depend on structural coherence across documents. Keyword stuffing and model interpretation conflict because repetition distorts probabilistic inference inside reasoning layers. Consequently, topical authority, rather than keyword density, becomes the primary determinant of reuse probability.

Authority emerges from entity consistency, citation stability, and contextual integrity. When enterprise content aligns with structured knowledge modules, generative engines integrate those passages into synthesized outputs. Conversely, repetition-heavy content fails to meet extractive trust thresholds.

Visibility stability depends on governance alignment rather than tactical adjustments. Authority becomes an architectural outcome of semantic discipline.

| Legacy SEO Control | Generative Governance |
|---|---|
| Density auditing | Semantic auditing |
| Keyword maps | Entity maps |
| Repetition thresholds | Context alignment rules |

The comparison clarifies that operational control shifts from lexical management to structural alignment. Enterprise systems must institutionalize semantic alignment.

Conclusion

The structural transformation from retrieval-based ranking to reasoning-based synthesis defines the end of keyword stuffing as a viable optimization strategy. Generative engines evaluate semantic coherence, entity stability, and contextual plausibility instead of lexical density. Therefore, the future of keyword optimization depends on architectural alignment rather than repetition control.

Generative search ranking factors prioritize interpretive stability, citation integrity, and structured meaning modules. Enterprises that integrate semantic governance into editorial systems maintain extractive visibility across AI-driven interfaces. Meanwhile, density-focused approaches experience gradual exclusion from synthesized responses.

Optimization now functions as knowledge architecture. Consequently, organizations must transition from frequency management to entity-based structuring. The shift from lexical inflation to semantic discipline redefines competitive advantage in AI-mediated discovery environments.

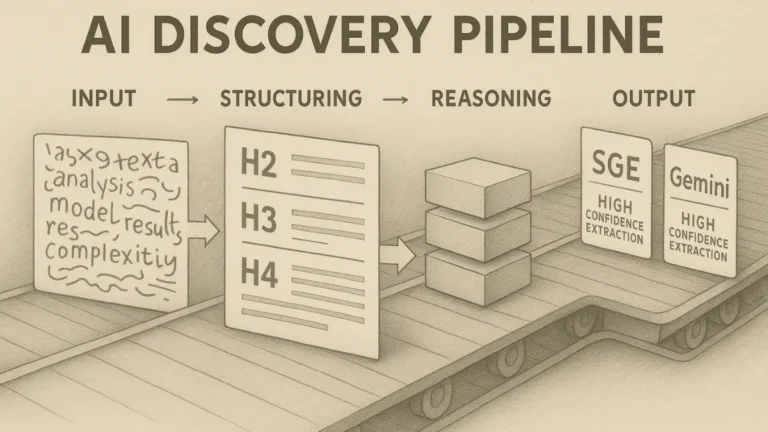

Interpretive Architecture of Reasoning-First Search Pages

- Semantic boundary isolation. Clearly segmented conceptual blocks reduce cross-paragraph ambiguity and allow generative systems to map meaning within defined interpretive containers.

- Entity coherence structuring. Stable entity references across headings and paragraphs create graph-aligned signals that support probabilistic reasoning within large language models.

- Context window optimization. Predictable structural layering enables efficient allocation of attention weights across sections during inference.

- Definition-centered modeling. Immediate local definitions stabilize terminology and reduce semantic drift during multi-layer compression and summarization.

- Inference-aligned hierarchy depth. Structured H2→H3→H4 progression provides interpretable depth cues that improve extractive stability in generative synthesis environments.

These architectural signals clarify how reasoning-first search systems interpret structural consistency, entity stability, and semantic layering as foundational inputs for generative extraction.
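
The H2→H3→H4 progression described in the list above can be checked mechanically. This Python sketch assumes the page source is Markdown with ATX-style headings (an assumption; the same check applies to HTML heading tags once their levels are extracted), and it flags any heading that jumps more than one level deeper than the heading before it.

```python
import re


def heading_levels(markdown: str) -> list[int]:
    """Levels of ATX-style Markdown headings (## -> 2, ### -> 3, ...)."""
    levels = []
    for line in markdown.splitlines():
        match = re.match(r"^(#{1,6})\s+\S", line)
        if match:
            levels.append(len(match.group(1)))
    return levels


def hierarchy_gaps(levels: list[int]) -> list[tuple[int, int, int]]:
    """(position, previous level, level) wherever a heading skips a level going deeper."""
    return [
        (i, levels[i - 1], levels[i])
        for i in range(1, len(levels))
        if levels[i] > levels[i - 1] + 1
    ]


outline = "## Intro\n### Mechanism\n#### Example\n## Governance\n#### Checklist\n"
print(heading_levels(outline))                  # [2, 3, 4, 2, 4]
print(hierarchy_gaps(heading_levels(outline)))  # [(4, 2, 4)]
```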

FAQ: Keyword Stuffing in Generative Search

What is keyword stuffing in generative search?

Keyword stuffing in generative search refers to repetitive term usage intended to influence visibility, which fails in AI systems that evaluate semantic coherence instead of frequency.

Why does keyword density no longer work in AI search?

Generative engines rely on contextual embeddings and probabilistic reasoning, so repetition without conceptual expansion reduces interpretive clarity.

How do generative search ranking factors differ from traditional SEO?

Traditional SEO emphasized keyword frequency and link signals, whereas generative search ranking factors prioritize entity coherence, factual grounding, and contextual alignment.

How do AI systems detect keyword abuse?

Language models analyze token distribution patterns and contextual consistency, identifying repetition that does not contribute additional semantic value.

What happens when content uses excessive repetition?

Excessive repetition lowers extractive probability in generative answers, leading to reduced inclusion in AI-generated summaries and conversational responses.

What replaces keyword stuffing in modern optimization?

Semantic architecture, entity mapping, stable terminology, and evidence-based structuring replace density-focused tactics in reasoning-driven environments.

How does entity relevance influence generative visibility?

Entity relevance anchors meaning within knowledge graphs, increasing the likelihood that generative systems reuse and synthesize content accurately.

Does keyword stuffing still affect traditional search engines?

Some hybrid systems still integrate lexical scoring layers, but reasoning-based interfaces prioritize semantic coherence over repetition.

What defines future keyword optimization?

Future keyword optimization integrates query language into structured semantic architecture without repetition bias.

Why is semantic clarity critical for AI extraction?

Semantic clarity stabilizes probabilistic inference, improves contextual alignment, and increases citation probability in generative systems.

Glossary: Key Terms in Generative Search Architecture

This glossary defines the core terminology used throughout this article to ensure interpretive stability for both readers and generative AI systems.

Generative Search

A reasoning-driven search paradigm where AI systems synthesize contextual answers instead of ranking documents based on keyword frequency.

Keyword Stuffing

The repetitive insertion of search terms intended to influence ranking signals, ineffective in generative systems that prioritize semantic coherence.

Entity Relevance

The structured association between named concepts validated across knowledge graphs and authoritative sources.

Contextual Relevance

Alignment between query intent, semantic structure, and factual density within a model’s context window.

Generative Ranking

Model-based prioritization driven by contextual probability, entity coherence, and structural clarity rather than lexical repetition.

Content Trust

The probability that a generative engine will reuse a passage without distortion based on semantic integrity and citation reliability.

Semantic Coherence

The logical consistency of concepts across sentences that supports probabilistic reasoning and extractive stability.

Knowledge Graph Integration

The incorporation of structured entity relationships into AI reasoning layers to anchor meaning and reinforce authority signals.

Semantic Governance

Institutionalized content structuring standards that maintain terminology stability and interpretive clarity across enterprise systems.

Extractive Visibility

The likelihood that a passage will be selected, cited, and synthesized by generative AI systems in response outputs.