Last Updated on March 22, 2026 by PostUpgrade

Writing for AI Interpretation, Not Human Skimming

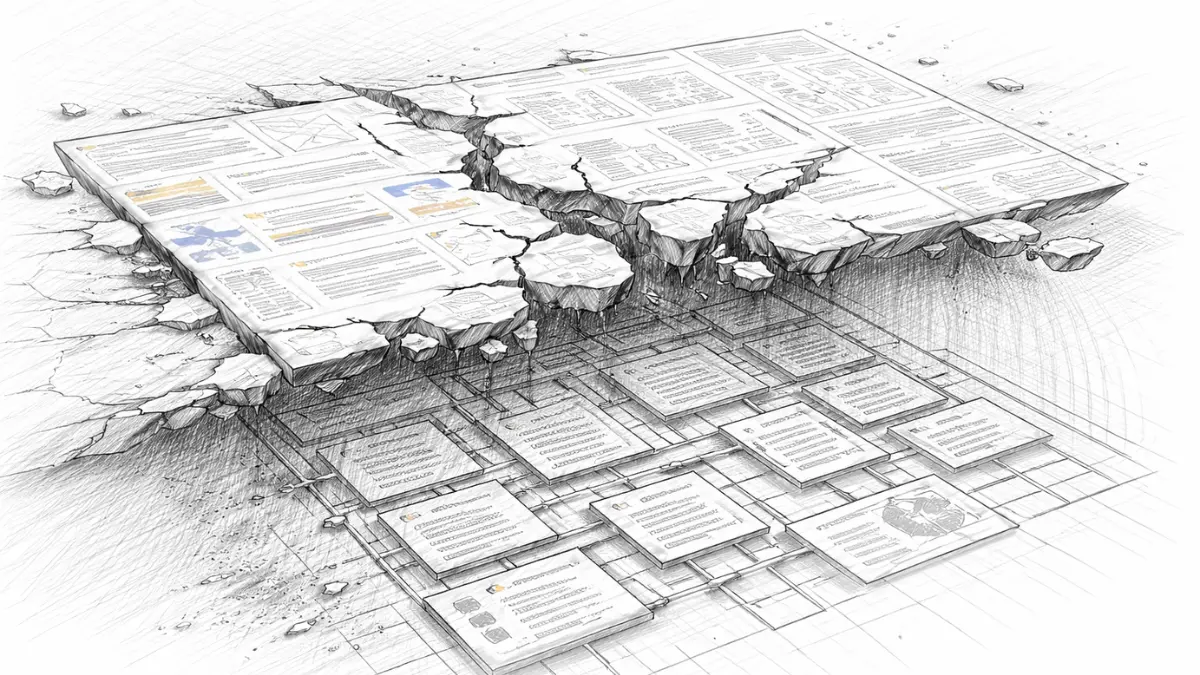

AI ignores layout and extracts only dependency chains, so meaning survives only when structure explicitly encodes definitions, scope, and reasoning.

TL;DR: Content built for human skimming loses meaning when AI strips visual cues and processes text as linear semantic dependencies. This causes interpretation drift, broken reasoning chains, and unreliable reuse in summaries and generative outputs. By enforcing explicit structure, stable terminology, and reasoning blocks, AI can accurately interpret, extract, and reuse content, increasing visibility and long-term persistence.

If structure is implicit, AI will reconstruct meaning incorrectly and your content will disappear from generative results.

Human-skimming assumptions are collapsing as AI-mediated discovery becomes the dominant access layer for information. Interpretation-first writing reframes content as a machine-readable structure rather than a visually optimized artifact for rapid scanning. This approach AI accessibility is sustained interpretability across modelestablishes AI interpretation writing as a structural requirement that governs how meaning is encoded, preserved, and reused by generative systems. The scope of this article spans language control, structural design, explicit reasoning, and long-term reuse across evolving AI models.

The Shift From Human Skimming to AI Interpretation

Web writing evolved around visual scanning, attention capture, and layout-driven emphasis, yet these signals do not survive machine processing at scale. As discovery shifts to AI-mediated interfaces, writing for AI interpretation replaces skimming logic with structural meaning control, which explains why human-centric assumptions fail under model ingestion. This shift highlights behavioral and architectural differences that define how content is parsed, weighted, and reused, as documented by research from the Stanford Natural Language Institute.

Definition: AI understanding is the model’s ability to interpret meaning, structure, and conceptual boundaries in a way that enables accurate reasoning, reliable summarization, and consistent content reuse across generative discovery systems.

Claim: Human skimming logic is incompatible with AI interpretation.

Rationale: AI systems do not perceive emphasis, layout, or visual hierarchy.

Mechanism: Models process text as linear, dependency-based semantic sequences.

Counterargument: Some UI layers mimic skimmable summaries.

Conclusion: Content must be designed for interpretation, not scanning.

Human Skimming Models

Human reading behavior relies on non human reading models that prioritize visual prominence over semantic order, which historically shaped editorial practices toward headings, highlights, and layout contrast. As a result, authors assume that readers can skip segments while still preserving intent, because visual cues signal importance without requiring full textual traversal. However, this logic depends on perceptual shortcuts that do not exist in machine ingestion pipelines.

At the same time, writing beyond human reading exposes a mismatch between how meaning is conveyed and how it is consumed by models. When emphasis is created through formatting rather than explicit language, semantic weight becomes implicit and therefore unstable during extraction. Consequently, meaning fragments drift when removed from their visual context.

- Meaning inferred from visual prominence

- Emphasis created by formatting

- Partial reading still preserves intent

These assumptions collapse when content is processed without vision.

AI Interpretation Models

AI systems operate through writing interpreted by AI as a continuous semantic stream, where every sentence contributes probabilistically to meaning formation. Unlike human readers, models do not prioritize headers or highlighted segments unless language explicitly encodes priority and scope. Therefore, interpretation emerges from dependency resolution rather than from visual landmarks.

In addition, text interpreted by AI models requires uninterrupted semantic continuity, because inference depends on how concepts connect across sentences and sections. When content assumes skipping behavior, it introduces gaps that degrade dependency chains and reduce reuse reliability. As a result, interpretation accuracy declines even if surface readability appears high.

- Linear semantic ingestion

- Dependency-driven meaning construction

- No concept of skipping text

Interpretation requires full semantic continuity.

Interpretation-First Writing as a Content Architecture

Most content architectures optimize for engagement metrics that reward attention capture rather than semantic stability. Interpretation first writing reframes architecture as a control system that governs how meaning persists during machine processing, which clarifies why engagement-first layouts fail under AI ingestion. This approach focuses on structural and conceptual design choices that minimize interpretive variance across contexts, as supported by research from MIT CSAIL.

Definition: Interpretation-first writing is a content architecture designed to minimize interpretive variance during machine processing by enforcing stable structure, explicit scope, and predictable semantic roles.

Claim: Interpretation-first writing is an architectural discipline.

Rationale: AI systems rely on stable structural signals to construct internal meaning graphs.

Mechanism: Fixed semantic roles reduce ambiguity by constraining how sections relate and how claims propagate.

Counterargument: Creative formats resist rigidity and may prioritize expression over stability.

Conclusion: Enterprise content must favor interpretability to ensure reliable reuse and extraction.

Principle: Content becomes more visible in AI-driven environments when its structure, definitions, and conceptual boundaries remain stable enough for models to interpret without ambiguity.

Interpretation-Driven Content Design

Interpretation driven content assigns each section a fixed purpose that remains consistent across documents and domains. As a result, interpretation oriented language encodes intent directly in structure rather than relying on stylistic emphasis, which reduces ambiguity during extraction. Consequently, models can infer scope and priority without relying on presentation cues.

Moreover, predictable structural signals allow AI systems to map content into reusable units with clear boundaries. When section roles remain stable, meaning transfers more reliably across summaries, citations, and recompositions. Therefore, architecture becomes a primary determinant of interpretation fidelity.

- Fixed section purpose

- Predictable semantic order

- Explicit concept boundaries

Architecture defines interpretation fidelity.

Interpretation Stability vs Expressiveness

Interpretation stable writing constrains variability to preserve meaning across contexts, whereas expressive formats tolerate fluid structure that shifts emphasis by tone or layout. In contrast, interpretation controlled text encodes priority and scope explicitly, which increases reliability during machine processing. As a result, stability directly influences whether content can be reused without distortion.

At the same time, expressive writing often depends on contextual cues that do not survive extraction. When meaning remains implicit, models infer intent probabilistically, which increases variance across outputs. Therefore, architectural stability becomes essential for long-term reuse.

| Dimension | Expressive Writing | Interpretation-First Writing |

|---|---|---|

| Structure | Flexible | Fixed |

| Meaning | Contextual | Explicit |

| Reuse | Low | High |

Stability enables long-term AI reuse.

How AI Systems Interpret Written Language

Misunderstanding of model-level reading behavior persists because most writing guidance assumes human perception as the baseline. AI interpretation focused writing addresses this gap by aligning content with how models process language internally rather than how interfaces present it. This section explains the mechanics of semantic processing that govern interpretation accuracy and reuse, grounded in findings from the Allen Institute for Artificial Intelligence.

Definition: AI interpretation is the probabilistic construction of meaning from token dependencies that emerge through ordered semantic processing.

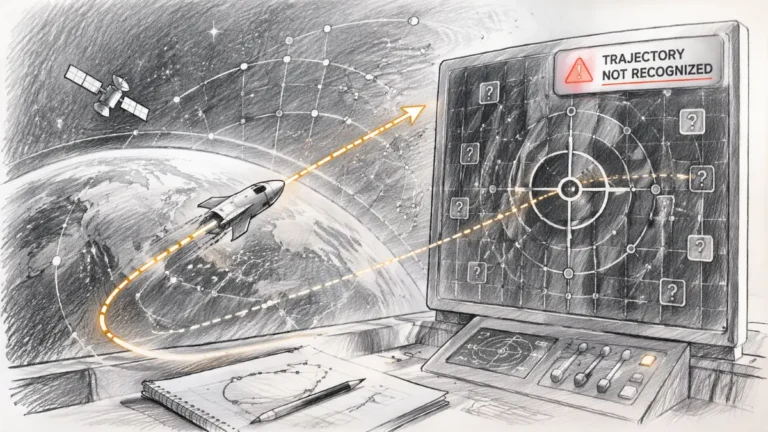

Claim: AI interpretation operates below presentation layers.

Rationale: Models consume raw semantic sequences without access to visual emphasis or layout hierarchy.

Mechanism: Meaning emerges from dependency resolution across tokens, sentences, and sections.

Counterargument: UI summaries mask this process by presenting synthesized outputs that appear visually structured.

Conclusion: Interpretation logic is structural, not visual.

Semantic Dependency Construction

AI interpretation content logic depends on how semantic dependencies form across a text rather than on isolated statements. When semantic interpretation writing maintains clear ordering, definitions, and scoped references, models can resolve relationships with higher confidence. As a result, dependency strength increases when concepts appear in predictable positions relative to their explanations.

Furthermore, dependency formation relies on repeated exposure to the same concept within a controlled scope. When sentence order reinforces definition proximity and concept recurrence, models assign higher semantic weight to those relationships. Therefore, dependency construction becomes a primary mechanism for meaning stability.

- Sentence order

- Definition proximity

- Concept recurrence

Dependency signals define meaning weight.

Interpretation Constraints in Models

AI interpretation constraints arise when language introduces ambiguity that weakens dependency resolution. Machine interpretation constraints increase when terms lack explicit definitions or when scopes overlap without clear boundaries. Consequently, models must infer missing structure, which raises the probability of drift.

In addition, constraints compound across long documents when early ambiguity propagates downstream. Mixed scopes cause inference errors because models cannot reliably separate concepts that share terminology but differ in intent. Therefore, constraint management directly influences extraction reliability.

| Constraint Type | Effect on Interpretation |

|---|---|

| Ambiguous terms | Meaning drift |

| Missing definitions | Graph gaps |

| Mixed scopes | Inference errors |

Constraints determine extraction reliability.

Writing for Machine Interpretation Instead of Human Readability in AI Interpretation Writing

Readability metrics dominate editorial decisions because they reflect human preferences for ease and speed. Machine interpretation writing reframes these objectives by prioritizing deterministic parsing over perceived fluency, which aligns content with how models evaluate language internally, as evidenced by research from Carnegie Mellon University’s Language Technologies Institute. This shift centers linguistic control as the primary lever for reliable extraction and reuse.

Definition: Machine interpretation writing is text optimized for deterministic parsing by AI systems through explicit structure, controlled syntax, and scoped meaning.

Claim: Machine interpretation demands linguistic discipline.

Rationale: AI prioritizes clarity over engagement when constructing semantic graphs.

Mechanism: Controlled syntax reduces inference noise by limiting ambiguity and reference drift.

Counterargument: Over-simplification risks shallowness when nuance is removed without replacement.

Conclusion: Precision enables depth without ambiguity.

Linguistic Constraints for AI

Language interpreted by AI benefits from constraints that make meaning explicit at the sentence level. When authors encode one fact per sentence and preserve a consistent grammatical order, models can assign dependencies with higher confidence. As a result, AI aligned writing logic reduces the need for probabilistic guesswork during parsing.

At the same time, constraint-driven language prevents hidden assumptions from propagating across sections. Implicit references force models to infer antecedents, which increases variance across outputs. Therefore, linguistic discipline functions as a stabilizer for interpretation.

- One fact per sentence

- Subject → Predicate → Meaning

- No implicit references

Constraint increases semantic fidelity.

Meaning Stability in Machine Processing

AI interpretation reliability improves when terminology remains consistent and scopes are explicit across a document. Stable language allows models to reuse previously established definitions rather than reconstruct meaning repeatedly. Consequently, consistency lowers the probability of misinterpretation during summarization and recomposition.

Moreover, AI interpretation consistency depends on how clearly boundaries are defined between concepts. When scope remains explicit, models avoid blending adjacent ideas into a single representation. As a result, stable signals translate directly into predictable reuse.

| Signal | Outcome |

|---|---|

| Consistent terminology | High reuse |

| Explicit scope | Low misinterpretation |

Stability predicts reuse.

Semantic Containers as Interpretation Control Units

Long-form content increases semantic bleed because concepts accumulate across sections without clear boundaries. Semantic interpretation writing addresses this risk by introducing modular units that isolate meaning and control how models traverse complex documents, which aligns with research on information boundaries from the Oxford Internet Institute. This approach applies modular meaning control to stabilize interpretation across extraction, summarization, and reuse.

Definition: A semantic container is a bounded unit that isolates a single concept to prevent scope overlap during machine processing.

Claim: Containers stabilize interpretation.

Rationale: AI reuses bounded concepts more accurately than diffuse narratives.

Mechanism: Containers prevent inference leakage by enforcing explicit scope and role separation.

Counterargument: Poor design fragments meaning when boundaries are unclear or inconsistent.

Conclusion: Containers must be explicit to preserve semantic integrity.

Container Types

Interpretation safe language emerges when content assigns each container a fixed role that does not change across contexts. Controlled semantic interpretation relies on consistent container design so models can recognize where definitions end, where mechanisms begin, and where implications apply. As a result, containers become predictable anchors for meaning reuse.

Moreover, containerization reduces cross-contamination between adjacent ideas. When each block performs a single function, models avoid blending explanation with evaluation or example with mechanism. Consequently, interpretation remains stable even as documents scale.

- Concept blocks

- Mechanism blocks

- Example blocks

- Implication blocks

Each container enforces meaning isolation.

Example: A page with clear conceptual boundaries and stable terminology allows AI systems to segment meaning accurately, increasing the likelihood that its high-confidence sections will appear in assistant-generated summaries.

Deep Reasoning Chains as AI Knowledge Modules

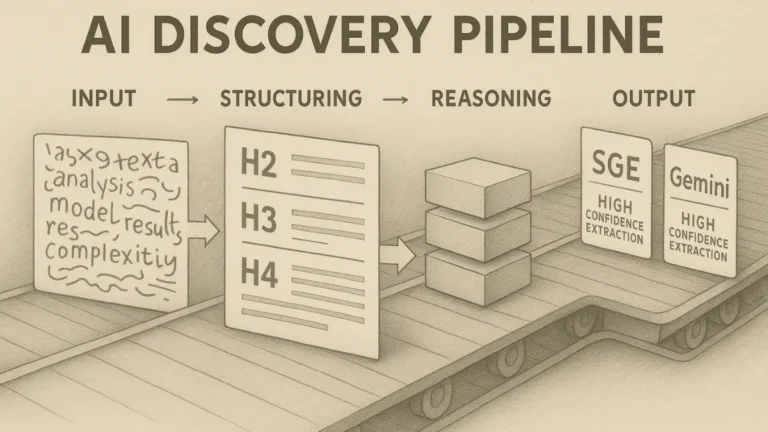

AI systems favor reusable reasoning units because they can be extracted, recombined, and applied across different contexts without reconstructing logic from scratch. In practice, AI interpretation structure depends on explicit reasoning patterns that remain stable under summarization and recomposition, which explains why unstructured arguments degrade during reuse, as documented in formal reasoning and representation work by NIST. This reliance on stable reasoning clarifies why Deep Reasoning Chains function as modular components within AI interpretation structure rather than as narrative explanations.

Definition: A Deep Reasoning Chain is an explicit reasoning sequence encoded as a fixed logical structure to enable reliable reuse by AI systems.

Claim: DRCs act as AI knowledge objects.

Rationale: Models recombine reasoning patterns more reliably than free-form narrative explanations.

Mechanism: Fixed labels guide extraction by signaling claim boundaries, causal logic, and resolution points.

Counterargument: Inconsistency reduces trust when reasoning structure changes between sections or documents.

Conclusion: Uniform DRCs amplify visibility and reuse across generative systems.

DRC Internal Consistency Rules

AI interpretation signal alignment depends on whether reasoning units follow the same internal logic across the entire document. When DRCs preserve identical labels, order, and sentence scope, models can recognize them as repeatable structures rather than isolated explanations. As a result, reasoning becomes portable across contexts.

At the same time, stylistic variation within reasoning chains introduces noise that weakens extraction. If labels shift, order changes, or sentence roles blur, models must infer structure instead of recognizing it. Therefore, consistency functions as a prerequisite for reliable reasoning reuse.

- Fixed labels

- Stable order

- No stylistic variation

Consistency enables extraction.

DRCs and Generative Reuse

AI readable meaning increases when reasoning follows a predictable sequence that models can index and recall. When DRCs appear consistently across documents, AI comprehension stability improves because similar reasoning patterns reinforce one another. Consequently, reuse probability rises without requiring additional contextual reconstruction.

By contrast, content without explicit reasoning forces models to approximate logic from surrounding text. This approximation increases variance across outputs and reduces confidence during citation or synthesis. Therefore, reasoning structure directly predicts reuse outcomes.

| Structure | Reuse Probability |

|---|---|

| DRC present | High |

| No reasoning | Low |

Reasoning structure predicts reuse.

Keyword Placement for Interpretation, Not Ranking

Keyword repetition creates semantic noise because it inflates surface signals without strengthening meaning. Within interpretation-first systems, AI interpretation clarity depends on how precisely terms anchor concepts, and AI interpretation writing treats keyword placement as a structural signal rather than a ranking tactic, which aligns with semantic guidance from the W3C. This logic reframes distribution as interpretation-safe positioning that supports reuse across AI interpretation writing workflows.

Definition: An interpretation-aligned keyword is a term used to anchor meaning within a specific semantic scope rather than to manipulate ranking signals.

Claim: Keywords should reinforce meaning.

Rationale: Excess repetition distorts semantic graphs by overweighting tokens without adding informational value.

Mechanism: Single placement anchors interpretation by binding a term to a defined scope and role.

Counterargument: SEO norms encourage density to maximize visibility.

Conclusion: Interpretation-first placement improves reuse by preserving semantic balance.

Keyword Density vs Semantic Anchoring

AI interpretation readability declines when density substitutes for definition and scope. High repetition increases token frequency but does not clarify relationships, which leads models to infer importance without understanding intent. In contrast, anchoring associates a keyword with an explicit definition and a bounded section, which improves interpretability in AI interpretation writing contexts.

Moreover, anchoring supports consistent extraction across summaries and recompositions. When a keyword appears once in a controlled position, models associate it with surrounding logic instead of dispersing weight arbitrarily. Therefore, anchoring outperforms density for interpretation quality.

| Approach | Interpretation Quality |

|---|---|

| High density | Low |

| Anchored | High |

Anchoring outperforms repetition.

Cluster Distribution Strategy

Interpretation precision writing depends on distributing terms according to semantic roles rather than frequency targets. Interpretation aligned content assigns each keyword a single, purposeful location that corresponds to a section’s intent, which prevents cross-scope interference. As a result, models resolve meaning without reconciling conflicting signals.

At the same time, disciplined distribution simplifies long-form governance. When keywords do not reappear across sections, semantic drift decreases and reuse becomes more predictable. Consequently, distribution functions as a control mechanism for meaning.

- One keyword per H2

- No cross-section reuse

- Semantic fit only

Distribution controls meaning.

Microcases: Interpretation Failures vs Interpretation-Safe Content

Abstract principles require validation because interpretation behavior only becomes visible under real extraction pressure. Interpretation precision writing frames this section around applied outcomes that reveal how structure changes variance, grounded in empirical analysis and systems research from Berkeley Artificial Intelligence Research. The scope focuses on applied patterns where interpretation fails and where it remains stable under reuse.

Definition: An interpretation failure is incorrect or partial meaning extraction by AI caused by ambiguous structure, unstable terminology, or missing scope control.

Claim: Interpretation-safe writing reduces variance.

Rationale: Structure constrains inference by limiting the paths models can take when resolving meaning.

Mechanism: Explicit logic guides extraction through fixed scopes, definitions, and reasoning order.

Counterargument: Dataset bias remains and can influence outputs even with strong structure.

Conclusion: Structure mitigates error even when model bias persists.

Microcase 1

An enterprise documentation set failed when content interpreted by AI relied on visual headings and implicit cross-references. During summarization, machine interpreted content merged separate procedures because definitions appeared after examples and scopes overlapped. As a result, generated outputs omitted prerequisites and reordered steps, which increased operational error. The failure traced back to missing structural anchors rather than incorrect facts.

Microcase 2

A regulatory policy document succeeded because non human content interpretation followed explicit definitions placed before constraints and mechanisms. Content for non human readers maintained stable terminology and isolated each obligation within a fixed section role. Consequently, extracted summaries preserved intent across jurisdictions and formats. The outcome demonstrated that interpretation stability scales when structure governs meaning.

Designing Content for Long-Term AI Accessibility

AI systems retain memory across generations through training corpora, evaluation benchmarks, and retrieval layers that reuse past material. AI interpretation content design treats persistence as a structural outcome rather than a byproduct, which explains why some documents remain interpretable while others decay as models evolve. This section focuses on governance and evolution mechanisms that preserve meaning over time, consistent with longitudinal evidence and policy analysis from the OECD.

Definition: AI accessibility is sustained interpretability across model versions achieved through stable structure, explicit definitions, and controlled terminology.

Claim: Interpretation-first content ages better.

Rationale: Stable structures survive model change because they encode meaning independently of interface or ranking shifts.

Mechanism: Definitions anchor meaning over time by fixing concept boundaries that models can relearn consistently.

Counterargument: Terminology drift occurs as domains evolve and introduce new labels.

Conclusion: Governance preserves meaning by controlling change without freezing progress.

Long-term accessibility must be grounded in a broader optimization framework that defines how generative systems select, evaluate, and reuse content. This foundation is outlined in What Is Generative Engine Optimization (GEO)?, where generative visibility is defined as a structural outcome of clarity, factual grounding, and machine-readable architecture.

Terminology Governance

Interpretation controlled text depends on a managed vocabulary that prevents silent divergence across sections and updates. When authors register terms centrally and lock definitions at first use, models encounter consistent signals that reinforce the same concept graph across documents. As a result, interpretation stable writing reduces variance during retraining and reuse.

At the same time, governance must allow controlled evolution to avoid obsolescence. Change control processes document when and why a term shifts, ensuring that new definitions replace old ones explicitly rather than implicitly. Consequently, models can reconcile updates without blending incompatible meanings.

- Vocabulary registry

- Definition locking

- Change control

Governance prevents drift.

Future-Proof Interpretation Design

AI interpretation reliability improves when documents encode intent in structure rather than rely on transient cues such as popularity or interface layout. When sections maintain fixed roles and definitions precede mechanisms, models reconstruct meaning consistently across versions. Therefore, AI interpretation consistency becomes a property of design, not of model capability.

Over time, skimmable content loses coherence because its signals depend on context that disappears. Interpretable content persists because it carries its own constraints and explanations. This difference explains why longevity correlates with interpretation-first design.

| Content Type | Longevity |

|---|---|

| Skimmable | Short |

| Interpretable | Long |

Interpretation determines longevity.

Checklist:

- Does the page define its core concepts with precise terminology?

- Are sections organized with stable H2–H4 boundaries?

- Does each paragraph express one clear reasoning unit?

- Are examples used to reinforce abstract concepts?

- Is ambiguity eliminated through consistent transitions and local definitions?

- Does the structure support step-by-step AI interpretation?

Interpretive Signals Within AI-Oriented Page Structure

- Hierarchical semantic partitioning. Multi-level heading depth establishes discrete interpretive zones, enabling AI systems to resolve scope and dependency without conflating adjacent concepts.

- Reasoning unit encapsulation. Recurrent structural patterns such as definition blocks and reasoning chains function as bounded cognitive units that models can extract and recombine predictably.

- Terminology stabilization through proximity. Immediate co-location of terms and definitions reduces semantic drift and allows generative systems to anchor meaning locally rather than infer globally.

- Sequential logic reinforcement. Ordered progression from concept to mechanism to implication provides an interpretable reasoning flow that supports long-context synthesis.

- Cross-section structural coherence. Repeated structural roles across sections enable validation of internal consistency during AI-driven indexing and retrieval.

These architectural signals describe how page structure informs machine interpretation, shaping how meaning is segmented, preserved, and reconstructed within generative systems.

FAQ: AI Interpretation Writing

What is AI interpretation writing?

AI interpretation writing is an approach to content creation that encodes meaning through structure, definitions, and reasoning rather than visual emphasis or skimmability.

How does AI interpretation writing differ from readability-focused writing?

Readability-focused writing optimizes for human scanning, while AI interpretation writing prioritizes deterministic semantic parsing by language models.

Why does skimmable content fail in AI-mediated discovery?

Skimmable content relies on visual cues and implicit emphasis that are not preserved when text is processed as a linear semantic sequence by AI systems.

How do AI systems interpret structured content?

AI systems interpret content by resolving dependencies between terms, definitions, and reasoning units across a stable hierarchical structure.

What role does structure play in AI interpretation?

Structure defines scope, priority, and semantic boundaries, allowing AI systems to isolate meaning and reduce interpretive variance.

Why are definitions critical for AI interpretation?

Definitions anchor terminology locally, preventing semantic drift and enabling consistent reuse across generative outputs.

How does reasoning structure affect AI reuse?

Explicit reasoning structures allow AI systems to extract, recombine, and cite logic without reconstructing intent from narrative context.

Is AI interpretation writing tied to search rankings?

AI interpretation writing is independent of ranking mechanics and focuses on interpretability within generative and retrieval-based systems.

Why is AI interpretation writing relevant long term?

Content designed for interpretation remains reusable across model updates because its meaning is preserved structurally rather than contextually.

Glossary: Key Terms in AI Interpretation Writing

This glossary defines the core terminology used throughout the article to maintain semantic stability and consistent interpretation by AI systems.

AI Interpretation Writing

A writing approach that encodes meaning through structure, definitions, and reasoning units rather than visual emphasis or skimmability.

Atomic Paragraph

A paragraph that contains a single semantic idea expressed within strict boundaries, enabling predictable interpretation and reuse.

Semantic Container

A bounded content unit that isolates one concept, mechanism, or implication to prevent scope overlap during AI interpretation.

Interpretation Failure

A condition where AI systems extract incomplete or incorrect meaning due to ambiguous structure, missing definitions, or unstable scope.

Terminology Stability

The maintenance of consistent terms and definitions across content to prevent semantic drift during machine processing.

Information Density

The concentration of explicit, non-redundant statements that enables efficient semantic extraction without reliance on context.

Deep Reasoning Chain

A fixed sequence of declarative reasoning steps that allows AI systems to extract and reuse logic as a modular unit.

Structural Consistency

The repetition of stable section roles and logical order across a document to support reliable interpretation.

Interpretation Variance

The degree to which AI systems produce divergent meanings from the same content due to structural or semantic ambiguity.

Interpretation Predictability

The ability of content structure to produce consistent meaning extraction across AI models and generative contexts.