Last Updated on March 22, 2026 by PostUpgrade

Link Ecosystems: Strengthening the Semantic Network

AI systems tend to read a site as a link graph rather than as a set of individual links, so repeated relational patterns, not isolated connections, carry most of the meaning.

TL;DR: Isolated internal links fail to provide consistent relational signals, causing AI systems to misinterpret structure, fragment meaning, and reuse less content. This leads to weak extraction, unstable context propagation, and low visibility across generative systems. A link ecosystem with stable patterns lets AI systems reliably interpret relationships, extract structured meaning, and reuse content across contexts. The result is stronger semantic clarity, higher AI visibility, and scalable generative reuse.

If your linking is not systematic, AI systems will reconstruct your meaning without your input, and they may get it wrong.

The modern web is shifting away from linear internal linking toward systems that express meaning through structured relationships. In this context, link ecosystem architecture defines how content units connect to form a coherent semantic network instead of isolated pages. AI-driven retrieval systems rely on these relationships to interpret relevance, context, and intent with higher precision. As a result, links now function as semantic signals rather than simple navigation elements.

This transition reflects how generative and analytical models process information through relational patterns and stable contextual boundaries. Link ecosystem architecture enables AI systems to read content as an interconnected structure with predictable meaning flow. Such ecosystems support machine interpretation, reuse, and long-term accessibility at scale. The goal of this article is to explain how link ecosystems strengthen semantic networks and make them interpretable for AI systems.

Concept of a Link Ecosystem in Semantic Systems

A link ecosystem represents a structural approach to meaning organization that goes beyond page-to-page navigation and focuses on relational interpretation. Within semantic systems, link ecosystem architecture defines how content units connect to preserve contextual continuity and support machine-level understanding, a principle widely discussed in research on structured knowledge representation by MIT CSAIL. Unlike traditional internal linking, this approach treats links as carriers of semantic intent rather than directional pointers.

Definition: AI understanding is the model’s ability to interpret semantic relationships, structural boundaries, and contextual signals in a way that enables reliable reasoning, stable interpretation, and consistent reuse across interconnected content systems.

Claim: Link ecosystems act as semantic infrastructures rather than navigation mechanisms.

Rationale: AI systems interpret relational consistency more reliably than isolated links.

Mechanism: Stable link relationships create persistent meaning pathways across documents.

Counterargument: Simple hierarchical linking may suffice for small or static sites.

Conclusion: At scale, link ecosystems become required for semantic stability.

Difference Between Links and Link Ecosystems

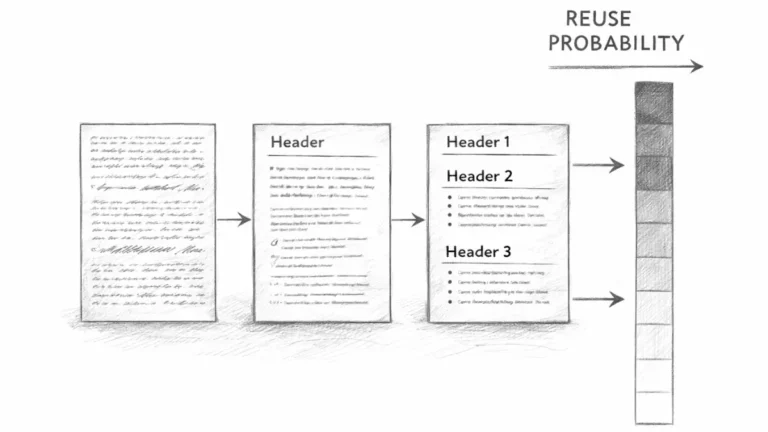

Isolated links traditionally serve a navigational purpose by directing users from one page to another. This model assumes that meaning resides primarily within individual documents, while links merely support movement. As a result, semantic interpretation depends almost entirely on local content rather than on the structure of relationships.

In contrast, a link ecosystem model treats links as part of a broader relational system. Each connection reinforces context, priority, and conceptual alignment across multiple content units. Over time, these reinforced relationships form a semantic link ecosystem that supports interpretation even when individual pages are accessed out of sequence.

In simpler terms, a single link helps someone move between pages, while an ecosystem of links helps systems understand how ideas belong together and why those relationships matter.

From Navigation to Meaning Propagation

Traditional linking logic optimizes for clicks, page depth, and crawl paths. While effective for discovery, this logic does not guarantee that meaning transfers consistently between connected pages. As content volume grows, purely navigational links often fail to signal conceptual relevance.

Meaning-driven linking shifts the focus from movement to interpretation. Links propagate context by consistently connecting related concepts, which allows AI systems to infer how ideas relate across documents. This process forms a semantic connectivity model that prioritizes conceptual alignment over navigational convenience.

Put simply, navigation shows where to go next, while meaning propagation explains how ideas connect and remain understandable across an entire content system.

Structural Properties of Link Ecosystems

Structural properties determine whether a link ecosystem can be interpreted reliably by AI systems or remains a loose collection of connections. In this context, link ecosystem design describes how relational patterns, hierarchy, and distribution rules shape meaning at scale, a principle aligned with structural interpretation research referenced by the W3C. These properties define how context flows between content units and how consistently meaning is reinforced across the network.

Definition: Structural link property: a consistent relational pattern that defines how content units reference and reinforce each other.

Claim: Structural consistency is a prerequisite for semantic interpretation.

Rationale: AI models infer meaning from repeated relational patterns rather than isolated signals.

Mechanism: Link hierarchy and topology encode priority and context flow across connected units.

Counterargument: Flat linking structures reduce implementation complexity in small systems.

Conclusion: Structural rigor improves machine-level understanding in scalable environments.

Principle: Content becomes interpretable in AI-driven environments when its link structures, conceptual definitions, and relational patterns remain stable enough for models to resolve meaning without relying on isolated textual signals.

Link Topology and Hierarchy

Link topology describes the overall shape of connections within a content system and how those connections form paths of interpretation. A well-defined link topology strategy establishes predictable routes through which context travels, allowing AI systems to infer which concepts hold central importance and which serve supportive roles. Hierarchical patterns further clarify how information should be weighted and interpreted.

Link hierarchy logic assigns relative importance through consistent parent–child and peer relationships. When hierarchy remains stable, AI systems can distinguish foundational concepts from derivative ones without relying solely on textual cues. This structural clarity reduces ambiguity and strengthens semantic alignment across large content clusters.

Put simply, topology shows how everything connects, while hierarchy explains which connections matter most and how meaning should flow through them.
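As a rough illustration of how hierarchy can be read from topology, the sketch below computes each page's link distance from a hub page with a breadth-first search. The page names and the `LINKS` mapping are hypothetical, and treating link depth as a proxy for hierarchy level is an assumption made for illustration, not a description of how any particular AI system works.

```python
from collections import deque

# Hypothetical site structure: each page maps to the pages it links to.
LINKS = {
    "hub": ["topic-a", "topic-b"],
    "topic-a": ["detail-a1", "detail-a2"],
    "topic-b": ["detail-b1"],
    "detail-a1": [],
    "detail-a2": [],
    "detail-b1": [],
}


def link_depths(links, root):
    """Return the shortest link distance from root to every reachable page."""
    depths = {root: 0}
    queue = deque([root])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # first visit is the shortest path in BFS
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


depths = link_depths(LINKS, "hub")
```

Pages at depth 1 behave like parent topics and pages at depth 2 like supporting detail. A page that is missing from `depths` cannot be reached from the hub at all, which is usually worth reviewing.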

Relationship Density and Distribution

Relationship density refers to how many meaningful connections exist between related content units. Balanced link relevance distribution ensures that important concepts receive sufficient reinforcement without overwhelming the system with redundant signals. AI systems respond more accurately when relational density reflects conceptual importance rather than arbitrary linking volume.

Semantic density of links emerges when connections consistently reinforce the same conceptual boundaries across multiple contexts. Excessive concentration can blur meaning, while sparse distribution weakens interpretability. Effective ecosystems maintain density levels that support clarity and stable inference.

In straightforward terms, too few links make ideas feel disconnected, while too many links make them noisy. The right balance helps systems understand what truly belongs together.

| Dimension | Description | Semantic Effect |
|---|---|---|
| Topology | Overall shape and routing of link connections | Guides context flow and interpretive paths |
| Hierarchy | Ordered relationships between content units | Signals priority and conceptual importance |
| Density | Volume of meaningful links per concept | Reinforces or dilutes semantic clarity |
| Distribution | Spread of links across the ecosystem | Stabilizes interpretation at scale |

These dimensions work together to ensure that structural properties support clear, machine-readable meaning rather than accidental connectivity.
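Density and distribution can be approximated with simple counts. The sketch below is a minimal example under stated assumptions: the `EDGES` list is hypothetical, inbound link counts stand in for "links per concept", and the standard directed graph density formula stands in for overall connectivity. Neither measure is prescribed by the article.

```python
from collections import Counter

# Hypothetical internal links as (source page, target page) pairs.
EDGES = [
    ("guide", "glossary"),
    ("faq", "glossary"),
    ("case-study", "glossary"),
    ("guide", "faq"),
    ("glossary", "guide"),
]


def inbound_counts(edges):
    """Count how many links point at each page (distribution of reinforcement)."""
    return Counter(target for _source, target in edges)


def graph_density(edges, pages):
    """Directed density: distinct links divided by all possible ordered pairs."""
    n = len(pages)
    if n < 2:
        return 0.0
    return len(set(edges)) / (n * (n - 1))


pages = {page for edge in EDGES for page in edge}
inbound = inbound_counts(EDGES)
density = graph_density(EDGES, pages)
```

Here `glossary` receives most of the reinforcement while `case-study` receives none. That fits the article's point only if the glossary really is the more central concept.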

Semantic Reinforcement Through Linking

Semantic reinforcement explains how links actively shape meaning rather than merely connecting pages. Within link ecosystem architecture, semantic link relationships determine how repeated contextual alignment stabilizes interpretation across documents, a mechanism studied in semantic representation research by the Stanford Natural Language Processing Group. This approach treats links as persistent semantic signals that support interpretation consistency as content scales.

Definition: Semantic reinforcement: the process by which repeated contextual references stabilize meaning across documents.

Claim: Links reinforce semantic meaning when context remains consistent.

Rationale: Repeated contextual cues reduce interpretive ambiguity in large content systems.

Mechanism: Consistent anchor context and target relevance form reinforcement loops across the link ecosystem architecture.

Counterargument: Excessive linking without contextual control can weaken semantic signals.

Conclusion: Effective reinforcement depends on precision and structural discipline, not on link volume.

Contextual Anchoring and Meaning Stability

Contextual anchoring defines how the semantic environment around a link shapes interpretation. When anchors appear within stable conceptual frames, semantic reinforcement through links becomes predictable and machine-interpretable. This predictability allows AI systems to associate linked content with durable meaning boundaries instead of transient associations.

Context-preserving links maintain alignment between anchor intent and destination meaning. Within link ecosystem architecture, this alignment ensures that repeated references reinforce the same interpretation over time. As a result, semantic meaning remains stable even when documents are retrieved independently or out of sequence.

In simpler terms, when links always appear in the same type of context, systems stop guessing and start recognizing what those connections represent.

Example: A semantic link ecosystem with consistent anchor context and stable link relationships allows AI systems to confirm meaning through repeated patterns, increasing the likelihood that these sections will be reused in generative summaries and long-context reasoning.

Meaning Stabilization Effects

Meaning stabilization occurs when links repeatedly confirm the same conceptual relationship across different documents. Meaning stabilization via links allows AI systems to validate interpretation through pattern recognition instead of relying on isolated textual signals. This effect becomes more important as link ecosystem architecture expands and content volume increases.

Semantic continuity links prevent abrupt contextual shifts between related concepts. By reinforcing the same relationships consistently, these links reduce semantic drift and preserve internal coherence. Over time, continuity strengthens the reliability of the semantic network.

Put plainly, stable linking keeps ideas consistent and recognizable, even as the surrounding content grows and evolves.

Link Ecosystems as Interpretation Signals for AI

Link ecosystems function as interpretive infrastructure that guides how models infer meaning, not as ranking shortcuts. In this framing, link ecosystems for AI operate as structural cues that stabilize context across documents, aligning with research on relational inference and representation learning from the Allen Institute for Artificial Intelligence (AI2). This approach positions links as inputs to semantic reasoning rather than signals for ordering results.

Definition: Interpretation signal: a structural cue that helps AI systems infer meaning relationships.

Claim: AI systems treat link ecosystems as interpretation scaffolds.

Rationale: Relational patterns guide semantic inference more reliably than isolated textual features.

Mechanism: Machine-interpretable linking enables controlled context propagation across related content units.

Counterargument: Content-only signals may override link-based inference in narrowly scoped documents.

Conclusion: Links remain critical when content scale increases and interpretation must generalize.

Machine-Interpretable Linking Patterns

Machine-interpretable linking relies on predictable relational patterns that models can parse consistently. When links follow stable placement rules and align with clear conceptual intent, link-driven interpretation becomes feasible without deep reliance on surface text. These patterns reduce ambiguity by signaling how concepts relate across documents.

Consistent patterns also constrain context expansion. By limiting how far meaning travels through links, systems preserve local relevance while maintaining global coherence. This balance allows models to reuse information accurately across varied retrieval scenarios.

In simpler terms, when links behave the same way everywhere, systems learn how to read them and apply that understanding reliably.

Link Graph Interpretation by Models

Link graph interpretation describes how models analyze the structure formed by interconnected content units. Rather than evaluating links individually, systems assess the overall topology to infer importance, proximity, and conceptual clusters. Semantic linkage patterns emerge when connections repeatedly reinforce the same relationships across the graph.

As graphs grow, these patterns help models distinguish foundational concepts from peripheral ones. Stable linkage patterns prevent misinterpretation by anchoring meaning to consistent relational evidence. Over time, the graph itself becomes a source of semantic validation.

Put plainly, models read the shape of the link network to understand how ideas fit together and which relationships carry the most meaning.
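One classic way to read importance from the shape of a link graph is PageRank, shown below as a small power-iteration sketch. It is offered only as a familiar example of graph-level scoring: the article does not say that any generative system uses PageRank, and the page names, damping factor, and iteration count are assumptions.

```python
# Hypothetical link graph; every link target must also appear as a key.
LINKS = {
    "pillar": ["cluster-a", "cluster-b"],
    "cluster-a": ["pillar"],
    "cluster-b": ["pillar"],
    "note": ["pillar"],
}


def pagerank(links, damping=0.85, iterations=50):
    """Approximate PageRank scores by repeated redistribution of rank."""
    pages = list(links)
    n = len(pages)
    rank = {page: 1.0 / n for page in pages}
    for _ in range(iterations):
        # Every page starts each round with the same baseline share.
        new_rank = {page: (1.0 - damping) / n for page in pages}
        for page, targets in links.items():
            if targets:
                share = damping * rank[page] / len(targets)
                for target in targets:
                    new_rank[target] += share
            else:
                # A page with no outgoing links spreads its rank evenly.
                share = damping * rank[page] / n
                for other in pages:
                    new_rank[other] += share
        rank = new_rank
    return rank


rank = pagerank(LINKS)
most_central = max(rank, key=rank.get)
```

In this toy graph the page that every other page links to ends up with the highest score, and the page with no inbound links ends up with the lowest. That matches the foundational versus peripheral distinction described above.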

Internal Link Semantics and Context Flow

Internal links transmit meaning by shaping how context moves between related content units across a site. In this framework, internal link semantics describe the rules that govern contextual transfer and interpretation, a concept aligned with research on discourse structure and coherence from Carnegie Mellon University's Language Technologies Institute. When links follow consistent semantic intent, they enable AI systems to preserve context beyond individual documents.

Definition: Context flow: the controlled transfer of semantic meaning through linked content units.

Claim: Internal links define how context flows between concepts.

Rationale: Context continuity improves interpretability across distributed content.

Mechanism: Semantic link pathways align related conceptual units and constrain meaning locally.

Counterargument: Excessive cross-linking can create noise and weaken signal clarity.

Conclusion: Controlled flow preserves semantic clarity at scale.

Conceptual Navigation vs Physical Navigation

Conceptual navigation focuses on meaning alignment rather than movement between pages. Through conceptual navigation links, internal structures guide AI systems to understand how ideas relate, regardless of the order in which content appears. This approach emphasizes semantic relevance over click efficiency.

Physical navigation, by contrast, optimizes for user movement and crawl accessibility. While effective for discovery, it does not inherently explain why two pages connect. Link-induced context emerges only when navigation choices reflect consistent conceptual intent rather than arbitrary pathways.

In simple terms, physical navigation shows where content lives, while conceptual navigation explains how ideas connect and why those connections matter.

Internal Links as Semantic Signals

Internal links function as semantic signals when they consistently reinforce the same relationships. As signals, they allow AI systems to infer conceptual importance by observing repeated, contextually aligned connections. This signaling effect strengthens interpretation without relying on explicit annotations. The role becomes especially important when internal links support a broader visibility architecture. A practical implementation of this approach is described in this guide to building a generative visibility strategy, which explains how structured content systems enable AI engines to interpret and reuse knowledge across interconnected pages.

Link-supported understanding emerges when these signals remain stable across the site. Each repeated connection confirms prior interpretations and reduces ambiguity. Over time, consistent signaling enables systems to trust the internal logic of the content network.

Put simply, when internal links always point to related ideas in the same way, systems learn to rely on those links to understand meaning accurately.

Link Ecosystem Coherence and Reliability

Coherence functions as a trust signal that determines whether a semantic network remains interpretable over time. In this context, link coherence architecture describes how consistently relationships support the same interpretation across documents, a principle aligned with reliability and consistency standards discussed by the National Institute of Standards and Technology (NIST). When coherence holds, AI systems can rely on relational evidence instead of recalculating meaning for each retrieval.

Definition: Link coherence: the degree to which link relationships consistently support the same semantic interpretation.

Claim: Coherent link ecosystems improve semantic reliability.

Rationale: Inconsistency reduces confidence in interpretation and increases ambiguity.

Mechanism: Relationship-based linking enforces conceptual boundaries through repeated, aligned connections.

Counterargument: Dynamic content may temporarily disrupt coherence during updates.

Conclusion: Long-term coherence outweighs short-term inconsistency in scalable systems.

Relationship-Based Linking Models

Relationship-based linking models define connections according to conceptual roles rather than proximity or convenience. Through relationship-based linking, each connection reflects a stable semantic intent that remains consistent across contexts. This stability allows AI systems to infer meaning from patterns instead of isolated occurrences.

Structural semantic links formalize these relationships by maintaining clear boundaries between concepts. When links consistently reinforce the same roles, models recognize dependable structures that reduce interpretive variance. Over time, these structures support reliable semantic inference even as content volume grows.

In simpler terms, when links always express the same kind of relationship, systems trust those links to mean what they appear to mean.

Coherence Measurement Factors

Coherence measurement focuses on signals that indicate whether link behavior remains stable. Link coherence signals emerge from repeated alignment between anchor context, destination meaning, and relational intent. When these signals remain consistent, AI systems treat the network as reliable.

A semantic reinforcement network amplifies these effects by confirming interpretations across multiple paths. If reinforcement aligns, coherence strengthens; if it diverges, reliability declines. Monitoring these factors helps maintain interpretive trust over time.

Put plainly, coherence becomes measurable when links consistently tell the same semantic story across the entire network.
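One simple proxy for coherence is anchor consistency: for each destination, the share of inbound links that use its most common anchor text. The sketch below assumes a hypothetical list of `(source, anchor, target)` records and is only one possible measure. It captures anchor wording, not the wider context or relational intent the section also mentions.

```python
from collections import Counter, defaultdict

# Hypothetical link records: (source page, anchor text, target page).
LINK_RECORDS = [
    ("guide", "context flow", "/glossary/context-flow"),
    ("faq", "Context Flow", "/glossary/context-flow"),
    ("blog", "click here", "/glossary/context-flow"),
    ("guide", "semantic drift", "/glossary/semantic-drift"),
    ("faq", "semantic drift", "/glossary/semantic-drift"),
]


def anchor_consistency(records):
    """Share of links to each target that use its most common anchor text."""
    anchors = defaultdict(Counter)
    for _source, anchor, target in records:
        # Normalize case and whitespace so trivial variants count together.
        anchors[target][anchor.strip().lower()] += 1
    return {
        target: counts.most_common(1)[0][1] / sum(counts.values())
        for target, counts in anchors.items()
    }


scores = anchor_consistency(LINK_RECORDS)
```

A score of 1.0 means every link to that page uses the same anchor. Lower scores point to targets whose inbound links describe them inconsistently, such as the generic "click here" anchor in this sample.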

Scaling Semantic Networks with Link Ecosystems

As content volume grows, semantic interpretation degrades unless structure scales with it. Internal link ecosystems provide a structural solution by embedding meaning into relationships rather than relying on isolated documents, a challenge extensively analyzed in large-scale knowledge systems research by the Oxford Internet Institute. Within link ecosystem architecture, scaling depends on whether relational logic remains consistent as new content nodes appear.

Definition: Semantic scaling: expansion of content volume without loss of interpretability.

Claim: Link ecosystems enable semantic scalability.

Rationale: Distributed meaning requires structured reinforcement to remain interpretable at scale.

Mechanism: Contextual link networks preserve alignment by repeating stable relational patterns as the link ecosystem architecture expands.

Counterargument: Manual governance becomes complex as content volume increases.

Conclusion: Ecosystem logic reduces scaling risk by encoding meaning directly into link ecosystem architecture.

Contextual Link Networks at Scale

Contextual link networks ensure that meaning propagates predictably as systems grow. Within link ecosystem architecture, these networks repeat the same relational logic across many documents, which prevents semantic fragmentation. As a result, AI systems can infer relevance without recalculating context for every new node.

Semantic link pathways further constrain how meaning travels across the network. By preserving pathway consistency, link ecosystem architecture allows models to assess proximity and conceptual distance even in large systems. This stability supports scalable interpretation without loss of clarity.

In simpler terms, when the same linking logic applies everywhere, large content systems remain understandable instead of becoming chaotic.

Managing Growth Without Semantic Drift

Growth introduces semantic risk when new content diverges from established meaning patterns. Meaning-aware linking addresses this risk by enforcing consistent relational intent across link ecosystem architecture. Each new link confirms existing interpretations instead of weakening them.

Link coherence architecture anchors expansion to stable semantic structures. When coherence holds, additional content strengthens interpretation rather than distorting it. Over time, link ecosystem architecture absorbs growth without semantic decay.

Put plainly, controlled linking keeps meaning intact even as the system grows much larger.
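A governance check for drift could look like the sketch below, which compares each proposed link against the relation that the same kind of connection has expressed so far. The registry, the page types, and the relation labels are all hypothetical. A real system would need its own vocabulary and a way to assign these labels.

```python
# Hypothetical registry: the relation each (source type, target type) pair
# has consistently expressed in existing content.
ESTABLISHED = {
    ("article", "glossary"): "defines",
    ("article", "pillar"): "belongs-to",
    ("pillar", "article"): "expands",
}


def check_new_link(source_type, target_type, relation, established=ESTABLISHED):
    """Classify a proposed link as consistent, drift, or a new pattern."""
    expected = established.get((source_type, target_type))
    if expected is None:
        return "new-pattern"  # no precedent yet; needs an editorial decision
    return "consistent" if relation == expected else "drift"


results = [
    check_new_link("article", "glossary", "defines"),
    check_new_link("article", "glossary", "promotes"),
    check_new_link("faq", "glossary", "defines"),
]
```

Links flagged as `drift` contradict an established pattern and are the ones most likely to weaken existing interpretations. Links flagged as `new-pattern` are not errors, but each one adds a relationship that later content will need to repeat consistently.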

Implications for Generative and AI-Driven Systems

Generative systems depend on structured context to extract, combine, and reuse meaning across sources. In this setting, a semantic link ecosystem connects content units in ways that support inference and synthesis, a dependency consistently observed in generative modeling research summarized by DeepMind Research. When relational structure remains stable, AI systems interpret linked content as a coherent semantic field rather than fragmented text.

Definition: Generative reuse: the ability of AI systems to extract and recombine content meaning.

Claim: Link ecosystems improve generative reuse quality.

Rationale: AI systems rely on relational context to synthesize information accurately.

Mechanism: Semantic link relationships support stable inference by preserving contextual alignment across sources.

Counterargument: Poor content quality limits reuse even when links are well structured.

Conclusion: Links amplify value only when meaning remains clear and consistent.

Generative Interpretation and Context Retention

Generative interpretation depends on how well systems retain context across multiple retrieval steps. Semantic link relationships help models recognize which concepts belong together during synthesis, reducing the risk of partial or distorted interpretations. When these relationships remain consistent, generative outputs reflect integrated understanding rather than stitched fragments.

Link-induced context further stabilizes interpretation by constraining how far meaning travels between content units. By observing repeated, aligned connections, models retain relevant context while discarding unrelated signals. This behavior improves coherence in generated summaries and explanations.

In simple terms, stable linking helps systems remember what information belongs together when they combine ideas from different places.

Long-Term Semantic Network Strength

Long-term performance of generative systems depends on whether semantic structures remain reliable over time. A semantic reinforcement network strengthens interpretation by confirming meaning through multiple, consistent pathways. This reinforcement allows models to reuse information confidently even as content updates occur.

The link ecosystem model supports durability by embedding meaning into relationships rather than transient text patterns. As systems evolve, stable link structures continue to signal relevance and alignment. This durability ensures that generative reuse remains accurate across long time horizons.

Put simply, strong link networks help AI systems keep understanding intact long after the content landscape changes.

Microcase: Enterprise Semantic Network Evolution

Large enterprise knowledge systems often reveal how structural changes affect interpretation at scale. In this context, link ecosystem design illustrates a transition from page-level linking toward integrated semantic structures, a shift aligned with organizational knowledge management findings reported by the OECD. This microcase highlights how structural alignment alters AI interpretation behavior across large content clusters.

An enterprise documentation platform migrated from isolated internal links to an ecosystem-based structure that grouped content by conceptual roles rather than page proximity. After the transition, AI-assisted search tools showed higher consistency in concept attribution and fewer contradictory summaries across related topics. Teams observed reduced semantic ambiguity in clusters exceeding several thousand documents. Interpretation stability improved even when content updates occurred asynchronously.

Claim: Enterprise systems benefit measurably from link ecosystems.

Rationale: Large-scale content requires structured semantic reinforcement to remain interpretable.

Mechanism: Ecosystem-based linking stabilizes interpretation by embedding meaning into consistent relationships.

Counterargument: Initial implementation cost is high and requires governance discipline.

Conclusion: Long-term semantic reliability offsets setup cost in enterprise environments.

Checklist:

- Are link relationships defined by consistent semantic intent rather than navigation convenience?

- Do H2–H4 sections maintain stable conceptual boundaries across the article?

- Does each paragraph express a single, interpretable reasoning unit?

- Are link patterns reused to reinforce the same semantic relationships?

- Is contextual flow controlled to prevent semantic drift?

- Does the structure allow AI systems to interpret meaning progressively and reliably?

Interpretive Structure of Semantic Link Ecosystems

- Relational hierarchy signaling. The ordered H2→H3→H4 structure establishes clear semantic layers that allow AI systems to distinguish foundational concepts from derivative link relationships.

- Context propagation boundaries. Section-level segmentation defines how semantic context flows through linked concepts without uncontrolled expansion across unrelated units.

- Definition-driven graph stabilization. Immediate local definitions anchor abstract link concepts into explicit nodes, enabling models to form stable internal semantic graphs.

- Reinforcement pattern detection. Repeated structural alignment between headings, explanations, and link semantics allows generative systems to validate meaning through pattern consistency.

- Coherence preservation across depth. Maintaining uniform structural logic across sections supports long-context interpretation and prevents semantic drift in large-scale link ecosystems.

Together, these structural signals explain how generative systems interpret link ecosystems as coherent semantic architectures rather than collections of isolated connections.

FAQ: Link Ecosystem Architecture

What is a link ecosystem?

A link ecosystem is a structured network of semantic relationships where links preserve context, reinforce meaning, and support machine interpretation across content units.

How does a link ecosystem differ from internal linking?

Internal linking connects pages, while a link ecosystem encodes consistent semantic relationships that allow AI systems to infer meaning beyond individual documents.

Why are link ecosystems important for AI systems?

AI systems rely on relational structure to interpret context. Link ecosystems provide stable semantic signals that improve understanding, reuse, and inference at scale.

How do link ecosystems support semantic interpretation?

They reinforce meaning through repeated, context-aligned connections, allowing models to validate interpretation through structural consistency rather than isolated signals.

What role does coherence play in a link ecosystem?

Coherence ensures that link relationships consistently support the same interpretation, increasing trust and reliability in semantic inference.

How do link ecosystems affect generative AI reuse?

Generative systems reuse content more accurately when semantic relationships remain stable, allowing synthesis without contextual distortion.

Can link ecosystems scale with large content systems?

Yes. When relational logic remains consistent, link ecosystems preserve interpretability even as content volume grows significantly.

What causes semantic drift in large link networks?

Semantic drift occurs when new links introduce inconsistent relational intent, weakening established meaning patterns across the network.

How do AI systems interpret link graphs?

AI systems analyze the topology, density, and consistency of link graphs to infer conceptual importance and relationship strength.

Glossary: Key Terms in Link Ecosystem Architecture

This glossary defines the core terminology used throughout the article to ensure consistent interpretation of link ecosystems by both human readers and AI systems.

Link Ecosystem

A structured network of semantic relationships where links preserve context, reinforce meaning, and support interpretation across connected content units.

Semantic Link Relationship

A context-aligned connection between content units that consistently expresses the same conceptual meaning across multiple occurrences.

Link Ecosystem Architecture

The structural logic governing how link relationships are organized to maintain semantic coherence, interpretability, and scalability.

Context Flow

The controlled transfer of semantic meaning through linked content units, enabling consistent interpretation across documents.

Semantic Reinforcement

The stabilization of meaning through repeated, context-consistent link relationships across a content network.

Link Coherence

The degree to which link relationships consistently support the same semantic interpretation across a network.

Interpretation Signal

A structural cue derived from link patterns that helps AI systems infer relationships between concepts.

Semantic Scaling

The expansion of content volume without loss of interpretability due to stable relational structures.

Link Graph

The network formed by content units and their link relationships, used by AI systems to infer structure and importance.

Semantic Drift

The gradual loss of consistent meaning caused by misaligned or contradictory link relationships over time.