Last Updated on March 22, 2026 by PostUpgrade

Facts, Citations, and Sources: The New SEO Currency

AI does not rank your content as a page—it decomposes it into atomic facts, validates each against sources, and only then decides whether your content deserves visibility.

TL;DR: Modern search systems no longer rely on links alone; they also extract and validate facts as independent ranking signals, so unclear structure or weak sourcing reduces visibility. As a result, even correct content gets ignored if AI cannot reliably interpret, verify, and reuse its claims. Content that structures its facts, aligns them with credible sources, and stays consistent becomes machine-readable and gains stable ranking and reuse potential.

If your facts cannot be extracted and validated instantly, AI will skip your content regardless of its quality.

Modern search systems increasingly rely on facts as ranking signals to evaluate content reliability, relevance, and long-term informational value. This approach reflects a structural shift in how ranking mechanisms interpret meaning, moving away from surface indicators toward verifiable and traceable knowledge units.

As facts gain importance as ranking signals, citations and sources become integral components of content evaluation rather than optional references. Ranking systems now assess how clearly factual statements can be extracted, validated, and connected to authoritative external evidence. This change alters both publishing standards and the technical requirements for large-scale content systems.

This systemic transformation aligns with the broader framework of Generative Engine Optimization (GEO), which defines how factual structure, citation stability, and semantic clarity increase inclusion probability within AI-driven search systems.

The growing reliance on factual grounding directly affects enterprise publishing models. Content architectures must support consistent terminology, explicit sourcing, and stable structural patterns to remain interpretable at scale. Without these properties, even accurate material risks reduced visibility in environments governed by automated interpretation.

The sections that follow examine how facts, citations, and sources function as structural inputs in modern ranking logic. Each block isolates a specific mechanism and explains how factual integrity translates into measurable evaluation outcomes.

Facts as Ranking Signals in Modern Search Systems

Modern search systems increasingly shift from link-centric evaluation toward fact-centric assessment models. In this context, facts as ranking signals determine reliability and relevance with higher precision. Consequently, ranking systems require evaluation inputs that they can interpret consistently across large content corpora, as reflected in information quality frameworks developed by the National Institute of Standards and Technology (NIST). At the same time, this section limits its scope strictly to ranking assessment logic rather than to optimization tactics or publishing workflows.

Definition: AI understanding is the ability of ranking and generative systems to interpret factual meaning, source relationships, and structural boundaries in a way that enables consistent evaluation, validation, and reuse of content across search environments.

Claim: Facts increasingly function as first-order ranking signals in modern search systems.

Rationale: Ranking systems require stable and verifiable inputs to reduce uncertainty during content evaluation.

Mechanism: Ranking pipelines extract factual statements, validate them against known references, and then assign weight based on consistency and reliability.

Counterargument: In highly competitive domains, link authority can still influence ranking outcomes more strongly than factual signals alone.

Conclusion: Facts now operate alongside links as parallel evaluative signals within modern ranking logic.
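
The extract, validate, and weight steps named in the mechanism can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not a description of how any real search engine works: the `REFERENCE_FACTS` table, the `extract_claims` pattern for "The X is Y" sentences, and the weights of 1.0, 0.0, and -1.0 are all hypothetical.

```python
import re

# Hypothetical reference store: claim subject -> accepted value.
REFERENCE_FACTS = {
    "boiling point of water": "100 c",
    "speed of light": "299792458 m/s",
}

def extract_claims(text):
    """Split text into sentences and keep those shaped like 'The X is Y.'"""
    claims = []
    for sentence in re.split(r"(?<=[.!?])\s+", text.strip()):
        match = re.match(r"(?i)^the (.+?) is (.+?)[.!?]?$", sentence)
        if match:
            claims.append((match.group(1).lower(), match.group(2).lower()))
    return claims

def score_claims(text):
    """Return (subject, status, weight) for every extracted claim."""
    results = []
    for subject, value in extract_claims(text):
        expected = REFERENCE_FACTS.get(subject)
        if expected is None:
            # No reference available: the claim cannot be validated.
            results.append((subject, "unverifiable", 0.0))
        elif expected == value:
            results.append((subject, "consistent", 1.0))
        else:
            results.append((subject, "contradicted", -1.0))
    return results

sample = (
    "The boiling point of water is 100 C. "
    "The speed of light is 300 m/s. "
    "The mood is great. Nice weather today."
)
scored = score_claims(sample)
```

In this sketch, a sentence that does not fit the extractable pattern ("Nice weather today.") never reaches validation at all, which mirrors the article's point that claims a system cannot extract cannot be scored.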

From Link Authority to Factual Authority

Early ranking models treated links as primary indicators of trust and relevance. However, this approach assumed that references between pages reflected collective judgment, while in practice it often rewarded popularity over accuracy. As a result, facts in search ranking gained importance because they allow systems to assess informational value directly rather than infer it indirectly.

At the same time, factual ranking signals introduce a distinct evaluation layer that centers on verifiability and coherence. Instead of relying on network effects, ranking systems examine whether statements align with established data and maintain internal consistency. Consequently, factual authority emerges from demonstrable correctness rather than from amplification.

In practical terms, links show where attention accumulates, whereas facts show whether information withstands validation. Therefore, modern ranking systems increasingly treat these signals as complementary inputs with clearly separated evaluative roles.

Evidence as an Evaluative Input

Evidence enables ranking systems to compare factual claims across documents with higher confidence. In evidence-based ranking models, systems prioritize statements that connect to documented sources and consistent data patterns. As a result, this approach reduces ambiguity and supports predictable interpretation across diverse content sets.

Moreover, factual authority signals strengthen when evidence aligns with institutional research, standardized datasets, or recognized technical benchmarks. Ranking systems actively weigh these signals to distinguish substantiated claims from unsupported assertions. Over time, this process stabilizes ranking behavior by favoring content with measurable informational grounding.

At a practical level, evidence allows systems to evaluate not only what a text states but also whether the information remains trustworthy. Thus, content that consistently aligns with validated frameworks gains structural priority during ranking decisions.

| Evaluation Dimension | Link-Based Logic | Fact-Based Logic |
|---|---|---|
| Primary signal source | External references | Verifiable statements |
| Trust inference | Network-driven | Evidence-driven |
| Stability over time | Sensitive to link changes | Stable with consistent facts |
| Error detection | Limited | Direct validation processes |

Overall, the comparison illustrates how fact-based logic introduces clearer validation paths. As a consequence, ranking systems improve consistency and lower interpretive risk when they operate at scale.

How Search Systems Assess Sources and Citations

Search systems treat sources as a ranking factor within a formal assessment layer that operates alongside factual extraction. Consequently, systems distinguish between the mere presence of a citation and the credibility of the cited origin, because credibility determines whether a factual claim can sustain long-term evaluation reliability, as documented by research from the Oxford Internet Institute. At the same time, this section addresses assessment logic rather than editorial practices or optimization techniques.

Definition: A source is an external origin of verifiable information that ranking systems can evaluate for reliability, authority, and consistency over time.

Claim: Sources operate as independent ranking factors when associated with factual claims.

Rationale: Facts require external validation to maintain ranking reliability across changing information environments.

Mechanism: Systems assess source credibility through institutional authority, historical accuracy, and cross-reference consistency.

Counterargument: Novel domains may lack established authoritative sources, which limits immediate evaluation depth.

Conclusion: Source evaluation strengthens factual ranking reliability by anchoring claims to verifiable origins.

Citations Impact on Ranking Stability

Ranking systems do not treat all citations equally, because citations affect ranking stability only when they support verifiable claims. Accordingly, systems evaluate whether a cited source consistently reinforces factual statements across multiple documents and contexts. When citations meet this condition, they reduce interpretive uncertainty and support predictable ranking behavior.

In addition, source-backed ranking emerges when citations connect claims to externally validated knowledge rather than to self-referential material. Systems compare citation patterns over time to identify whether sources continue to align with established data and institutional standards. As a result, stable citation relationships contribute to long-term ranking resilience rather than short-term visibility shifts.

In simple terms, citations help rankings only when they point to sources that remain trustworthy and consistent. A citation without credibility adds little value, while a reliable citation stabilizes evaluation outcomes.

Source Credibility Signals

Ranking systems derive source credibility ranking from multiple observable indicators that together define trustworthiness. These indicators extend beyond brand recognition and instead focus on measurable attributes such as institutional grounding and publication discipline. By combining these signals, systems determine whether a source can reliably support factual claims.

Over time, a source's reliability weighting strengthens when it demonstrates accuracy across repeated references and diverse contexts. Systems track correction rates, alignment with peer-reviewed material, and consistency across datasets. Consequently, sources that maintain these properties gain higher weighting during factual evaluation.

Put plainly, credibility depends on how often a source proves correct and consistent. Systems reward sources that repeatedly confirm facts and penalize those that introduce instability.

- Institutional affiliation

- Publication standards

- Historical accuracy

- Peer recognition

Together, these criteria form a coherent evaluation framework that allows ranking systems to differentiate durable sources from transient or unreliable ones.
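
One way to picture how the four criteria could combine is a weighted average. The sketch below is an assumption-laden illustration: the article names the criteria but not their relative importance, so the `WEIGHTS` values, the `SourceProfile` type, and the 0-to-1 scale for each field are hypothetical.

```python
from dataclasses import dataclass

# Hypothetical weights that sum to 1.0; real systems do not publish such values.
WEIGHTS = {
    "institutional_affiliation": 0.3,
    "publication_standards": 0.2,
    "historical_accuracy": 0.4,
    "peer_recognition": 0.1,
}

@dataclass
class SourceProfile:
    # Each field is a score between 0.0 (absent) and 1.0 (strong).
    institutional_affiliation: float
    publication_standards: float
    historical_accuracy: float
    peer_recognition: float

def credibility_score(profile):
    """Weighted average of the four criteria, rounded to three decimals."""
    total = sum(WEIGHTS[name] * getattr(profile, name) for name in WEIGHTS)
    return round(total, 3)

established = SourceProfile(1.0, 1.0, 1.0, 1.0)
mixed = SourceProfile(0.5, 0.5, 1.0, 0.0)
```

Giving `historical_accuracy` the largest weight reflects the article's emphasis on sources that repeatedly prove correct; a different weighting would be just as defensible for illustration purposes.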

Credibility Signals and Trust Indicators in Content

Search systems treat credibility signals in search as measurable constructs that directly affect how they rank and reuse information. Accordingly, systems infer trust from observable properties such as structural consistency, sourcing discipline, and factual alignment, as described in research on language interpretation and trust modeling by the Stanford Natural Language Institute. At the same time, this section focuses on credibility inference logic rather than on stylistic quality or audience persuasion.

Definition: Credibility signals are measurable indicators that reflect content trustworthiness and reliability across repeated evaluations.

Claim: Credibility signals directly influence ranking outcomes.

Rationale: Search systems prioritize low-risk information environments to reduce propagation of incorrect or unstable knowledge.

Mechanism: Consistency, sourcing, and factual alignment generate trust indicators that systems can measure and compare.

Counterargument: High credibility does not guarantee topical relevance in every retrieval context.

Conclusion: Credibility is a prerequisite for ranking inclusion, not a substitute for relevance.

Trust Indicators in Content Evaluation

Ranking systems infer trust indicators in content by examining how consistently a document presents verifiable information. When statements align internally and maintain coherence across sections, systems register lower interpretive risk. Consequently, consistency acts as a baseline requirement for reliable evaluation.

In addition, factual credibility signals emerge when content demonstrates alignment with external references and avoids contradiction over time. Systems monitor whether factual claims remain stable across updates and across related documents. As a result, content that preserves factual integrity gains higher trust weighting during ranking decisions.

In everyday terms, systems trust content that says the same thing in the same way and continues to match known facts. When information stays consistent and verifiable, systems treat it as safer to surface.

Authority Through Sources

Authority through sources forms when content connects its claims to origins that demonstrate reliability beyond a single publication. Systems evaluate whether cited sources maintain accuracy across multiple contexts and whether they align with recognized research or institutional outputs. Therefore, authority emerges from repeated confirmation rather than from isolated references.

At the same time, content credibility factors extend beyond the source itself to include how content integrates that source. Systems examine whether claims reflect the source accurately and whether citations support the stated meaning without distortion. Over time, this alignment strengthens the authority signal attached to the content.

Put simply, sources matter not only because they exist but because they remain reliable and correctly represented. Content gains authority when it uses sources precisely and consistently, not when it merely lists them.

Principle: Content gains durable visibility in AI-driven ranking systems when factual claims, source references, and credibility signals remain structurally stable enough to be interpreted without contextual ambiguity.

Editorial Verification and Fact-Checked Content

Search systems increasingly treat fact-checked content as a structural input that shapes evaluation stability. Accordingly, editorial verification complements algorithmic validation by reducing uncertainty before content enters automated pipelines, a distinction supported by research on information reliability and system confidence from MIT CSAIL. At the same time, this section focuses on how verification affects ranking behavior rather than on newsroom workflows or compliance practices.

Definition: Editorial verification is the process of validating factual accuracy before publication through documented checks, source confirmation, and internal consistency review.

Claim: Fact-checked content receives higher ranking stability.

Rationale: Verified content reduces correction and retraction risk, which lowers evaluation volatility.

Mechanism: Verification flags increase confidence scores inside content evaluation pipelines and persist across re-indexing cycles.

Counterargument: Verification processes vary across publishers, which introduces uneven signal strength.

Conclusion: Verification enhances ranking consistency by lowering systemic uncertainty.

Evidence-Driven Content Models

Evidence-driven content relies on explicit confirmation of claims before publication rather than on post hoc correction. As a result, systems encounter fewer contradictions when they reassess documents over time. This approach strengthens structural predictability because verified claims maintain alignment with referenced materials.

At the same time, source-supported content reinforces this model by anchoring each factual statement to a confirmable origin. Systems track whether sources remain consistent across updates and related pages. Consequently, content that preserves evidence alignment retains stable evaluation outcomes during ranking recalculations.

Put simply, evidence-driven models help systems encounter fewer surprises. When facts remain verified and supported, rankings change less often and with clearer justification.

Accuracy and Consistency Signals

Accuracy signals in content emerge when statements remain correct across revisions and contexts. Systems detect these signals by comparing current claims against historical versions and related documents. Therefore, accuracy contributes directly to evaluation confidence rather than acting as a secondary quality marker.

In addition, factual consistency ranking reflects whether content preserves meaning across sections without contradiction. Systems penalize documents that drift semantically, even when individual statements appear correct. As a result, consistent factual alignment strengthens long-term ranking stability.

In practice, systems favor content that stays accurate and says the same thing each time. Consistency reduces interpretive cost and supports predictable ranking behavior.
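
Comparing current claims against a historical version can be sketched as a simple diff over claim sets. The example assumes claims have already been reduced to subject and value pairs; the `detect_drift` and `consistency_ratio` helpers and the sample data are hypothetical, and real systems would need far more robust claim matching.

```python
def detect_drift(previous, current):
    """Classify each claim subject by comparing two revisions (subject -> value)."""
    report = {"unchanged": [], "changed": [], "added": [], "removed": []}
    for subject in sorted(previous.keys() | current.keys()):
        if subject not in current:
            report["removed"].append(subject)
        elif subject not in previous:
            report["added"].append(subject)
        elif previous[subject] == current[subject]:
            report["unchanged"].append(subject)
        else:
            report["changed"].append(subject)
    return report

def consistency_ratio(report):
    """Share of previously stated claims that survived unchanged."""
    prior = len(report["unchanged"]) + len(report["changed"]) + len(report["removed"])
    return len(report["unchanged"]) / prior if prior else 1.0

# Illustrative revisions of the same page.
revision_1 = {"founded": "1998", "employees": "120", "hq": "Berlin"}
revision_2 = {"founded": "1998", "employees": "150", "ceo": "A. Example"}
drift = detect_drift(revision_1, revision_2)
```

Here one of three earlier claims survives unchanged, so the ratio is one third. A legitimate update (such as a new employee count) and an error look identical to this diff, which is why the article pairs consistency with external validation.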

An enterprise publisher introduced mandatory source validation across all editorial outputs. Over the following twelve months, ranking volatility decreased as correction frequency declined. Systems reassessed the content less often, which reduced visibility fluctuations. This pattern illustrates how verification translates into measurable ranking stability.

Example: A page that separates factual claims, verification context, and source references into stable structural blocks allows AI systems to confirm accuracy more efficiently, increasing the likelihood of consistent citation and reuse in generated responses.

Information Reliability and Reference Quality

Search systems treat information reliability signals as longitudinal metrics that reflect how content performs across repeated evaluations. Accordingly, reliability depends on sustained factual accuracy and reference discipline over time, a perspective grounded in research on information validation and citation integrity published in the ACM Digital Library. At the same time, this section limits its scope to assessment logic rather than to publishing cadence or distribution strategy.

Definition: Information reliability reflects the long-term accuracy and consistency of published content as measured across revisions, references, and reuse contexts.

Claim: Reliability accumulates over time through consistent factual accuracy.

Rationale: Systems track historical correctness to reduce future evaluation uncertainty.

Mechanism: Past validation outcomes influence future weighting during reassessment cycles.

Counterargument: New publishers lack historical data, which limits early reliability signals.

Conclusion: Reliability compounds with consistency and strengthens evaluation confidence over time.
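
The idea that past validation outcomes shape future weighting can be illustrated with a smoothed pass rate. This is a sketch under assumptions: the `reliability` function and its prior of one pass and one failure are hypothetical choices for the example, not a documented ranking formula.

```python
def reliability(passes, failures, prior_passes=1, prior_failures=1):
    """Smoothed share of validation checks passed.

    The prior keeps a publisher with no history at a neutral 0.5 instead of
    an undefined or extreme value.
    """
    total = passes + failures + prior_passes + prior_failures
    return (passes + prior_passes) / total

def reliability_history(outcomes):
    """Reliability after each outcome in a sequence of True/False validation results."""
    passes = failures = 0
    history = []
    for ok in outcomes:
        if ok:
            passes += 1
        else:
            failures += 1
        history.append(reliability(passes, failures))
    return history

new_publisher = reliability(0, 0)
consistent_publisher = reliability(8, 0)
history = reliability_history([True, True, False])
```

A new publisher starts at 0.5 in this sketch, which matches the counterargument above: without historical data, the signal is neutral rather than negative, and it moves only as validation outcomes accumulate.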

Reference Quality Evaluation

Reference quality evaluation determines whether cited materials provide durable support for factual claims. Systems assess how references behave across updates, documents, and related topics, thereby identifying sources that maintain accuracy rather than fluctuate. Consequently, references that remain stable across contexts contribute positively to long-term evaluation.

At the same time, information reliability signals intensify when references demonstrate alignment with recognized standards, peer-reviewed research, or institutional datasets. Systems compare reference behavior over time to detect corrections, retractions, or inconsistencies. As a result, content that relies on stable references receives higher confidence during ranking reassessments.

In practical terms, strong references keep content reliable across months and years. When sources remain accurate and consistent, systems trust the content more with each evaluation pass.

Verifiable Information Ranking

Verifiable information ranking prioritizes claims that systems can confirm against durable references. When content presents statements that remain checkable across time, systems reduce interpretive cost and increase confidence. Therefore, verifiability directly supports predictable ranking outcomes.

In addition, ranking based on accuracy reflects how closely current claims match validated historical records. Systems compare present assertions with prior confirmations and related documents to detect drift. Consequently, accurate content that preserves meaning across updates gains stable positioning during ranking recalculations.

Simply stated, systems favor information they can verify again and again. Accuracy that holds over time becomes a reliable basis for ranking decisions.

| Reference Type | Validation Difficulty | Reliability Weight |
|---|---|---|
| Peer-reviewed research | High | High |
| Institutional reports | Medium | Medium–High |
| Industry analyses | Medium | Medium |
| Unverified publications | Low | Low |

Overall, reference quality defines how reliably systems can reassess content in the future. As reference stability increases, information reliability strengthens and ranking behavior becomes more predictable.

Facts as Ranking Signals in Modern Search Systems

Modern search systems increasingly rely on facts as ranking signals to evaluate content reliability and relevance at scale. As ranking pipelines process growing volumes of information, facts as ranking signals provide stable evaluation inputs that systems can interpret consistently across domains, a shift reflected in information quality models described by the National Institute of Standards and Technology (NIST). Therefore, facts as ranking signals define assessment logic rather than optimization tactics or editorial preferences.

Definition: Facts as ranking signals are discrete, verifiable informational units that ranking systems use to assess content reliability and relevance independently of link structures.

Claim: Facts increasingly function as first-order ranking signals in modern search systems.

Rationale: Ranking systems require stable and verifiable inputs, and facts as ranking signals reduce uncertainty during large-scale evaluation.

Mechanism: Ranking pipelines extract factual statements, validate them, and weight them as independent units, allowing facts as ranking signals to operate consistently across contexts.

Counterargument: In highly competitive domains, link authority can still influence outcomes more strongly than facts as ranking signals alone.

Conclusion: Facts as ranking signals now operate alongside links as parallel evaluative inputs.

From Link Authority to Factual Authority

Early ranking models treated links as primary indicators of trust and relevance. However, this approach assumed that popularity reflected correctness, which often proved unreliable. As a result, facts as ranking signals gained importance because they allow systems to assess informational value directly instead of inferring it through network behavior.

At the same time, factual ranking signals introduce an evaluation layer that prioritizes verifiability and internal coherence. Instead of amplifying pages through references alone, ranking systems analyze whether statements align with established data. Consequently, facts as ranking signals shift authority from connectivity to correctness.

In practical terms, links show where attention flows, whereas facts as ranking signals show whether information withstands validation. Therefore, modern systems treat both signals as complementary but structurally distinct.

Evidence as an Evaluative Input

Evidence enables ranking systems to operationalize facts as ranking signals across large document sets. In evidence-based ranking models, systems prioritize claims that connect to documented sources and consistent data patterns. As a result, facts as ranking signals gain measurable weight during evaluation cycles.

Moreover, factual authority signals strengthen when evidence aligns with institutional research, standardized datasets, or recognized benchmarks. Ranking systems weigh these alignments to determine how effectively facts as ranking signals distinguish substantiated claims from unsupported assertions. Over time, this process stabilizes ranking behavior.

Simply stated, evidence allows systems to confirm that the facts they use as ranking signals remain correct and reusable. When validation holds across time, ranking outcomes become more predictable.

| Evaluation Dimension | Link-Based Logic | Fact-Based Logic |
|---|---|---|
| Primary signal source | External references | Facts as ranking signals |
| Trust inference | Network-driven | Evidence-driven |
| Stability over time | Sensitive to link changes | Stable with consistent facts |
| Error detection | Limited | Explicit factual validation |

Overall, the comparison demonstrates that facts as ranking signals introduce clearer validation paths. As a consequence, ranking systems improve consistency and reduce interpretive risk when they operate at scale.

Ranking Influence of Accuracy and Evidence

Search systems increasingly adjust ranking priority based on measurable accuracy, which reshapes how they weigh evidence against sheer content volume. Accordingly, the ranking influence of sources emerges when systems favor correctness and validation over scale, a pattern documented in research on language technologies and evaluation frameworks by the Carnegie Mellon University Language Technologies Institute (LTI). This section concentrates on ranking logic that elevates accurate information rather than on production speed or distribution reach.

Definition: Accuracy influence refers to ranking adjustments based on factual correctness as measured through validation outcomes and consistency checks.

Claim: Accurate content receives preferential ranking treatment.

Rationale: Accuracy minimizes corrective system overhead and reduces reassessment frequency.

Mechanism: Accuracy metrics feed into relevance scoring and persist across ranking recalculations.

Counterargument: Accuracy alone does not ensure usefulness for every query context.

Conclusion: Accuracy amplifies relevance but does not replace it.

Factual Relevance Signals

Ranking systems derive factual relevance signals by examining how closely claims align with validated information while still addressing the query context. When content maintains factual correctness and topical alignment, systems register lower interpretive risk. Consequently, relevance strengthens when facts remain accurate and directly applicable.

At the same time, the ranking influence of sources becomes visible when systems compare how different origins support the same claims. Sources that consistently reinforce correct statements increase confidence in relevance scoring. Therefore, factual relevance grows not only from correctness but also from the reliability of the sources that underpin those facts.

In plain terms, systems prefer content that answers the question correctly and comes from sources that have proven reliable. When both conditions hold, relevance signals become stronger and more stable.

Evidence as Ranking Driver

Evidence operates as a ranking driver when systems use it to differentiate accurate claims from merely plausible ones. In this role, evidence reflects how well documented information supports factual assertions across contexts. As a result, systems assign higher weight to content that demonstrates clear evidence alignment.

Furthermore, credibility becomes a ranking driver when evidence consistently confirms claims over time. Systems track whether evidence remains valid across updates and related documents. Consequently, content supported by durable evidence gains ranking advantages that persist beyond individual evaluation cycles.

Put simply, evidence pushes accurate content upward when it repeatedly confirms the same facts. Credibility grows through consistent validation, which allows systems to rank information with greater confidence.

Checklist:

- Are factual claims clearly separated from interpretation or implication?

- Do sources appear in close structural proximity to the claims they support?

- Are credibility and verification signals grouped into consistent sections?

- Does each paragraph represent one complete factual or evaluative unit?

- Is terminology reused consistently without semantic drift?

- Does the structure allow AI systems to validate facts independently of links?
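
The proximity item in the checklist can be turned into a small lint over a page's blocks. The sketch assumes a page has already been split into labeled blocks of kind "claim", "source", or "interpretation"; that labeling scheme, the `claims_missing_sources` helper, and the `max_distance` parameter are hypothetical.

```python
def claims_missing_sources(blocks, max_distance=1):
    """Return indexes of 'claim' blocks with no 'source' block in the
    next `max_distance` blocks."""
    missing = []
    for i, (kind, _text) in enumerate(blocks):
        if kind != "claim":
            continue
        window = blocks[i + 1 : i + 1 + max_distance]
        if not any(k == "source" for k, _ in window):
            missing.append(i)
    return missing

# Illustrative page outline: (kind, text) pairs in reading order.
page = [
    ("claim", "Correction frequency declined after source validation."),
    ("source", "Internal editorial audit, twelve-month window."),
    ("interpretation", "Verification appears to stabilize rankings."),
    ("claim", "Visibility remained consistent despite lower link growth."),
    ("interpretation", "Validated information may outweigh link volume."),
]
unsupported = claims_missing_sources(page)
```

The second claim is flagged because an interpretation, not a source, follows it directly. Raising `max_distance` loosens the rule for layouts that group sources at the end of a section.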

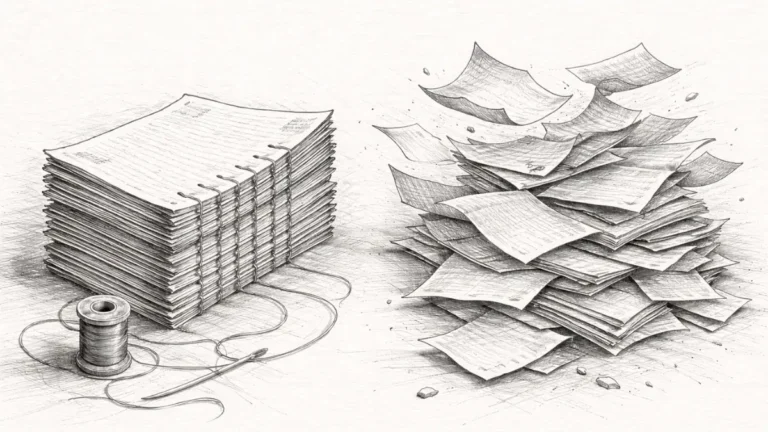

From Links to Facts: Structural Ranking Transformation

Search infrastructures increasingly implement facts-over-links ranking as part of a broader architectural evolution in evaluation hierarchy. As systems scale automated interpretation, they reduce reliance on indirect trust signals and instead prioritize validated information units, a shift aligned with analysis of digital information systems published by the Organisation for Economic Co-operation and Development (OECD). This section addresses structural ranking change rather than tactical adjustments or transitional optimization practices.

Definition: Structural ranking transformation is a systemic change in evaluation hierarchy where ranking logic reorders primary signals based on reliability, validation, and interpretability.

Claim: Ranking systems are structurally shifting from links to facts.

Rationale: Links act as indirect trust proxies, while facts provide direct evaluable inputs.

Mechanism: Systems increasingly prioritize validated statements as core ranking units.

Counterargument: Links still signal discovery pathways and content connectivity.

Conclusion: Facts now anchor ranking logic while links support navigation.

Sources Over Backlinks

Ranking systems increasingly privilege sources over backlinks when evaluating informational reliability. While backlinks once indicated endorsement through network behavior, systems now examine whether sources consistently support validated claims across documents. As a result, information quality ranking improves when content references origins that demonstrate long-term accuracy rather than transient popularity.

At the same time, this shift does not eliminate links entirely but repositions them within the evaluation stack. Systems treat backlinks as discovery aids rather than as primary trust determinants. Consequently, source reliability plays a greater role in ranking decisions than link volume alone.

In straightforward terms, systems care more about where facts come from than how many pages link to them. Reliable sources outweigh dense backlink profiles when systems assess informational value.

Verification-Driven Visibility

Verification-driven visibility emerges when ranking systems surface content based on confirmed factual integrity rather than on network amplification. When content repeatedly passes validation checks, systems gain confidence in its reuse and display it more consistently across contexts. Therefore, visibility increasingly depends on validation outcomes rather than on referral patterns.

In addition, source quality impact becomes measurable as systems track how often a source supports validated claims without correction. High-quality sources reduce reassessment cost and stabilize visibility signals over time. As a result, content tied to strong sources maintains presence even as ranking models evolve.

Put simply, verified facts stay visible longer. When sources continue to prove accurate, systems return to them more often and with higher confidence.

A knowledge-heavy platform reduced its emphasis on backlink acquisition and invested in structured verification and source discipline. Over time, systems increased factual citation inclusion from that platform across related topics. This shift led to more consistent visibility despite lower link growth. The outcome illustrates how structural ranking transformation favors validated information over network prominence.

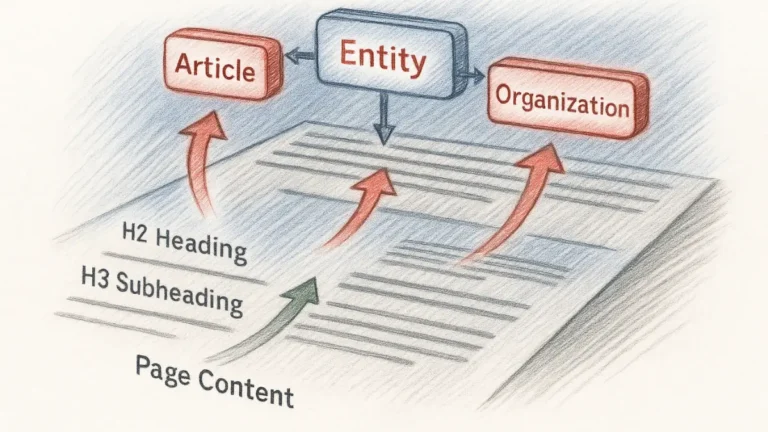

Interpretive Structure of Fact-Oriented Ranking Pages

- Fact-centered semantic partitioning. The separation of factual statements into discrete structural segments enables AI systems to isolate claims as independent evaluative units without contextual bleed.

- Source-aligned structural binding. Proximity between factual assertions and their referenced origins creates stable association signals that support reliable interpretation during generative processing.

- Credibility signal compartmentalization. Dedicated structural zones for trust, verification, and authority indicators allow models to distinguish evaluative signals from narrative content.

- Temporal consistency framing. Recurrent structural patterns across sections provide longitudinal anchors that help AI systems assess reliability over repeated indexing cycles.

- Interpretive boundary enforcement. Clear hierarchical depth limits prevent conflation between evidence, claims, and implications, supporting precise extraction and reasoning.

Together, these structural properties explain how fact-oriented pages remain interpretable as stable knowledge artifacts, allowing AI and generative systems to evaluate accuracy, sourcing, and credibility without reliance on implicit inference.
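Source-aligned structural binding, from the list above, can be pictured as a data structure in which each claim carries its own reference. The sketch below is an illustration under stated assumptions: the `Fact` class, the `unsourced` helper, and the placeholder claims and sources are hypothetical and do not represent any specific publishing system.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Fact:
    """One discrete claim kept together with its referenced origin."""

    claim: str
    source: Optional[str]  # None means no source is bound to the claim


def unsourced(facts: List[Fact]) -> List[str]:
    """Return the claims that have no source bound to them."""
    return [fact.claim for fact in facts if not fact.source]


# Placeholder content, invented purely for illustration.
page_facts = [
    Fact("Placeholder claim A", "Placeholder report, section 2"),
    Fact("Placeholder claim B", None),
    Fact("Placeholder claim C", "Placeholder dataset, table 4"),
]

missing = unsourced(page_facts)
```

A check like this could run before publication to flag claims that a system would have to evaluate without an adjacent reference.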

FAQ: Facts, Sources, and Ranking Interpretation

What are facts as ranking signals?

Facts as ranking signals are verifiable informational units that search systems evaluate to determine reliability, relevance, and reuse potential.

How do facts differ from links in ranking logic?

Links act as indirect trust proxies, while facts provide direct evaluable inputs that systems can validate against known information sources.

Why do sources matter in factual ranking?

Sources allow systems to validate factual claims externally, reducing uncertainty and improving long-term ranking stability.

What makes a source credible for ranking systems?

Credible sources demonstrate institutional grounding, historical accuracy, and consistent alignment with validated information over time.

How do citations influence ranking outcomes?

Citations influence ranking when they connect claims to reliable sources that consistently support factual accuracy across contexts.

What role does verification play in ranking stability?

Verification reduces correction risk and increases confidence signals, which stabilizes ranking behavior across reassessment cycles.

How do search systems assess factual accuracy?

Systems decompose claims into atomic units and validate them against knowledge graphs, datasets, and trusted references.
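As a rough illustration of that answer, the sketch below validates claims that have already been decomposed into subject, predicate, and object triples. It is a toy under stated assumptions: the `Triple` type, the `KNOWN` dictionary standing in for a knowledge graph, and the three status labels are hypothetical, and the decomposition of free text into triples is left out entirely.

```python
from typing import NamedTuple


class Triple(NamedTuple):
    """One atomic claim in subject, predicate, object form."""

    subject: str
    predicate: str
    obj: str


# Toy stand-in for a knowledge graph: (subject, predicate) -> object.
KNOWN = {
    ("water", "boils_at_celsius_at_sea_level"): "100",
    ("earth", "orbits"): "sun",
}


def validate(triple: Triple) -> str:
    """Classify a claim as supported, contradicted, or unknown."""
    expected = KNOWN.get((triple.subject, triple.predicate))
    if expected is None:
        return "unknown"  # the reference holds nothing to compare against
    return "supported" if expected == triple.obj else "contradicted"


claims = [
    Triple("earth", "orbits", "sun"),
    Triple("water", "boils_at_celsius_at_sea_level", "90"),
    Triple("mars", "has_moons", "2"),
]

results = [validate(claim) for claim in claims]
```

The third claim comes back as unknown rather than contradicted because the toy reference has no entry for it, which shows why a claim that cannot be matched to a trusted reference earns no validation credit even when it is correct.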

Why does consistency affect information reliability?

Consistent factual alignment over time allows systems to accumulate reliability signals and reduce interpretive uncertainty.

How does this shift affect future search evaluation?

As ranking logic prioritizes validated facts, search systems increasingly favor content whose claims are clearly stated, accurate, and explicitly tied to sources.

Glossary: Key Terms in Factual Ranking

This glossary defines core terminology related to facts, sources, and verification to ensure consistent interpretation by both readers and AI-driven ranking systems.

Facts as Ranking Signals

Verifiable informational units that ranking systems evaluate to determine reliability, relevance, and suitability for reuse independently of link structures.

Source Credibility

The assessed reliability of an information origin based on institutional grounding, historical accuracy, and consistency across references.

Citation Integrity

The degree to which citations accurately and consistently support factual claims without distortion or selective interpretation.

Editorial Verification

A pre-publication process that validates factual accuracy, source alignment, and internal consistency before content enters ranking pipelines.

Information Reliability

A longitudinal measure of how consistently content maintains factual accuracy and reference stability across time and reuse contexts.

Factual Assessment

The process by which ranking systems decompose claims into atomic units and validate them against trusted datasets and knowledge graphs.

Accuracy Signal

A measurable indicator derived from repeated validation outcomes that influences ranking stability and confidence scoring.

Structural Ranking Transformation

A systemic shift in evaluation hierarchy where ranking systems prioritize validated facts over indirect trust proxies such as backlinks.

Verification-Driven Visibility

Visibility patterns that emerge when content consistently passes validation checks and remains stable across ranking reassessments.

Interpretive Stability

The ability of ranking systems to extract and reuse meaning from content without semantic drift across different contexts and timeframes.