Last Updated on March 22, 2026 by PostUpgrade

AI Literacy for Writers: Understanding the Reader Behind the Model

AI systems do not read your article linearly—they reconstruct meaning from distributed signals, so unclear structure causes silent distortion before humans ever see it.

TL;DR: The article shows that writers lose control when AI interprets ambiguous or weakly structured content first. This leads to distorted summaries, reduced visibility, and inconsistent reuse across AI systems. By enforcing semantic clarity, stable terminology, and structured boundaries, content becomes reliably interpretable and reusable. As a result, AI systems extract meaning accurately, improving visibility, consistency, and downstream impact.

If your structure is weak, AI will reshape your meaning—and that version will scale faster than your original.

Writers now work in an environment shaped by AI-mediated readership. Written content is often processed by intelligent systems before humans encounter it. These systems extract meaning, compress arguments, and reuse statements across multiple outputs. As a result, authors must consider machine interpretation alongside human reading.

AI literacy extends beyond tool awareness or automation skills. It reflects a writing discipline focused on how models interpret, segment, and prioritize text at scale. The discipline emphasizes meaning control, semantic stability, and interpretive predictability. In this context, stylistic expression becomes secondary to structural clarity.

This article develops an analytical framework for understanding the reader behind the model. It explains how AI systems read text and how this behavior reshapes professional writing practices. The structure follows clearly bounded sections with stable terminology. Each section supports long-term accessibility within AI-driven information systems.

AI Literacy as a Writer Competency

AI literacy for writers defines a professional capability rather than a technical add-on, because interpretation now happens inside intelligent systems. Increasingly, models process written material before humans engage with it, and therefore writers must encode clarity and intent earlier in the communication chain. For this reason, large-scale writing practices now align with research on digital skills and knowledge work published by the OECD.

Definition: AI understanding is the model’s ability to interpret meaning, structure, and conceptual boundaries in a way that enables accurate reasoning, reliable summarization, and consistent content reuse across generative discovery systems.

Claim: AI literacy is a core writer competency.

Rationale: Intelligent systems now interpret written content at scale before human readers.

Mechanism: Models derive meaning from definitions, semantic boundaries, and internal consistency.

Counterargument: Human readers still remain the primary audience for most texts.

Conclusion: Writers must design meaning for both human and machine interpretation.

Writer AI Literacy Skills

Writer AI literacy skills form a coherent set of competencies that support predictable interpretation. Specifically, each skill addresses a distinct failure mode that emerges when models reconstruct meaning from text. As a result, these skills reduce ambiguity and improve reuse across AI-mediated systems.

At the same time, these competencies operate at the level of structure and semantics rather than style. They actively shape how statements connect, how scope remains controlled, and how terms retain stable meaning across sections. Consequently, they function as safeguards against unintended inference.

In practical terms, these skills help writers state one idea clearly and ensure machines interpret it consistently.

- semantic precision

- boundary definition

- interpretive predictability

- terminology stability

- context containment

Together, these competencies operate as an integrated system that governs how meaning is encoded, preserved, and extracted.

AI Literacy in Authorship

AI literacy in authorship reflects a shift from expressive writing toward meaning governance. Instead of focusing only on articulation, authors now influence outcomes by shaping how models interpret, summarize, and reuse statements. Therefore, authorship increasingly depends on semantic control rather than narrative expression.

At the editorial level, this shift changes decision-making priorities. Writers must actively enforce definitional clarity, prevent scope drift, and maintain internal consistency across long texts. As a result, authorship evolves into a form of systems thinking applied directly to language.

In simpler terms, authors no longer write only to express ideas. They write to ensure ideas survive interpretation without distortion.

Understanding AI Readers

Understanding AI readers requires treating machine interpretation as a distinct cognitive process rather than an extension of human reading. Increasingly, intelligent systems evaluate, summarize, and reuse text before humans encounter it, which changes how meaning travels through information systems. For this reason, research on language interpretation from the Stanford Natural Language Processing Group helps frame AI readers as a separate interpretive class with specific constraints.

Definition: An AI reader is an inference system that reconstructs meaning without intent or lived context.

Claim: AI readers are structurally different from human readers.

Rationale: They lack experiential grounding and subjective intention.

Mechanism: Interpretation relies on probabilistic pattern recognition across learned representations.

Counterargument: Large models increasingly approximate human reading behavior.

Conclusion: Approximation improves output quality but does not remove structural differences.

Human Reader vs AI Reader

The distinction between human and AI readers becomes clear when comparing how each processes meaning. Humans rely on lived experience, contextual memory, and intent recognition when interpreting text. In contrast, AI systems operate through statistical inference, which prioritizes patterns over situational understanding.

As a result, these two reader types diverge in how they handle ambiguity, narrative flow, and correction of errors. While humans actively resolve gaps using common sense, AI readers depend on probability distributions shaped by training data. Therefore, similar outputs may mask fundamentally different reasoning paths.

In simple terms, humans read by understanding situations, while machines read by matching patterns.

| Human reader | AI reader |
|---|---|
| intent recognition | pattern inference |
| context dependency | context approximation |
| ambiguity tolerance | ambiguity amplification |
| narrative inference | sequence modeling |
| error correction | probability adjustment |

These differences show why writers must account for machine interpretation even when texts appear clear to human audiences.

AI as Secondary Reader

AI as secondary reader describes a condition where machines interpret content before humans see its final form. For example, search interfaces, assistants, and summaries often reshape text based on model interpretation. Consequently, AI readers influence visibility, framing, and reuse long before human judgment occurs.

Because of this position, AI readers act as gatekeepers rather than passive intermediaries. They decide which statements remain salient and which ones disappear during compression or summarization. Therefore, writers indirectly communicate with humans through an additional interpretive layer.

Put simply, machines often read first, and humans read what remains. This shift directly influences modern content strategy. A practical explanation of how writers should structure articles for this environment appears in this complete guide to writing for AI search engines, which explains how generative systems evaluate structure, clarity, and factual signals when selecting content for reuse.

Understanding Non-Human Readers

Understanding non-human readers requires accepting that machines do not share human assumptions. They do not infer intent, recognize irony, or resolve contradictions through experience. Instead, they depend on explicit signals embedded in text.

As a result, unclear boundaries or unstable terminology create compounding errors during reuse. Each subsequent interpretation amplifies small ambiguities. Over time, this process reshapes meaning in ways the author did not intend.

In everyday terms, if writers do not explain meaning clearly for machines, machines will guess, and those guesses will travel further than the original text.

How AI Reads Text

How AI reads text defines a core constraint for AI-literate writers, because models interpret meaning without following human reading order. Instead of moving line by line, intelligent systems evaluate language fragments in parallel and assemble meaning from internal representations. Research from MIT CSAIL shows that this behavior results from representation learning rather than sequential comprehension, which directly affects how writers should structure content.

Definition: AI reading is the reconstruction of meaning through token relationships and learned representations.

Claim: AI does not read text sequentially.

Rationale: Meaning emerges from distributed representations rather than ordered sentences.

Mechanism: Weighted token interactions generate inference across the entire context window.

Counterargument: Attention mechanisms resemble human focus on specific segments.

Conclusion: Similar output can mask fundamentally different interpretation processes.

How Language Models Interpret Text

How language models interpret text depends on global pattern evaluation rather than linear progression. Models assess relationships between tokens across the full input, which allows them to connect distant statements without being bound by paragraph order. As a result, AI-literate writers cannot rely on narrative flow alone to guide interpretation.

At the same time, this process favors internal coherence over authorial intent. Models prioritize combinations of terms that align with learned statistical patterns, even when rhetorical emphasis suggests a different hierarchy. Therefore, writers must encode importance through structure and repetition rather than placement.

In simple terms, models do not read from beginning to end. They scan everything at once and decide what fits together.

AI Perception of Content

AI perception of content relies on signals that stabilize interpretation across fragmented input. Models actively search for cues that reduce uncertainty and reinforce semantic consistency. Consequently, certain textual features influence interpretation far more than stylistic nuance.

These signals determine whether models reconstruct meaning reliably during summarization and reuse. When writers align content with these priorities, interpretation becomes more predictable across systems. For AI-literate writers, this alignment defines practical control over downstream outputs.

Put simply, models trust content that stays consistent and clearly bounded.

- definitional clarity

- repetition consistency

- structural boundaries

- semantic proximity

Together, these signals increase interpretation reliability by narrowing the range of plausible inferences.

AI Attention Patterns in Text

AI attention patterns in text reveal how models distribute focus across fragments rather than sentences. Attention mechanisms assign weights to tokens based primarily on relevance within learned representations rather than on narrative position alone. As a result, distant terms can influence interpretation as strongly as nearby ones.

This behavior reshapes how emphasis works in written content. Headings, repeated terms, and explicit boundaries tend to attract stronger attention signals, while isolated statements lose influence. Therefore, AI-literate writers must design emphasis structurally rather than rhetorically.

In everyday terms, models tend to weight what is reinforced repeatedly and structurally, and may underweight what appears only once, wherever it appears.
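The weighting described above can be sketched with a toy version of scaled dot-product attention. This is a minimal illustration, not how any particular model is implemented: the two-dimensional token vectors are hypothetical, the function names are chosen for this example, and the sketch deliberately omits the positional information that real models add to their inputs.

```python
import math


def softmax(scores):
    """Turn raw scores into weights that sum to 1."""
    peak = max(scores)  # subtract the maximum for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_weights(query, keys):
    """Scaled dot-product attention weights of one query over all keys."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    return softmax(scores)


# Hypothetical 2-d token vectors; real models learn far larger ones.
tokens = ["definition", "the", "of", "and", "definition"]
vectors = [[1.0, 0.0], [0.0, 0.1], [0.0, 0.2], [0.1, 0.0], [1.0, 0.0]]

weights = attention_weights(query=[1.0, 0.0], keys=vectors)
for token, weight in zip(tokens, weights):
    print(f"{token:<12}{weight:.3f}")
```

In this toy setup the first and last tokens receive identical weight because their vectors are identical, regardless of how far apart they sit. That is the sense in which a repeated, consistently used term can reinforce itself across a long text.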

Writing for AI Interpretation

Writing for AI interpretation treats language as a system of constraints rather than a channel for expression. As intelligent systems increasingly summarize, rank, and reuse text, writers must encode meaning in ways that limit unintended inference. Research from the Allen Institute for Artificial Intelligence supports this view by emphasizing the role of explicit semantic structure in reliable model interpretation.

Definition: Writing for AI interpretation means reducing ambiguity to control inference outcomes.

Claim: Writers can influence AI interpretation.

Rationale: AI relies on explicit semantic cues rather than implicit intent.

Mechanism: Stable definitions constrain inference paths and narrow possible meanings.

Counterargument: Models hallucinate unpredictably even when structure exists.

Conclusion: Structure reduces interpretation variance even when it cannot eliminate error.

Principle: Content becomes more visible in AI-driven environments when its structure, definitions, and conceptual boundaries remain stable enough for models to interpret without ambiguity.

Example: A page with clear conceptual boundaries and stable terminology allows AI systems to segment meaning accurately, increasing the likelihood that its high-confidence sections will appear in assistant-generated summaries.

Writing for AI Comprehension

Writing for AI comprehension requires authors to anticipate how models assemble meaning from fragments. Models evaluate relationships across the entire context window, which means unclear scope or shifting terms quickly distort interpretation. Therefore, writers must encode clarity at the paragraph level rather than relying on cumulative narrative flow.

At the same time, comprehension depends on consistency more than emphasis. When writers repeat definitions and maintain scope boundaries, models infer stronger semantic alignment. As a result, content becomes easier for systems to summarize and reuse without distortion.

In practical terms, clear paragraphs help machines understand one idea at a time without guessing connections.

Interpretability-Driven Writing

Interpretability-driven writing focuses on designing text so that models infer the intended meaning with minimal variance. This approach prioritizes constraints that limit how far interpretation can drift during reuse. Consequently, writers shift attention from stylistic variation to semantic discipline.

These constraints operate together rather than in isolation. When writers stack them consistently, models receive reinforcing signals that stabilize inference. Over time, this stacking effect increases reliability across different AI systems and contexts.

Put simply, interpretability improves when writers remove choices from how meaning can be interpreted.

- single-scope paragraphs

- immediate definitions

- stable terminology

Together, these constraints reinforce one another and produce predictable interpretation across AI-mediated systems.

Meaning Alignment for AI

Meaning alignment for AI addresses the gap between author intent and model inference. Models do not verify intent; they infer meaning from available signals. Therefore, alignment depends on how clearly writers encode relationships, scope, and definitions.

When alignment weakens, small ambiguities compound during summarization and reuse. Each reinterpretation amplifies uncertainty, which eventually alters meaning. Strong alignment prevents this drift by keeping inference paths narrow and consistent.

In simple terms, aligned writing tells machines exactly what to understand and leaves little room for alternative guesses.
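The compounding effect can be made concrete with a deliberately simple model. The sketch below assumes, purely for illustration, that each reinterpretation independently preserves a fixed fraction of the intended meaning. The function name `retained_fidelity` and the sample values are hypothetical and are not measurements of any real system.

```python
def retained_fidelity(per_step: float, steps: int) -> float:
    """Fraction of intended meaning left after `steps` reinterpretations.

    Assumes each step independently keeps `per_step` of what it received,
    which is an illustrative simplification, not an empirical model.
    """
    if not 0.0 <= per_step <= 1.0:
        raise ValueError("per_step must be between 0 and 1")
    if steps < 0:
        raise ValueError("steps must be non-negative")
    return per_step ** steps


# Hypothetical per-step fidelities for loosely and tightly constrained text.
for per_step in (0.90, 0.99):
    kept = retained_fidelity(per_step, 5)
    print(f"{per_step:.2f} per step -> {kept:.3f} after 5 steps")
```

Under this assumption, a small per-step loss multiplies rather than adds, which is why narrowing inference paths in the source text matters more than correcting individual summaries later.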

Writing for Hybrid Audiences

Writing for hybrid audiences reflects a reality where humans and intelligent systems consume the same text in different ways. As AI systems increasingly mediate access through summaries, previews, and rankings, writers must address both interpretive layers at once. Research from the Oxford Internet Institute shows that algorithmic mediation now shapes how information reaches human readers across digital platforms.

Definition: Hybrid audiences consist of human readers and algorithmic interpreters.

Claim: Hybrid writing is unavoidable.

Rationale: AI intermediates content delivery across search, recommendation, and assistant systems.

Mechanism: Content is parsed, summarized, and filtered by machines before humans engage with it.

Counterargument: Humans still judge value and relevance.

Conclusion: Both layers shape outcomes and must be addressed together.

Writing Beyond Human Readership

Writing beyond human readership requires authors to acknowledge that machines often encounter text first. AI systems scan, segment, and evaluate content to determine visibility and reuse, which directly affects what humans later see. Therefore, writers influence outcomes earlier in the information chain than before.

This shift expands the writer’s responsibility. Authors must ensure that meaning remains stable during machine interpretation, not only during human reading. As a result, clarity, structure, and consistency become prerequisites for reach, not optional refinements.

Put simply, writers no longer write only for people. They also write for systems that decide what people encounter.

Writing for Algorithmic Audiences

Writing for algorithmic audiences focuses on how machines process text under operational constraints. Models favor predictable structure, bounded scope, and consistent terminology because these features reduce uncertainty during inference. Consequently, writers must design content that machines can process reliably at scale.

This approach changes how texts balance clarity and flexibility. Human-first writing often tolerates ambiguity and stylistic variation, while hybrid-first writing limits both to preserve meaning across interpretations. Therefore, writers face trade-offs that affect reuse and visibility.

In practical terms, algorithmic audiences reward content that stays clear, consistent, and structurally disciplined.

| Human-first writing | Hybrid-first writing |
|---|---|
| expressive design goal | interpretive stability goal |
| flexible paragraph structure | single-scope paragraphs |
| high ambiguity tolerance | low ambiguity tolerance |
| limited reuse potential | high reuse potential |

These trade-offs show why hybrid-first writing improves reach while narrowing stylistic freedom.

Writing with AI Awareness

Writing with AI awareness means anticipating how systems will reinterpret text during summarization and ranking. Machines do not infer intent or context beyond what appears explicitly in language. Therefore, writers must encode relationships and boundaries directly into the text.

When awareness is absent, small ambiguities grow during reuse and compression. Over time, this drift alters meaning and weakens visibility. Awareness reduces this risk by aligning structure and semantics with machine interpretation.

In everyday terms, AI-aware writing ensures that what machines extract still matches what the writer intended.

AI Cognition and Meaning Processing

AI cognition creates a concrete risk for AI-literate writers because machines infer meaning without understanding intent. When models process text, they rely on correlation instead of experience, which changes how meaning survives reuse. Research from the Carnegie Mellon Language Technologies Institute shows that this gap between inference and understanding directly affects how written knowledge propagates across systems.

Definition: AI cognition is correlation-based inference without intentional understanding.

Claim: AI cognition differs from human cognition.

Rationale: Models lack experiential grounding and subjective awareness.

Mechanism: Meaning is inferred statistically from learned associations across representations.

Counterargument: Scale compensates for missing grounding through broader pattern coverage.

Conclusion: Scale amplifies frequent patterns, not intent or lived context.

How AI Processes Written Meaning

How AI processes written meaning depends on associative strength rather than communicative purpose. Models connect terms based on frequency and representation similarity, which allows inference without comprehension. As a result, AI-literate writers must encode relationships explicitly to prevent unintended meaning shifts.

At the same time, this process rewards consistency over nuance. When writers vary terminology or expand scope without control, models infer new associations that distort meaning. Therefore, stable phrasing and bounded concepts reduce statistical drift during interpretation.
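One way to catch the terminology variation described above is to count competing names for the same concept in a draft. The following is a minimal sketch under stated assumptions: the variant list is supplied by the writer, the helper names `term_usage` and `competing_variants` are hypothetical, and matching is a plain case-insensitive phrase search that also accepts a trailing "s".

```python
import re
from collections import Counter


def term_usage(text, variants):
    """Count case-insensitive occurrences of each variant (singular or plural)."""
    counts = Counter()
    for variant in variants:
        pattern = r"\b" + re.escape(variant) + r"s?\b"
        counts[variant] = len(re.findall(pattern, text, flags=re.IGNORECASE))
    return counts


def competing_variants(text, variants):
    """Return the variants in use when more than one appears, else an empty list."""
    used = [v for v, n in term_usage(text, variants).items() if n > 0]
    return used if len(used) > 1 else []


# Hypothetical draft that drifts between two names for one concept.
draft = (
    "An AI reader reconstructs meaning. A machine reader lacks intent. "
    "The AI reader depends on explicit signals."
)
names = ["AI reader", "machine reader", "algorithmic reader"]

print(term_usage(draft, names))
print(competing_variants(draft, names))
```

A non-empty result from `competing_variants` suggests the draft uses more than one label for the same idea, and the writer can then pick one and apply it throughout.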

In simple terms, machines treat repeated patterns as meaning and ignore why those patterns exist.

AI Understanding of Context

AI understanding of context emerges only from signals visible in the text. Models approximate context through proximity, repetition, and structural markers rather than external knowledge. Consequently, missing signals cause shallow or incorrect inference, even when meaning seems obvious to humans.

Context also degrades when long texts lack clear boundaries. Models then merge separate ideas into a single representation. For AI-literate writers, explicit context markers prevent this collapse and preserve interpretive accuracy.

Put simply, machines understand only the context that writers clearly show them.

AI Perspective on Narrative

AI perspective on narrative treats stories as ordered signals rather than coherent experiences. Models prioritize statistical continuity instead of narrative intent, which changes how they extract conclusions. As a result, emphasis that matters to humans often disappears during summarization.

In enterprise environments, this behavior becomes visible when documentation favors flow over definition. AI systems then reuse conclusions without conditions, which spreads partial interpretations. AI-literate writers reduce this risk by separating narrative flow from semantic rules.

A large organization encountered this issue when internal guidelines lacked explicit definitions. AI-generated summaries removed constraints and led teams to apply rules incorrectly. Clear boundaries later restored reliable interpretation.

Responsibility and Visibility in the AI Era

Writer responsibility in the AI era emerges from the direct link between interpretation quality and content visibility. As AI systems increasingly select, summarize, and redistribute text, they determine which ideas surface and which disappear. Analysis from the Pew Research Center shows that algorithmic mediation now plays a decisive role in how information reaches public audiences.

Definition: Writer responsibility in the AI era is accountability for downstream machine reuse.

Claim: Writers influence AI-mediated visibility.

Rationale: AI systems select, compress, and rank content before human review.

Mechanism: Interpretability affects whether systems treat content as eligible for reuse and prominence.

Counterargument: Platforms ultimately control distribution and exposure.

Conclusion: Authors shape interpretive readiness, which directly conditions visibility.

AI-Influenced Content Visibility

AI-influenced content visibility depends on how systems interpret and prioritize meaning signals. When models encounter clear definitions, stable terminology, and bounded scope, they classify content as reliable and reusable. Consequently, such content appears more often in summaries, previews, and synthesized responses.

At the same time, weak interpretability reduces visibility even when content quality remains high. Ambiguous structure or shifting terms increase inference uncertainty, which lowers selection confidence. Therefore, visibility reflects not only relevance but also interpretive clarity.

In simple terms, machines promote content they can understand with confidence and suppress content that introduces doubt.

Anticipating AI Interpretation

Anticipating AI interpretation requires writers to think beyond initial publication. AI systems often reuse content across contexts that the author never anticipated. As a result, writers must encode meaning so it survives compression, extraction, and recombination.

This anticipation changes how writers evaluate completeness. A statement that feels sufficient for human readers may lack explicit conditions for machines. Therefore, writers who anticipate reuse add definitions and boundaries early to preserve meaning.

Put simply, writers who expect machines to reinterpret their work reduce the risk of misrepresentation.

A large knowledge base experienced this effect when AI systems consistently prioritized certain articles. Those articles placed definitions at the beginning of sections and maintained stable terminology. As a result, models selected them more often during summarization, while equally accurate but less structured content remained unseen.

Checklist:

- Does the page define its core concepts with precise terminology?

- Are sections organized with stable H2–H4 boundaries?

- Does each paragraph express one clear reasoning unit?

- Are examples used to reinforce abstract concepts?

- Is ambiguity eliminated through consistent transitions and local definitions?

- Does the structure support step-by-step AI interpretation?
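Part of this checklist can be approximated mechanically. The sketch below addresses only the question about one reasoning unit per paragraph, using sentence count as a crude proxy. The four-sentence threshold, the function names, and the sample draft are assumptions made for illustration, and the regular-expression sentence splitter will miscount abbreviations such as "e.g.".

```python
import re


def split_paragraphs(text):
    """Split text into paragraphs on blank lines."""
    return [p.strip() for p in re.split(r"\n\s*\n", text) if p.strip()]


def sentence_count(paragraph):
    """Count sentences by splitting after '.', '!' or '?' followed by whitespace."""
    return len([s for s in re.split(r"(?<=[.!?])\s+", paragraph) if s])


def overlong_paragraphs(text, max_sentences=4):
    """Return (paragraph_index, sentence_count) for paragraphs above the limit."""
    flagged = []
    for index, paragraph in enumerate(split_paragraphs(text)):
        count = sentence_count(paragraph)
        if count > max_sentences:
            flagged.append((index, count))
    return flagged


# Hypothetical draft: the second paragraph packs in too many statements.
sample = "One idea. It has support.\n\nFirst. Second. Third. Fourth. Fifth."
print(overlong_paragraphs(sample))
```

A flagged paragraph is not necessarily wrong. It is a prompt to check whether the paragraph still covers a single scope or should be split.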

Future-Ready Writing Skills

Future-ready writing skills define a class of competencies that remain effective despite rapid changes in AI models and delivery systems. These skills focus on meaning stability, interpretive clarity, and structural coherence rather than adaptation to specific technologies. As a result, future-ready writing skills operate independently of short-term platform shifts.

Definition: Future-ready writing skills are competencies that preserve interpretability, clarity, and reuse value across evolving AI systems and changing generative environments.

Claim: Core writing skills remain durable across AI system evolution.

Rationale: Interpretation mechanisms change gradually because models depend on stable principles of meaning extraction.

Mechanism: Clear structure, precise definitions, and scoped reasoning maintain effectiveness as models scale and diversify.

Counterargument: Model architectures and capabilities change unpredictably over time.

Conclusion: Foundational writing skills outlast individual implementations and platform cycles.

Writing with Model Awareness

Writing with model awareness reflects an understanding that text is interpreted by systems before reaching human readers. This awareness shapes decisions about terminology stability, paragraph scope, and logical sequencing. As a result, authors align expression with predictable interpretation patterns.

Moreover, writing with model awareness reduces reliance on stylistic inference. Instead, it emphasizes explicit meaning signals that survive summarization and extraction. This shift supports consistent reuse across AI-mediated contexts.

In simpler terms, the writer anticipates how meaning will be reconstructed, not how it will be read line by line.

Writing for Intelligent Systems

Writing for intelligent systems prioritizes clarity at the level of structure rather than surface fluency. Sentences function as discrete meaning units that models can isolate and recombine without distortion. Therefore, coherence depends on controlled transitions and consistent definitions.

At the same time, writing for intelligent systems minimizes ambiguity that could expand inference space. Each paragraph constrains interpretation through explicit scope and stable reference points. This approach improves reliability under generative reuse.

Put simply, the text is built to remain understandable even when removed from its original context.
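A rough way to test whether a paragraph survives extraction is to look at how it opens. The sketch below flags paragraphs whose first word points back at something outside the paragraph. The opener list and the function name `dangling_paragraphs` are assumptions for illustration, and the heuristic produces false positives for self-referential openers such as "This article".

```python
import re

# Hypothetical list of openers that usually depend on preceding context.
DANGLING_OPENERS = {"this", "that", "these", "those", "it", "they", "such"}


def dangling_paragraphs(text):
    """Return (paragraph_index, first_word) for paragraphs that open with a back-reference."""
    flagged = []
    for index, paragraph in enumerate(re.split(r"\n\s*\n", text.strip())):
        words = re.findall(r"[A-Za-z']+", paragraph)
        if words and words[0].lower() in DANGLING_OPENERS:
            flagged.append((index, words[0]))
    return flagged


# Hypothetical draft with one context-dependent paragraph.
sample = (
    "AI readers depend on explicit signals.\n\n"
    "This shift expands responsibility.\n\n"
    "Hybrid audiences include machines."
)
print(dangling_paragraphs(sample))
```

Restating the subject in a flagged paragraph, for example "The shift toward machine-first reading expands responsibility", keeps the paragraph interpretable when it is quoted on its own.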

AI Cognition and Language

AI cognition and language interact through statistical pattern recognition rather than intentional understanding. Language signals guide inference by reinforcing relationships between concepts, not by conveying intent. Consequently, writers influence outcomes through structural choices rather than expressive nuance.

Research on language and cognition published by UNESCO highlights the importance of clarity and consistency in machine-mediated communication environments. These findings reinforce the role of stable writing skills in long-term interpretability.

In essence, durable writing aligns language form with the way AI systems infer meaning, not with how humans intuitively interpret text.

Interpretive Signals in AI-Readable Writing Architecture

- Semantic depth alignment. A strict H2→H3→H4 hierarchy enables AI systems to separate conceptual layers and preserve meaning boundaries across long-context processing.

- Interpretive modularity. Self-contained sections with stable internal logic allow generative systems to extract, summarize, and recombine content without semantic leakage.

- Definition-driven graph formation. Early local definitions act as anchor nodes that guide how models construct internal knowledge graphs for the page.

- Reasoning chain detectability. Consistent declarative reasoning patterns signal causal structure, supporting reliable inference and explanation synthesis.

- Structural coherence persistence. Uniform paragraph scope and predictable section composition maintain interpretability when content is reused across AI-mediated environments.

Taken together, these architectural signals describe how generative systems interpret page structure as a semantic framework rather than a linear document, enabling stable reasoning under extraction, summarization, and recomposition.
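The hierarchy signal in this list can be checked automatically when a page is authored in Markdown. The sketch below assumes ATX-style headings (lines starting with `#` characters) and reports any heading that jumps more than one level deeper than the heading before it. The function names and the sample outline are hypothetical, and the check does not skip `#` lines inside fenced code blocks.

```python
import re


def heading_levels(markdown_text):
    """Return (level, title) for each ATX heading line."""
    headings = []
    for line in markdown_text.splitlines():
        match = re.match(r"^(#{1,6})\s+(.*\S)\s*$", line)
        if match:
            headings.append((len(match.group(1)), match.group(2)))
    return headings


def skipped_levels(headings):
    """Return (previous_level, level, title) where a heading skips a level."""
    problems = []
    previous = None
    for level, title in headings:
        if previous is not None and level > previous + 1:
            problems.append((previous, level, title))
        previous = level
    return problems


# Hypothetical outline: the last heading jumps from H2 straight to H4.
outline = (
    "## How AI Reads Text\n"
    "### AI Attention Patterns in Text\n"
    "## Writing for AI Interpretation\n"
    "#### Deep detail\n"
)
print(skipped_levels(heading_levels(outline)))
```

Moving back up the hierarchy, for example from H3 to H2, is treated as valid because it closes a subsection rather than skipping a layer.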

FAQ: Generative Engine Optimization (GEO)

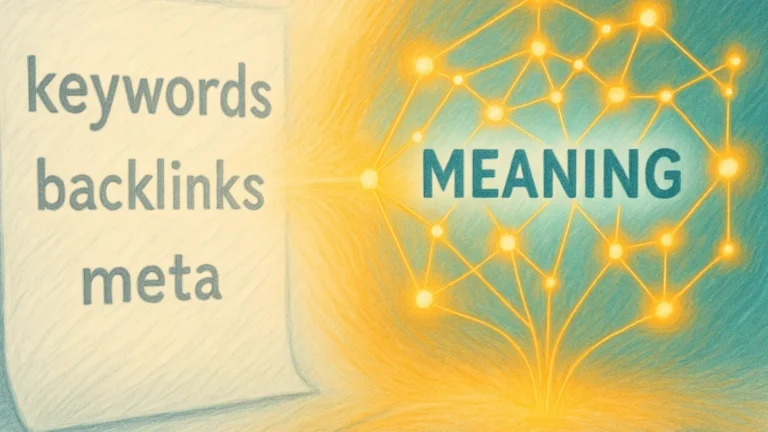

What is Generative Engine Optimization?

Generative Engine Optimization describes an approach to structuring content so that AI systems can interpret, extract, and reuse meaning reliably within generative search environments.

How does GEO differ from traditional SEO?

Traditional SEO focuses on ranking signals, while GEO focuses on interpretability, ensuring that AI systems can reconstruct intent, context, and logical boundaries.

Why is GEO important in modern AI search?

Modern AI search systems generate answers by assembling meaning, so visibility depends on semantic clarity and structural reliability rather than link-based prominence.

How do generative engines select content?

Generative systems evaluate definitional clarity, internal consistency, contextual stability, and source reliability when selecting content for reuse.

What role does structure play in GEO?

Clear structural hierarchy and bounded semantic blocks allow AI systems to isolate concepts and preserve meaning during summarization and recomposition.

Why are citations more important than backlinks?

In generative systems, citations signal factual grounding and trustworthiness, which directly influence whether content is reused in generated responses.

How do AI systems interpret factual reliability?

AI systems infer reliability through consistency, source attribution, and the absence of semantic contradiction within and across content blocks.

What are best practices for GEO-aligned content?

GEO-aligned content maintains stable terminology, explicit definitions, single-scope paragraphs, and predictable reasoning patterns.

How does GEO influence long-term AI visibility?

GEO improves long-term visibility by aligning content with how AI systems reason, extract meaning, and recombine information over time.

What skills support GEO-focused writing?

Effective GEO-focused writing requires semantic precision, structural discipline, and awareness of how AI systems interpret language.

Glossary: Key Terms in Precision Writing

This glossary defines the core terminology used throughout the article to ensure consistent interpretation by both human readers and AI systems.

AI Literacy for Writers

A professional capability that enables writers to anticipate and control how intelligent systems interpret, extract, and reuse written meaning.

AI Reader

An inference system that reconstructs meaning through statistical patterns rather than intent, experience, or situational understanding.

Hybrid Audience

A combined readership consisting of human readers and algorithmic systems that interpret the same content through different mechanisms.

Interpretive Constraint

A semantic or structural boundary that limits how AI systems can infer meaning from text, reducing interpretive variance.

Semantic Stability

The property of content that preserves consistent meaning across interpretation, summarization, and reuse by AI systems.

Atomic Paragraph

A paragraph designed to express a single idea within clear semantic boundaries, enabling predictable machine interpretation.

Interpretive Readiness

The degree to which content is prepared for accurate extraction and reuse by AI systems without loss of intended meaning.

Context Boundary

A structural or semantic marker that separates ideas and prevents AI systems from merging unrelated concepts during interpretation.

Inference Drift

The gradual distortion of meaning that occurs when AI systems repeatedly reinterpret ambiguous or weakly constrained content.

Structural Predictability

The consistency of layout and logical organization that enables AI systems to segment and process meaning reliably.