Last Updated on April 25, 2026 by PostUpgrade

The Rise of Cognitive Readability Metrics

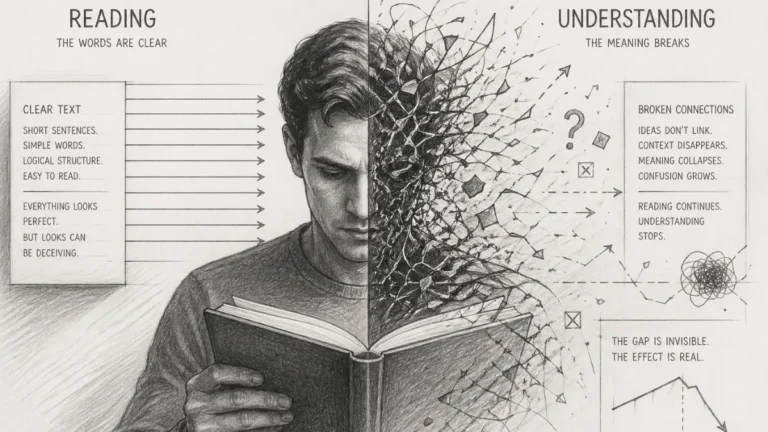

Your content is not hard to read. It fails because readers and AI systems cannot maintain a stable meaning across its structure.

TL;DR: Most texts appear readable but collapse during comprehension, causing both users and AI systems to abandon interpretation. This happens because surface readability ignores cognitive load, structure continuity, and meaning integration. Cognitive readability metrics measure how interpretation is sustained, enabling stable extraction, reuse, and visibility. When structure aligns with cognitive processing, comprehension stabilizes and content becomes reliably interpretable across systems.

If meaning breaks even once, everything that follows becomes unreliable, and this is exactly where most content fails.

If understanding requires unnecessary effort, AI systems are likely to pass over your content in favor of clearer alternatives.

Readability no longer depends on surface formulas that count words and sentences. Researchers now evaluate how readers process meaning, connect ideas, and sustain understanding across complex texts. This shift explains why traditional scores often misclassify texts that readers still find confusing. Cognitive readability metrics address this gap by measuring comprehension as a cognitive process rather than a visual or stylistic property.

This approach treats readability as a function of mental effort. It focuses on how structure, information density, and concept order shape understanding. Instead of asking whether a text looks simple, cognitive analysis asks whether readers can build a stable mental model while reading analytical material. As a result, readability becomes a measurable property of comprehension, not of presentation.

This article examines readability through the lens of human cognition and comprehension effort. It focuses on analytical and longform knowledge texts where clarity depends on structure and meaning continuity. The discussion excludes stylistic persuasion and focuses on how readers interpret, retain, and validate information during reading.

From Traditional Readability to Cognitive Measurement

Traditional readability formulas emerged to approximate difficulty through surface indicators such as sentence length and word frequency. Over time, these approaches proved insufficient because they explain decoding effort but not understanding quality. Cognitive readability measurement responds to this limitation by evaluating how readers process meaning, integrate concepts, and sustain comprehension, a direction supported by comparative education research summarized by the OECD.

Definition: AI understanding refers to a system’s capacity to interpret text by resolving meaning, structure, and conceptual boundaries in a way that supports coherent reasoning, stable comprehension modeling, and reliable reuse across generative environments.

Claim: Traditional readability scores fail to capture real comprehension.

Rationale: They rely on surface proxies rather than cognitive processing.

Mechanism: Cognitive metrics evaluate effort, structure, and meaning integration.

Counterargument: Simple texts may still perform well under classic formulas.

Conclusion: Cognitive measurement extends rather than replaces readability baselines.

What changes here is not the definition of readability, but the way meaning is evaluated during reading. If interpretation cannot be sustained, measurement becomes irrelevant.

Limits of Formula-Based Readability

Readability assessment frameworks historically emphasized quantifiable proxies that allow fast scoring at scale. These frameworks correlate sentence length and vocabulary frequency with presumed difficulty, which enables comparison across texts. However, such models ignore how readers construct meaning, especially when texts present layered arguments or abstract concepts.

This is where measurement diverges from reality. The text may score as simple, yet still fail to produce stable understanding.

Reading difficulty analysis shows that texts with short sentences can still impose high cognitive effort when they compress ideas or omit connective logic. Conversely, longer sentences can remain readable when structure guides interpretation. As a result, formula-based scores often misalign with actual comprehension outcomes in analytical contexts.

In practical terms, formulas tell how a text looks on the surface, not how it works in the reader’s mind. They estimate decoding cost but miss integration effort, which explains their inconsistent predictive value.
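To make the contrast concrete, the sketch below implements the Flesch Reading Ease formula (206.835 - 1.015 × words per sentence - 84.6 × syllables per word), one of the classic surface formulas. The syllable counter is a rough vowel-group heuristic assumed for illustration, not a dictionary-based count. Only sentence length and word length enter the score; nothing about how ideas connect does.

```python
import re

def count_syllables(word: str) -> int:
    """Rough heuristic: count vowel groups, discounting a silent final 'e'."""
    word = word.lower()
    count = len(re.findall(r"[aeiouy]+", word))
    if word.endswith("e") and not word.endswith("le") and count > 1:
        count -= 1
    return max(count, 1)

def flesch_reading_ease(text: str) -> float:
    """Flesch Reading Ease: higher scores mean 'easier', judged on surface features only."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    if not sentences or not words:
        raise ValueError("text must contain at least one sentence and one word")
    syllables = sum(count_syllables(w) for w in words)
    return (206.835
            - 1.015 * (len(words) / len(sentences))
            - 84.6 * (syllables / len(words)))

# Compressed fragments leave every relationship implicit.
compressed = "Load rises. Links break. Meaning drifts. Readers quit."
# One longer sentence that states the relationships explicitly.
explicit = ("Because the text compresses several claims into one sentence, readers must "
            "infer how they relate, which raises the effort needed to understand it.")

print(round(flesch_reading_ease(compressed), 1))
print(round(flesch_reading_ease(explicit), 1))
```

With these inputs the compressed fragment scores higher (easier) than the explicit sentence, even though it leaves the reader to infer every connection the explicit version spells out.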

Emergence of Cognitive Readability Models

Cognitive readability models shift attention from text features to reader processing. These models account for how structure, sequencing, and conceptual dependencies influence understanding over time. They evaluate whether readers can maintain coherence while progressing through an argument.

Readability research metrics increasingly include indicators of integration effort, referential clarity, and progression stability. Empirical studies show stronger alignment between these metrics and comprehension outcomes, especially in expert and educational materials. This evidence supports a move toward cognition-aware evaluation.

Put simply, newer models ask whether readers can follow and connect ideas, not whether they can pronounce the words. This distinction explains why cognitive approaches better predict understanding in complex texts.

Structural vs Cognitive Signals

Structural readability metrics describe how text organization supports navigation and expectation. Headings, segmentation, and ordered progression provide cues that reduce search effort and orient readers within an argument. These signals shape how information enters working memory.

Cognitive signals reflect how meaning accumulates as readers connect propositions across sections. They depend on referential continuity, explicit relationships, and stable terminology. When these signals align with structure, comprehension improves even under higher information density.

In essence, structure guides where to look, while cognitive signals determine what readers understand. Effective readability emerges when both operate together rather than in isolation.

This shift introduces a different question: not how difficult the text is, but whether meaning can persist across its structure. That leads directly to how signals are formed inside the text.

Cognitive Load as a Readability Variable

Cognitive load has become a core variable in modern readability analysis because it explains how much mental effort readers expend while interpreting text. Within cognitive readability metrics, load functions as a unifying indicator that connects structure, information density, and comprehension stability. Cognitive reading load therefore anchors readability assessment in measurable human processing limits, a direction supported by experimental language research from the Stanford Natural Language Institute.

Definition: Cognitive reading load refers to the amount of mental processing effort required to decode, integrate, and maintain meaning while reading a text.

Claim: Readability depends on mental load during reading.

Rationale: Human comprehension has finite processing capacity.

Mechanism: Load increases with density, abstraction, and poor structure.

Counterargument: Expert readers tolerate higher load in familiar domains.

Conclusion: Load-aware metrics explain variance in comprehension outcomes.

At this point, readability becomes measurable through effort. Once load exceeds a threshold, additional text no longer yields additional understanding.

Mental Effort and Processing Cost

Reader cognitive effort reflects how much mental energy readers invest to follow arguments, resolve references, and preserve coherence. In cognitive readability metrics, effort acts as a direct signal of whether text structure supports or resists understanding. High effort often appears even in short passages when ideas are compressed too tightly or when logical transitions remain implicit.

This is the point where interpretation slows down. Readers stop extending meaning and begin reconstructing it from fragments.

Cognitive effort measurement operationalizes this cost through indicators such as integration difficulty and dependency depth. Mental load in reading increases when readers must repeatedly reconstruct context rather than extend it. These patterns explain why effort-based signals outperform surface indicators in predicting comprehension outcomes.

At a practical level, effort rises when reading feels mentally demanding despite simple wording. Texts that guide interpretation reduce this burden, while those that rely on inference increase it.

This is exactly where cognitive readability becomes visible in real content. When structure fails to carry meaning forward, readers are forced to reconnect ideas instead of extending them, and effort increases with every step. Even when sentences look clear, understanding breaks silently in the middle because continuity is lost. The same mechanism explains why content can look clear yet still confuse readers: the cause is not complexity, but meaning that does not persist across the structure.
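One hypothetical way to approximate the reconstruction cost described in this section is to track how far back a reader must reach to reconnect a repeated term. The sketch treats each content word as a concept, which is a strong simplifying assumption (no stemming, synonyms, or pronoun resolution), and records a reconstruction event whenever a term returns after more than `window` sentences. The stopword list and the default window are illustrative choices, not established values.

```python
import re

# Illustrative stopword list; a real analysis would use a fuller one.
STOPWORDS = {"the", "a", "an", "and", "or", "of", "to", "in", "is", "are",
             "it", "this", "that", "with", "for", "on", "as", "by", "be"}

def content_terms(sentence: str) -> set[str]:
    """Lowercased words longer than two letters, minus stopwords."""
    words = re.findall(r"[a-z]+", sentence.lower())
    return {w for w in words if w not in STOPWORDS and len(w) > 2}

def reconstruction_events(sentences: list[str], window: int = 2) -> list[tuple[int, str, int]]:
    """Return (sentence_index, term, distance) for each term that reappears
    more than `window` sentences after its previous mention."""
    last_seen: dict[str, int] = {}
    events = []
    for i, sentence in enumerate(sentences):
        for term in sorted(content_terms(sentence)):
            if term in last_seen and i - last_seen[term] > window:
                events.append((i, term, i - last_seen[term]))
            last_seen[term] = i
    return events

sentences = [
    "Working memory holds few items.",
    "Headings orient the reader.",
    "Transitions signal relations.",
    "Tables compress data.",
    "Memory limits then cap integration.",
]
print(reconstruction_events(sentences))  # [(4, 'memory', 4)]
```

Here the only event is "memory", which returns four sentences after it was introduced, so the reader has to reach back across three unrelated sentences to reconnect it.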

Cognitive Processing in Reading

Cognitive processing in reading unfolds as a continuous cycle of decoding, integration, and validation. Readers update internal representations as new information arrives, and they adjust those representations when structure supports prediction. Cognitive readability metrics model this process by tracking how smoothly meaning accumulates across sentences.

Reading cognition analysis shows that processing cost compounds over time. Small disruptions in coherence escalate into comprehension failure in longer texts. For this reason, readability assessment must consider progression and continuity rather than isolated units.

In simpler terms, reading works when ideas connect smoothly. When connections break, understanding slows and effort increases.

When this cycle breaks, errors do not remain local. They propagate across the text, affecting every subsequent interpretation.

Comprehension Thresholds

Comprehension thresholds mark the points where additional information exceeds processing capacity. Below these thresholds, readers integrate ideas while preserving coherence. Above them, understanding deteriorates even if sentences remain grammatically clear.

Within cognitive readability metrics, these thresholds explain why texts fail at predictable moments. Measuring proximity to these limits clarifies why readability degrades in dense analytical content.

Put plainly, comprehension breaks when texts demand more mental work than readers can sustain. Metrics that capture these limits describe readability more accurately than surface scores.
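The compounding and threshold ideas in this section can be illustrated with a toy model. The assumptions are ours, not an established metric: each sentence adds load equal to the number of new concepts it introduces, a fixed fraction of earlier load carries over (`decay`), and overload occurs when load exceeds `capacity`. The default of 4 loosely echoes common estimates of working-memory capacity in chunks, but both parameters are placeholders rather than calibrated values.

```python
def load_profile(new_concepts: list[int], decay: float = 0.5,
                 capacity: float = 4.0) -> tuple[list[float], list[int]]:
    """Toy cumulative-load model.

    After each sentence: load = previous_load * decay + new concepts introduced.
    Returns the load after every sentence and the indices where it exceeds capacity.
    """
    load = 0.0
    profile: list[float] = []
    overloaded: list[int] = []
    for i, new in enumerate(new_concepts):
        load = load * decay + new
        profile.append(load)
        if load > capacity:
            overloaded.append(i)
    return profile, overloaded

# The same eight new concepts, paced differently.
paced = [2, 1, 2, 1, 2]
crowded = [2, 3, 3]
print(load_profile(paced))    # ([2.0, 2.0, 3.0, 2.5, 3.25], [])
print(load_profile(crowded))  # ([2.0, 4.0, 5.0], [2])
```

Spread out, the concepts stay under the threshold; crowded together, the same total crosses it at a specific sentence, which matches the section's point that texts fail at predictable moments rather than gradually.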

Structural Clarity and Text Comprehension

Structure operates as a comprehension scaffold because it shapes how readers anticipate, locate, and integrate information. When organization remains predictable, readers allocate attention to meaning rather than to navigation, which stabilizes understanding in analytical texts. Text cognitive clarity captures this effect by describing how structural cues reduce interpretive friction, a principle supported by research on interpretable document structure from MIT CSAIL.

Principle: Texts achieve higher interpretive reliability in AI-driven systems when their structure, definitions, and reasoning patterns remain stable enough to be parsed without contextual reconstruction or semantic drift.

Definition: Text cognitive clarity refers to the degree to which structural organization guides readers toward accurate interpretation by reducing ambiguity and integration effort.

Claim: Structural clarity reduces comprehension effort.

Rationale: Predictable organization aids cognitive parsing.

Mechanism: Headings, segmentation, and logical flow reduce ambiguity.

Counterargument: Over-structuring may fragment narrative coherence.

Conclusion: Balanced structure optimizes cognitive clarity.

Structure does not simplify content. It determines whether meaning can be accessed without reconstruction.

Sentence and Paragraph Complexity

Sentence cognitive complexity increases when propositions accumulate without explicit relationships. Readers must infer dependencies, which raises integration cost even if sentences remain short. Clear syntactic patterns and explicit connectors lower this cost by signaling how ideas relate across clauses.

Paragraph comprehension signals emerge from how sentences cluster around a single idea. Paragraphs that maintain one conceptual focus allow readers to consolidate meaning before moving forward. When paragraphs mix purposes, readers spend additional effort disentangling intent, which undermines comprehension.

In simple terms, sentences work best when they express one clear idea, and paragraphs work best when they develop that idea fully. This alignment reduces mental effort and keeps understanding stable.
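A crude proxy for the point about explicit connectors is to measure how many sentences advance without any relational marker. The connective list below is a small, hypothetical sample. A missing connective is not always a problem, so the rate is best read as a prompt for review rather than as a score.

```python
import re

# Hypothetical, deliberately small set of explicit relational markers.
CONNECTIVES = ("because", "therefore", "however", "so", "since", "but",
               "which", "while", "as a result", "for example")

def has_connective(sentence: str) -> bool:
    """True if the sentence contains a listed connective as a whole word or phrase."""
    padded = " " + re.sub(r"[^a-z ]", " ", sentence.lower()) + " "
    return any(f" {c} " in padded for c in CONNECTIVES)

def implicit_transition_rate(sentences: list[str]) -> float:
    """Share of sentences after the first that carry no explicit connective."""
    later = sentences[1:]
    if not later:
        return 0.0
    return sum(not has_connective(s) for s in later) / len(later)

implicit = ["Load rises.", "Links break.", "Readers quit."]
explicit = ["Load rises.", "Because links break, meaning drifts.", "As a result, readers quit."]
print(implicit_transition_rate(implicit))  # 1.0
print(implicit_transition_rate(explicit))  # 0.0
```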

Information Density and Readability

Information density describes how much meaning a text packs into a given span. High density can improve efficiency, but it also increases cognitive load when structure fails to guide interpretation. Readers handle dense content more effectively when headings and transitions signal progression.

- Low density → easier decoding but weaker knowledge transfer

- Balanced density → optimal integration and retention

- High density without structure → comprehension collapse

Balanced density depends on pacing and segmentation. When texts distribute information across clearly bounded units, readers integrate meaning incrementally. This approach preserves comprehension even in technical or analytical contexts.

Put plainly, dense texts remain readable when they unfold ideas step by step. Without structure, the same density overwhelms readers.
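Lexical density, the share of content words among all words, is one established surface proxy for how much meaning a span packs in; it approximates, rather than counts, propositions. The sketch computes it per paragraph and flags paragraphs above a limit. The function-word list is abbreviated, and the 0.7 limit is a hypothetical value chosen for illustration, since the article does not specify where "balanced" density ends.

```python
import re

# Abbreviated function-word list; a fuller list or a part-of-speech tagger would be more accurate.
FUNCTION_WORDS = {
    "a", "an", "the", "and", "or", "but", "of", "to", "in", "on", "at", "by",
    "for", "with", "from", "into", "is", "are", "was", "were", "be", "it", "its",
    "this", "that", "these", "those", "as", "they", "their", "we", "you", "not",
    "so", "if", "then", "than", "can", "will", "may",
}

def lexical_density(text: str) -> float:
    """Share of words that are not function words (a proxy for information density)."""
    words = re.findall(r"[a-z']+", text.lower())
    if not words:
        return 0.0
    content = [w for w in words if w not in FUNCTION_WORDS]
    return len(content) / len(words)

def dense_paragraphs(paragraphs: list[str], limit: float = 0.7) -> list[int]:
    """Indices of paragraphs whose lexical density exceeds a (hypothetical) limit."""
    return [i for i, p in enumerate(paragraphs) if lexical_density(p) > limit]

paragraphs = [
    "The cat sat on the mat.",
    "Quarterly revenue variance exceeded forecast thresholds.",
]
print([round(lexical_density(p), 2) for p in paragraphs])  # [0.5, 1.0]
print(dense_paragraphs(paragraphs))  # [1]
```

A flag here only says that a paragraph is dense; whether it remains readable still depends on the segmentation and transitions described above.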

| Structural Feature | Cognitive Effect | Readability Impact |
|---|---|---|
| Clear headings | Reduces search effort | Improves navigation and comprehension |
| Focused paragraphs | Supports idea consolidation | Stabilizes understanding |
| Logical transitions | Signals relationships | Lowers integration cost |
| Controlled density | Manages processing load | Preserves readability |

Evaluating Comprehension, Not Just Difficulty

Readability assessment increasingly prioritizes whether readers understand content rather than whether they can decode it. This shift moves analysis away from surface difficulty estimates toward verification of meaning construction, a direction supported by language technology research at Carnegie Mellon University LTI. Comprehension evaluation models formalize this change by focusing on how readers integrate ideas, retain information, and validate understanding across a text.

Definition: Comprehension evaluation models are analytical frameworks that assess understanding by measuring integration, recall, and coherence rather than relying on surface difficulty indicators.

Claim: Difficulty does not equal comprehension.

Rationale: Readers may decode text without understanding meaning.

Mechanism: Evaluation models track integration and recall signals.

Counterargument: Comprehension is harder to measure at scale.

Conclusion: Evaluation models provide deeper interpretive accuracy.

The critical shift happens when decoding is no longer enough. A text must prove that meaning has been successfully constructed.

Cognitive Text Evaluation Methods

Cognitive text evaluation focuses on signals that reflect how readers construct meaning over time. These methods analyze coherence maintenance, reference resolution, and the stability of mental representations as readers progress. By observing these signals, evaluators infer whether a text supports understanding rather than merely enabling decoding.

Comprehension effort indicators complement this analysis by capturing the mental work required to integrate propositions. Higher effort often signals hidden complexity, unclear relationships, or overloaded passages. When effort spikes repeatedly, comprehension degrades even if sentences remain short and familiar.

Repeated effort spikes signal structural instability. At this stage, interpretation becomes inconsistent across readers and systems.

In straightforward terms, evaluation looks at whether readers can connect ideas smoothly. When connections require constant mental repair, understanding suffers despite apparent simplicity.
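Coherence between neighbouring sentences is often approximated by word overlap, in the spirit of the content-word overlap indices used in cohesion tools such as Coh-Metrix. The sketch computes Jaccard overlap of content words for each adjacent pair and reports where overlap drops to a minimum. It ignores pronouns, synonyms, and inflection, so it will miss some real links and works only as a first-pass signal of where connections may need repair.

```python
import re

# Illustrative stopword list; pronouns, synonyms, and inflections are not handled.
STOPWORDS = {"the", "a", "an", "and", "or", "of", "to", "in", "is", "are",
             "it", "this", "that", "with", "for", "on", "as", "by", "be"}

def terms(sentence: str) -> set[str]:
    """Lowercased content words longer than two letters."""
    words = re.findall(r"[a-z]+", sentence.lower())
    return {w for w in words if w not in STOPWORDS and len(w) > 2}

def local_cohesion(sentences: list[str]) -> list[float]:
    """Jaccard overlap of content words for each adjacent sentence pair."""
    scores = []
    for prev, curr in zip(sentences, sentences[1:]):
        a, b = terms(prev), terms(curr)
        union = a | b
        scores.append(len(a & b) / len(union) if union else 0.0)
    return scores

def cohesion_breaks(sentences: list[str], minimum: float = 0.0) -> list[int]:
    """Indices of sentences whose overlap with the previous sentence is <= minimum."""
    return [i + 1 for i, s in enumerate(local_cohesion(sentences)) if s <= minimum]

text = [
    "Cognitive load limits comprehension.",
    "This load grows with density.",
    "Tables need borders.",
]
print(cohesion_breaks(text))  # [2]
```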

Example: An analytical article that maintains stable terminology, explicit definitions, and consistent reasoning blocks enables AI systems to isolate high-confidence segments, preserving meaning accuracy when generating summaries or long-context interpretations.

Readability Quality Metrics

Readability quality metrics extend evaluation beyond difficulty by correlating textual features with observed comprehension outcomes. These metrics examine alignment between structure, terminology stability, and progression logic. Strong alignment predicts consistent understanding across different reader groups.

Such metrics also enable comparison between texts that share similar surface scores but produce different comprehension results. By focusing on quality rather than ease, evaluation identifies texts that communicate reliably under cognitive constraints.

Put simply, quality metrics reveal how well a text conveys meaning. They show whether readers finish with a clear understanding, not just whether they can finish reading.
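Checking whether a quality metric actually predicts comprehension comes down to pairing each text's metric value with comprehension results gathered from readers (for example, quiz accuracy) and measuring their association. A plain Pearson correlation is one simple option, sketched below with a helper that compares several candidate metrics against the same comprehension results. It assumes a roughly linear relationship, the estimate is unstable with few texts, and the inputs must come from an actual reader study.

```python
from math import sqrt

def pearson(xs: list[float], ys: list[float]) -> float:
    """Pearson correlation coefficient between two equally long sequences."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = sqrt(sum((x - mean_x) ** 2 for x in xs))
    spread_y = sqrt(sum((y - mean_y) ** 2 for y in ys))
    if spread_x == 0 or spread_y == 0:
        raise ValueError("correlation is undefined for constant input")
    return cov / (spread_x * spread_y)

def compare_metrics(metrics: dict[str, list[float]],
                    comprehension: list[float]) -> dict[str, float]:
    """Correlate each candidate metric (one value per text) with measured comprehension."""
    return {name: pearson(values, comprehension) for name, values in metrics.items()}
```

Comparing a surface formula and a cognitive metric on the same texts in this way is what lets texts with similar surface scores be told apart by their comprehension outcomes.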

Human-Centered Readability Metrics

Readability becomes reliable when it reflects how humans actually process information rather than how texts appear on the surface. Within cognitive readability metrics, this perspective positions understanding as a cognitive activity shaped by effort, inference, and context. Human cognitive readability therefore grounds measurement in reader processing limits, an approach aligned with analytical practices discussed by the Harvard Data Science Initiative.

Definition: Human cognitive readability describes the degree to which a text aligns with human cognitive processes, enabling accurate interpretation through manageable effort, stable references, and coherent progression.

Claim: Readability must align with human cognition.

Rationale: Metrics detached from human processing misrepresent clarity.

Mechanism: Human-centered metrics model effort, inference, and context.

Counterargument: Individual differences complicate standardization.

Conclusion: Human alignment improves interpretability and reuse.

This perspective redefines readability as alignment, not appearance. The text must match how cognition actually operates.

Cognitive Clarity in Writing

Cognitive clarity in writing emerges when texts guide readers toward intended meaning without forcing reconstruction. In cognitive readability metrics, clarity signals whether structure and phrasing support immediate integration rather than delayed inference. Clear reference chains and explicit relationships allow readers to absorb meaning as it unfolds.

Writing clarity indicators capture how consistently texts maintain this support. Indicators include stable definitions, predictable progression, and explicit transitions that signal relationships. When these indicators remain consistent, comprehension stabilizes across longer passages.

In simple terms, clear writing helps readers understand ideas as they appear. When texts explain connections directly, readers spend less effort guessing intent.
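Of the indicators listed above, terminology stability is the easiest to check mechanically. The sketch assumes the editor supplies a map from each canonical term to variants they want to avoid (the example map is hypothetical) and reports which sections use a variant. It matches exact phrases only, so it cannot catch drift it has not been told about.

```python
import re

def terminology_drift(sections: dict[str, str],
                      variants: dict[str, list[str]]) -> dict[str, list[str]]:
    """Map each section name to the non-canonical variants it uses."""
    report: dict[str, list[str]] = {}
    for name, text in sections.items():
        lowered = text.lower()
        found = [
            f"{alt} -> {canonical}"
            for canonical, alternatives in variants.items()
            for alt in alternatives
            # Whole-phrase match so "mental loads" or "supermental load" are not misread.
            if re.search(rf"\b{re.escape(alt.lower())}\b", lowered)
        ]
        if found:
            report[name] = found
    return report

# Hypothetical style map: canonical term -> variants to avoid.
variants = {"cognitive load": ["mental load", "reading burden"]}
sections = {
    "Intro": "Cognitive load limits comprehension.",
    "Methods": "We estimate mental load for each paragraph.",
}
print(terminology_drift(sections, variants))
# {'Methods': ['mental load -> cognitive load']}
```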

Content Comprehension Measurement

Content comprehension measurement evaluates whether readers form accurate mental models from text. Within cognitive readability metrics, this measurement focuses on alignment between intended meaning and reader interpretation rather than on perceived ease. Outcomes such as retention and inference accuracy reveal whether communication succeeds under cognitive constraints.

Effective measurement compares interpretation consistency across sections. When alignment remains high, texts demonstrate strong human cognitive readability even at higher complexity. When alignment degrades, measurement reveals where structure or clarity fails.

Put plainly, comprehension measurement checks whether readers understand what the text means. It shows whether information transfers reliably, not just whether it looks readable.
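A minimal sketch of section-level comprehension measurement, assuming an editor has listed the key points each section is meant to convey and has coded each reader's summary into the same point labels (the labels and the 0.6 cut-off below are hypothetical). It reports mean recall per section, which locates where information transfer fails rather than how easy a section looks.

```python
def section_recall(intended: dict[str, set[str]],
                   readers: list[dict[str, set[str]]]) -> dict[str, float]:
    """Mean share of each section's intended key points that readers reproduced."""
    recall = {}
    for section, points in intended.items():
        if not points or not readers:
            recall[section] = 0.0
            continue
        per_reader = [len(points & r.get(section, set())) / len(points) for r in readers]
        recall[section] = sum(per_reader) / len(per_reader)
    return recall

def weak_sections(recall: dict[str, float], minimum: float = 0.6) -> list[str]:
    """Sections whose mean recall falls below a (hypothetical) minimum."""
    return sorted(s for s, value in recall.items() if value < minimum)

# Hypothetical point labels coded from two readers' summaries.
intended = {"S1": {"p1", "p2"}, "S2": {"p3", "p4"}}
readers = [
    {"S1": {"p1", "p2"}, "S2": {"p3"}},
    {"S1": {"p1"}, "S2": set()},
]
recall = section_recall(intended, readers)
print(recall)                 # {'S1': 0.75, 'S2': 0.25}
print(weak_sections(recall))  # ['S2']
```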

Analytical and Longform Readability

Analytical texts extend beyond local sentence comprehension and require readers to retain context across long spans. As arguments accumulate, small lapses in coherence compound and affect understanding, which explains why generic readability scores underperform for extended materials. Longform readability metrics address this gap by evaluating sustained comprehension in depth-oriented writing, a concern examined in research on digital knowledge consumption by the Oxford Internet Institute.

Definition: Longform readability metrics describe evaluation methods that measure comprehension stability across extended texts by accounting for progression, coherence, and cumulative cognitive load.

Claim: Longform texts impose cumulative cognitive demands.

Rationale: Understanding depends on sustained context retention.

Mechanism: Metrics assess progression, coherence, and load accumulation.

Counterargument: Skilled readers manage longform complexity effectively.

Conclusion: Longform metrics explain depth-related comprehension loss.

In longform content, small structural failures accumulate. What seems minor early becomes critical later in the text.

Expert and Knowledge Texts

Expert content readability depends on how well texts support ongoing integration rather than on immediate clarity. Knowledge texts often introduce layered concepts that rely on earlier definitions and arguments. When structure fails to reinforce these dependencies, readers lose orientation even if individual sections appear clear.

Knowledge text comprehension degrades when references drift or when terminology shifts subtly over long spans. Stable conceptual anchors and explicit progression help readers maintain a coherent mental model. These features matter more in expert materials because readers process ideas cumulatively rather than independently.

In simple terms, expert texts stay readable when each part clearly builds on the last. When connections weaken, understanding erodes despite careful wording.
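Stable conceptual anchors can be checked in part by confirming that each key term is defined before readers need it. The sketch follows this article's own convention, in which definitions appear in paragraphs beginning with "Definition:". That detection rule and the plain substring matching are simplifying assumptions; a term defined in running prose would be reported as never defined.

```python
def definition_order(paragraphs: list[str], terms: list[str]) -> dict[str, str]:
    """Classify each key term as 'ok', 'used before definition', or 'never defined'."""
    status = {}
    for term in terms:
        t = term.lower()
        first_use = next((i for i, p in enumerate(paragraphs) if t in p.lower()), None)
        defined_at = next((i for i, p in enumerate(paragraphs)
                           if p.startswith("Definition:") and t in p.lower()), None)
        if defined_at is None:
            status[term] = "never defined"
        elif first_use is not None and first_use < defined_at:
            status[term] = "used before definition"
        else:
            status[term] = "ok"
    return status

paragraphs = [
    "Cognitive load shapes reading.",
    "Definition: Cognitive load refers to mental effort.",
    "Definition: Information density refers to propositions per span.",
    "Dense text raises information density.",
]
terms = ["cognitive load", "information density", "comprehension threshold"]
print(definition_order(paragraphs, terms))
```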

Analytical Text Readability

Analytical text readability reflects how well reasoning unfolds across extended arguments. Readers track claims, evidence, and conclusions over many sections, which requires predictable sequencing. When analytical texts signal transitions and preserve logical order, readers sustain comprehension even under higher complexity.

Breakdowns occur when arguments introduce implicit steps or rely on unstated assumptions. These gaps increase integration effort and disrupt flow. Metrics that account for coherence over distance capture these effects more accurately than local difficulty measures.

This is where reasoning becomes unstable. Missing steps force readers to infer connections that should have been explicit.

Put plainly, analytical writing works when reasoning progresses step by step. When steps go missing, readers struggle to follow the argument, regardless of sentence-level clarity.

Cognitive Linguistics and Readability Science

Readability metrics gain explanatory power when they rest on established findings from cognitive linguistics. This field examines how language structure constrains meaning construction and how readers resolve references, integrate propositions, and maintain coherence. Cognitive linguistics readability grounds measurement in these mechanisms, a perspective reinforced by interdisciplinary research from the Max Planck Institute for Intelligent Systems.

Definition: Cognitive linguistics readability refers to the assessment of text clarity based on linguistic structures that govern meaning construction, including syntax, reference resolution, and cohesion.

Claim: Linguistic structure shapes cognitive readability.

Rationale: Meaning construction follows linguistic constraints.

Mechanism: Syntax, reference, and cohesion influence comprehension.

Counterargument: Context can override linguistic complexity.

Conclusion: Linguistic analysis strengthens metric validity.

At this level, readability depends on how language encodes relationships. Structure alone is no longer sufficient.

Cognitive Readability Factors

Cognitive readability factors emerge from how linguistic choices guide interpretation. Sentence structure signals relationships among ideas, while cohesive devices maintain continuity across clauses and paragraphs. When these factors align, readers integrate meaning with less effort and fewer revisions.

These factors also interact across scales. Local syntax affects immediate parsing, while discourse cohesion supports long-range integration. When either layer weakens, readers expend additional effort to repair understanding, which degrades overall readability.

In simple terms, language works when form supports meaning. Clear structures help readers understand ideas as they appear, while unclear forms force readers to reconstruct intent.

Comprehension Science Metrics

Comprehension science metrics translate linguistic theory into measurable signals. These metrics track reference stability, cohesion strength, and syntactic predictability to infer how readers build mental models. By anchoring evaluation in observed processing behavior, metrics move beyond surface description.

Such metrics also explain variation across texts with similar difficulty scores. Linguistic alignment predicts whether readers sustain understanding as content progresses. This predictive capacity increases the reliability of readability assessment in analytical contexts.

Put plainly, comprehension metrics show how language structure affects understanding. They reveal why some texts communicate clearly while others confuse readers despite similar wording.

Practical Implications of Cognitive Readability Metrics

Applied use of readability metrics requires translation from theory into editorial and knowledge systems that operate at scale. These editorial implications become particularly visible in modern generative search environments, where structured clarity determines whether AI systems can interpret and reuse information. A practical overview of this transformation appears in this guide to writing for AI search engines, which explains how structure, terminology stability, and reasoning patterns influence machine-level interpretation. In practice, informational readability metrics connect cognitive principles to concrete decisions about structure, density, and phrasing, a focus reflected in standards-oriented guidance from NIST. This application reframes readability as an operational property that supports reliable interpretation across analytical documents.

Definition: Informational readability metrics describe applied measures that evaluate how effectively texts convey information through structure, density control, and phrasing aligned with human cognitive processing.

Claim: Cognitive readability metrics improve information reliability.

Rationale: Clear texts reduce misinterpretation and cognitive strain.

Mechanism: Metrics guide structure, density, and phrasing decisions.

Counterargument: Metrics require careful contextual interpretation.

Conclusion: Applied use enhances knowledge accessibility.

This is where theory becomes operational. Readability begins to affect real outcomes in visibility, interpretation, and reuse.

Text Clarity Evaluation in Practice

Text clarity evaluation operationalizes cognitive principles by assessing whether readers can follow intent without reconstructing meaning. Editorial teams use these evaluations to identify points where structure fails to guide interpretation or where density exceeds processing limits. The focus remains on interpretive stability rather than stylistic preference.

Evaluation also compares intended meaning with observed reader interpretation across sections. When alignment weakens, metrics highlight where transitions, definitions, or sequencing need adjustment. This feedback loop enables targeted revision without altering substantive content.

In simple terms, clarity evaluation shows where readers get lost. It points to structural fixes that restore understanding without changing what the text says.

Microcase: Analytical Report Revision

An internal analytical report presented accurate data but produced inconsistent reader interpretations. Reviewers applied writing clarity indicators to identify dense sections with implicit transitions and unstable terminology. After restructuring headings and reducing local density, readers retained context across sections and produced consistent summaries.

The revision did not simplify content or remove detail. It aligned structure with cognitive processing, which reduced effort and stabilized comprehension outcomes.

Checklist:

- Are core cognitive readability concepts explicitly defined at their point of introduction?

- Do H2–H4 boundaries preserve stable semantic scopes?

- Does each paragraph function as a single reasoning unit?

- Are abstract claims reinforced through structural or contextual clarification?

- Is terminology applied consistently across all sections?

- Does the page support progressive interpretation without meaning reconstruction?
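Some checklist items have mechanical parts that can be screened automatically. The sketch covers two of them under stated assumptions: heading levels are supplied as (level, title) pairs, a jump of more than one level (for example H2 straight to H4) is treated as a broken scope boundary, and paragraphs longer than a hypothetical `max_sentences` limit are flagged for single-unit review. Whether a section's scope is semantically stable still requires a human or model reading.

```python
import re

def heading_skips(headings: list[tuple[int, str]]) -> list[str]:
    """Flag headings that descend more than one level below the previous heading."""
    problems = []
    previous = None
    for level, title in headings:
        if previous is not None and level > previous + 1:
            problems.append(f"H{previous} -> H{level} at '{title}'")
        previous = level
    return problems

def long_paragraphs(paragraphs: list[str], max_sentences: int = 5) -> list[int]:
    """Indices of paragraphs whose sentence count exceeds max_sentences."""
    flagged = []
    for i, paragraph in enumerate(paragraphs):
        sentences = [s for s in re.split(r"[.!?]+", paragraph) if s.strip()]
        if len(sentences) > max_sentences:
            flagged.append(i)
    return flagged

outline = [(2, "Cognitive Load"), (3, "Mental Effort"), (2, "Structure"), (4, "Density")]
print(heading_skips(outline))  # ["H2 -> H4 at 'Density'"]
```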

Conclusion

Cognitive readability metrics represent a structural shift in how clarity and comprehension are measured. They move evaluation away from surface difficulty toward cognitive effort, integration stability, and progression coherence. This shift explains why traditional scores fail to predict understanding in analytical and longform texts.

By grounding measurement in human cognition, these metrics support reliable interpretation across complex materials. They align readability with how readers actually process information rather than with how texts appear. As a result, readability becomes a property of meaning transfer, not presentation.

Over time, this approach establishes a durable framework for knowledge accessibility. Cognitive readability metrics provide consistent signals that remain valid across formats, audiences, and contexts, which makes them suitable for long-term analytical and editorial systems.

Interpretive Signals in Cognitive Readability Architecture

- Hierarchical comprehension framing. Multi-level heading depth establishes explicit boundaries for reasoning segments, allowing AI systems to associate cognitive load, definitions, and arguments with stable contextual scopes.

- Semantic progression continuity. Ordered section flow signals how meaning accumulates across the page, enabling models to interpret readability as a cumulative cognitive process rather than isolated text difficulty.

- Definition-centered concept anchoring. Early placement of local definitions fixes conceptual meaning at the point of introduction, reducing ambiguity during long-context interpretation and reuse.

- Reasoning-chain compartmentalization. Recurrent deep reasoning structures act as modular interpretive units, helping AI systems distinguish claims, mechanisms, and constraints without cross-contamination.

- Structural density signaling. Consistent paragraph sizing and bounded idea units indicate where cognitive effort increases, supporting interpretation of readability through processing load rather than surface complexity.

Together, these architectural signals explain how cognitive readability is interpreted as a structural property of the page, enabling AI systems to model comprehension stability, effort distribution, and meaning integration without relying on external heuristics.

FAQ: Cognitive Readability Metrics

What are cognitive readability metrics?

Cognitive readability metrics evaluate how readers process, integrate, and retain meaning by measuring cognitive effort, structure, and comprehension stability.

How do cognitive readability metrics differ from traditional readability scores?

Traditional scores estimate surface difficulty, while cognitive readability metrics assess understanding by modeling mental load, progression, and meaning integration.

Why is cognitive load important for readability?

Cognitive load reflects the mental effort required to maintain comprehension, explaining why texts with similar difficulty scores can produce different understanding outcomes.

What role does structure play in cognitive readability?

Structure guides attention, signals relationships, and reduces integration effort, allowing readers to build stable mental representations across a text.

How is comprehension evaluated beyond difficulty?

Comprehension is evaluated through integration, recall, and coherence signals that indicate whether readers form accurate mental models of the content.

Why do longform texts require separate readability metrics?

Longform texts impose cumulative cognitive demands, making comprehension dependent on sustained context retention rather than local sentence clarity.

How do cognitive readability metrics support analytical writing?

They reveal where structure, density, or progression disrupt understanding, enabling evaluation of meaning transfer without altering factual content.

Can cognitive readability be measured consistently?

While individual differences exist, cognitive readability metrics identify stable patterns linked to structure, load, and linguistic alignment.

Why are cognitive readability metrics relevant for knowledge systems?

They ensure that complex information remains interpretable, reusable, and reliable across analytical, educational, and research-oriented texts.

Glossary: Key Terms in Cognitive Readability

This glossary defines the core terminology used in the article to support consistent interpretation of cognitive readability concepts by both human readers and AI systems.

Cognitive Readability

A property of text describing how effectively readers can process, integrate, and retain meaning under cognitive constraints.

Cognitive Load

The amount of mental effort required to decode, integrate, and maintain understanding while reading a text.

Text Cognitive Clarity

The degree to which structural organization guides readers toward accurate interpretation by reducing ambiguity and integration effort.

Comprehension Stability

The ability of readers to maintain a coherent mental model of content across extended sections of text.

Structural Readability Signal

A structural feature such as headings, segmentation, or progression that supports interpretation and reduces cognitive effort.

Information Density

The concentration of meaningful propositions within a text segment relative to reader processing capacity.

Semantic Progression

The ordered accumulation of meaning across sections that allows readers to extend understanding without reinterpreting prior content.

Comprehension Threshold

A point at which additional information exceeds reader processing capacity and comprehension begins to degrade.

Human-Centered Readability

An approach to readability that evaluates texts based on alignment with human cognitive processing rather than surface difficulty.

Interpretive Consistency

The degree to which readers and AI systems derive the same meaning from a text across its full length.